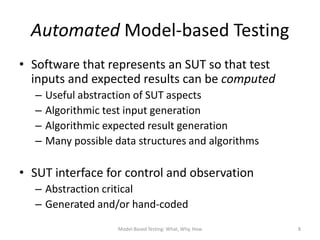

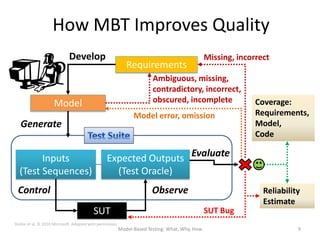

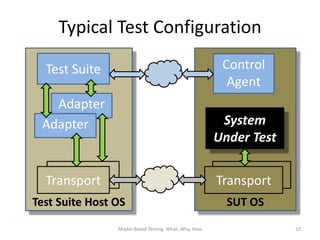

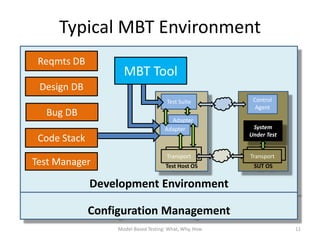

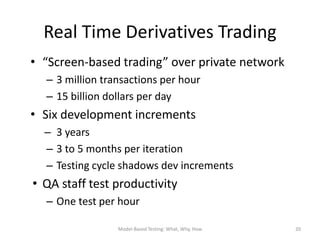

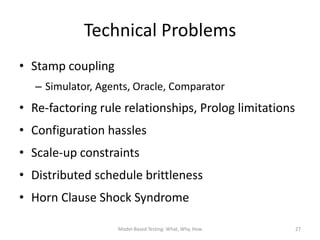

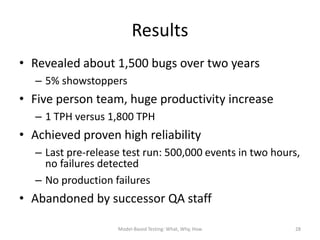

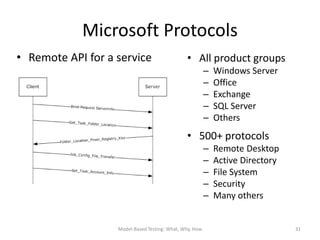

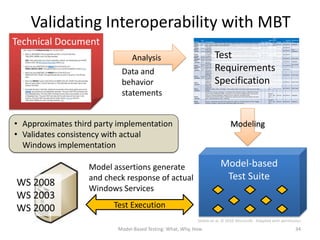

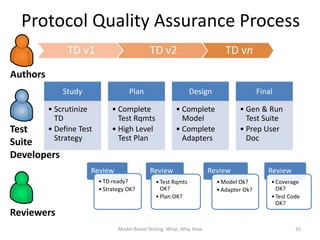

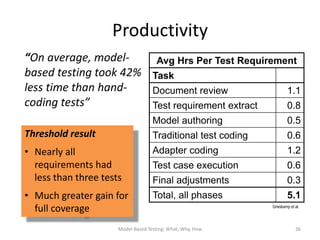

The document discusses model-based testing (MBT), emphasizing how automated test generation reduces testing cost and time to market while improving product quality. It presents case studies, notably in automated derivatives trading and Microsoft protocol interoperability, that show how MBT can find bugs efficiently and raise testing productivity. It also covers the technical challenges of implementing MBT in software development environments, along with solutions to them.

![What is a Test Model?](https://image.slidesharecdn.com/model-based-testing-ignite-130209140757-phpapp01/85/Model-Based-Testing-Why-What-How-6-320.jpg)

Slide 6 of "Model-Based Testing: What, Why, How", titled "What is a Test Model?", places the SUT design model beside the test model for the TwoPlayerGame and ThreePlayerGame example (Mode Machine test design pattern).

SUT Design Model (class diagram):

- TwoPlayerGame: +TwoPlayerGame( ), +p1_Start( ), +p1_WinsVolley( ), -p1_AddPoint( ), +p1_IsWinner( ), +p1_IsServer( ), +p1_Points( ), +p2_Start( ), +p2_WinsVolley( ), -p2_AddPoint( ), +p2_IsWinner( ), +p2_IsServer( ), +p2_Points( ), +~( )
- ThreePlayerGame: +ThreePlayerGame( ), +p3_Start( ), +p3_WinsVolley( ), -p3_AddPoint( ), +p3_IsWinner( ), +p3_IsServer( ), +p3_Points( ), +~( )

Test Model (state machine):

- States: α, Game Started, Player 1 Served, Player 2 Served, Player 3 Served, Player 1 Won, Player 2 Won, Player 3 Won, ω
- Transition labels, for player N:
  - ThreePlayerGame( ) / TwoPlayerGame( )
  - pN_Start( ) / simulateVolley( )
  - pN_WinsVolley( ) / simulateVolley( )
  - pN_WinsVolley( ) [this.pN_Score( ) < 20] / this.pNAddPoint( ) simulateVolley( )
  - pN_WinsVolley( ) [this.pN_Score( ) == 20] / this.pNAddPoint( )
  - pN_IsWinner( ) / return TRUE;
  - ~( )
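To make the idea of a test model concrete, the TwoPlayerGame state machine from the slide can be written down as a guarded transition table. This is only a minimal sketch: the slide text gives the states and the event, guard, and action labels, but the pairing of each label with a source and target state is an assumed reading of the diagram, and the names `MODEL`, `fire`, `below`, and `at` are hypothetical.

```python
from typing import Callable, Dict, List, Optional, Tuple

Scores = Dict[int, int]
Guard = Callable[[Scores], bool]
# (guard, player who is awarded a point or None, next state)
Transition = Tuple[Guard, Optional[int], str]


def always(_: Scores) -> bool:
    return True


def below(player: int) -> Guard:
    """Guard [this.pN_Score() < 20]."""
    return lambda scores: scores[player] < 20


def at(player: int) -> Guard:
    """Guard [this.pN_Score() == 20]."""
    return lambda scores: scores[player] == 20


# ASSUMPTION: source/target states are read off the diagram, not stated in the text.
MODEL: Dict[Tuple[str, str], List[Transition]] = {
    ("alpha", "TwoPlayerGame"): [(always, None, "Game Started")],
    ("Game Started", "p1_Start"): [(always, None, "Player 1 Served")],
    ("Game Started", "p2_Start"): [(always, None, "Player 2 Served")],
    ("Player 1 Served", "p1_WinsVolley"): [
        (below(1), 1, "Player 1 Served"),
        (at(1), 1, "Player 1 Won"),
    ],
    ("Player 1 Served", "p2_WinsVolley"): [(always, None, "Player 2 Served")],
    ("Player 2 Served", "p2_WinsVolley"): [
        (below(2), 2, "Player 2 Served"),
        (at(2), 2, "Player 2 Won"),
    ],
    ("Player 2 Served", "p1_WinsVolley"): [(always, None, "Player 1 Served")],
    ("Player 1 Won", "p1_IsWinner"): [(always, None, "Player 1 Won")],
    ("Player 2 Won", "p2_IsWinner"): [(always, None, "Player 2 Won")],
    ("Player 1 Won", "~"): [(always, None, "omega")],
    ("Player 2 Won", "~"): [(always, None, "omega")],
}


def fire(state: str, event: str, scores: Scores) -> str:
    """Apply one event to the model and return the resulting state."""
    for guard, scorer, target in MODEL.get((state, event), []):
        if guard(scores):
            if scorer is not None:
                scores[scorer] += 1  # models this.pNAddPoint()
            return target
    raise ValueError(f"illegal event {event!r} in state {state!r}")
```

In this sketch, an event with no entry for the current state raises `ValueError`. Treating such events as illegal is a design choice of the sketch, not something the slide specifies.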

![Model-based Test Suite](https://image.slidesharecdn.com/model-based-testing-ignite-130209140757-phpapp01/85/Model-Based-Testing-Why-What-How-7-320.jpg)

Slide 7, "Model-based Test Suite", outlines the N+ strategy:

- Start at α
- Follow transition path
- Stop if ω or visited
- Three loop iterations
- Assumes state observer
- Try all sneak paths

The N+ test suite on the slide is drawn as a transition tree rooted at alpha. Its nodes are alpha, GameStarted, Player 1/2/3 Served, Player 1/2/3 Won, and omega, and its edges are numbered with the following key. On the slide, the guarded transitions (6, 7, 9, 10, 12, 13) are marked with an asterisk.

1. ThreePlayerGame( )
2. p1_Start( )
3. p2_Start( )
4. p3_Start( )
5. p1_WinsVolley( )
6. p1_WinsVolley( ) [this.p1_Score( ) < 20]
7. p1_WinsVolley( ) [this.p1_Score( ) == 20]
8. p2_WinsVolley( )
9. p2_WinsVolley( ) [this.p2_Score( ) < 20]
10. p2_WinsVolley( ) [this.p2_Score( ) == 20]
11. p3_WinsVolley( )
12. p3_WinsVolley( ) [this.p3_Score( ) < 20]
13. p3_WinsVolley( ) [this.p3_Score( ) == 20]
14. p1_IsWinner( )
15. p2_IsWinner( )
16. p3_IsWinner( )
17. ~( )
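The first three steps of the strategy on this slide (start at α, follow each transition path, stop at ω or at an already visited state) can be sketched as a breadth-first expansion of the state model into a transition tree, where each root-to-leaf path becomes one test sequence. This is an illustrative sketch, not the tool or procedure used in the talk: the transition table is an assumed reading of the ThreePlayerGame diagram, the names `THREE_PLAYER_GAME`, `serving`, and `transition_tree_paths` are hypothetical, and the loop-iteration, state-observer, and sneak-path steps are left out.

```python
from collections import deque
from typing import Dict, List, Tuple

# state -> list of (event label, target state)
Model = Dict[str, List[Tuple[str, str]]]


def serving(n: int) -> List[Tuple[str, str]]:
    """Outgoing transitions of 'Player n Served' (assumed from the diagram)."""
    out = []
    for m in (1, 2, 3):
        if m == n:
            # The server wins the volley: guarded on the server's score.
            out.append((f"p{m}_WinsVolley [p{m}_Score < 20]", f"Player {m} Served"))
            out.append((f"p{m}_WinsVolley [p{m}_Score == 20]", f"Player {m} Won"))
        else:
            # Another player wins the volley and becomes the server.
            out.append((f"p{m}_WinsVolley", f"Player {m} Served"))
    return out


THREE_PLAYER_GAME: Model = {
    "alpha": [("ThreePlayerGame", "Game Started")],
    "Game Started": [(f"p{n}_Start", f"Player {n} Served") for n in (1, 2, 3)],
    "omega": [],
}
for n in (1, 2, 3):
    THREE_PLAYER_GAME[f"Player {n} Served"] = serving(n)
    THREE_PLAYER_GAME[f"Player {n} Won"] = [
        (f"p{n}_IsWinner", f"Player {n} Won"),
        ("~", "omega"),
    ]


def transition_tree_paths(
    model: Model, start: str = "alpha", final: str = "omega"
) -> List[List[str]]:
    """Return every root-to-leaf event sequence of the transition tree."""
    visited = {start}
    paths: List[List[str]] = []
    queue = deque([(start, [])])  # (state, events taken to reach it)
    while queue:
        state, events = queue.popleft()
        for event, target in model[state]:
            path = events + [event]
            if target == final or target in visited:
                paths.append(path)  # branch stops here: one test sequence
            else:
                visited.add(target)
                queue.append((target, path))
    return paths


if __name__ == "__main__":
    for number, path in enumerate(transition_tree_paths(THREE_PLAYER_GAME), 1):
        print(number, " -> ".join(path))
```

Under these assumptions every transition in the table appears exactly once as a tree edge, so running all root-to-leaf paths exercises each modeled transition at least once. The guarded paths would still need test data that drives the scores to the guard boundary.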