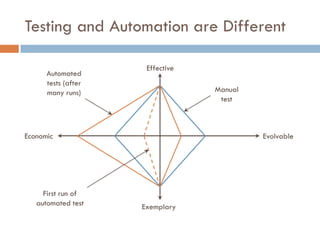

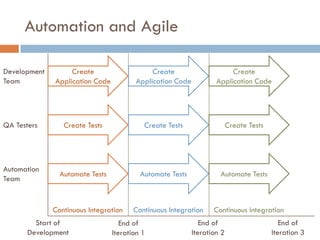

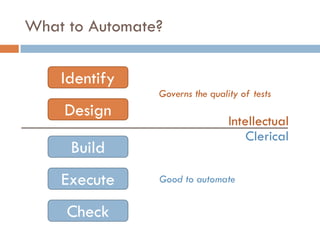

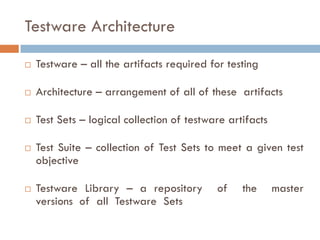

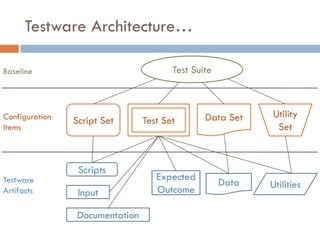

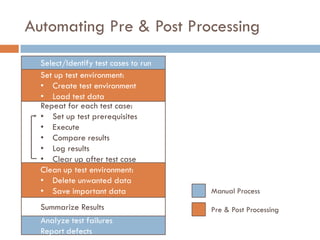

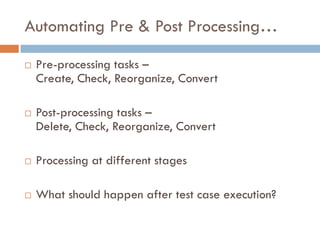

The document discusses test automation, highlighting its efficiency over manual testing and benefits such as consistency, reuse of tests, and earlier time to market. It outlines the automation process, including script writing and testware architecture, and notes the limitations of automation: it does not replace manual testing, and its value depends on the quality of the underlying tests. It also mentions career opportunities in test automation, including roles such as test automation architect and test automator.