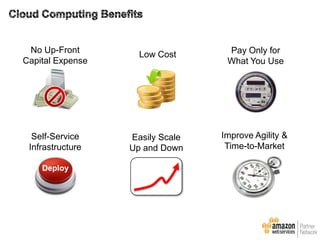

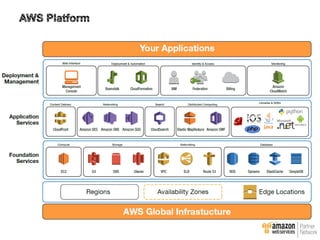

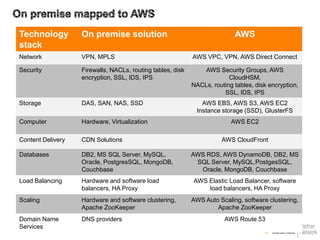

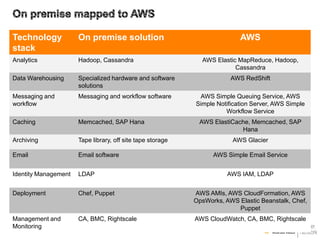

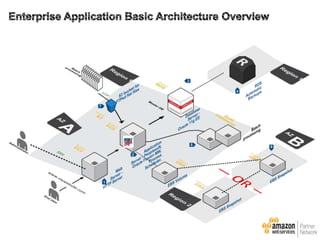

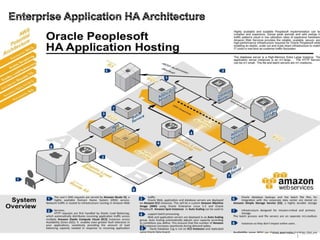

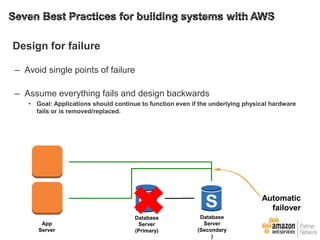

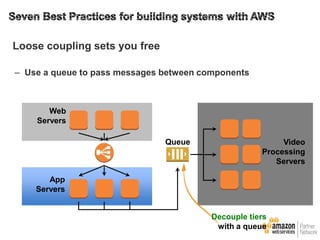

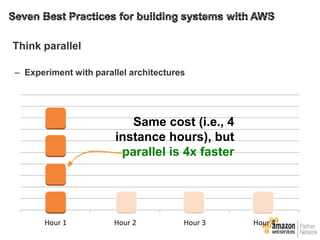

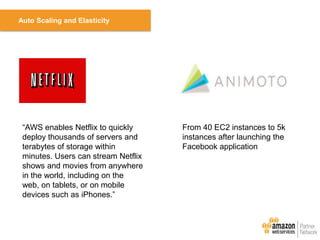

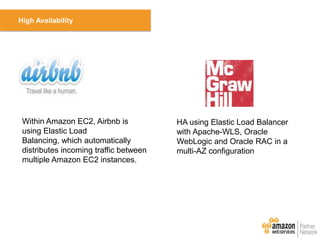

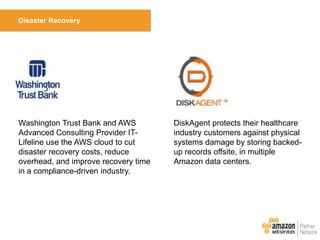

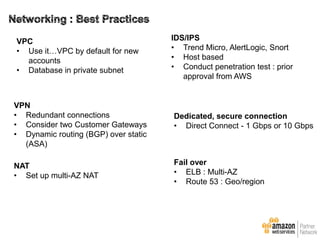

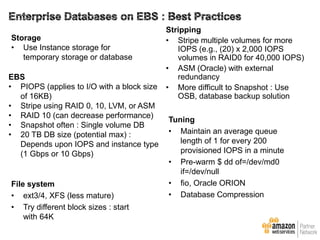

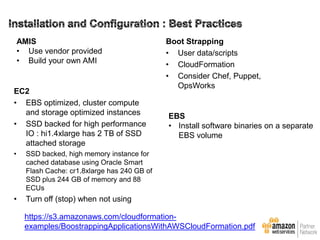

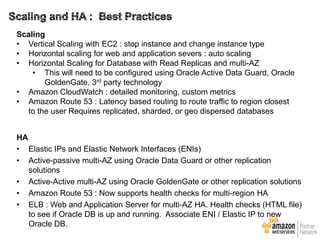

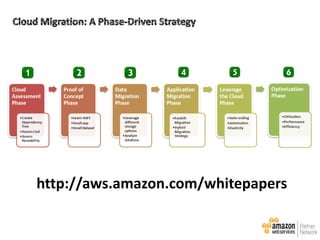

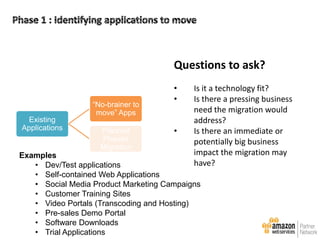

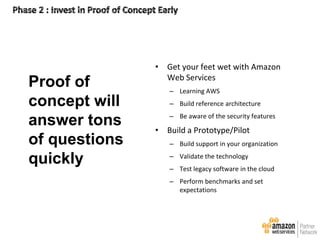

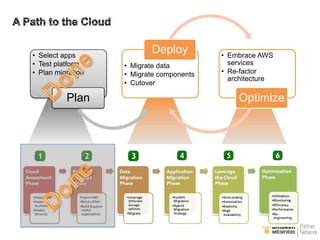

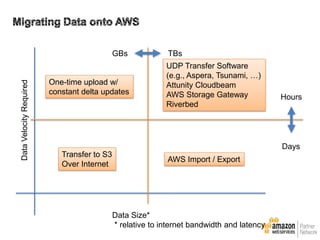

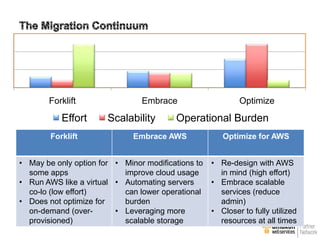

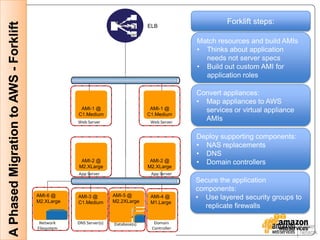

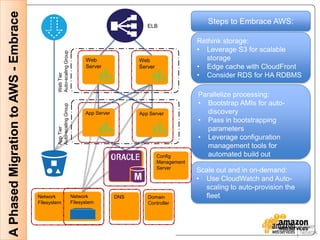

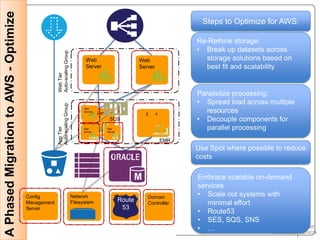

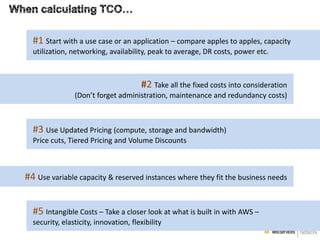

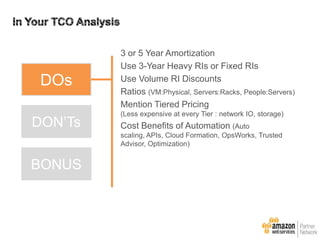

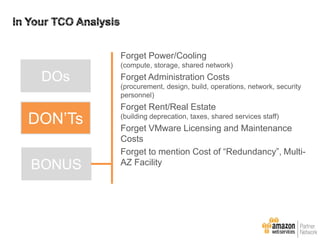

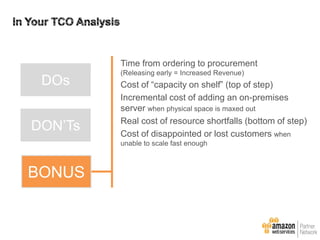

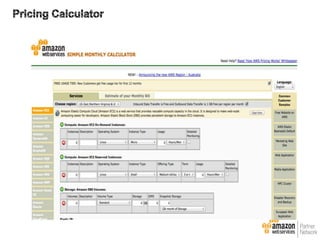

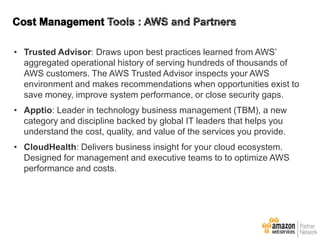

This document gives an overview of why enterprises choose AWS and of best practices for migrating applications to it. It discusses AWS design principles such as designing for failure and implementing elasticity. It also covers calculating total cost of ownership, lessons learned from customer migrations, and next steps for optimizing applications on AWS.