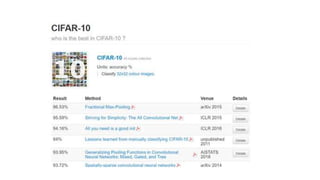

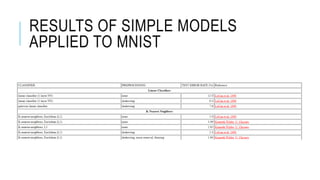

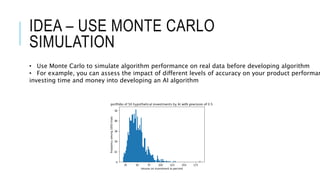

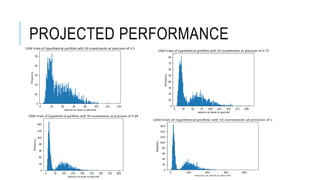

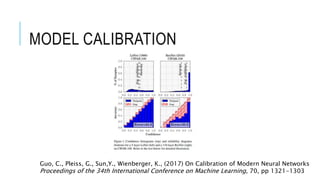

This document discusses methods for setting performance targets and evaluating uncertainty for AI models. It recommends running Monte Carlo simulations to project how different levels of model accuracy would affect product performance before investing in complex algorithms. The projections show whether the baseline accuracy of a simple model is sufficient or whether a higher accuracy target is needed. Simulations can also estimate whether gathering more data would significantly improve performance. Calibrated confidence scores matter for applications that make per-instance decisions or risk assessments.
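As a rough illustration of the simulation idea, the sketch below projects a product-level metric across several candidate accuracy levels. It is not the document's own method: the function names (`simulate_product_value`, `sweep`), the payoff model (a fixed gain per correct prediction and a fixed cost per error), and all numeric defaults are assumptions chosen only to make the example concrete.

```python
import random
import statistics


def simulate_product_value(accuracy, n_cases=1000, n_trials=200,
                           gain_correct=1.0, cost_error=4.0, seed=0):
    """Monte Carlo estimate of net value per case at a given model accuracy.

    Assumed (hypothetical) payoff model: each correct prediction earns
    `gain_correct`, each error costs `cost_error`. Returns a tuple of
    (mean, 5th percentile, 95th percentile) over the simulated trials.
    """
    rng = random.Random(seed)
    outcomes = []
    for _ in range(n_trials):
        # Each case is an independent Bernoulli draw with P(correct) = accuracy.
        correct = sum(rng.random() < accuracy for _ in range(n_cases))
        net = correct * gain_correct - (n_cases - correct) * cost_error
        outcomes.append(net / n_cases)
    outcomes.sort()
    low = outcomes[int(0.05 * (n_trials - 1))]
    high = outcomes[int(0.95 * (n_trials - 1))]
    return statistics.mean(outcomes), low, high


def sweep(accuracies=(0.70, 0.80, 0.90, 0.95)):
    """Run the simulation for each candidate accuracy target."""
    return {acc: simulate_product_value(acc) for acc in accuracies}


if __name__ == "__main__":
    for acc, (mean, low, high) in sweep().items():
        print(f"accuracy={acc:.2f}  mean={mean:+.3f}  "
              f"90% interval=[{low:+.3f}, {high:+.3f}]")
```

Under these assumed payoffs the expected net value per case is `5 * accuracy - 4`, so the break-even point sits at 80% accuracy. A sweep like this makes it visible whether a simple baseline already clears the level the product needs, and how wide the uncertainty band is at each target.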