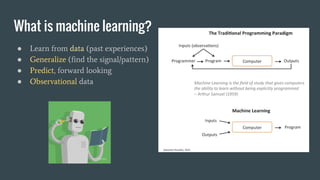

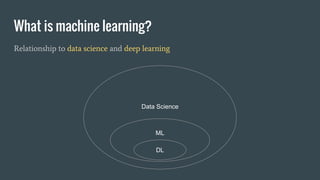

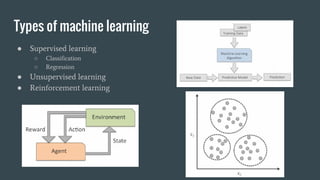

This document outlines an introductory machine learning course covering key concepts, applications, and the main types of machine learning, such as supervised and unsupervised learning. It discusses techniques such as linear regression and classification, and how to handle overfitting. The course includes tutorials on sentiment analysis, spam filtering, stock prediction, image recognition, and recommendation engines using Python and Scala. Later classes cover machine learning at scale using tools such as Spark MLlib.

![(Linear) Regression example](https://image.slidesharecdn.com/4e29af15-76d0-4a1f-9798-bb939df6f526-160525062633/85/Machine-learning-key-concepts-12-320.jpg)

● Boston housing dataset

● Median value of houses (MV) vs. average # rooms (RM)

    from sklearn.linear_model import LinearRegression

    # `housing` is assumed to be a pandas DataFrame holding the Boston housing data
    model = LinearRegression()
    x, y = housing[['RM']], housing['MV']
    model.fit(x, y)
    model.score(x, y)  # R² on the data the model was fitted to

R² = 0.48
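To make the R² figure on this slide concrete, here is a minimal sketch of what a one-feature least-squares fit and its R² score compute. It uses plain Python and a handful of made-up numbers rather than the Boston housing data, and the helper names `fit_line` and `r_squared` are hypothetical, chosen only for this illustration.

```python
def fit_line(xs, ys):
    """Ordinary least squares for a single feature: returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def r_squared(xs, ys, slope, intercept):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_y = sum(ys) / len(ys)
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1.0 - ss_res / ss_tot


# Made-up toy values standing in for RM and MV; NOT the Boston housing data.
rooms = [4.0, 5.0, 6.0, 7.0, 8.0]
value = [12.0, 20.0, 19.0, 30.0, 34.0]

slope, intercept = fit_line(rooms, value)
r2 = r_squared(rooms, value, slope, intercept)
print(f"slope={slope:.2f} intercept={intercept:.2f} R2={r2:.3f}")
```

An R² of 1 would mean the line reproduces every target value exactly; an R² of 0 means it does no better than always predicting the mean of the targets.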

![(Linear) Regression example](https://image.slidesharecdn.com/4e29af15-76d0-4a1f-9798-bb939df6f526-160525062633/85/Machine-learning-key-concepts-13-320.jpg)

● Boston housing dataset

● Median value of houses (MV) vs. average # rooms (RM) and industrial zoning proportions (INDUS)

    from sklearn.linear_model import LinearRegression

    # `housing` is assumed to be a pandas DataFrame holding the Boston housing data
    model = LinearRegression()
    x, y = housing[['RM', 'INDUS']], housing['MV']
    model.fit(x, y)
    model.score(x, y)  # R² on the data the model was fitted to

R² = 0.53
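The two slides compare a one-feature fit with a two-feature fit on the same rows. The sketch below repeats that comparison on synthetic data, assuming NumPy is available and using `np.linalg.lstsq` as a stand-in for `LinearRegression`. The arrays `rm`, `indus`, and `mv`, their generating coefficients, and the helper `train_r2` are all invented for illustration and are not derived from the Boston housing data.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 200

# Synthetic stand-ins for RM, INDUS and MV (assumed values, not the real dataset).
rm = rng.normal(6.0, 0.7, n)
indus = rng.normal(11.0, 6.0, n)
mv = 9.0 * rm - 0.3 * indus - 30.0 + rng.normal(0.0, 5.0, n)


def train_r2(features, target):
    """Fit least squares with an intercept and return R^2 on the same data."""
    X = np.column_stack([np.ones(len(target))] + list(features))
    coef, *_ = np.linalg.lstsq(X, target, rcond=None)
    residuals = target - X @ coef
    ss_res = float(residuals @ residuals)
    ss_tot = float(((target - target.mean()) ** 2).sum())
    return 1.0 - ss_res / ss_tot


r2_one = train_r2([rm], mv)         # one feature, as on the first slide
r2_two = train_r2([rm, indus], mv)  # two features, as on the second slide
print(f"one feature: {r2_one:.3f}, two features: {r2_two:.3f}")
```

When R² is measured on the training data, adding a feature to a least-squares model with an intercept can never lower it. An increase like the one from 0.48 to 0.53 therefore does not by itself show that the extra feature helps on unseen data, which is where the course's discussion of overfitting comes in.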