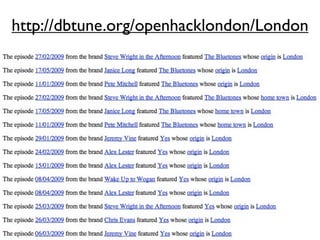

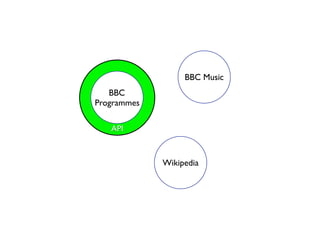

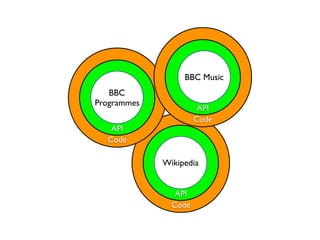

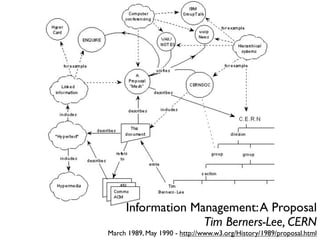

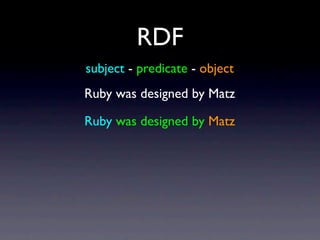

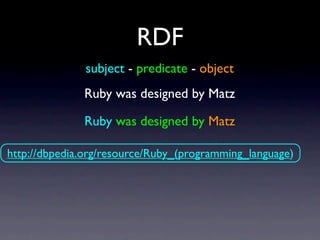

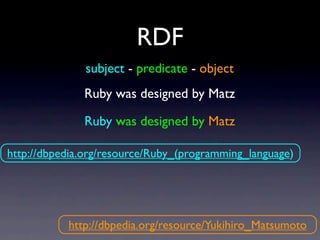

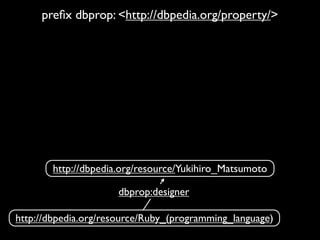

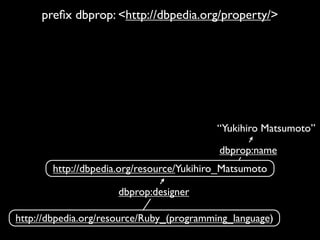

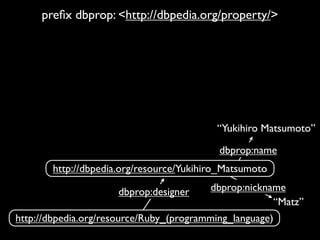

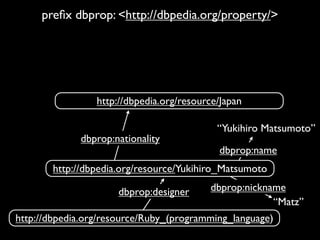

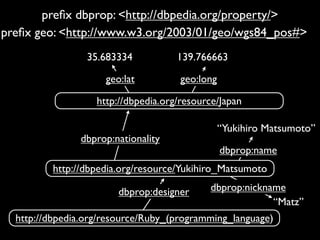

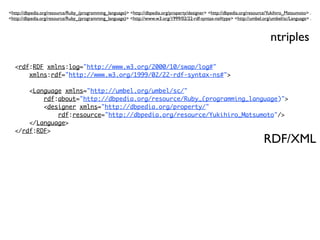

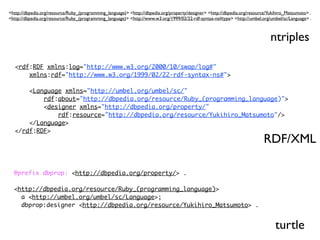

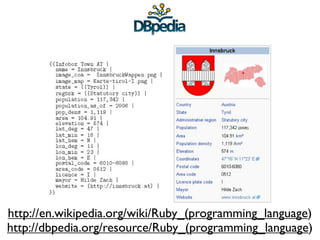

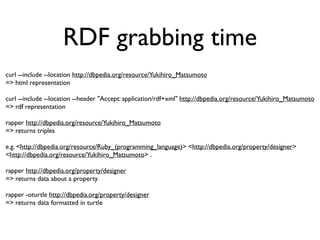

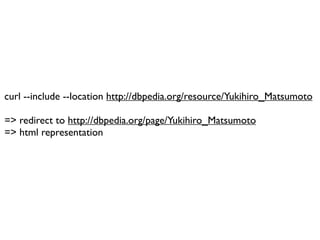

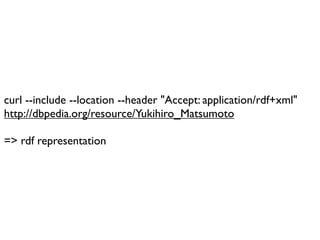

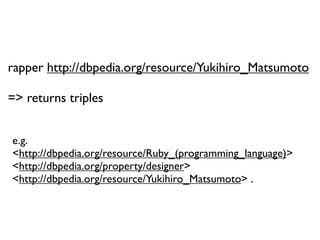

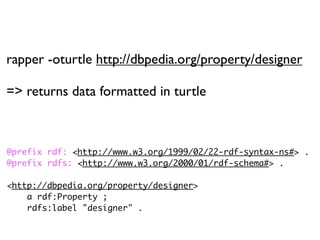

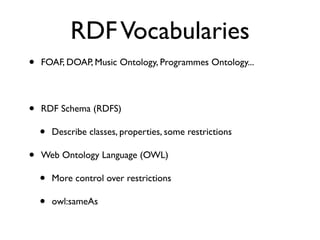

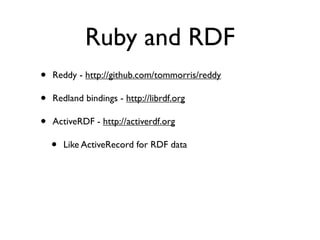

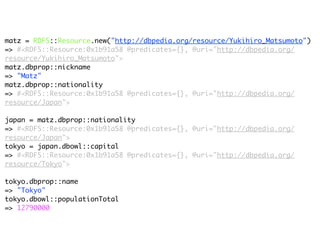

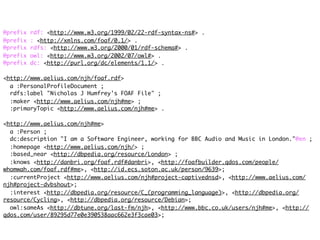

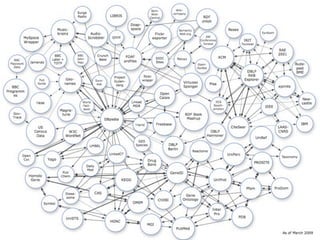

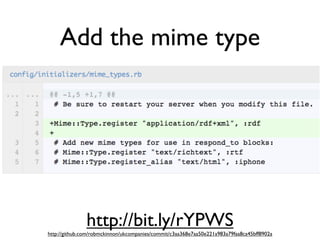

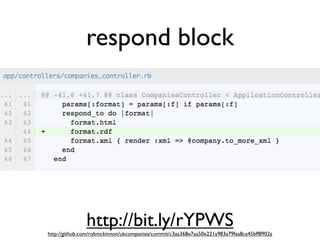

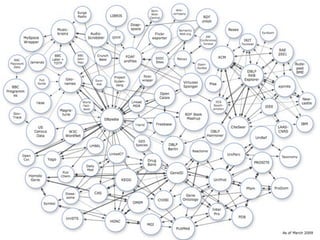

The document discusses Linked Data and RDF: how data from different sources on the web can be connected using URIs, HTTP, and structured data formats such as RDF. It gives examples of retrieving data from DBpedia and representing it as RDF with Ruby tools and libraries. It also covers publishing RDF from a Rails application by registering RDF MIME types and generating RDF representations of resources.
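The two halves of that workflow, asking a Linked Data server for RDF and emitting RDF yourself, can be sketched with only Ruby's standard library. This is a minimal illustration, not the Ruby tools shown in the slides: the request relies on HTTP content negotiation (an `Accept` header naming an RDF media type), and the serializer writes simple N-Triples. The helper names `rdf_request`, `ntriples_literal` and `to_ntriples`, the DBpedia resource for Berlin, and the `owl:sameAs` link to Wikidata are illustrative assumptions. The request is built but never sent, so the sketch runs offline.

```ruby
require "net/http"
require "uri"

# Hypothetical helper: build (but do not send) a GET request for a Linked Data
# resource, using the Accept header to ask for RDF instead of HTML.
def rdf_request(resource_uri, accept = "application/rdf+xml")
  Net::HTTP::Get.new(URI(resource_uri), "Accept" => accept)
end

# Hypothetical helper: quote a plain string as an N-Triples literal,
# escaping backslashes, double quotes and newlines.
def ntriples_literal(value)
  escaped = value.to_s
                 .gsub("\\") { "\\\\" }
                 .gsub('"') { '\\"' }
                 .gsub("\n") { "\\n" }
  %("#{escaped}")
end

# Hypothetical helper: serialize [subject, predicate, object] triples as
# N-Triples. Subjects and predicates are URI strings; an object is written
# as <uri> when it is a URI object and as a plain literal otherwise.
def to_ntriples(triples)
  triples.map do |s, p, o|
    obj = o.is_a?(URI::Generic) ? "<#{o}>" : ntriples_literal(o)
    "<#{s}> <#{p}> #{obj} ."
  end.join("\n") + "\n"
end

if __FILE__ == $PROGRAM_NAME
  berlin = "http://dbpedia.org/resource/Berlin" # assumed example resource
  req = rdf_request(berlin)
  puts "#{req.method} #{req.path} Accept: #{req['Accept']}"

  triples = [
    [berlin, "http://www.w3.org/2000/01/rdf-schema#label", "Berlin"],
    [berlin, "http://www.w3.org/2002/07/owl#sameAs",
     URI("http://www.wikidata.org/entity/Q64")]
  ]
  print to_ntriples(triples)
end
```

A real application would more likely use a dedicated RDF library, which also handles language tags, datatypes and other serializations. On the Rails side, the MIME-type step is typically a line such as `Mime::Type.register "application/n-triples", :nt` in `config/initializers/mime_types.rb`, after which a controller's `respond_to` block can answer `format.nt` with output like the above.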