lecture1.pptx

lecture1.pptx

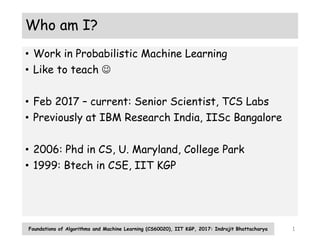

- 1. Who am I?
  - Work in Probabilistic Machine Learning
  - Like to teach
  - Feb 2017 – current: Senior Scientist, TCS Labs
  - Previously at IBM Research India, IISc Bangalore
  - 2006: PhD in CS, U. Maryland, College Park
  - 1999: BTech in CSE, IIT KGP
  - Foundations of Algorithms and Machine Learning (CS60020), IIT KGP, 2017: Indrajit Bhattacharya

- 2. What about you?
  - And what would you like to get out of this course?

- 3. Logistics
  - Pre-requisites
    - Probability
    - Linear algebra, optimization, high school calculus, …
  - Recommended Books
    - “Machine Learning: A Probabilistic Perspective”, Kevin Murphy
    - “Pattern Recognition and Machine Learning”, Christopher Bishop
    - “An Introduction to Probabilistic Graphical Models”, Michael Jordan
  - Evaluation
    - Assignments
    - Exam

- 4. Machine Learning: Why and What?

- 5. How are ML problems different?
  - Learning
    - What does it mean?
    - Abductive and inductive reasoning vs deductive reasoning
  - Why do machines / programs need to learn?
    - Does it “make sense” to learn to sort or to add?

- 6. Why has ML taken off?
  - Data
  - Computational resources, storage
  - Business interest
  - Toolkits
  - Sound mathematical principles

- 7. Types of ML problems: Supervised Learning
  - Predict response / output / label given input
  - Input: d-dimensional vector of features / attributes
  - Output:
    - Categorical: binary, multi-class, multi-label
    - Real valued: simple regression, multiple regression
    - Ordinal: ordinal regression
    - Ranking: learning to rank
    - Structured: sequence, tree, graph
  - Training set: both inputs and outputs available
  - Test set: for evaluation; only inputs available
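
The split between a training set (inputs with labels) and a test set (inputs only) can be sketched in a few lines. The example below is a minimal illustration, not something taken from the lecture: the 1-nearest-neighbour rule, the function name `nearest_neighbour_predict`, and the toy data are all assumptions chosen to keep the sketch short.

```python
import math


def nearest_neighbour_predict(train_x, train_y, x):
    """Return the label of the training input closest to x (Euclidean distance)."""
    best_index = min(range(len(train_x)), key=lambda i: math.dist(train_x[i], x))
    return train_y[best_index]


# Training set: inputs (d = 2 features) together with their categorical labels.
# The numbers and label names are made up for illustration.
train_x = [(1.0, 1.0), (1.5, 2.0), (5.0, 5.0), (6.0, 5.5)]
train_y = ["small", "small", "large", "large"]

# Test set: only the inputs are shown to the learner.
test_x = [(1.2, 1.1), (5.5, 5.0)]

predictions = [nearest_neighbour_predict(train_x, train_y, x) for x in test_x]
print(predictions)
```

Evaluation would then compare `predictions` against the held-back test labels, which the learner never sees.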

- 8. Types of ML problems: Unsupervised Learning
  - Also called knowledge discovery
  - No labels available
  - No clear notion of training or test
  - Discover “patterns”, e.g. clusters in the data
  - Density estimation
  - Evaluation?
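
As one concrete instance of discovering clusters without labels, here is a small k-means sketch on one-dimensional data. The slide does not name k-means; the algorithm choice, the function name `kmeans_1d`, the starting centres, and the data points are all assumptions made for illustration.

```python
def kmeans_1d(points, centres, iterations=10):
    """Alternate between assigning points to the nearest centre and re-averaging."""
    clusters = [[] for _ in centres]
    for _ in range(iterations):
        clusters = [[] for _ in centres]
        for p in points:
            nearest = min(range(len(centres)), key=lambda k: abs(p - centres[k]))
            clusters[nearest].append(p)
        # Move each centre to the mean of its cluster; keep it if the cluster is empty.
        centres = [
            sum(c) / len(c) if c else centres[k] for k, c in enumerate(clusters)
        ]
    return centres, clusters


# Unlabelled data: no outputs are given, only inputs (made-up values).
points = [1.0, 1.2, 0.8, 5.0, 5.3, 4.7]
centres, clusters = kmeans_1d(points, centres=[0.0, 6.0])
print(centres)
print(clusters)
```

Note that nothing in the data says whether two clusters is the "right" answer, which is the evaluation question the slide raises.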

- 9. Types of ML Problems: Reinforcement Learning
  - Examples:
    1. A robot vehicle on Mars
    2. Learning to play chess
  - Direct feedback only at the end
  - Possibly closer to human learning
  - Not covered in this course

- 10. Concept Learning: Example
  - Hidden set of instances called concept
  - Need to predict if a test instance is in that set
  - Given training examples from that set

- 11. Probabilistic Concept Learning
  - What are possible concepts? (Hypothesis space)
  - How likely are the training examples to come from a particular concept? (Training likelihood)
  - How likely is the test example to belong to a particular concept? (Test likelihood)
  - Bayesian Concept Learning
    - How likely is a particular concept a priori? (Prior probability)
    - How likely is a particular concept after seeing the training data? (Posterior probability)
    - How likely is the test example considering all these concepts and their posterior probabilities?
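
The questions on this slide map onto a short computation. The sketch below is an illustration under stated assumptions, not the lecturer's own example: the hypothesis space over the integers 1 to 16, the uniform prior, and the assumption that training examples are drawn uniformly and independently from the true concept are all choices made here. The names `hypotheses`, `likelihood`, `posterior`, and `predictive` are hypothetical.

```python
from fractions import Fraction

# Hypothesis space: candidate concepts, each a set of integers in 1..16 (assumed).
universe = range(1, 17)
hypotheses = {
    "even": {n for n in universe if n % 2 == 0},
    "odd": {n for n in universe if n % 2 == 1},
    "powers of two": {1, 2, 4, 8, 16},
    "multiples of four": {4, 8, 12, 16},
}

# Prior probability of each concept before seeing data (uniform, an assumption).
prior = {name: Fraction(1, len(hypotheses)) for name in hypotheses}


def likelihood(data, concept):
    """Training likelihood, assuming uniform independent draws from the concept."""
    if not all(x in concept for x in data):
        return Fraction(0)
    return Fraction(1, len(concept)) ** len(data)


def posterior(data):
    """Posterior over concepts via Bayes' rule; data must fit at least one concept."""
    unnormalised = {h: prior[h] * likelihood(data, c) for h, c in hypotheses.items()}
    evidence = sum(unnormalised.values())
    return {h: p / evidence for h, p in unnormalised.items()}


def predictive(x, data):
    """Probability that x is in the hidden concept, averaged over all concepts."""
    post = posterior(data)
    return sum(post[h] for h, c in hypotheses.items() if x in c)


train = [4, 8, 16]
post = posterior(train)
for name, p in post.items():
    print(name, float(p))
print("P(12 in concept | data) =", float(predictive(12, train)))
```

Under these assumptions the smaller consistent concepts receive more posterior mass than "even", because a small concept makes the observed examples less of a coincidence. The last function answers the final question on the slide by weighting each concept's verdict on the test example by its posterior probability.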

- 12. Notions in ML
  - Overfitting, generalization, regularization
    - Validation of learning: whether future test instances are correctly predicted
  - Curse of dimensionality
    - Having lots of features is not always a good idea
  - Model selection
    - Need to know many different ML models
    - “No free lunch” theorem
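
One common way to make the curse of dimensionality concrete (an illustration chosen here, not taken from the slide) is to ask how wide a sub-cube must be to contain a fixed fraction of uniformly distributed data in the unit hypercube. With d features, a cube covering a fraction f of the volume needs edge length f^(1/d). The function name `edge_length` is hypothetical.

```python
def edge_length(fraction, dims):
    """Edge of a sub-cube holding `fraction` of the unit hypercube's volume."""
    return fraction ** (1.0 / dims)


# How much of each feature's range is needed to capture 10% of the data?
for d in (1, 2, 10, 100):
    print(f"d = {d:3d}: edge length = {edge_length(0.1, d):.3f}")
```

As d grows the required edge length approaches 1, so a "local" neighbourhood ends up spanning almost the full range of every feature, which is one reason adding features can hurt methods that rely on nearby points.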

- 13. Course Plan
  - Module 1
    - Introduction
    - Machine Learning: What and why
    - Probability review for Machine Learning
    - Estimation and decision theory
    - Simple probabilistic models for classification and regression
  - Module 2
    - Latent variable models
    - Graphical Models: Models for complex (non-iid) data
  - Module 3
    - Non-parametric models, kernel methods
    - Ensemble methods