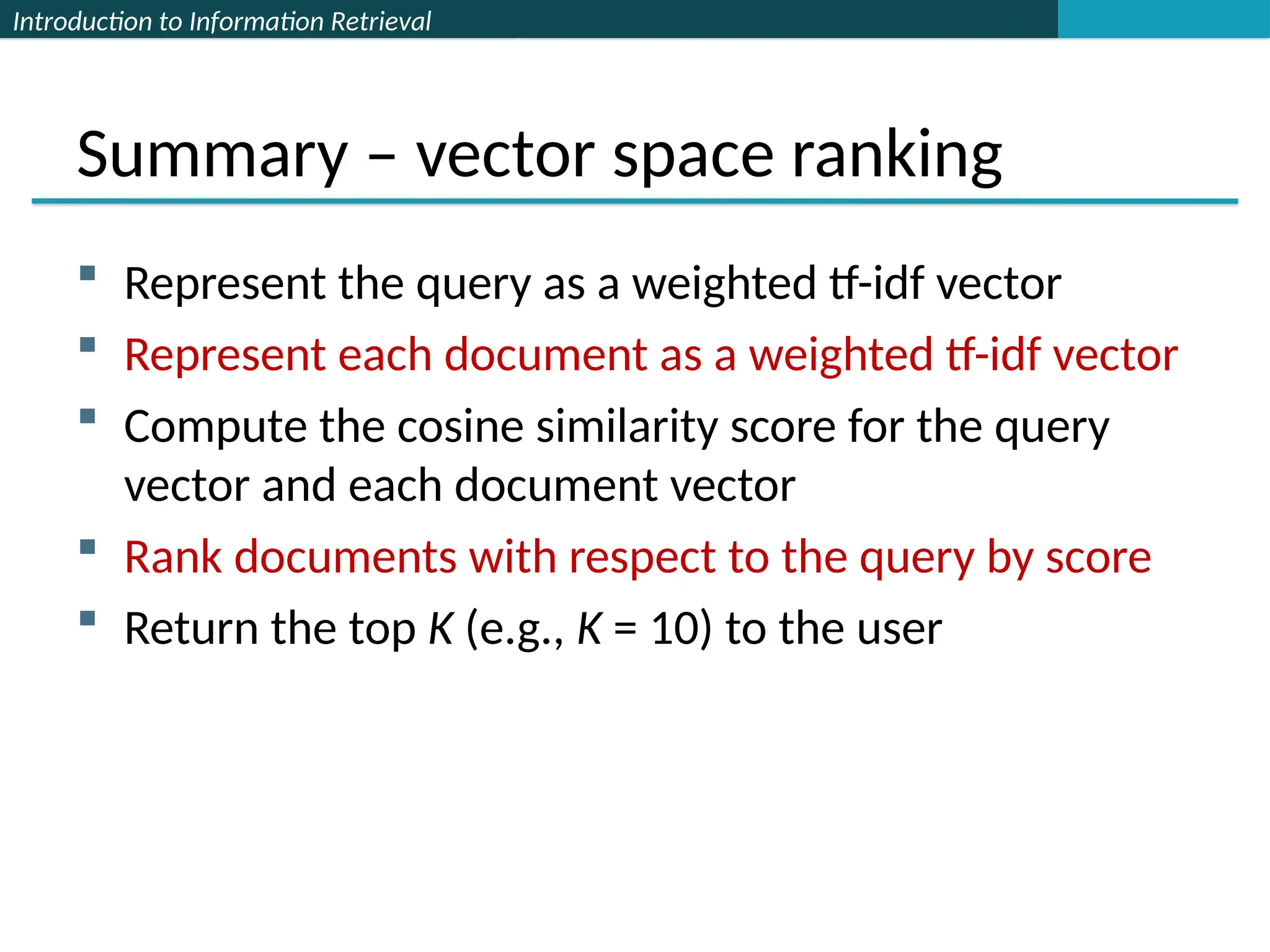

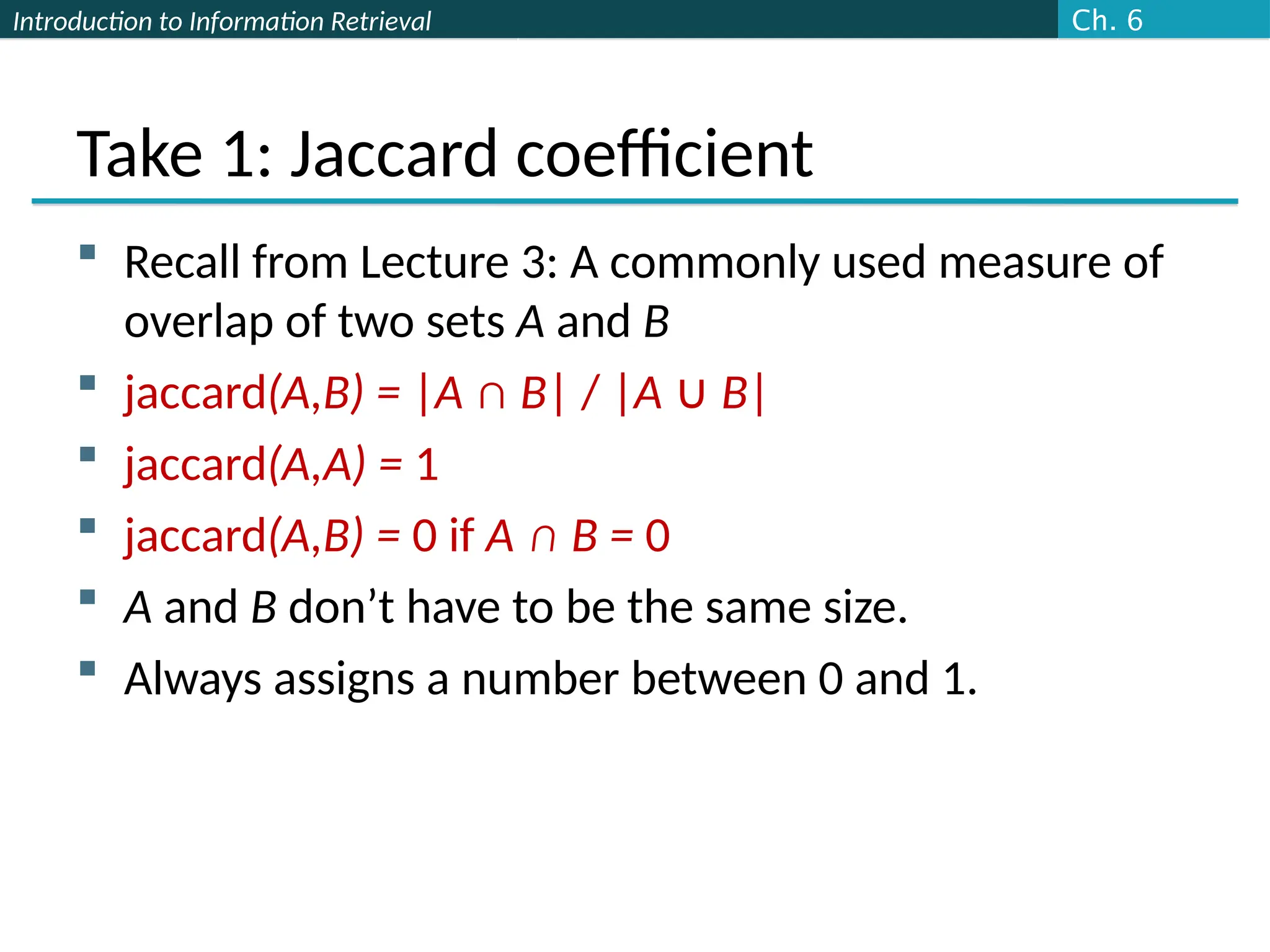

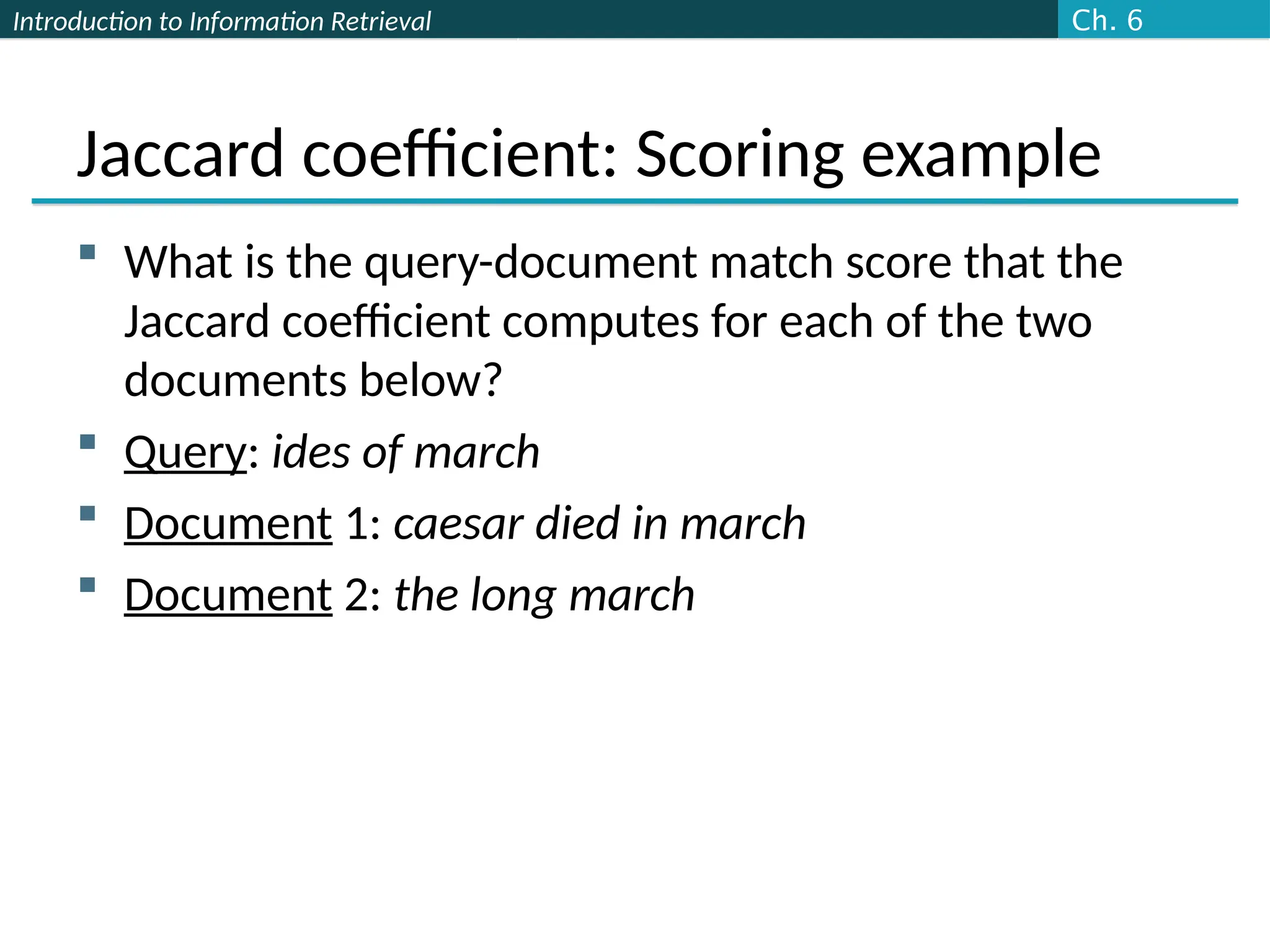

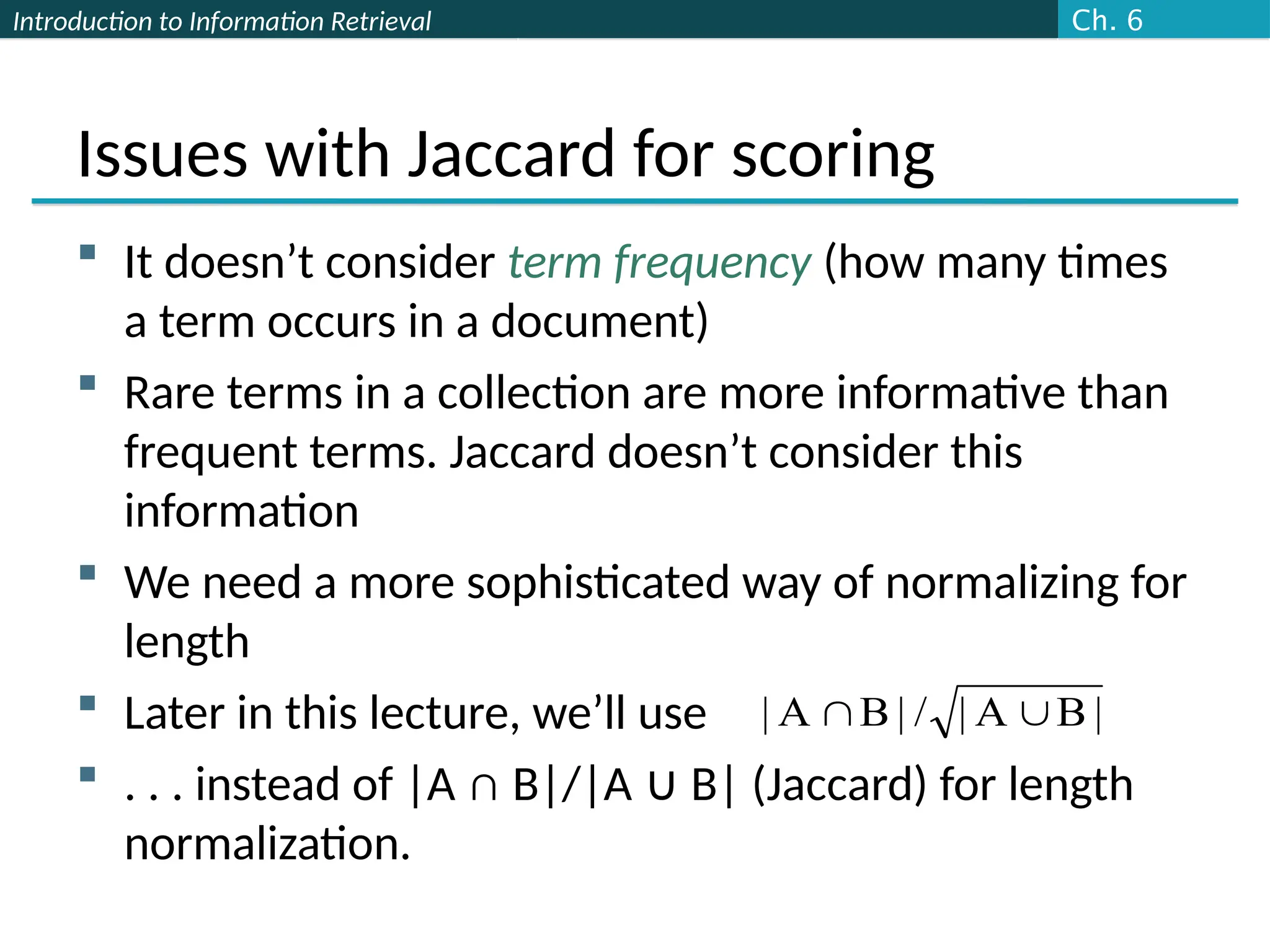

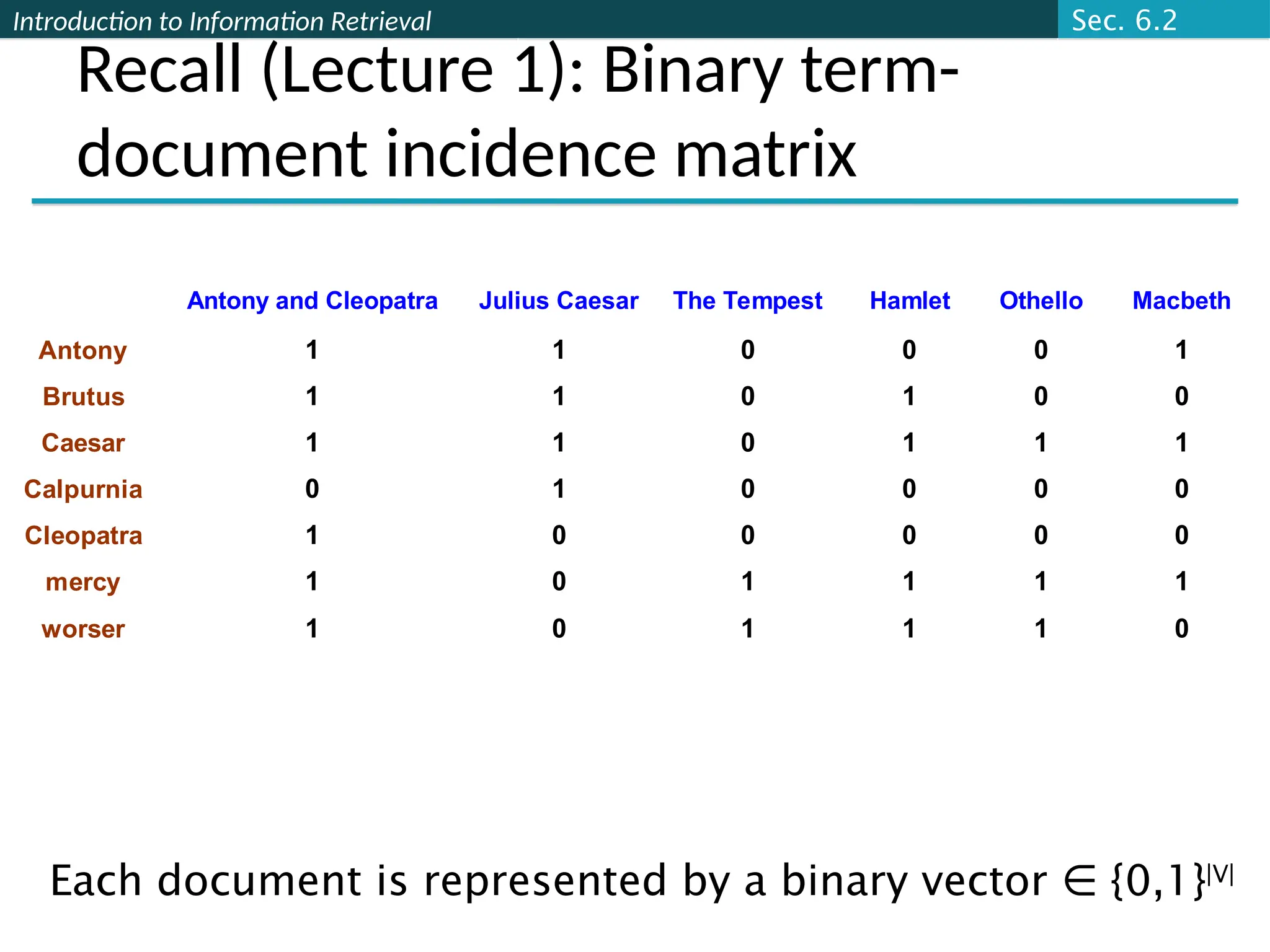

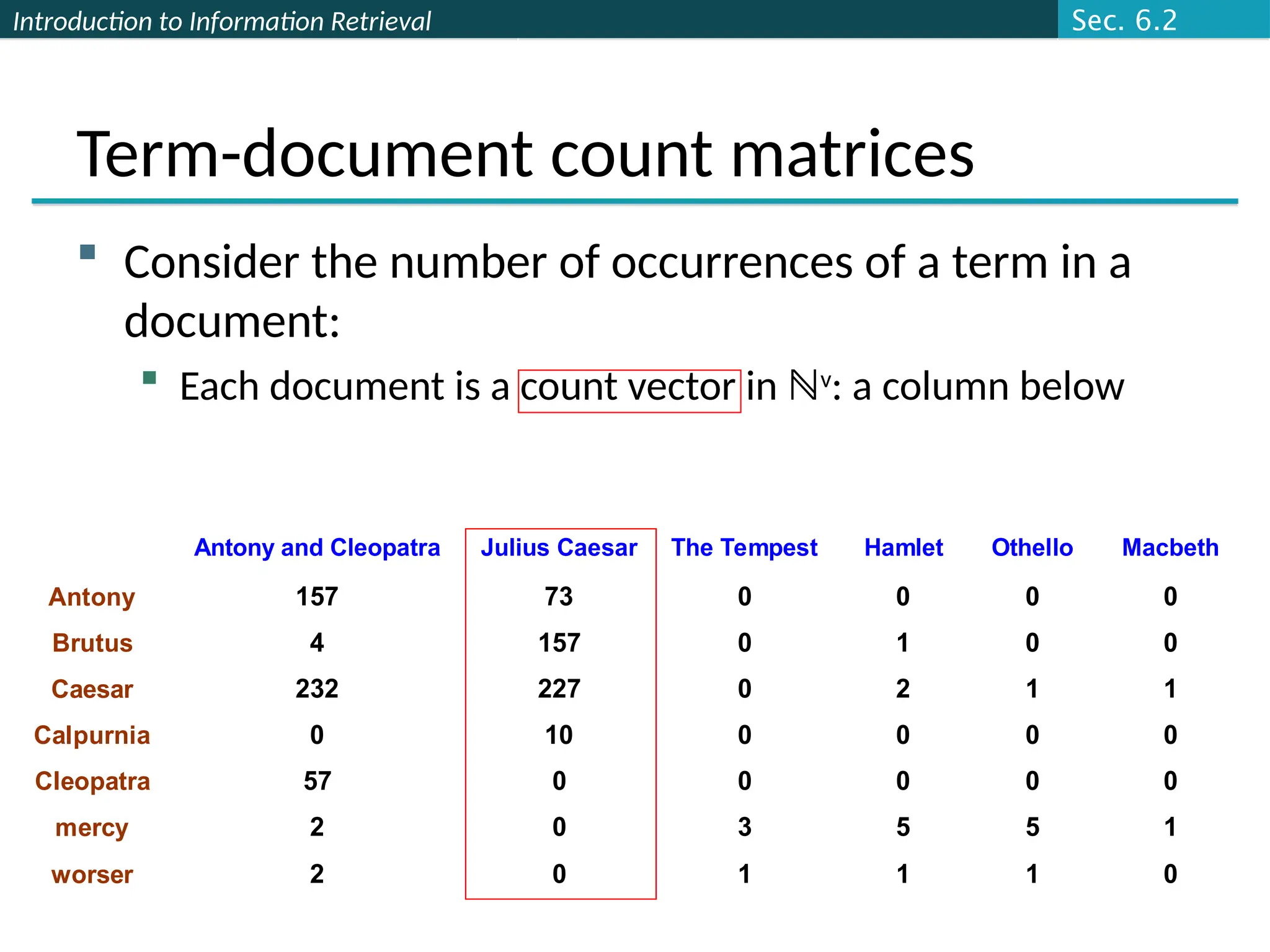

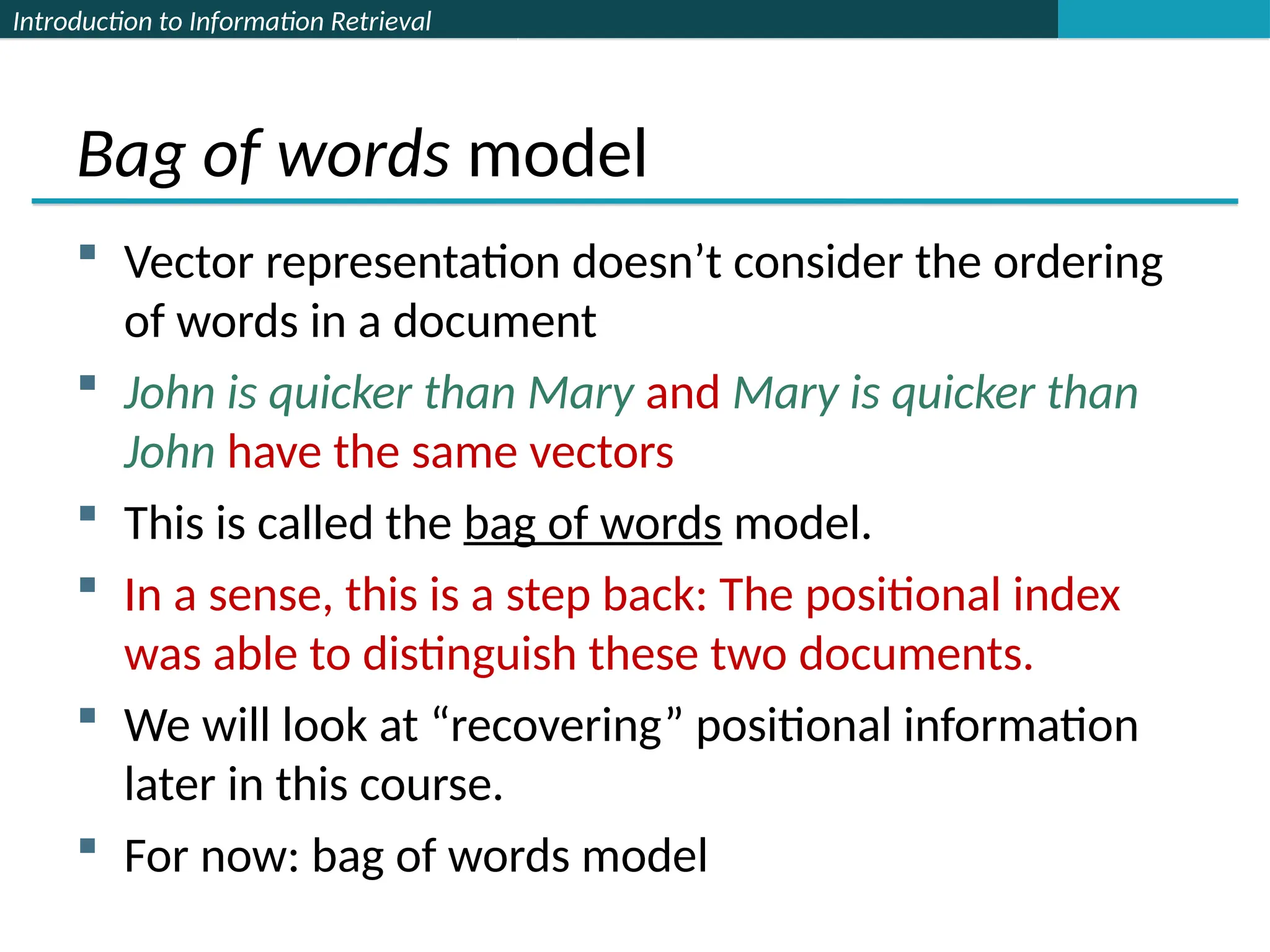

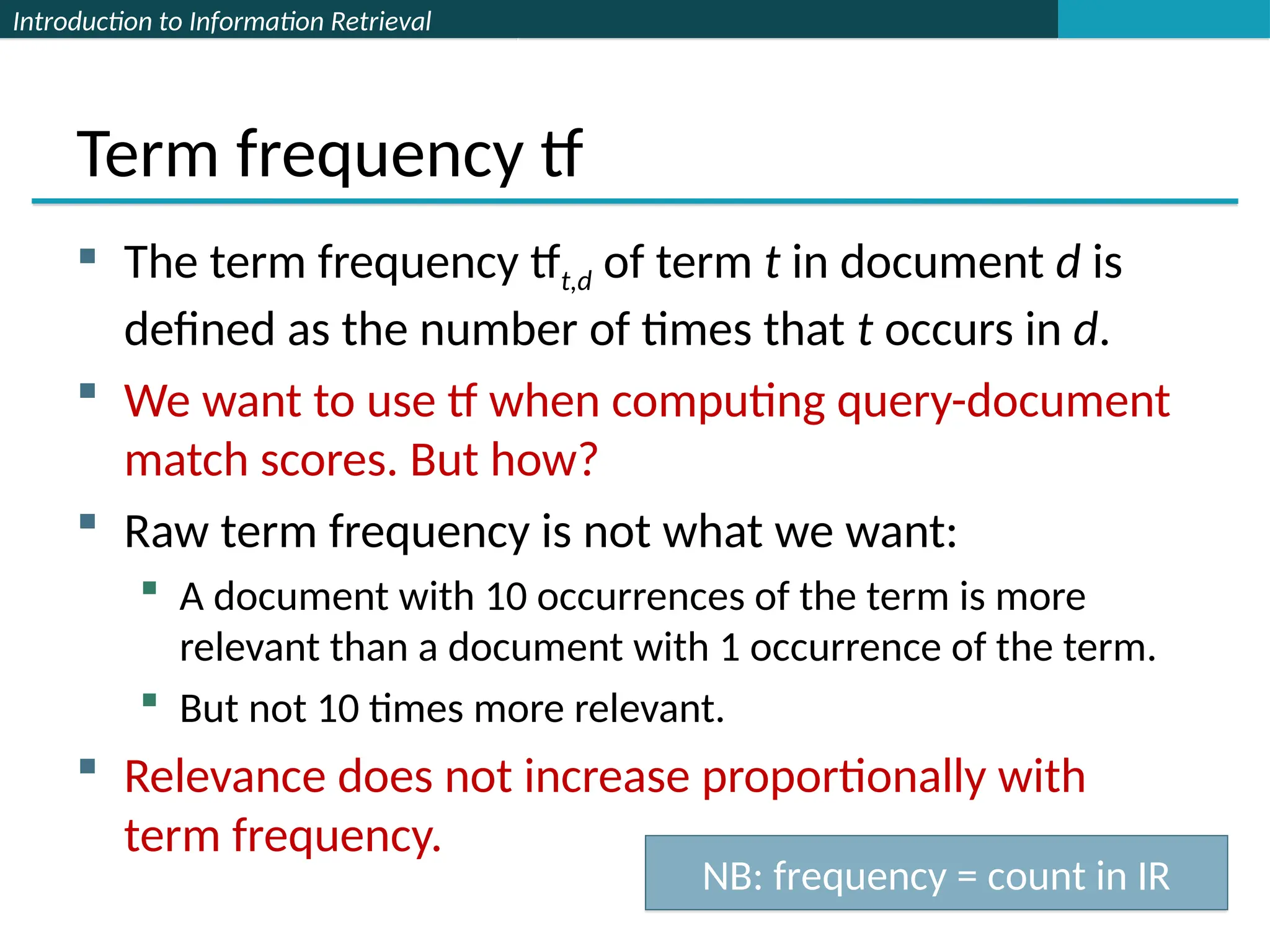

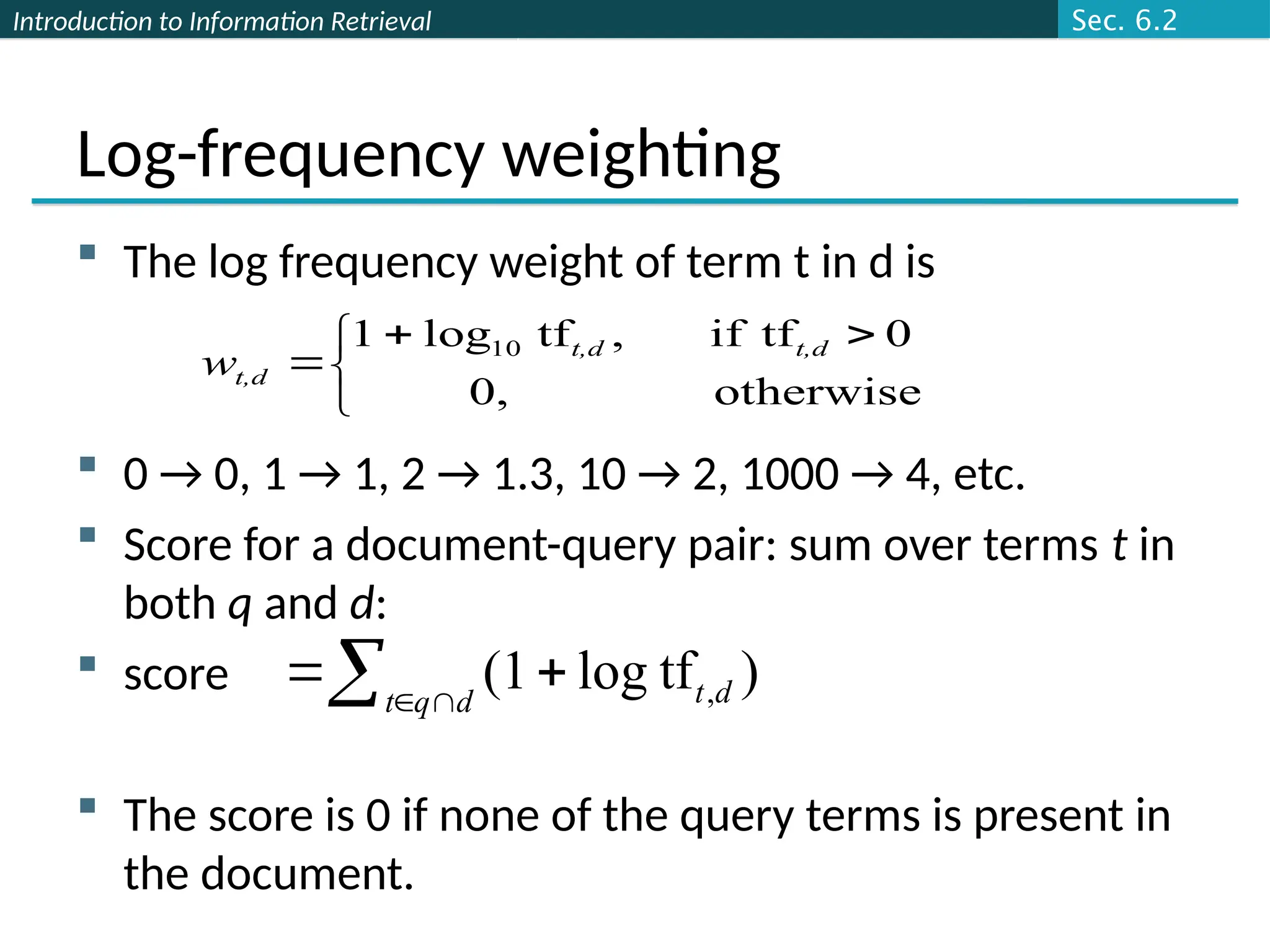

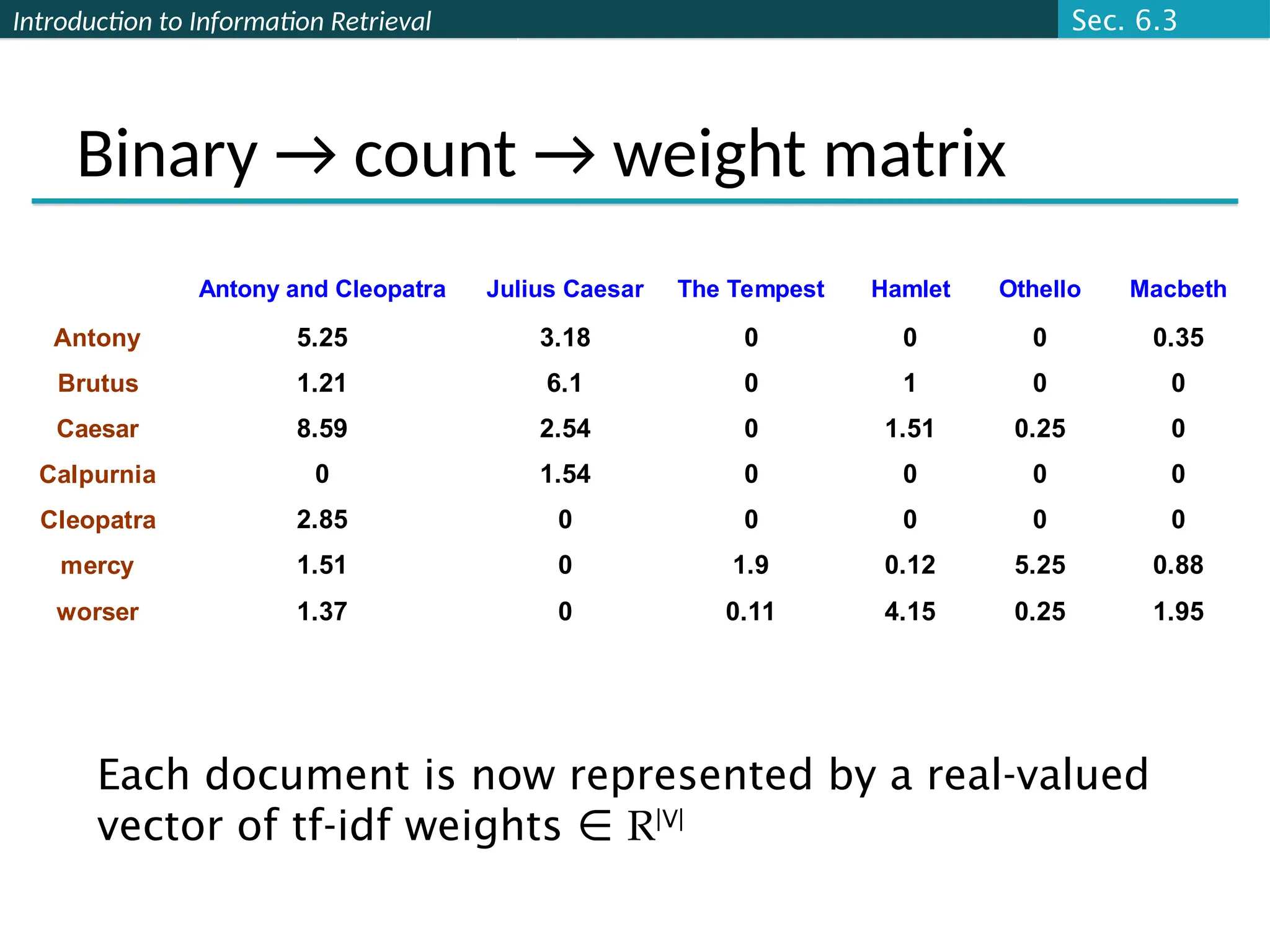

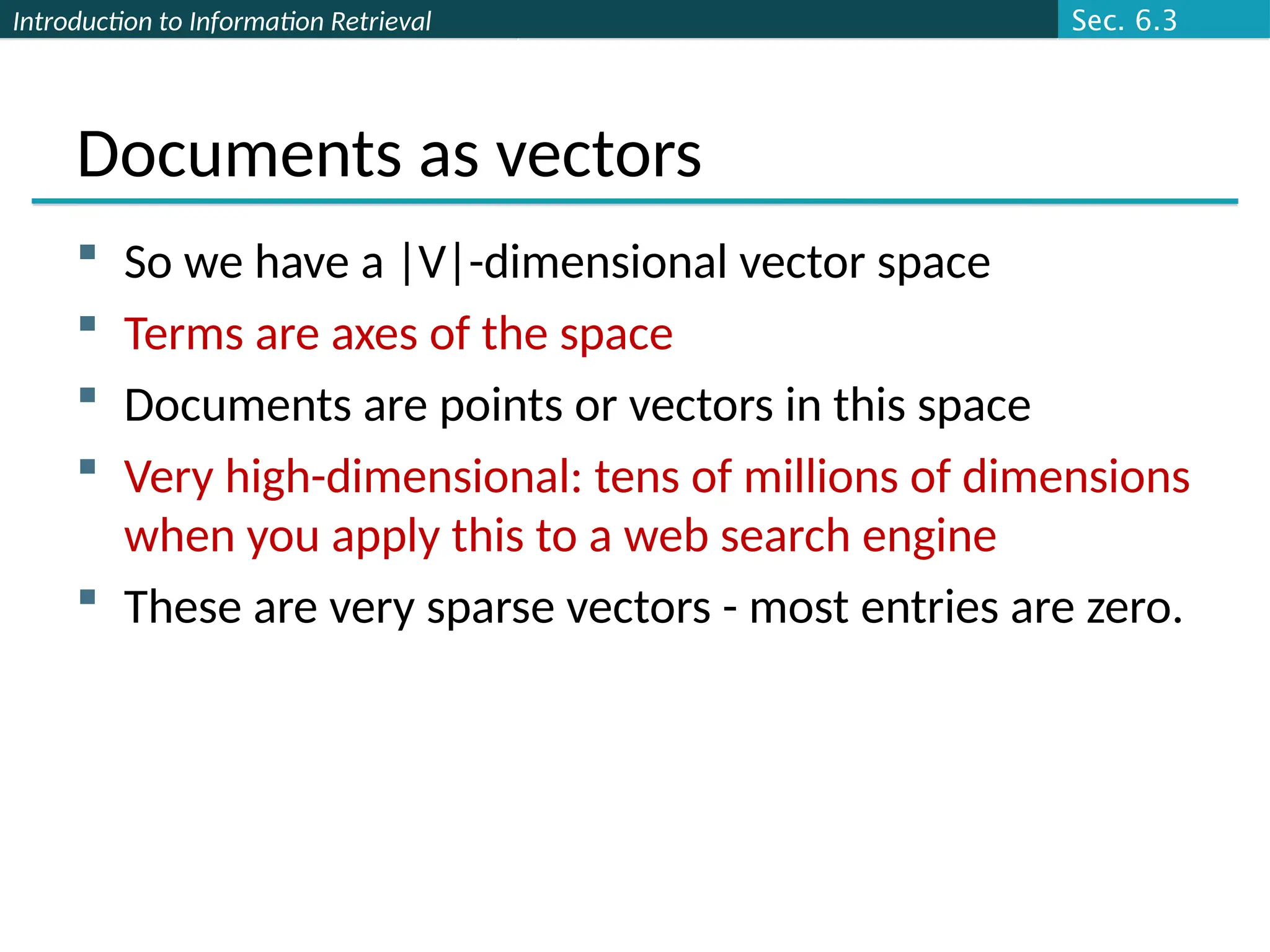

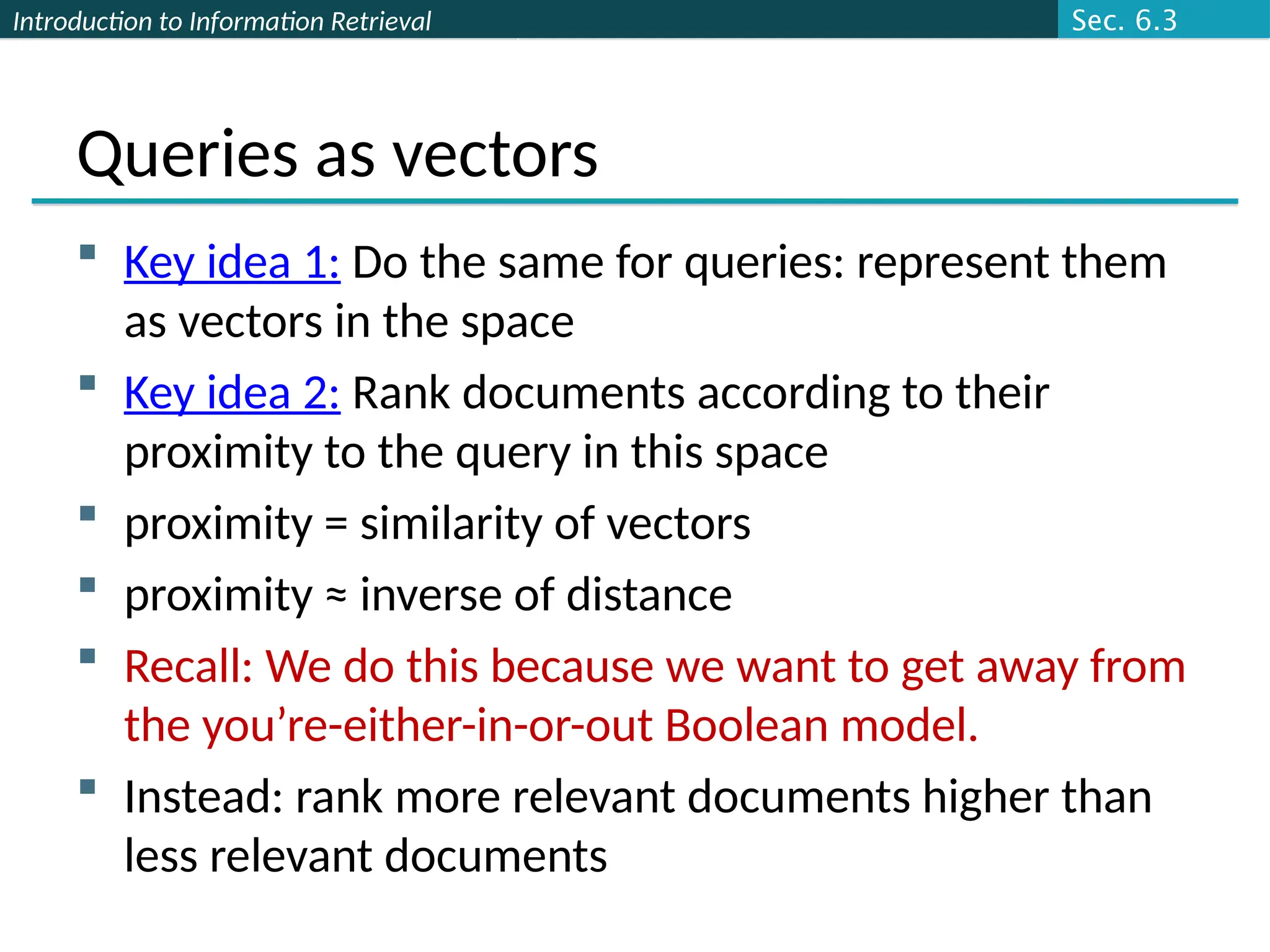

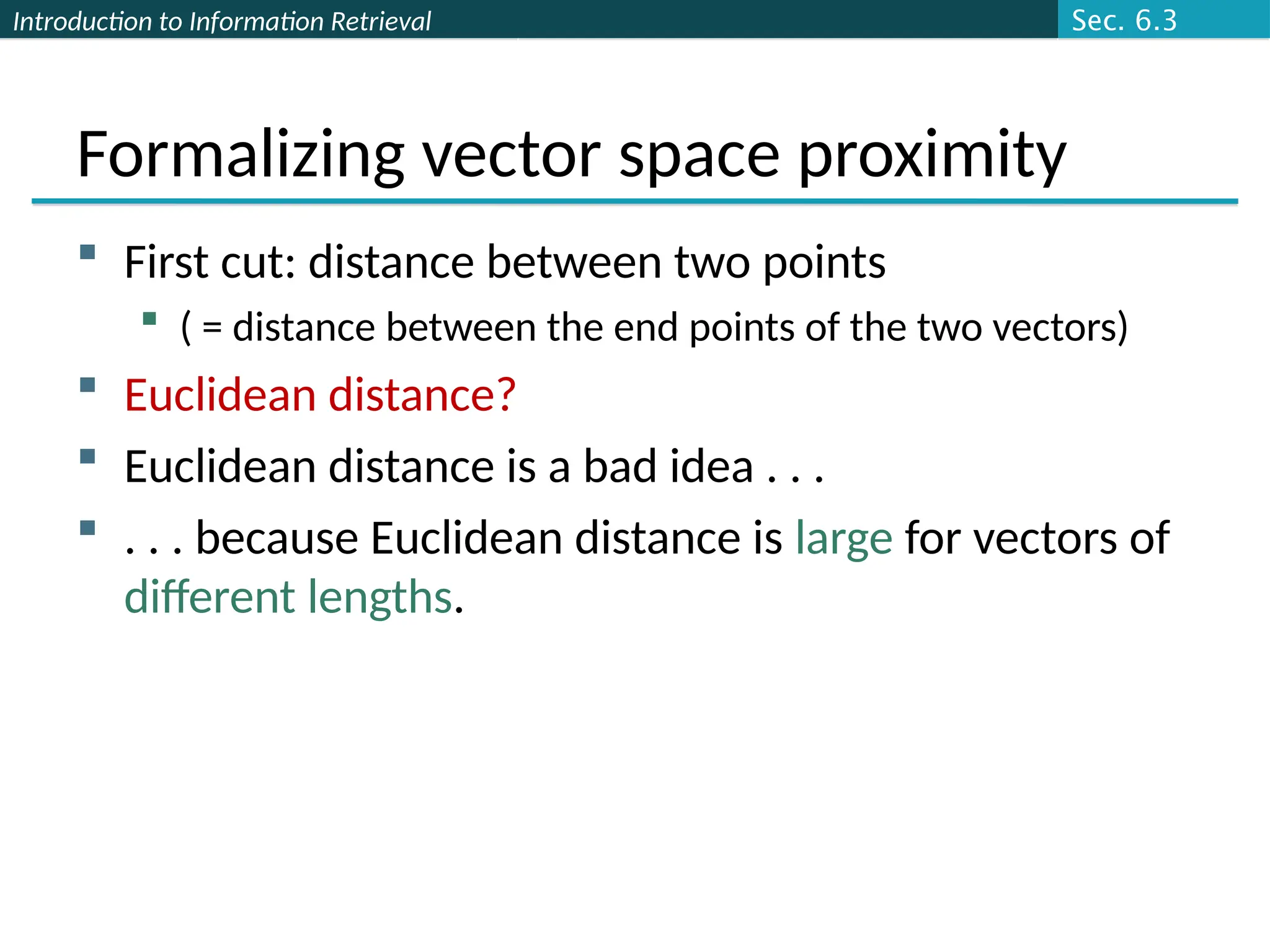

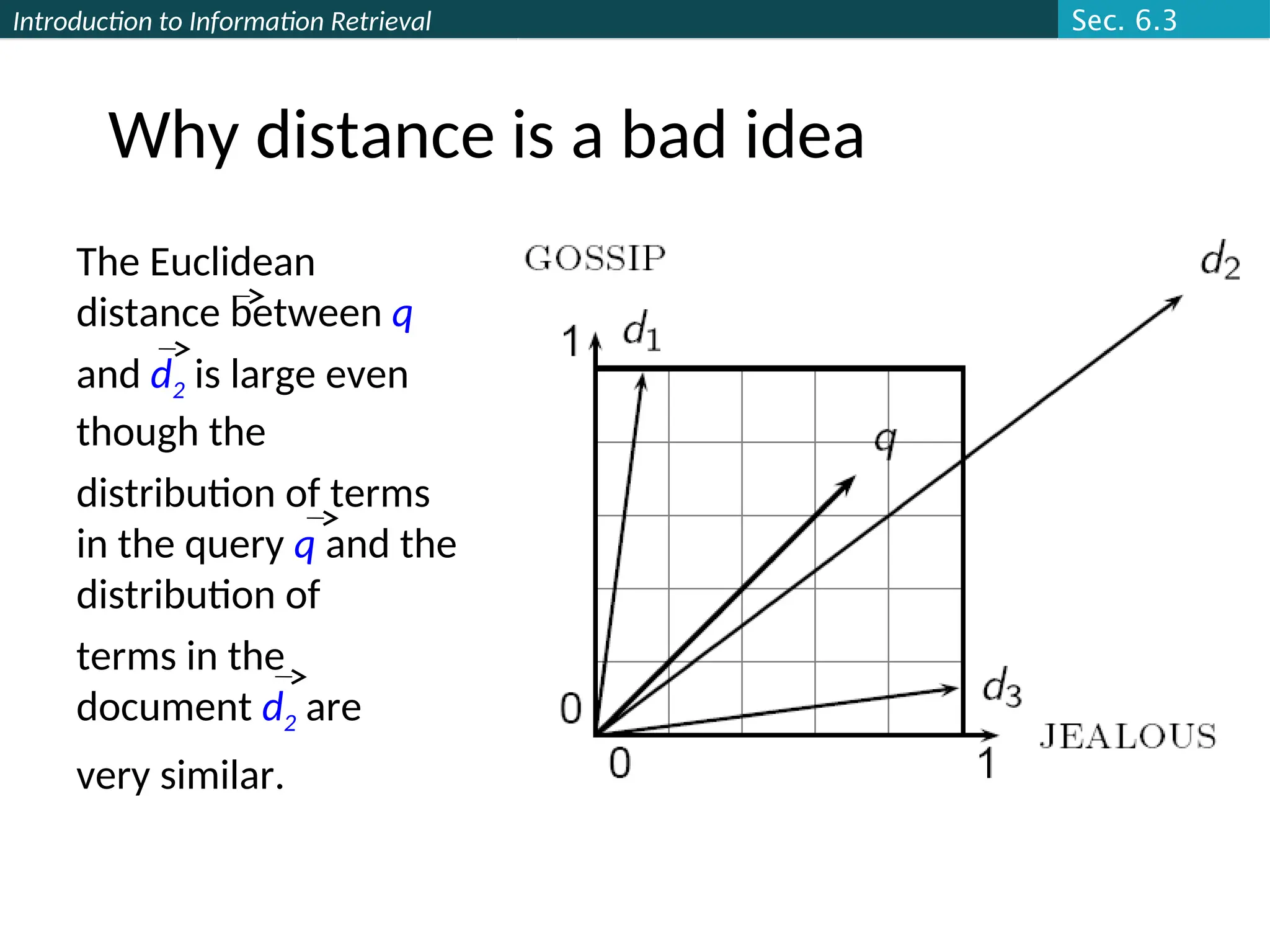

This document introduces information retrieval principles, focusing on scoring, term weighting, and the vector space model. It discusses the shortcomings of Boolean search and presents ranked retrieval as a more effective way to handle user queries, highlighting scoring methods such as the Jaccard coefficient, term frequency, and TF-IDF weighting. It also covers document vectors in high-dimensional space and cosine similarity for ranking relevant documents.

![Introduction to Information Retrieval

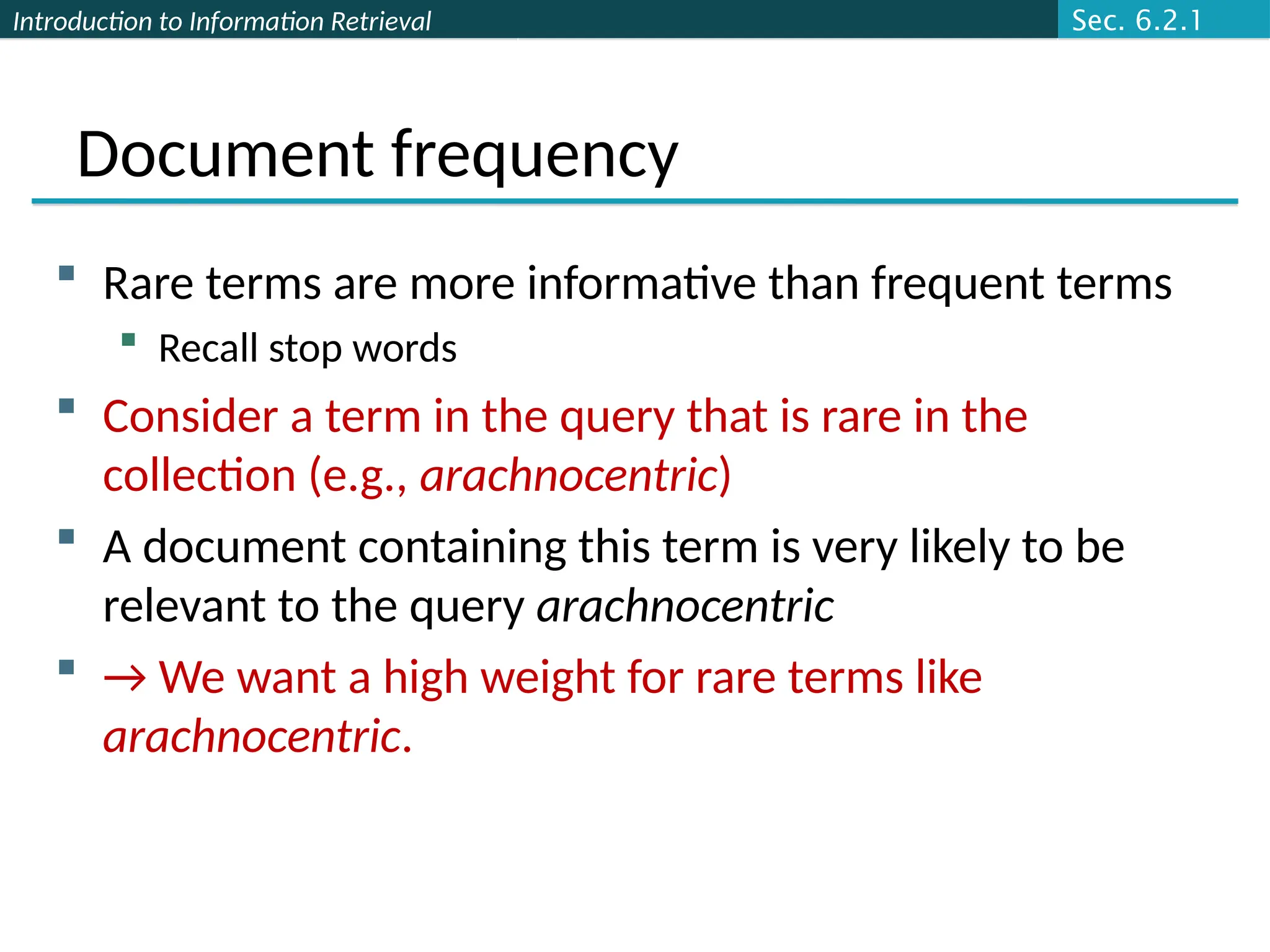

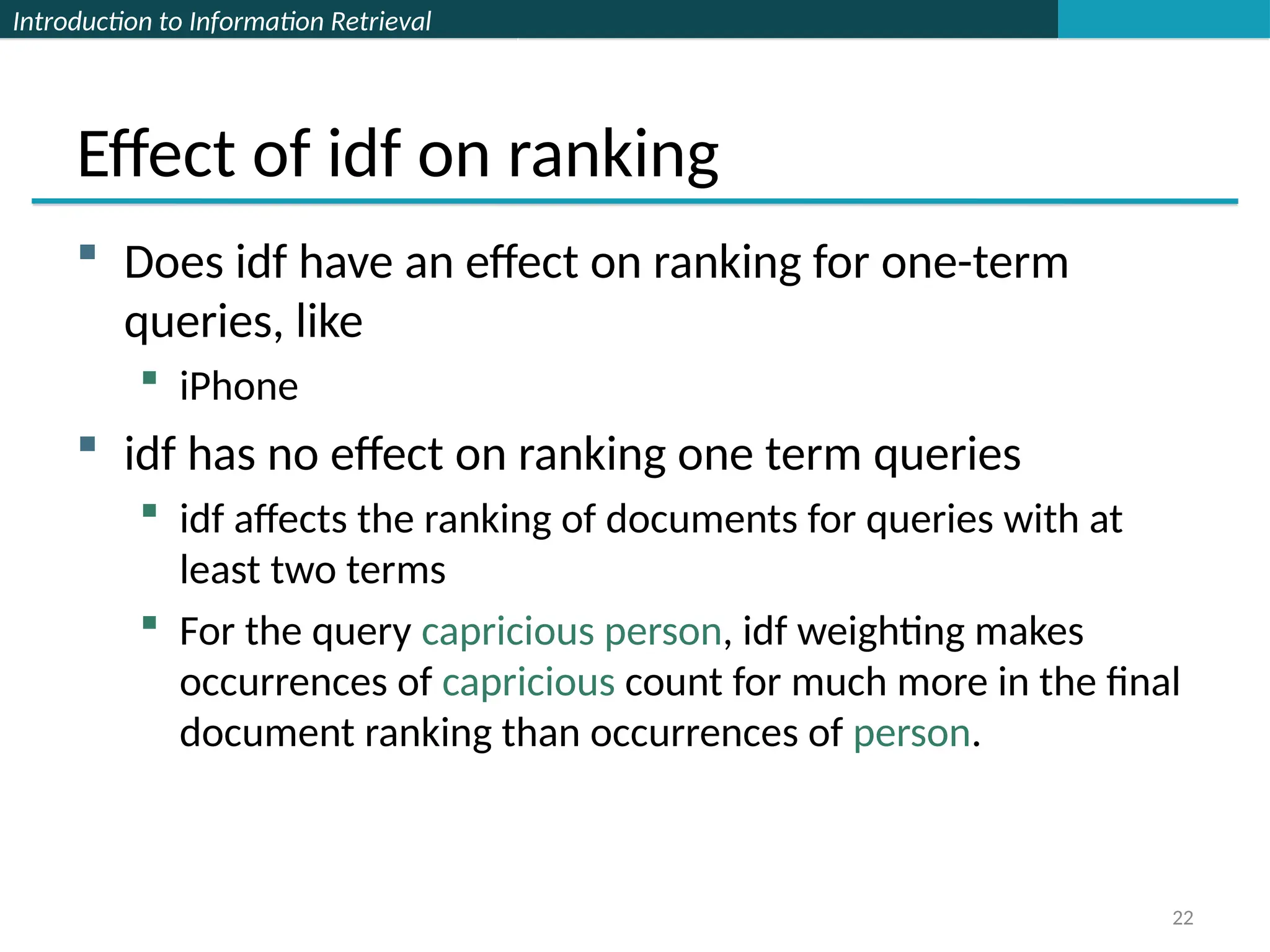

Scoring as the basis of ranked retrieval

We wish to return, in order, the documents most likely to be useful to the searcher

How can we rank-order the documents in the collection with respect to a query?

Assign a score – say in [0, 1] – to each document

This score measures how well document and query “match”.

Ch. 6](https://image.slidesharecdn.com/lecture6-tfidf-240921173650-5df2353f/75/lecture-TFIDF-information-retrieval-ppt-8-2048.jpg)
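The idea of assigning each document a score in [0, 1] and sorting by it can be sketched in a few lines. The example below uses the Jaccard coefficient, one of the scoring methods named in the summary above, purely as an illustration. The function names `jaccard` and `rank`, the whitespace tokenization, and the three sample documents are assumptions made for this sketch, not part of the slides.

```python
from typing import List, Tuple


def jaccard(query: str, document: str) -> float:
    """Jaccard coefficient |A ∩ B| / |A ∪ B| of the two term sets, always in [0, 1]."""
    # Assumption: terms are lowercased, whitespace-separated tokens.
    q_terms = set(query.lower().split())
    d_terms = set(document.lower().split())
    union = q_terms | d_terms
    if not union:
        # Both texts are empty; treat the match score as 0 by convention.
        return 0.0
    return len(q_terms & d_terms) / len(union)


def rank(query: str, documents: List[str]) -> List[Tuple[float, str]]:
    """Return (score, document) pairs, highest score first."""
    scored = [(jaccard(query, doc), doc) for doc in documents]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)


# Hypothetical toy collection.
docs = [
    "caesar died in march",
    "the long march",
    "ides of march",
]
ranked = rank("ides of march", docs)
for score, doc in ranked:
    print(f"{score:.2f}  {doc}")
```

Any function that maps a query and a document to a number in [0, 1] could replace `jaccard` here without changing `rank`; the ranking step only depends on the scores being comparable.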

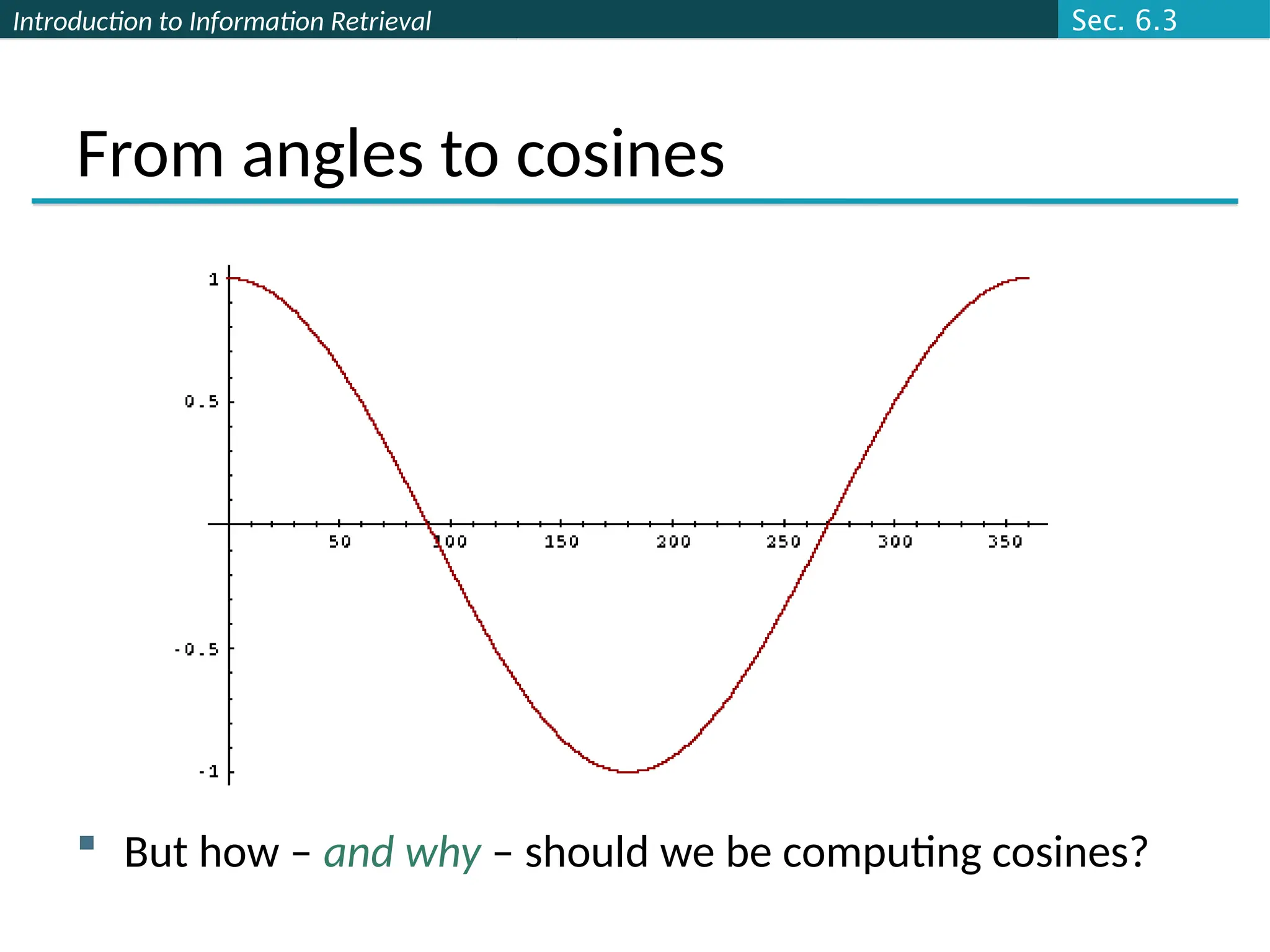

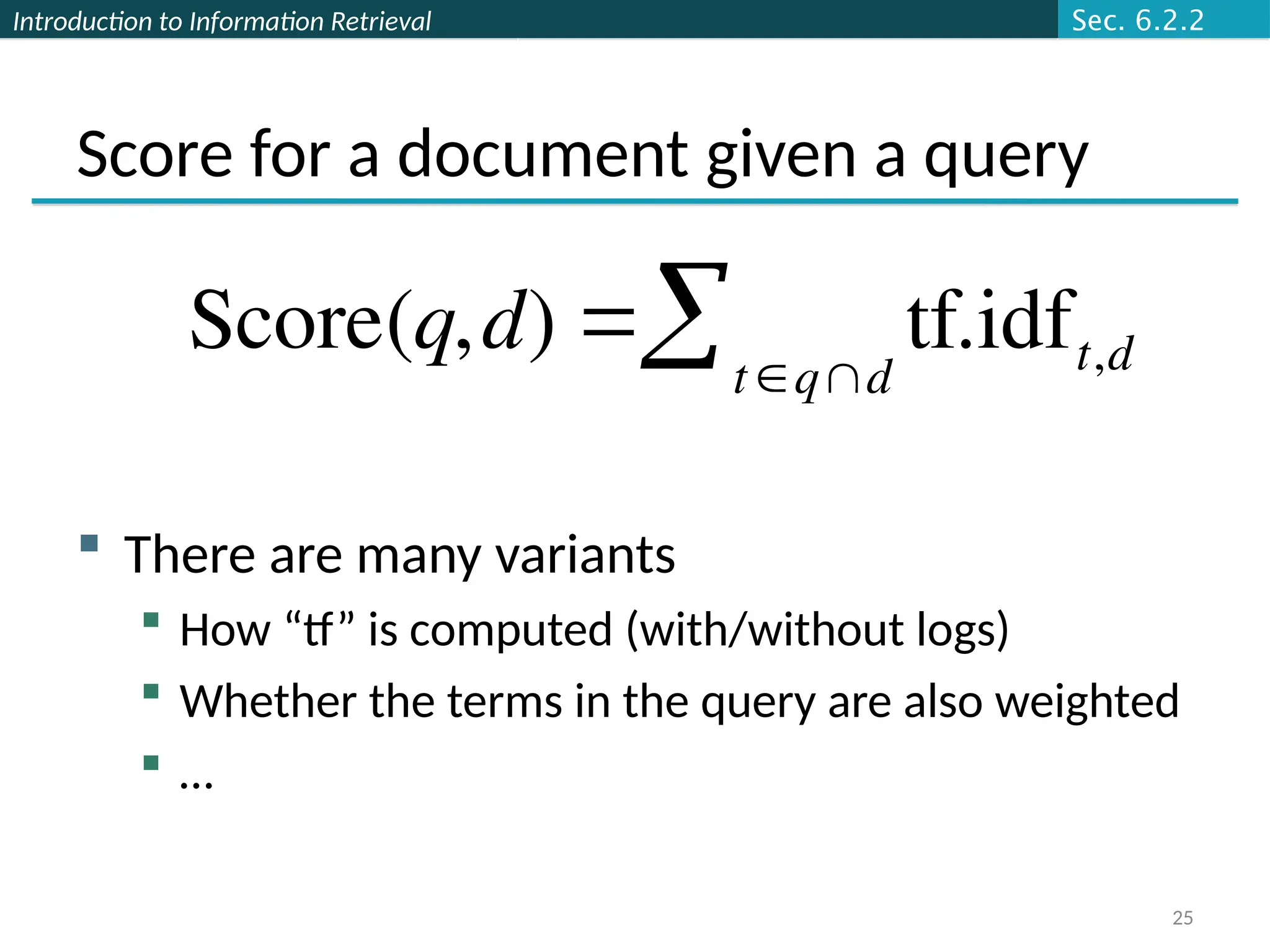

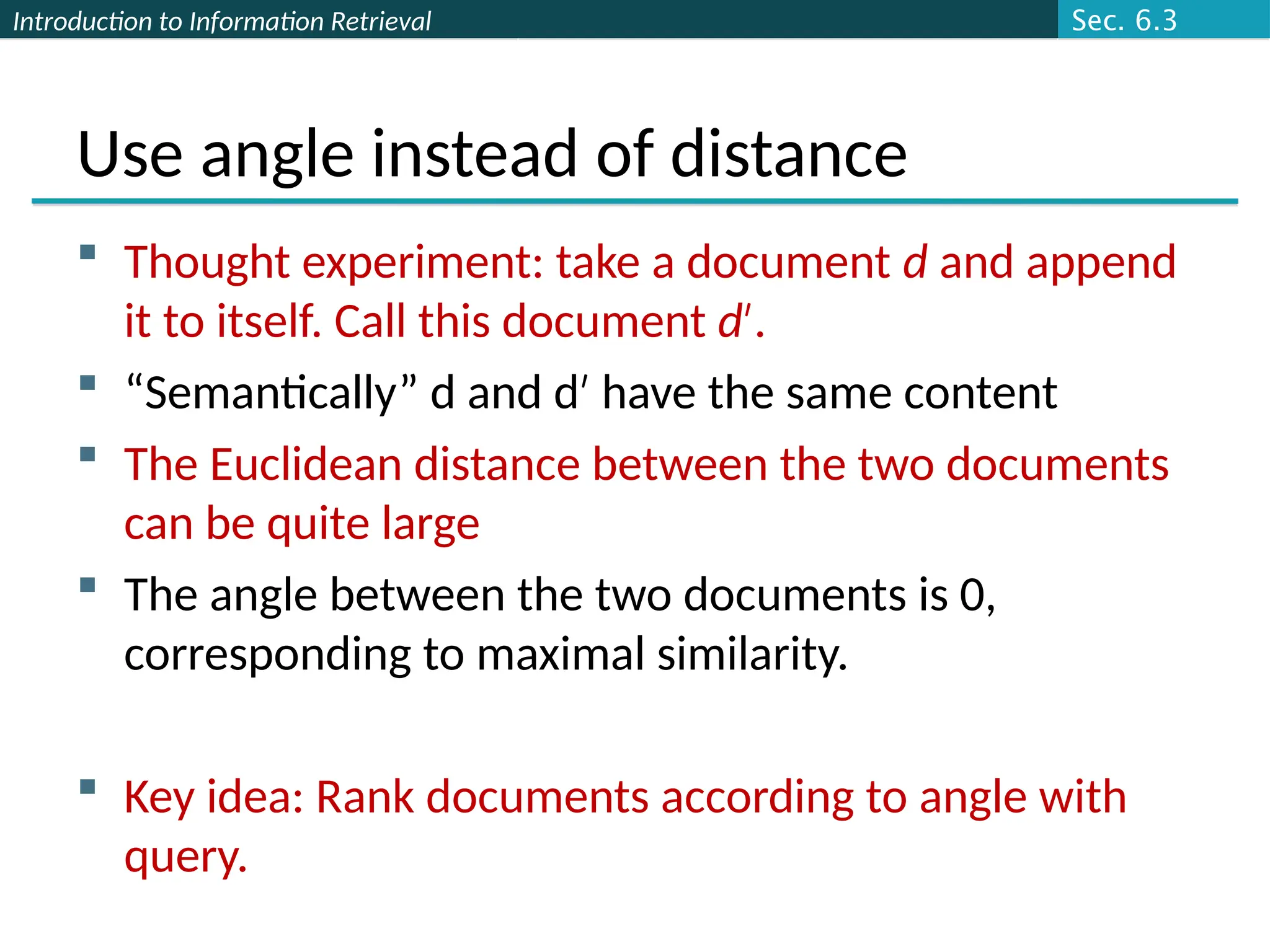

![Introduction to Information Retrieval

From angles to cosines

The following two notions are equivalent.

Rank documents in decreasing order of the angle between query and document

Rank documents in increasing order of cosine(query, document)

Cosine is a monotonically decreasing function on the interval [0°, 180°]

Sec. 6.3](https://image.slidesharecdn.com/lecture6-tfidf-240921173650-5df2353f/75/lecture-TFIDF-information-retrieval-ppt-32-2048.jpg)
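Because cosine is monotonically decreasing on [0°, 180°], ordering documents by angle and ordering them by cosine carry the same information, with the direction flipped. A minimal sketch of this, assuming small hand-made vectors (the names `cosine`, `angle_degrees`, `query`, and `doc_vectors` are hypothetical and the numbers are not taken from the slides):

```python
import math
from typing import Dict, List


def cosine(u: List[float], v: List[float]) -> float:
    """Cosine of the angle between u and v: dot(u, v) / (|u| * |v|)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        # Assumption: a zero vector is given similarity 0 by convention.
        return 0.0
    return dot / (norm_u * norm_v)


def angle_degrees(u: List[float], v: List[float]) -> float:
    """Angle between u and v in degrees, in [0, 180]."""
    # Clamp to [-1, 1] to guard against floating-point rounding before acos.
    c = max(-1.0, min(1.0, cosine(u, v)))
    return math.degrees(math.acos(c))


# Hypothetical three-term vocabulary; each position is a term weight.
query = [1.0, 1.0, 0.0]
doc_vectors: Dict[str, List[float]] = {
    "d1": [2.0, 2.0, 0.0],  # same direction as the query
    "d2": [1.0, 0.0, 1.0],  # shares one term with the query
    "d3": [0.0, 0.0, 3.0],  # shares no terms with the query
}

# Largest cosine first ...
by_cosine = sorted(doc_vectors, key=lambda d: cosine(query, doc_vectors[d]), reverse=True)
# ... gives the same list as smallest angle first.
by_angle = sorted(doc_vectors, key=lambda d: angle_degrees(query, doc_vectors[d]))

print(by_cosine)
print(by_angle)
```

In this sketch a best-first result list puts the largest cosine, and therefore the smallest angle, at the top. Working with the cosine directly avoids the `acos` call, which is one practical reason to rank by cosine rather than by the angle itself.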