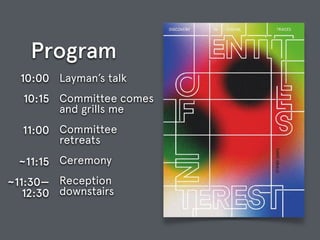

Layman's Talk: Entities of Interest --- Discovery in Digital Traces

The document outlines a program that includes a committee grilling a speaker at 10:00, the committee retreating afterwards, a ceremony at 10:15, and a reception downstairs from 11:00 to 12:30.

Recommended

Pragmatic ethical and fair AI for data scientists

Slides for an intro talk I gave at the first DDMA Monthly Meetup AI.

30 Tools and Tips to Speed Up Your Digital Workflow

Have you ever found yourself wasting a considerable amount of time performing some annoying, repetitive process within a common application, social media website, or your web browser? Wish there was a "magic" shortcut or simply a better way of getting it done? There most likely is.

While having a solid strategy should always be the first priority before engaging in the digital/social media space, it's also smart to arm yourself with a set of tools that will help you with the tactical implementation of your plan. These presentation slides provide 30 tools and tips hand-picked from Mike Kujawski's personal experience, day-to-day observations, and interactions with his consulting and training clients.

These tools are meant to help you be more efficient and effective as a communicator in today's digital world, where agility and "life-hacking" skills are becoming increasingly valued.

Introduction to the Responsible Use of Social Media Monitoring and SOCMINT Tools

These are my slides from a custom tool-based demonstration workshop I was asked to do where I went over various free tools that can be used to obtain valuable public data.

Filth and lies: analysing social media

Talk given to the Sheffield IT research forum in December 2021

Why people stop using sina weibo?

Why people stop using sina weibo? Information System and User Behavior Analysis

Privacy-preserving Data Mining in Industry (WSDM 2019 Tutorial)

Preserving privacy of users is a key requirement of web-scale data mining applications and systems such as web search, recommender systems, crowdsourced platforms, and analytics applications, and has witnessed a renewed focus in light of recent data breaches and new regulations such as GDPR. In this tutorial, we will first present an overview of privacy breaches over the last two decades and the lessons learned, key regulations and laws, and evolution of privacy techniques leading to differential privacy definition / techniques. Then, we will focus on the application of privacy-preserving data mining techniques in practice, by presenting case studies such as Apple's differential privacy deployment for iOS / macOS, Google's RAPPOR, LinkedIn Salary, and Microsoft's differential privacy deployment for collecting Windows telemetry. We will conclude with open problems and challenges for the data mining / machine learning community, based on our experiences in industry.

Text analysis-semantic-search

Tutorial on text analysis for Semantic search, given at BCS Search Solutions, London, 2018

Online text data for machine learning, data science, and research - Who can p...

This slide deck concerns online text data for machine learning, artificial intelligence, data science, and scientific research. After this talk, you’ll know who can provide online text data, what types of data are hard to get, and principal data hygiene factors.

Updated in August 2019.

Using language to save the world: interactions between society, behaviour and...

Talk at GESIS Institute - department of Knowledge Technologies for the Social Sciences.

Birds Bears and Bs:Optimal SEO for Today's Search Engines

In February of 2012, Google began launching the Panda Update (bears), the first of many steps away from a link-based model of relevance to a user experience model of relevance. This bearish focus on relevance uses algorithms to determine a positive user experience focused on click-through (does the user select the result), bounce rate (does the user take action once they arrive at the landing page) and conversion (does the landing page satisfy the user’s information need). Content and information design became the foundation for relevance. Sadly, no one at Google told the content strategists, user experience professionals and information architects about their new influence on search engine performance. In April of 2012, Google followed up with the Penguin update (birds), a direct assault on link building, a mainstay of traditional search engine optimization (SEO). The Penguin algorithm evaluates the context and quality of links pointing to a site. Websites found to be “over optimized” with low quality links are removed from Google’s index. Matt Cutts, Google Webmaster and the public face of Google, summed this up best: “And so that’s the sort of thing where we try to make the web site, uh Google Bot smarter, we try to make our relevance more adaptive so that people don’t do SEO, we handle that...” Sadly, Google is short on detail about how they are handling SEO, what constitutes adaptive relevance and how user experience professionals, information architects and content strategists can contribute thought-processing biped wisdom to computational algorithmic adaptive relevance so that searchers find what they are looking for even when they do not know what that is. This presentation will provide a brief introduction to the inner workings of information retrieval, the foundation of all search engines, even Google.
On this foundation, I will dive deep into the Bs of how to optimize Web sites for today’s search technology: Be focused, Be authoritative, Be contextual and Be engaging. Birds (Penguin), Bears (Panda) & Bees: Optimal SEO will provide insight into recent search engine changes, proscriptive optimization guidance for usability and content strategy and foresight into the future direction of search.

Researching Social Media – Big Data and Social Media Analysis

Researching Social Media – Big Data and Social Media Analysis, presentation for the Social Media for Researchers: A Sheffield Universities Social Media Symposium, 23 September 2014

Privacy-preserving Data Mining in Industry (WWW 2019 Tutorial)

Preserving privacy of users is a key requirement of web-scale data mining applications and systems such as web search, recommender systems, crowdsourced platforms, and analytics applications, and has witnessed a renewed focus in light of recent data breaches and new regulations such as GDPR. In this tutorial, we will first present an overview of privacy breaches over the last two decades and the lessons learned, key regulations and laws, and evolution of privacy techniques leading to differential privacy definition / techniques. Then, we will focus on the application of privacy-preserving data mining techniques in practice, by presenting case studies such as Apple's differential privacy deployment for iOS / macOS, Google's RAPPOR, LinkedIn Salary, and Microsoft's differential privacy deployment for collecting Windows telemetry. We will conclude with open problems and challenges for the data mining / machine learning community, based on our experiences in industry.

Social media engagement

Presentation for: Masterclass 19: Using social media in public engagement for the Public Engagement & Impact Team at The University of Sheffield, 26 November 2014.

Matching Mobile Applications for Cross Promotion

Matching Mobile Applications for Cross Promotion. Presented at the Conference on Big Data Marketing Analytics, Chicago, IL.

Ethics in Data Science and Machine Learning

Introduction and overview on ethics in data science and machine learning, variations and examples of algorithmic bias, and a call-to-action for self-regulation. Given by Thierry Silbermann as part of the Sao Paulo Machine Learning Meetup, theme: "Ethics".

https://www.linkedin.com/in/thierrysilbermann

https://twitter.com/silbermannt

https://github.com/thierry-silbermann

Social Media Forensics for Investigators

With 1.2 billion monthly active users on Facebook alone, it’s not surprising that social media networks can be a rich source of information for investigators. And because Americans spend more time on social media than any other major Internet activity, including email, social media information and evidence is plentiful. You just need to know how to get it.

Finding, preserving and collecting social media evidence often requires some forensic skills, as well as an understanding of the laws that govern its collection and use. It’s important for investigators to be aware of both the possibilities and limitations of social media forensics.

Frontiers of Computational Journalism week 3 - Information Filter Design

Taught at Columbia Journalism School, Fall 2018

Full syllabus and lecture videos at http://www.compjournalism.com/?p=218

disinformation risk management: leveraging cyber security best practices to s...

Talk given at the May 2021 NYU computational disinformation symposium.

Smashing silos ia-ux-meetup-mar112014

While we have been busy trying to "define the damn thing" IA or answering the age old question of who rules, UX, IxDA or IA, the search engines have been busily transitioning to a machine mediated experience model for ranking. This means that SEO is now the responsibility of UX/IA whether we like it or not. This presentation lays out how search engines evaluate user experience and how we can influence this evaluation with an optimized design.

Creating a Data-Driven Government: Big Data With Purpose

The U.S. Department of Commerce collects, processes and disseminates data on a range of issues that impact our nation. Whether it's data on the economy, the environment, or technology, data is critical in fulfilling the Department's mission of creating the conditions for economic growth and opportunity. It is this data that provides insight, drives innovation, and transforms our lives. The U.S. Department of Commerce has become known as "America's Data Agency" due to the tens of thousands of datasets including satellite imagery, material standards and demographic surveys.

But having a host of data and ensuring that this data is open and accessible to all are two separate issues. The latter, expanding open data access, is now a key pillar of the Commerce Department's mission. It was this focus on enhancing open data that led to the creation of the Commerce Data Service (CDS).

The mission at the Commerce Data Service is to enable more people to use big data from across the department in innovative ways and across multiple fields. In this talk, I will explore how we are using big data to create a data-driven government.

This talk is a keynote given at Texas Tech University's Big Data Symposium.

Designing Cybersecurity Policies with Field Experiments

Designing Cybersecurity Policies with Field Experiments

Fairness, Transparency, and Privacy in AI @ LinkedIn

How do we protect privacy of users in large-scale systems? How do we ensure fairness and transparency when developing machine learned models? With the ongoing explosive growth of AI/ML models and systems, these are some of the ethical and legal challenges encountered by researchers and practitioners alike. In this talk (presented at QConSF 2018), we first present an overview of privacy breaches as well as algorithmic bias / discrimination issues observed in the Internet industry over the last few years and the lessons learned, key regulations and laws, and evolution of techniques for achieving privacy and fairness in data-driven systems. We motivate the need for adopting a "privacy and fairness by design" approach when developing data-driven AI/ML models and systems for different consumer and enterprise applications. We also focus on the application of privacy-preserving data mining and fairness-aware machine learning techniques in practice, by presenting case studies spanning different LinkedIn applications, and conclude with the key takeaways and open challenges.

Frontiers of Computational Journalism week 2 - Text Analysis

Taught at Columbia Journalism School, Fall 2018

Full syllabus and lecture videos at http://www.compjournalism.com/?p=218

Myths and challenges in knowledge extraction and analysis from human-generate...

For centuries, science (in German "Wissenschaft") has aimed to create ("schaften") new knowledge ("Wissen") from the observation of physical phenomena, their modelling, and empirical validation. Recently, a new source of knowledge has emerged: not (only) the physical world any more, but the virtual world, namely the Web with its ever-growing stream of data materialized in the form of social network chattering, content produced on demand by crowds of people, and messages exchanged among interlinked devices in the Internet of Things. The knowledge we may find there can be dispersed, informal, contradictory, unsubstantiated and ephemeral today, while already tomorrow it may be commonly accepted. The challenge is once again to capture and create knowledge that is new, has not been formalized yet in existing knowledge bases, and is buried inside a big, moving target (the live stream of online data). The myth is that existing tools (spanning fields like semantic web, machine learning, statistics, NLP, and so on) suffice for the objective. While this may still be far from true, some existing approaches are actually addressing the problem and provide preliminary insights into the possibilities that successful attempts may lead to.

The talk explores the mixed realistic-utopian domain of knowledge extraction and reports on some tools and cases where the digital and physical worlds have been brought together to better understand our society.

What's hot (20)

Adding value to NLP: a little semantics goes a long way

Using language to save the world: interactions between society, behaviour and...

Birds Bears and Bs:Optimal SEO for Today's Search Engines

Researching Social Media – Big Data and Social Media Analysis

Privacy-preserving Data Mining in Industry (WWW 2019 Tutorial)

Frontiers of Computational Journalism week 3 - Information Filter Design

disinformation risk management: leveraging cyber security best practices to s...

Creating a Data-Driven Government: Big Data With Purpose

Designing Cybersecurity Policies with Field Experiments

Fairness, Transparency, and Privacy in AI @ LinkedIn

Frontiers of Computational Journalism week 2 - Text Analysis

Similar to Layman's Talk: Entities of Interest --- Discovery in Digital Traces

London data and digital masterclass for councillors slides 14-Feb-20

On 14th February 2020, the Local Government Association ran a masterclass discussion day for councillors and elected members on data and digital transformation in local government. It took place in London. This is the slide set that was used to steer discussions.

Getting started in Data Science (April 2017, Los Angeles)

Getting started in Data Science (April 2017, Los Angeles)

Big data-and-creativity v.1

Einstein published his ideas and became a pivotal element in shifting the way we think about physics - from the Newtonian model to the Quantum - in turn this changed the way we think about the world and allowed us to develop new ways of engaging with the world.

We are at a similar juncture. The development of computational technologies allows us to think about astronomical volumes of data and to make meaning of that data.

The mindshift that occurs is that “the machine is our friend”. The computer, like all machines, extends our capabilities. As a consequence the types of thinking now required in industry are those that get away from thinking like a computer and shift towards creative engagement with possibilities. Logical thinking is still necessary but it starts to be driven by imagination.

Computational thinking and data science change the way we think about defining and solving problems.

The age of creativity - which increasingly extends its impact from arts applications to business, scientific, technological, entrepreneurship, political, and other contexts.

Going To A Data Hack - Govhack 2015

This is my experience of going to my first data hackathon, Govhack 2015 and what it taught me.

A hackathon is an event where you gather a heap of resources and people, form small teams and try to deliver a fully realised solution to a set theme or problem in a short, intense amount of time.

Normally a hackathon is focused on delivering working software, but in the case of a data hackathon you work from a heap of datasets and try to deliver something of value, that can be working software, but often is something else. For this reason non coders can participate in a data hack easily.

Another difference is a hackathon normally revolves around creating some sort of business (be that profit or non-profit) idea and validating it.

Data hackathons are about understanding and realising value from data, and that value can often just be delivering better access to the information the data represents.

Social Web 2014: Final Presentations (Part I)

Final presentations by students in the Social Web Course at the VU University Amsterdam, 2014 (groups 1-15)

Getting comfortable with Data

Talk at a Data Journalism BootCamp organised by ICFJ, World Bank Group and African Media Initiative in New Delhi to a group of 60 journalists, coders and social sector folks. Other amazing sessions included those from Govind Ethiraj of IndiaSpend, Andrew from BBC, Parul from Google, Nasr from HacksHacker, Thej from DataMeet and David from Code for Africa. http://delhi.dbootcamp.org/

Let’s hunt the target using OSINT

These are the slides from the online talk given at @NullBhopal. They introduce people to Open Source INTelligence and its uses in daily life and pentesting.

Data visualization for development

Lecture to SIPA students on basics of creating data visualisations in multi-language, very-diverse-datasets developing-world / emerging-economy environments.

Reining in the Data ITAG tech360 Penn State Great Valley 2015

Social impact of the privacy crisis in the post-Snowden era. What we thought was secure has been compromised. We think we want anonymity, but that promotes bad activity.

OSINT- Leveraging data into intelligence

Open-source intelligence (OSINT) is intelligence collected from publicly available sources. In the intelligence community (IC), the term "open" refers to overt, publicly available sources (as opposed to covert or clandestine sources); it is not related to open-source software or public intelligence.

Intro to Data Science

You've heard the news, Data Science is the cool new career opportunity sweeping the world. Come learn from Thinkful Mentors all about this new and exciting industry.

Digital Tools, Trends and Methodologies in the Humanities and Social Sciences

This interactive seminar will explore trends and initiatives in the digital community of practice in the humanities and the social sciences. Participants will come away with an appreciation of where the field has emerged from and how it interacts with traditional disciplines. This seminar will be of interest to those in traditional disciplines as well as the wider academy, as digital humanities is both collaborative and multidisciplinary in practice. It is intended to form a broad and easy introduction to the practice of digital humanities and will appeal especially to new scholars who are open to the potential to combine their traditional scholarship with digital tools and methodologies. It is *introductory* in nature.

Similar to Layman's Talk: Entities of Interest --- Discovery in Digital Traces (20)

Myths and challenges in knowledge extraction and analysis from human-generate...

London data and digital masterclass for councillors slides 14-Feb-20

Getting started in Data Science (April 2017, Los Angeles)

Reining in the Data ITAG tech360 Penn State Great Valley 2015

Noticing the Nuance: Designing intelligent systems that can understand semant...

Digital Tools, Trends and Methodologies in the Humanities and Social Sciences

More from David Graus

Bias in Recommendations

Slidedeck of my lecture at SIKS Course "Advances in Information Retrieval"

Read more here: https://graus.nu/blog/bias-in-recommendations-lecture-siks-course-on-advances-in-ir/

RecSys in the Media Industry: Relevance, Recency, Popularity, and Diversity.

Slides of my lecture at the ACM Summer School on Recommender Systems in Gothenburg, Sweden.

CAT/AI: Computer Assisted Translation

Assessment for Impact

Slides of our pitch at the Hackathon for Peace, Justice and Security, for our project for Translators Without Borders

Opening the Black Box of User Profiles in Content-based Recommender Systems

Slides of my talk at ICAI's Fair and Transparant Machine Learning Meetup on our ICT with Industry project: "Reading News with a Purpose"

Zoeken, vinden, en aanbevelen: personalisatie vs. privacy

Talk at the VOGIN-IP-lezing on 28 March 2018 at the Openbare Bibliotheek Amsterdam.

DISCLAIMER: this talk is a nice piece of old-fashioned (human) manipulation: an expert arrives with five recommendations :-).

"Nowadays, technology companies that collect user behaviour on a large scale are increasingly viewed with suspicion. In this talk I explain why using user behaviour matters, and how it is used to make information effectively accessible and searchable, whether for a search engine like Google, which has to find its way through a web of billions of pages, or a service like Spotify, which wants to keep offering its users the right music."

Financial News Mining @ PyData Amsterdam

Slides of the talk I gave at PyData Amsterdam.

Abstract:

"The FD Mediagroep collects, analyses and filters valuable and relevant information, 24/7, for an influential group of professionals, business executives and high net worth individuals. Company.info (part of FDMG) provides complete, reliable, up-to-date company information and business news about no less than 2.7 million companies and other legal entities in the Netherlands. For Company.info we continuously monitor and crawl hundreds of (online) news sources, resulting in a large archive of (Dutch) business-related news, spanning hundreds of thousands of articles. These articles are automatically enriched, by linking the profiles of companies that are mentioned in the articles, using a custom in-house entity linking framework built in Python. In this talk, I will briefly explain the entity linking task, I will detail the implementation of our custom entity linking framework, and our pipeline for crawling and enriching news articles."

De Macht van Data --- Hoe algoritmen ons leven vormgeven

Slides of the introductory talk I gave at an event at De Balie: "De macht van data" on June 18th, 2017.

For a video recording of the talk see: http://graus.co/blog/mini-college-algoritmen/

Financial News Mining @ FD Mediagroep/Company.info

Talk I gave at the Data Science Northeast Netherlands Meetup, where I detail the custom in-house entity linking framework, sentiment analysis, and entity salience scoring model we developed for Company.info, in addition to showing some example applications of our corpus of news articles linked to organization profiles.

Big Data & Machine Learning - Mogelijkheden & Valkuilen

Keynote @ Intelligence Dag (Koninklijke Marechaussee)

Analyzing and Predicting Task Reminders

Slides of my talk at The 24th Conference on User Modeling, Adaptation and Personalization (UMAP 2016) in Halifax, Canada.

Dynamic Collective Entity Representations for Entity Ranking

at the Ninth ACM International Conference on Web Search and Data Mining (WSDM 2016)

Generating Pseudo-ground Truth for Detecting New Concepts in Social Streams

The manual curation of knowledge bases is a bottleneck in fast paced domains where new concepts constantly emerge. Identification of nascent concepts is important for improving early entity linking, content interpretation, and recommendation of new content in real-time applications. We present an unsupervised method for generating pseudo-ground truth for training a named entity recognizer to specifically identify entities that will become concepts in a knowledge base in the setting of social streams. We show that our method is able to deal with missing labels, justifying the use of pseudo-ground truth generation in this task. Finally, we show how our method significantly outperforms a lexical-matching baseline, by leveraging strategies for sampling pseudo-ground truth based on entity confidence scores and textual quality of input documents.

yourHistory - entity linking for a personalized timeline of historic events

slides for yH talk @ ICT.OPEN2013 (Intelligent Systems track)

Semantic Annotation of the Cyttron Database

Final Presentation for my MSc Graduation Project.

Abstract:

"Semantic annotation uses human knowledge formalized in ontologies to enrich texts, by providing structured and machine-understandable information of its content. This paper proposes an approach for automatically annotating texts of the Cyttron Scientific Image Database, using the NCI Thesaurus ontology. Several frequency-based keyword extraction algorithms were implemented and evaluated, aiming to extract important concepts and exclude less relevant ones. Furthermore, topic classification algorithms were applied to identify important concepts which do not occur in the text. The algorithms were evaluated by comparison to annotations provided by experts. Semantic networks were generated from these annotations and an ontology-based similarity metric was applied to perform the comparison. Finally the networks were visualized to provide further insights into the differences of the semantic structure generated by humans, and the algorithms."

More information: http://graus.nu/category/thesis

Semantic annotation, clustering and visualization

"Practise" presentation of my MSc thesis I did for the Leiden University Bio-imaging Group. More information @ http://graus.nu/category/thesis/

More from David Graus (20)

RecSys in the Media Industry: Relevance, Recency, Popularity, and Diversity.

CAT/AI: Computer Assisted Translation

Assessment for Impact

Opening the Black Box of User Profiles in Content-based Recommender Systems

Zoeken, vinden, en aanbevelen: personalisatie vs. privacy

De Macht van Data --- Hoe algoritmen ons leven vormgeven

Financial News Mining @ FD Mediagroep/Company.info

Big Data & Machine Learning - Mogelijkheden & Valkuilen

Dynamic Collective Entity Representations for Entity Ranking

David Graus - Entity Linking (at SEA), Search Engines Amsterdam, Fri June 27th

Understanding Email Traffic (talk @ E-Discovery NL Symposium)

Generating Pseudo-ground Truth for Detecting New Concepts in Social Streams

yourHistory - entity linking for a personalized timeline of historic events

Recently uploaded

Observation of Io’s Resurfacing via Plume Deposition Using Ground-based Adapt...

Since volcanic activity was first discovered on Io from Voyager images in 1979, changes on Io’s surface have been monitored from both spacecraft and ground-based telescopes. Here, we present the highest spatial resolution images of Io ever obtained from a ground-based telescope. These images, acquired by the SHARK-VIS instrument on the Large Binocular Telescope, show evidence of a major resurfacing event on Io’s trailing hemisphere. When compared to the most recent spacecraft images, the SHARK-VIS images show that a plume deposit from a powerful eruption at Pillan Patera has covered part of the long-lived Pele plume deposit. Although this type of resurfacing event may be common on Io, few have been detected due to the rarity of spacecraft visits and the previously low spatial resolution available from Earth-based telescopes. The SHARK-VIS instrument ushers in a new era of high resolution imaging of Io’s surface using adaptive optics at visible wavelengths.

Nutraceutical market, scope and growth: Herbal drug technology

As consumer awareness of health and wellness rises, the nutraceutical market—which includes goods like functional meals, drinks, and dietary supplements that provide health advantages beyond basic nutrition—is growing significantly. As healthcare expenses rise, the population ages, and people want natural and preventative health solutions more and more, this industry is increasing quickly. Further driving market expansion are product formulation innovations and the use of cutting-edge technology for customized nutrition. With its worldwide reach, the nutraceutical industry is expected to keep growing and provide significant chances for research and investment in a number of categories, including vitamins, minerals, probiotics, and herbal supplements.

Richard's entangled aventures in wonderland

Since the loophole-free Bell experiments of 2020 and the Nobel prizes in physics of 2022, critics of Bell's work have retreated to the fortress of super-determinism. Now, super-determinism is a derogatory word - it just means "determinism". Palmer, Hance and Hossenfelder argue that quantum mechanics and determinism are not incompatible, using a sophisticated mathematical construction based on a subtle thinning of allowed states and measurements in quantum mechanics, such that what is left appears to make Bell's argument fail, without altering the empirical predictions of quantum mechanics. I think however that it is a smoke screen, and the slogan "lost in math" comes to my mind. I will discuss some other recent disproofs of Bell's theorem using the language of causality based on causal graphs. Causal thinking is also central to law and justice. I will mention surprising connections to my work on serial killer nurse cases, in particular the Dutch case of Lucia de Berk and the current UK case of Lucy Letby.

Astronomy Update- Curiosity’s exploration of Mars _ Local Briefs _ leadertele...

Article written for leader telegram

Multi-source connectivity as the driver of solar wind variability in the heli...

The ambient solar wind that fills the heliosphere originates from multiple sources in the solar corona and is highly structured. It is often described as high-speed, relatively homogeneous, plasma streams from coronal holes and slow-speed, highly variable, streams whose source regions are under debate. A key goal of ESA/NASA’s Solar Orbiter mission is to identify solar wind sources and understand what drives the complexity seen in the heliosphere. By combining magnetic field modelling and spectroscopic techniques with high-resolution observations and measurements, we show that the solar wind variability detected in situ by Solar Orbiter in March 2022 is driven by spatio-temporal changes in the magnetic connectivity to multiple sources in the solar atmosphere. The magnetic field footpoints connected to the spacecraft moved from the boundaries of a coronal hole to one active region (12961) and then across to another region (12957). This is reflected in the in situ measurements, which show the transition from fast to highly Alfvénic then to slow solar wind that is disrupted by the arrival of a coronal mass ejection. Our results describe solar wind variability at 0.5 au but are applicable to near-Earth observatories.

filosofia boliviana introducción jsjdjd.pptx

Bolivian philosophy and the quest to build ways of thinking of its own

insect taxonomy importance systematics and classification

This document provides information about insect classification and taxonomy.

Comparative structure of adrenal gland in vertebrates

Adrenal gland comparative structures in vertebrates

RNA INTERFERENCE: UNRAVELING GENETIC SILENCING

Introduction:

RNA interference (RNAi) or Post-Transcriptional Gene Silencing (PTGS) is an important biological process for modulating eukaryotic gene expression.

It is a highly conserved process of post-transcriptional gene silencing by which double-stranded RNA (dsRNA) causes sequence-specific degradation of mRNA sequences.

dsRNA-induced gene silencing (RNAi) is reported in a wide range of eukaryotes, including worms, insects, mammals and plants.

This process mediates resistance to both endogenous parasitic and exogenous pathogenic nucleic acids, and regulates the expression of protein-coding genes.

What are small ncRNAs?

micro RNA (miRNA)

short interfering RNA (siRNA)

Properties of small non-coding RNA:

Involved in silencing mRNA transcripts.

Called “small” because they are usually only about 21-24 nucleotides long.

Synthesized by first cutting up longer precursor sequences (like the 61nt one that Lee discovered).

Silence an mRNA by base pairing with some sequence on the mRNA.

Discovery of siRNA?

The first small RNA:

In 1993 Rosalind Lee (Victor Ambros lab) was studying a non-coding gene in C. elegans, lin-4, that was involved in silencing of another gene, lin-14, at the appropriate time in the development of the worm C. elegans.

Two small transcripts of lin-4 (22nt and 61nt) were found to be complementary to a sequence in the 3' UTR of lin-14.

Because lin-4 encoded no protein, she deduced that it must be these transcripts that are causing the silencing by RNA-RNA interactions.

Types of RNAi (non-coding RNA)

miRNA

Length: 23-25 nt

Trans acting

Binds to target mRNA with mismatches

Translation inhibition

siRNA

Length: 21 nt

Cis acting

Binds to target mRNA with a perfectly complementary sequence

Piwi-RNA

Length: 25 to 36 nt

Expressed in germ cells

Regulates transposon activity

MECHANISM OF RNAI:

First the double-stranded RNA teams up with a protein complex named Dicer, which cuts the long RNA into short pieces.

Then another protein complex called RISC (RNA-induced silencing complex) discards one of the two RNA strands.

The RISC-docked, single-stranded RNA then pairs with the homologous mRNA and destroys it.

THE RISC COMPLEX:

RISC is a large (>500 kD) RNA multi-protein binding complex which triggers mRNA degradation in response to mRNA

Unwinding of double-stranded siRNA by an ATP-independent helicase

The active components of RISC are Ago proteins (endonucleases), which cleave the target mRNA.

DICER: endonuclease (RNase Family III)

Argonaute: Central Component of the RNA-Induced Silencing Complex (RISC)

One strand of the dsRNA produced by Dicer is retained in the RISC complex in association with Argonaute

ARGONAUTE PROTEIN :

1. PAZ (PIWI/Argonaute/Zwille): recognition of target mRNA

2. PIWI (P-element induced wimpy testis): breaks the phosphodiester bond of mRNA (RNase H activity).

MiRNA:

Double-stranded RNAs are naturally produced in eukaryotic cells during development, and they have a key role in regulating gene expression.

Structural Classification Of Protein (SCOP)

Brief information about the SCOP protein database used in bioinformatics.

The Structural Classification of Proteins (SCOP) database is a comprehensive and authoritative resource for the structural and evolutionary relationships of proteins. It provides a detailed and curated classification of protein structures, grouping them into families, superfamilies, and folds based on their structural and sequence similarities.

Seminar of U.V. Spectroscopy by SAMIR PANDA

Spectroscopy is a branch of science dealing with the study of the interaction of electromagnetic radiation with matter.

Ultraviolet-visible spectroscopy refers to absorption spectroscopy or reflectance spectroscopy in the UV-VIS spectral region.

Ultraviolet-visible spectroscopy is an analytical method that can measure the amount of light received by the analyte.

Recently uploaded (20)

Observation of Io’s Resurfacing via Plume Deposition Using Ground-based Adapt...

Nutraceutical market, scope and growth: Herbal drug technology

Body fluids_tonicity_dehydration_hypovolemia_hypervolemia.pptx

Astronomy Update- Curiosity’s exploration of Mars _ Local Briefs _ leadertele...

Multi-source connectivity as the driver of solar wind variability in the heli...

Circulatory system_ Laplace law. Ohms law.reynaults law,baro-chemo-receptors-...

insect taxonomy importance systematics and classification

Comparative structure of adrenal gland in vertebrates

platelets- lifespan -Clot retraction-disorders.pptx

Lateral Ventricles.pdf very easy good diagrams comprehensive

Layman's Talk: Entities of Interest --- Discovery in Digital Traces

- 1. Program: Layman’s talk (10:00); Committee comes and grills me (10:15); Committee retreats (11:00); Ceremony (~11:15); Reception downstairs (~11:30 to 12:30)

- 5. Entities of Interest Discovery in Digital Traces

- 6. Entities of Interest Discovery in Digital Traces Object of study

- 7. Entities of Interest Discovery in Digital Traces Object of study Task

- 8. Entities of Interest Discovery in Digital Traces Object of study Task Domain

- 9. Entities of Interest Discovery in Digital Traces

- 16. • Gain new insights/discover new information Entities of Interest Discovery in Digital Traces

- 17. • Gain new insights/discover new information • Answer questions: Who was involved? What happened? Where, when and why did it happen? Entities of Interest Discovery in Digital Traces

- 19. Entities of Interest Discovery in Digital Traces

- 20. Entities of Interest Discovery in Digital Traces • “Things with distinct and independent existence”

- 21. Entities of Interest Discovery in Digital Traces • “Things with distinct and independent existence” • Real-world entities central to answering 5 W’s.

- 24. Challenges

- 25. Challenges • Language is “noisy”

- 26. Challenges • Language is “noisy” • “Big Data”

- 27. Methods

- 29. Methods • Information Retrieval • Searching & finding things

- 30. Methods • Information Retrieval • Searching & finding things • Natural Language Processing

- 31. Methods • Information Retrieval • Searching & finding things • Natural Language Processing • (automated) ’understanding’ of language

- 32. Methods • Information Retrieval • Searching & finding things • Natural Language Processing • (automated) ’understanding’ of language • Machine Learning

- 33. Methods • Information Retrieval • Searching & finding things • Natural Language Processing • (automated) ’understanding’ of language • Machine Learning • Using programs that ‘learn’ to do something

- 35. Two types of Entities of Interest

- 36. Two types of Entities of Interest Part 1: Entities in digital traces

- 37. Two types of Entities of Interest Part 1: Entities in digital traces • Content/data

- 38. Two types of Entities of Interest Part 1: Entities in digital traces • Content/data Part 2: Entities that produce digital traces

- 39. Two types of Entities of Interest Part 1: Entities in digital traces • Content/data Part 2: Entities that produce digital traces • Context/metadata

- 40. Part I Part 1: Entities in digital traces

- 41. Part I Part 1: Emerging Entities in digital traces

- 44. First mention

- 45. Wikipedia Page Created First mention

- 46. Wikipedia Page Created First mention Are there common temporal patterns in how entities emerge in online text streams?

- 47. Wikipedia Page Created First mention Are there common temporal patterns in how entities emerge in online text streams? Yes!

- 50. Can we leverage prior knowledge of entities to bootstrap the discovery of new entities?

- 51. Can we leverage prior knowledge of entities to bootstrap the discovery of new entities? Yes!

- 54. *****

- 55. ***** Can we leverage collective intelligence to construct entity representations for increased retrieval effectiveness of entities of interest?

- 56. ***** Can we leverage collective intelligence to construct entity representations for increased retrieval effectiveness of entities of interest? Yes!

- 57. Part II Entities of Interest: Producers of digital traces

- 58. Part II Entities of Interest: Producers of digital traces Aim: Study and predict real-world activity from digital traces

- 59. Part II Entities of Interest: Producers of digital traces Aim: Study and predict real-world activity from digital traces Two case-studies

- 63. d.p.graus@uva.nl z.ren@uva.nl derijke@uva.nl Can we predict email communication through modeling email content and communication graph properties?

- 64. d.p.graus@uva.nl z.ren@uva.nl derijke@uva.nl Can we predict email communication through modeling email content and communication graph properties? Yes!

- 68. Creation times Notification times Creation times Notification times

- 69. Creation times Notification times Creation times Notification times Can we identify patterns in the times at which people create reminders, and, via notification times, when the associated tasks are to be executed?

- 70. Creation times Notification times Creation times Notification times Can we identify patterns in the times at which people create reminders, and, via notification times, when the associated tasks are to be executed? Yes!

- 71. In Summary • Part 1: We propose methods for analyzing, predicting, and retrieving emerging entities • Part 2: We propose methods for predicting future activity by leveraging digital traces.

- 73. Program: Committee comes and grills me (10:15); Committee retreats (11:00); Ceremony (~11:15); Reception downstairs (~11:30 to 12:30)