KatKennedy REU D.C. Poster

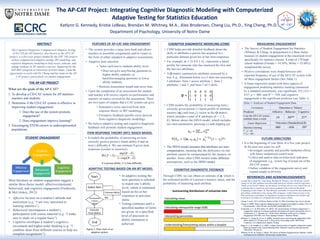

ABSTRACT

The Cognitive Diagnostic Computerized Adaptive Testing (CD-CAT) for AP Statistics project, also known as the AP-CAT project, is a five-year project funded by the NSF. The project uses computerized adaptive testing, IRT modeling, and cognitive diagnostic modeling to help assess, educate, and engage students in AP Statistics courses. Supported by the NSF REU program at the University of Notre Dame, I had the opportunity to work with Dr. Cheng and her team on the AP-CAT project, particularly on student engagement.

THE AP-CAT PROJECT

What are the goals of the AP-CAT?

1. Develop a CD-CAT system for AP Statistics teachers and students.
2. Determine whether the CD-CAT system is effective in improving student engagement:
   1. Does the use of the system promote engagement?
   2. Does engagement improve learning?
3. Encourage STEM careers in underrepresented populations.

STUDENT ENGAGEMENT

Most literature on student engagement suggests a similar three-factor model: affective/emotional, behavioral, and cognitive engagement (Fredericks & McColskey, 2012):

• Affective engagement focuses on a student’s attitude and motivation (e.g., “I am very interested in learning statistics”).
• Behavioral engagement encompasses a student’s participation with course material (e.g., “I make sure to study on a regular basis”).
• Cognitive engagement covers a student’s cognitive investment and higher-order thinking (e.g., “I combine ideas from different courses to help me complete assignments”).

[Diagram: Student Engagement, branching into Affective Engagement, Behavioral Engagement, and Cognitive Engagement]

FEATURES OF AP-CAT AND ENGAGEMENT

• The system provides a large item bank and allows teachers to assemble assignments and/or exams in the form of either standard or adaptive assessments.
• Adaptive item selection:
   • Tailors each test to the student’s ability level.
   • Does not give easy/boring questions to higher-ability students, or hard/discouraging questions to lower-ability students.
   • Shortens assessment length and saves time.
• Upon completion of an assessment, the student and teacher receive reports on performance and mastery of the topics covered by the assessment.
• There are two types of output that a CAT system can give:
   • Summative score (derived from item response theory, or IRT, modeling);
   • Formative feedback (profile score derived from cognitive diagnostic modeling).
• We believe adaptive testing and cognitive diagnostic feedback will promote student engagement.

ITEM RESPONSE THEORY (IRT): RASCH MODEL

• The Rasch model gives the probability of answering an item correctly given a person’s latent ability θ and an item’s difficulty δ.
• We can estimate θ from item responses (correct or incorrect).

ADAPTIVE TESTING BASED ON AN IRT MODEL

• In adaptive testing, the next question is selected to match the test taker’s ability level, which is estimated from his or her responses to previous items.
• Testing continues until a specified number of items has been given, or a specified level of precision in ability estimation is achieved.

Figure 1: Flow chart of an adaptive system. Start → Select Item → Estimate Ability → Termination Criteria Met? If no, return to Select Item; if yes, Estimate Final Ability → End.

COGNITIVE DIAGNOSTIC MODELING (CDM)

• CDM provides detailed feedback about the skills or attributes a person has acquired in a particular domain, given his or her item responses.
• For example, $\boldsymbol{\alpha} = (1, 0, 0, 1, 1)$ represents a latent profile for someone who has mastered the first and the last two attributes.
• A Q-matrix summarizes the attributes assessed by a test. For example, in a 3-item test assessing 5 attributes, Item 1 assesses attribute 1, Item 2 assesses attributes 1 and 3, and Item 3 assesses all 5 attributes.
• CDM models the probability of answering item $j$ correctly given person $i$’s latent profile of attribute mastery ($\boldsymbol{\alpha}_i$) and item $j$’s vector in the Q-matrix, which covers a total of $K$ attributes ($k = 1, 2, \dots, K$). The DINA model is one such model; it includes two item parameters: guessing ($g$) and slipping ($s$).
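As a small illustration of the latent profile and Q-matrix described above, the following Python sketch encodes the 3-item, 5-attribute example and applies the DINA response rule: a test taker who has mastered every attribute an item requires answers correctly unless they slip, and anyone else answers correctly only by guessing. The function names and the guessing and slipping values are hypothetical choices for illustration; they are not taken from the AP-CAT system.

```python
# Q-matrix for the 3-item, 5-attribute example: rows are items, columns are attributes.
Q = [
    [1, 0, 0, 0, 0],  # Item 1 requires attribute 1
    [1, 0, 1, 0, 0],  # Item 2 requires attributes 1 and 3
    [1, 1, 1, 1, 1],  # Item 3 requires all five attributes
]

# Example latent profile: attributes 1, 4, and 5 are mastered.
alpha = [1, 0, 0, 1, 1]


def ideal_response(alpha, q_row):
    """Return 1 if the profile masters every attribute the item requires, else 0."""
    return int(all(a == 1 for a, q in zip(alpha, q_row) if q == 1))


def dina_prob_correct(alpha, q_row, guess, slip):
    """DINA success probability: 1 - slip if all required attributes are mastered, else guess."""
    return 1.0 - slip if ideal_response(alpha, q_row) == 1 else guess


# Hypothetical item parameters, shared by all three items for simplicity.
GUESS, SLIP = 0.2, 0.1

etas = [ideal_response(alpha, row) for row in Q]
probs = [dina_prob_correct(alpha, row, GUESS, SLIP) for row in Q]
print(etas)   # [1, 0, 0]
print(probs)  # [0.9, 0.2, 0.2]
```

Only Item 1 is fully covered by this profile. Mastering attributes 4 and 5 does not make up for the missing attribute 3 on Item 2, which is the non-compensatory behavior of the DINA model.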
COGNITIVE DIAGNOSTIC MODELING (CDM): DINA MODEL

The Q-matrix for a 3-item test assessing 5 attributes (rows are items, columns are attributes), in which Item 1 assesses attribute 1, Item 2 assesses attributes 1 and 3, and Item 3 assesses all 5 attributes:

$$
\mathbf{Q} = \begin{pmatrix} 1 & 0 & 0 & 0 & 0 \\ 1 & 0 & 1 & 0 & 0 \\ 1 & 1 & 1 & 1 & 1 \end{pmatrix}
$$

The DINA model, with slipping parameter $s_j$ and guessing parameter $g_j$ for item $j$:

$$
\eta_{ij} = \prod_{k=1}^{K} \alpha_{ik}^{q_{jk}}
$$

$$
P(X_{ij} = 1 \mid \boldsymbol{\alpha}_i, s_j, g_j) = g_j^{1 - \eta_{ij}} (1 - s_j)^{\eta_{ij}}
$$

Here $q_{jk}$ is the Q-matrix entry for item $j$ and attribute $k$, so $\eta_{ij} = 1$ only if person $i$ has mastered every attribute that item $j$ requires.

• The DINA model assumes that attributes are non-compensatory, meaning that a deficiency in one attribute cannot be compensated for by mastery of another. Other CDMs, such as the DINO model, make different assumptions.

COGNITIVE DIAGNOSTIC FEEDBACK

Through CDM, we can obtain an estimate of $\boldsymbol{\alpha}$, which is the estimated profile of a person’s mastery status, and the probability of mastering each attribute.

[Example feedback figure. Labels: Summarizing distributions of univariate data; Calculating mean; Understanding/Interpreting values within a boxplot; Calculating interquartile range (IQR); Interpreting percentiles]

MEASURING ENGAGEMENT

• The Survey of Student Engagement for Statistics (Whitney & Cheng, in preparation) is a three-factor measure of student engagement at the classroom level, designed specifically for statistics classes. A total of 178 high school students (Female = 54.50%, White = 55.06%) completed the survey.
• Self-reported frequency of use of the AP-CAT system was positively correlated with all three engagement factors (see Table 1).
• A linear regression model with the three aspects of engagement predicting statistics learning (measured by a standard assessment) was significant, F(3, 121) = 4.67, p = .004, $R^2$ = .104; cognitive engagement was the only significant predictor (see Table 1).

Table 1: Analyses of Student Engagement Data

Correlation Outcomes (r Values)

| Predictor | Affective | Behavior | Cognitive |
| --- | --- | --- | --- |
| I use the AP-CAT system multiple times a week. | .14 | .36** | .21* |

Linear Regression Outcomes (Standardized β)

| Outcome | Affective | Behavior | Cognitive |
| --- | --- | --- | --- |
| Statistics learning | .060 | -.056 | .30* |

* p < .05 ** p < .001

REFERENCES AND ACKNOWLEDGEMENTS

I would like to thank Dr. Ying Cheng, Brendan M. Whitney, Alex Brodersen, and Dr. Cheng Liu for their guidance and mentorship during my summer research experience. Thank you to Kristie LeBeau, my lab partner, for being with me every step of the way. I would also like to extend my most sincere gratitude to the Center for Research Computing at Notre Dame, Dr. Paul Brenner, and Kallie O’Connell for their dedication and selfless contribution to the summer REU programs. A final thank you goes to the National Science Foundation for funding my research contributions this summer and investing in my future as a computational social science scholar.

Cheng, Y. (n.d.). AP-CAT Home. Retrieved July 13, 2016, from https://ap-cat.crc.nd.edu/

Cheng, Y. (2009). When cognitive diagnosis meets computerized adaptive testing: CD-CAT. Psychometrika, 74(4), 619-632. doi:10.1007/s11336-009-9123-2

Fredericks, J. A., & McColskey, W. (2012). The measurements of student engagement: A comparative analysis of various methods and student self-report instruments. In S. L. Christenson, A. L. Reschly, & C. Wylie (Eds.), Handbook of Research on Student Engagement (pp. 763-782). New York: Springer Science + Business Media.

Huebner, A., Wang, B., & Lee, S. (2009). Practical issues concerning the application of the DINA model to CAT data. In D. J. Weiss (Ed.), Proceedings of the 2009 GMAC Conference on Computerized Adaptive Testing.

Rupp, A. A., & Templin, J. L. (2007). Unique characteristics of cognitive diagnostic models. Paper presented at the Annual Meeting of the National Council on Measurement in Education, Chicago, IL.

Whitney, B. M., & Cheng, Y. (2016). The Survey of Student Engagement for Statistics: Initial development and validation. Manuscript in preparation.

FUTURE DIRECTIONS

• It is the beginning of year three of a five-year project. In the next two years we will:
   • Investigate causality and possible mediation effects with future randomized controlled trials.
   • Collect and analyze data on behavioral indicators of engagement, e.g., system logs of actual use of the AP-CAT system.
   • Further validate the engagement survey and expand the diversity of the sample.
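As a supplement to the sections on the Rasch model and adaptive testing, the loop in Figure 1 (select an item, estimate ability, check the termination criteria) can be sketched in Python as follows. This is a minimal sketch under stated assumptions, not the AP-CAT implementation: it uses the standard Rasch form $P = 1 / (1 + e^{-(\theta - \delta)})$, picks the unused item whose difficulty is closest to the current ability estimate, and estimates ability by a posterior-mode update with a standard normal prior (chosen here so that the estimate stays finite when all responses so far are correct or all are incorrect). The item bank, the simulated student, and the stopping thresholds are hypothetical.

```python
import math
import random


def rasch_prob(theta, delta):
    """Rasch model: probability of a correct response for ability theta and difficulty delta."""
    return 1.0 / (1.0 + math.exp(-(theta - delta)))


def estimate_ability(responses, difficulties, prior_sd=1.0, iterations=25):
    """Posterior-mode estimate of theta (normal prior, an assumption) via Newton-Raphson.

    Returns the estimate and an approximate standard error.
    """
    theta = 0.0
    for _ in range(iterations):
        probs = [rasch_prob(theta, d) for d in difficulties]
        gradient = sum(x - p for x, p in zip(responses, probs)) - theta / prior_sd**2
        information = sum(p * (1 - p) for p in probs) + 1.0 / prior_sd**2
        theta += gradient / information
    probs = [rasch_prob(theta, d) for d in difficulties]
    information = sum(p * (1 - p) for p in probs) + 1.0 / prior_sd**2
    return theta, 1.0 / math.sqrt(information)


def run_cat(item_bank, answer_item, max_items=20, se_target=0.5):
    """Adaptive loop: select item, record response, re-estimate, check termination."""
    theta, se = 0.0, float("inf")
    administered, responses = [], []
    remaining = list(range(len(item_bank)))
    # Stop after max_items, or once the ability estimate is precise enough.
    while remaining and len(administered) < max_items and se > se_target:
        # Select the unused item whose difficulty best matches the current estimate.
        nxt = min(remaining, key=lambda i: abs(item_bank[i] - theta))
        remaining.remove(nxt)
        administered.append(nxt)
        responses.append(answer_item(nxt))
        theta, se = estimate_ability(responses, [item_bank[i] for i in administered])
    return theta, se, administered


# Hypothetical item bank (difficulties from -2 to 2) and simulated student.
random.seed(0)
bank = [-2.0 + 0.2 * k for k in range(21)]
TRUE_THETA = 1.0


def simulated_student(item_index):
    """Draw a 0/1 response from the Rasch model at the student's true ability."""
    return 1 if random.random() < rasch_prob(TRUE_THETA, bank[item_index]) else 0


theta_hat, se, used = run_cat(bank, simulated_student)
print(f"estimate={theta_hat:.2f}, se={se:.2f}, items used={len(used)}")
```

Matching difficulty to the current estimate is a simple stand-in for "selecting the next question to match one's ability level"; under the Rasch model an item is most informative when its difficulty equals the test taker's ability. The two stopping rules correspond to the fixed-length and fixed-precision criteria described in the adaptive testing section.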
The AP-CAT Project: Integrating Cognitive Diagnostic Modeling with Computerized Adaptive Testing for Statistics Education

Katlynn G. Kennedy, Kristie LeBeau, Brendan M. Whitney, M.A., Alex Brodersen, Cheng Liu, Ph.D., Ying Cheng, Ph.D.

Department of Psychology, University of Notre Dame