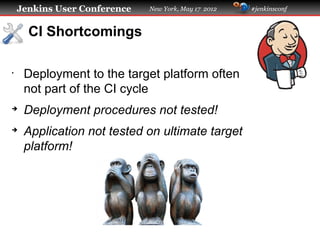

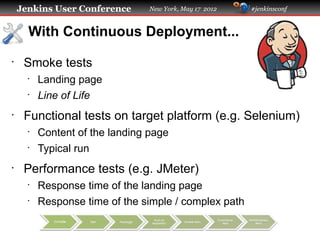

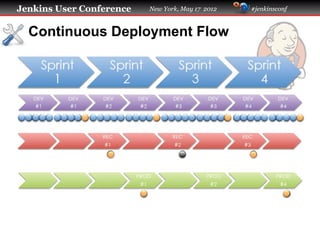

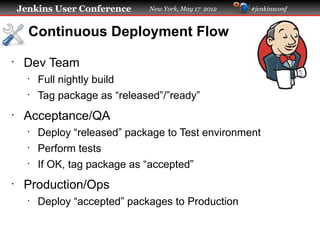

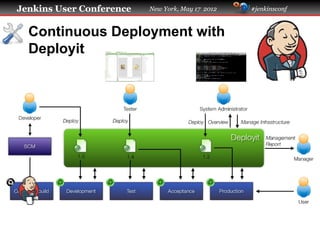

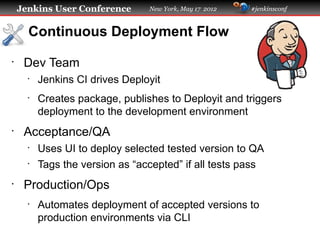

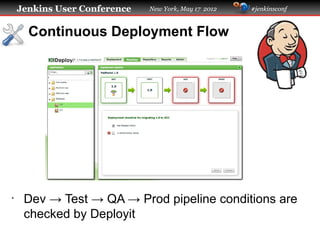

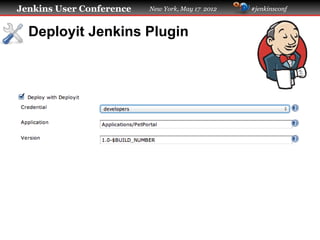

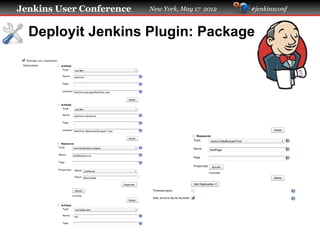

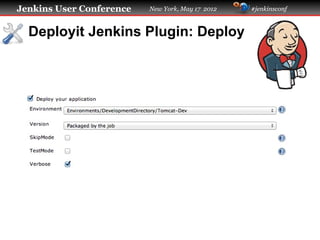

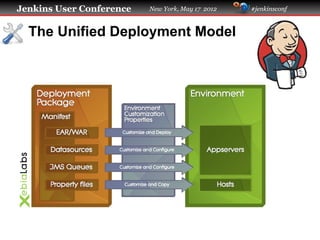

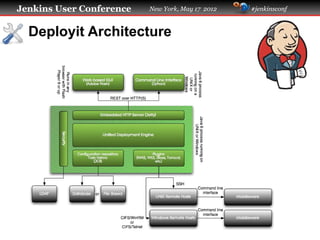

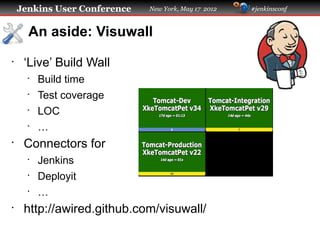

This Jenkins User Conference presentation covers advanced continuous deployment with Jenkins, emphasizing better deployment practices and automated tests integrated throughout the deployment cycle. It outlines a continuous deployment flow spanning the development, testing, and production phases, along with best practices for package management and security. It also highlights key challenges and solutions involving middleware, security credentials, and deployment monitoring.