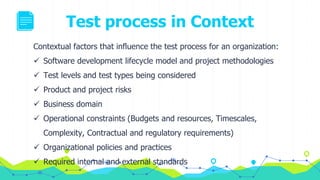

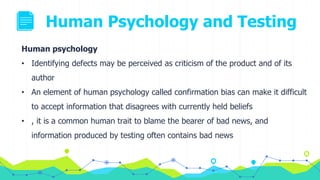

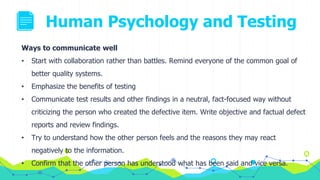

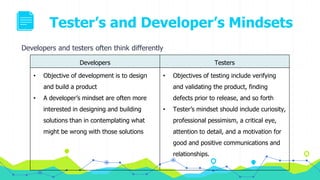

The document provides an overview of the fundamentals of testing, including the test process, the psychology of testing, and exams. It describes the typical activities in a test process: test planning, test monitoring and control, test analysis, test design, test implementation, test execution, and test completion. For each activity, it outlines the common tasks and work products. It also discusses how human psychology and the differing mindsets of testers and developers can affect testing. The document emphasizes the importance of independence in testing, which helps avoid author bias and makes it easier to find defects.