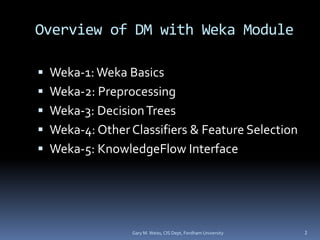

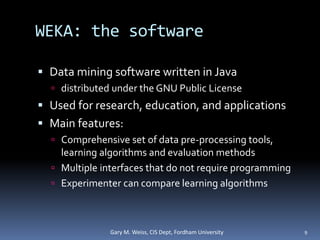

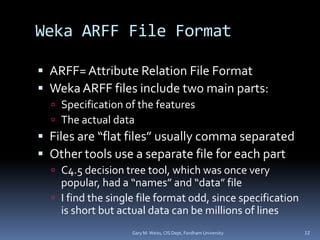

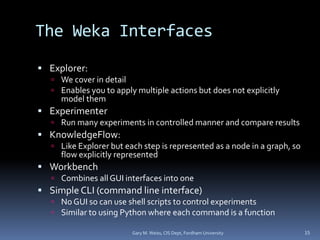

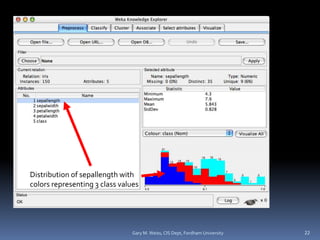

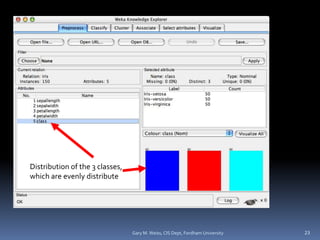

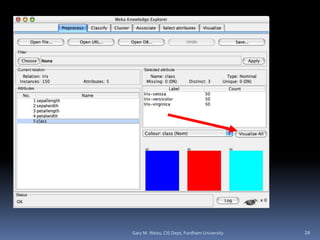

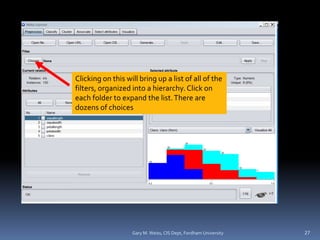

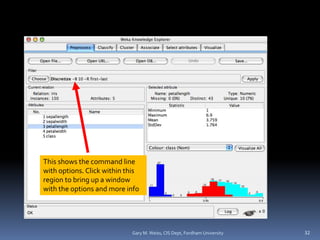

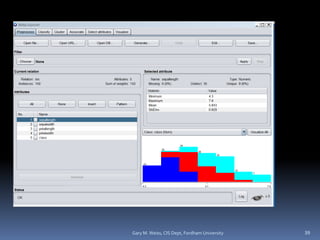

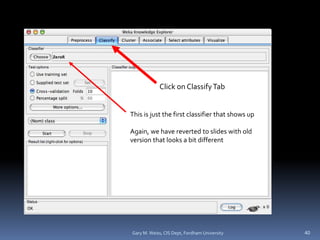

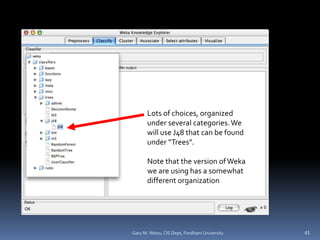

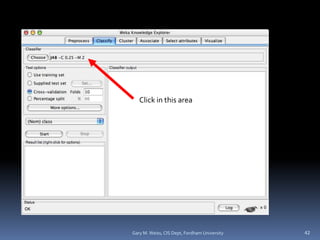

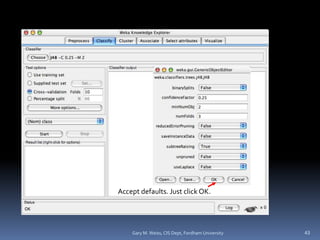

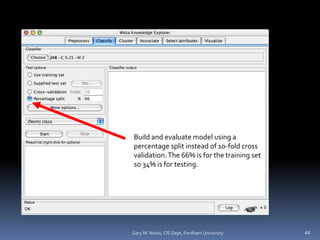

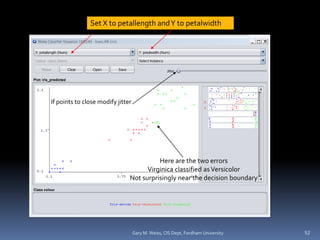

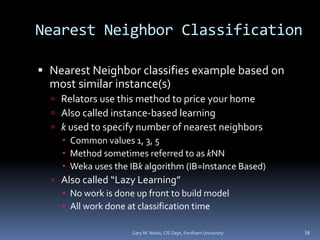

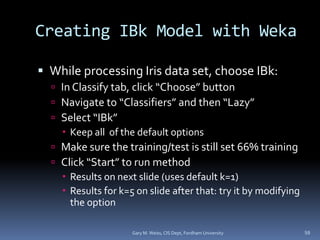

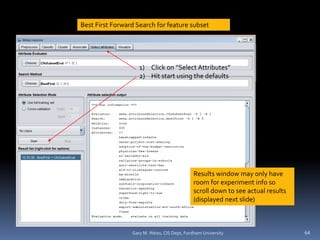

This document gives an overview of the Weka data mining software, covering its capabilities for data preprocessing, classification, and feature selection. It is divided into sections on Weka basics, preprocessing with Weka, decision trees in Weka, and other classifiers and feature selection. It emphasizes hands-on experience and encourages readers to install Weka and work through the examples on their own computers.