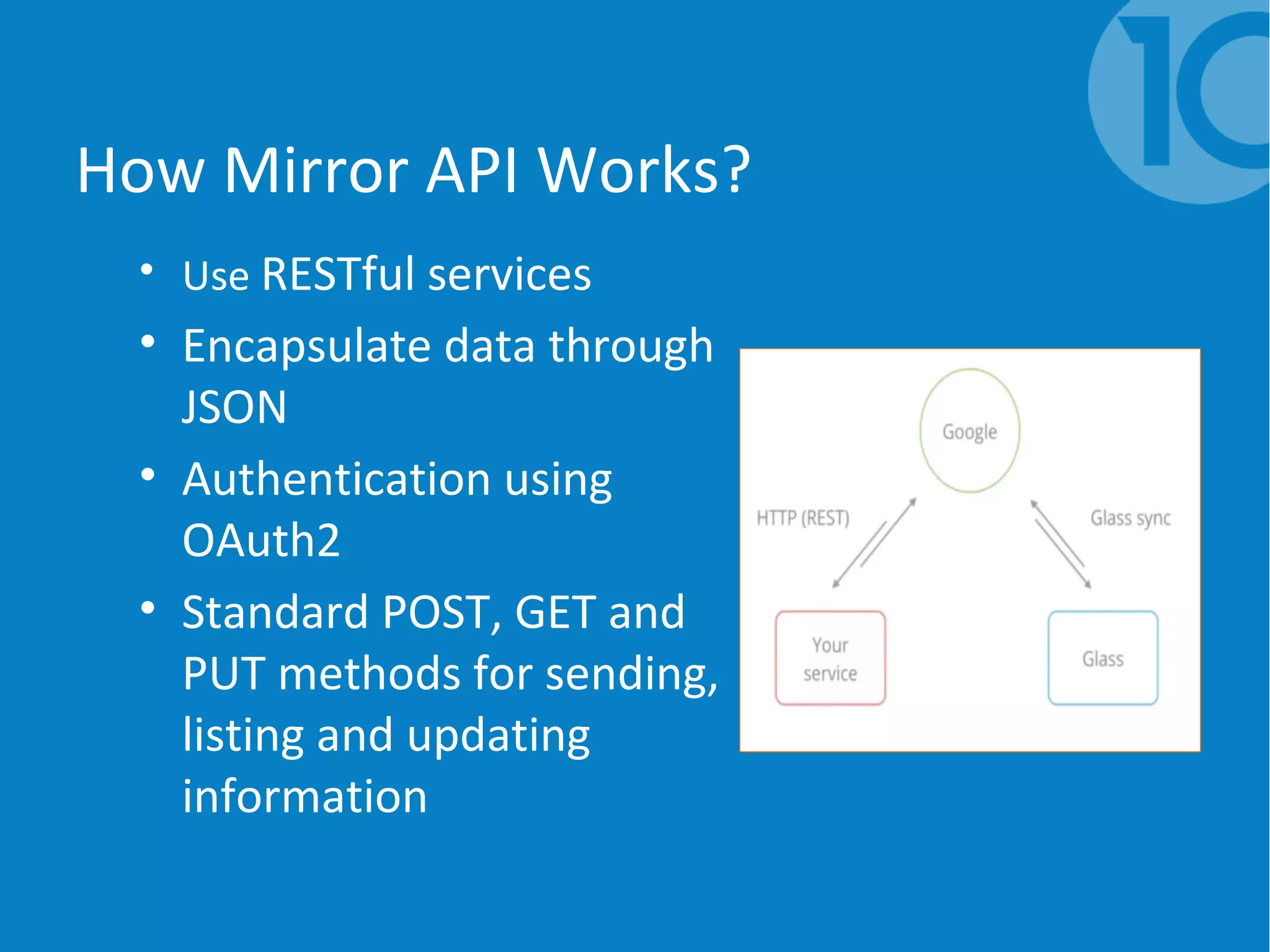

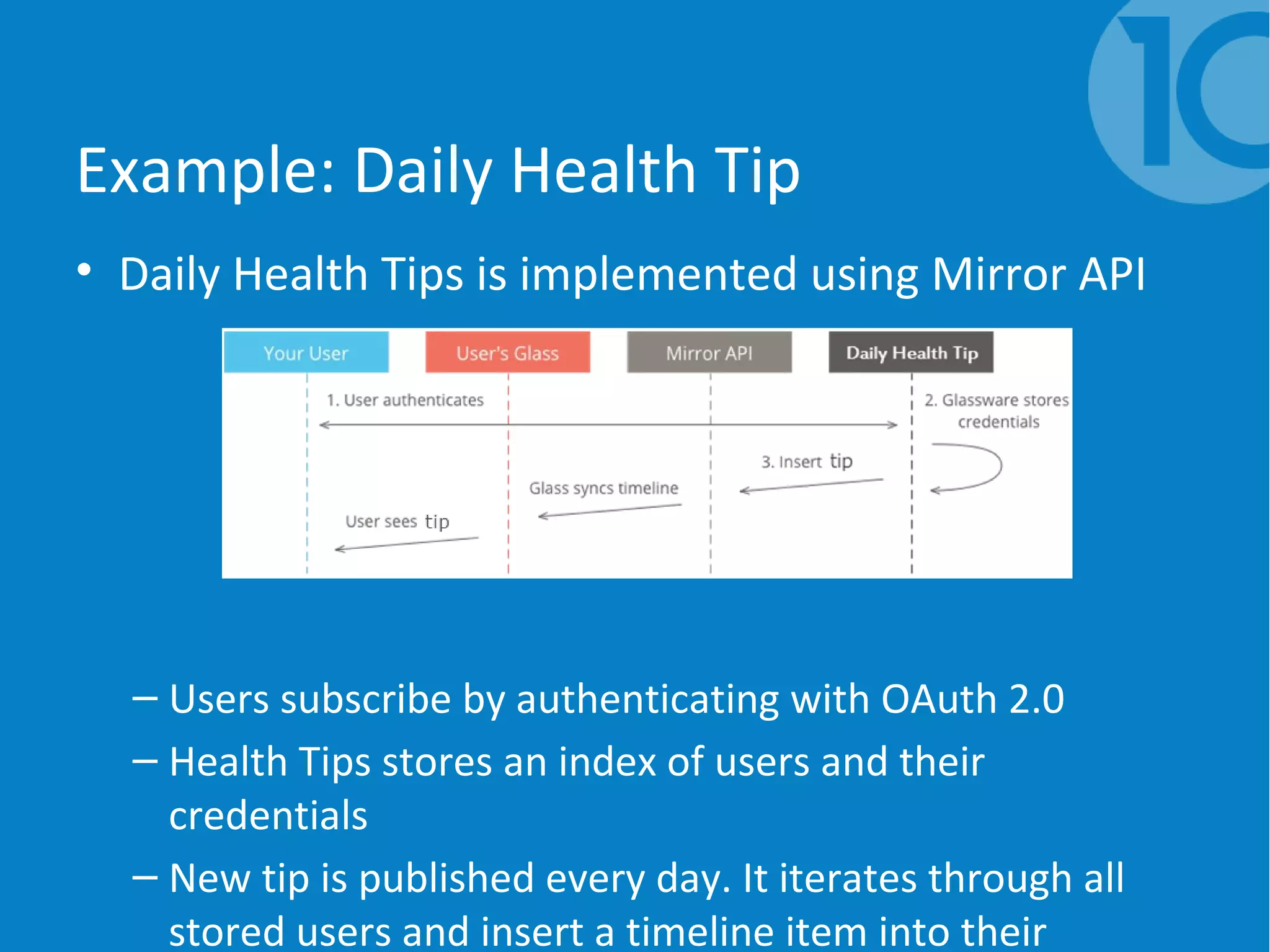

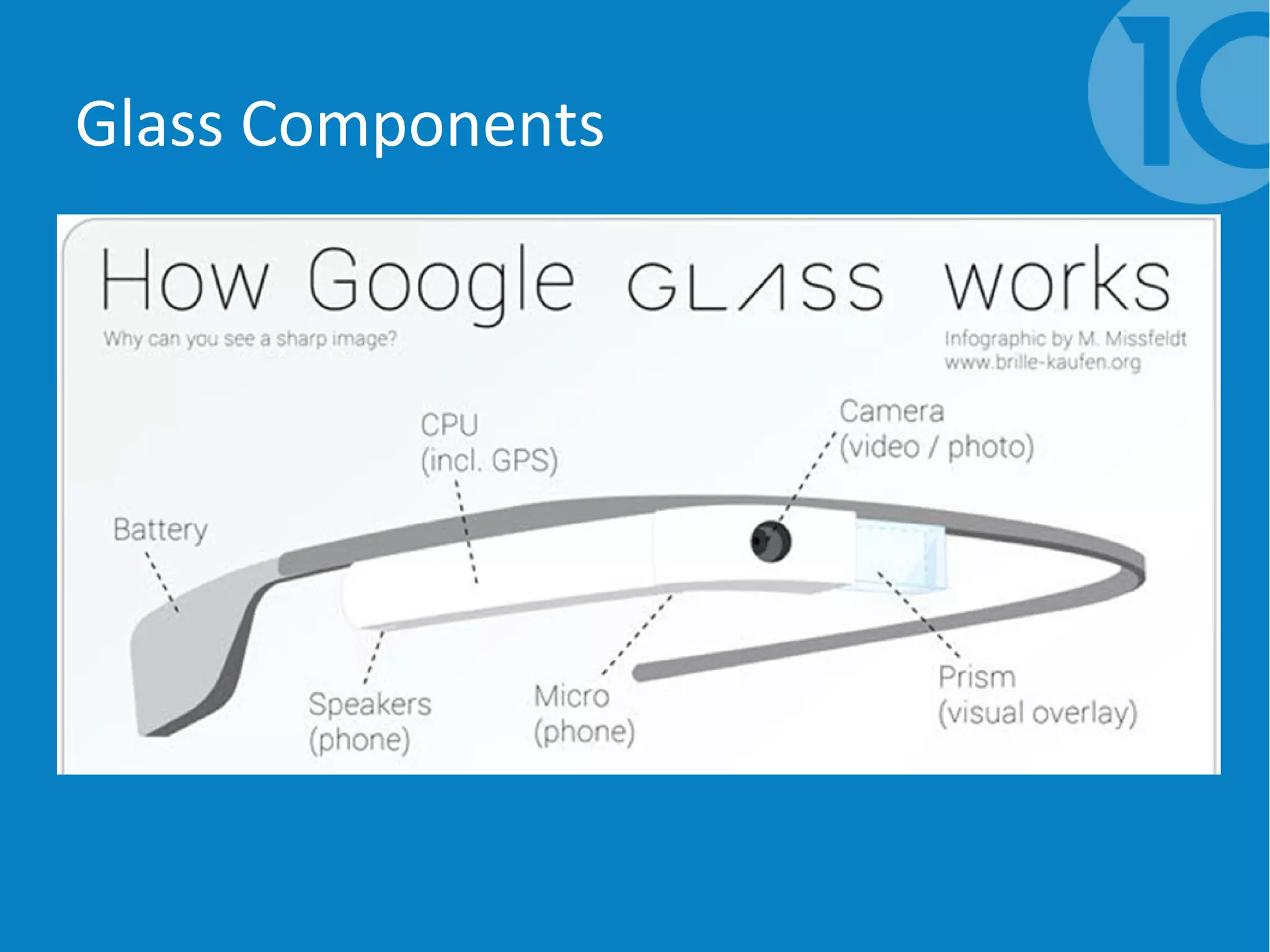

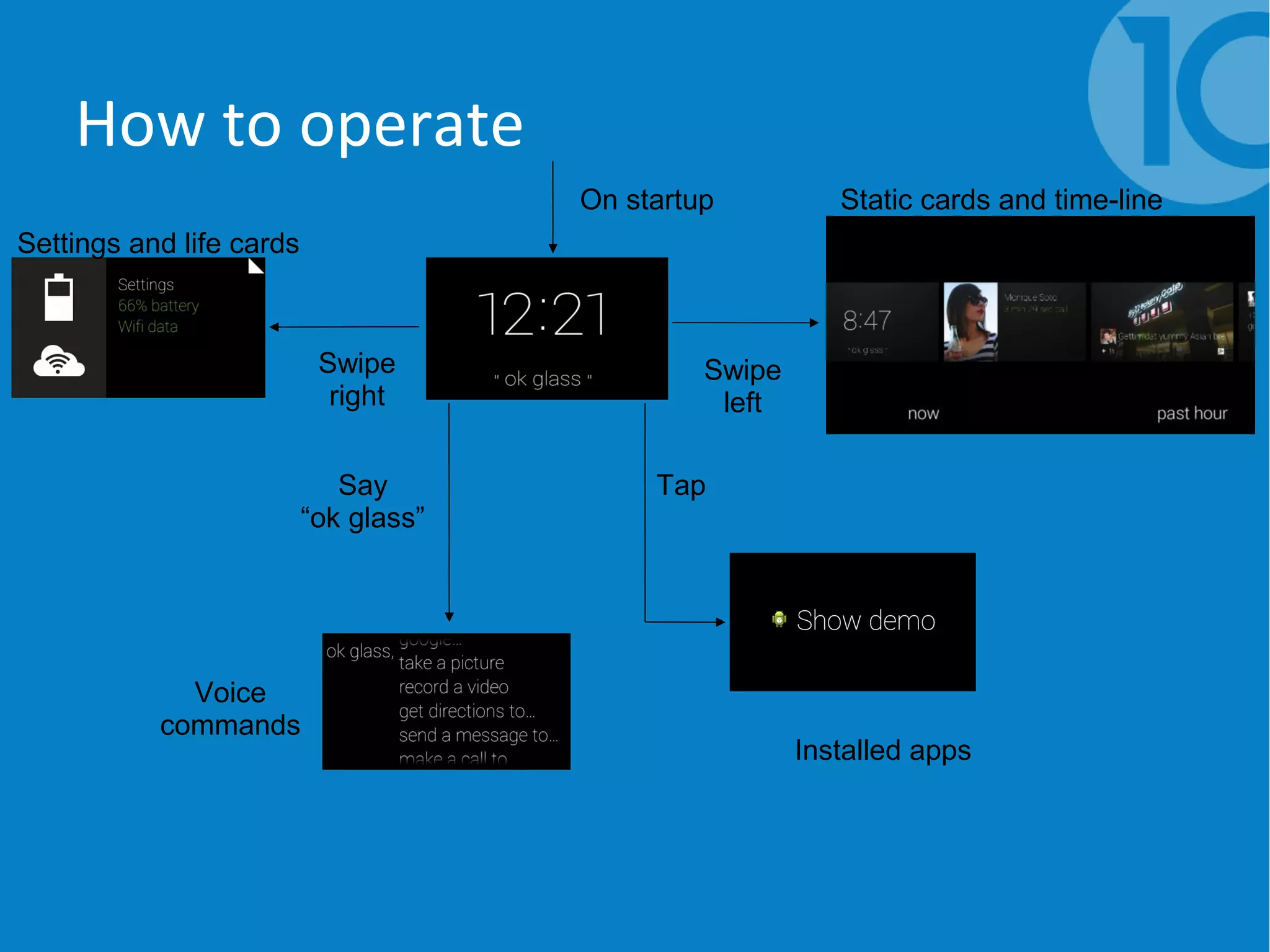

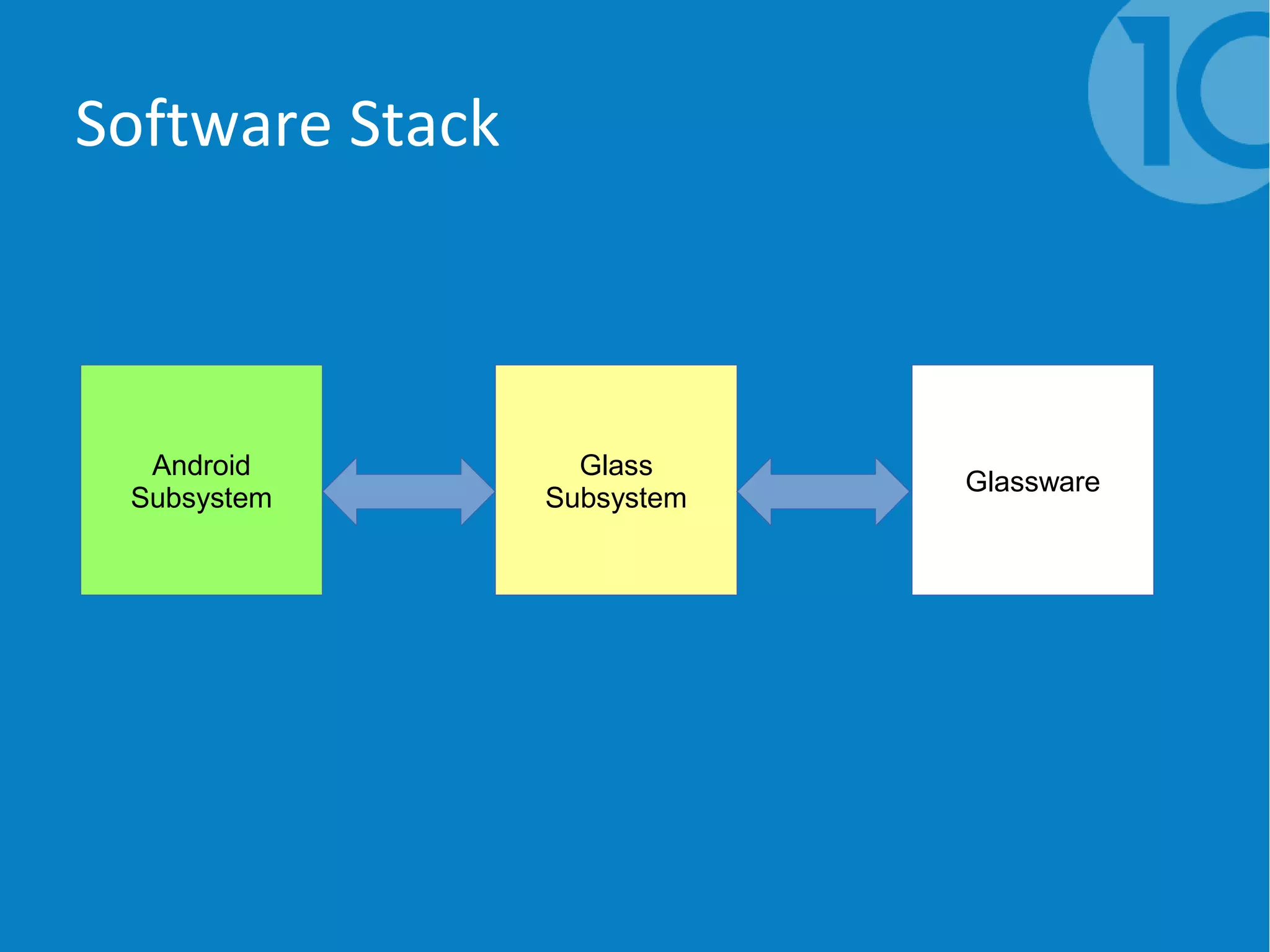

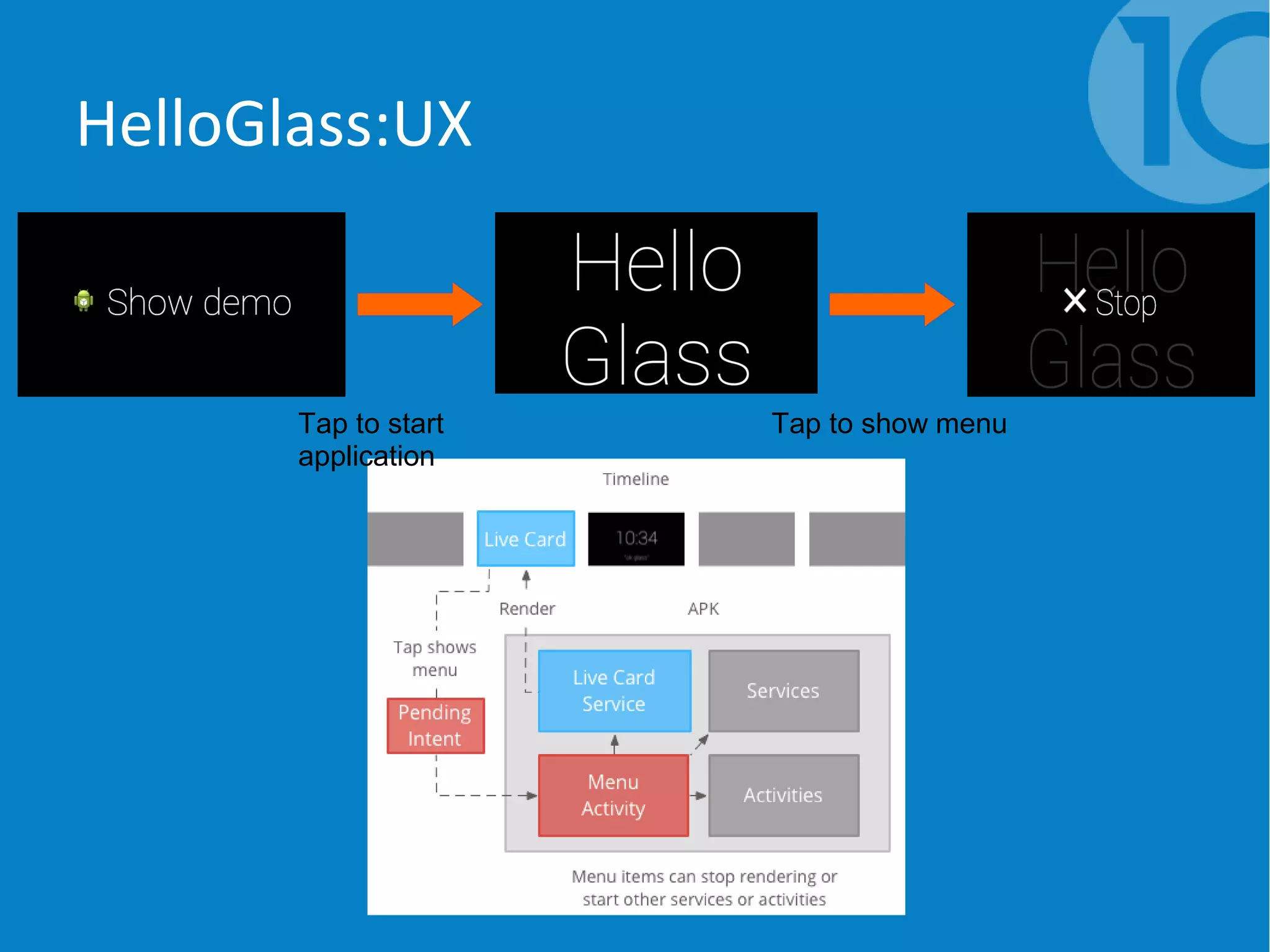

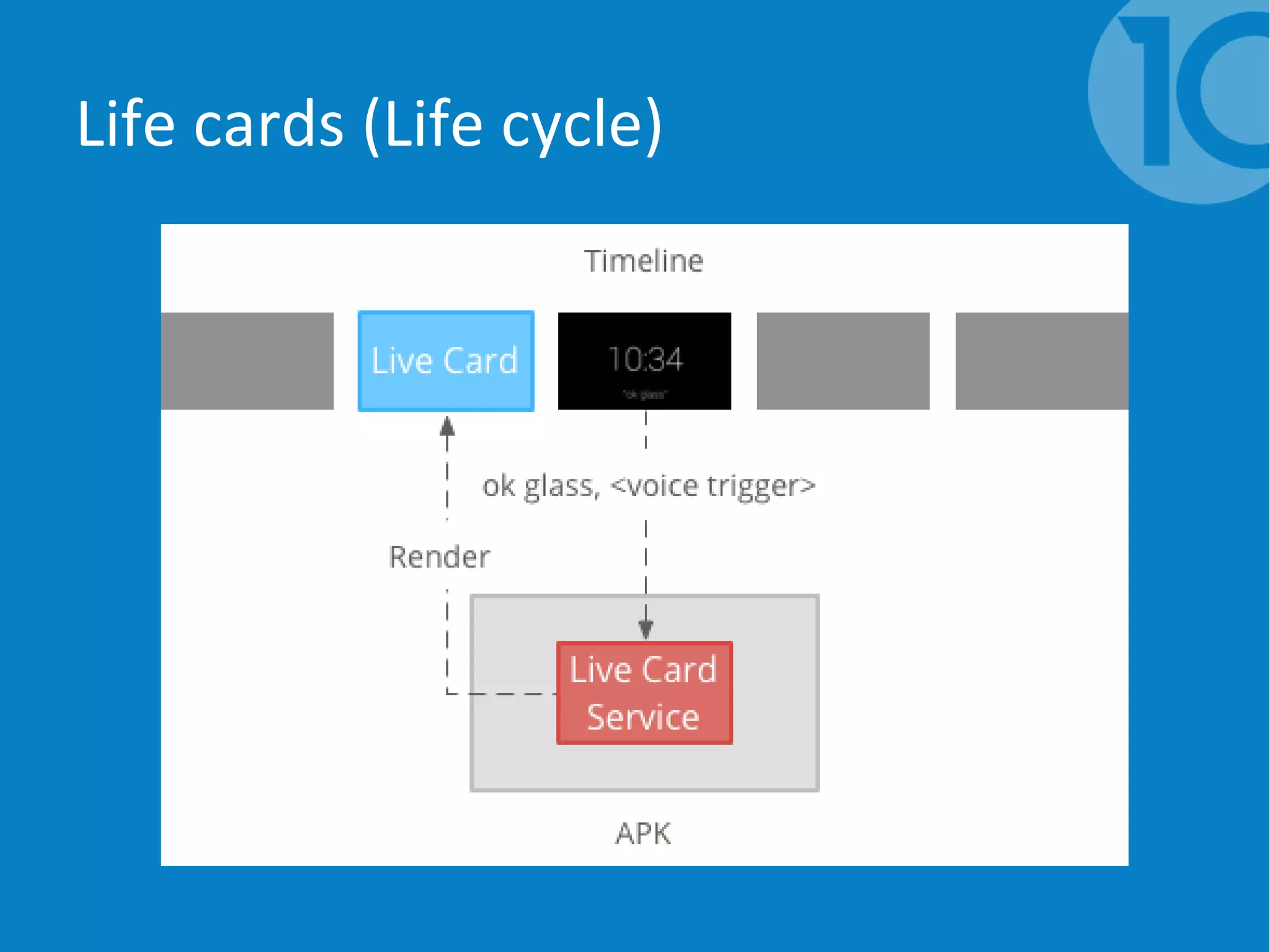

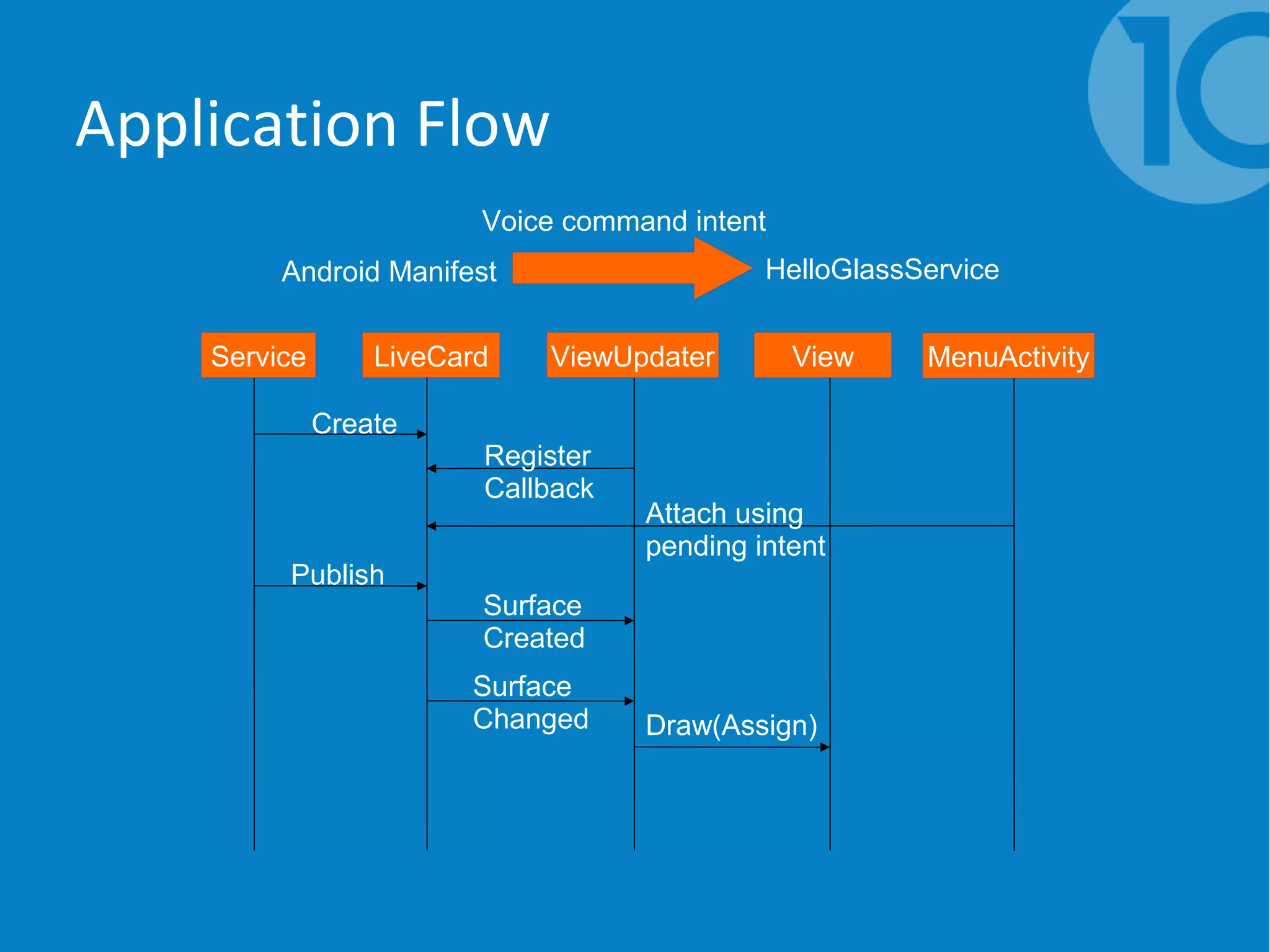

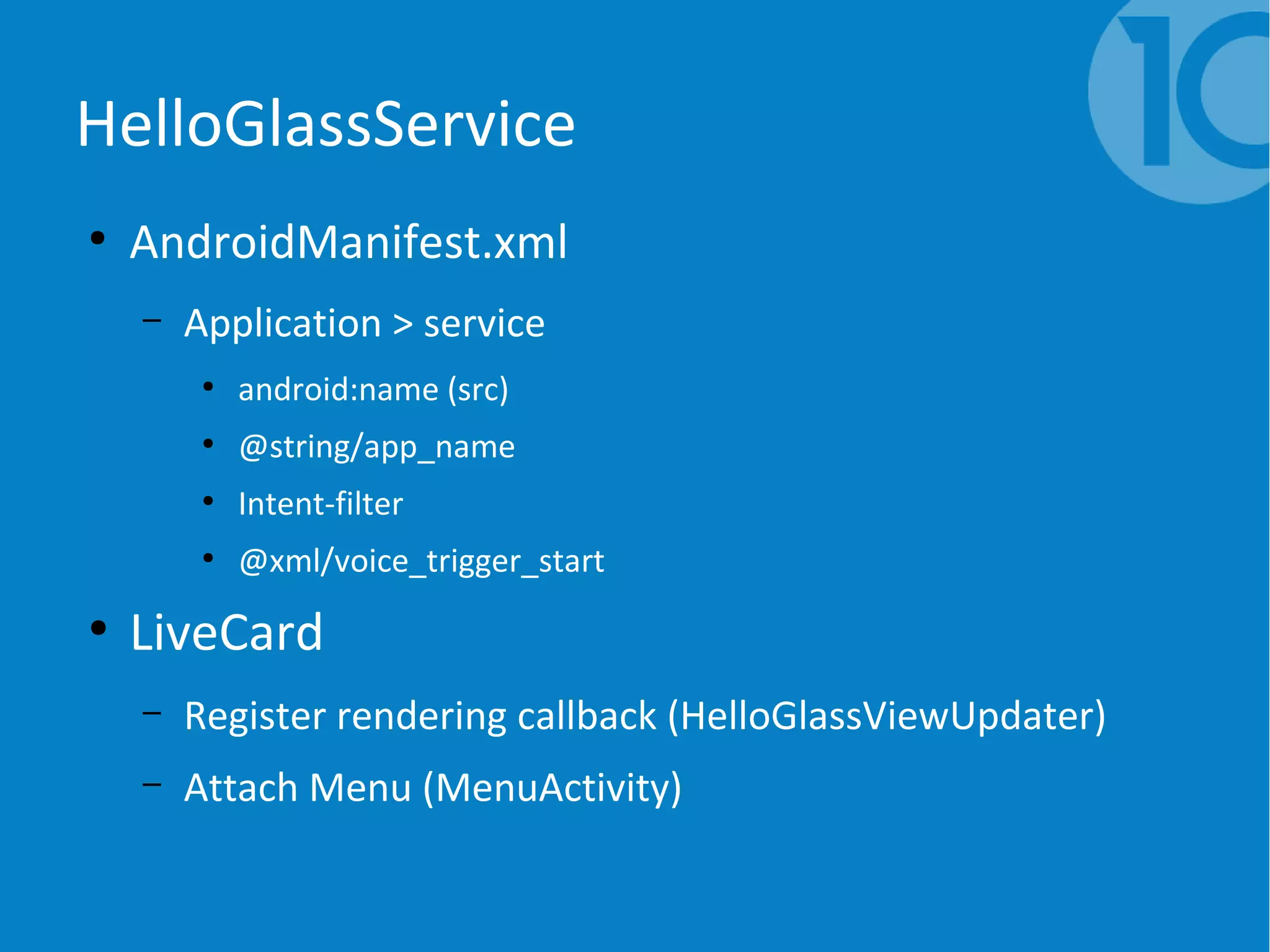

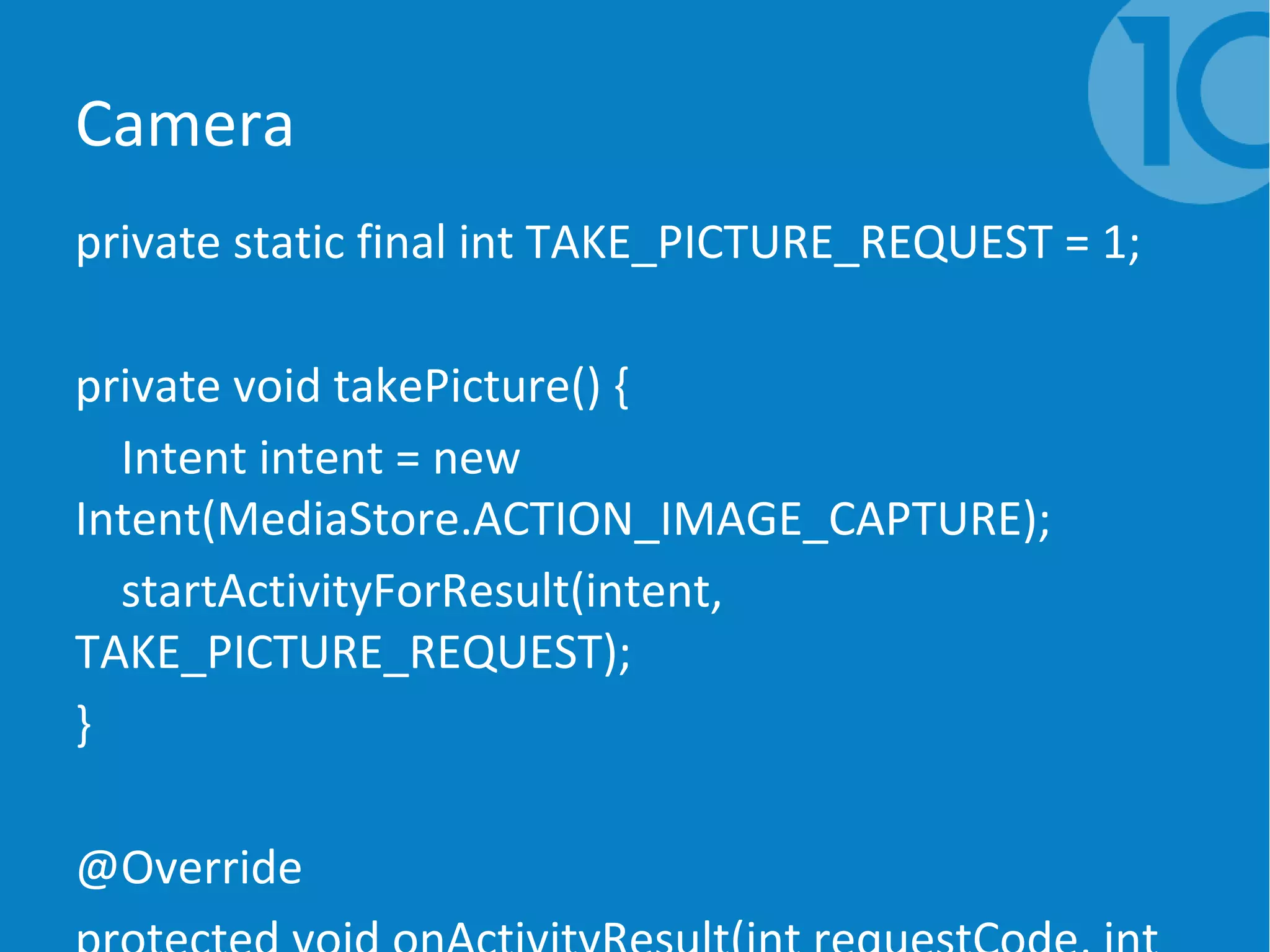

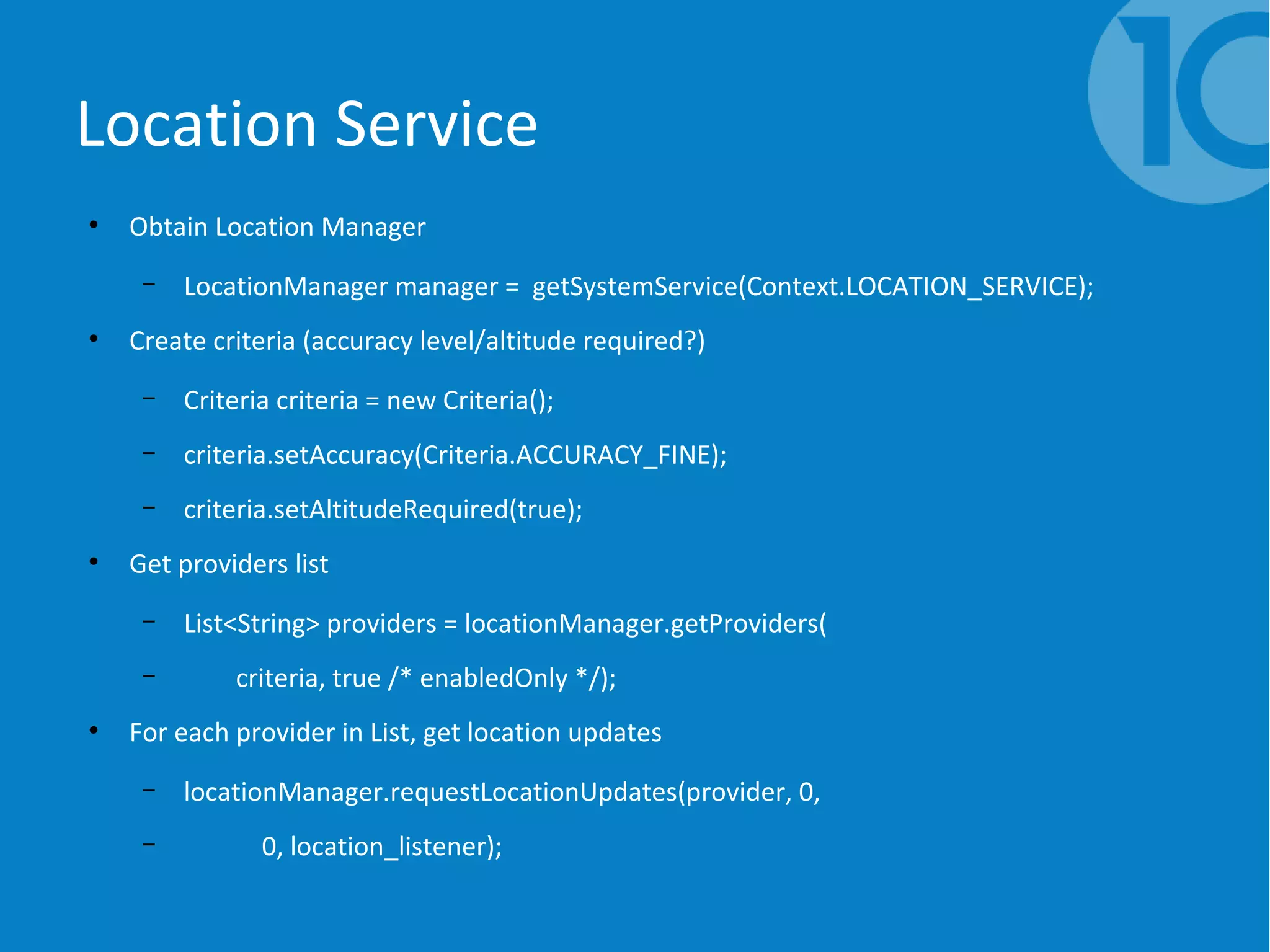

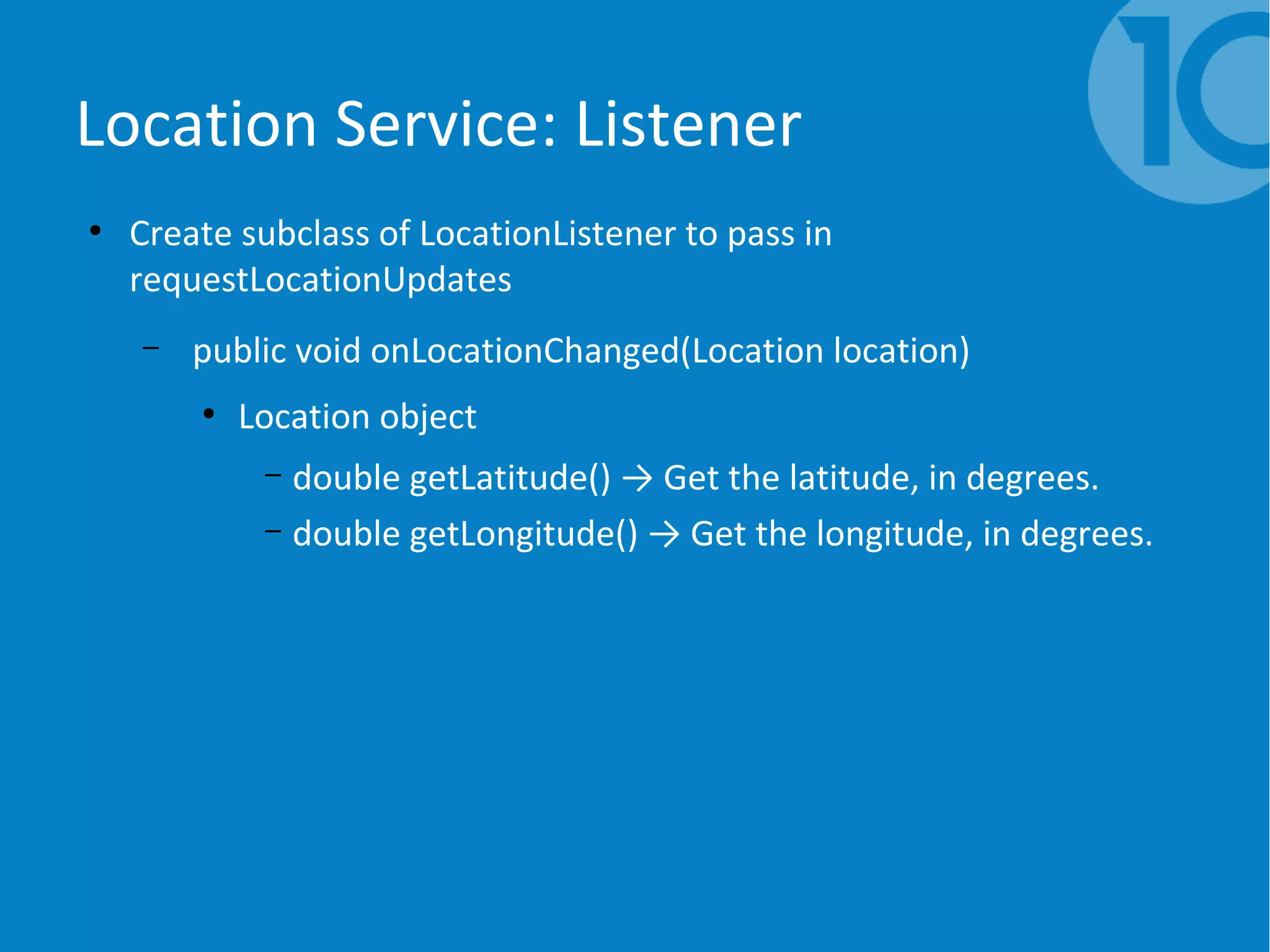

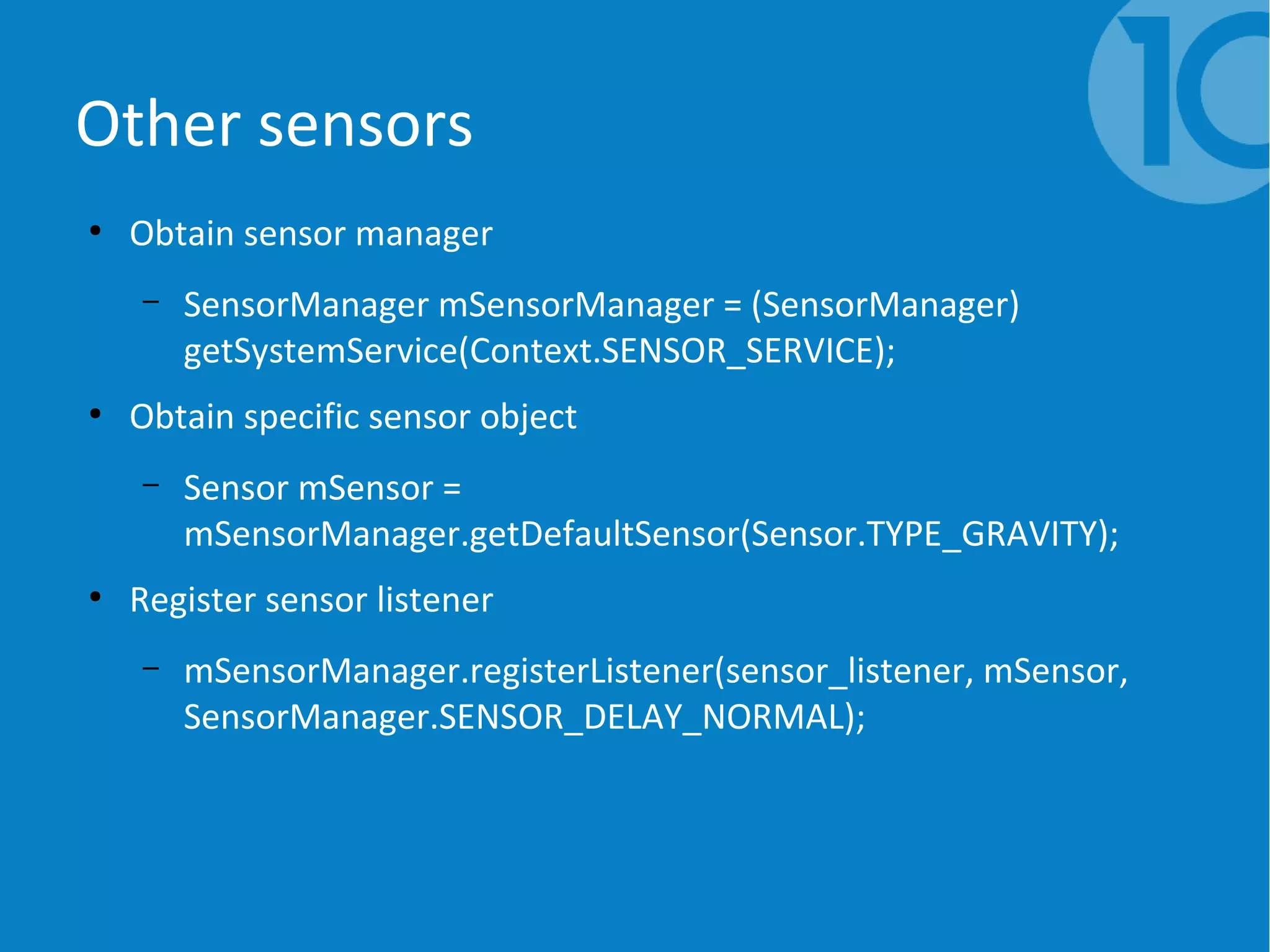

This document introduces application development for Google Glass. It describes the key components of Glass (live cards, static cards, and immersions), covers the software stack, and walks through building a basic "Hello World" application with the Glass Development Kit (GDK). It also discusses design patterns for Glassware, including ongoing tasks, services, and the Mirror API, and gives examples of using sensors, location services, and other Glass features in applications. Finally, it discusses design principles for Glass and explores some example Glass apps.

![Other sensors: Listener](https://image.slidesharecdn.com/finalpresentation-140807050551-phpapp02/75/Introduction-to-google-glass-44-2048.jpg)

Other sensors: Listener

- Implement `SensorEventListener` and pass the instance to `registerListener`
  - `public final void onSensorChanged(SensorEvent event)`
- Read the reading from the `SensorEvent` object
  - `float sensorVal = event.values[0];`
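The listener pattern on this slide can be sketched in plain Java. This is a minimal illustration, not Glass or Android code: the nested `SensorEvent` and `SensorEventListener` types are simplified stand-ins for the Android classes of the same name, and `FakeSensorManager` and `LastValueListener` are hypothetical helpers introduced so the sketch runs on a desktop JVM. On a real device the listener also has to implement `onAccuracyChanged`, and `SensorManager.registerListener` takes a `Sensor` and a sampling rate in addition to the listener.

```java
import java.util.ArrayList;
import java.util.List;

// Plain-JVM sketch. The nested types are simplified stand-ins (assumptions),
// not the real android.hardware classes.
class SensorListenerSketch {

    /** Stand-in for android.hardware.SensorEvent: only the values array. */
    static final class SensorEvent {
        final float[] values;

        SensorEvent(float... values) {
            this.values = values;
        }
    }

    /** Stand-in for android.hardware.SensorEventListener (one callback only). */
    interface SensorEventListener {
        void onSensorChanged(SensorEvent event);
    }

    /** Hypothetical manager: stores listeners and delivers events to them. */
    static final class FakeSensorManager {
        private final List<SensorEventListener> listeners = new ArrayList<>();

        void registerListener(SensorEventListener listener) {
            listeners.add(listener);
        }

        void unregisterListener(SensorEventListener listener) {
            listeners.remove(listener);
        }

        void dispatch(SensorEvent event) {
            // Iterate over a copy so a listener may unregister itself safely.
            for (SensorEventListener listener : new ArrayList<>(listeners)) {
                listener.onSensorChanged(event);
            }
        }
    }

    /** Hypothetical listener that remembers the most recent first value. */
    static final class LastValueListener implements SensorEventListener {
        private float lastValue = Float.NaN;

        @Override
        public final void onSensorChanged(SensorEvent event) {
            // Same read as on the slide: the first element of the values array.
            float sensorVal = event.values[0];
            lastValue = sensorVal;
        }

        float lastValue() {
            return lastValue;
        }
    }

    public static void main(String[] args) {
        FakeSensorManager manager = new FakeSensorManager();
        LastValueListener listener = new LastValueListener();

        manager.registerListener(listener);
        manager.dispatch(new SensorEvent(42.5f));
        System.out.println("Last sensor value: " + listener.lastValue());

        // Unregister when readings are no longer needed.
        manager.unregisterListener(listener);
    }
}
```

Unregistering mirrors the usual Android practice of releasing the sensor when the activity or service pauses, which matters on a battery-constrained device such as Glass.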