This project develops a method to interpolate evolutionary tracks for stars of arbitrary masses using existing published model grids. The method is tested and shown to be accurate to better than 1%. Given observations of a star's radius and luminosity, the project also determines if the star's properties place it in an ambiguous region, requiring a mass range, or a well-defined region, with a single mass value. A point-in-polygon algorithm is used to classify regions. This allows study of how stellar rotation affects evolution by comparing observational properties to evolutionary tracks.

![Interpolating Evolutionary Tracks of Rapidly Rotating Stars

Danielle Kumpulanian

Department of Physics & Astronomy, Stony Brook University

(Department of Physics, Applied Physics & Astronomy, Rensselaer Polytechnic Institute)

(Dated: August 5, 2005)

This project has two purposes: to provide an accurate method of interpolating data from

published evolutionary models grids, and to solve the problem of inferring the mass or

range of possible mass values of a star given its radius and luminosity. The first is

necessary because the grids only cover a limited set of mass values, and studying an

object of an arbitrary mass requires data for that mass to be interpolated and used.

In this case, the stellar models grids were those published by A. Claret in 2004. These

tracks were plotted on a log(radius) vs. log(luminosity) diagram. The interpolation

method was tested by using the existing tracks and linearly interpolating one intermediate

track. This test showed that this interpolation method could be used, accurate to better

than 1%, for any log(mass) in the range of the log(mass) values given in the grid, and

therefore, new models grids could be accurately generated using existing ones.

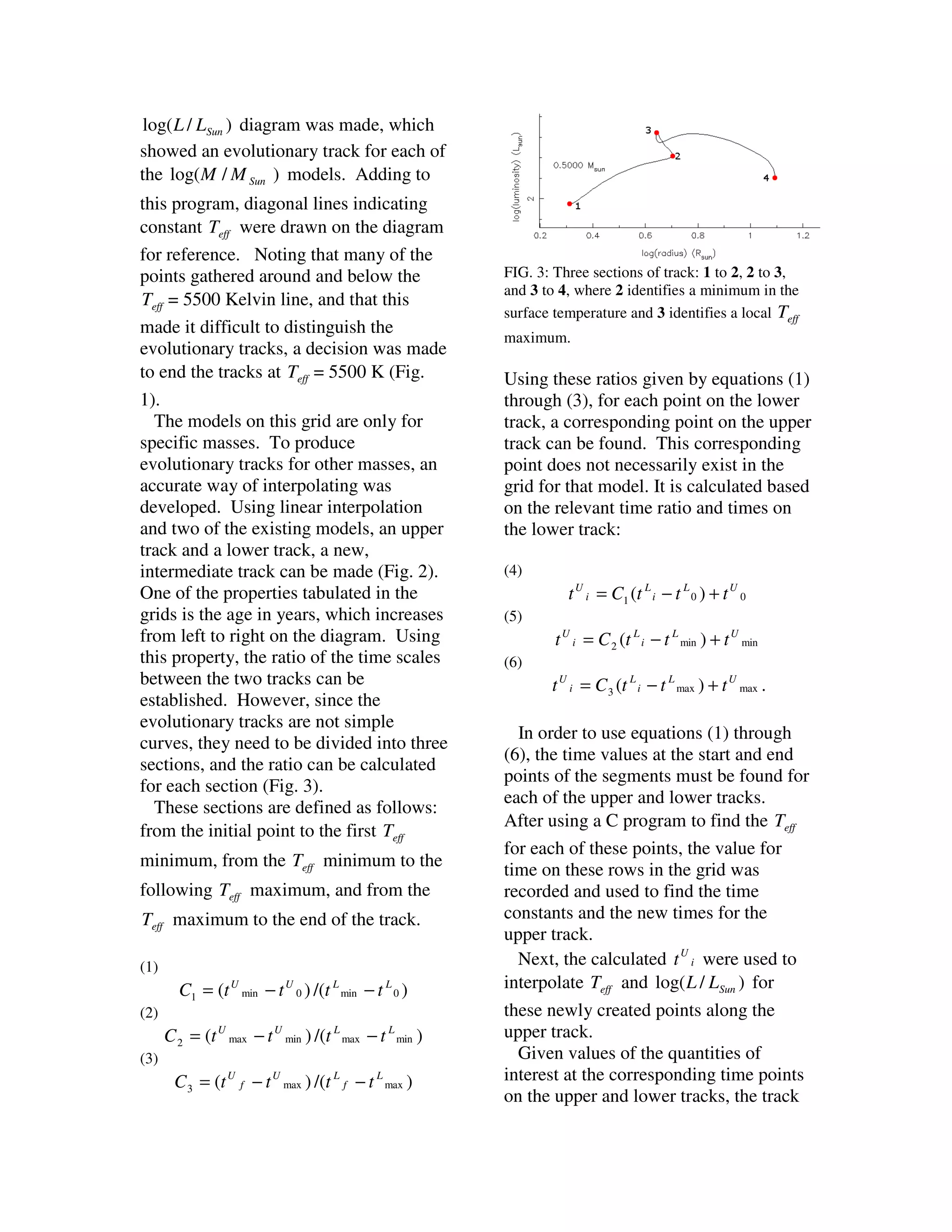

The evolutionary tracks plotted on the log(radius) vs. log(luminosity) plot are complicated and

include loops. Because of this, the tracks are divided into three sections, with the middle section

being the loop area. In this area, multiple values for log(mass) can exist, and a range of values can

be determined. In the other areas, one value can be found. So the process requires finding first

which area the [log(radius),log(luminosity)] point in question occupies and, if it is in the loop

region, finding the range of log(mass) involved.

This project fits into a larger context. Two properties of stars, radius and luminosity, are

known to remain unchanged when the stars rotate. These can be found using

observational techniques, and using these two quantities, other properties of the star can

be deduced. This allows for study of the star's evolutionary state and how rotation affects

stellar evolution.

Introduction

project, is the grid published by A.

Stellar evolution is the life history of a Claret1. To be useful, the grids need to

star. Since the lifetimes of stars can be be able to be manipulated to produce

millions or billions of years, a single star tracks for any arbitrary mass. A solution

cannot be observed for its entire lifetime. to this problem is to interpolate data for

All different stages of evolution can be arbitrary masses using the given data

observed. Stars are always being born from the grids.

and always dying, so there are examples Another matter is to estimate the mass

of all stages of stellar life. Using these of a star by comparing observations of L,

observations, detailed calculations can Teff and R with predictions. This can

be made about how the properties of result in a single estimated mass or a

stars of a given mass will change with range of values for mass, depending on

time. These calculations can be used to which segment of the evolutionary track

draw a predicted evolutionary track of a the given star is on.

star with a given mass.

“Grids” of these evolutionary tracks Interpolating Evolutionary Tracks

are published, with a considerable

difference between each mass, so not A C program was written to plot the

every possible mass of a star is data from Claret’s grid. Using

accounted for. Specifically, used in this PGPLOT, a log( R / RSun ) vs.](https://image.slidesharecdn.com/dkumpulanian2005reupaper-130122055250-phpapp01/75/Interpolating-evolutionary-tracks-of-rapidly-rotating-stars-paper-1-2048.jpg)

for the intermediate mass value can be produced by linearly interpolating in $\log(M/M_{\mathrm{Sun}})$.

$$\mathrm{wt}_{+} = \frac{\log(M/M_{\mathrm{Sun}})_{L} - \log(M/M_{\mathrm{Sun}})}{\log(M/M_{\mathrm{Sun}})_{L} - \log(M/M_{\mathrm{Sun}})_{U}} \tag{7}$$

$$\mathrm{wt}_{-} = \frac{\log(M/M_{\mathrm{Sun}}) - \log(M/M_{\mathrm{Sun}})_{U}}{\log(M/M_{\mathrm{Sun}})_{L} - \log(M/M_{\mathrm{Sun}})_{U}} \tag{8}$$

$$x = x_{1}\,\mathrm{wt}_{+} + x_{2}\,\mathrm{wt}_{-} \tag{9}$$

In equation (9), $x$ represents the quantity being interpolated. The subscripts $L$ and $U$ denote the lower and upper tracks. The two weights sum to 1, with $\mathrm{wt}_{+} = 1$ at the upper mass and $\mathrm{wt}_{-} = 1$ at the lower mass, so $x_{1}$ and $x_{2}$ are read here as the values on the upper and lower tracks, respectively.

To test this method of interpolation, an existing evolutionary track was interpolated from the tracks above and below it, and all three were plotted on a $\log(R/R_{\mathrm{Sun}})$ vs. $\log(L/L_{\mathrm{Sun}})$ diagram, along with the actual model for the intermediate value. Specifically, the $\log(M/M_{\mathrm{Sun}}) = 0.6000$ track was reconstructed from the $\log(M/M_{\mathrm{Sun}}) = 0.5000$ and $\log(M/M_{\mathrm{Sun}}) = 0.7000$ tracks (Fig. 2). The intermediate track appears to be reproduced to within ~2%. Interpolation between adjacent tracks, which are spaced more closely than the two used in this test, is expected to be much more accurate.

With the method shown to work, a C subroutine was written to carry it out. Given a $\log(M/M_{\mathrm{Sun}})$ within the range of those in the grid, the subroutine uses the interpolation method described above to create a new evolutionary track containing all of the tabulated properties, not only $T_{\mathrm{eff}}$, $\log(R/R_{\mathrm{Sun}})$, and $\log(L/L_{\mathrm{Sun}})$. Another subroutine gives the option of writing this data to a file, and yet another subroutine uses PGPLOT to plot the new track and the two tracks used to compose it.

Results: Interpolation of Evolutionary Tracks

Testing the interpolation method showed it to be accurate to better than 2% for a difference of 0.2000 in $\log(M/M_{\mathrm{Sun}})$ between the two models used to interpolate the third model. When the method is used to interpolate an arbitrary track, it should be accurate to better than 1% as the difference between the upper and lower tracks decreases.

Finding Mass, given Radius and Luminosity

Consider a $\log(R/R_{\mathrm{Sun}})$ vs. $\log(L/L_{\mathrm{Sun}})$ diagram where the space between tracks is much smaller than on the diagram in Fig. 2. Not only does each track loop over itself, it loops over other tracks as well. In this ambiguous region of the diagram, an infinite number of tracks pass through a given point [$\log(R/R_{\mathrm{Sun}})$, $\log(L/L_{\mathrm{Sun}})$]. This means that there is a range of possible masses for a star with these properties. One significant problem to solve is how to tell whether a point is in a particular region, given values for its luminosity and radius.

If the tracks are divided into three sections, a polygon can be drawn by connecting the points of minimum $T_{\mathrm{eff}}$, connecting the points of maximum $T_{\mathrm{eff}}$, and connecting the end points of these two groups (Fig. 4). If a point [$\log(R/R_{\mathrm{Sun}})$, $\log(L/L_{\mathrm{Sun}})$] is within this polygon, a range of values for its $\log(M/M_{\mathrm{Sun}})$ can be found. If it is
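The region test described here, deciding whether a [log(R/R_Sun), log(L/L_Sun)] point lies inside a polygon built from track-section endpoints, can be sketched with a standard even-odd (ray-casting) test. This is a minimal illustration and not the project's code: the function name `point_in_polygon`, the separate `xs`/`ys` vertex arrays, and the choice of ray-casting are all assumptions.

```c
#include <assert.h>
#include <stddef.h>

/* Even-odd (ray-casting) point-in-polygon test.
 * xs[i], ys[i] hold the polygon vertices in order, for example the
 * log(R/R_Sun) and log(L/L_Sun) of track-section endpoints
 * (hypothetical layout, chosen for illustration).
 * Returns 1 if (px, py) is inside and 0 otherwise; a point lying
 * exactly on an edge may be classified either way. */
int point_in_polygon(const double *xs, const double *ys, size_t n,
                     double px, double py)
{
    int inside = 0;
    size_t i, j;

    assert(n >= 3); /* a polygon needs at least three vertices */

    /* Visit every edge (vertex j to vertex i) and toggle the flag each
     * time the edge crosses the horizontal ray from the point toward +x. */
    for (i = 0, j = n - 1; i < n; j = i++) {
        /* The edge straddles the line y = py only if its endpoints lie
         * on opposite sides; this also guarantees ys[i] != ys[j]. */
        int straddles = (ys[i] > py) != (ys[j] > py);
        if (straddles) {
            /* x coordinate where the edge meets the line y = py */
            double x_cross = xs[j]
                + (py - ys[j]) * (xs[i] - xs[j]) / (ys[i] - ys[j]);
            if (px < x_cross)
                inside = !inside;
        }
    }
    return inside;
}
```

For a unit square with vertices (0,0), (1,0), (1,1), (0,1), the function reports the point (0.5, 0.5) as inside and (1.5, 0.5) as outside. An odd number of crossings means the point is inside, which is why the flag is toggled rather than counted.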

Conclusions

A method of interpolating data from existing evolutionary model grids was developed and tested, accurate to better than 1%.

Because the evolutionary tracks are complex, they are divided into three sections: two simple sections and one uncertain section. Polygons containing these sections can be drawn using multiple tracks, with vertices at the endpoints of the track sections. If a point [$\log(R/R_{\mathrm{Sun}})$, $\log(L/L_{\mathrm{Sun}})$] falls within one of the two simple sections, its $\log(M/M_{\mathrm{Sun}})$ can be calculated. If it falls within the middle, multivalued area, a range of values for its $\log(M/M_{\mathrm{Sun}})$ can be found. To decide which area the point is in, a point-in-polygon algorithm must be used.

After the $\log(R/R_{\mathrm{Sun}})$ and $\log(L/L_{\mathrm{Sun}})$ of a star are obtained through observation, the remaining properties can be deduced, and an evolutionary track for that star can be drawn. Since $\log(R/R_{\mathrm{Sun}})$ and $\log(L/L_{\mathrm{Sun}})$ are independent of rotation, this allows for study of the star’s evolutionary state and of how its rotation affects its evolution.

Acknowledgements

The author would like to thank Prof. Deane Peterson for advising her on this project. Also, thanks to Joseph Yasi for his help with debugging. This project was made possible by a grant from the National Science Foundation (Phy-0243935).

References

1. A. Claret, Astron. Astrophys. 424, 919 (2004).

2. D. R. Finley, Point-In-Polygon Algorithm (1998), URL: http://www.alienryderflex.com/polygon/.
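The linear interpolation in log(M/M_Sun) of equations (7) to (9) can be sketched in C as follows. This is a minimal illustration, not the project's subroutine. The function names `interp_weights` and `interp_track` are hypothetical. Two further assumptions are made: both tracks are tabulated at the same number of matching evolutionary points, and the weights are read so that they sum to 1 (wt+ multiplies the upper-mass track, wt- the lower-mass track).

```c
#include <assert.h>
#include <stddef.h>

/* Weights of equations (7) and (8), read so that they sum to 1:
 * wt_plus is 1 at the upper mass and wt_minus is 1 at the lower mass.
 * All masses are given as log(M/M_Sun). */
void interp_weights(double logm, double logm_lower, double logm_upper,
                    double *wt_plus, double *wt_minus)
{
    double span = logm_lower - logm_upper;

    assert(span != 0.0); /* the two grid tracks must differ in mass */
    *wt_plus  = (logm_lower - logm) / span;
    *wt_minus = (logm - logm_upper) / span;
}

/* Equation (9) applied point by point to one tabulated quantity
 * (for example log(R/R_Sun)):
 *     out[i] = upper[i] * wt_plus + lower[i] * wt_minus
 * Assumes (hypothetically) that lower[] and upper[] hold the quantity
 * at n matching evolutionary points along the two grid tracks. */
void interp_track(const double *lower, const double *upper, size_t n,
                  double logm, double logm_lower, double logm_upper,
                  double *out)
{
    double wt_plus, wt_minus;
    size_t i;

    interp_weights(logm, logm_lower, logm_upper, &wt_plus, &wt_minus);
    for (i = 0; i < n; i++)
        out[i] = upper[i] * wt_plus + lower[i] * wt_minus;
}
```

Calling `interp_weights(0.6, 0.5, 0.7, ...)`, the case used in the test of the method, gives both weights close to 0.5, so each interpolated value is the mean of the two bracketing tracks. Repeating `interp_track` for every tabulated column would build a full intermediate track.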