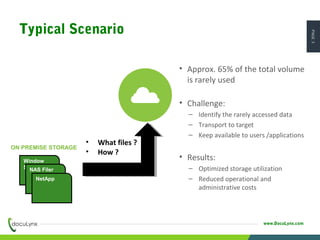

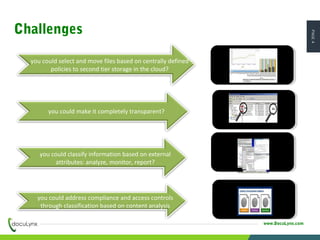

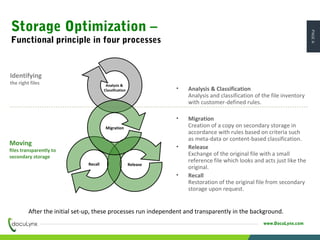

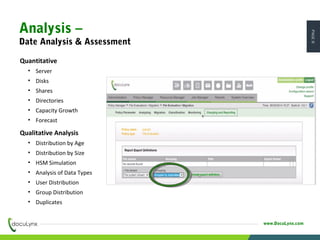

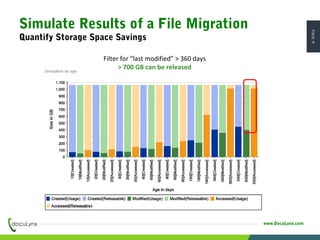

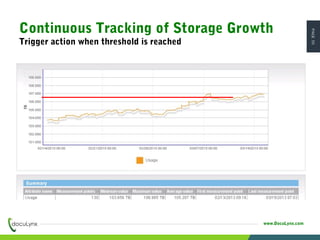

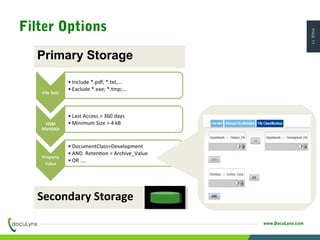

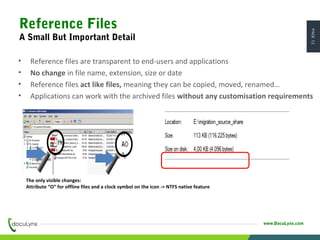

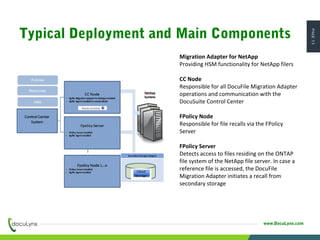

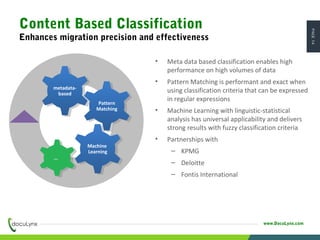

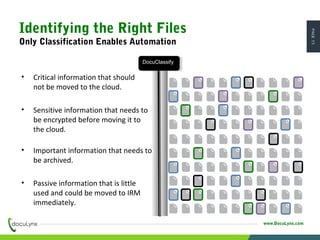

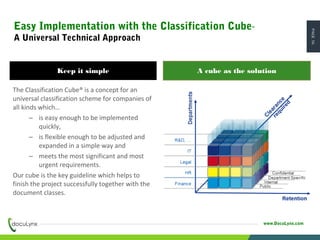

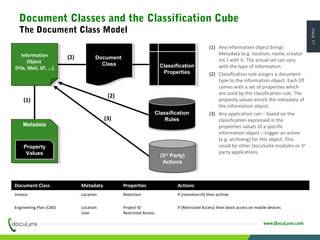

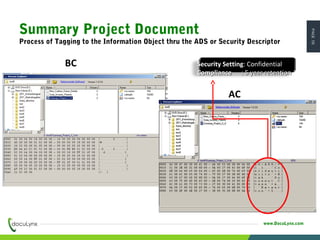

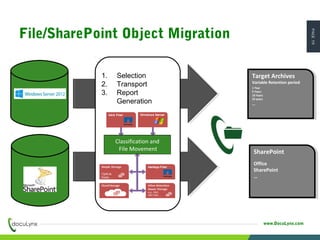

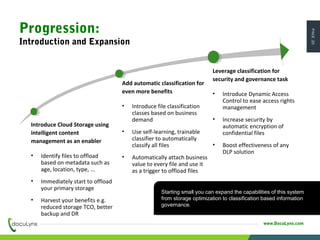

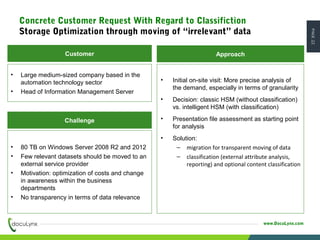

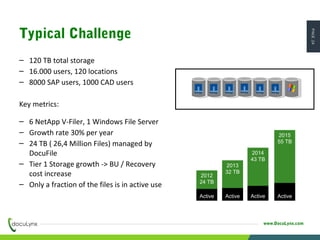

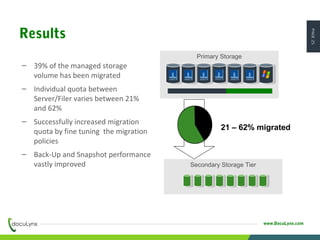

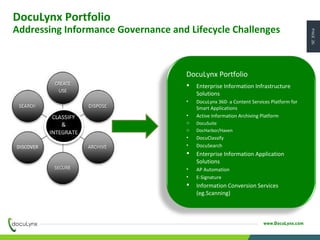

The presentation discusses the challenge of managing exponentially growing unstructured data and presents cloud storage as a way to improve storage utilization and reduce costs. It emphasizes intelligent content management, in which files are automatically classified and migrated according to defined policies, with the stated aim of strengthening security and compliance. It closes with an implementation case that reports a significant reduction in storage total cost of ownership (TCO) and more efficient data management.
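
The policy-based classification and migration described above can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration and does not come from the presentation: the tier names (`primary`, `cloud-standard`, `cloud-archive`), the 90-day and 365-day thresholds, and the `FileInfo`, `Policy`, `classify`, and `plan_migration` names are all hypothetical. It only shows the general idea of evaluating ordered policies against file metadata to pick a storage tier.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

SECONDS_PER_DAY = 86_400


@dataclass(frozen=True)
class FileInfo:
    """Metadata for one file (hypothetical structure)."""
    path: str
    size_bytes: int
    last_access_epoch: float  # seconds since the Unix epoch


@dataclass(frozen=True)
class Policy:
    """A named rule that maps matching files to a target tier."""
    name: str
    target_tier: str
    matches: Callable[[FileInfo, float], bool]


def age_days(f: FileInfo, now: float) -> float:
    """Days elapsed since the file was last accessed."""
    return (now - f.last_access_epoch) / SECONDS_PER_DAY


# Policies are checked in order; the first match wins.
# Thresholds and tier names are illustrative assumptions.
POLICIES: List[Policy] = [
    Policy("cold-archive", "cloud-archive",
           lambda f, now: age_days(f, now) > 365),
    Policy("inactive", "cloud-standard",
           lambda f, now: age_days(f, now) > 90),
]


def classify(f: FileInfo, now: float,
             policies: List[Policy] = POLICIES,
             default_tier: str = "primary") -> str:
    """Return the tier of the first matching policy, else the default."""
    for policy in policies:
        if policy.matches(f, now):
            return policy.target_tier
    return default_tier


def plan_migration(files: List[FileInfo], now: float) -> Dict[str, str]:
    """Map each file path to the tier it should live on."""
    return {f.path: classify(f, now) for f in files}
```

In practice a planner like this would only produce the target tier for each file; the actual data movement, access control, and audit logging would be handled by whatever storage platform is in use.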