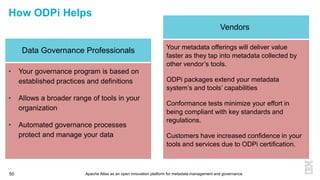

This document discusses metadata and why managing it matters. It introduces Apache Atlas as an open-source platform for metadata management and governance. Key points include:

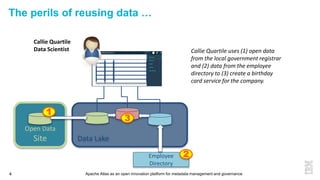

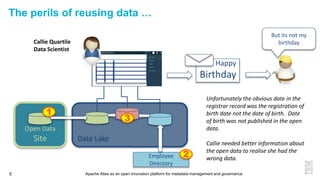

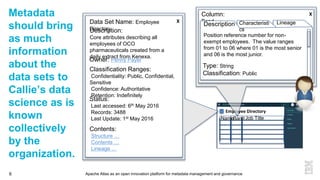

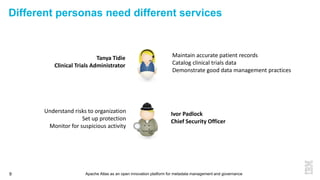

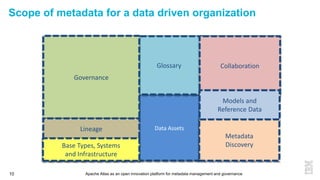

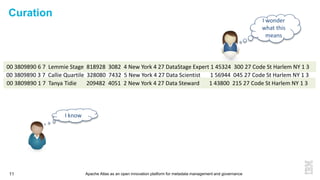

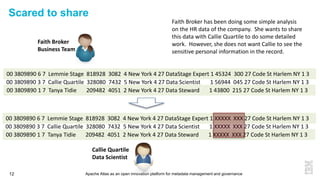

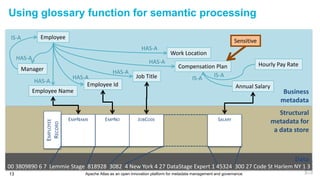

- Metadata is important for data reuse, analytics, and governance because it gives data context and meaning.

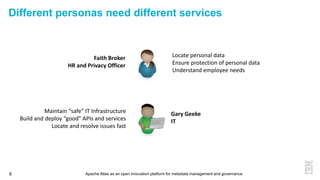

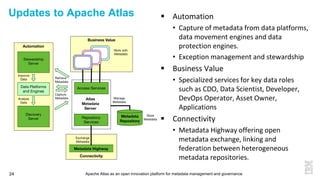

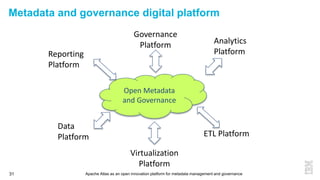

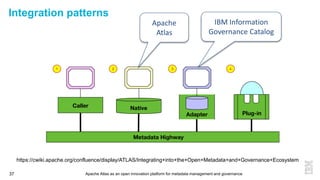

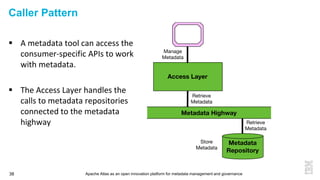

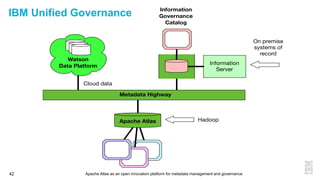

- In practice, metadata is often poorly supported and poorly integrated across tools. Apache Atlas aims to provide an open, unified approach.

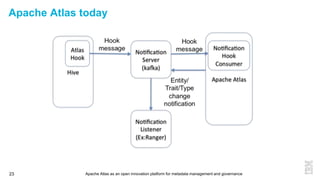

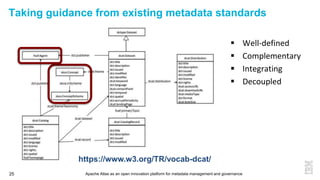

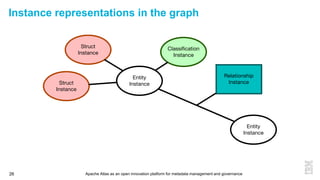

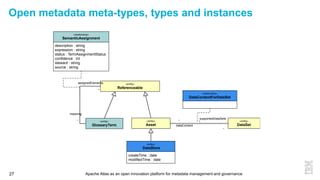

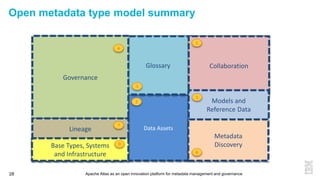

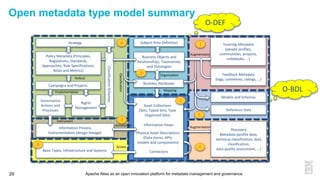

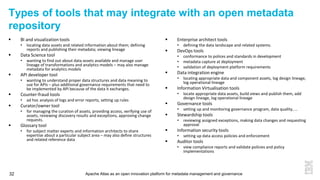

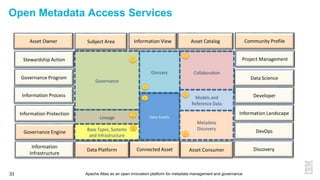

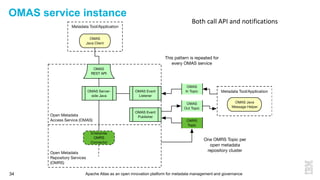

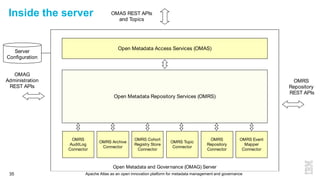

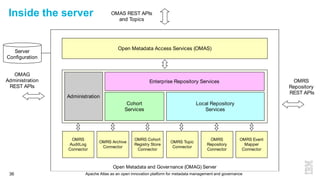

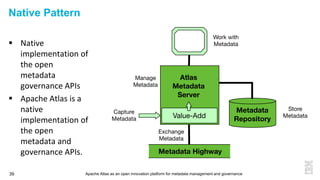

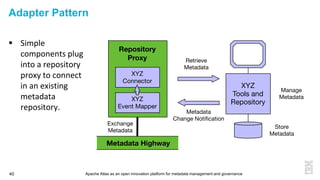

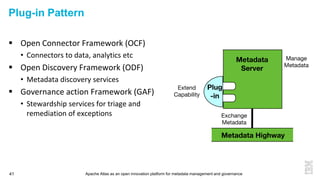

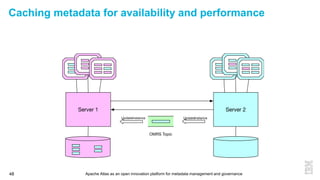

- Apache Atlas has graduated to a top-level Apache project. It provides a type-agnostic metadata store, along with interfaces through which a variety of tools can access it.

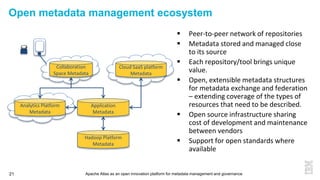

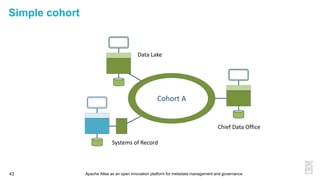

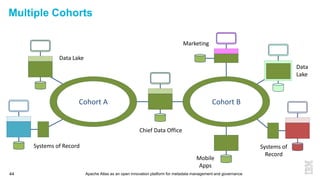

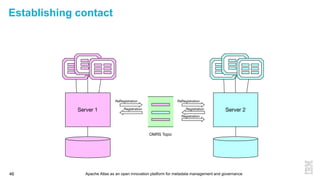

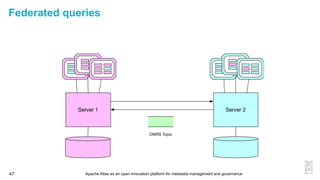

- The vision is for an open ecosystem where metadata is shared and federated across repositories from different vendors and tools.
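
To make the idea of a type-agnostic metadata store more concrete, here is a minimal in-memory sketch. It is not Apache Atlas code and does not use any Atlas API: `TypeDef`, `TypeAgnosticStore`, and the `table` and `report` types are hypothetical names chosen for illustration. The only point it tries to show is that types are registered as data at runtime, so the store itself never needs to know in advance what kinds of metadata a tool will contribute.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class TypeDef:
    """A metadata type described as data rather than as a hard-coded class."""
    name: str
    attributes: set[str]


@dataclass
class TypeAgnosticStore:
    """Toy in-memory store. All names are hypothetical, not Atlas APIs."""
    types: dict[str, TypeDef] = field(default_factory=dict)
    entities: list[dict] = field(default_factory=list)

    def register_type(self, type_def: TypeDef) -> None:
        # Any tool can contribute a new type at runtime.
        self.types[type_def.name] = type_def

    def add_entity(self, type_name: str, **attrs: str) -> dict:
        type_def = self.types[type_name]  # raises KeyError for an unknown type
        unknown = set(attrs) - type_def.attributes
        if unknown:
            raise ValueError(
                f"unknown attributes for {type_name}: {sorted(unknown)}"
            )
        entity = {"type": type_name, **attrs}
        self.entities.append(entity)
        return entity

    def find(self, type_name: str) -> list[dict]:
        # Return every stored entity of the given type.
        return [e for e in self.entities if e["type"] == type_name]


store = TypeAgnosticStore()
store.register_type(TypeDef("table", {"name", "owner"}))
store.register_type(TypeDef("report", {"name", "source_table"}))
store.add_entity("table", name="sales", owner="finance")
store.add_entity("report", name="q1_summary", source_table="sales")
```

In this sketch, two different tools could each register their own type and still query the same store through `find`, which is the property the "type-agnostic" bullet describes. A real deployment would add persistence, relationships between entities, and access control, none of which are modeled here.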