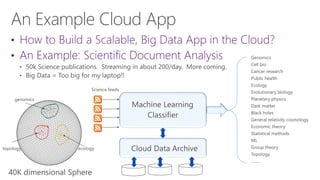

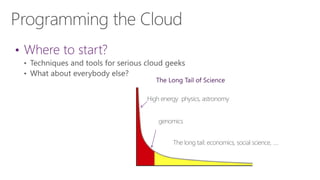

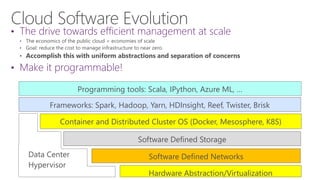

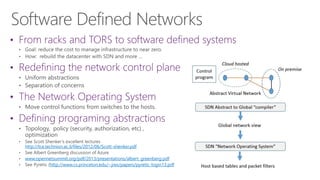

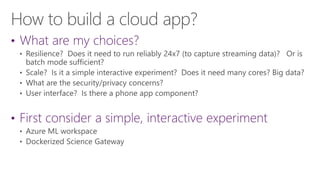

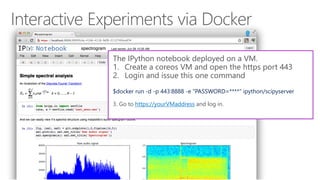

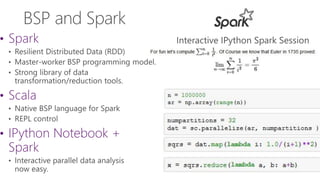

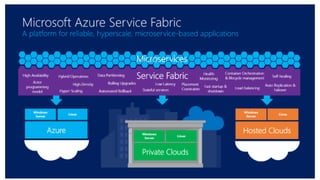

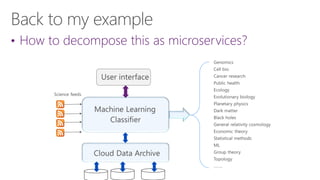

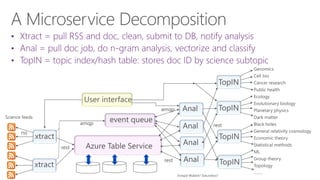

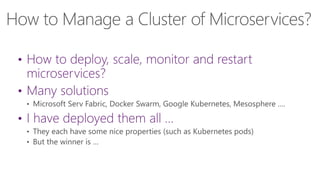

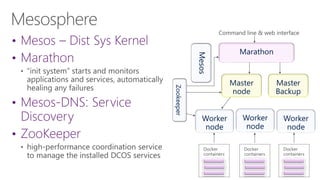

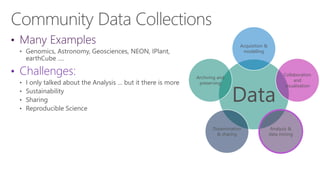

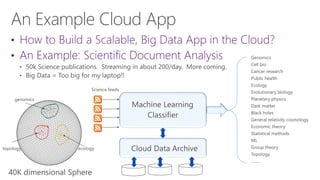

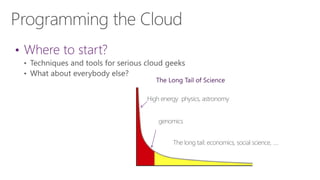

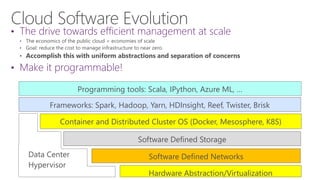

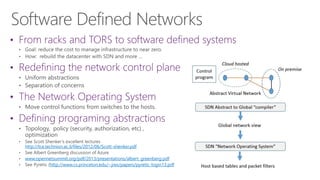

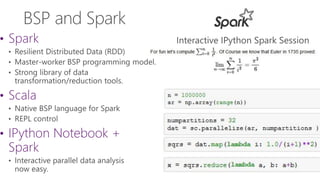

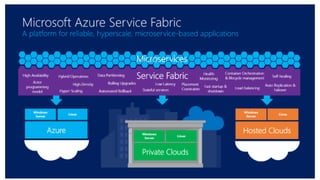

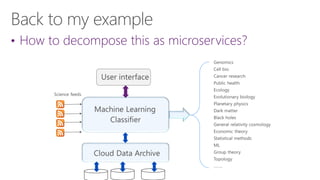

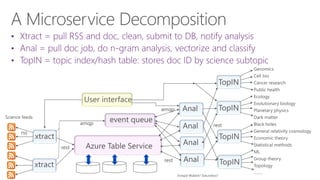

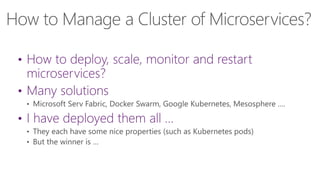

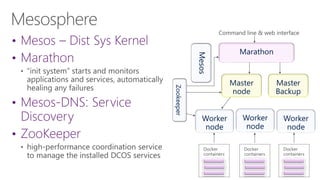

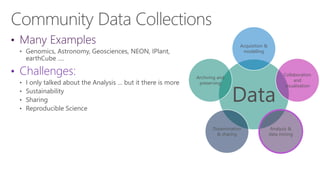

The document discusses the evolution of research fields into data science domains, emphasizing the integration of theory, experiment, and simulation using large datasets. It highlights various programming tools, frameworks, and the challenges of sustainability, sharing, and reproducibility in data science. Furthermore, it outlines the importance of collaboration in data acquisition, modeling, and analysis.