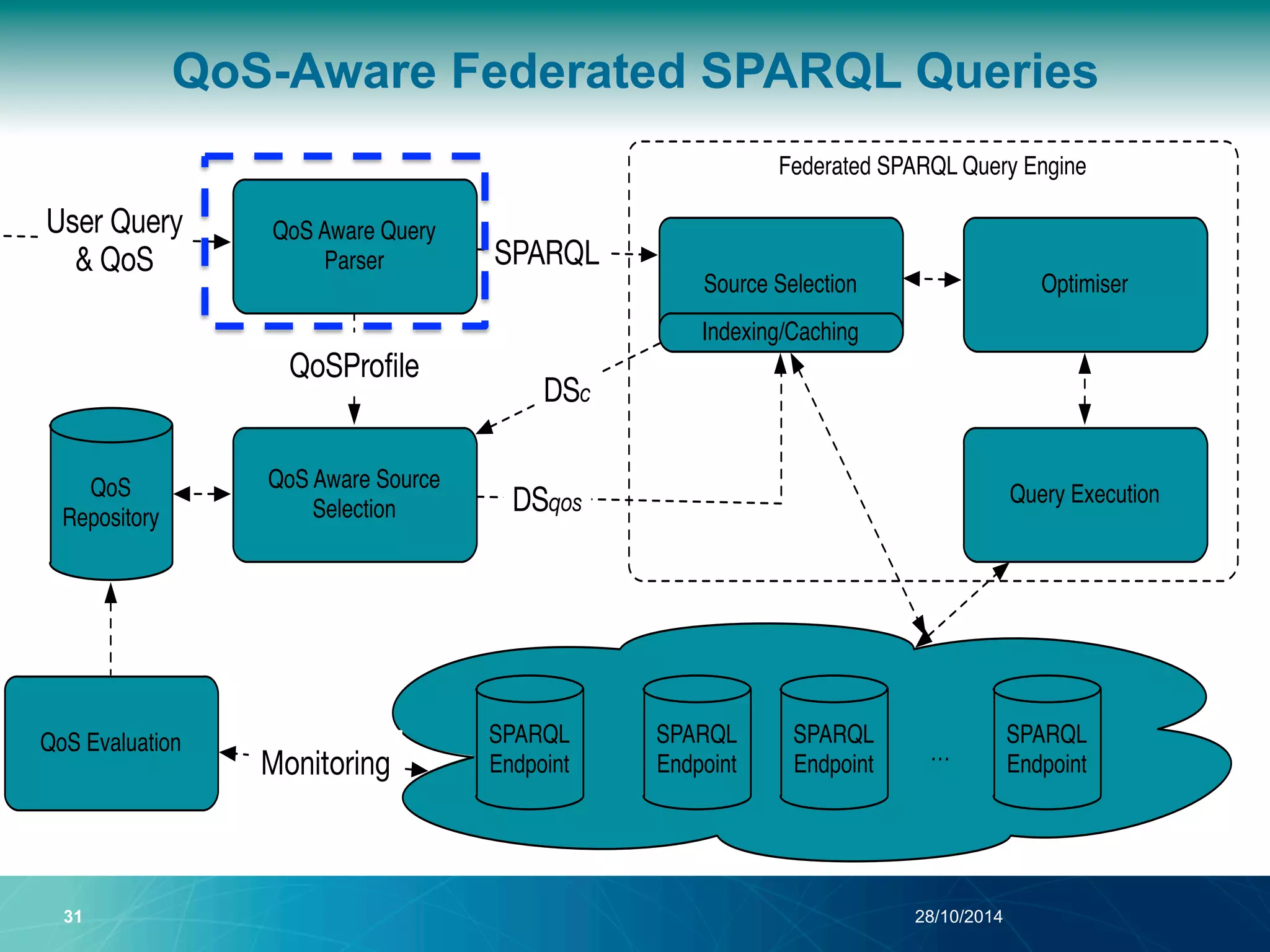

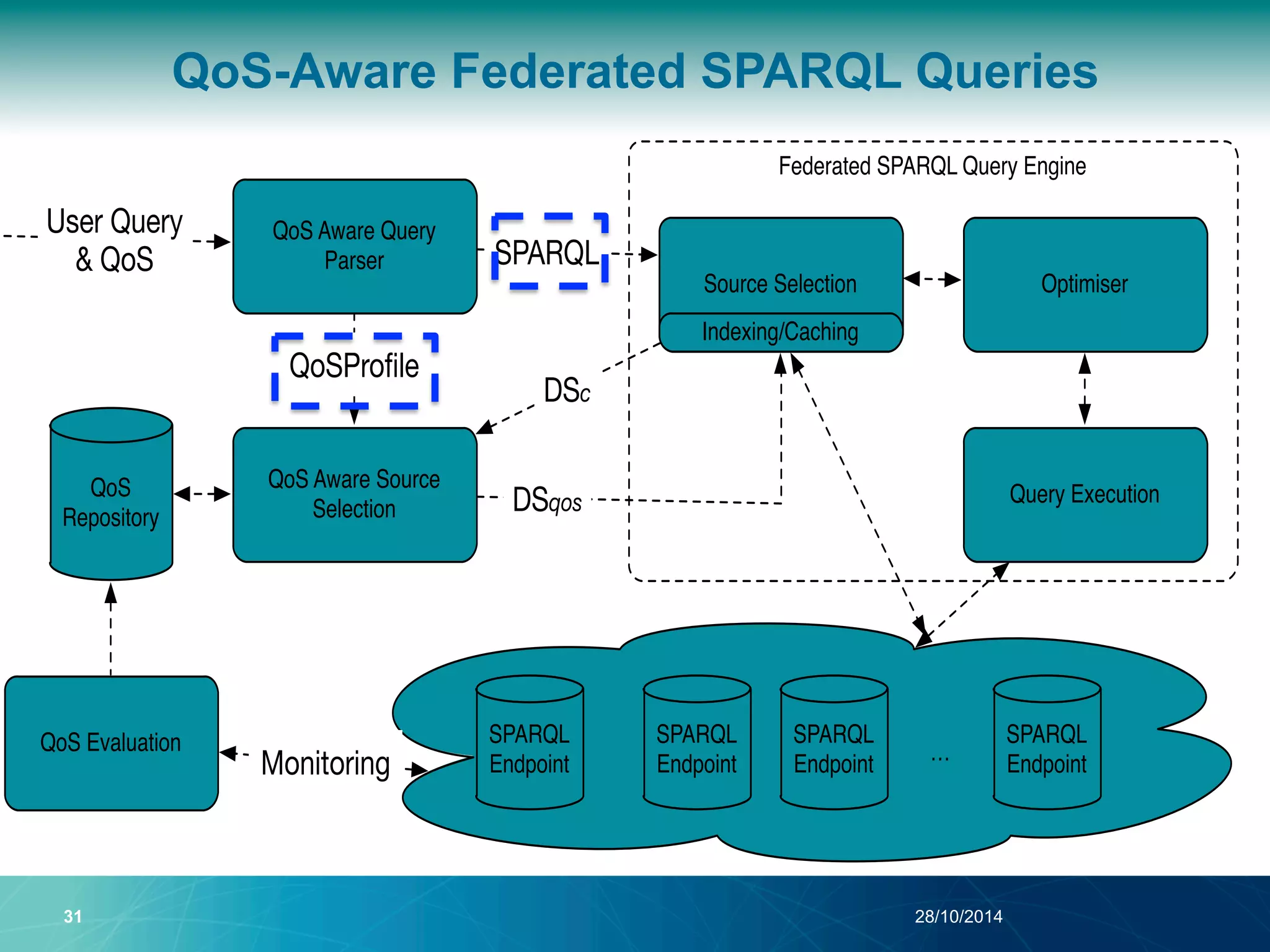

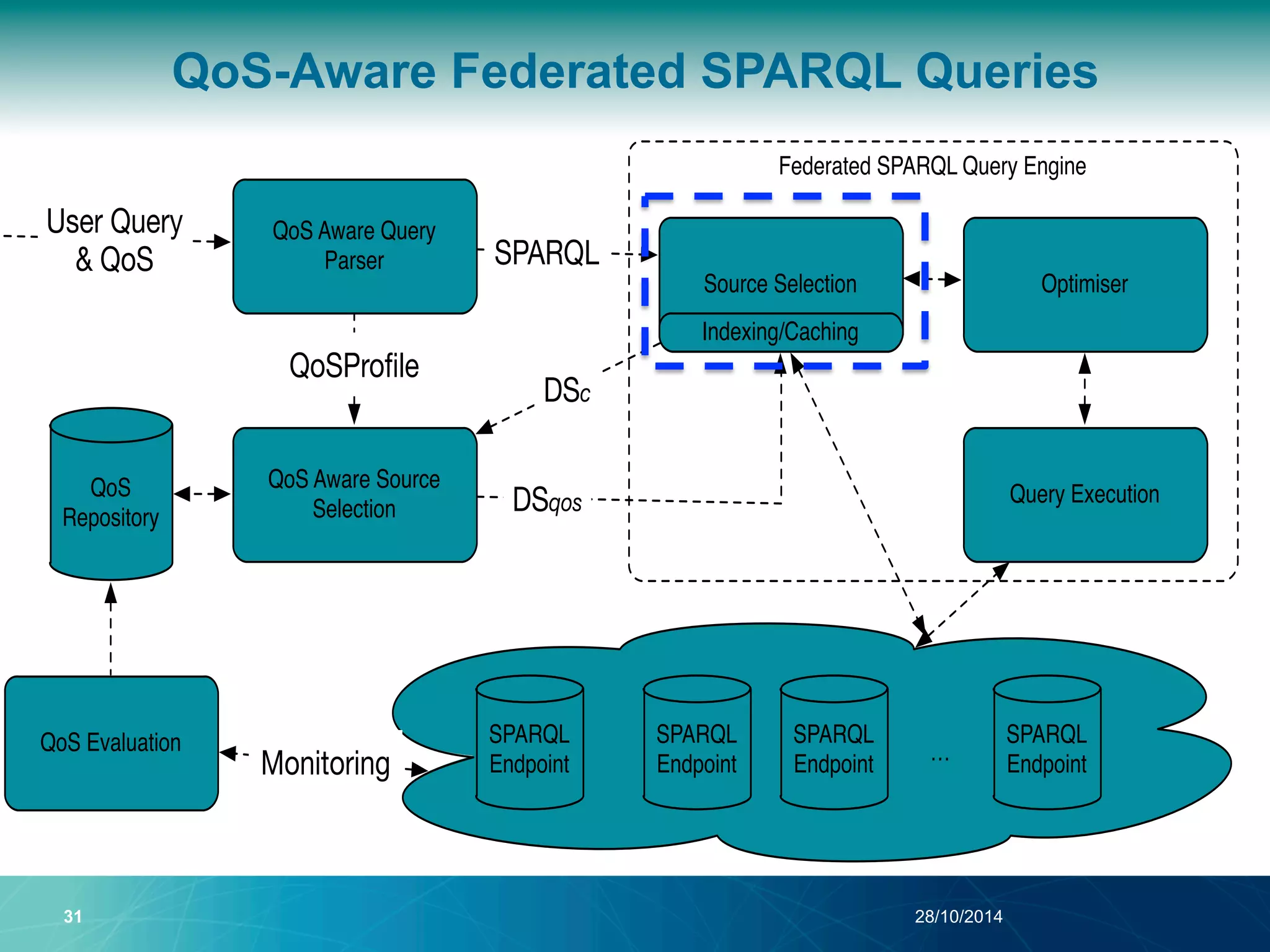

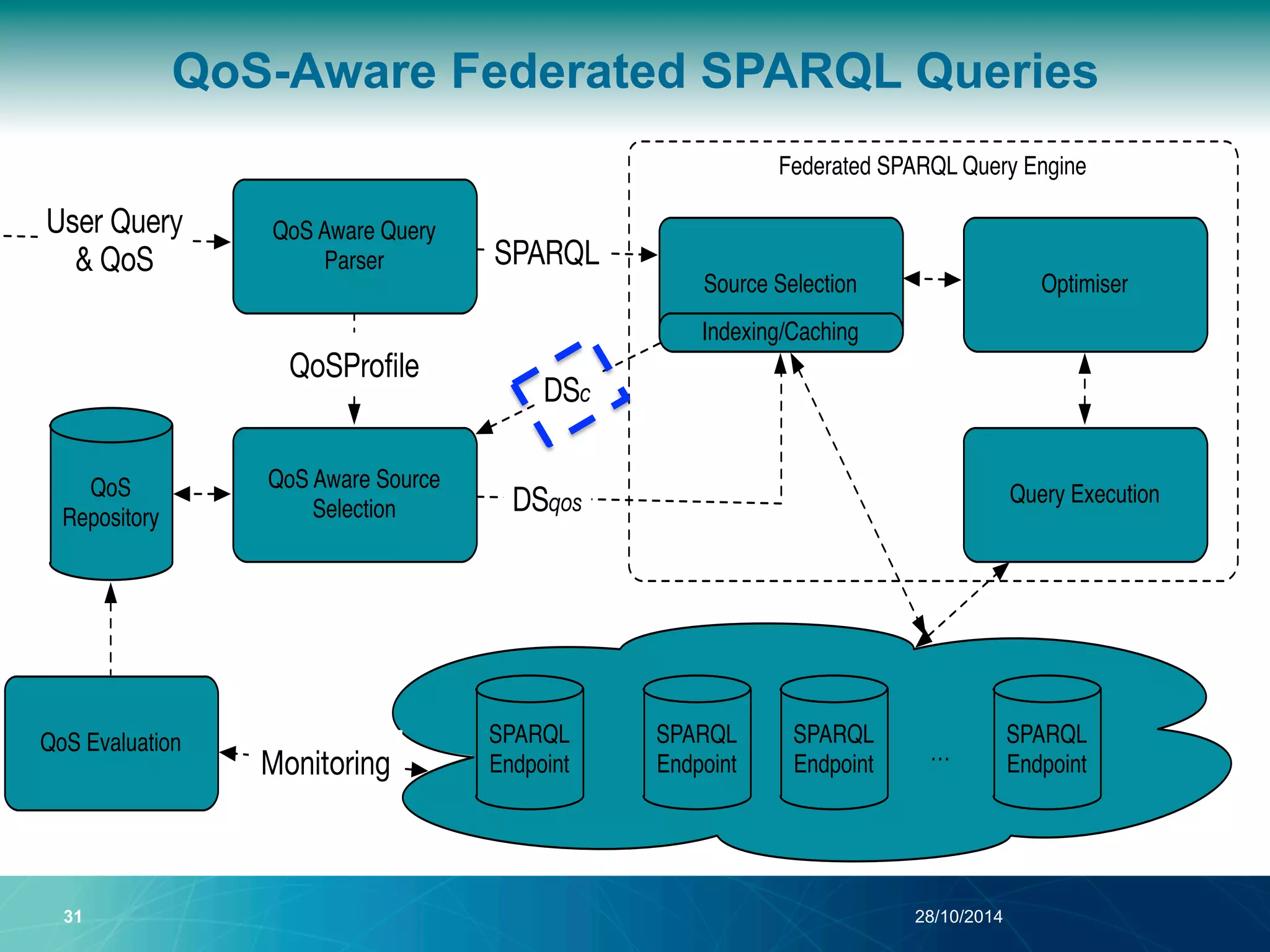

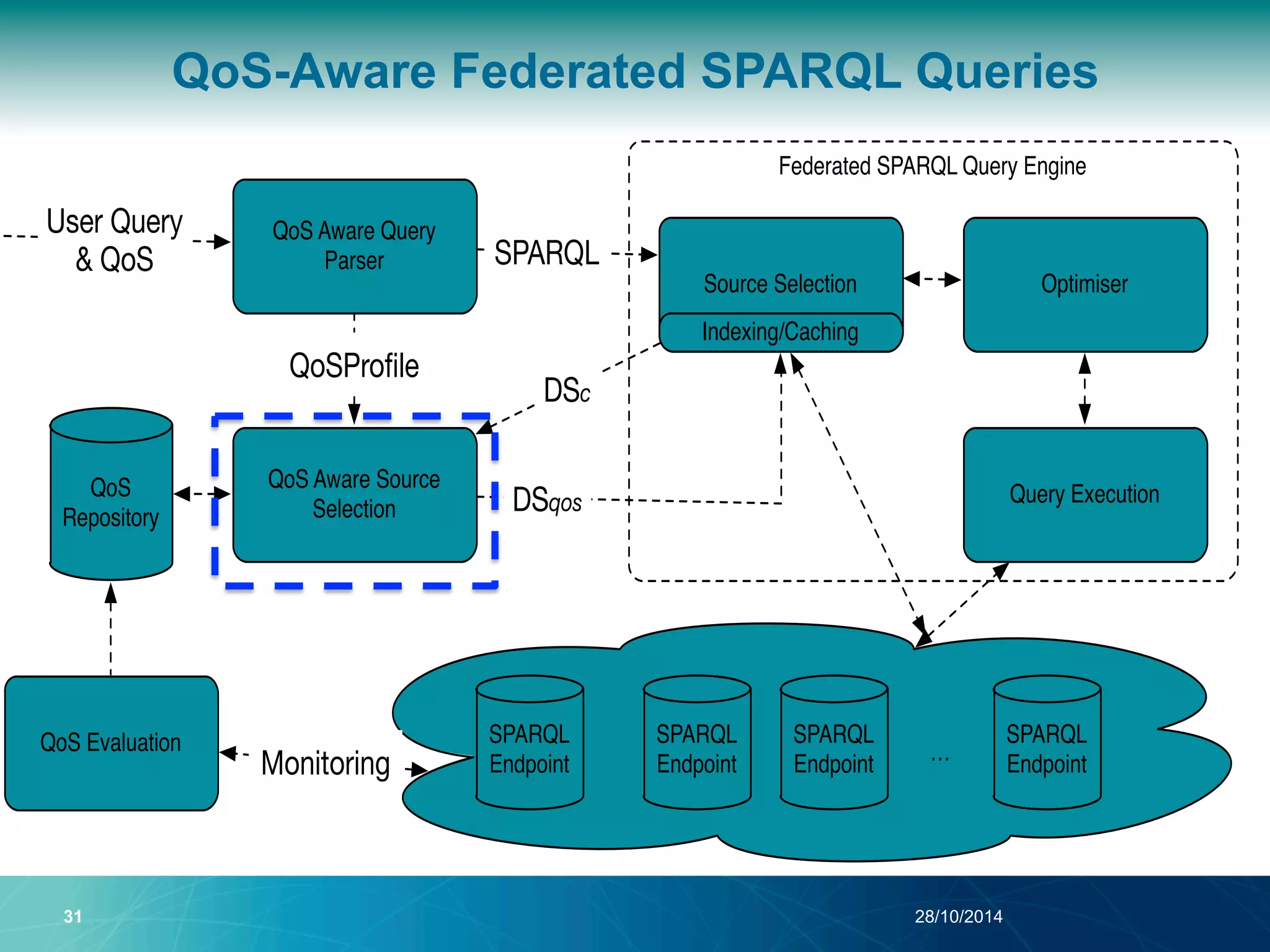

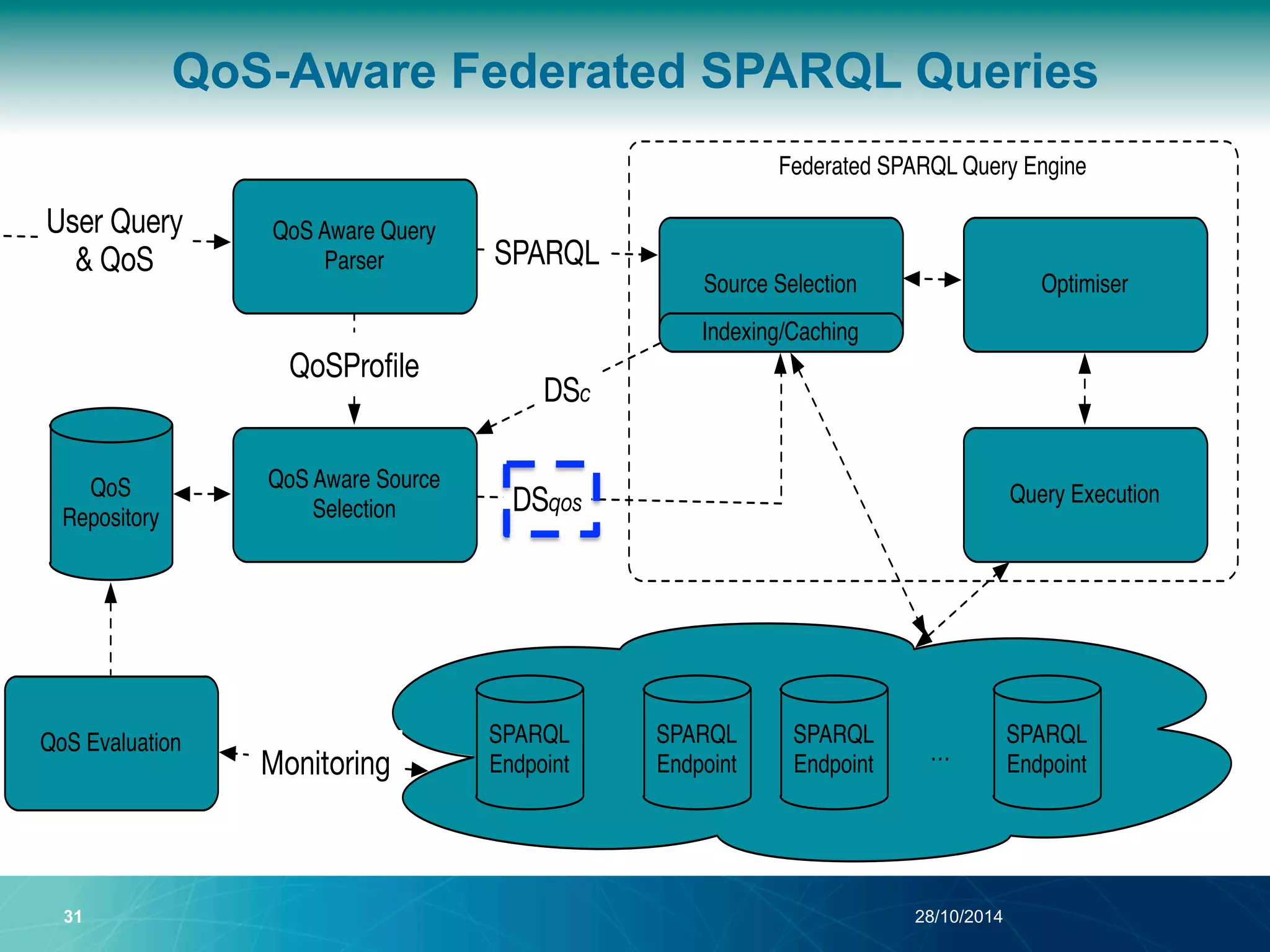

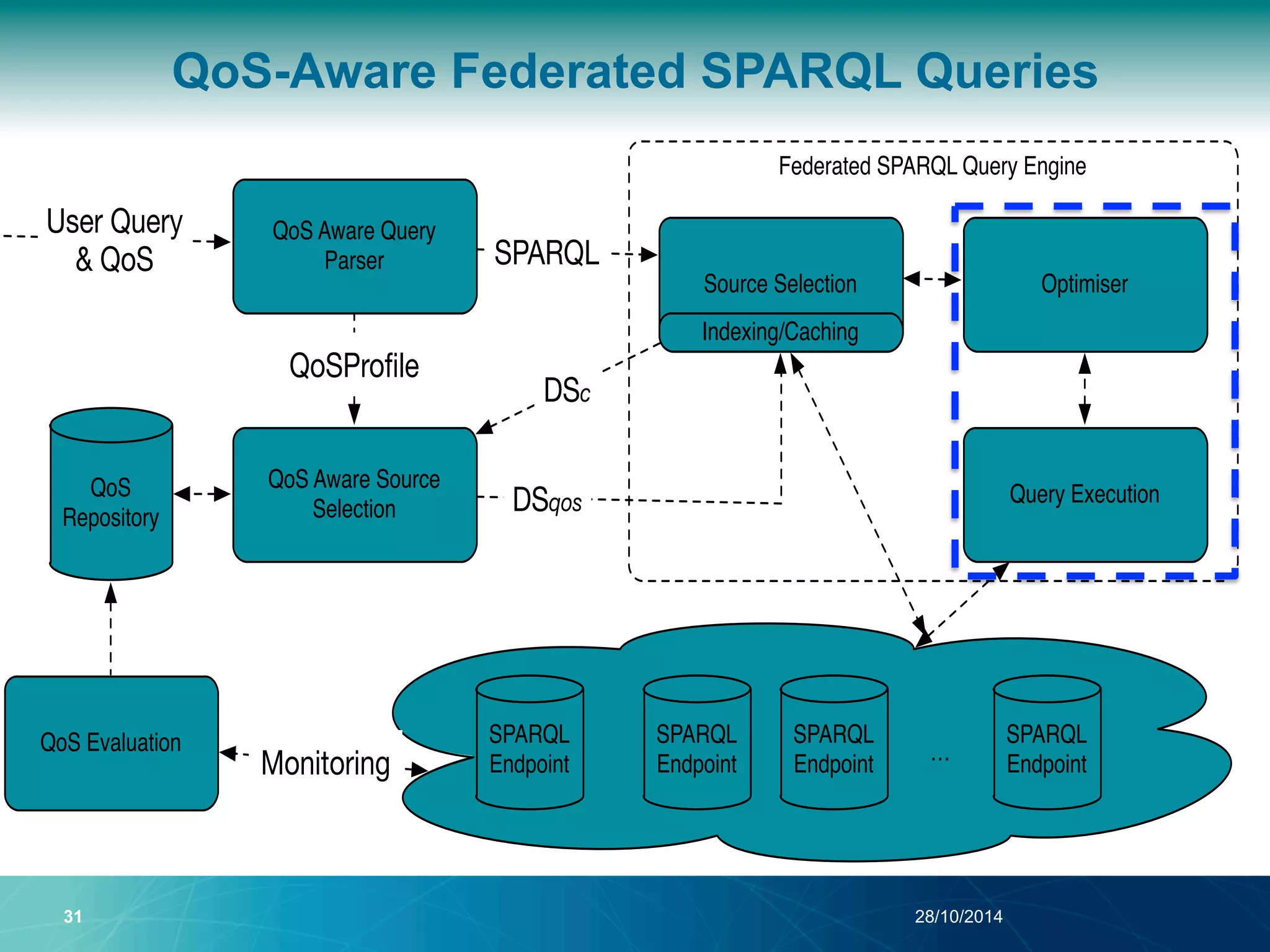

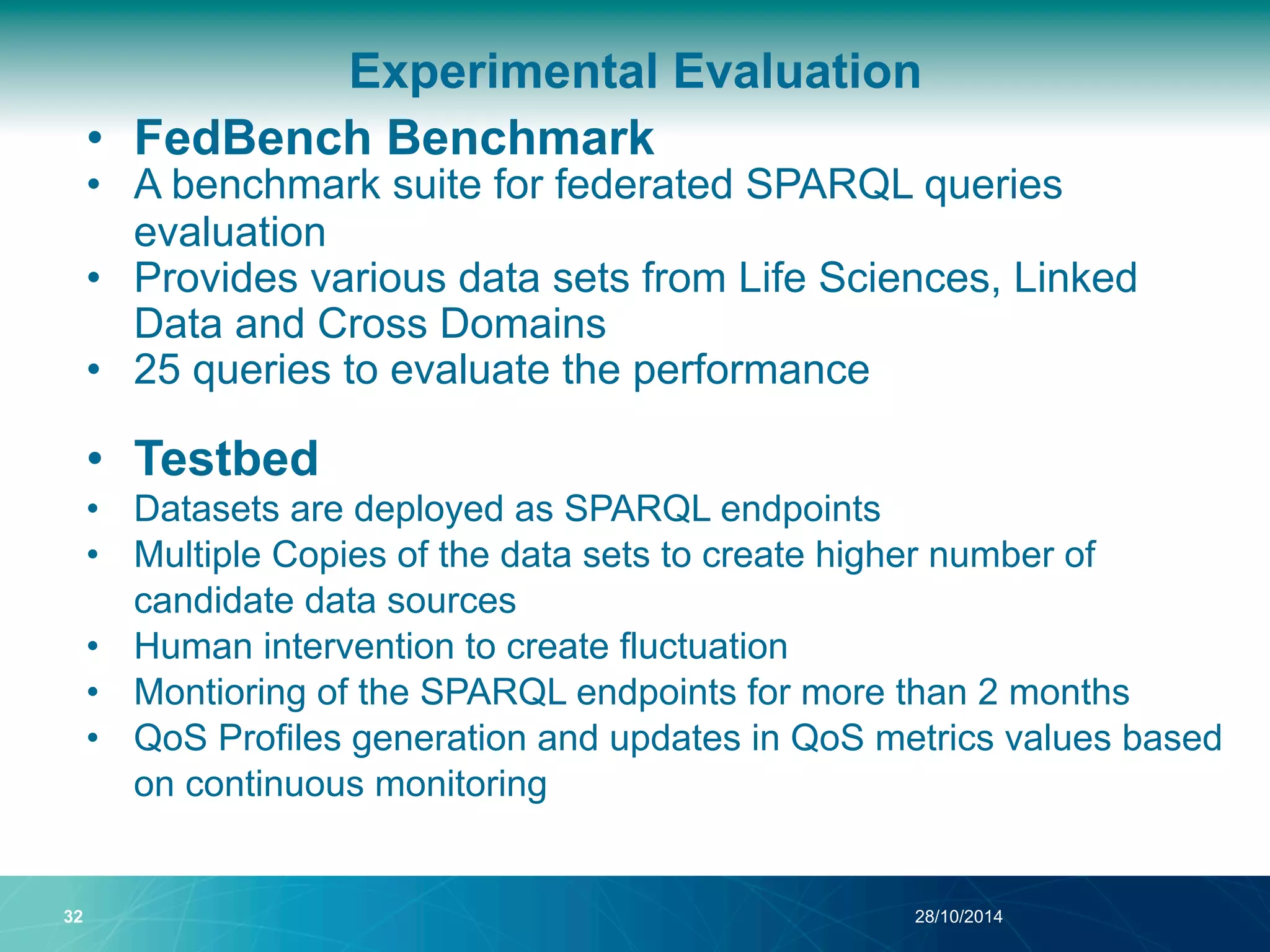

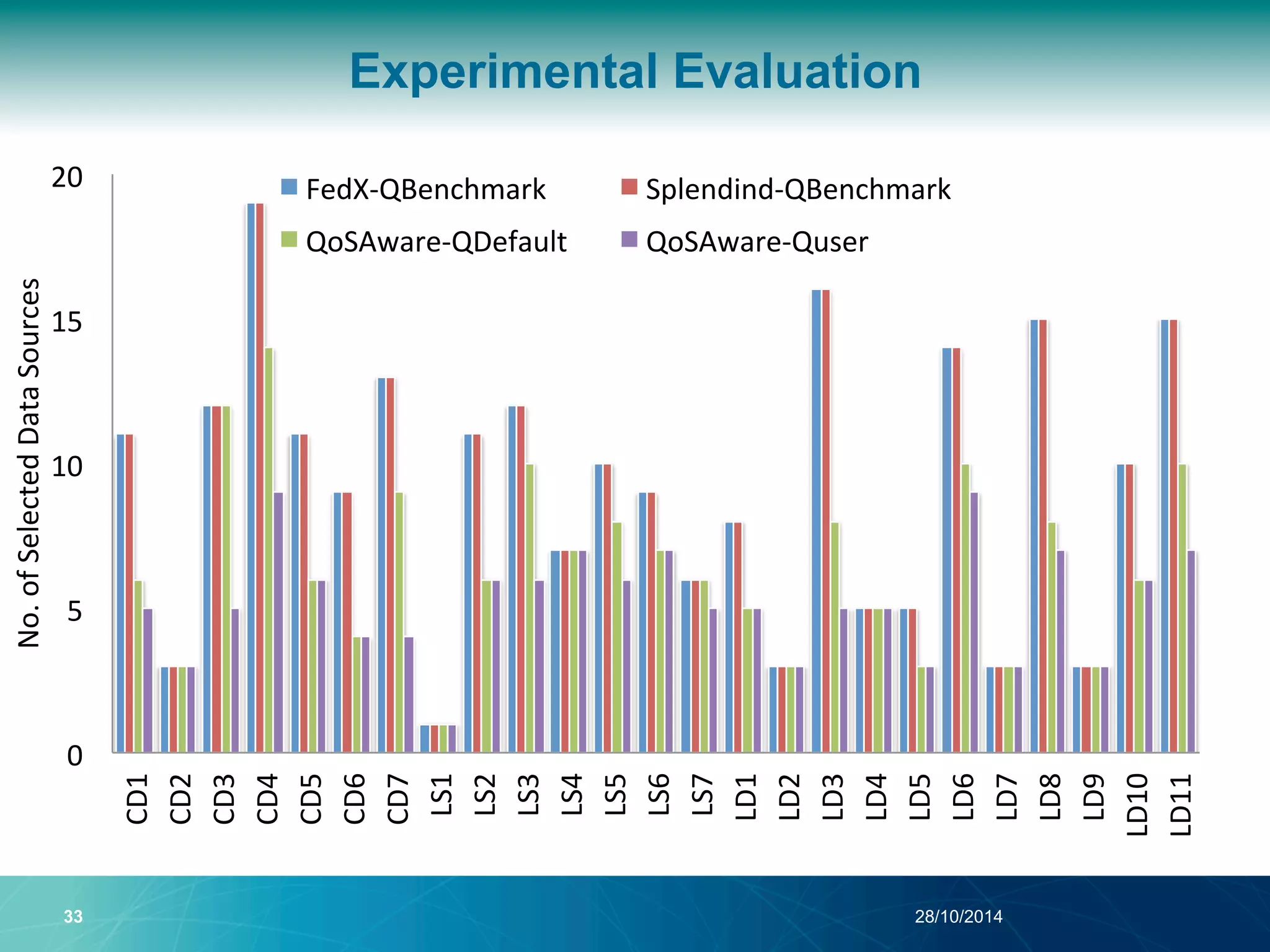

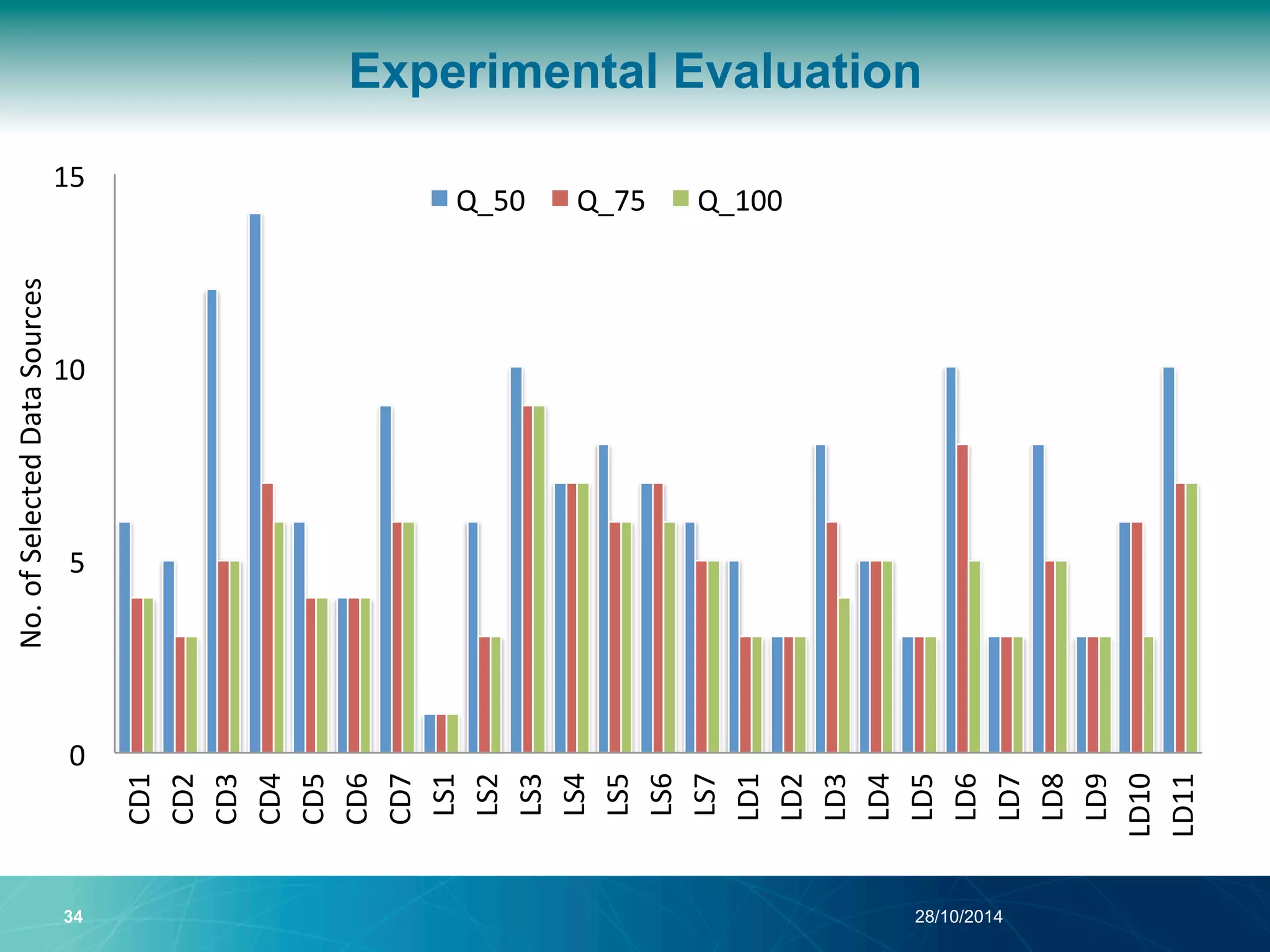

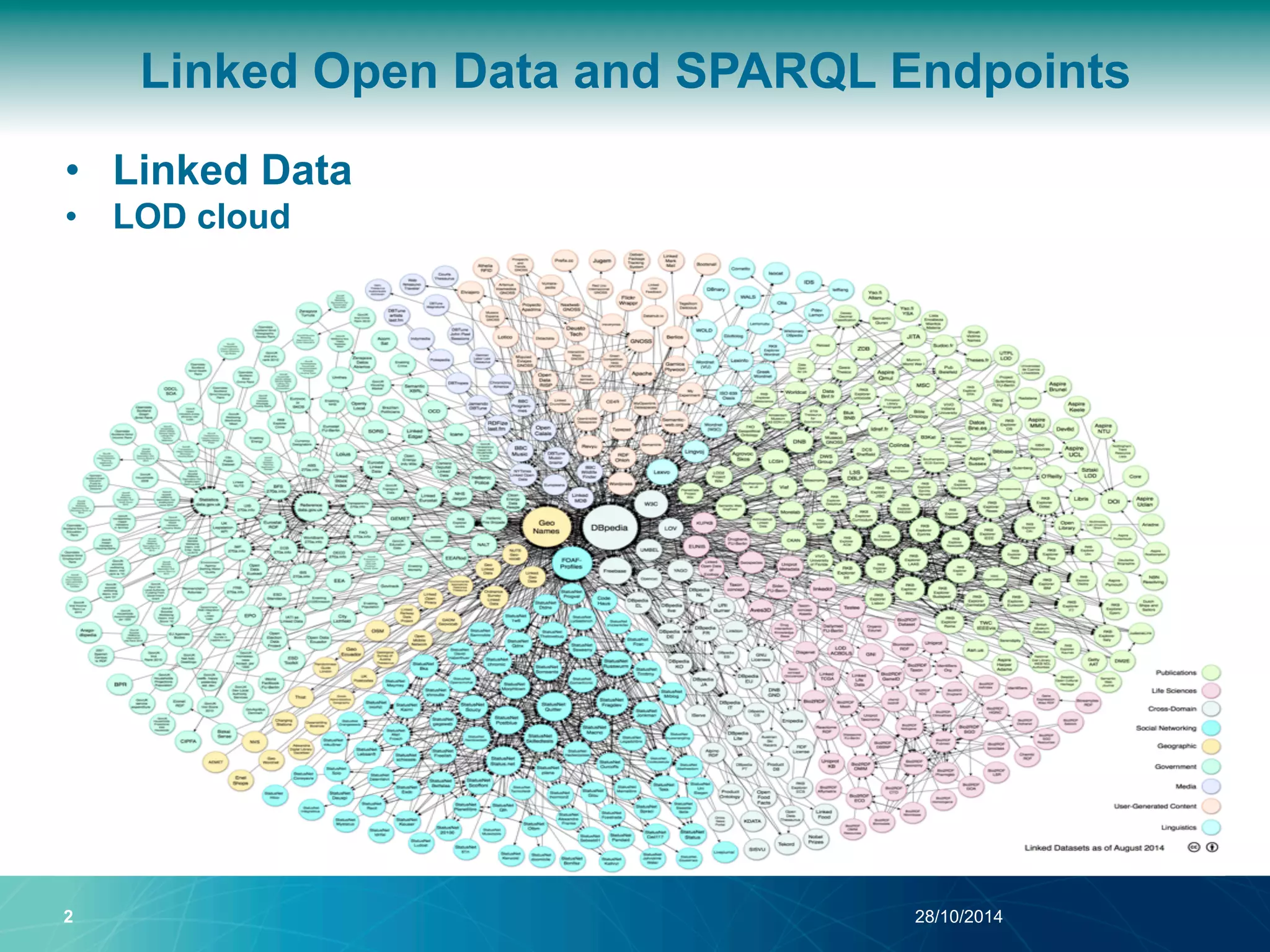

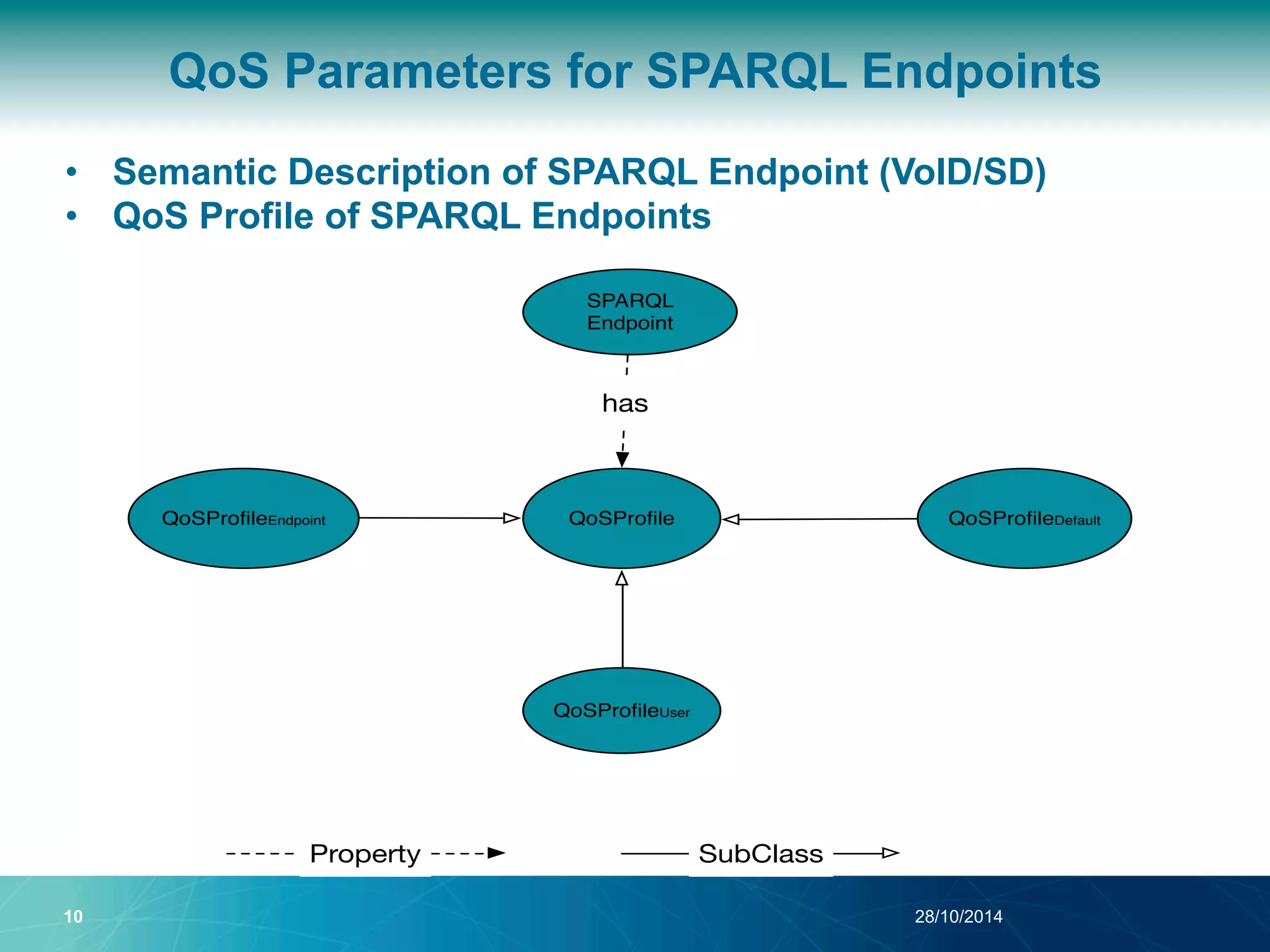

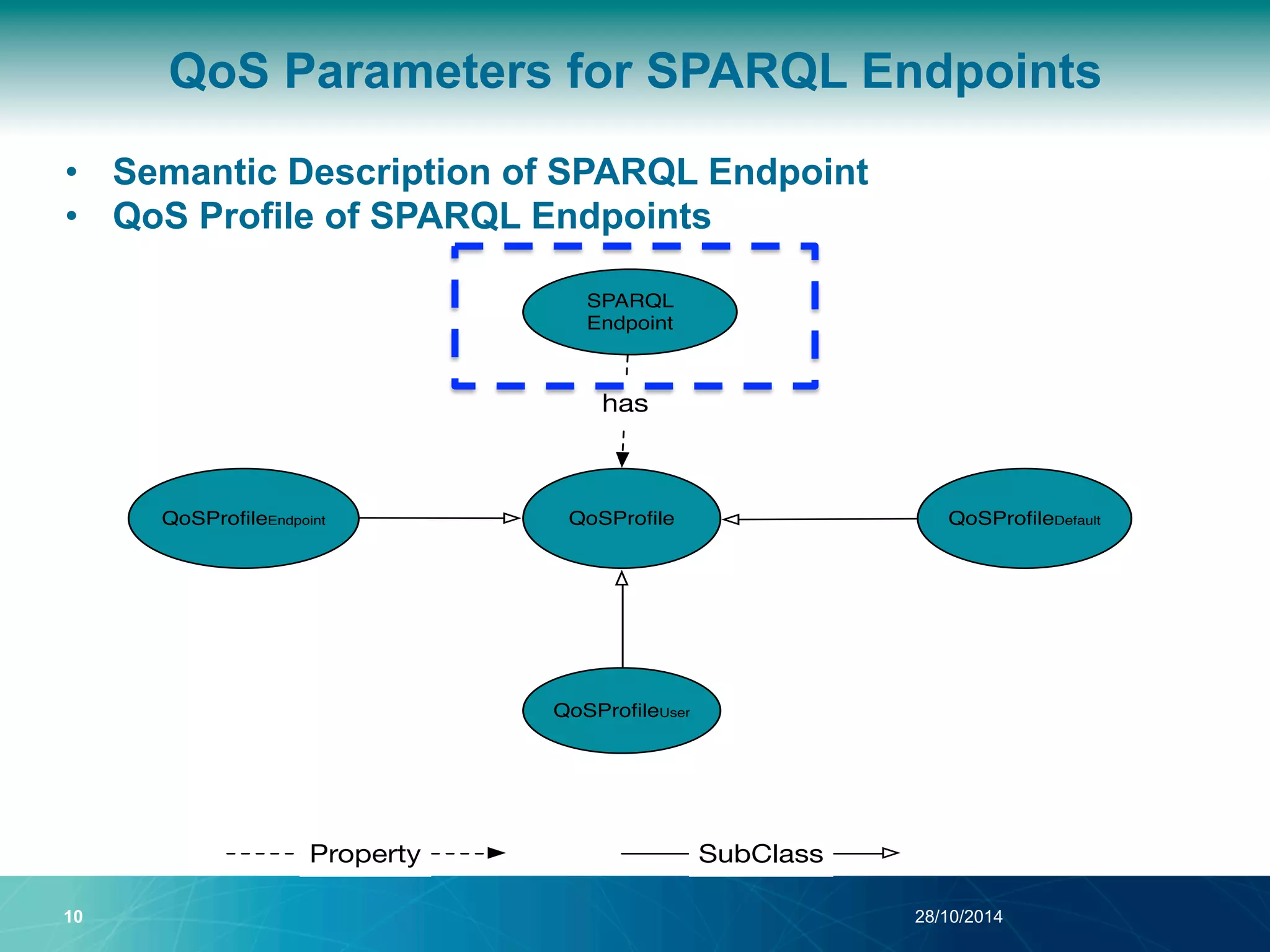

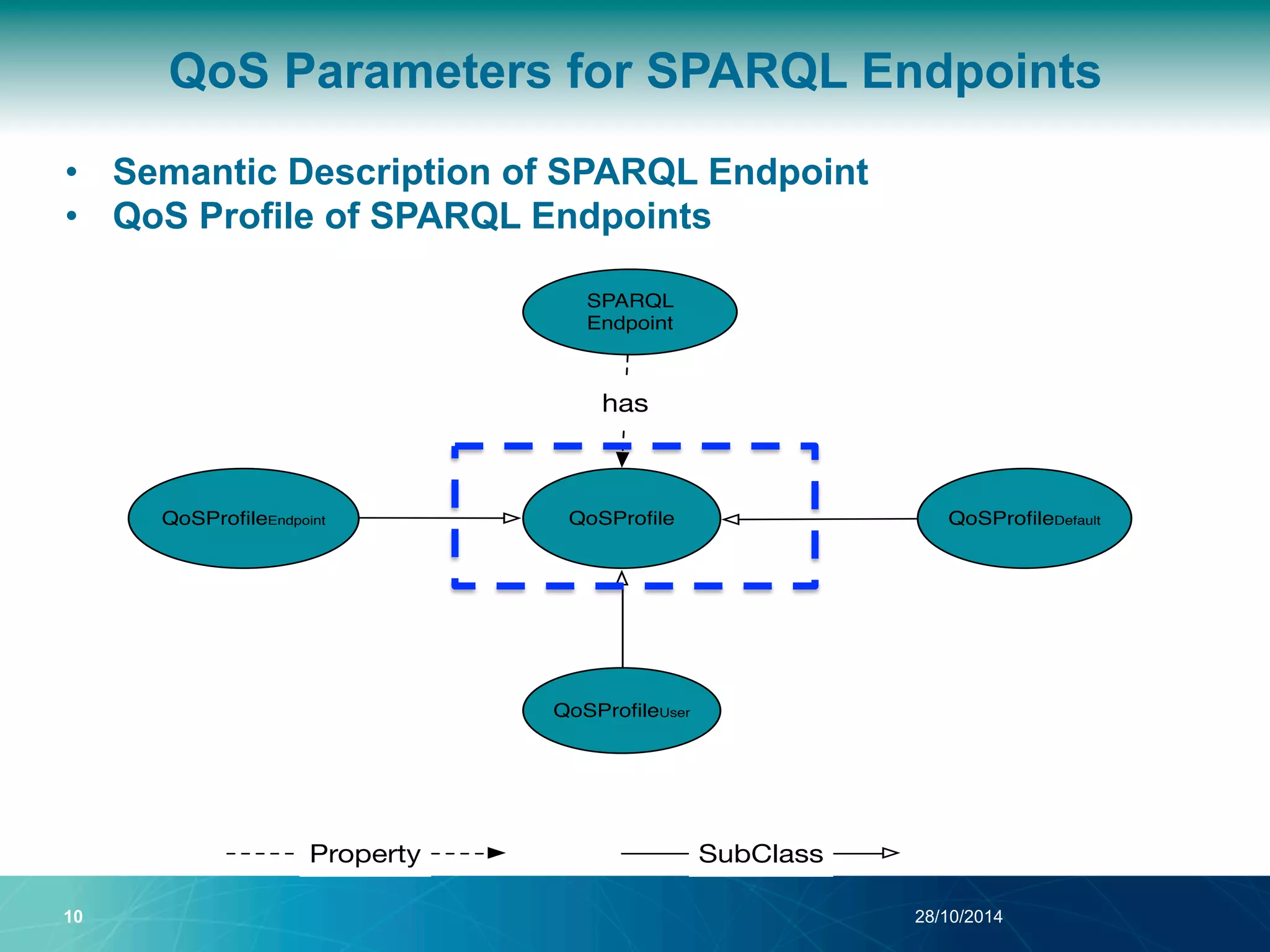

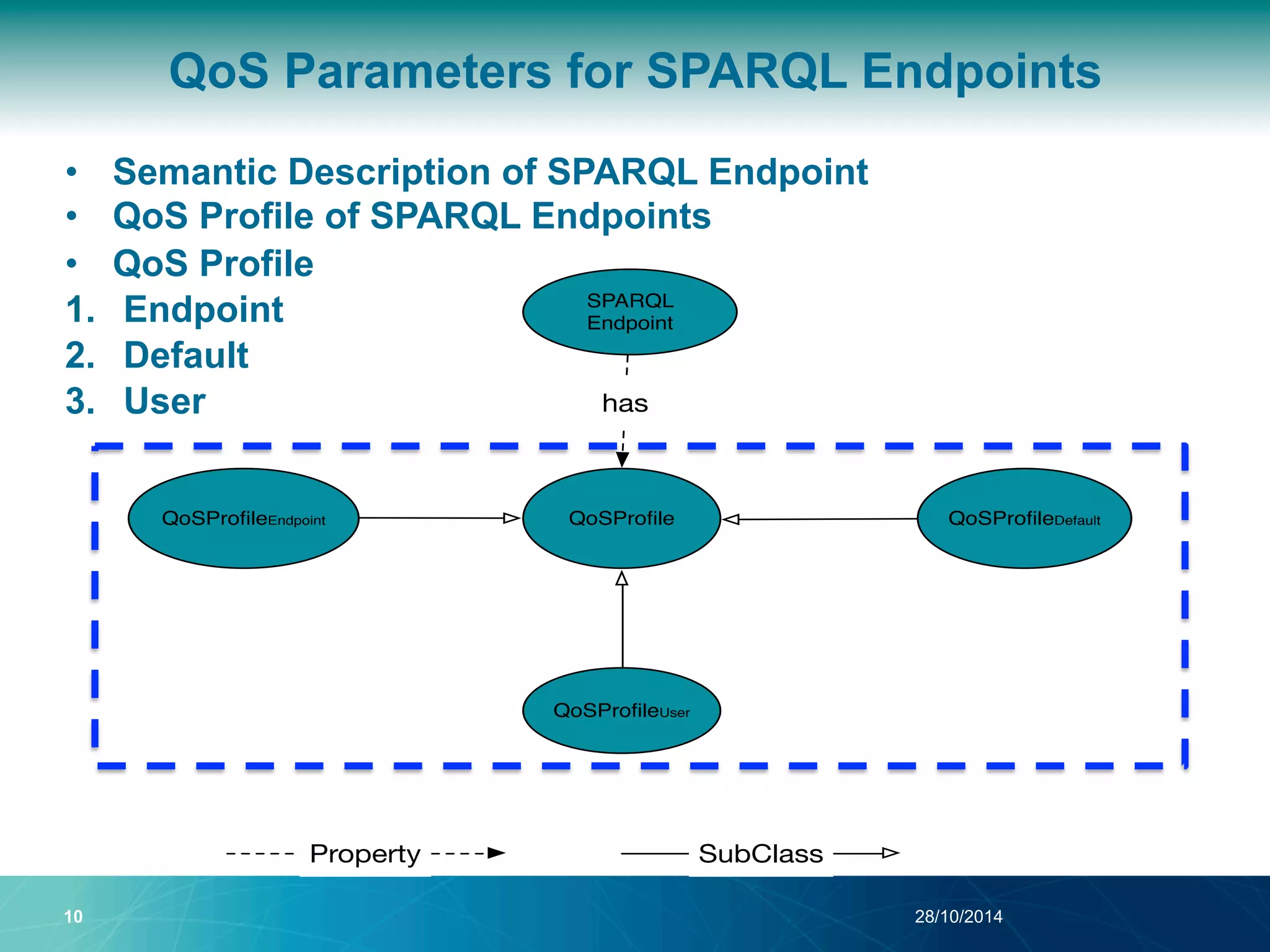

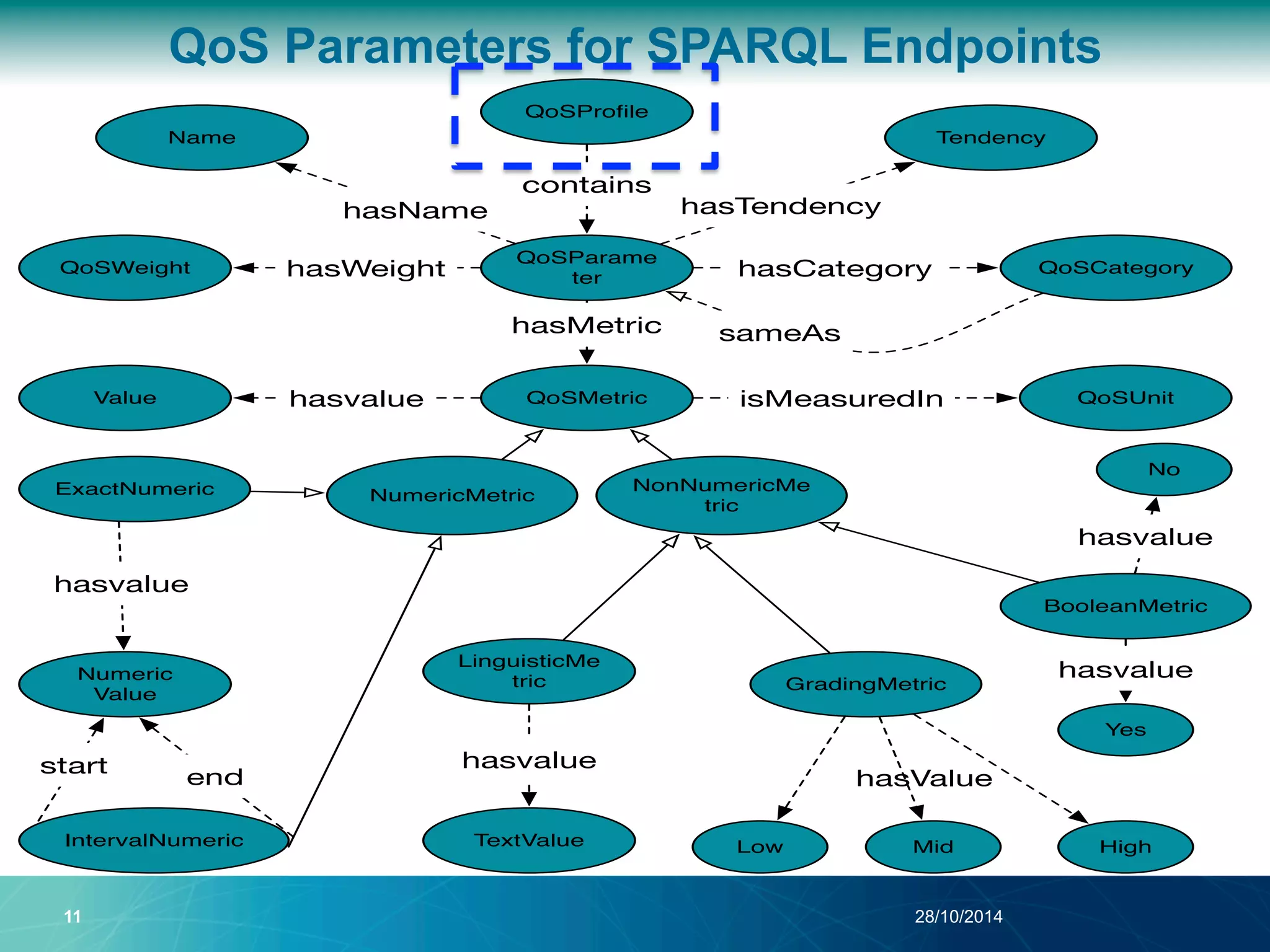

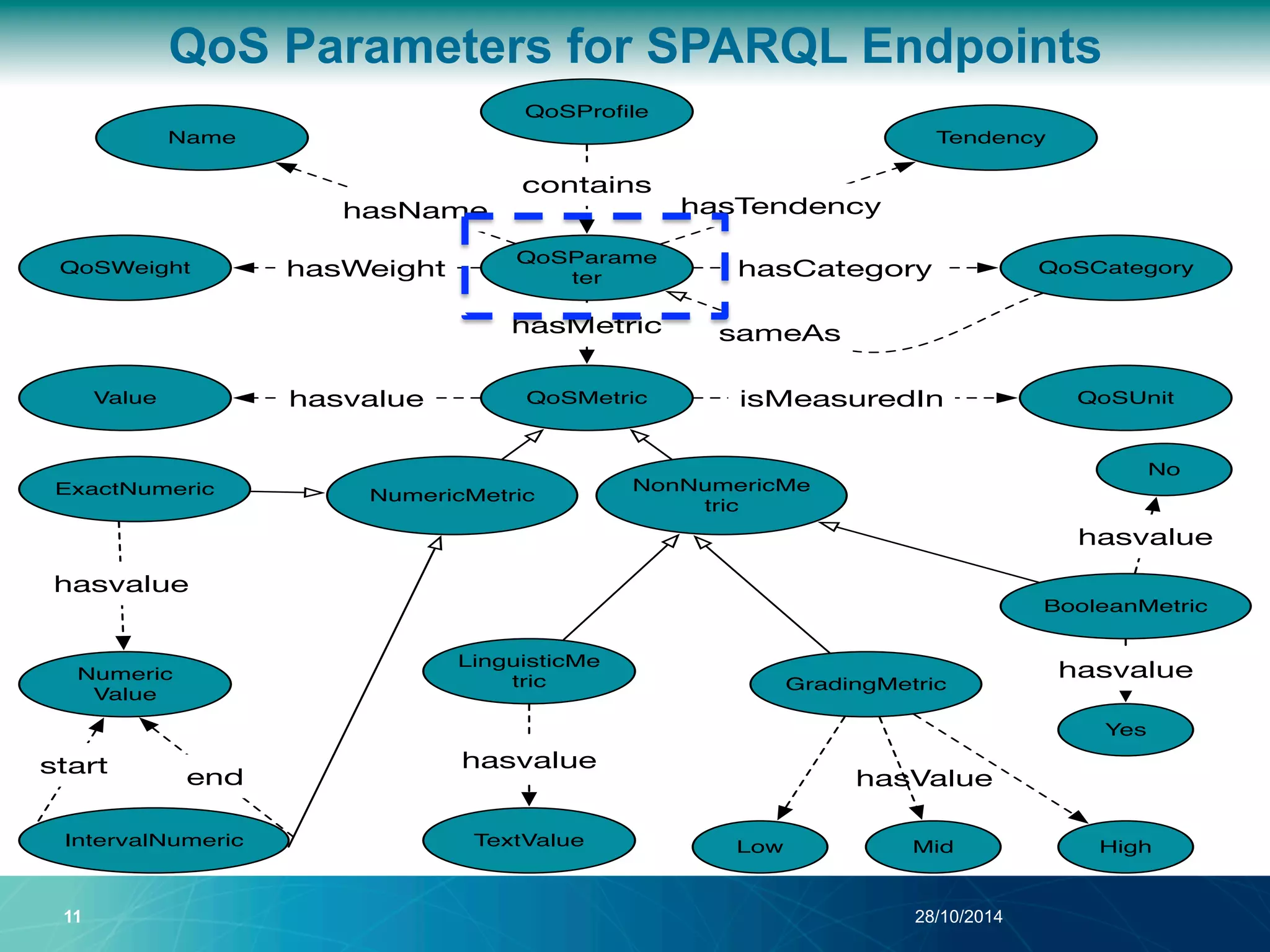

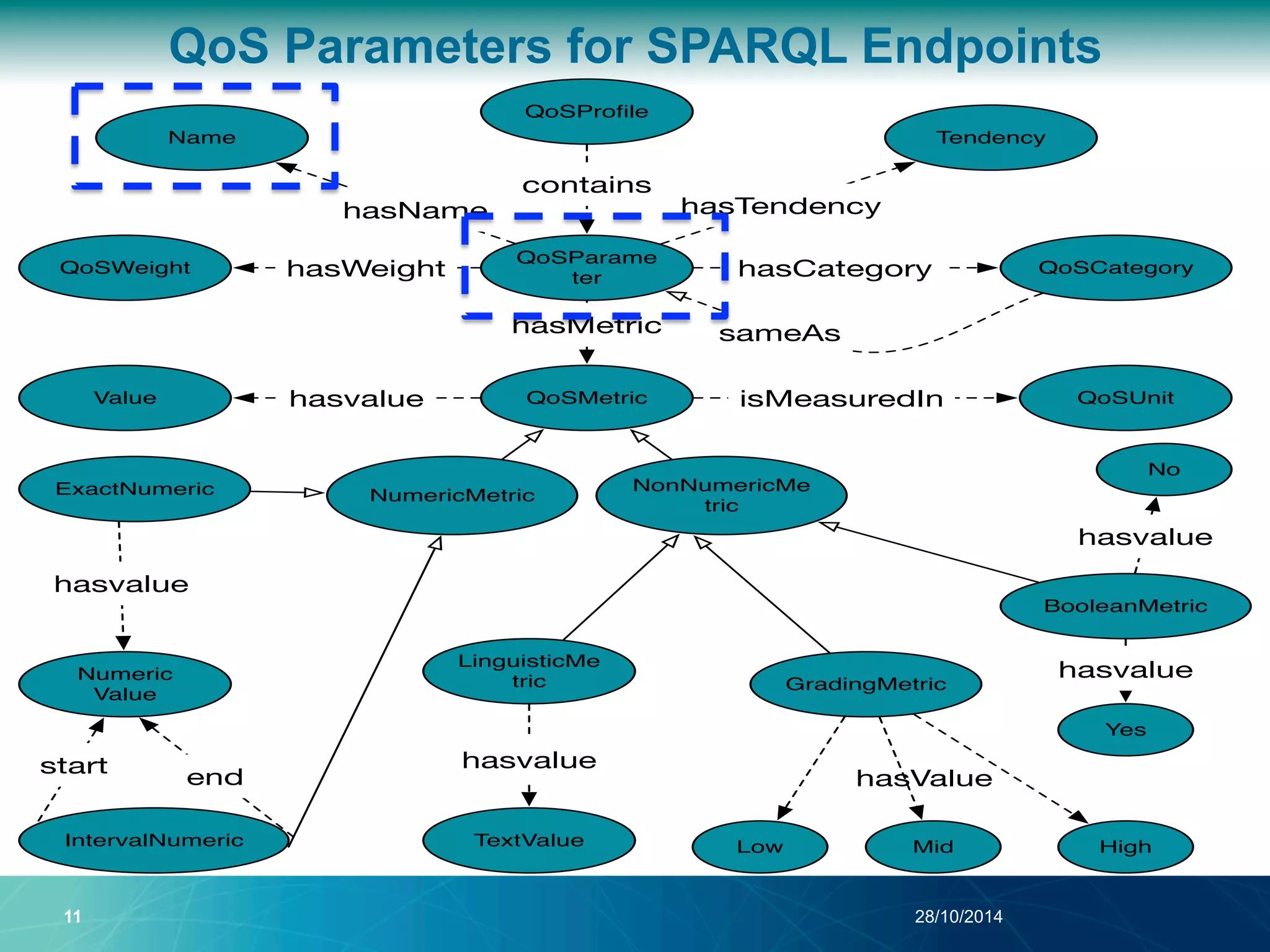

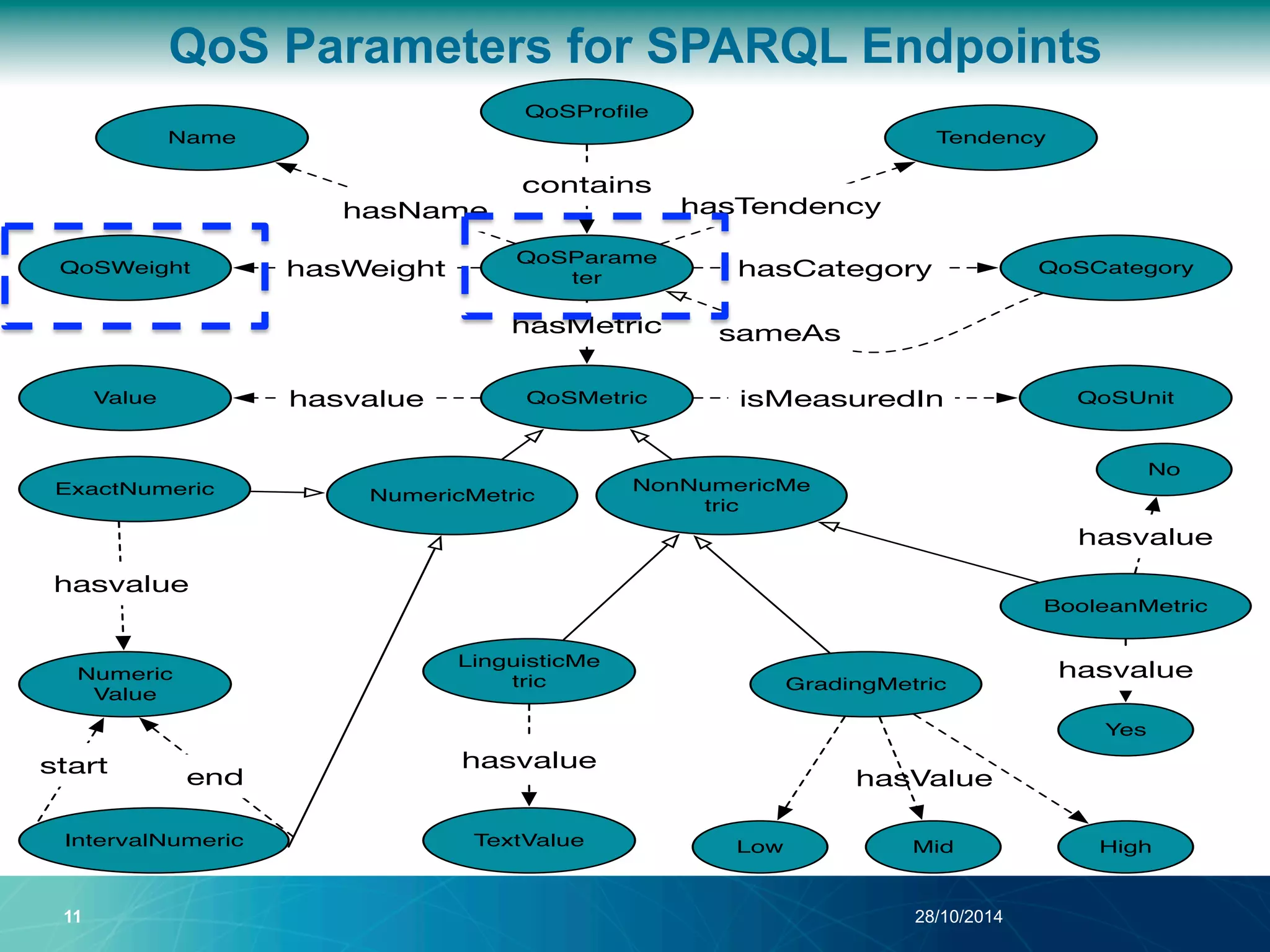

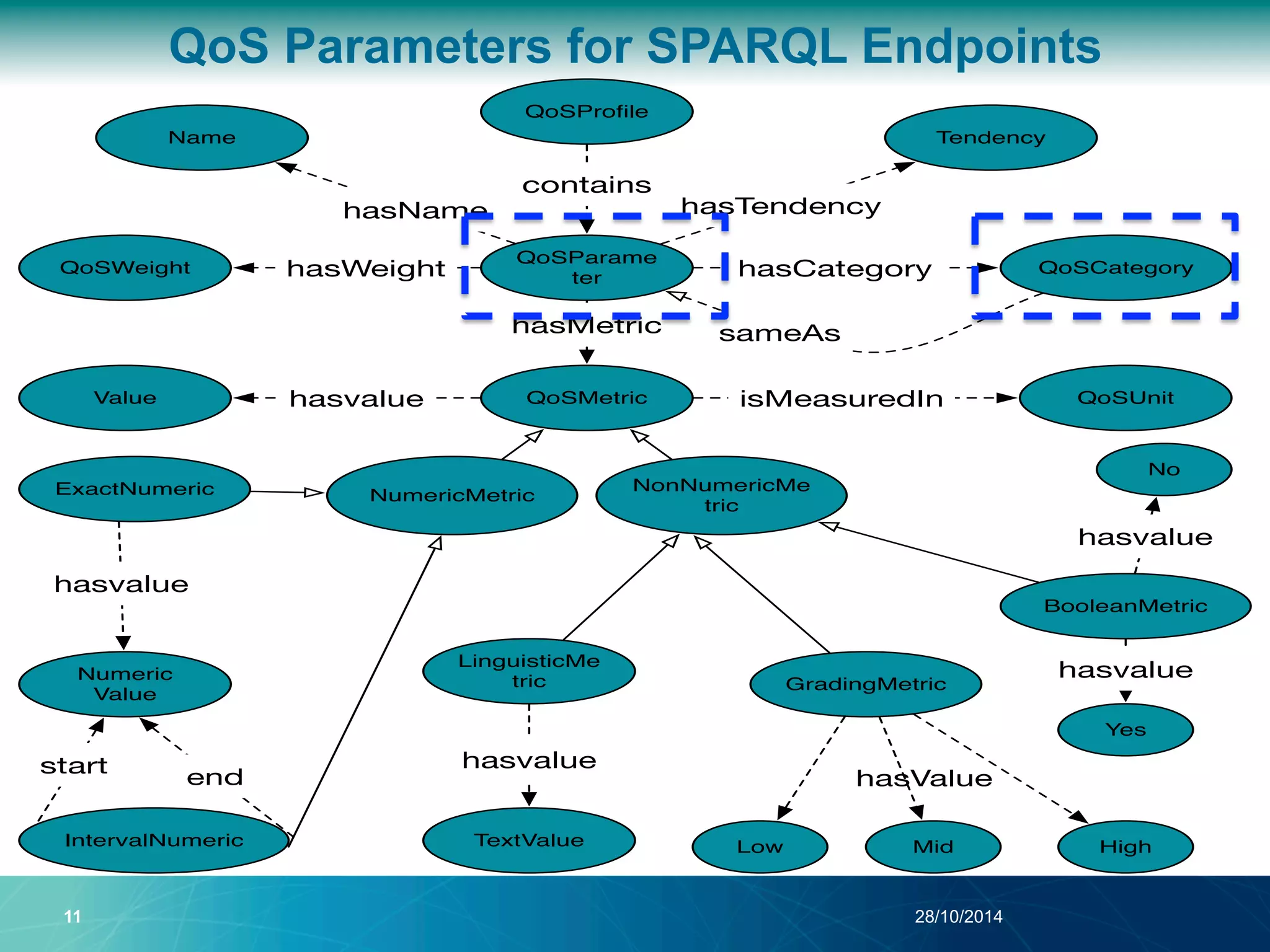

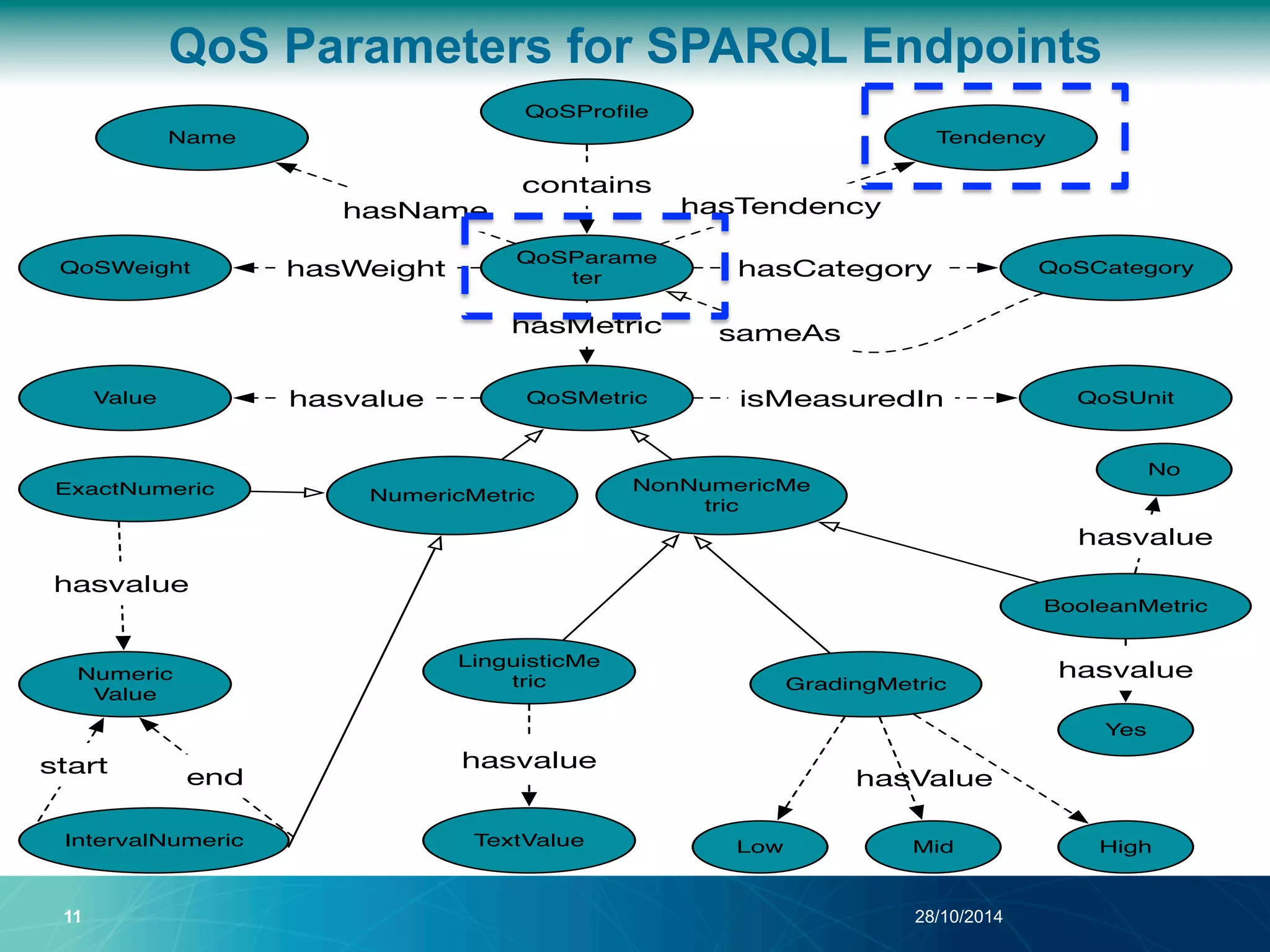

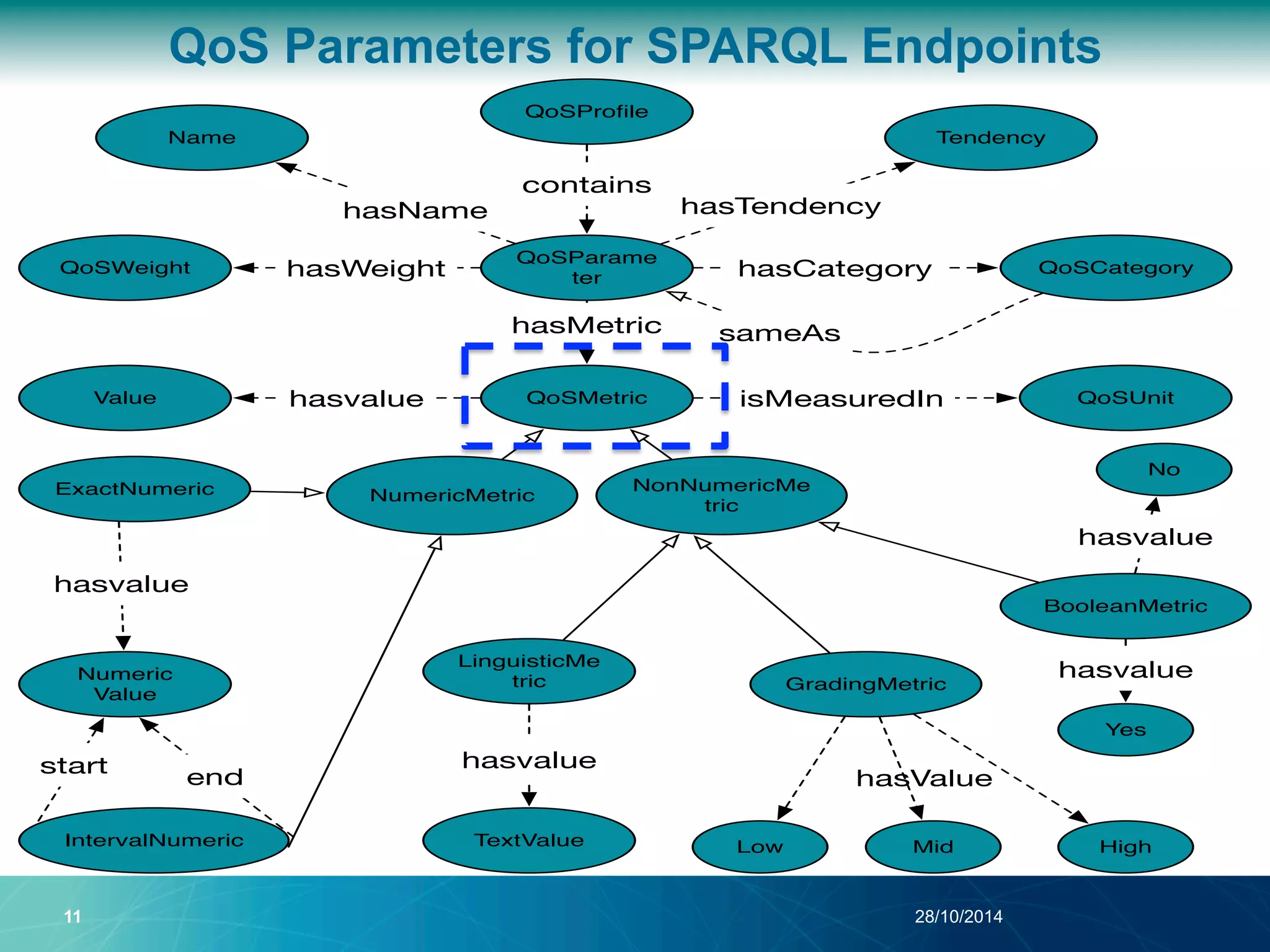

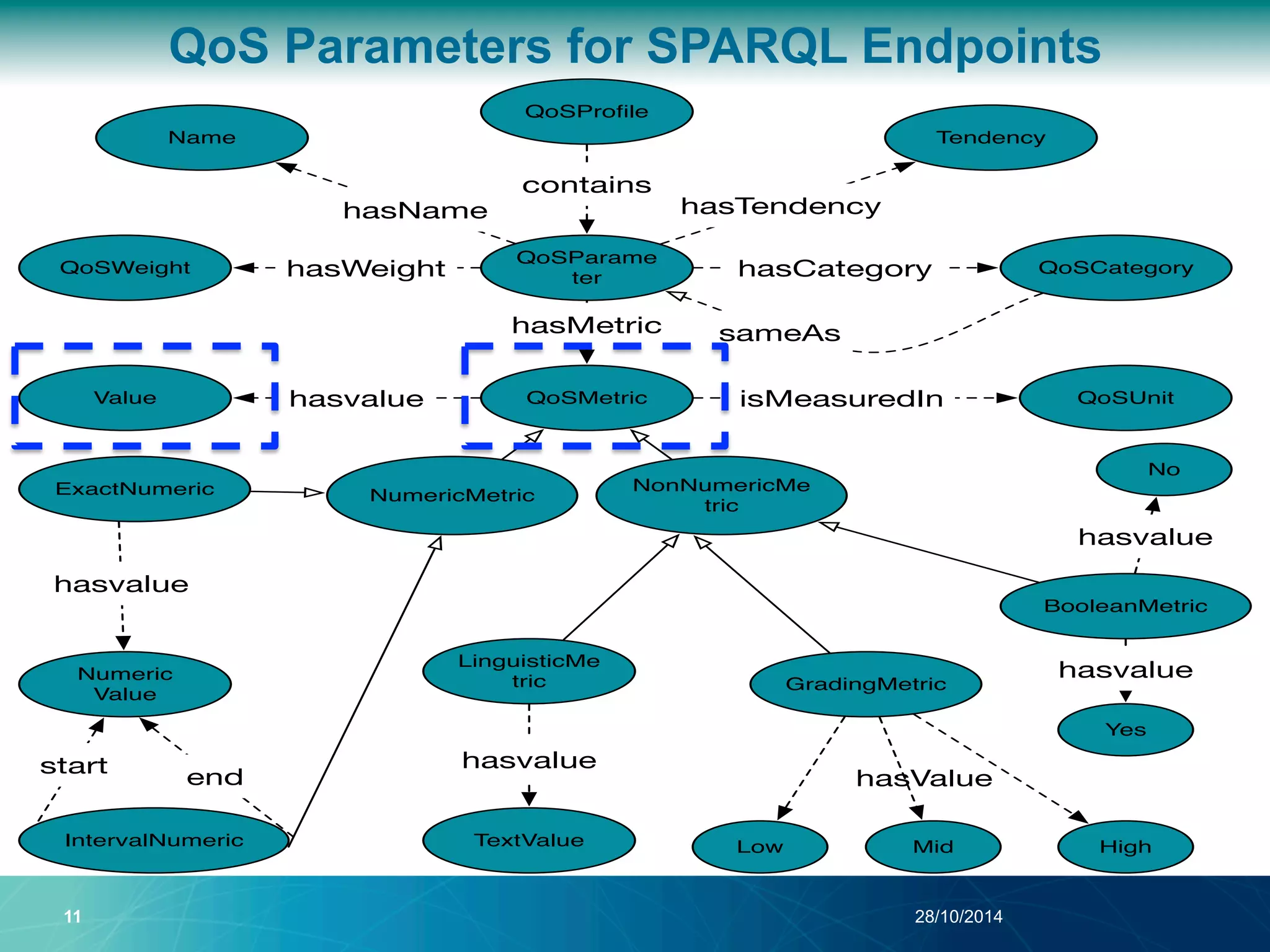

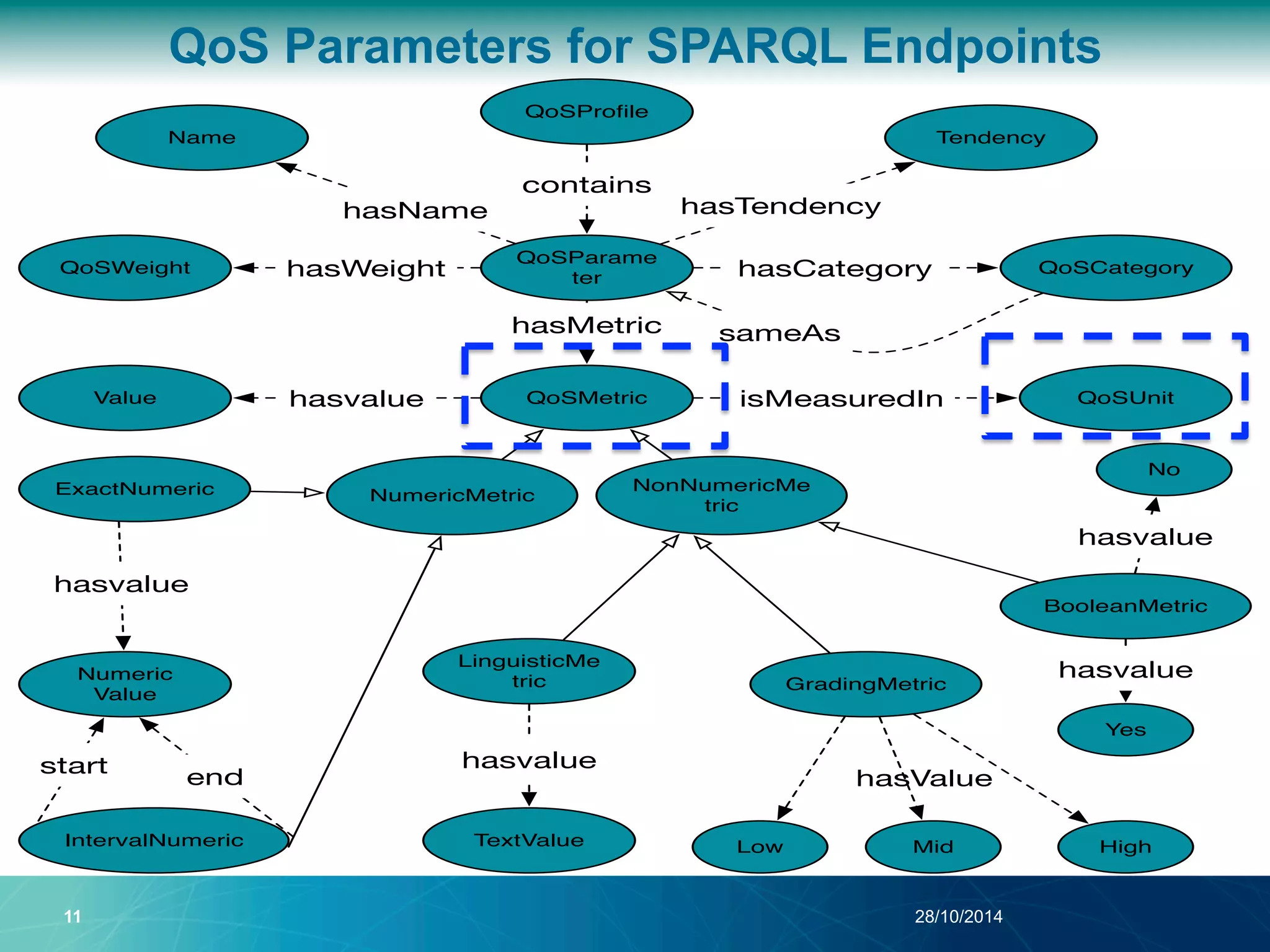

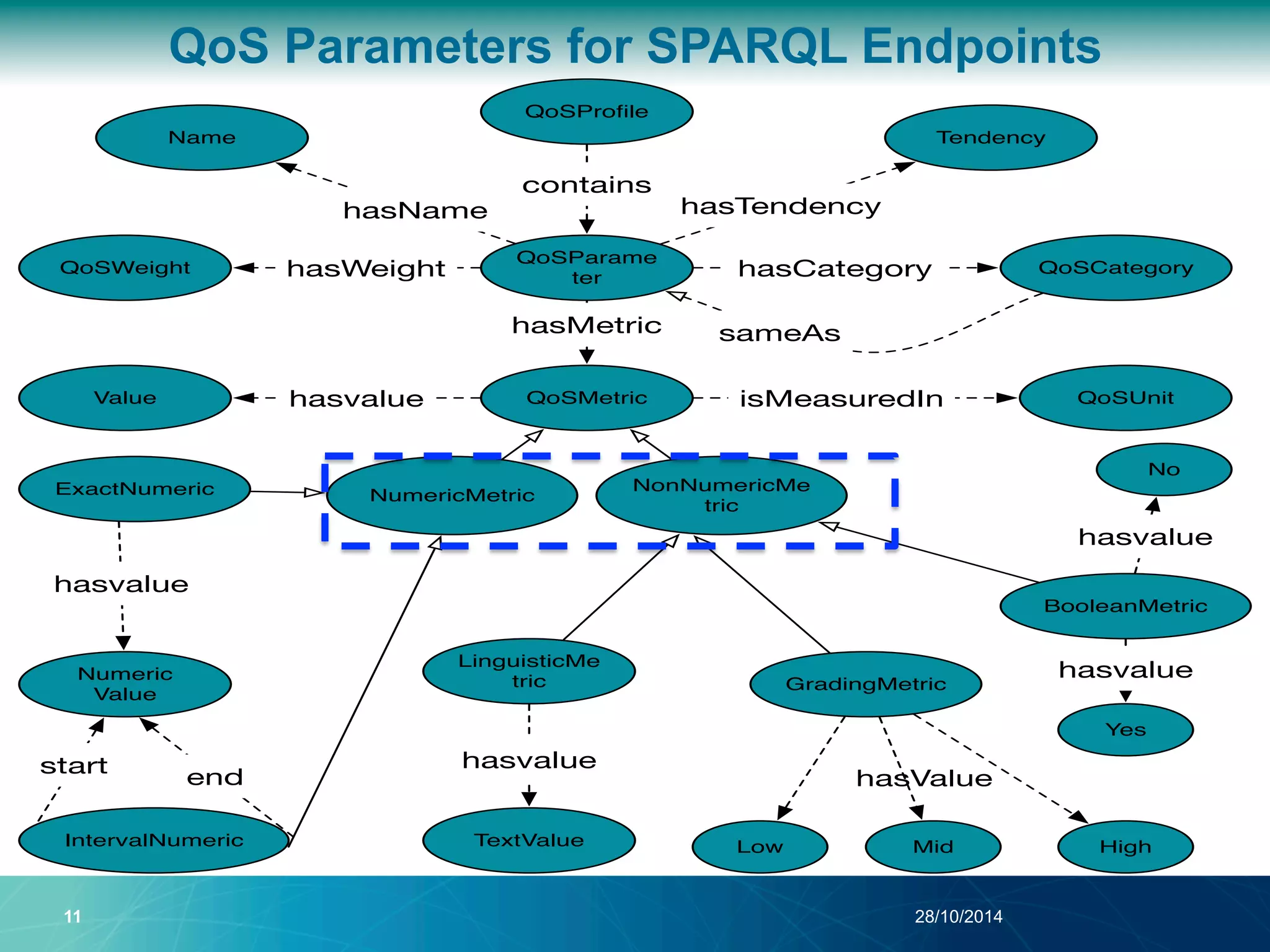

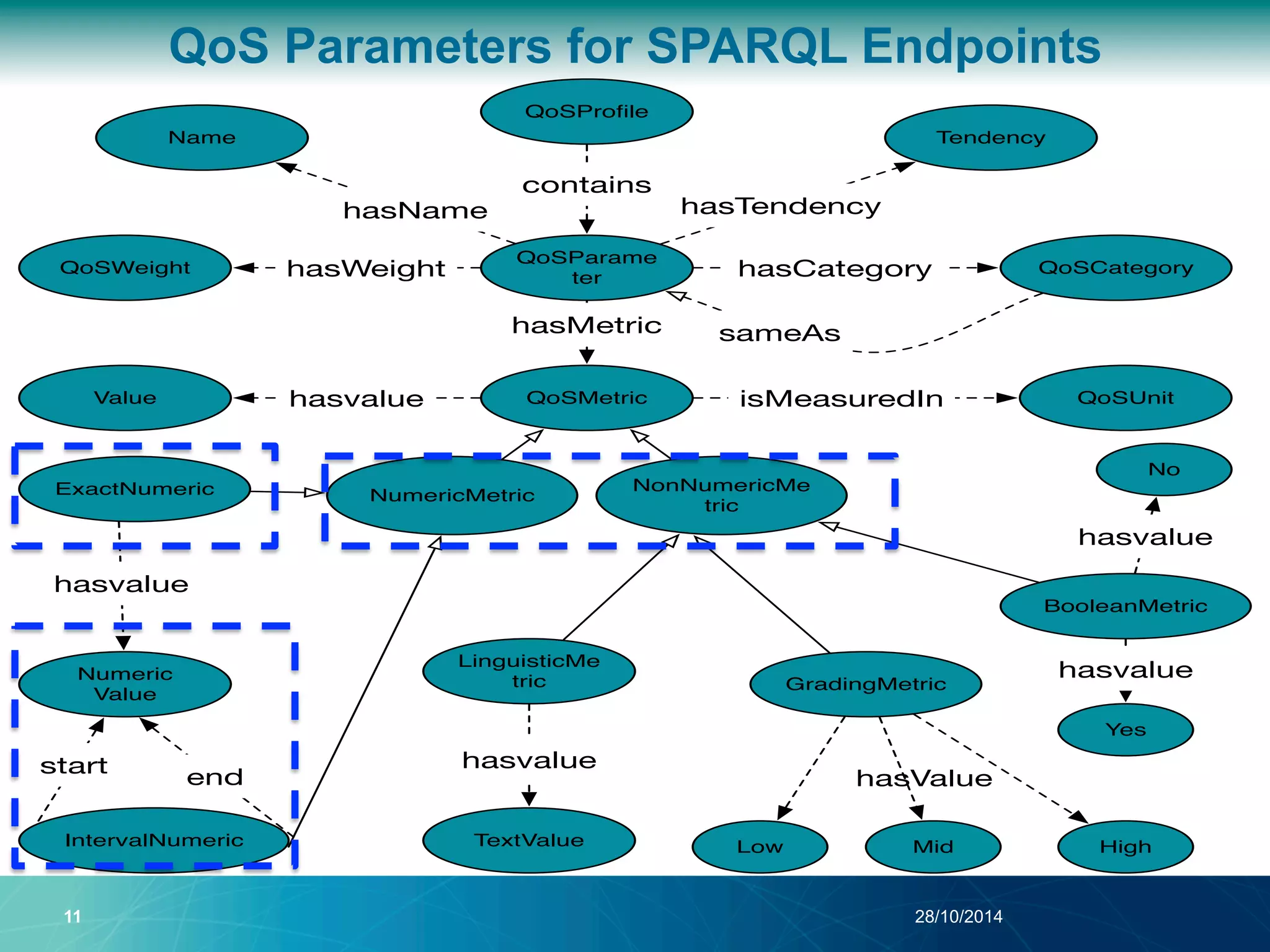

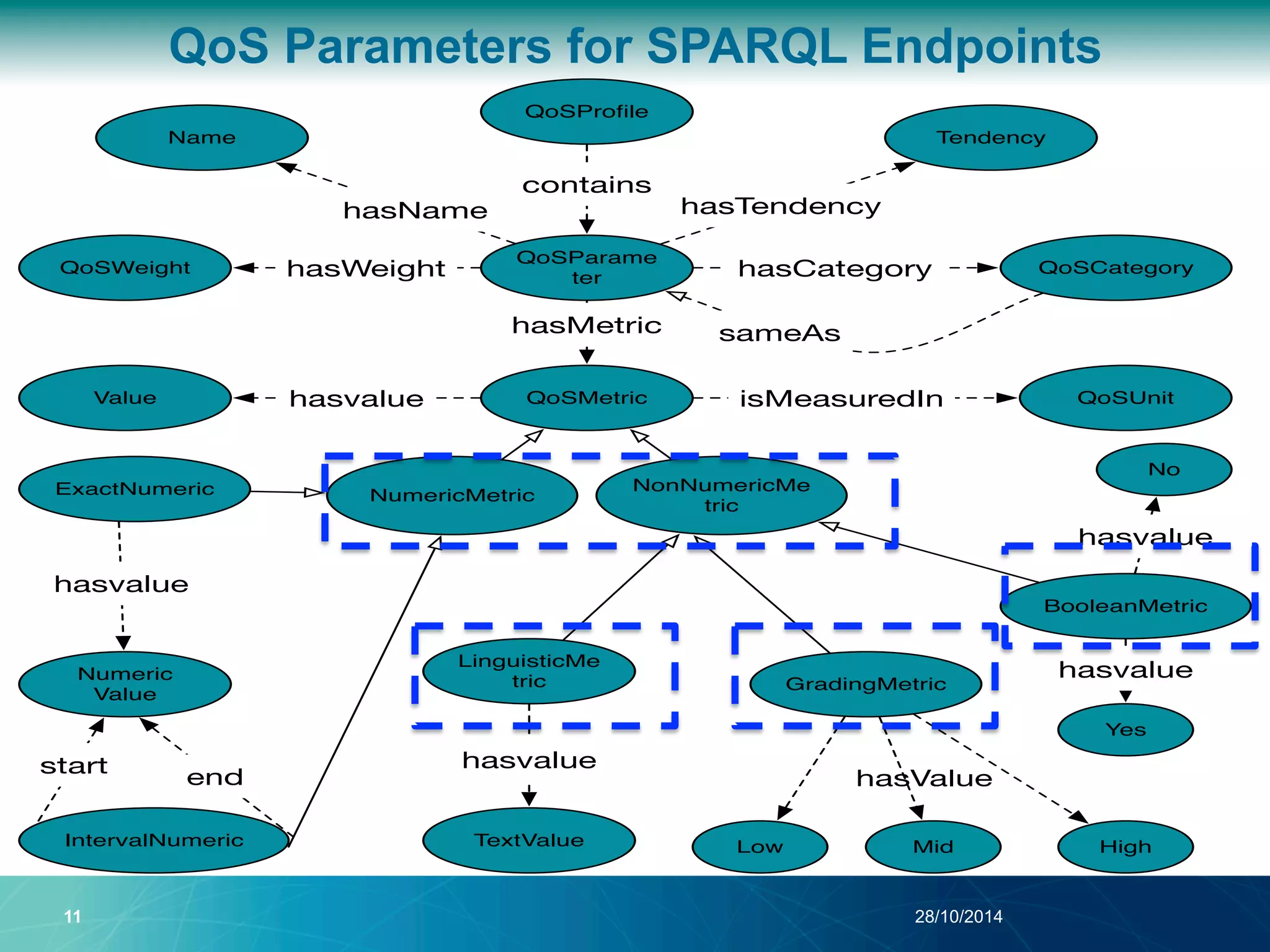

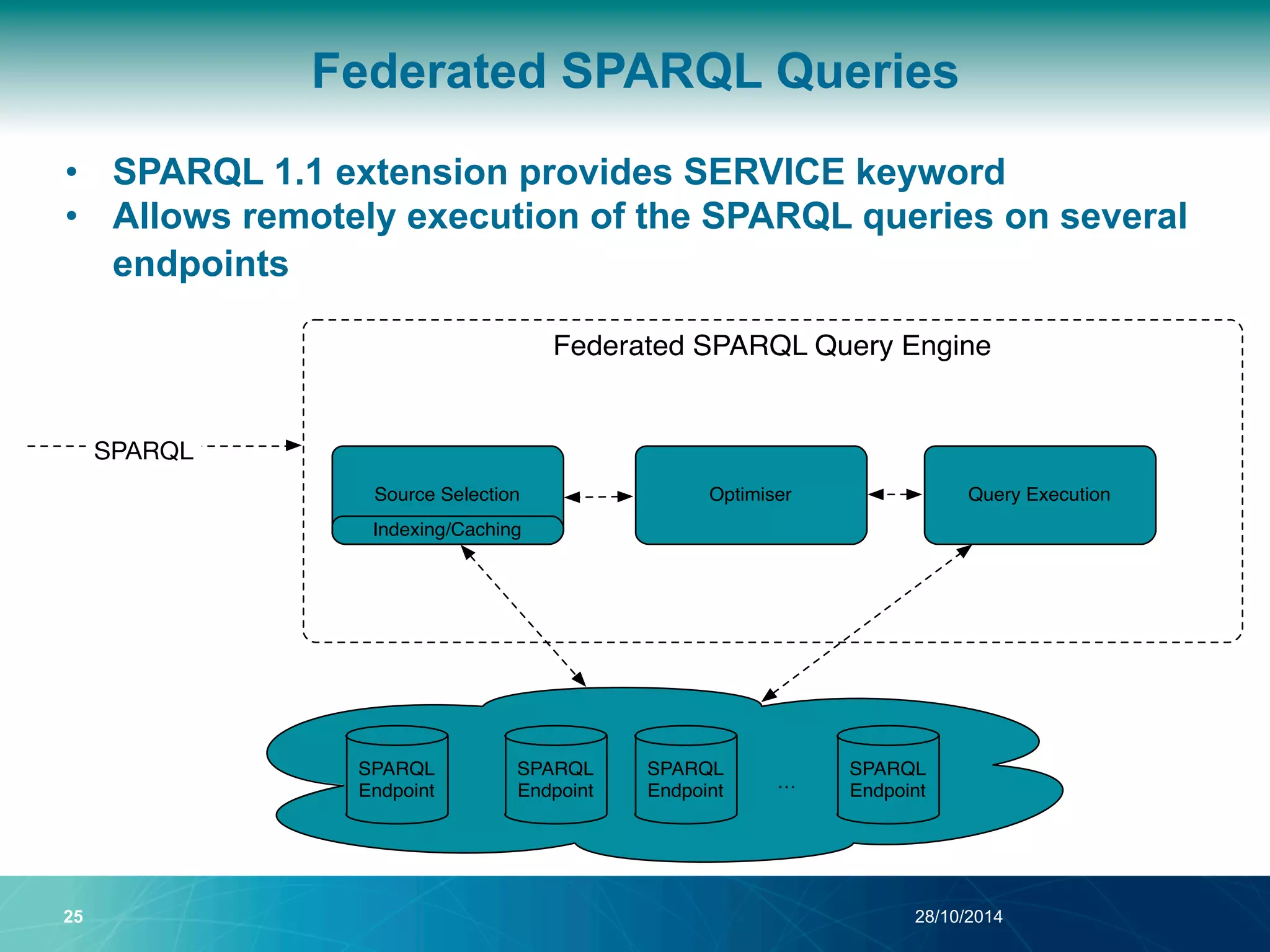

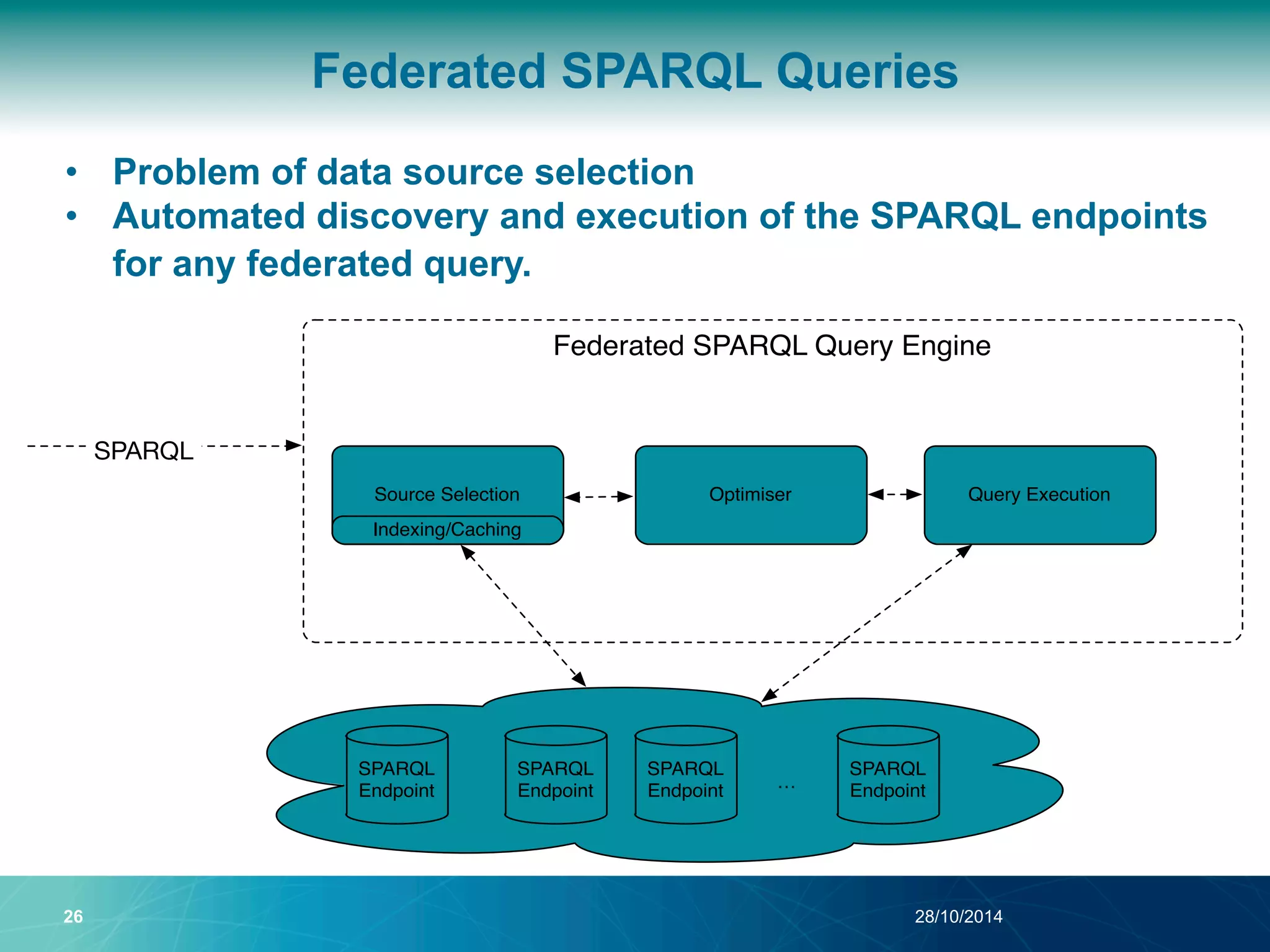

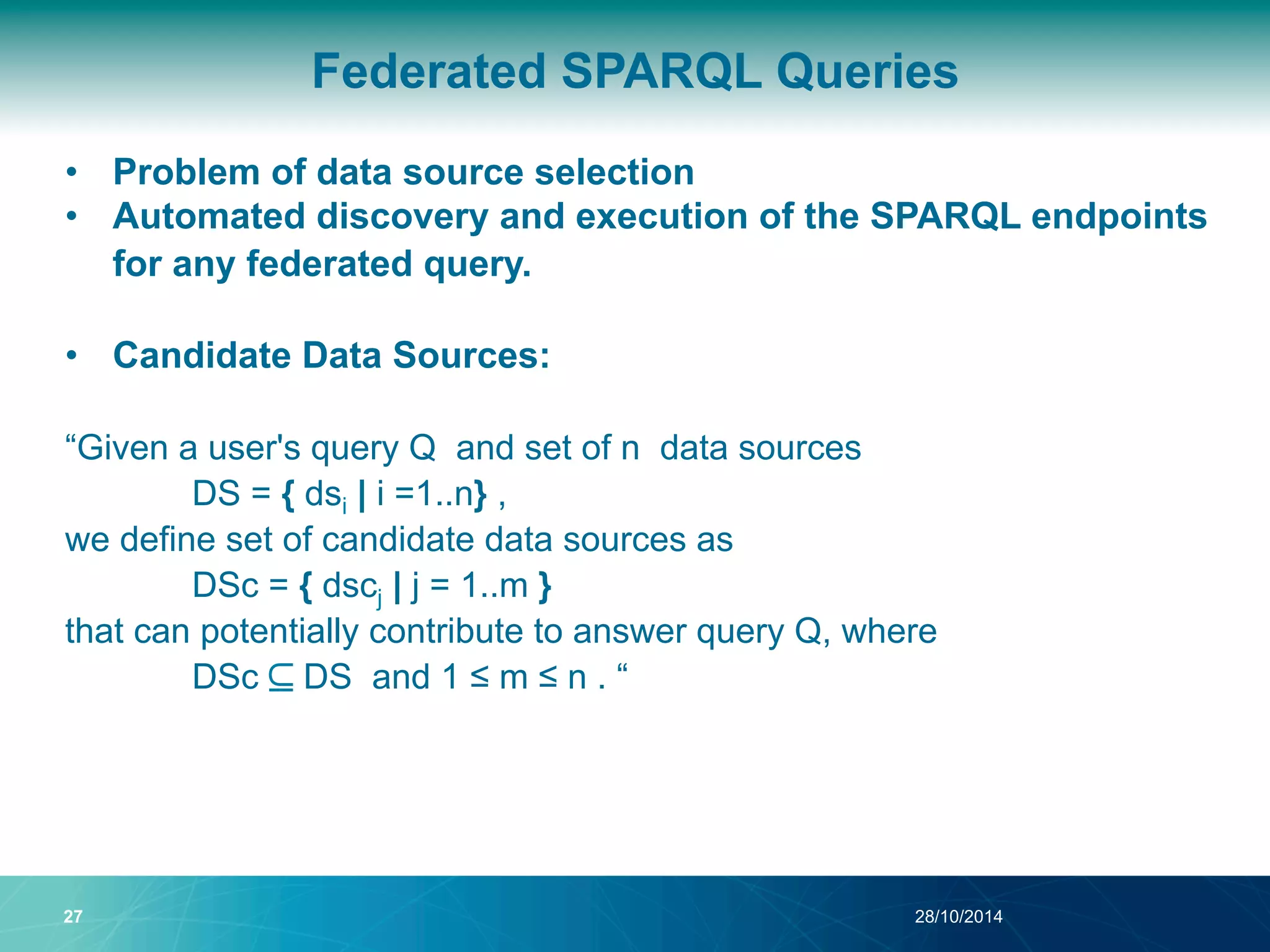

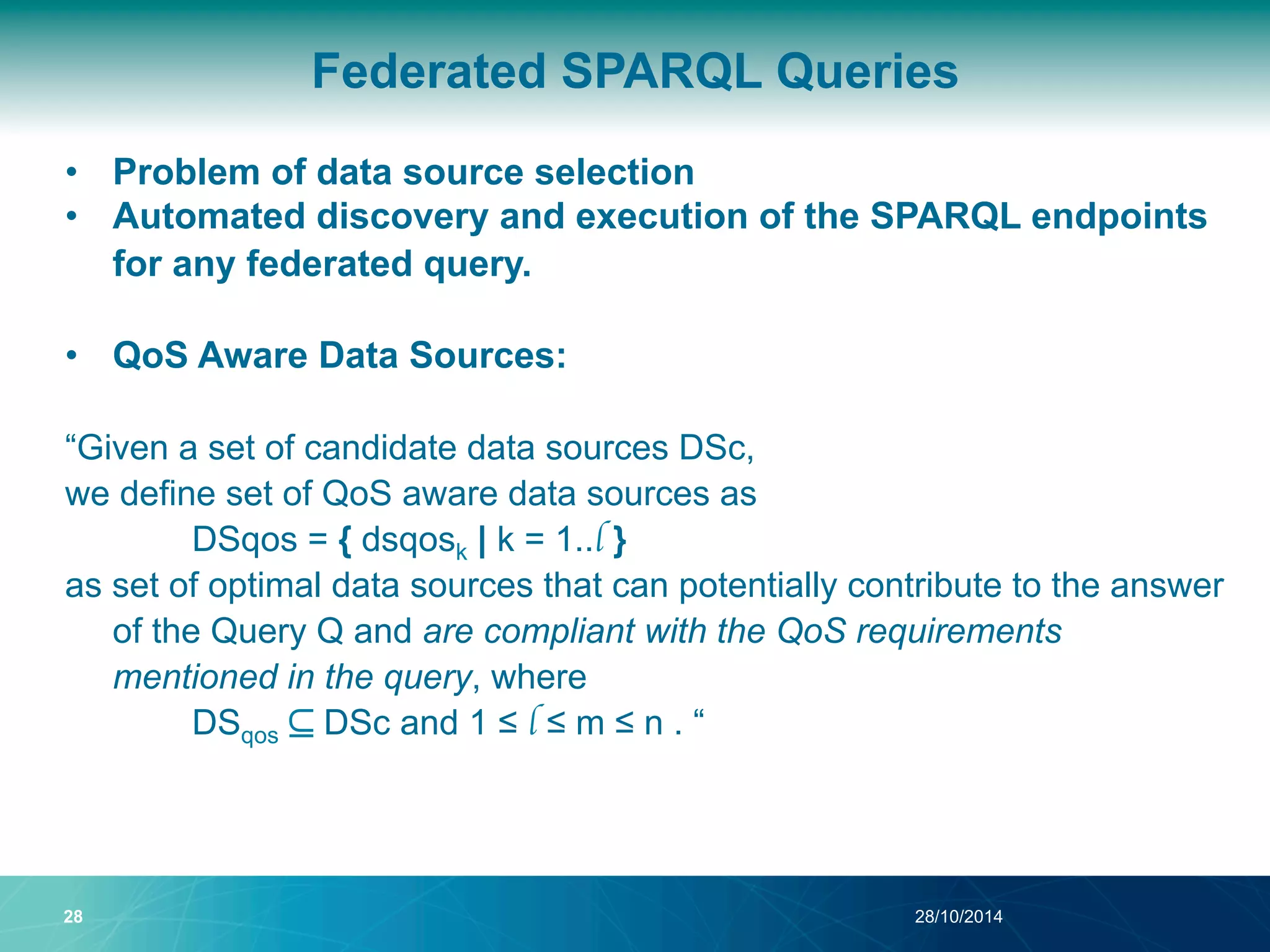

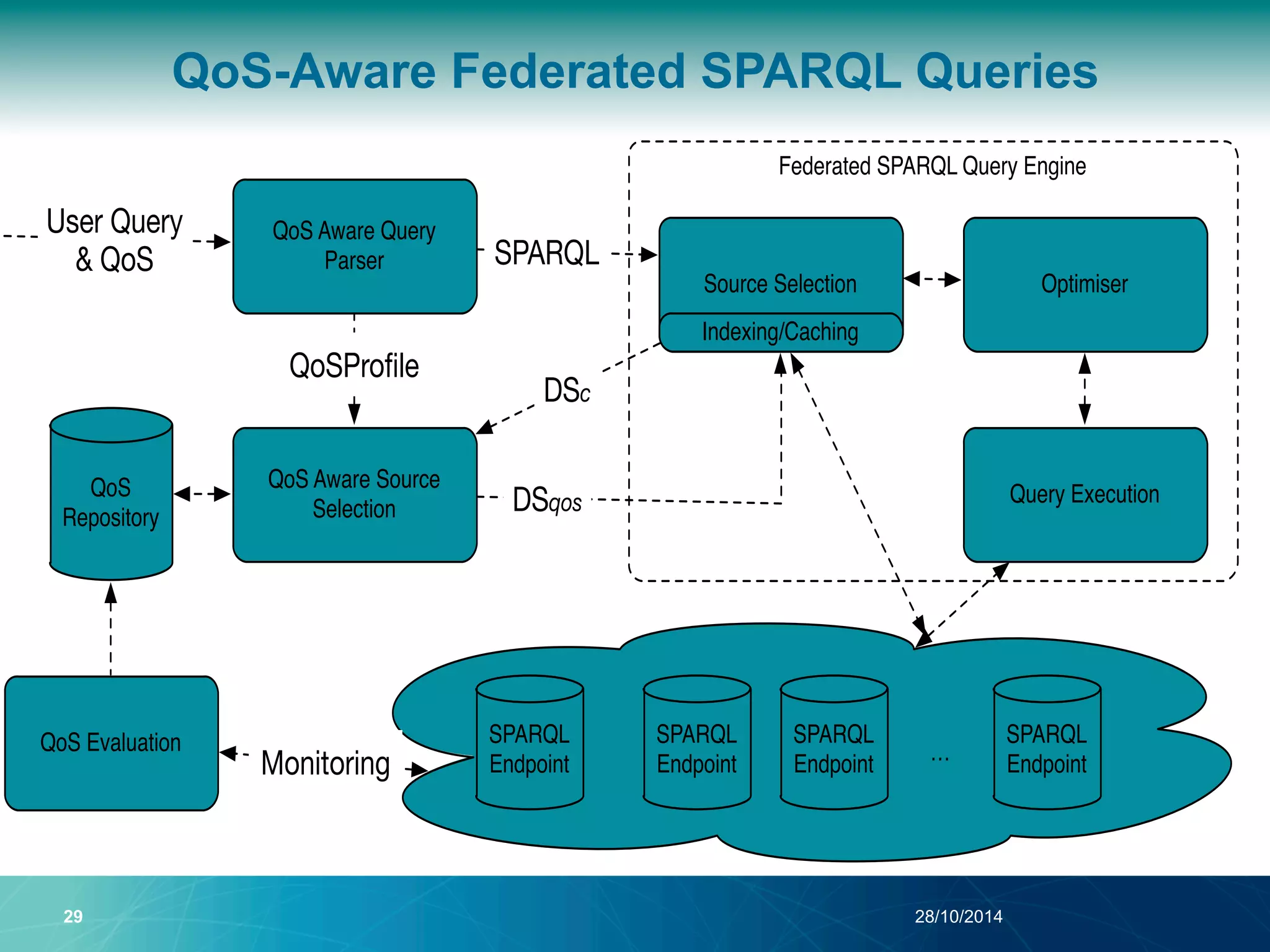

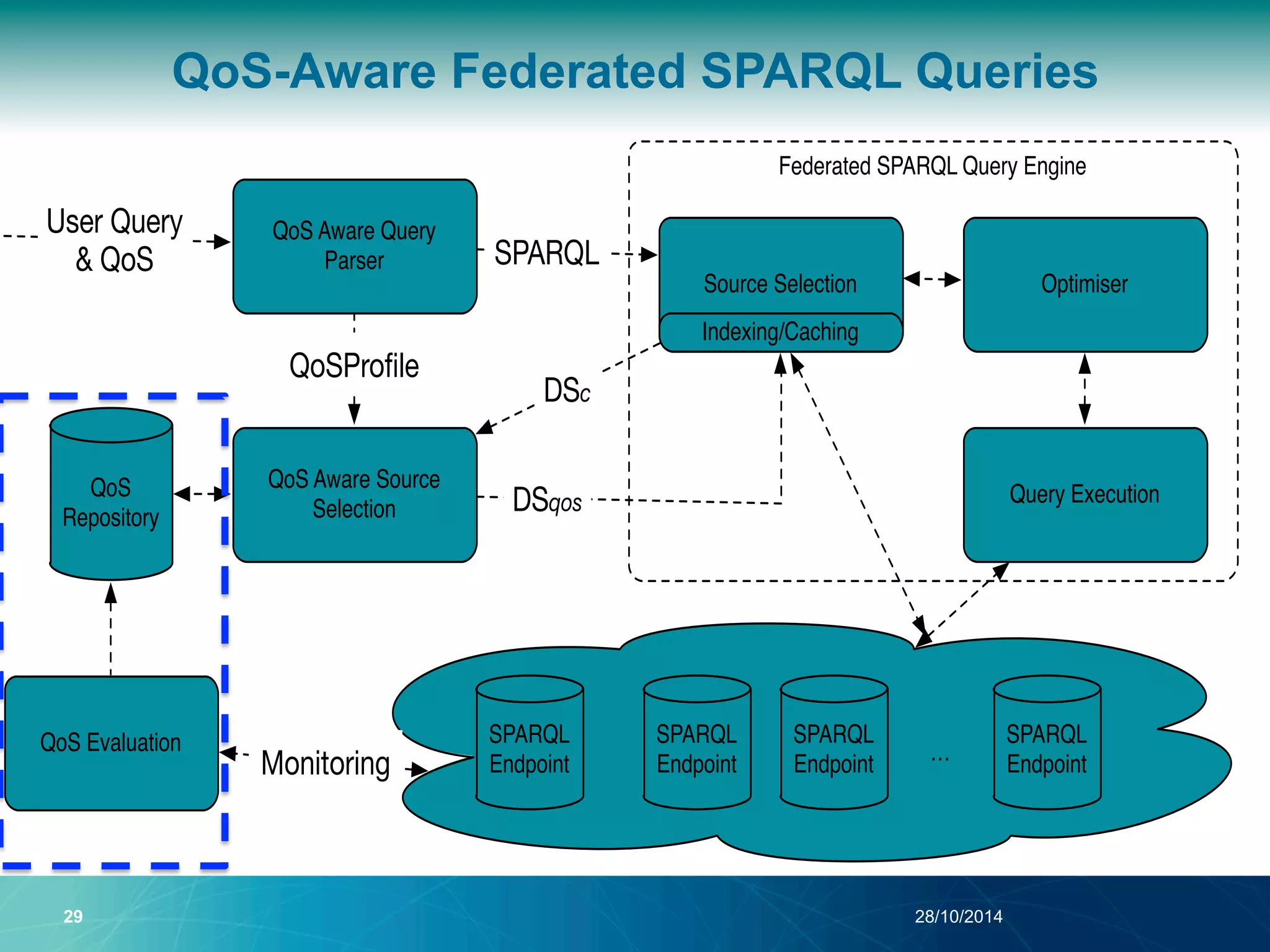

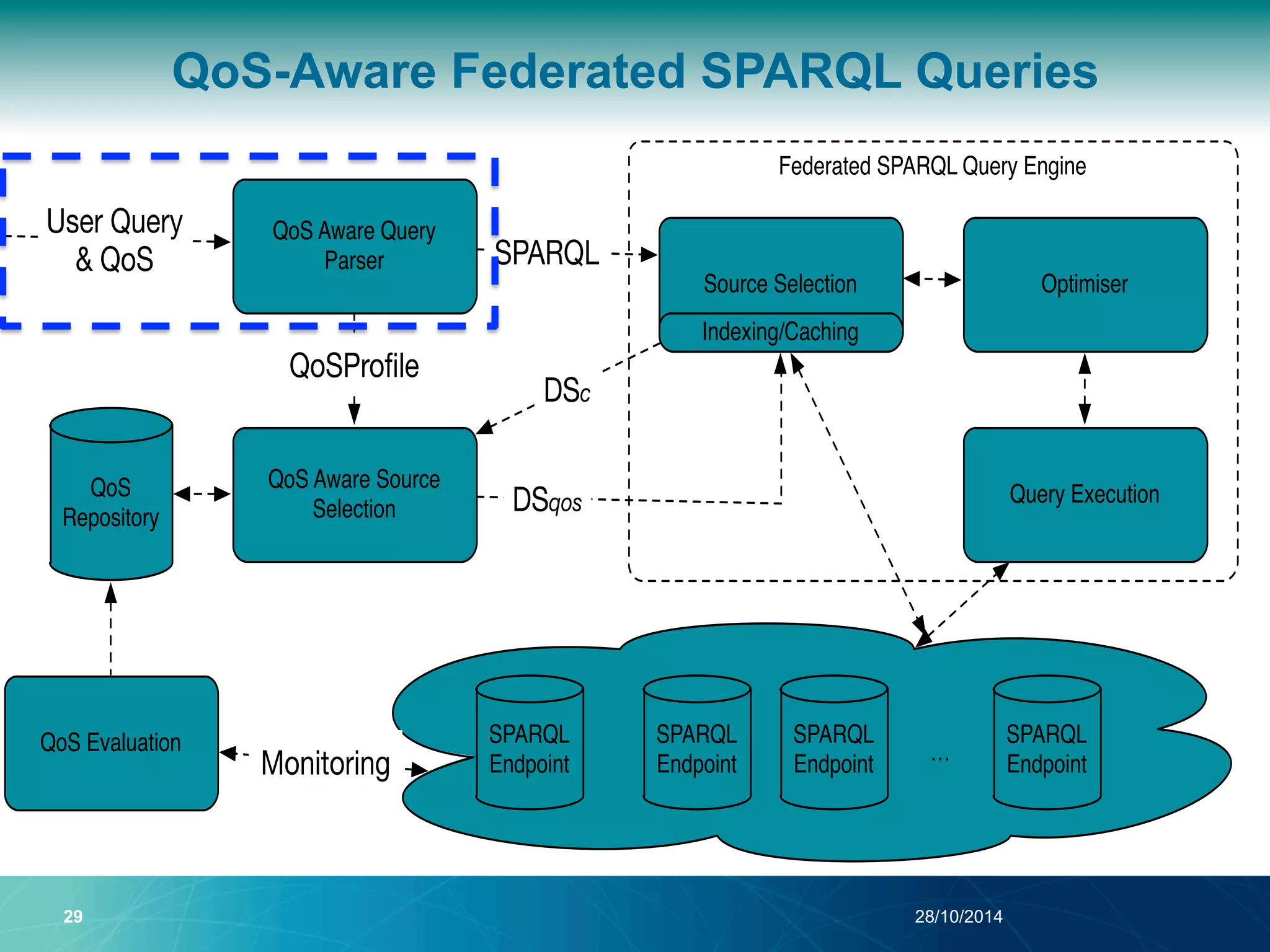

The document presents a Quality of Service (QoS)-aware mechanism for monitoring SPARQL endpoints and selecting data sources for federated SPARQL queries. It identifies and evaluates QoS parameters of SPARQL endpoints, such as performance and data quality, and stresses the importance of continuous monitoring. It also addresses the challenges of automatically discovering endpoints and executing SPARQL queries across several of them while meeting QoS requirements.
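To make the monitoring idea concrete, here is a minimal sketch of how periodic probe results might be aggregated into per-endpoint QoS values. The `Probe` record, the `summarize` function, the endpoint URLs, and the exact definitions used (uptime as the percentage of successful probes, response time as the mean over successful probes) are assumptions made for illustration; the document does not specify how its monitor computes these values.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class Probe:
    """One monitoring observation of an endpoint (hypothetical record shape)."""
    endpoint: str
    response_time: Optional[float]  # seconds; None means the probe failed


def summarize(probes):
    """Aggregate probes into per-endpoint QoS values (assumed definitions)."""
    by_endpoint = {}
    for probe in probes:
        by_endpoint.setdefault(probe.endpoint, []).append(probe)

    summary = {}
    for endpoint, items in by_endpoint.items():
        ok = [p.response_time for p in items if p.response_time is not None]
        summary[endpoint] = {
            # Percentage of probes that received an answer.
            "MeanUpTime": 100.0 * len(ok) / len(items),
            # Mean response time over successful probes only.
            "ResponseTime": mean(ok) if ok else None,
        }
    return summary


# Made-up observations for two placeholder endpoints.
probes = [
    Probe("http://example.org/a/sparql", 0.5),
    Probe("http://example.org/a/sparql", 1.5),
    Probe("http://example.org/a/sparql", None),
    Probe("http://example.org/a/sparql", 1.0),
    Probe("http://example.org/b/sparql", None),
]
qos = summarize(probes)
print(qos)
```

Keeping raw probes and deriving the summary on demand means the same history can later feed other parameters without re-probing the endpoints.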

![SPARQL Extension with QoS

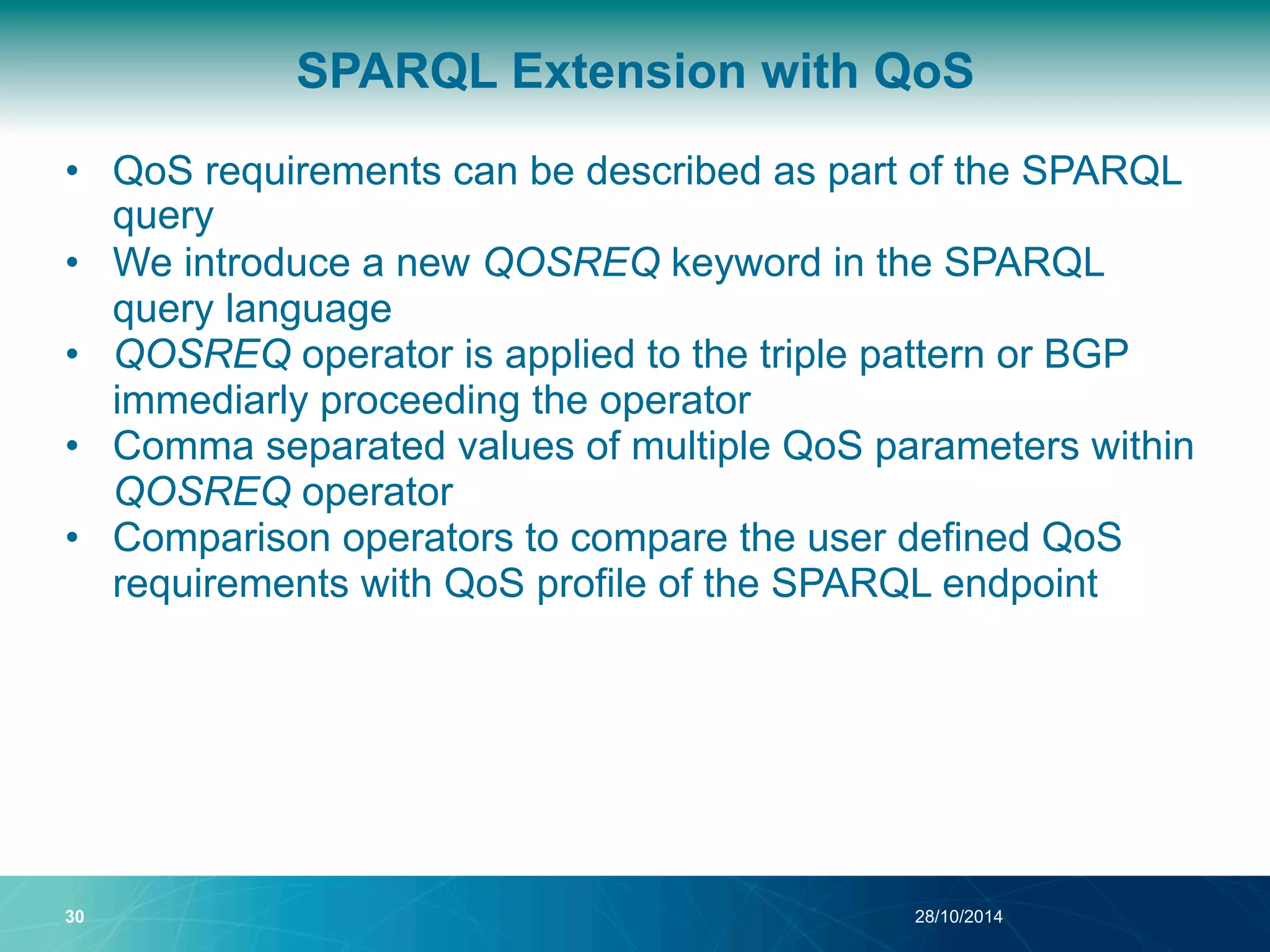

• QoS requirements can be described as part of the SPARQL

query

SELECT ?drug ?keggUrl ?chebiImage

WHERE {

?drug rdf:type drugbank:drugs .

QOSREQ[ qs:ResponseTime < 10 , qs:SizeLimit > 10000]

?drug drugbank:keggCompoundId ?keggDrug .

?keggDrug bio2rdf:u r l ?keggUrl .

{

?drug drugbank:genericName ?drugBankName .

?chebiDrug purl:title ?drugBankName .

}

QOSREQ[ qs:DatasetDescription = 'VoID' ,

qs:MeanUpTime > 80 ]

?chebiDrug chebi:image ?chebiImage . }

30 28/10/2014](https://image.slidesharecdn.com/odbase2014withoutbackup-141028084310-conversion-gate01/75/How-good-is-your-SPARQL-endpoint-A-QoS-Aware-SPARQL-Endpoint-Monitoring-and-Data-Source-Selection-Mechanism-for-Federated-SPARQL-Queries-67-2048.jpg)
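As a rough illustration of how a federation engine might consume such a query, the sketch below extracts the `QOSREQ[...]` blocks with regular expressions and checks them against per-endpoint metrics. Only the `QOSREQ` syntax and the `qs:` parameter names come from the slide. The function names `parse_qosreq` and `satisfies`, the endpoint URLs, and the metric values are hypothetical, and the sketch does not model which triple patterns each block applies to or what units the values use, since the slide does not say.

```python
import re

# One QOSREQ[...] block, and one "qs:Name op value" constraint inside it.
QOSREQ_BLOCK = re.compile(r"QOSREQ\[(.*?)\]", re.DOTALL)
CONSTRAINT = re.compile(r"qs:(\w+)\s*(<=|>=|<|>|=)\s*('[^']*'|[\d.]+)")

OPS = {
    "<": lambda a, b: a < b,
    ">": lambda a, b: a > b,
    "<=": lambda a, b: a <= b,
    ">=": lambda a, b: a >= b,
    "=": lambda a, b: a == b,
}


def parse_qosreq(query):
    """Return one list of (parameter, operator, value) per QOSREQ block."""
    blocks = []
    for body in QOSREQ_BLOCK.findall(query):
        constraints = []
        for name, op, raw in CONSTRAINT.findall(body):
            # Quoted values stay strings; everything else is read as a number.
            value = raw[1:-1] if raw.startswith("'") else float(raw)
            constraints.append((name, op, value))
        blocks.append(constraints)
    return blocks


def satisfies(metrics, constraints):
    """True if the metrics meet every constraint; a missing metric fails."""
    for name, op, value in constraints:
        if name not in metrics or not OPS[op](metrics[name], value):
            return False
    return True


query = """
SELECT ?drug ?keggUrl ?chebiImage
WHERE {
  ?drug rdf:type drugbank:drugs .
  QOSREQ[ qs:ResponseTime < 10 , qs:SizeLimit > 10000 ]
  ?drug drugbank:keggCompoundId ?keggDrug .
  QOSREQ[ qs:DatasetDescription = 'VoID' ,
          qs:MeanUpTime > 80 ]
  ?chebiDrug chebi:image ?chebiImage . }
"""

# Hypothetical monitoring results for two placeholder endpoints.
endpoints = {
    "http://example.org/fast/sparql": {"ResponseTime": 2.0, "SizeLimit": 50000.0},
    "http://example.org/slow/sparql": {"ResponseTime": 25.0, "SizeLimit": 50000.0},
}

requirements = parse_qosreq(query)
eligible = [url for url, m in endpoints.items() if satisfies(m, requirements[0])]
print(requirements)
print(eligible)
```

Treating a missing metric as a failed constraint is a conservative choice: an endpoint that has not been monitored for a parameter is never selected on the strength of unknown data.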