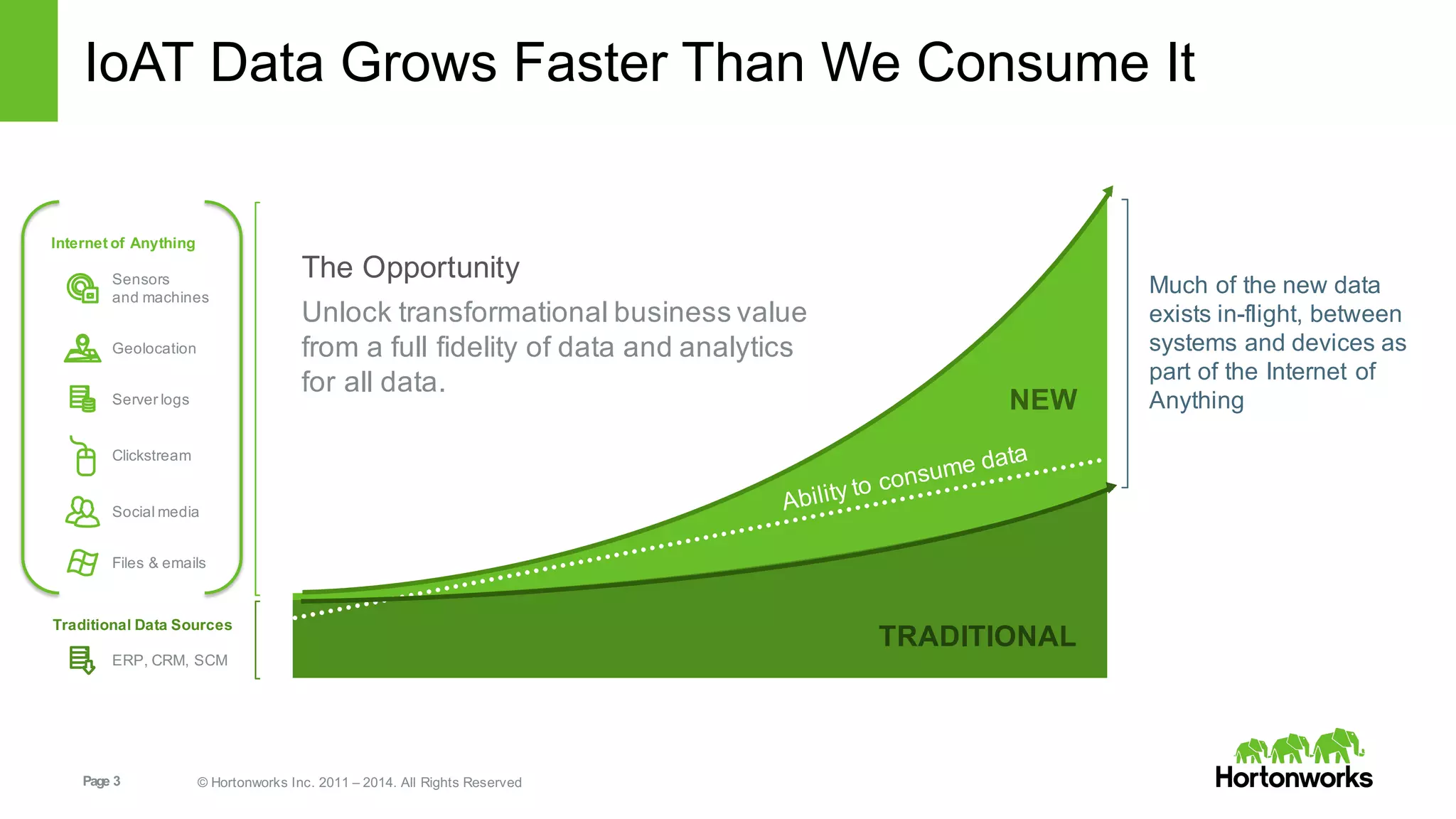

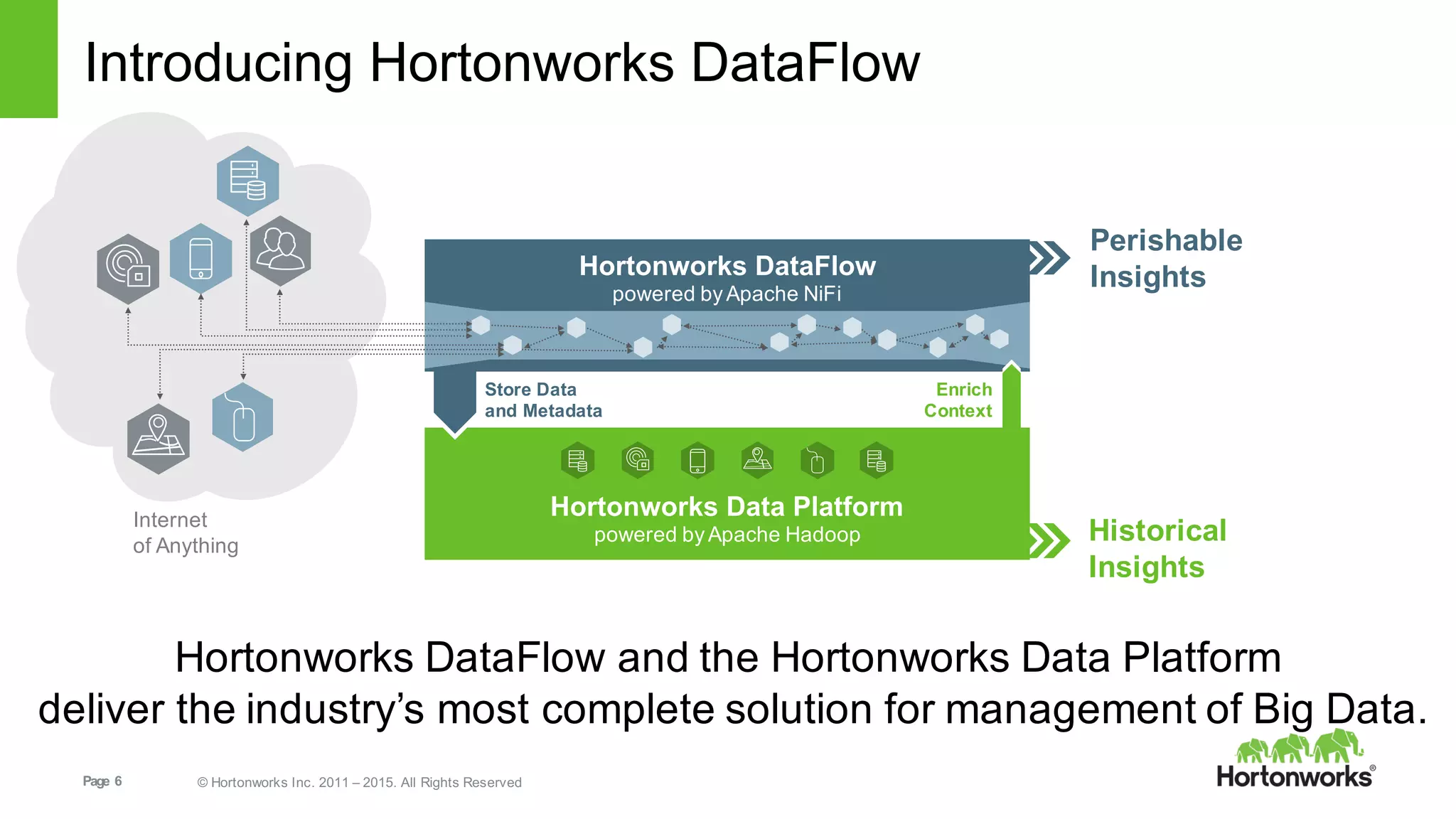

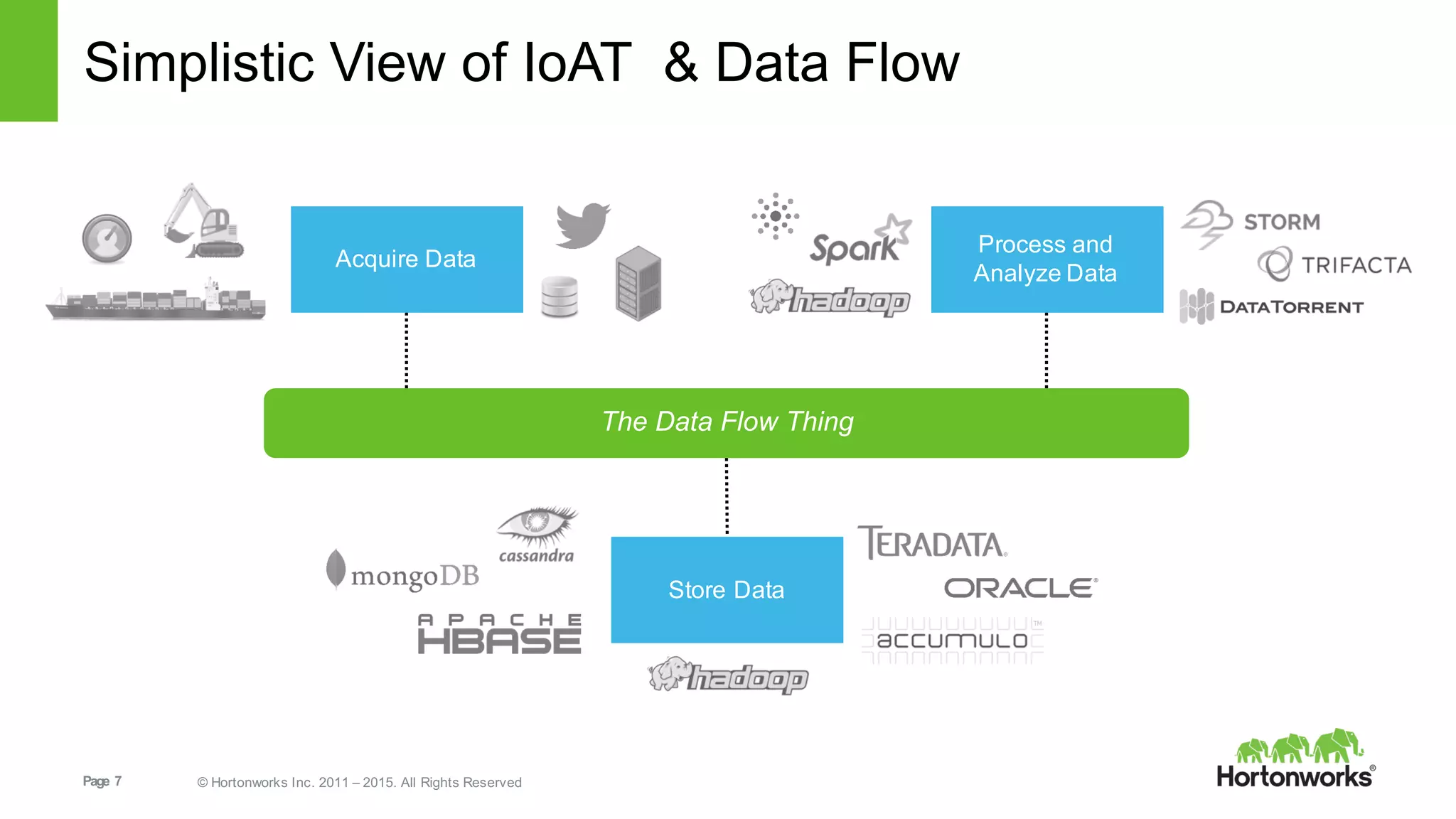

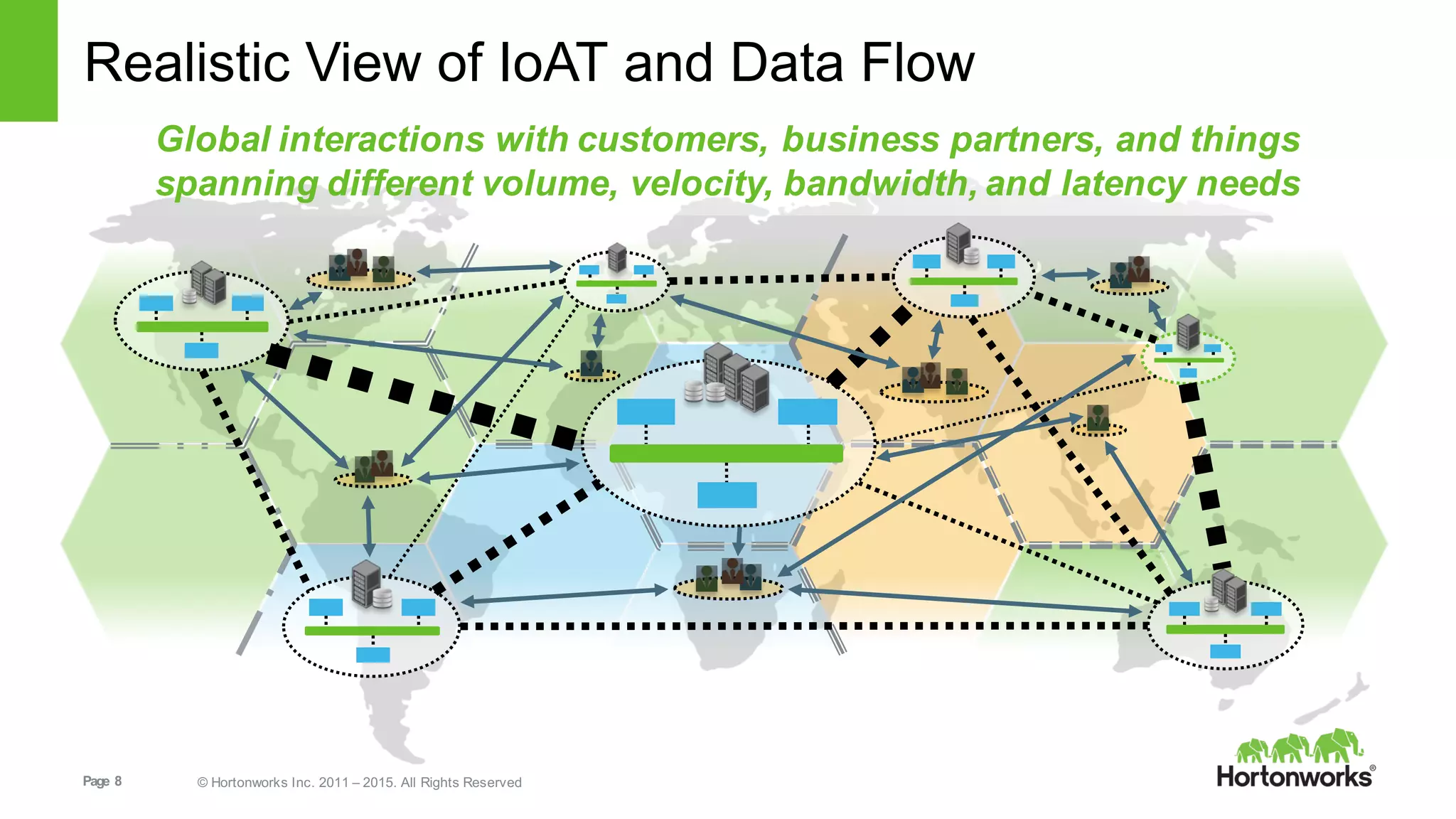

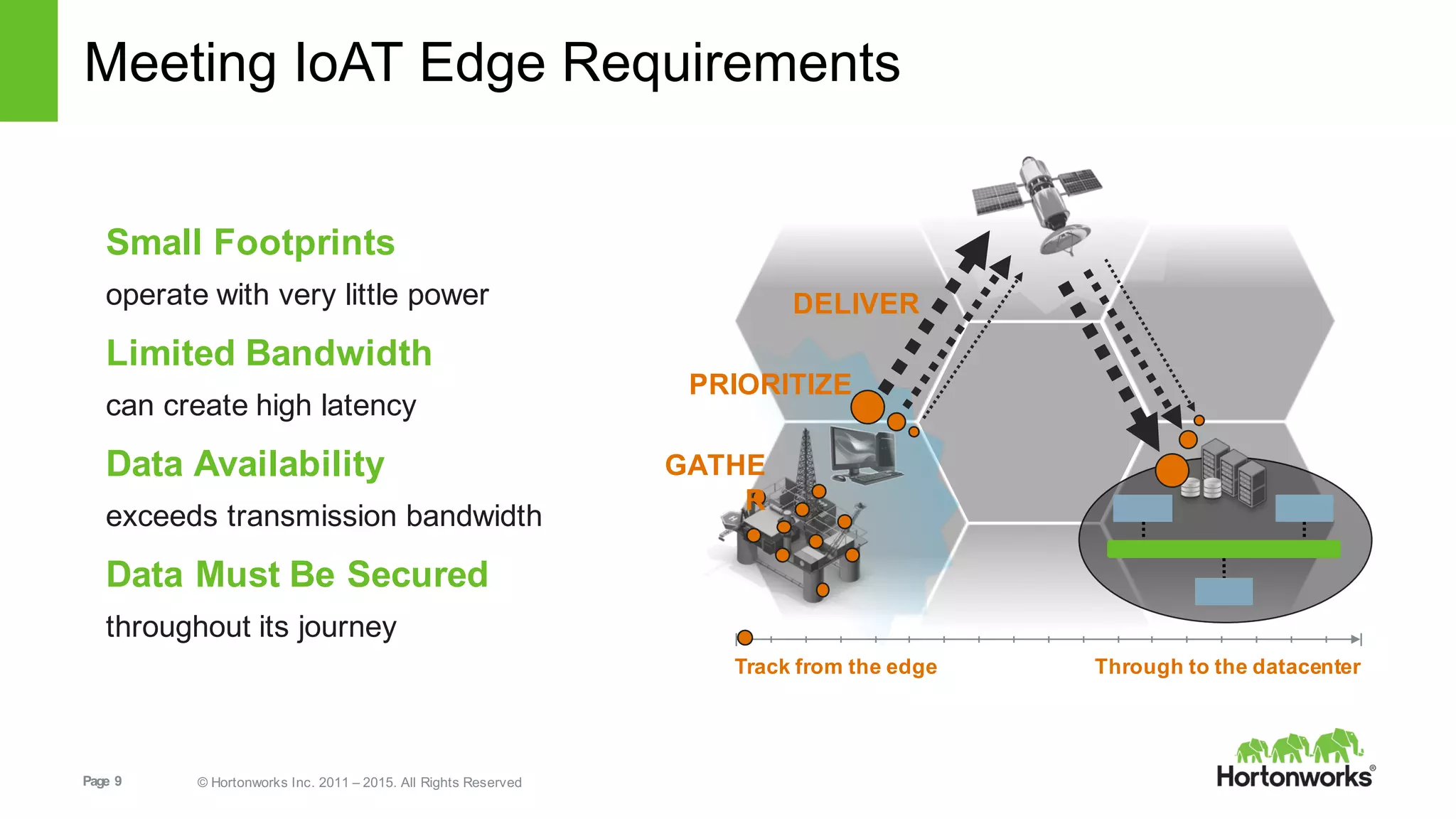

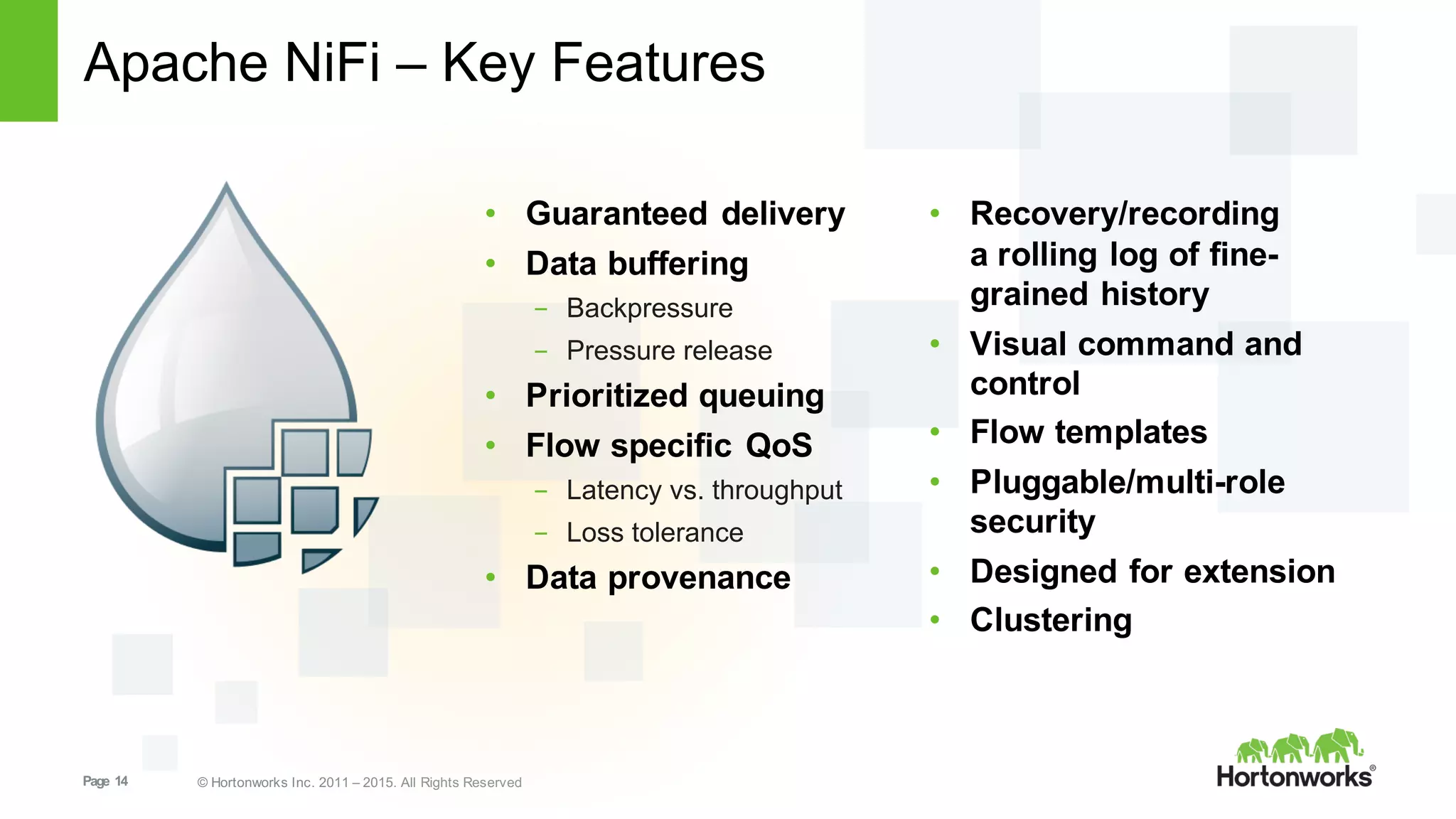

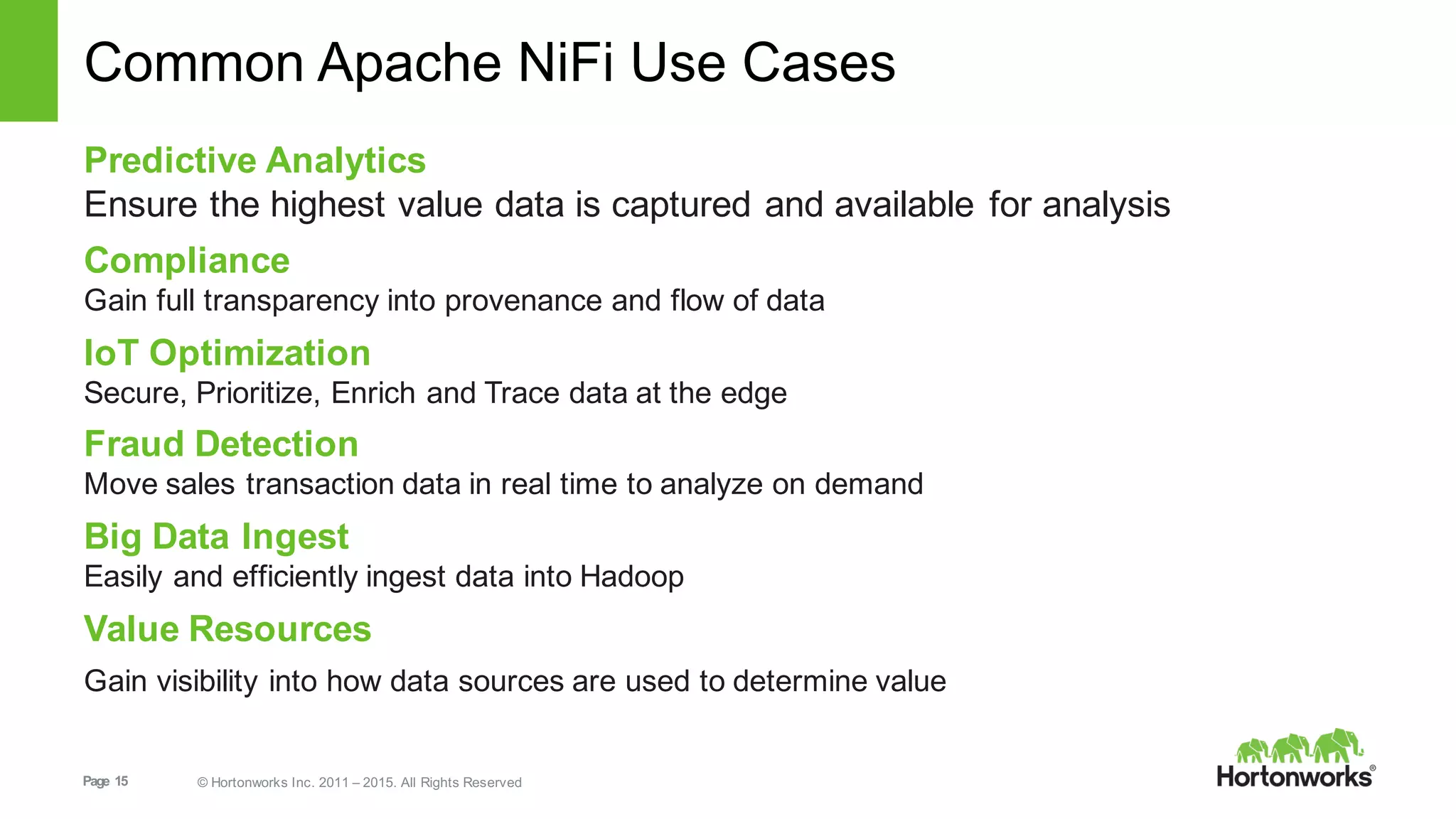

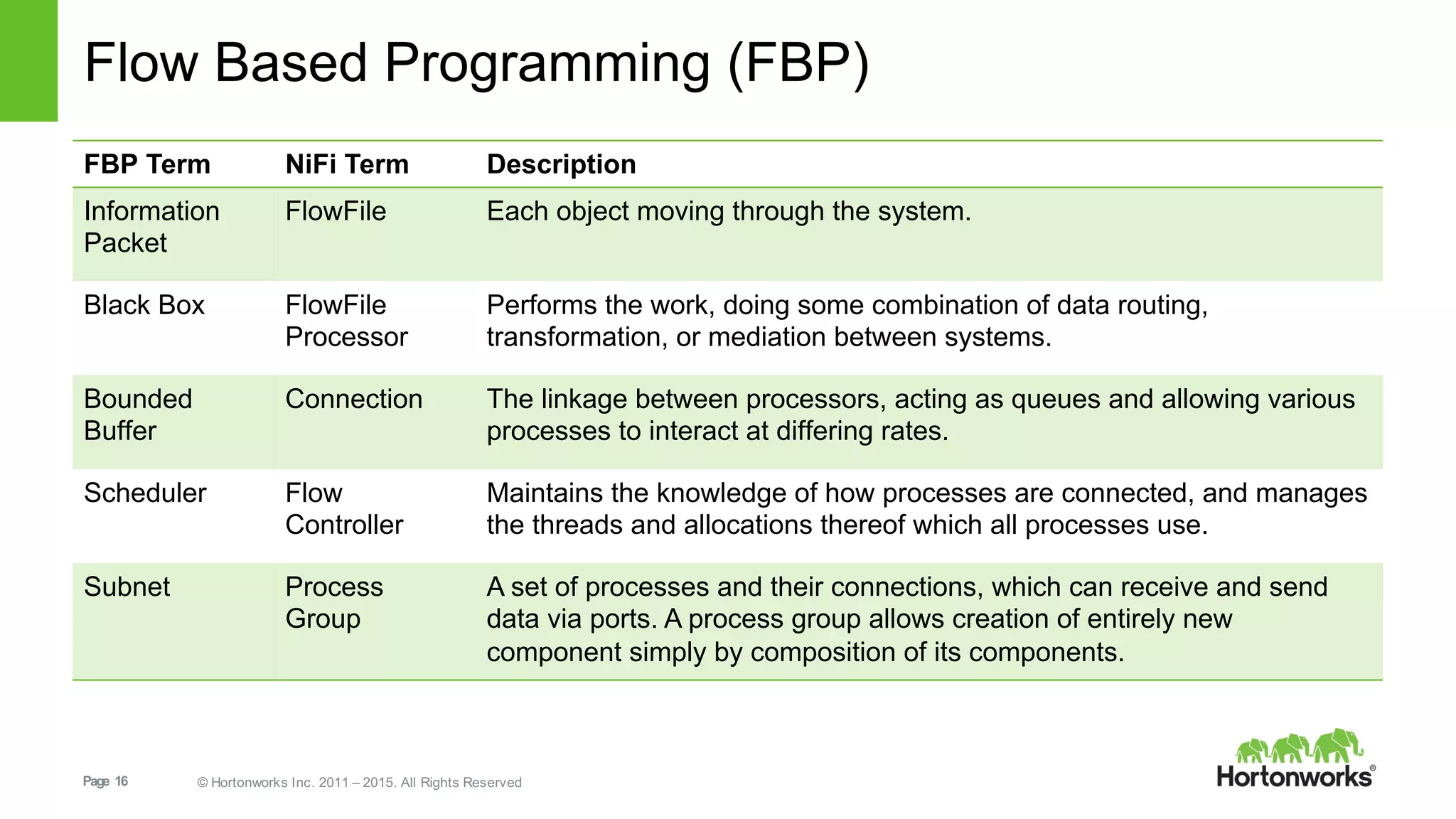

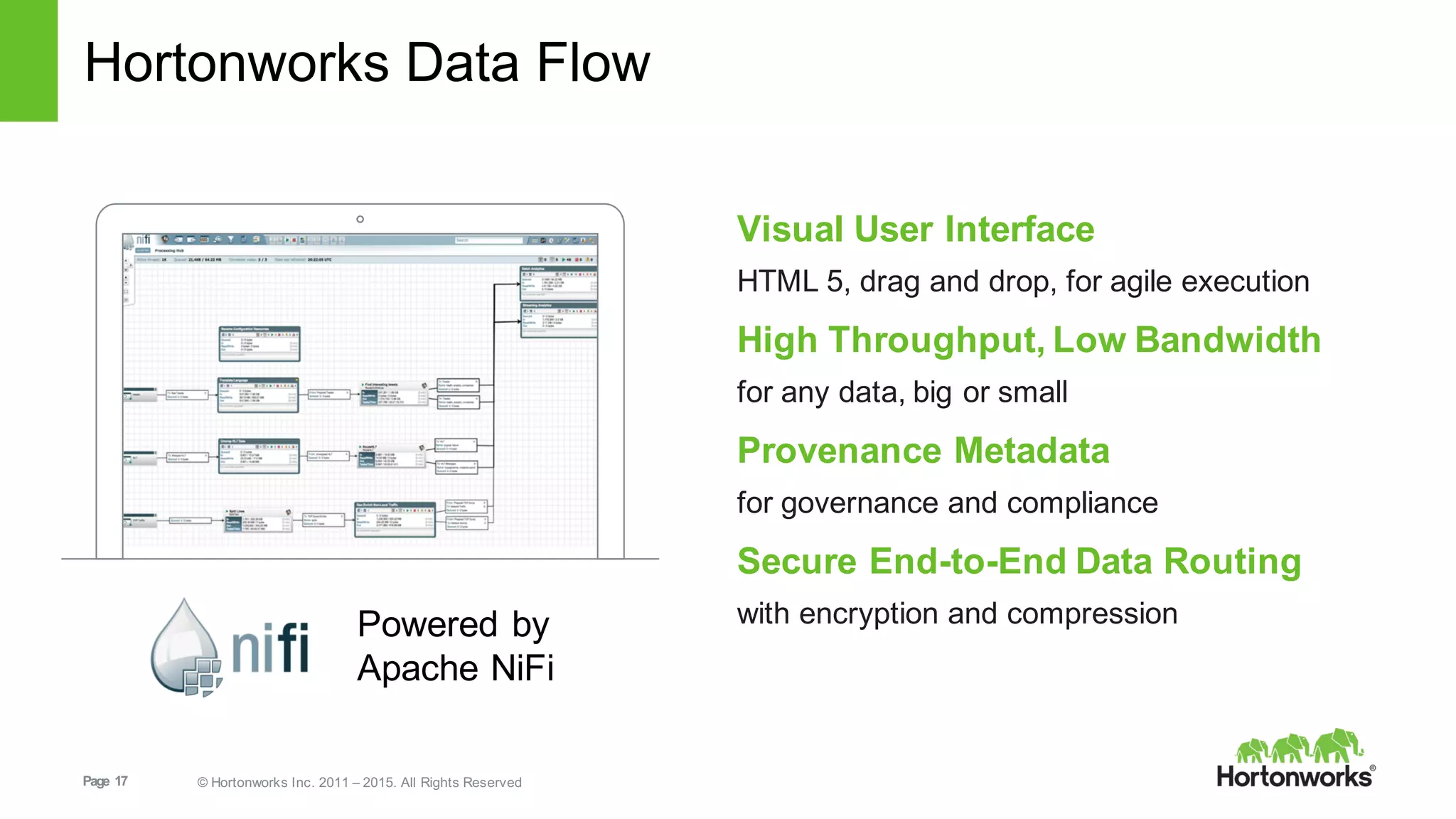

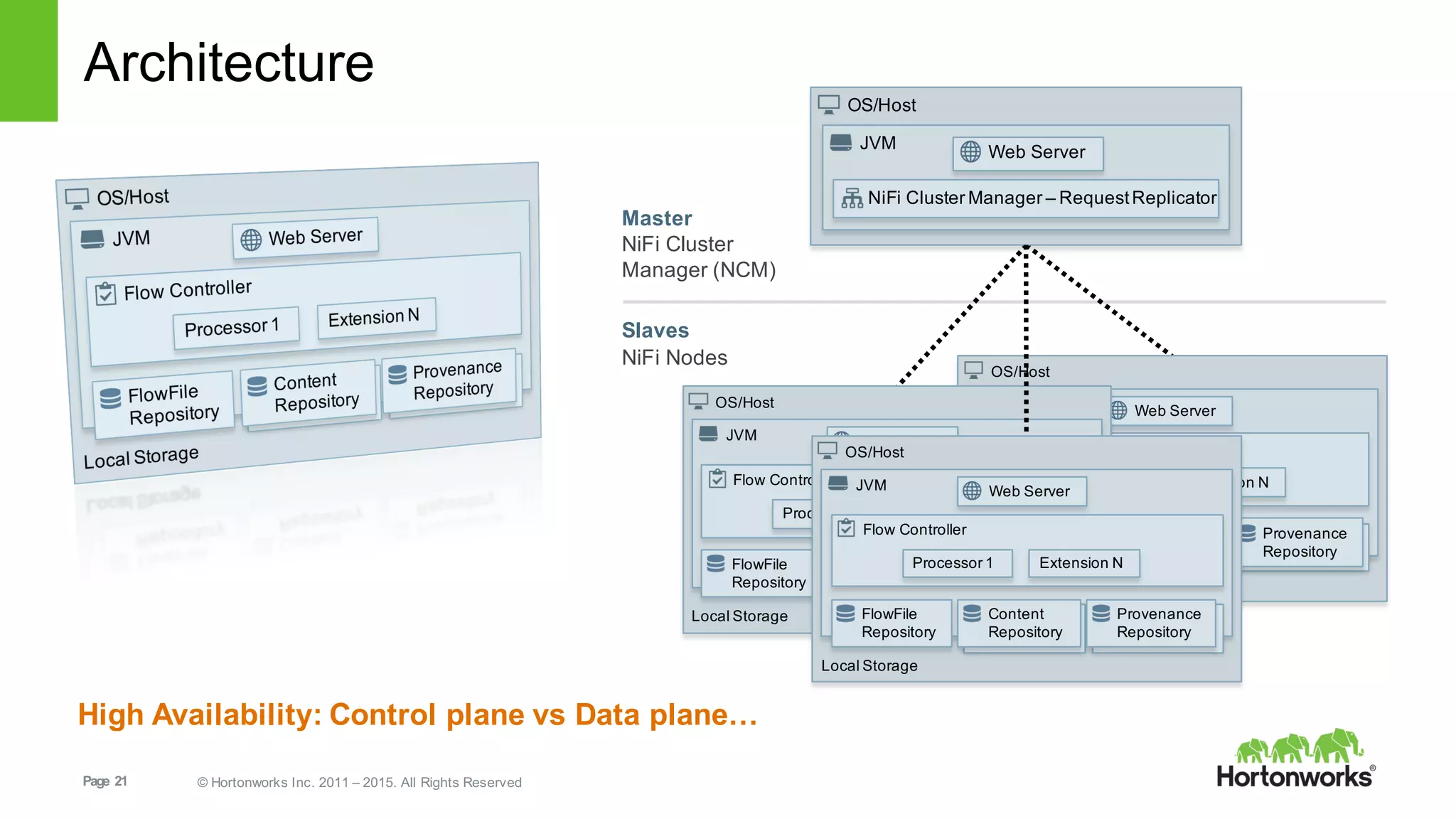

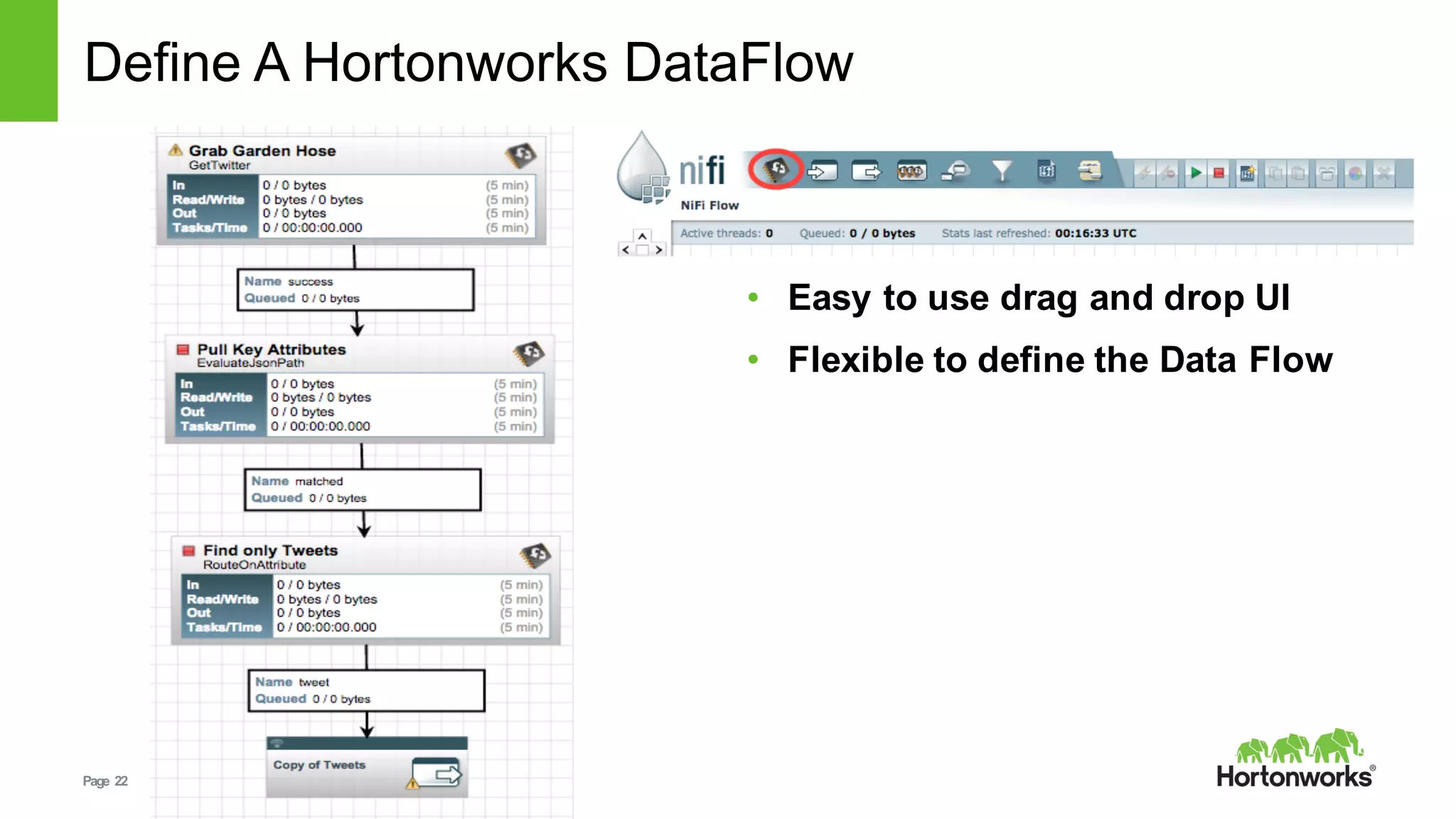

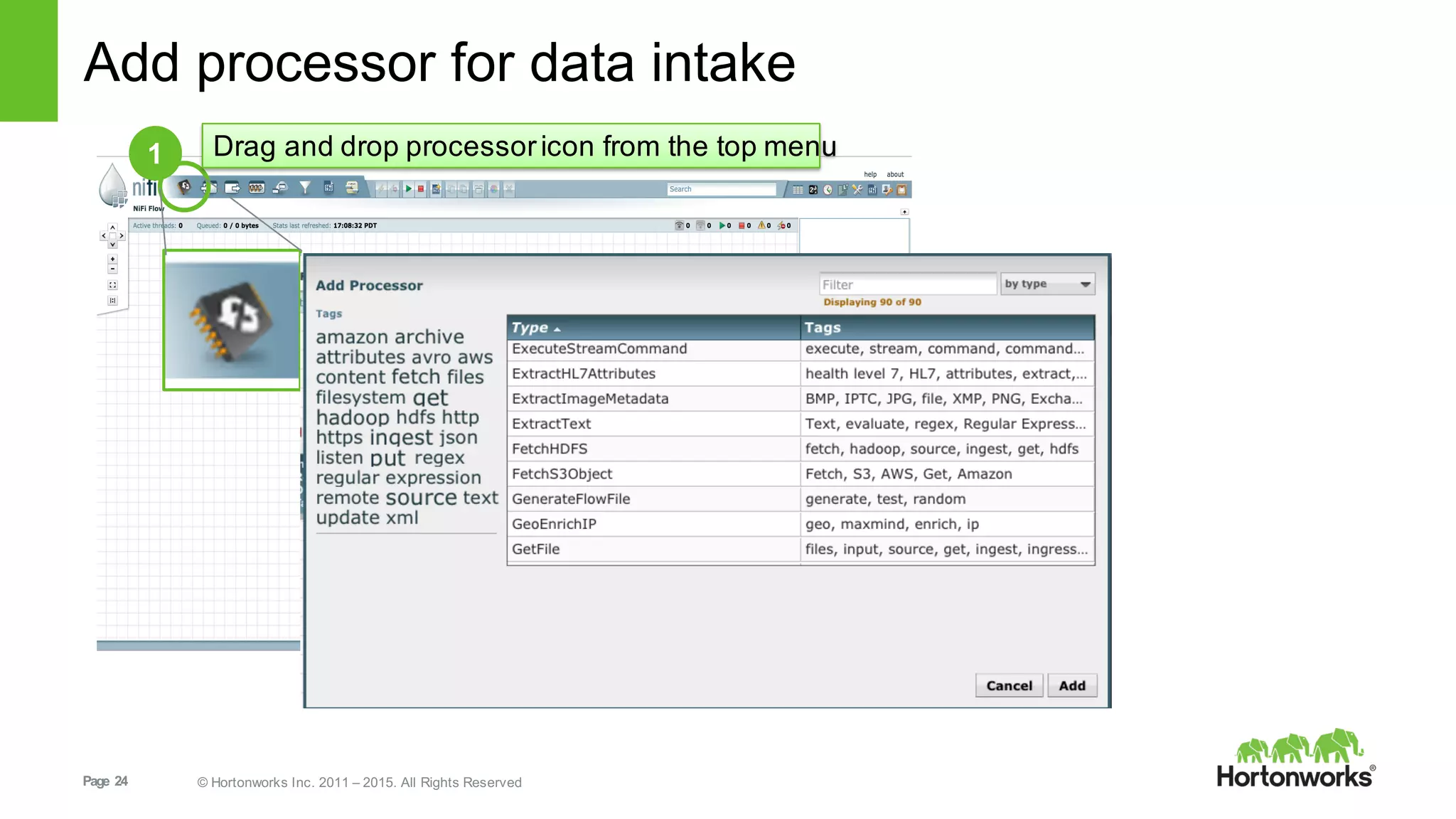

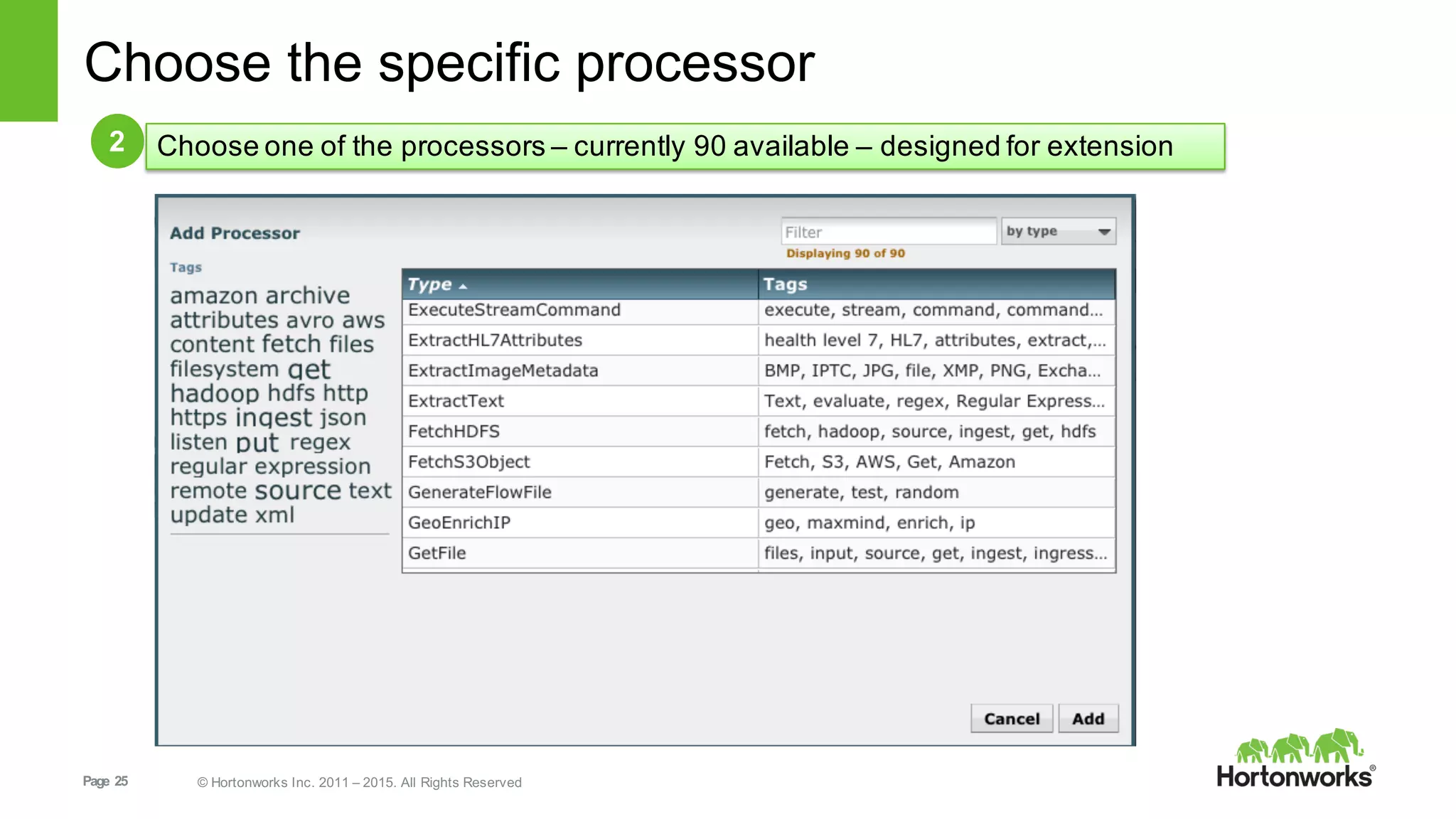

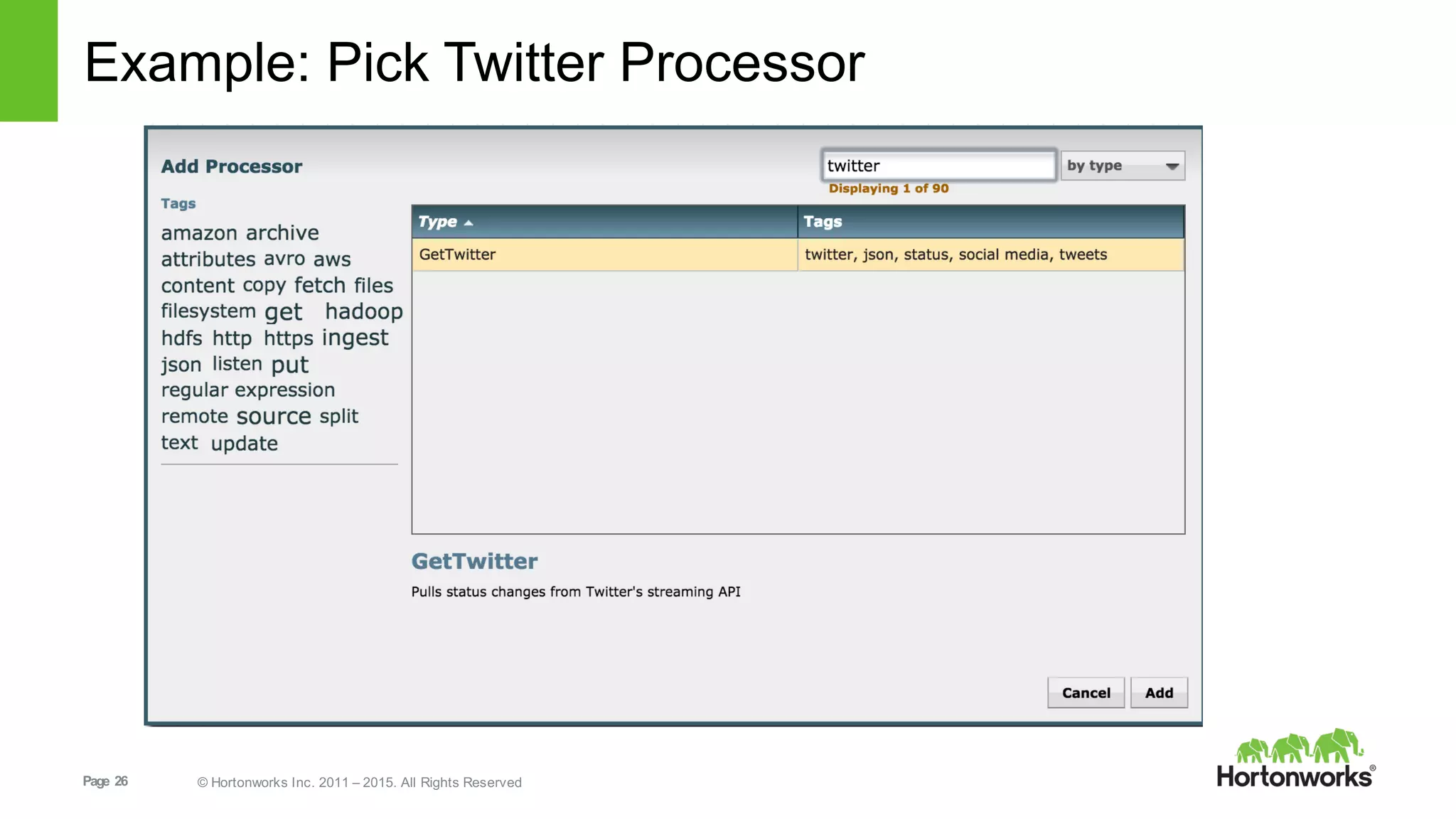

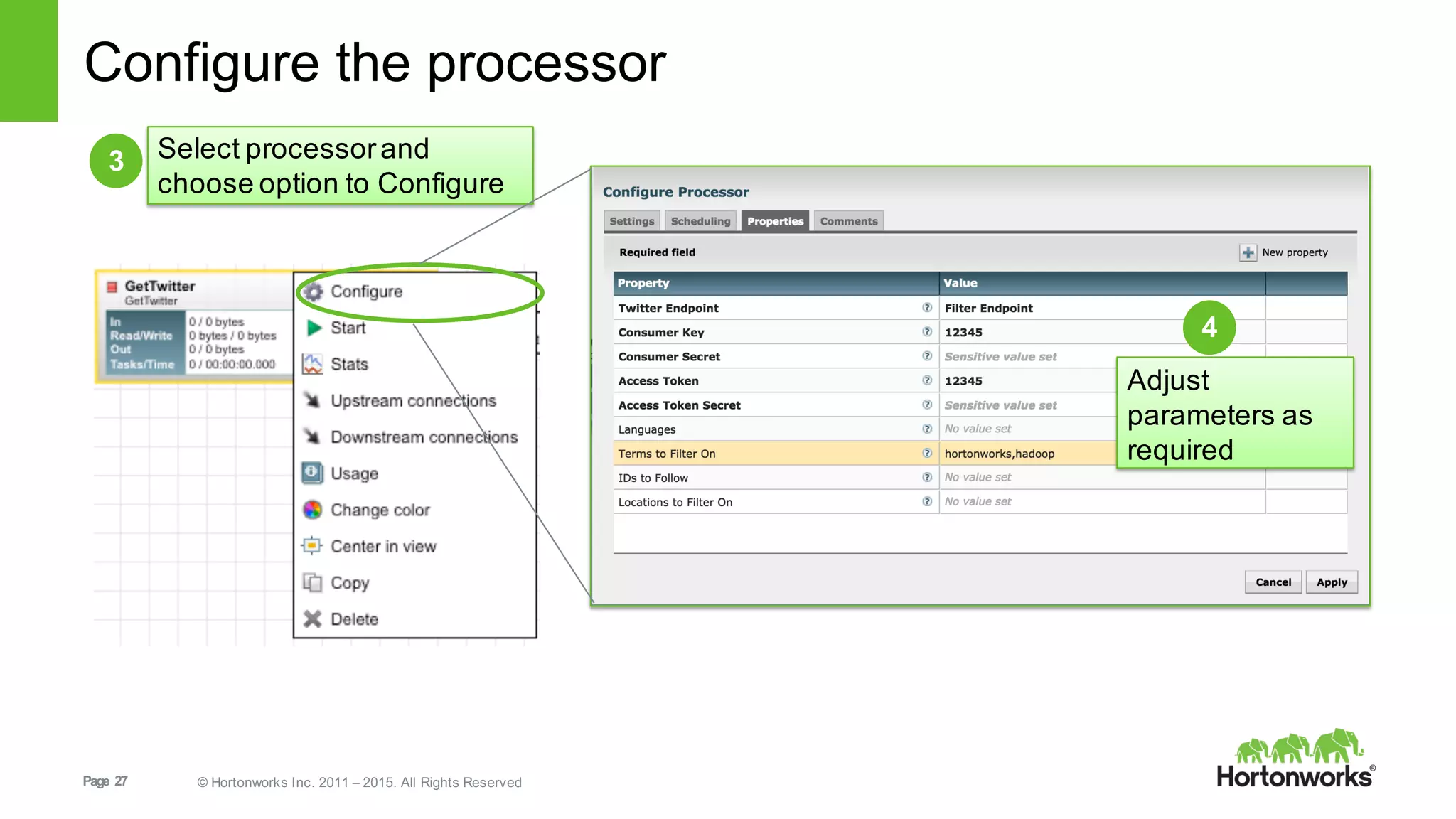

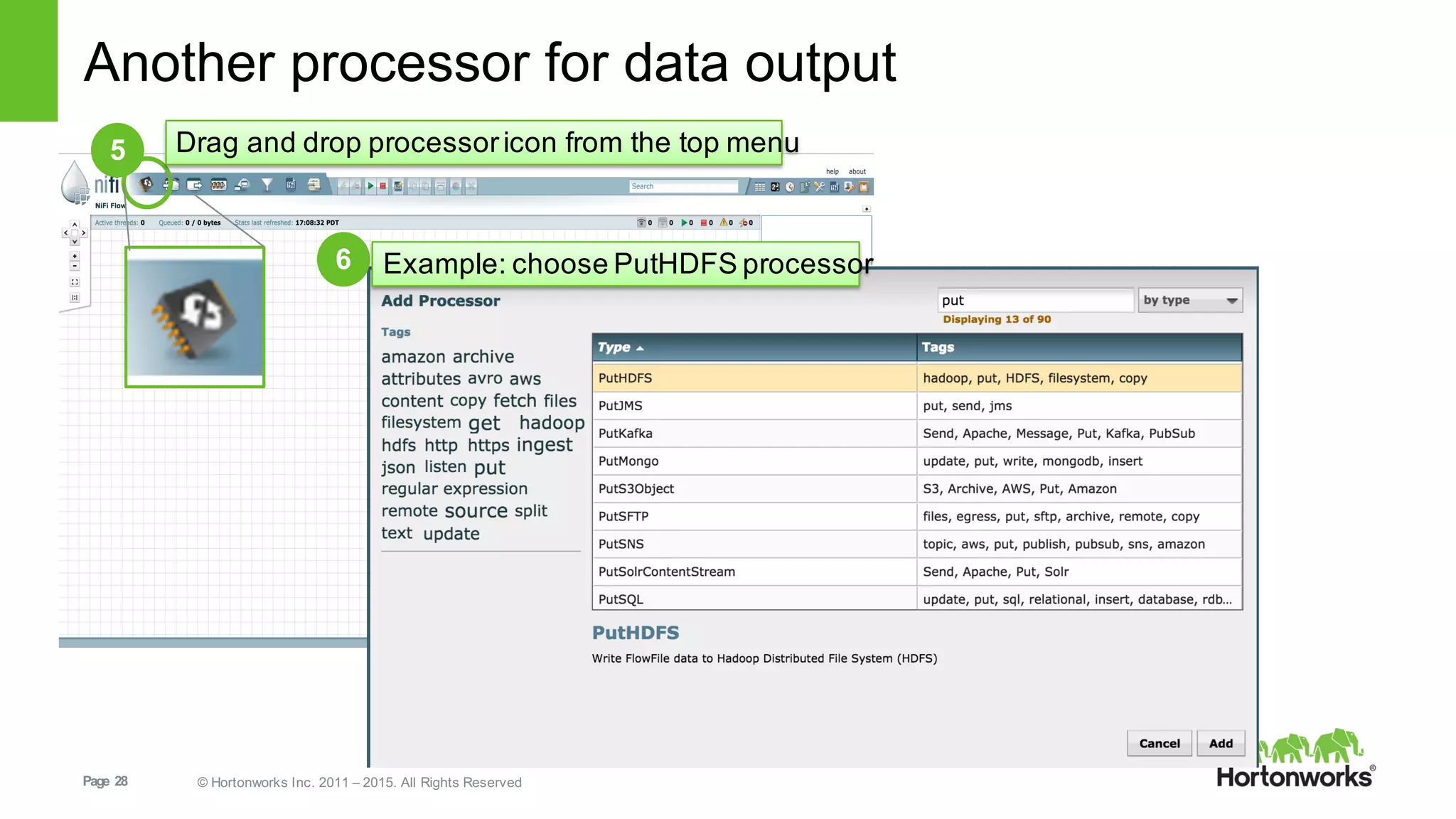

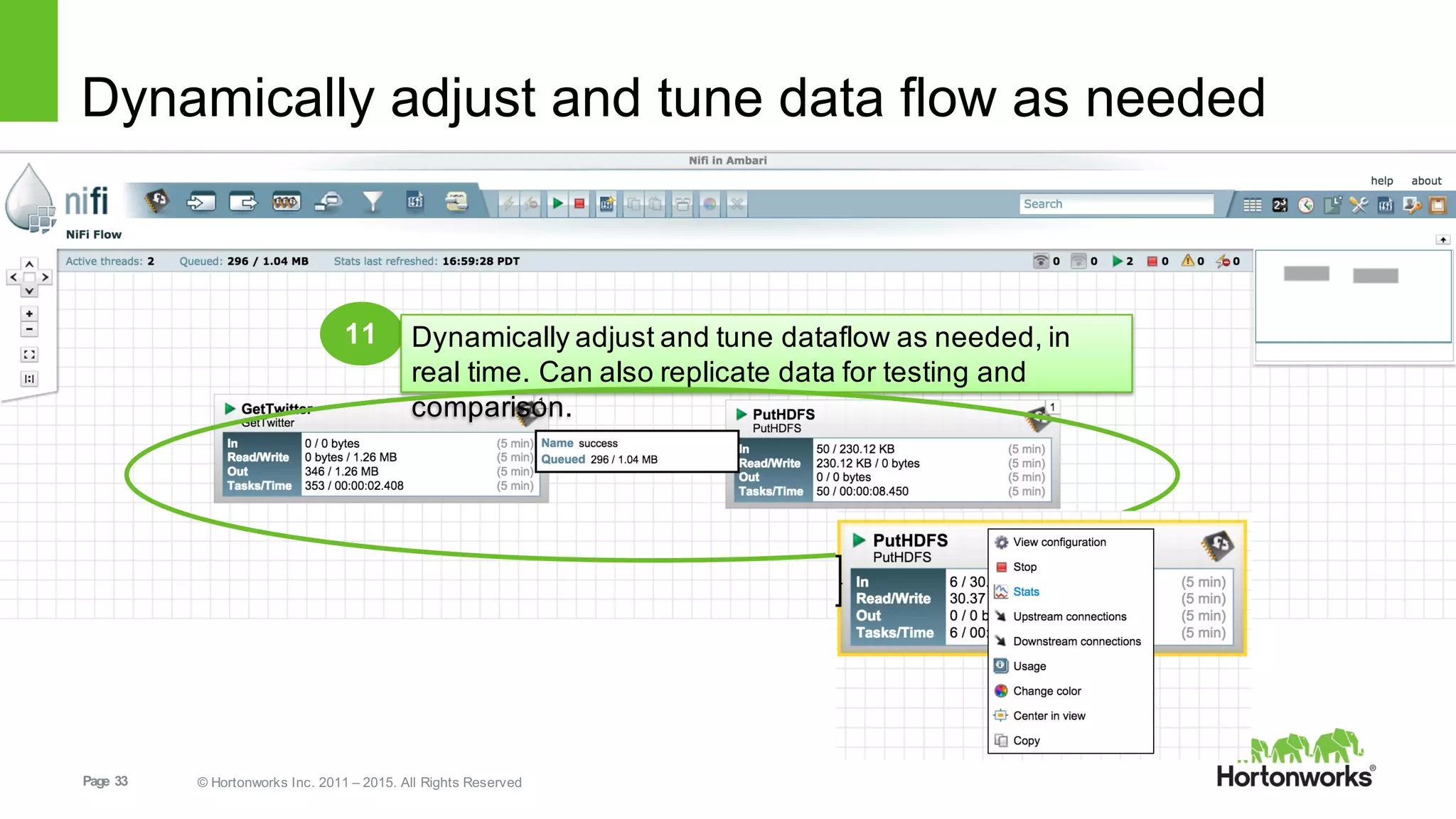

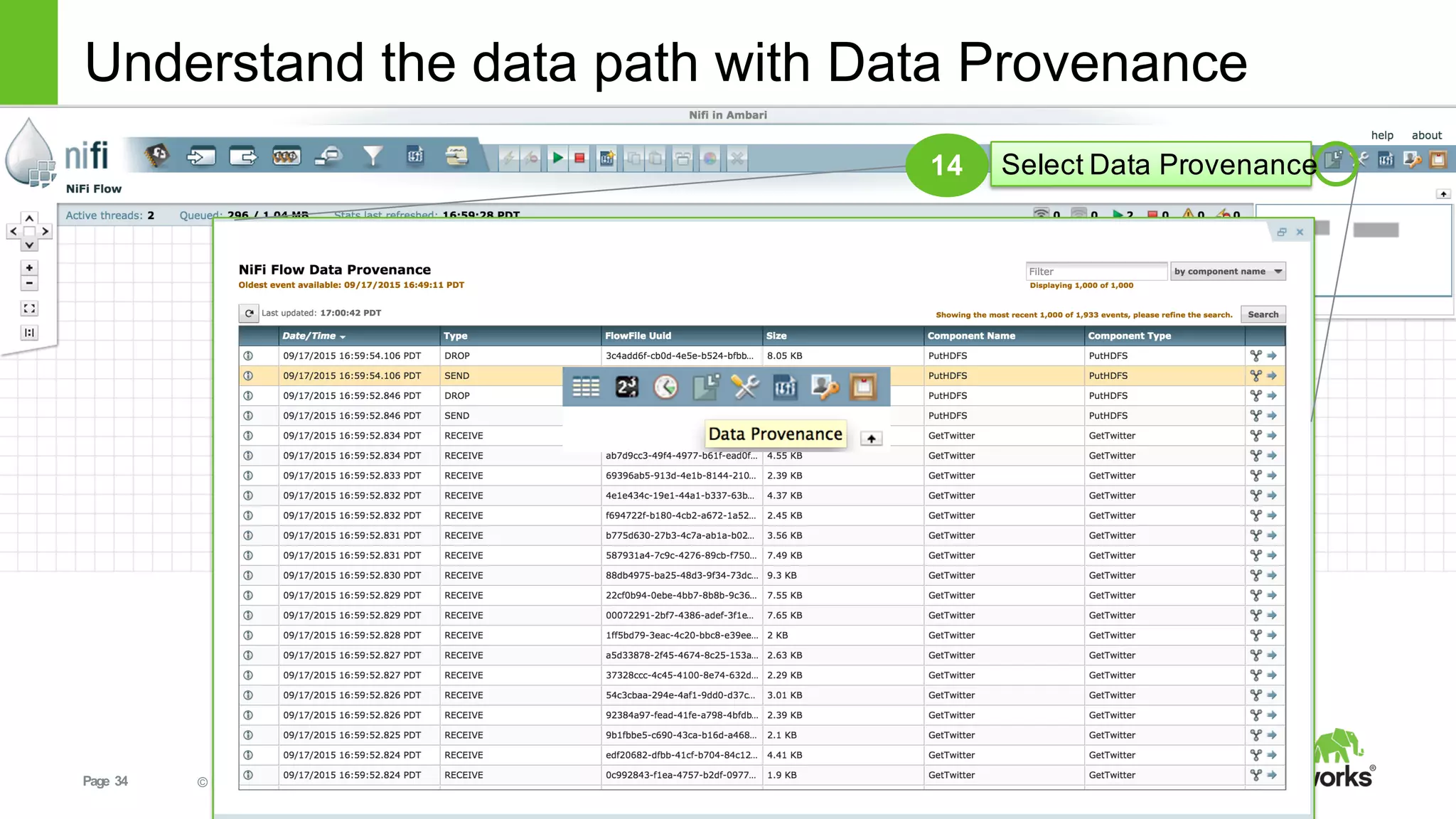

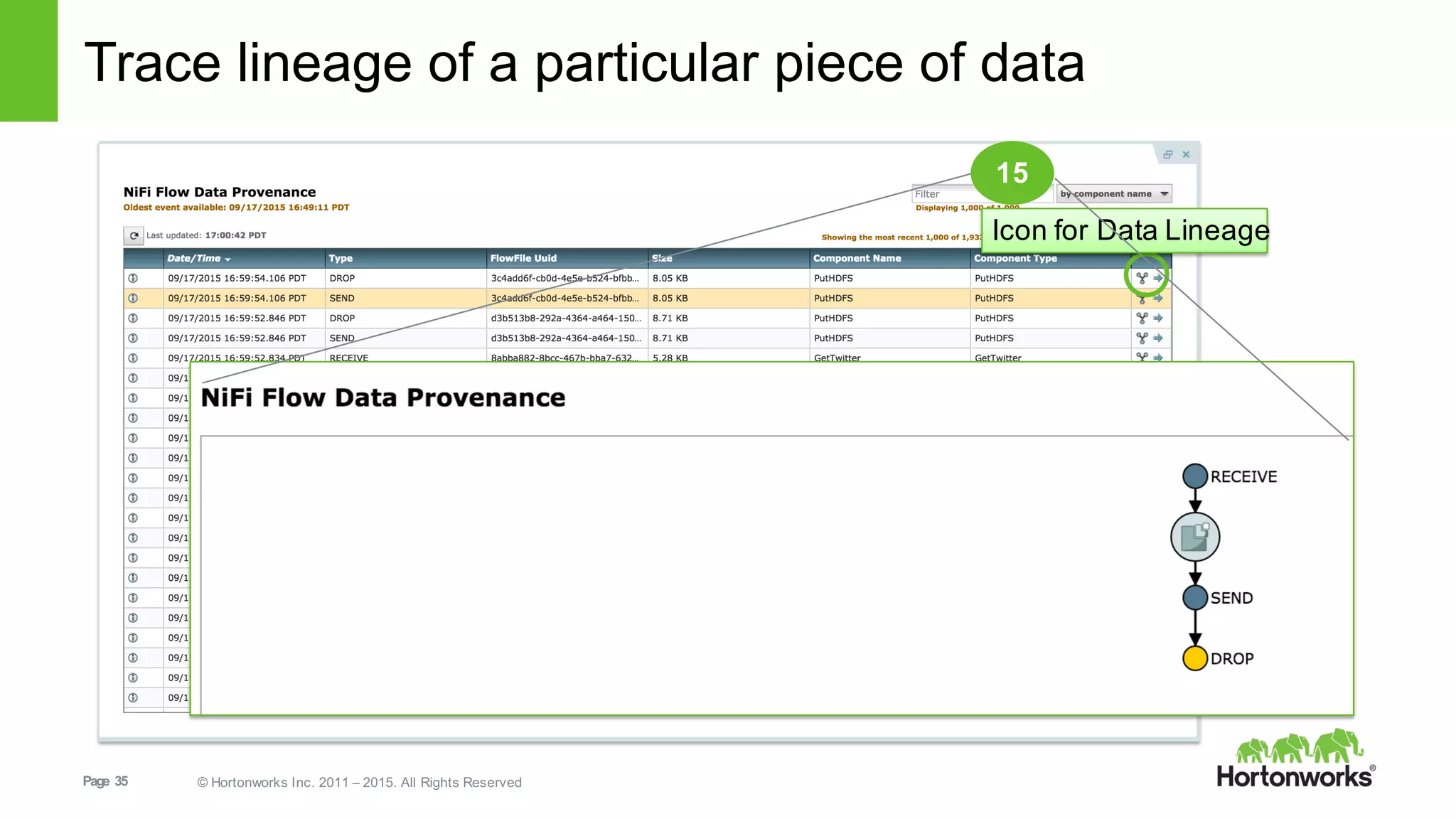

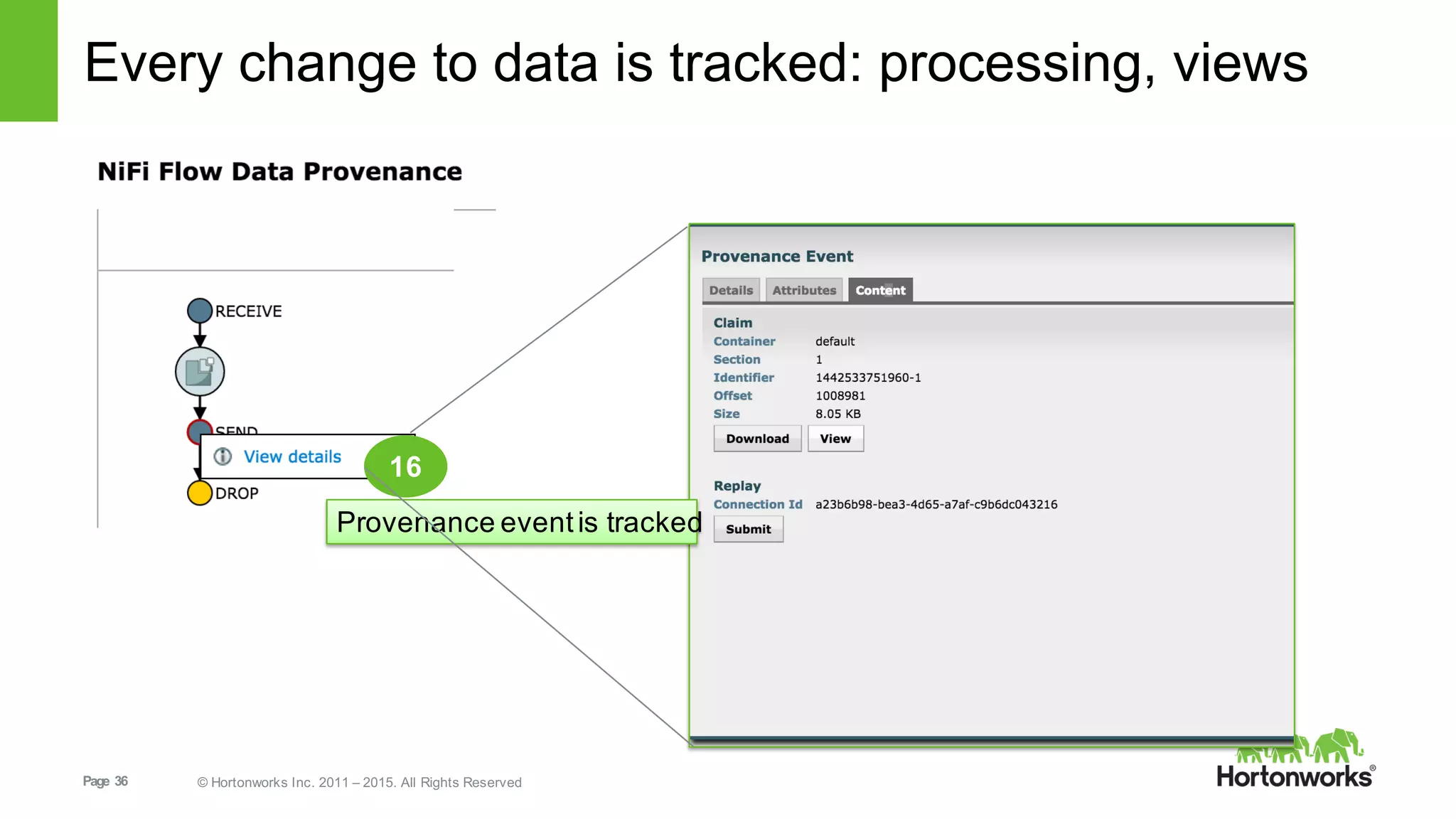

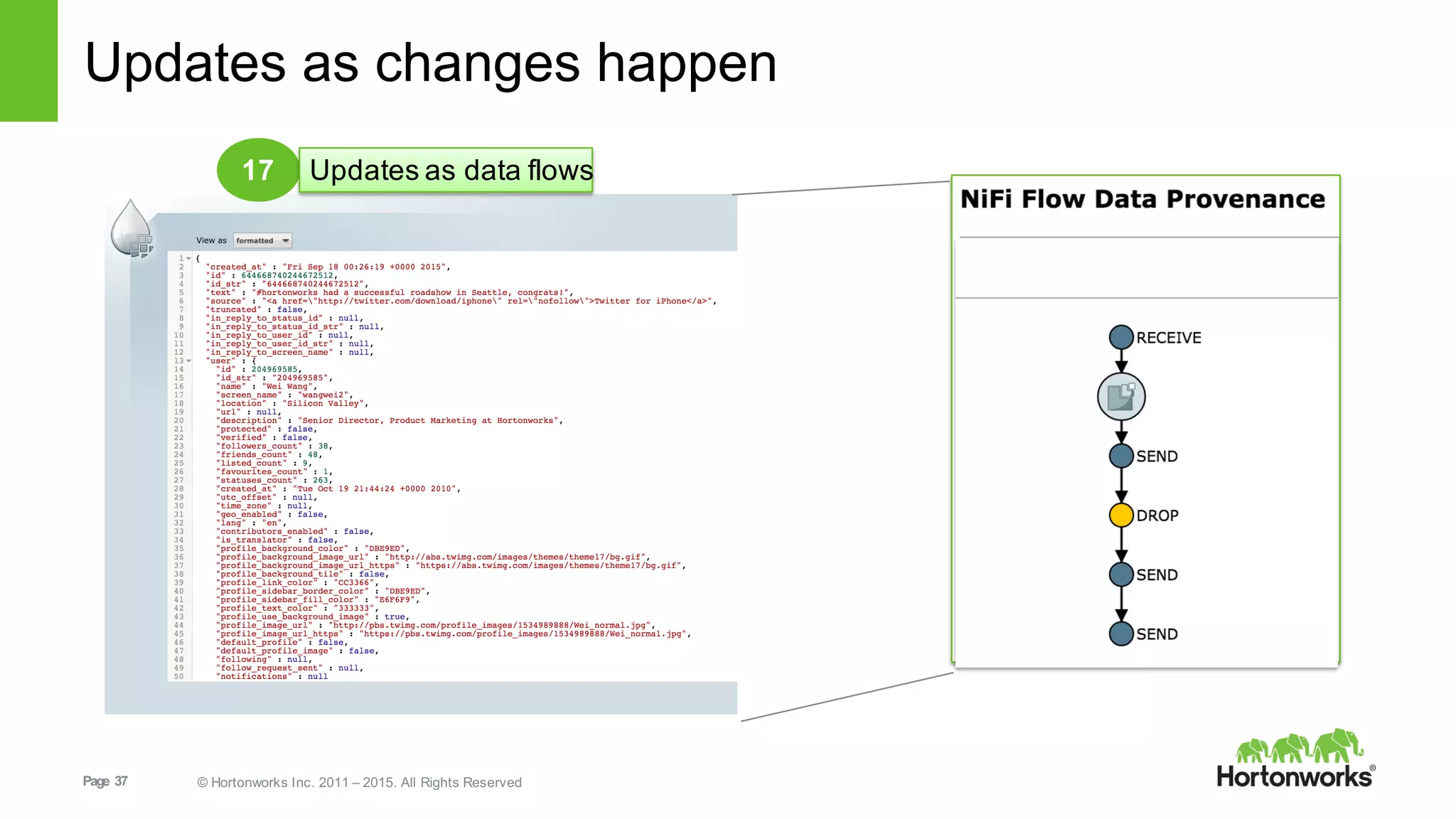

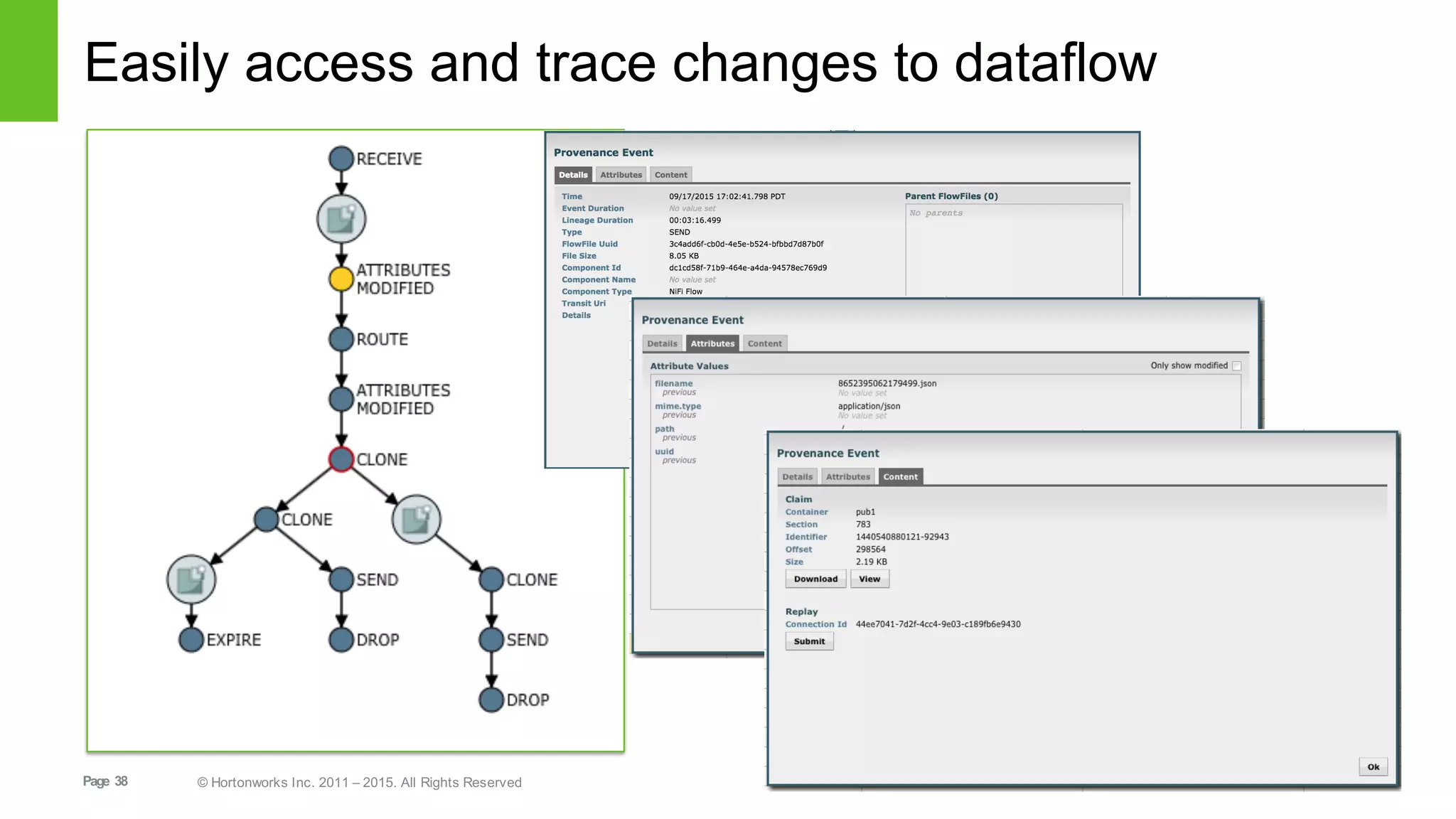

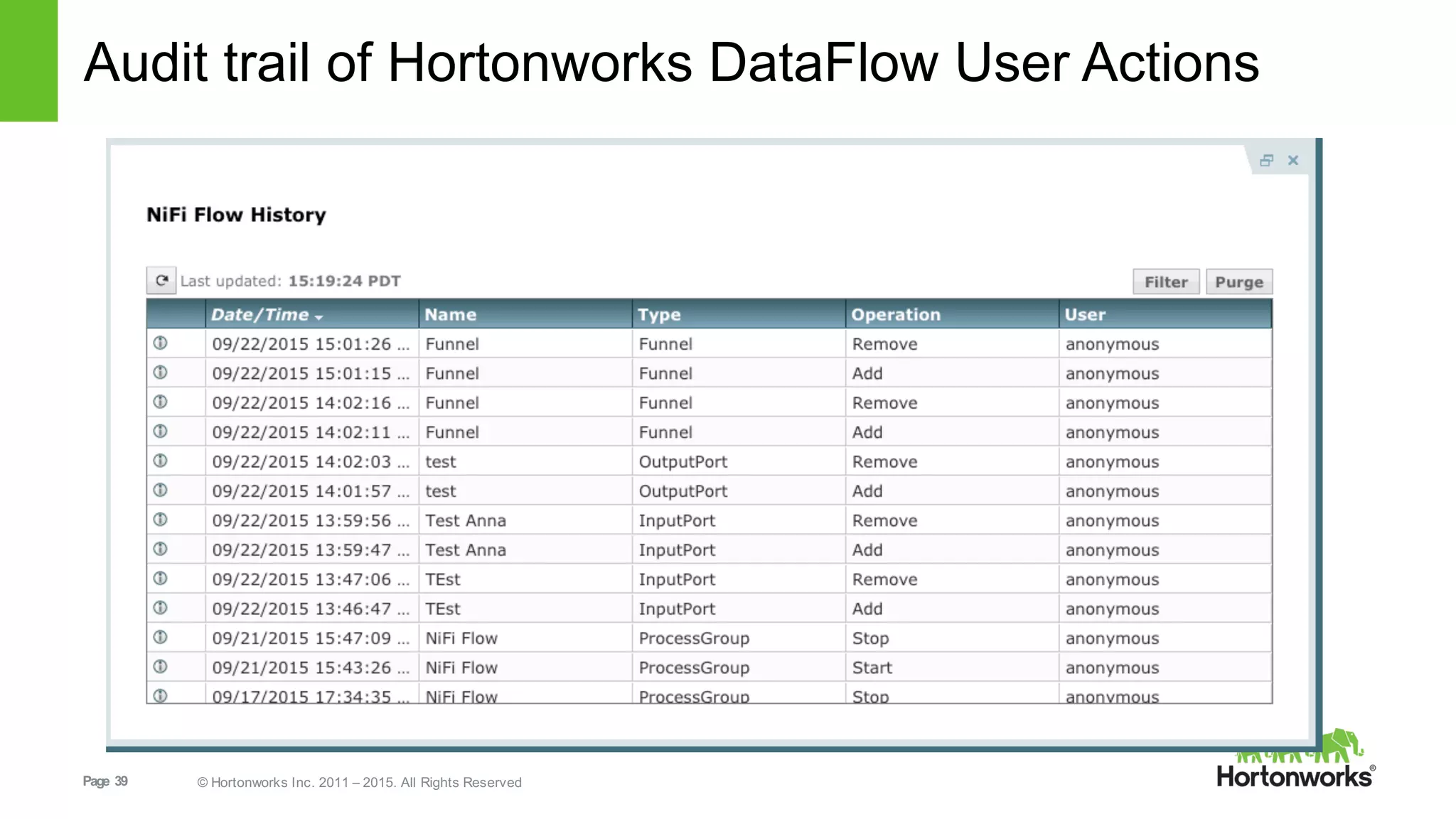

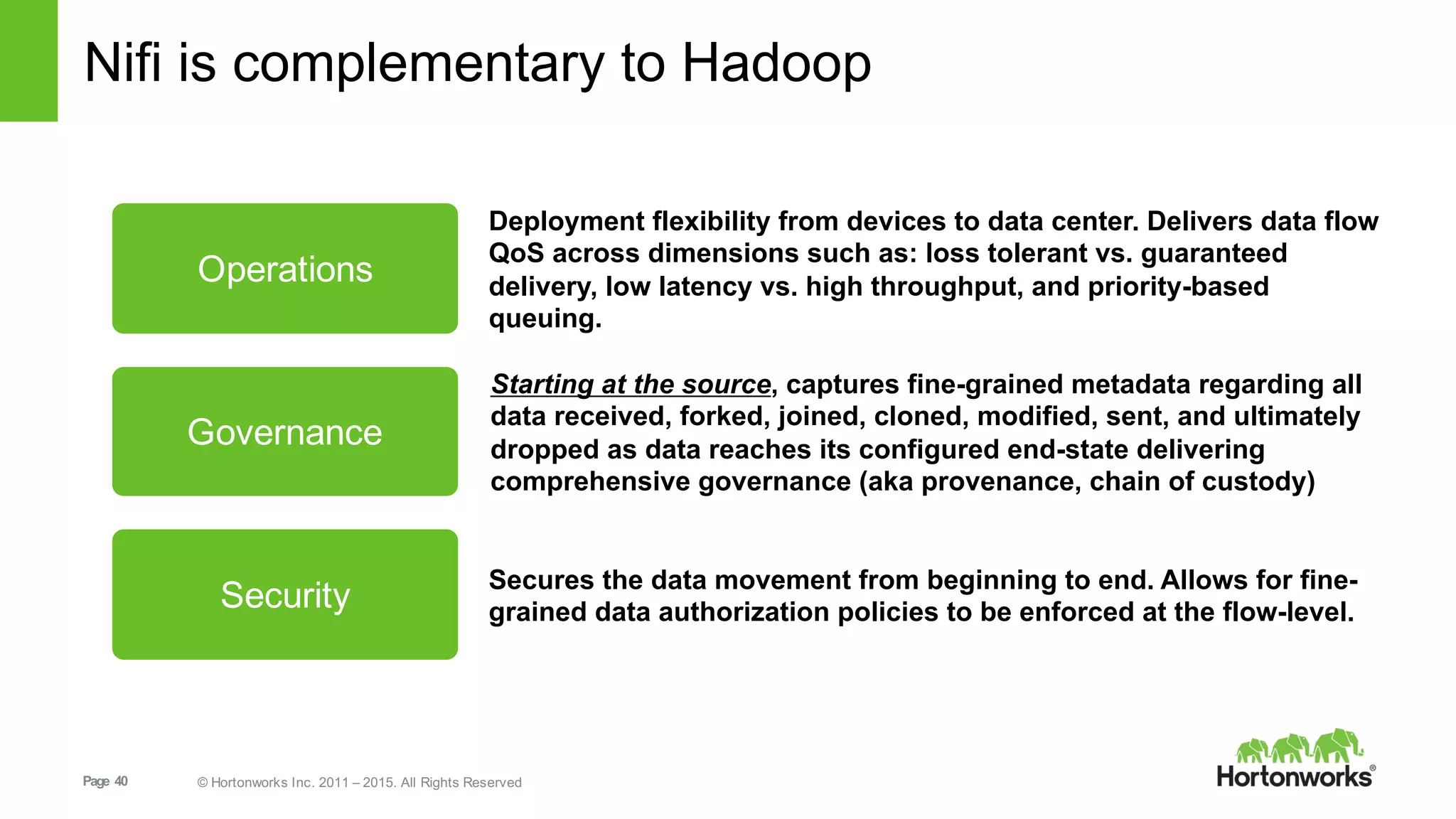

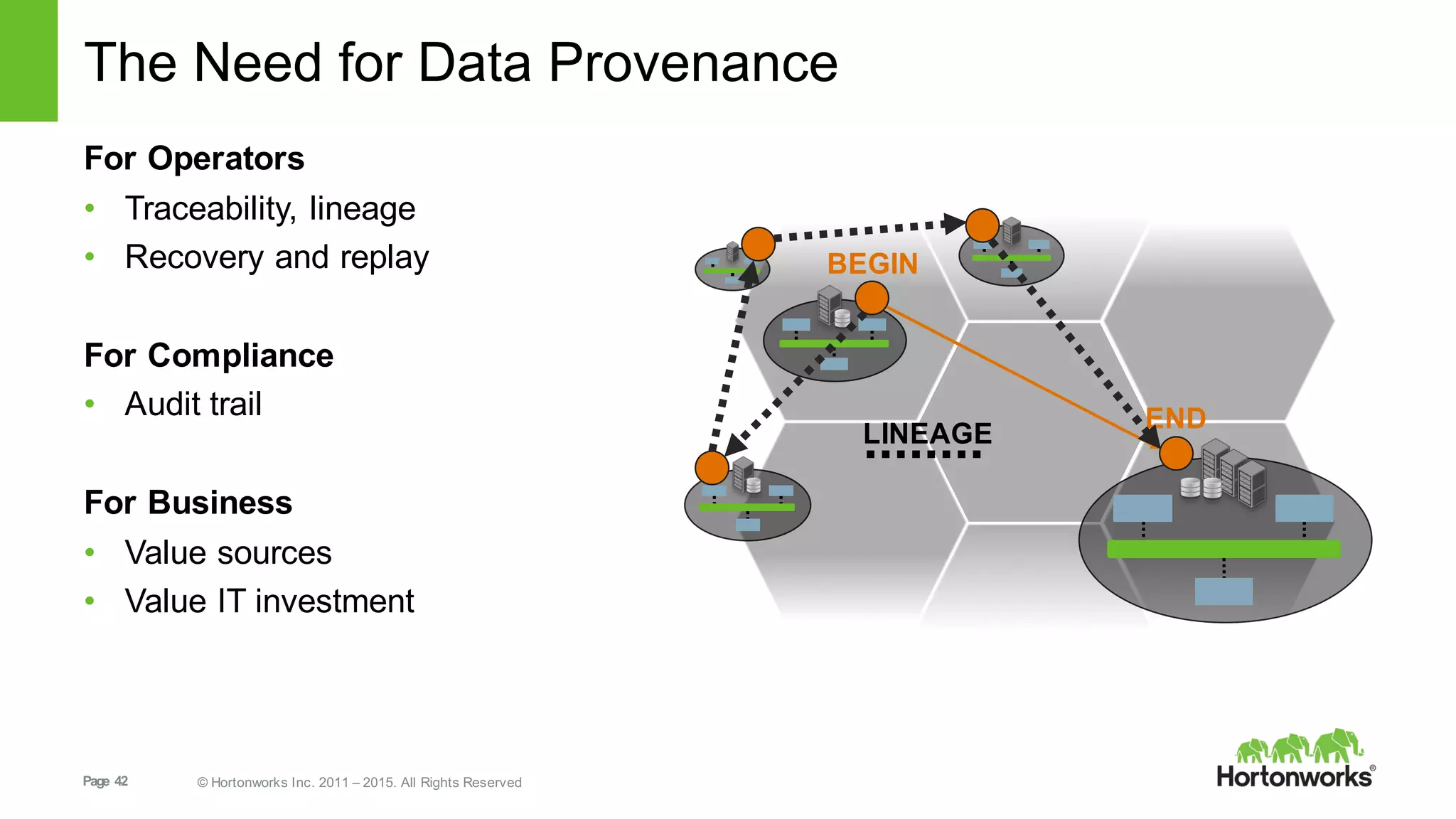

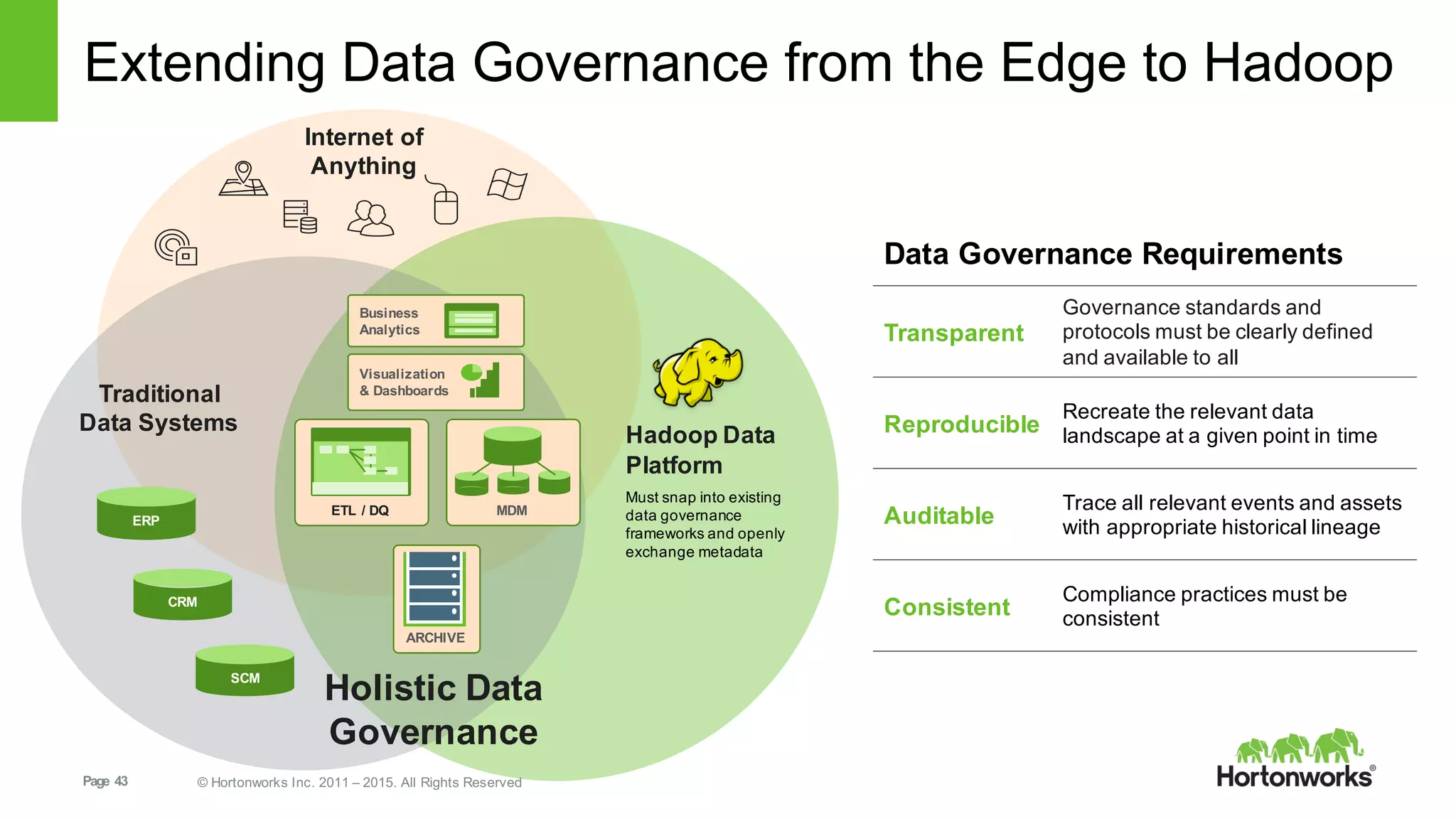

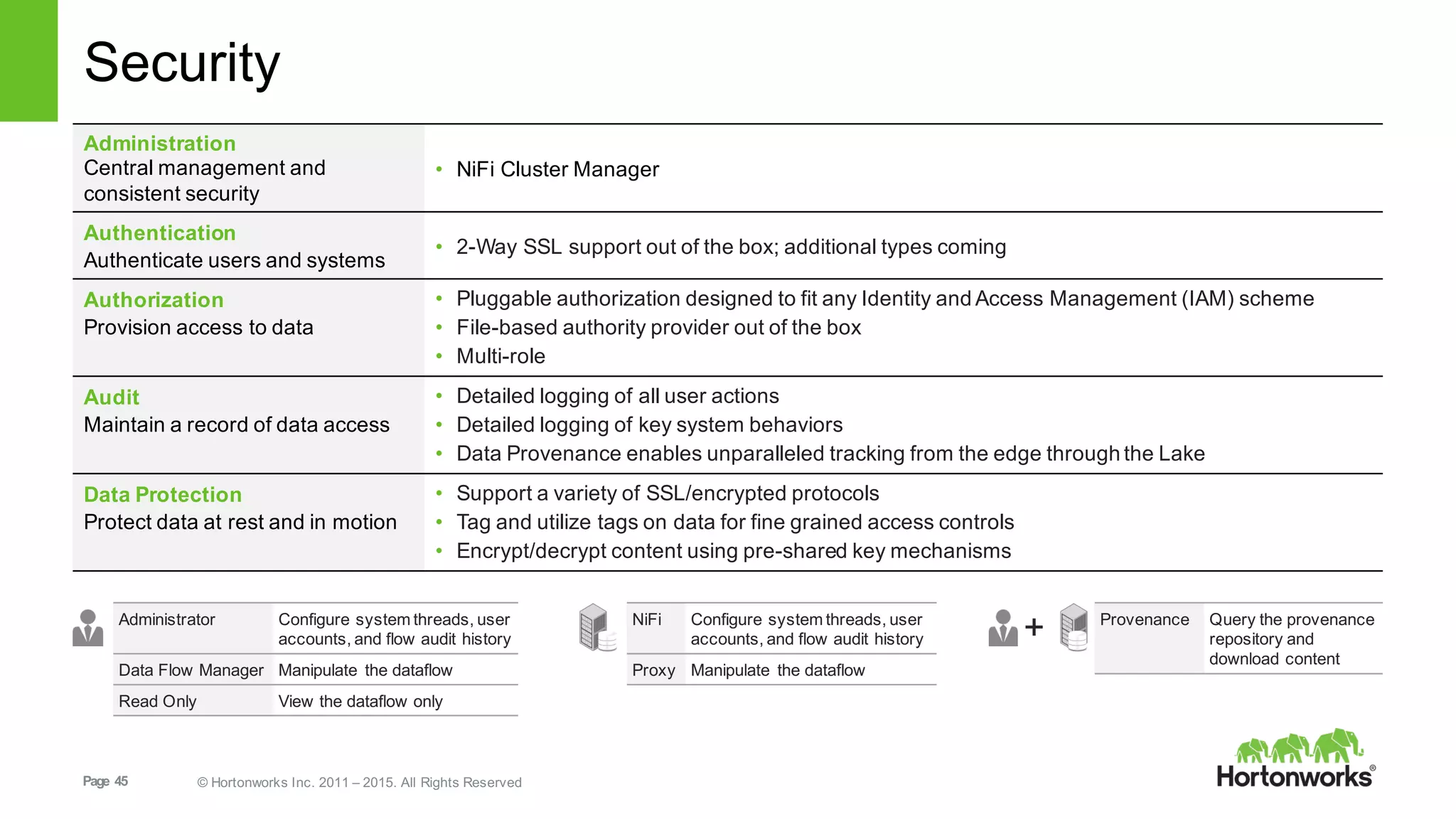

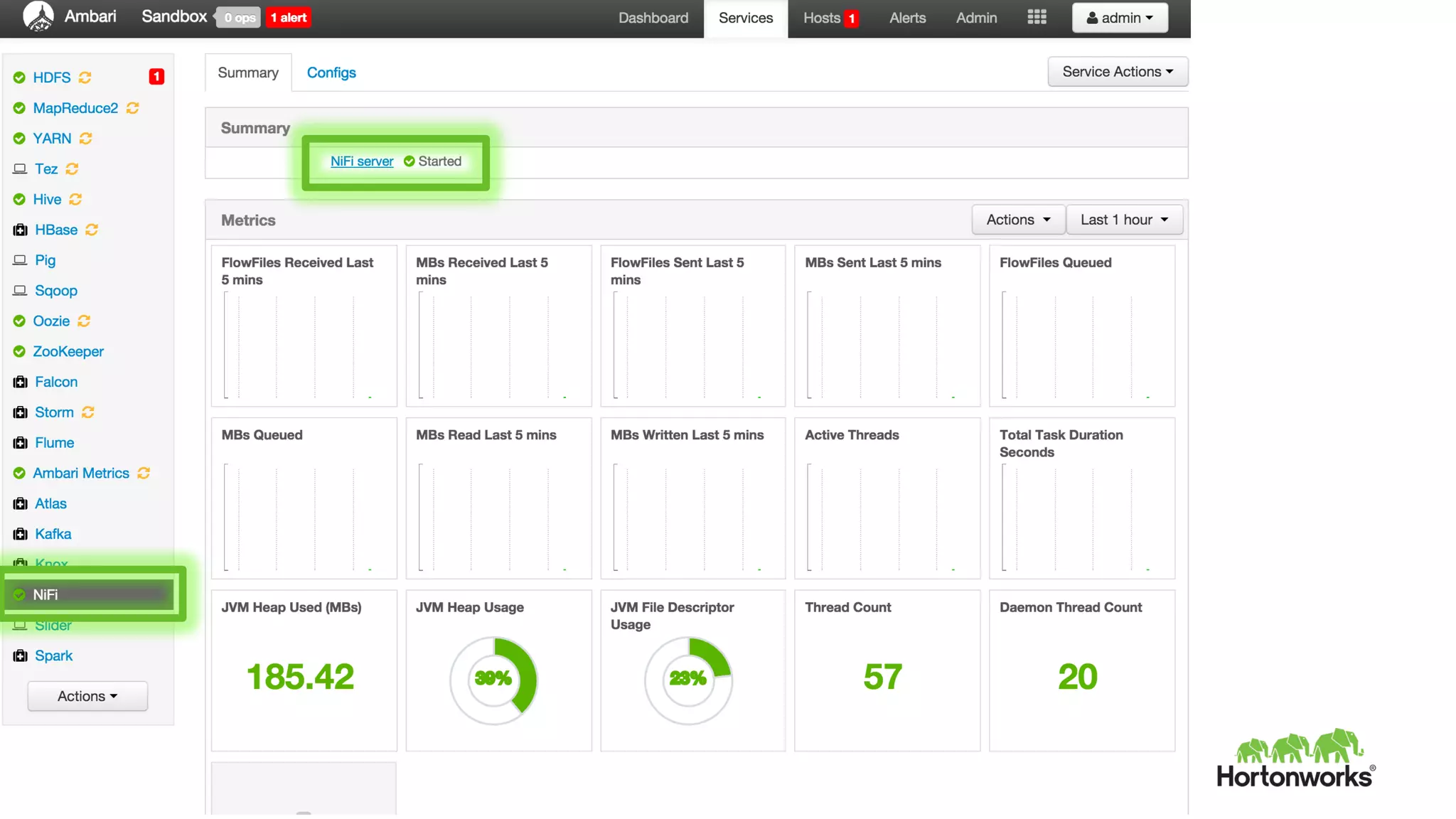

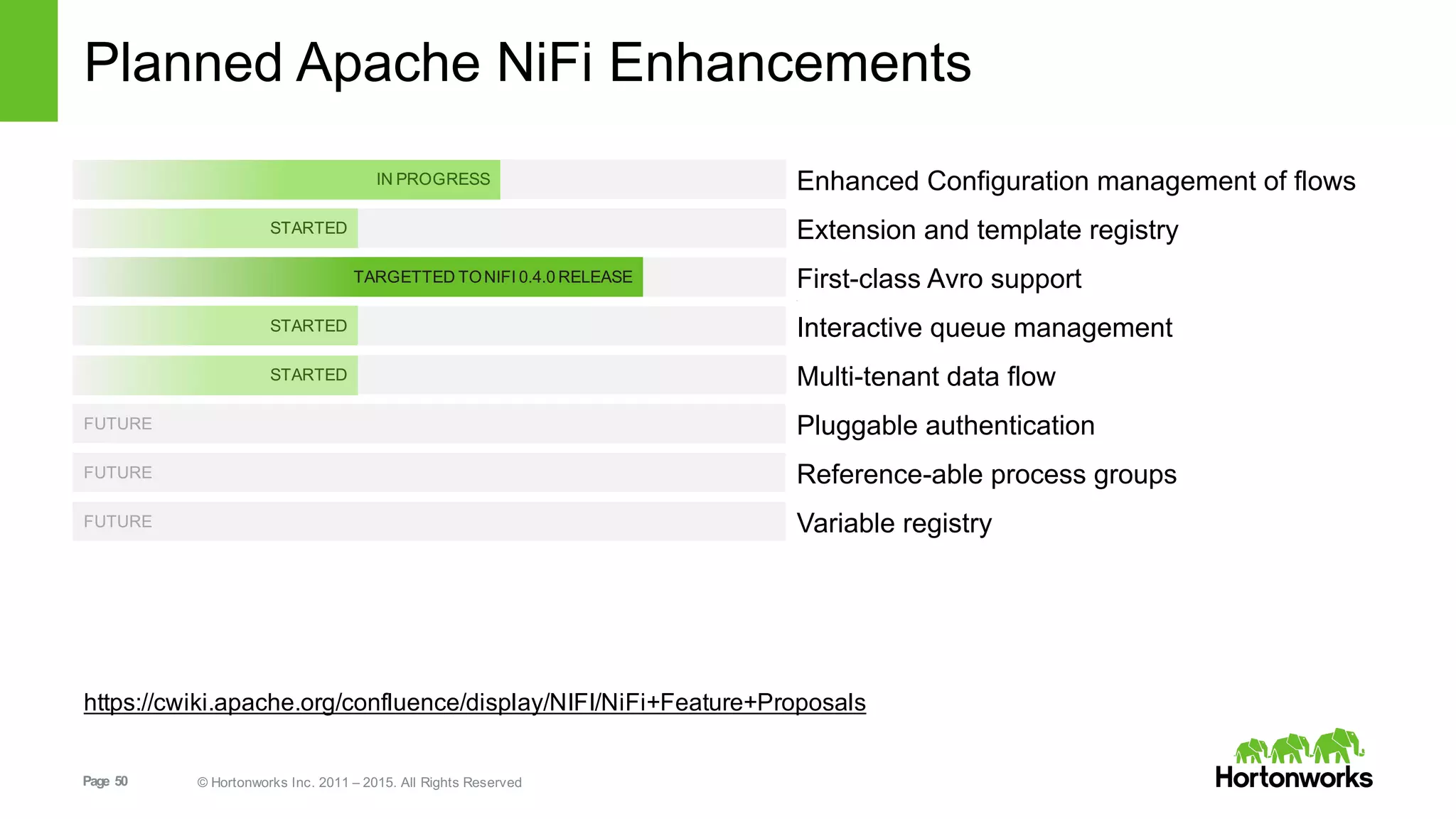

This document provides an overview of Hortonworks DataFlow, which is powered by Apache NiFi. It discusses how the growth of IoT data is outpacing our ability to consume it and how NiFi addresses the new requirements for collecting, securing, and analyzing data in motion. It highlights key NiFi features such as guaranteed delivery, data provenance, and the ability to securely manage bidirectional data flows in real time. It also summarizes common use cases, including predictive analytics, compliance, and IoT optimization.