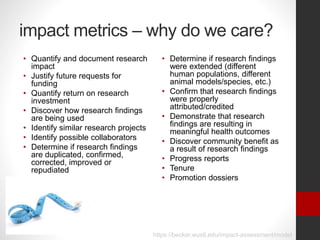

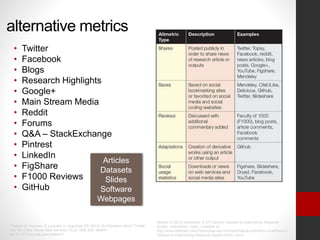

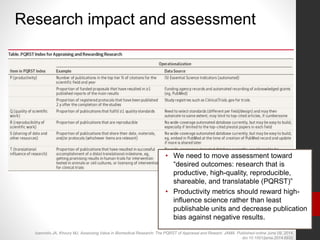

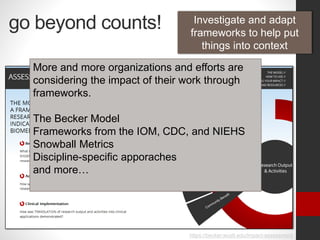

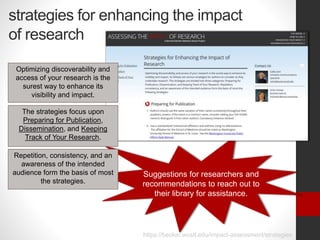

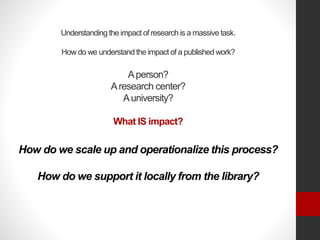

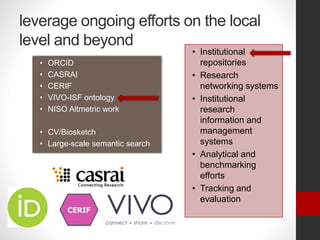

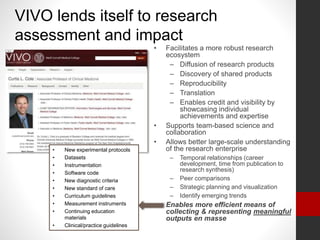

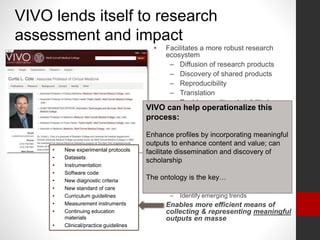

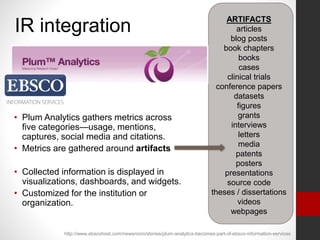

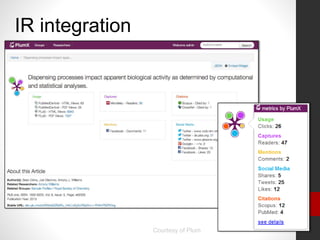

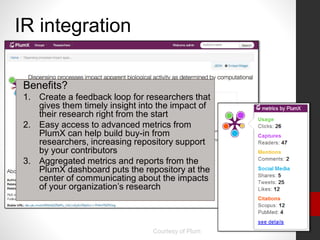

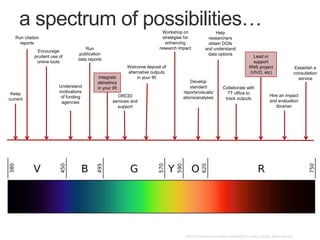

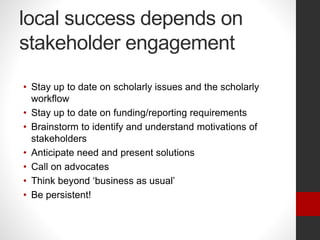

The document discusses the role of libraries in assessing and reporting research impact, emphasizing the importance of metrics and frameworks for quantifying that impact and facilitating collaboration. It highlights alternative metrics, the need for strategic approaches to enhance research visibility, and the integration of tools such as Plum Analytics for monitoring research outputs. The author calls for proactive engagement with stakeholders to improve research assessment practices at both local and institutional levels.