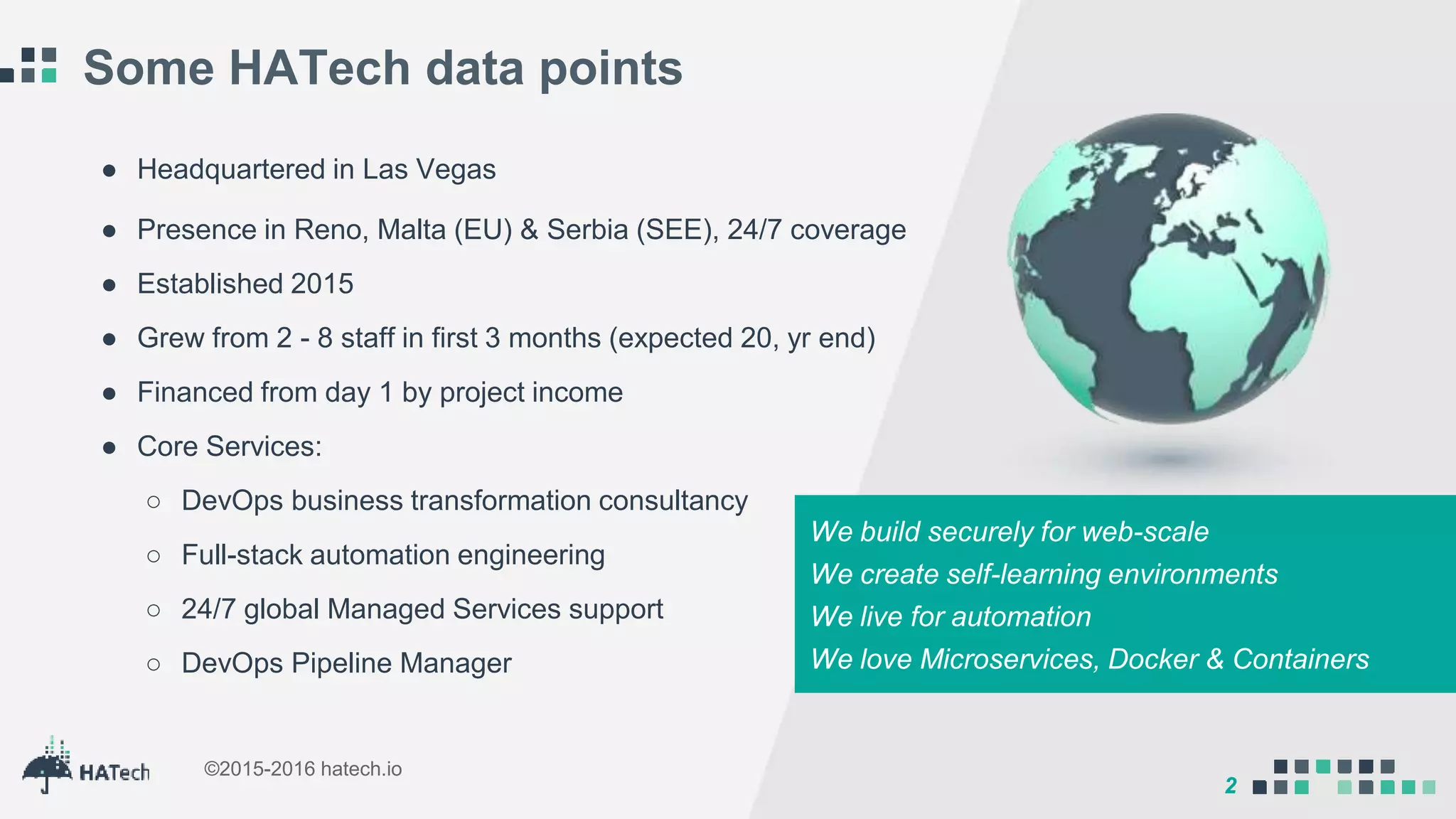

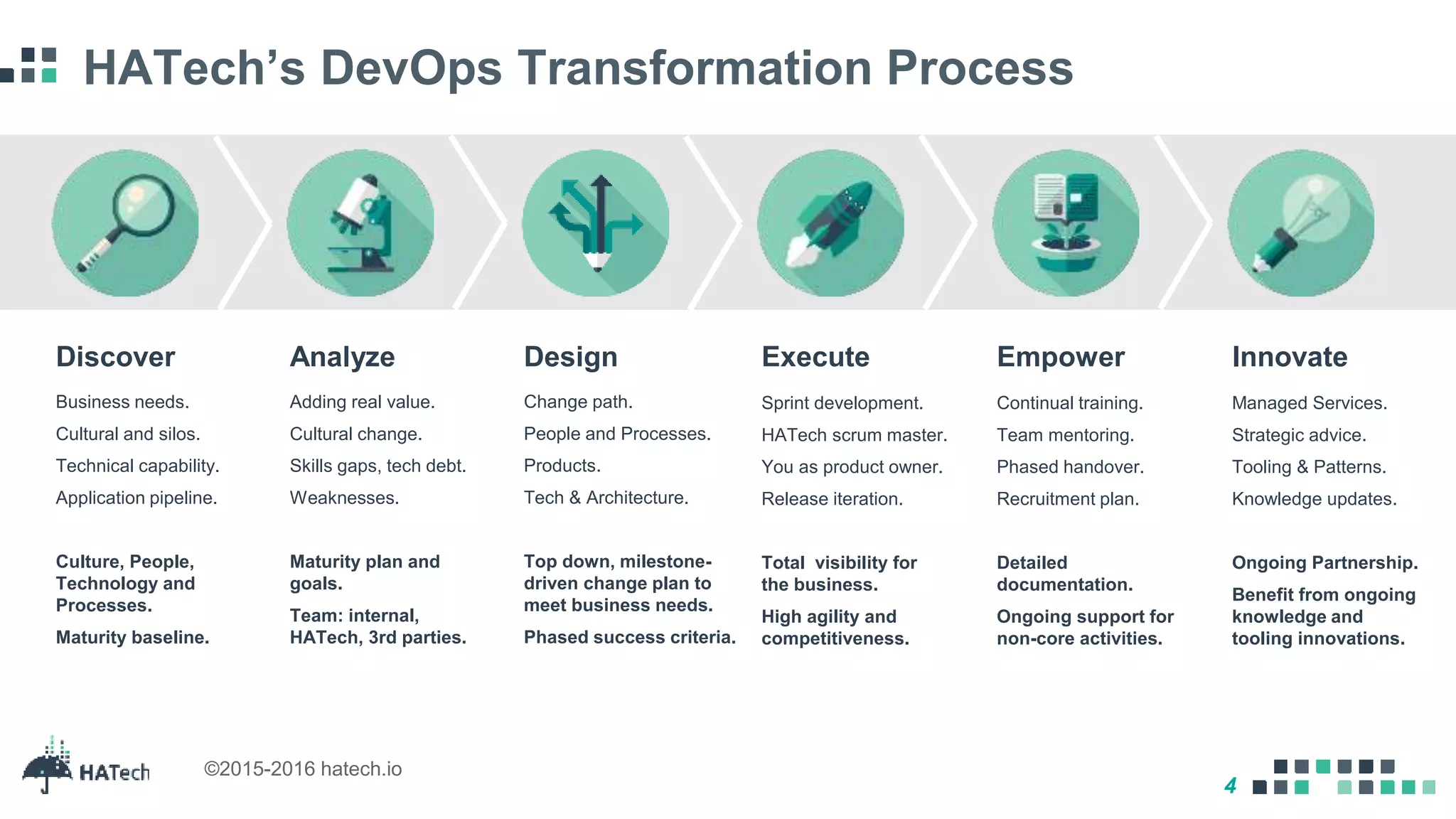

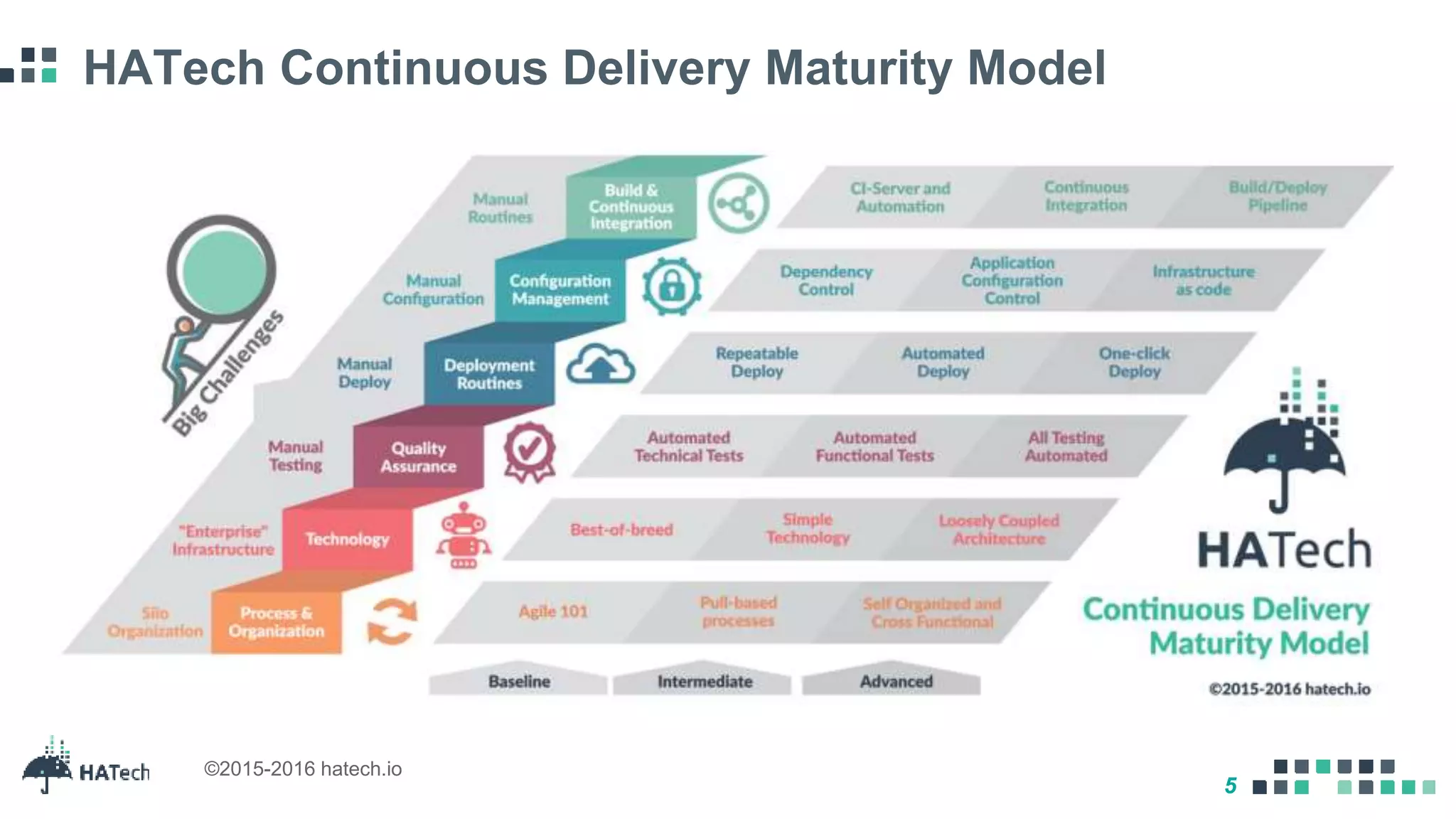

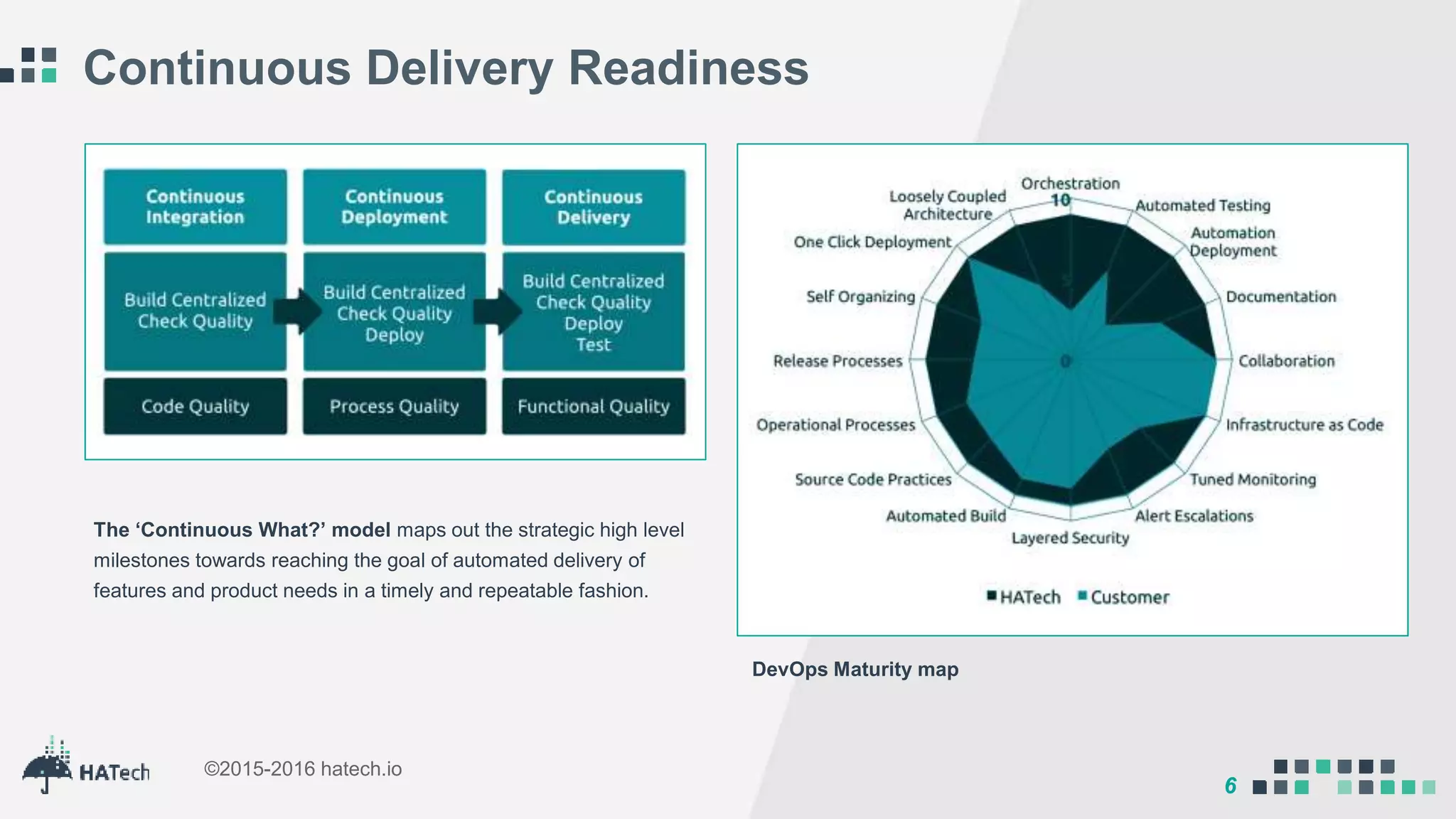

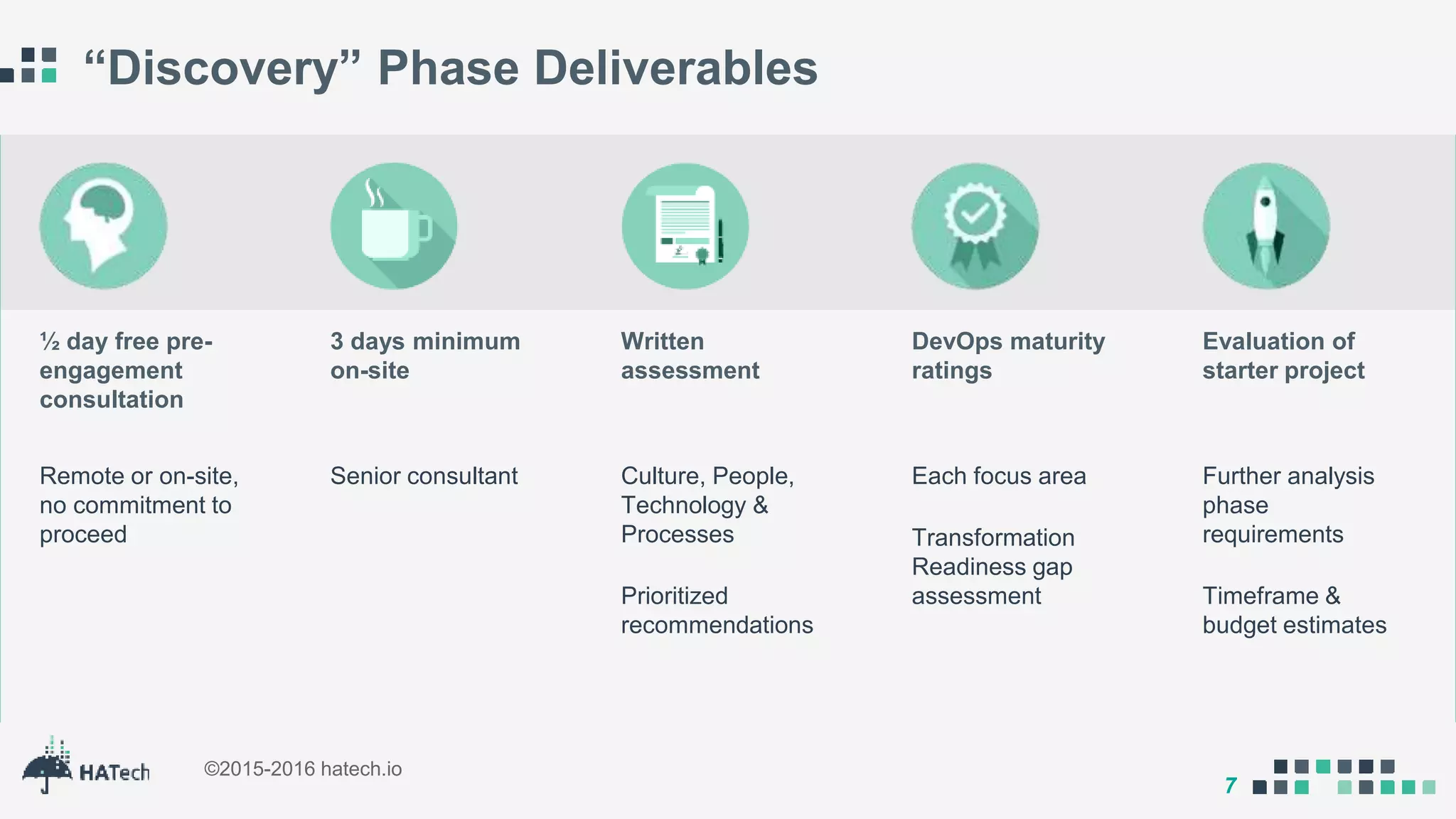

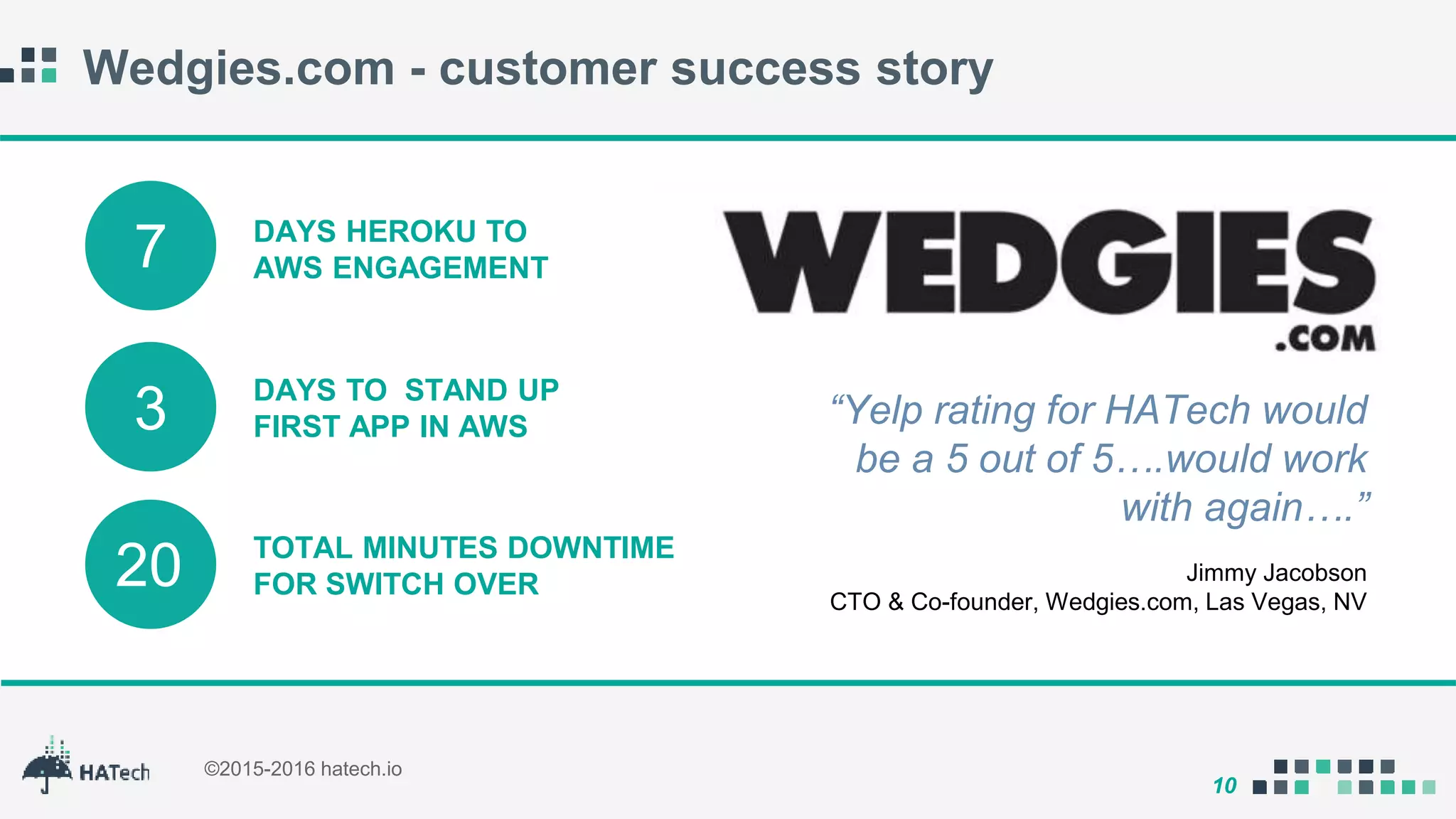

Hatech, founded in 2015, specializes in DevOps transformation. Its services include full-stack automation engineering and managed services intended to improve business agility and support continuous delivery. The company is headquartered in Las Vegas, also operates from several other locations, and focuses on automating hybrid pipelines to meet client needs. According to Hatech's customer success stories, clients have seen improvements in operational efficiency and shorter project delivery times after working with the company.