Harnessing Court Data using NLP and Spoken Language Technology

•

0 likes•98 views

Poster presenting the "Harnessing court data using NLP and spoken language technology". https://dinel.org.uk/research/projects/harnessingNLP4court/

Report

Share

Report

Share

Download to read offline

Recommended

UNDERSTAND SHORTTEXTS BY HARVESTING & ANALYZING SEMANTIKNOWLEDGE

The document proposes a system for understanding short texts by exploiting semantic knowledge from external knowledge bases and web corpora. It discusses challenges in analyzing short texts using traditional NLP methods due to their ambiguous and non-standard language. The system addresses this by harvesting semantic knowledge and applying knowledge-intensive approaches to tasks like segmentation, POS tagging and concept labeling to better understand semantics. An evaluation shows these knowledge-based methods are effective and efficient for short text understanding.

Answer extraction and passage retrieval for

—Question Answering systems (QASs) do the task of

retrieving text portions from a collection of documents that

contain the answer to the user’s questions. These QASs use a

variety of linguistic tools that be able to deal with small

fragments of text. Therefore, to retrieve the documents which

contains the answer from a large document collections, QASs

employ Information Retrieval (IR) techniques to minimize the

number of documents collections to a treatable amount of

relevant text. In this paper, we propose a model for passage

retrieval model that do this task with a better performance for

the purpose of Arabic QASs. We first segment each the top five

ranked documents returned by the IR module into passages.

Then, we compute the similarity score between the user’s

question terms and each passage. The top five passages (with

high similarity score) are retrieved are retrieved. Finally,

Answer Extraction techniques are applied to extract the final

answer. Our method achieved an average for precision of

87.25%, Recall of 86.2% and F1-measure of 87%.

Submission_36

The document describes a prototype system that provides information about common abbreviations to users through text messaging on low-cost mobile phones without internet access. The system receives an acronym query in Roman script from the user, scrapes a brief definition from the English Wikipedia page, translates it to the user's native language, and sends the response via text message transliterated in Roman script. It aims to help semi-literate users who may lack English knowledge or technological skills access information about abbreviations they encounter.

A Novel Method for Encoding Data Firmness in VLSI Circuits

The number of tests, corresponding test data volume and test time increase with each new fabrication process technology.

Higher circuit densities in system-on-chip (SOC) designs have led to drastic increase in test data volume. Larger test data size demands

not only higher memory requirements, but also an increase in testing power and time. Test data compression method can be used to

solve this problem by reducing the test data volume without affecting the overall system performance. The original test data is

compressed and stored in the memory. Thus, the memory size is significantly reduced. The proposed approach combines the selective

encoding method and dictionary based encoding method that reduces test data volume and test application time for testing. The

experiment is done on combinational benchmark circuit that designed using Tanner tool and the encoding algorithm is implemented

using Model -Sim

Improved Count Suffix Trees for Natural Language Data

With more and more text data stored in databases, the problem of handling natural language query predicates becomes highly important. Closely related to query optimization for these predicates is the (sub)string estimation problem, i.e., estimating the selectivity of query terms before query execution based on small summary statistics. The Count Suffix Trees (CST) is the data structure commonly used to address this problem. While selectivity estimates based on CST tend to be good, they are computationally expensive to build and require a large amount of memory for storage. To fit CST into the data dictionary of database systems, they have to be pruned severely. Pruning techniques proposed so far are based on term (suffix) frequency or on the tree depth of nodes. In this paper, we propose new filtering and pruning techniques that reduce the building cost and the size of CST over natural-language texts. The core idea is to exploit the features of the natural language data over which the CST is built. In particular, we aim at regarding only those suffixes that are useful in a linguistic sense. We use (wellknown) IR techniques to identify them. The most important innovations are as follows: (a) We propose and use a new optimistic syllabification technique to filter out suffixes. (b) We introduce a new affix and prefix stripping procedure that is more aggressive than conventional stemming techniques, which are commonly used to reduce the size of indices. (c) We observe that misspellings and other language anomalies like foreign words incur an over-proportional growth of the CST. We apply state-of-the-art trigram techniques as well as a new syllable-based non-word detection mechanism to filter out such substrings. – Our evaluation with large English text corpora shows that our new mechanisms in combination decrease the size of a CST by up to 80%, already during construction, and at the same time increase the accuracy of selectivity estimates computed from the final CST by up to 70%.

Challenge of Image Retrieval, Brighton, 2000 1 ANVIL: a System for the Retrie...

ANVIL is a system designed for the retrieval of images annotated with short captions. It uses NLP techniques to extract dependency structures from captions and queries, and then applies a robust matching algorithm to recursively explore and compare them. There are currently two main interfaces to ANVIL: a list-based display and a 2D spatial layout that allows users to interact with and navigate between similar images. ANVIL was designed to operate as part of a publicly accessible, WWW-based image retrieval server. Consequently, product-level engineering standards were required. This paper examines both the research aspects of the system and also looks at some of the design and evaluation issues.

Literature Servey of DNS

This document summarizes and compares four research papers related to Domain Name System (DNS) traffic and performance. The original 1991 research paper analyzed DNS traffic captured from root name servers and found that DNS consumed 20 times more bandwidth than necessary due to errors. It classified seven types of DNS implementation errors. The later papers proposed techniques like enhanced round robin DNS load balancing, a CDN-based DNS architecture, and a federated name resolution framework to address DNS issues in different network architectures and improve performance.

Ieee transactions on 2018 TOPICS with Abstract in audio, speech, and language...

Ieee transactions on 2018 TOPICS with Abstract in audio, speech, and Language Processing for final years Students

Recommended

UNDERSTAND SHORTTEXTS BY HARVESTING & ANALYZING SEMANTIKNOWLEDGE

The document proposes a system for understanding short texts by exploiting semantic knowledge from external knowledge bases and web corpora. It discusses challenges in analyzing short texts using traditional NLP methods due to their ambiguous and non-standard language. The system addresses this by harvesting semantic knowledge and applying knowledge-intensive approaches to tasks like segmentation, POS tagging and concept labeling to better understand semantics. An evaluation shows these knowledge-based methods are effective and efficient for short text understanding.

Answer extraction and passage retrieval for

—Question Answering systems (QASs) do the task of

retrieving text portions from a collection of documents that

contain the answer to the user’s questions. These QASs use a

variety of linguistic tools that be able to deal with small

fragments of text. Therefore, to retrieve the documents which

contains the answer from a large document collections, QASs

employ Information Retrieval (IR) techniques to minimize the

number of documents collections to a treatable amount of

relevant text. In this paper, we propose a model for passage

retrieval model that do this task with a better performance for

the purpose of Arabic QASs. We first segment each the top five

ranked documents returned by the IR module into passages.

Then, we compute the similarity score between the user’s

question terms and each passage. The top five passages (with

high similarity score) are retrieved are retrieved. Finally,

Answer Extraction techniques are applied to extract the final

answer. Our method achieved an average for precision of

87.25%, Recall of 86.2% and F1-measure of 87%.

Submission_36

The document describes a prototype system that provides information about common abbreviations to users through text messaging on low-cost mobile phones without internet access. The system receives an acronym query in Roman script from the user, scrapes a brief definition from the English Wikipedia page, translates it to the user's native language, and sends the response via text message transliterated in Roman script. It aims to help semi-literate users who may lack English knowledge or technological skills access information about abbreviations they encounter.

A Novel Method for Encoding Data Firmness in VLSI Circuits

The number of tests, corresponding test data volume and test time increase with each new fabrication process technology.

Higher circuit densities in system-on-chip (SOC) designs have led to drastic increase in test data volume. Larger test data size demands

not only higher memory requirements, but also an increase in testing power and time. Test data compression method can be used to

solve this problem by reducing the test data volume without affecting the overall system performance. The original test data is

compressed and stored in the memory. Thus, the memory size is significantly reduced. The proposed approach combines the selective

encoding method and dictionary based encoding method that reduces test data volume and test application time for testing. The

experiment is done on combinational benchmark circuit that designed using Tanner tool and the encoding algorithm is implemented

using Model -Sim

Improved Count Suffix Trees for Natural Language Data

With more and more text data stored in databases, the problem of handling natural language query predicates becomes highly important. Closely related to query optimization for these predicates is the (sub)string estimation problem, i.e., estimating the selectivity of query terms before query execution based on small summary statistics. The Count Suffix Trees (CST) is the data structure commonly used to address this problem. While selectivity estimates based on CST tend to be good, they are computationally expensive to build and require a large amount of memory for storage. To fit CST into the data dictionary of database systems, they have to be pruned severely. Pruning techniques proposed so far are based on term (suffix) frequency or on the tree depth of nodes. In this paper, we propose new filtering and pruning techniques that reduce the building cost and the size of CST over natural-language texts. The core idea is to exploit the features of the natural language data over which the CST is built. In particular, we aim at regarding only those suffixes that are useful in a linguistic sense. We use (wellknown) IR techniques to identify them. The most important innovations are as follows: (a) We propose and use a new optimistic syllabification technique to filter out suffixes. (b) We introduce a new affix and prefix stripping procedure that is more aggressive than conventional stemming techniques, which are commonly used to reduce the size of indices. (c) We observe that misspellings and other language anomalies like foreign words incur an over-proportional growth of the CST. We apply state-of-the-art trigram techniques as well as a new syllable-based non-word detection mechanism to filter out such substrings. – Our evaluation with large English text corpora shows that our new mechanisms in combination decrease the size of a CST by up to 80%, already during construction, and at the same time increase the accuracy of selectivity estimates computed from the final CST by up to 70%.

Challenge of Image Retrieval, Brighton, 2000 1 ANVIL: a System for the Retrie...

ANVIL is a system designed for the retrieval of images annotated with short captions. It uses NLP techniques to extract dependency structures from captions and queries, and then applies a robust matching algorithm to recursively explore and compare them. There are currently two main interfaces to ANVIL: a list-based display and a 2D spatial layout that allows users to interact with and navigate between similar images. ANVIL was designed to operate as part of a publicly accessible, WWW-based image retrieval server. Consequently, product-level engineering standards were required. This paper examines both the research aspects of the system and also looks at some of the design and evaluation issues.

Literature Servey of DNS

This document summarizes and compares four research papers related to Domain Name System (DNS) traffic and performance. The original 1991 research paper analyzed DNS traffic captured from root name servers and found that DNS consumed 20 times more bandwidth than necessary due to errors. It classified seven types of DNS implementation errors. The later papers proposed techniques like enhanced round robin DNS load balancing, a CDN-based DNS architecture, and a federated name resolution framework to address DNS issues in different network architectures and improve performance.

Ieee transactions on 2018 TOPICS with Abstract in audio, speech, and language...

Ieee transactions on 2018 TOPICS with Abstract in audio, speech, and Language Processing for final years Students

Implementing a Caching Scheme for Media Streaming in a Proxy Server

In the past few years, websites have moved from being

static web pages into rich media applications that use audio,

images and videos heavily in their interaction with users. This

change has made a dramatic change in network traffics

nowadays. Organizations spend a lot of effort, time and money

to improve response time and design intermediary systems that

enhance overall user experience. Media traffic represents

about 69.9-88.8% of all traffic. Therefore, enhancing networks

to accommodate this large traffic is a major trend. Content

Distribution Networks (CDNs) are now largely deployed for a

faster delivery of media. Redundancy and caching are also

implemented to decrease response time.

In this project, we are implementing a caching scheme for

media streaming in a proxy server. Unlike CDNs, which

require huge infrastructure, our caching proxy server will be

as simple as a piece of software that is portable and can be

installed in small as well as large scales. It may be deployed in

a university network, company’s private network or on ISPs

servers. This caching scheme, specially tailored for media

streaming, will reduce traffic and enhance network efficiency

in general.

Index Terms – Proxy servers, Caching, Media streaming

team10.ppt.pptx

The document describes a voice activated text editing software project that uses speech recognition, speech synthesis, and machine learning based text summarization. The software allows users to create notes, import documents, and perform text functions using voice commands. It was created to reduce the time spent manually typing documents and to provide independent digital note-taking for visually impaired individuals. The system design and algorithms for extractive and abstractive text summarization are presented along with experimental results and comparisons to other systems.

ANALYTICS OF PATENT CASE RULINGS: EMPIRICAL EVALUATION OF MODELS FOR LEGAL RE...

ANALYTICS OF PATENT CASE RULINGS: EMPIRICAL EVALUATION OF MODELS FOR LEGAL RE...Kripa (कृपा) Rajshekhar

Recent progress in incorporating word order and semantics to the decades-old, tried-and-tested bag-of-words representation of text meaning has yielded promising results in computational text classification and analysis. This development, and the availability of a large number of legal rulings from the PTAB (Patent Trial and Appeal Board motivated us to revisit possibilities for practical, computational models of legal relevance -- starting with this narrow and approachable niche of jurisprudence. We present results from our analysis and experiments towards this goal using a corpus of approximately 8000 rulings from the PTAB. This work makes three important contributions towards the development of models for legal relevance semantics: (a) Using state-of-art Natural Language Processing (NLP) methods, we characterize the diversity and types of semantic relationships that are implicit in select judgements of legal relevance at the PTAB (b) We achieve new state-of-art results on practical information retrieval tasks using our customized semantic representations on this corpus (c) We outline promising avenues for future work in the area - including preliminary evidence from human-in-loop interaction, and new forms of text representation developed using input from over a hundred interviews with practitioners in the field. Using the PTAB data set for testing relevance in patent document retrieval, instead of traditional citations search, also shows a bigger gap between the needs of practitioners and the capabilities of current information retrieval and NLP technologies.Top Essay Writing Websites

The document discusses how media content can influence society. It notes that while media were originally used for education and information, entertainment has become a major role. Television in particular has had a huge influence since being introduced. The document discusses how media portrayals can shape perceptions and spread misinformation, citing examples of how television influences behavior in young people and how some news coverage has contributed to growing Islamophobia.

A NOVEL APPROACH OF CLASSIFICATION TECHNIQUES FOR CLIR

Recent and continuing advances in online information systems are creating many opportunities

and also new problems in information retrieval. Gathering the information in different natural

language is the most difficult task, which often requires huge resources. Cross-language

information retrieval (CLIR) is the retrieval of information for a query written in the native

language. This paper deals with various classification techniques that can be used for solving

the problems encountered in CLIR.

Grouper

This document summarizes a technical seminar about Grouper, a system that provides dynamic document clustering of web search results. Grouper clusters search results using the STC algorithm to group similar documents together and make the results easier to browse. An evaluation found that Grouper produced more coherent clusters than k-means clustering and that users viewed more documents, spent less time per document, and had shorter distances between clicks when using Grouper compared to a standard ranked search results list. The conclusion discusses some limitations of the initial Grouper system and proposes improvements in a next version.

Test Estimation

This document discusses test estimation techniques. It explains that good estimates are accurate, realistic, and actionable. It recommends asking experts, using metrics and industry averages, and negotiating with managers. It also discusses estimating techniques like planning poker, three-point estimates, and understanding dependencies. The document emphasizes using historical data to predict testing time and the number of bugs found.

Understanding Natural Languange with Corpora-based Generation of Dependency G...

This document discusses training a dependency parser using an unparsed corpus rather than a manually parsed training set. It develops an iterative training method that generates training examples using heuristics from past parsing decisions. The method is shown to produce parse trees qualitatively similar to conventionally trained parsers. Three avenues for future research using this corpus-based generation method are proposed.

Ju3517011704

International Journal of Engineering Research and Applications (IJERA) is an open access online peer reviewed international journal that publishes research and review articles in the fields of Computer Science, Neural Networks, Electrical Engineering, Software Engineering, Information Technology, Mechanical Engineering, Chemical Engineering, Plastic Engineering, Food Technology, Textile Engineering, Nano Technology & science, Power Electronics, Electronics & Communication Engineering, Computational mathematics, Image processing, Civil Engineering, Structural Engineering, Environmental Engineering, VLSI Testing & Low Power VLSI Design etc.

Real-time DirectTranslation System for Sinhala and Tamil Languages.

Presented my research on "Real-time DirectTranslation System for Sinhala and Tamil Languages" at the FedCSIS 2015 Research Conference hosted by University of Lodz, Poland from 13 - 17th of September 2015.

Presentation summerstudent 2009-aug09-lbl-summer

1) Scientific research increasingly relies on large-scale data transfers between collaborating institutions over high-speed networks.

2) ESNet provides high-bandwidth connectivity between DOE sites but needs to efficiently allocate guaranteed bandwidth for transfers.

3) The presentation proposes enhancements to ESNet's OSCARS reservation system to suggest optimal reservations that meet researchers' bandwidth and timing requirements for data transfers.

Network and Multimedia QoE Management

The document discusses research on quantifying user satisfaction (QoE) in VoIP applications like Skype calls. It presents three key contributions:

1) Developing the first QoE measurement methodology based on analyzing large-scale Skype call data to correlate call duration with network quality factors like jitter and bit rate.

2) Proposing OneClick, a simple framework for crowdsourced QoE experiments based on user clicks to indicate dissatisfaction.

3) Introducing the first crowdsourcable QoE evaluation methodology to verify user judgments.

Moses

This document provides an overview of machine translation and the Moses machine translation toolkit. It defines machine translation and statistical machine translation. It describes the major components of Moses, including GIZA++ for word alignment, SRILM for language modeling, and the Moses decoder. It explains how Moses uses phrase-based translation and tuning to produce translations. It also discusses how to set up and use a Moses server for translating webpages.

A Recorded Debating Dataset

This document describes a new English audio and textual dataset of recorded debating speeches produced by professional debaters. The dataset includes 60 speeches on various controversial topics, each provided in 5 formats corresponding to different stages of production: 1) the original audio recording, 2) an automatic transcription from speech recognition, 3) a "cleaned" version of the automatic transcription, 4) a manual transcription serving as a reference, and 5) background information on the topic. The intention is to make the data available for various computational argumentation and debating research purposes such as training speech recognition systems or applying argument mining techniques.

Semi-Supervised Keyword Spotting in Arabic Speech Using Self-Training Ensembles

Arabic speech recognition suffers from the scarcity of properly labeled data. In this project, we introduce a pipeline that performs semi-supervised segmentation of audio then— after hand-labeling a small dataset—feeds labeled segments to a supervised learning framework to select, through many rounds of hyperparameter optimization, an ensemble of models to infer labels for a larger dataset; using which we improved the keyword spotter’s F1 score from 75.85% (using a baseline model) to 90.91% on a ground-truth test set. We picked the keyword na`am (yes) to spot; we defined the system’s input as an audio file of an utterance and the output as a binary label: keyword or filler.

ANALYTICS OF PATENT CASE RULINGS: EMPIRICAL EVALUATION OF MODELS FOR LEGAL RE...

ANALYTICS OF PATENT CASE RULINGS: EMPIRICAL EVALUATION OF MODELS FOR LEGAL RE...Kripa (कृपा) Rajshekhar

Recent progress in incorporating word order and semantics to the decades-old, tried-and-tested bag-of-words representation of text meaning has yielded promising results in computational text classification and analysis. This development, and the availability of a large number of legal rulings from the PTAB (Patent Trial and Appeal Board) motivated us to revisit possibilities for practical, computational models of legal relevance - starting with this narrow and approachable niche of jurisprudence. We present results from our analysis and experiments towards this goal using a corpus of approximately 8000 rulings from the PTAB. This work makes three important contributions towards the development of models for legal relevance semantics: (a) Using state-of-art Natural Language Processing (NLP) methods, we characterize the diversity and types of semantic relationships that are implicit in practical judgements of legal relevance at the PTAB (b) We achieve new state-of-art results on practical information retrieval using our customized semantic representations on this corpus (c) We outline promising avenues for future work in the area - including preliminary evidence from human-in-loop interaction, and new forms of text representation developed using input from over a hundred interviews with practitioners in the field. Finally, we argue that PTAB relevance is a practical and realistic baseline for performance measurement - with the desirable property of evaluating NLP improvements against “real world” legal judgement.A Two-Tiered On-Line Server-Side Bandwidth Reservation Framework for the Real...

This document summarizes a two-tiered bandwidth reservation framework for delivering multiple video streams from servers in real-time. The framework uses a combination of per-stream reservations and a shared aggregate reservation across all streams. Each stream is allocated a guaranteed reservation equal to the p percentile of its bandwidth distribution. An additional shared reservation provides statistical multiplexing of peak bandwidth demands. This enables delivery of streams with less total bandwidth than deterministic approaches while bounding frame drop probabilities based on system parameters. The document proposes an online admission control algorithm that uses three pre-computed parameters per stream and has linear complexity in the number of servers.

An Efficient Distributed Control Law for Load Balancing in Content Delivery N...

International Journal of Modern Engineering Research (IJMER) is Peer reviewed, online Journal. It serves as an international archival forum of scholarly research related to engineering and science education.

International Journal of Modern Engineering Research (IJMER) covers all the fields of engineering and science: Electrical Engineering, Mechanical Engineering, Civil Engineering, Chemical Engineering, Computer Engineering, Agricultural Engineering, Aerospace Engineering, Thermodynamics, Structural Engineering, Control Engineering, Robotics, Mechatronics, Fluid Mechanics, Nanotechnology, Simulators, Web-based Learning, Remote Laboratories, Engineering Design Methods, Education Research, Students' Satisfaction and Motivation, Global Projects, and Assessment…. And many more.

MADS4007_Fall2022-Intro to Web Technologies.docx.pptx

The document provides an origin story of HTTP from Dr. Tim Berners-Lee at CERN in 1989. Dr. Berners-Lee developed HTTP as a stateless protocol to allow computers to communicate and access information stored on servers. He explained that each HTTP request is treated independently, with the server not storing information about previous requests. While the students understood the concept, the stateless nature of HTTP would later require workarounds for web commerce.

FINDING OUT NOISY PATTERNS FOR RELATION EXTRACTION OF BANGLA SENTENCES

Relation extraction is one of the most important parts of natural language processing. It is the process of extracting relationships from a text. Extracted relationships actually occur between two or more entities of a certain type and these relations may have different patterns. The goal of the paper is to find out the noisy patterns for relation extraction of Bangla sentences. For the research work, seed tuples were needed containing two entities and the relation between them. We can get seed tuples from Freebase. Freebase is a large collaborative knowledge base and database of general, structured information for public use. But for Bangla language, there is no available Freebase. So we made Bangla Freebase which was the real challenge and it can be used for any other NLP based works. Then we tried to find out the noisy patterns for relation extraction by measuring conflict score.

New trends in NLP applications

Tutorial given at RANLP 2015 in Hissar, Bulgaria

Recent years have seen lots of changes in the field of computational linguistics, most of them due to the widespread use of the Internet and the benefits and problems it brings. The first part of this tutorial will discuss these changes and will focus on crowdsourcing and how it influenced the creation of annotated data.

Annotation of data employed to train and test NLP methods used to be the task of language experts who had a good understanding of the linguistic phenomena to be tackled. Given that a large number of people now have access to the Internet, crowdsourcing has become an alternative way of obtaining annotated data. The core idea of crowdsourcing is that it is possible to design tasks that can be completed by non-experts and that the outputs of these tasks can be combined to obtain high-quality linguistic annotation, which would normally be produced by experts. Examples of how crowdsourcing was employed in computational linguistics will be given.

Big data is another trend in computational linguistics as researchers rely on more and more data for improving the results of a method. The second part of the tutorial will introduce the MapReduce programming model and show how it was used in processing language. Combined with processing larger quantities of data, the field of computational linguistics has applied deep learning to various tasks successfully, improving their accuracy. An introduction to deep learning will be provided, followed by examples of how it was applied to tasks such as learning semantic representations, sentiment analysis and machine translation evaluation.

From TREC to Watson: is open domain question answering a solved problem?

The document summarizes a presentation on question answering systems. It begins by providing context on information overload and defining question answering. It then discusses the evolution of QA systems from early databases to today's open-domain systems. The presentation focuses on IBM's Watson system, providing an overview of its unprecedented ability to answer open-domain questions as well as the massive resources required for its development. It concludes by arguing that open-domain QA remains unsolved and that closed-domain, interactive QA may be more practical for real-world applications.

More Related Content

Similar to Harnessing Court Data using NLP and Spoken Language Technology

Implementing a Caching Scheme for Media Streaming in a Proxy Server

In the past few years, websites have moved from being

static web pages into rich media applications that use audio,

images and videos heavily in their interaction with users. This

change has made a dramatic change in network traffics

nowadays. Organizations spend a lot of effort, time and money

to improve response time and design intermediary systems that

enhance overall user experience. Media traffic represents

about 69.9-88.8% of all traffic. Therefore, enhancing networks

to accommodate this large traffic is a major trend. Content

Distribution Networks (CDNs) are now largely deployed for a

faster delivery of media. Redundancy and caching are also

implemented to decrease response time.

In this project, we are implementing a caching scheme for

media streaming in a proxy server. Unlike CDNs, which

require huge infrastructure, our caching proxy server will be

as simple as a piece of software that is portable and can be

installed in small as well as large scales. It may be deployed in

a university network, company’s private network or on ISPs

servers. This caching scheme, specially tailored for media

streaming, will reduce traffic and enhance network efficiency

in general.

Index Terms – Proxy servers, Caching, Media streaming

team10.ppt.pptx

The document describes a voice activated text editing software project that uses speech recognition, speech synthesis, and machine learning based text summarization. The software allows users to create notes, import documents, and perform text functions using voice commands. It was created to reduce the time spent manually typing documents and to provide independent digital note-taking for visually impaired individuals. The system design and algorithms for extractive and abstractive text summarization are presented along with experimental results and comparisons to other systems.

ANALYTICS OF PATENT CASE RULINGS: EMPIRICAL EVALUATION OF MODELS FOR LEGAL RE...

ANALYTICS OF PATENT CASE RULINGS: EMPIRICAL EVALUATION OF MODELS FOR LEGAL RE...Kripa (कृपा) Rajshekhar

Recent progress in incorporating word order and semantics to the decades-old, tried-and-tested bag-of-words representation of text meaning has yielded promising results in computational text classification and analysis. This development, and the availability of a large number of legal rulings from the PTAB (Patent Trial and Appeal Board motivated us to revisit possibilities for practical, computational models of legal relevance -- starting with this narrow and approachable niche of jurisprudence. We present results from our analysis and experiments towards this goal using a corpus of approximately 8000 rulings from the PTAB. This work makes three important contributions towards the development of models for legal relevance semantics: (a) Using state-of-art Natural Language Processing (NLP) methods, we characterize the diversity and types of semantic relationships that are implicit in select judgements of legal relevance at the PTAB (b) We achieve new state-of-art results on practical information retrieval tasks using our customized semantic representations on this corpus (c) We outline promising avenues for future work in the area - including preliminary evidence from human-in-loop interaction, and new forms of text representation developed using input from over a hundred interviews with practitioners in the field. Using the PTAB data set for testing relevance in patent document retrieval, instead of traditional citations search, also shows a bigger gap between the needs of practitioners and the capabilities of current information retrieval and NLP technologies.Top Essay Writing Websites

The document discusses how media content can influence society. It notes that while media were originally used for education and information, entertainment has become a major role. Television in particular has had a huge influence since being introduced. The document discusses how media portrayals can shape perceptions and spread misinformation, citing examples of how television influences behavior in young people and how some news coverage has contributed to growing Islamophobia.

A NOVEL APPROACH OF CLASSIFICATION TECHNIQUES FOR CLIR

Recent and continuing advances in online information systems are creating many opportunities

and also new problems in information retrieval. Gathering the information in different natural

language is the most difficult task, which often requires huge resources. Cross-language

information retrieval (CLIR) is the retrieval of information for a query written in the native

language. This paper deals with various classification techniques that can be used for solving

the problems encountered in CLIR.

Grouper

This document summarizes a technical seminar about Grouper, a system that provides dynamic document clustering of web search results. Grouper clusters search results using the STC algorithm to group similar documents together and make the results easier to browse. An evaluation found that Grouper produced more coherent clusters than k-means clustering and that users viewed more documents, spent less time per document, and had shorter distances between clicks when using Grouper compared to a standard ranked search results list. The conclusion discusses some limitations of the initial Grouper system and proposes improvements in a next version.

Test Estimation

This document discusses test estimation techniques. It explains that good estimates are accurate, realistic, and actionable. It recommends asking experts, using metrics and industry averages, and negotiating with managers. It also discusses estimating techniques like planning poker, three-point estimates, and understanding dependencies. The document emphasizes using historical data to predict testing time and the number of bugs found.

Understanding Natural Languange with Corpora-based Generation of Dependency G...

This document discusses training a dependency parser using an unparsed corpus rather than a manually parsed training set. It develops an iterative training method that generates training examples using heuristics from past parsing decisions. The method is shown to produce parse trees qualitatively similar to conventionally trained parsers. Three avenues for future research using this corpus-based generation method are proposed.

Ju3517011704

International Journal of Engineering Research and Applications (IJERA) is an open access online peer reviewed international journal that publishes research and review articles in the fields of Computer Science, Neural Networks, Electrical Engineering, Software Engineering, Information Technology, Mechanical Engineering, Chemical Engineering, Plastic Engineering, Food Technology, Textile Engineering, Nano Technology & science, Power Electronics, Electronics & Communication Engineering, Computational mathematics, Image processing, Civil Engineering, Structural Engineering, Environmental Engineering, VLSI Testing & Low Power VLSI Design etc.

Real-time DirectTranslation System for Sinhala and Tamil Languages.

Presented my research on "Real-time DirectTranslation System for Sinhala and Tamil Languages" at the FedCSIS 2015 Research Conference hosted by University of Lodz, Poland from 13 - 17th of September 2015.

Presentation summerstudent 2009-aug09-lbl-summer

1) Scientific research increasingly relies on large-scale data transfers between collaborating institutions over high-speed networks.

2) ESNet provides high-bandwidth connectivity between DOE sites but needs to efficiently allocate guaranteed bandwidth for transfers.

3) The presentation proposes enhancements to ESNet's OSCARS reservation system to suggest optimal reservations that meet researchers' bandwidth and timing requirements for data transfers.

Network and Multimedia QoE Management

The document discusses research on quantifying user satisfaction (QoE) in VoIP applications like Skype calls. It presents three key contributions:

1) Developing the first QoE measurement methodology based on analyzing large-scale Skype call data to correlate call duration with network quality factors like jitter and bit rate.

2) Proposing OneClick, a simple framework for crowdsourced QoE experiments based on user clicks to indicate dissatisfaction.

3) Introducing the first crowdsourcable QoE evaluation methodology to verify user judgments.

Moses

This document provides an overview of machine translation and the Moses machine translation toolkit. It defines machine translation and statistical machine translation. It describes the major components of Moses, including GIZA++ for word alignment, SRILM for language modeling, and the Moses decoder. It explains how Moses uses phrase-based translation and tuning to produce translations. It also discusses how to set up and use a Moses server for translating webpages.

A Recorded Debating Dataset

This document describes a new English audio and textual dataset of recorded debating speeches produced by professional debaters. The dataset includes 60 speeches on various controversial topics, each provided in 5 formats corresponding to different stages of production: 1) the original audio recording, 2) an automatic transcription from speech recognition, 3) a "cleaned" version of the automatic transcription, 4) a manual transcription serving as a reference, and 5) background information on the topic. The intention is to make the data available for various computational argumentation and debating research purposes such as training speech recognition systems or applying argument mining techniques.

Semi-Supervised Keyword Spotting in Arabic Speech Using Self-Training Ensembles

Arabic speech recognition suffers from the scarcity of properly labeled data. In this project, we introduce a pipeline that performs semi-supervised segmentation of audio then— after hand-labeling a small dataset—feeds labeled segments to a supervised learning framework to select, through many rounds of hyperparameter optimization, an ensemble of models to infer labels for a larger dataset; using which we improved the keyword spotter’s F1 score from 75.85% (using a baseline model) to 90.91% on a ground-truth test set. We picked the keyword na`am (yes) to spot; we defined the system’s input as an audio file of an utterance and the output as a binary label: keyword or filler.

ANALYTICS OF PATENT CASE RULINGS: EMPIRICAL EVALUATION OF MODELS FOR LEGAL RE...

ANALYTICS OF PATENT CASE RULINGS: EMPIRICAL EVALUATION OF MODELS FOR LEGAL RE...Kripa (कृपा) Rajshekhar

Recent progress in incorporating word order and semantics to the decades-old, tried-and-tested bag-of-words representation of text meaning has yielded promising results in computational text classification and analysis. This development, and the availability of a large number of legal rulings from the PTAB (Patent Trial and Appeal Board) motivated us to revisit possibilities for practical, computational models of legal relevance - starting with this narrow and approachable niche of jurisprudence. We present results from our analysis and experiments towards this goal using a corpus of approximately 8000 rulings from the PTAB. This work makes three important contributions towards the development of models for legal relevance semantics: (a) Using state-of-art Natural Language Processing (NLP) methods, we characterize the diversity and types of semantic relationships that are implicit in practical judgements of legal relevance at the PTAB (b) We achieve new state-of-art results on practical information retrieval using our customized semantic representations on this corpus (c) We outline promising avenues for future work in the area - including preliminary evidence from human-in-loop interaction, and new forms of text representation developed using input from over a hundred interviews with practitioners in the field. Finally, we argue that PTAB relevance is a practical and realistic baseline for performance measurement - with the desirable property of evaluating NLP improvements against “real world” legal judgement.A Two-Tiered On-Line Server-Side Bandwidth Reservation Framework for the Real...

This document summarizes a two-tiered bandwidth reservation framework for delivering multiple video streams from servers in real-time. The framework uses a combination of per-stream reservations and a shared aggregate reservation across all streams. Each stream is allocated a guaranteed reservation equal to the p percentile of its bandwidth distribution. An additional shared reservation provides statistical multiplexing of peak bandwidth demands. This enables delivery of streams with less total bandwidth than deterministic approaches while bounding frame drop probabilities based on system parameters. The document proposes an online admission control algorithm that uses three pre-computed parameters per stream and has linear complexity in the number of servers.

An Efficient Distributed Control Law for Load Balancing in Content Delivery N...

International Journal of Modern Engineering Research (IJMER) is Peer reviewed, online Journal. It serves as an international archival forum of scholarly research related to engineering and science education.

International Journal of Modern Engineering Research (IJMER) covers all the fields of engineering and science: Electrical Engineering, Mechanical Engineering, Civil Engineering, Chemical Engineering, Computer Engineering, Agricultural Engineering, Aerospace Engineering, Thermodynamics, Structural Engineering, Control Engineering, Robotics, Mechatronics, Fluid Mechanics, Nanotechnology, Simulators, Web-based Learning, Remote Laboratories, Engineering Design Methods, Education Research, Students' Satisfaction and Motivation, Global Projects, and Assessment…. And many more.

MADS4007_Fall2022-Intro to Web Technologies.docx.pptx

The document provides an origin story of HTTP from Dr. Tim Berners-Lee at CERN in 1989. Dr. Berners-Lee developed HTTP as a stateless protocol to allow computers to communicate and access information stored on servers. He explained that each HTTP request is treated independently, with the server not storing information about previous requests. While the students understood the concept, the stateless nature of HTTP would later require workarounds for web commerce.

FINDING OUT NOISY PATTERNS FOR RELATION EXTRACTION OF BANGLA SENTENCES

Relation extraction is one of the most important parts of natural language processing. It is the process of extracting relationships from a text. Extracted relationships actually occur between two or more entities of a certain type and these relations may have different patterns. The goal of the paper is to find out the noisy patterns for relation extraction of Bangla sentences. For the research work, seed tuples were needed containing two entities and the relation between them. We can get seed tuples from Freebase. Freebase is a large collaborative knowledge base and database of general, structured information for public use. But for Bangla language, there is no available Freebase. So we made Bangla Freebase which was the real challenge and it can be used for any other NLP based works. Then we tried to find out the noisy patterns for relation extraction by measuring conflict score.

Similar to Harnessing Court Data using NLP and Spoken Language Technology (20)

Implementing a Caching Scheme for Media Streaming in a Proxy Server

Implementing a Caching Scheme for Media Streaming in a Proxy Server

ANALYTICS OF PATENT CASE RULINGS: EMPIRICAL EVALUATION OF MODELS FOR LEGAL RE...

ANALYTICS OF PATENT CASE RULINGS: EMPIRICAL EVALUATION OF MODELS FOR LEGAL RE...

A NOVEL APPROACH OF CLASSIFICATION TECHNIQUES FOR CLIR

A NOVEL APPROACH OF CLASSIFICATION TECHNIQUES FOR CLIR

Understanding Natural Languange with Corpora-based Generation of Dependency G...

Understanding Natural Languange with Corpora-based Generation of Dependency G...

Real-time DirectTranslation System for Sinhala and Tamil Languages.

Real-time DirectTranslation System for Sinhala and Tamil Languages.

Semi-Supervised Keyword Spotting in Arabic Speech Using Self-Training Ensembles

Semi-Supervised Keyword Spotting in Arabic Speech Using Self-Training Ensembles

ANALYTICS OF PATENT CASE RULINGS: EMPIRICAL EVALUATION OF MODELS FOR LEGAL RE...

ANALYTICS OF PATENT CASE RULINGS: EMPIRICAL EVALUATION OF MODELS FOR LEGAL RE...

A Two-Tiered On-Line Server-Side Bandwidth Reservation Framework for the Real...

A Two-Tiered On-Line Server-Side Bandwidth Reservation Framework for the Real...

An Efficient Distributed Control Law for Load Balancing in Content Delivery N...

An Efficient Distributed Control Law for Load Balancing in Content Delivery N...

MADS4007_Fall2022-Intro to Web Technologies.docx.pptx

MADS4007_Fall2022-Intro to Web Technologies.docx.pptx

FINDING OUT NOISY PATTERNS FOR RELATION EXTRACTION OF BANGLA SENTENCES

FINDING OUT NOISY PATTERNS FOR RELATION EXTRACTION OF BANGLA SENTENCES

More from Constantin Orasan

New trends in NLP applications

Tutorial given at RANLP 2015 in Hissar, Bulgaria

Recent years have seen lots of changes in the field of computational linguistics, most of them due to the widespread use of the Internet and the benefits and problems it brings. The first part of this tutorial will discuss these changes and will focus on crowdsourcing and how it influenced the creation of annotated data.

Annotation of data employed to train and test NLP methods used to be the task of language experts who had a good understanding of the linguistic phenomena to be tackled. Given that a large number of people now have access to the Internet, crowdsourcing has become an alternative way of obtaining annotated data. The core idea of crowdsourcing is that it is possible to design tasks that can be completed by non-experts and that the outputs of these tasks can be combined to obtain high-quality linguistic annotation, which would normally be produced by experts. Examples of how crowdsourcing was employed in computational linguistics will be given.

Big data is another trend in computational linguistics as researchers rely on more and more data for improving the results of a method. The second part of the tutorial will introduce the MapReduce programming model and show how it was used in processing language. Combined with processing larger quantities of data, the field of computational linguistics has applied deep learning to various tasks successfully, improving their accuracy. An introduction to deep learning will be provided, followed by examples of how it was applied to tasks such as learning semantic representations, sentiment analysis and machine translation evaluation.

From TREC to Watson: is open domain question answering a solved problem?

The document summarizes a presentation on question answering systems. It begins by providing context on information overload and defining question answering. It then discusses the evolution of QA systems from early databases to today's open-domain systems. The presentation focuses on IBM's Watson system, providing an overview of its unprecedented ability to answer open-domain questions as well as the massive resources required for its development. It concludes by arguing that open-domain QA remains unsolved and that closed-domain, interactive QA may be more practical for real-world applications.

QALL-ME: Ontology and Semantic Web

Invited talk at Driving Future Question Answering: Research Trends And Market Perspectives Workshop, Trento, Italy

The role of linguistic information for shallow language processing

The document discusses shallow language processing and summarization. It argues that while deep language understanding is limited, shallow methods can be improved by adding linguistic information. As an example, it shows how term frequency, anaphora resolution, discourse cues and genetic algorithms can select extractive summaries that better match human abstracts, without requiring full text comprehension.

What is Computer-Aided Summarisation and does it really work?

Computer-aided summarization (CAS) uses automatic methods to identify important information in documents, which humans can then edit to produce summaries. An evaluation of a CAS tool called CAST found that it reduced the time professional summarizers needed to produce summaries by 20% on average without significantly affecting summary quality. User feedback indicated the tool was most useful for identifying related sentences to include.

Tutorial on automatic summarization

The document discusses automatic summarization and related disciplines. It defines summarization as the condensation of a source text into a shorter version by selecting key information. Automatic summarization involves producing summaries computationally. Related fields include automatic classification, keyword extraction, information retrieval, information extraction, and question answering, which all aim to organize and understand information from text.

Message project leaflet

The MESSAGE project aims to:

1) Develop tools to rapidly disseminate reliable emergency messages across Europe.

2) Ensure messages are comprehensible to facilitate response.

3) Propose making available a controlled language editing tool to allow quick and accurate editing of alerts.

Porting the QALL-ME framework to Romanian

Invited talk at Processing ROmanian in Multilingual, Interoperational and Scalable Environments (PROMISE 2010) on how to port the QALL-ME framework to a new language

Annotation of anaphora and coreference for automatic processing

This document discusses annotation of anaphora and coreference in corpora for computational linguistics. It covers several annotation schemes including MUC, which aimed to achieve high inter-annotator agreement by focusing on coreference between noun phrases. The NP4E corpus aimed to develop guidelines for annotating both noun phrase and event coreference in newspaper articles. Annotation is a time-consuming process that requires concentration to identify mentions and relations accurately. Guidelines must be clear and consistent to help annotators agree on how to mark up texts.

More from Constantin Orasan (9)

From TREC to Watson: is open domain question answering a solved problem?

From TREC to Watson: is open domain question answering a solved problem?

The role of linguistic information for shallow language processing

The role of linguistic information for shallow language processing

What is Computer-Aided Summarisation and does it really work?

What is Computer-Aided Summarisation and does it really work?

Annotation of anaphora and coreference for automatic processing

Annotation of anaphora and coreference for automatic processing

Recently uploaded

Finale of the Year: Apply for Next One!

Presentation for the event called "Finale of the Year: Apply for Next One!" organized by GDSC PJATK

Azure API Management to expose backend services securely

How to use Azure API Management to expose backend service securely

Unlock the Future of Search with MongoDB Atlas_ Vector Search Unleashed.pdf

Discover how MongoDB Atlas and vector search technology can revolutionize your application's search capabilities. This comprehensive presentation covers:

* What is Vector Search?

* Importance and benefits of vector search

* Practical use cases across various industries

* Step-by-step implementation guide

* Live demos with code snippets

* Enhancing LLM capabilities with vector search

* Best practices and optimization strategies

Perfect for developers, AI enthusiasts, and tech leaders. Learn how to leverage MongoDB Atlas to deliver highly relevant, context-aware search results, transforming your data retrieval process. Stay ahead in tech innovation and maximize the potential of your applications.

#MongoDB #VectorSearch #AI #SemanticSearch #TechInnovation #DataScience #LLM #MachineLearning #SearchTechnology

Salesforce Integration for Bonterra Impact Management (fka Social Solutions A...

Sidekick Solutions uses Bonterra Impact Management (fka Social Solutions Apricot) and automation solutions to integrate data for business workflows.

We believe integration and automation are essential to user experience and the promise of efficient work through technology. Automation is the critical ingredient to realizing that full vision. We develop integration products and services for Bonterra Case Management software to support the deployment of automations for a variety of use cases.

This video focuses on integration of Salesforce with Bonterra Impact Management.

Interested in deploying an integration with Salesforce for Bonterra Impact Management? Contact us at sales@sidekicksolutionsllc.com to discuss next steps.

HCL Notes and Domino License Cost Reduction in the World of DLAU

Webinar Recording: https://www.panagenda.com/webinars/hcl-notes-and-domino-license-cost-reduction-in-the-world-of-dlau/

The introduction of DLAU and the CCB & CCX licensing model caused quite a stir in the HCL community. As a Notes and Domino customer, you may have faced challenges with unexpected user counts and license costs. You probably have questions on how this new licensing approach works and how to benefit from it. Most importantly, you likely have budget constraints and want to save money where possible. Don’t worry, we can help with all of this!

We’ll show you how to fix common misconfigurations that cause higher-than-expected user counts, and how to identify accounts which you can deactivate to save money. There are also frequent patterns that can cause unnecessary cost, like using a person document instead of a mail-in for shared mailboxes. We’ll provide examples and solutions for those as well. And naturally we’ll explain the new licensing model.

Join HCL Ambassador Marc Thomas in this webinar with a special guest appearance from Franz Walder. It will give you the tools and know-how to stay on top of what is going on with Domino licensing. You will be able lower your cost through an optimized configuration and keep it low going forward.

These topics will be covered

- Reducing license cost by finding and fixing misconfigurations and superfluous accounts

- How do CCB and CCX licenses really work?

- Understanding the DLAU tool and how to best utilize it

- Tips for common problem areas, like team mailboxes, functional/test users, etc

- Practical examples and best practices to implement right away

Choosing The Best AWS Service For Your Website + API.pptx

Have you ever been confused by the myriad of choices offered by AWS for hosting a website or an API?

Lambda, Elastic Beanstalk, Lightsail, Amplify, S3 (and more!) can each host websites + APIs. But which one should we choose?

Which one is cheapest? Which one is fastest? Which one will scale to meet our needs?

Join me in this session as we dive into each AWS hosting service to determine which one is best for your scenario and explain why!

5th LF Energy Power Grid Model Meet-up Slides

5th Power Grid Model Meet-up

It is with great pleasure that we extend to you an invitation to the 5th Power Grid Model Meet-up, scheduled for 6th June 2024. This event will adopt a hybrid format, allowing participants to join us either through an online Mircosoft Teams session or in person at TU/e located at Den Dolech 2, Eindhoven, Netherlands. The meet-up will be hosted by Eindhoven University of Technology (TU/e), a research university specializing in engineering science & technology.

Power Grid Model

The global energy transition is placing new and unprecedented demands on Distribution System Operators (DSOs). Alongside upgrades to grid capacity, processes such as digitization, capacity optimization, and congestion management are becoming vital for delivering reliable services.

Power Grid Model is an open source project from Linux Foundation Energy and provides a calculation engine that is increasingly essential for DSOs. It offers a standards-based foundation enabling real-time power systems analysis, simulations of electrical power grids, and sophisticated what-if analysis. In addition, it enables in-depth studies and analysis of the electrical power grid’s behavior and performance. This comprehensive model incorporates essential factors such as power generation capacity, electrical losses, voltage levels, power flows, and system stability.

Power Grid Model is currently being applied in a wide variety of use cases, including grid planning, expansion, reliability, and congestion studies. It can also help in analyzing the impact of renewable energy integration, assessing the effects of disturbances or faults, and developing strategies for grid control and optimization.

What to expect

For the upcoming meetup we are organizing, we have an exciting lineup of activities planned:

-Insightful presentations covering two practical applications of the Power Grid Model.

-An update on the latest advancements in Power Grid -Model technology during the first and second quarters of 2024.

-An interactive brainstorming session to discuss and propose new feature requests.

-An opportunity to connect with fellow Power Grid Model enthusiasts and users.

Introduction of Cybersecurity with OSS at Code Europe 2024

I develop the Ruby programming language, RubyGems, and Bundler, which are package managers for Ruby. Today, I will introduce how to enhance the security of your application using open-source software (OSS) examples from Ruby and RubyGems.

The first topic is CVE (Common Vulnerabilities and Exposures). I have published CVEs many times. But what exactly is a CVE? I'll provide a basic understanding of CVEs and explain how to detect and handle vulnerabilities in OSS.

Next, let's discuss package managers. Package managers play a critical role in the OSS ecosystem. I'll explain how to manage library dependencies in your application.

I'll share insights into how the Ruby and RubyGems core team works to keep our ecosystem safe. By the end of this talk, you'll have a better understanding of how to safeguard your code.

June Patch Tuesday

Ivanti’s Patch Tuesday breakdown goes beyond patching your applications and brings you the intelligence and guidance needed to prioritize where to focus your attention first. Catch early analysis on our Ivanti blog, then join industry expert Chris Goettl for the Patch Tuesday Webinar Event. There we’ll do a deep dive into each of the bulletins and give guidance on the risks associated with the newly-identified vulnerabilities.

Columbus Data & Analytics Wednesdays - June 2024

Columbus Data & Analytics Wednesdays, June 2024 with Maria Copot 20

Let's Integrate MuleSoft RPA, COMPOSER, APM with AWS IDP along with Slack

Discover the seamless integration of RPA (Robotic Process Automation), COMPOSER, and APM with AWS IDP enhanced with Slack notifications. Explore how these technologies converge to streamline workflows, optimize performance, and ensure secure access, all while leveraging the power of AWS IDP and real-time communication via Slack notifications.

Letter and Document Automation for Bonterra Impact Management (fka Social Sol...

Sidekick Solutions uses Bonterra Impact Management (fka Social Solutions Apricot) and automation solutions to integrate data for business workflows.

We believe integration and automation are essential to user experience and the promise of efficient work through technology. Automation is the critical ingredient to realizing that full vision. We develop integration products and services for Bonterra Case Management software to support the deployment of automations for a variety of use cases.

This video focuses on automated letter generation for Bonterra Impact Management using Google Workspace or Microsoft 365.

Interested in deploying letter generation automations for Bonterra Impact Management? Contact us at sales@sidekicksolutionsllc.com to discuss next steps.

Presentation of the OECD Artificial Intelligence Review of Germany

Consult the full report at https://www.oecd.org/digital/oecd-artificial-intelligence-review-of-germany-609808d6-en.htm

Energy Efficient Video Encoding for Cloud and Edge Computing Instances

Energy Efficient Video Encoding for Cloud and Edge Computing Instances

Programming Foundation Models with DSPy - Meetup Slides

Prompting language models is hard, while programming language models is easy. In this talk, I will discuss the state-of-the-art framework DSPy for programming foundation models with its powerful optimizers and runtime constraint system.

Ocean lotus Threat actors project by John Sitima 2024 (1).pptx

Ocean Lotus cyber threat actors represent a sophisticated, persistent, and politically motivated group that poses a significant risk to organizations and individuals in the Southeast Asian region. Their continuous evolution and adaptability underscore the need for robust cybersecurity measures and international cooperation to identify and mitigate the threats posed by such advanced persistent threat groups.

Building Production Ready Search Pipelines with Spark and Milvus

Spark is the widely used ETL tool for processing, indexing and ingesting data to serving stack for search. Milvus is the production-ready open-source vector database. In this talk we will show how to use Spark to process unstructured data to extract vector representations, and push the vectors to Milvus vector database for search serving.

Recently uploaded (20)

Azure API Management to expose backend services securely

Azure API Management to expose backend services securely

Unlock the Future of Search with MongoDB Atlas_ Vector Search Unleashed.pdf

Unlock the Future of Search with MongoDB Atlas_ Vector Search Unleashed.pdf

Salesforce Integration for Bonterra Impact Management (fka Social Solutions A...

Salesforce Integration for Bonterra Impact Management (fka Social Solutions A...

HCL Notes and Domino License Cost Reduction in the World of DLAU

HCL Notes and Domino License Cost Reduction in the World of DLAU

Choosing The Best AWS Service For Your Website + API.pptx

Choosing The Best AWS Service For Your Website + API.pptx

Introduction of Cybersecurity with OSS at Code Europe 2024

Introduction of Cybersecurity with OSS at Code Europe 2024

WeTestAthens: Postman's AI & Automation Techniques

WeTestAthens: Postman's AI & Automation Techniques

Let's Integrate MuleSoft RPA, COMPOSER, APM with AWS IDP along with Slack

Let's Integrate MuleSoft RPA, COMPOSER, APM with AWS IDP along with Slack

Letter and Document Automation for Bonterra Impact Management (fka Social Sol...

Letter and Document Automation for Bonterra Impact Management (fka Social Sol...

Deep Dive: AI-Powered Marketing to Get More Leads and Customers with HyperGro...

Deep Dive: AI-Powered Marketing to Get More Leads and Customers with HyperGro...

Presentation of the OECD Artificial Intelligence Review of Germany

Presentation of the OECD Artificial Intelligence Review of Germany

Energy Efficient Video Encoding for Cloud and Edge Computing Instances

Energy Efficient Video Encoding for Cloud and Edge Computing Instances

Programming Foundation Models with DSPy - Meetup Slides

Programming Foundation Models with DSPy - Meetup Slides

Ocean lotus Threat actors project by John Sitima 2024 (1).pptx

Ocean lotus Threat actors project by John Sitima 2024 (1).pptx

Building Production Ready Search Pipelines with Spark and Milvus

Building Production Ready Search Pipelines with Spark and Milvus

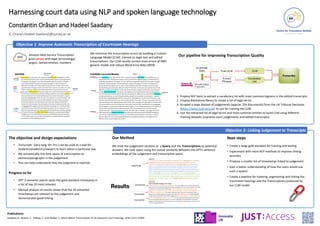

Harnessing Court Data using NLP and Spoken Language Technology

- 1. Harnessing court data using NLP and spoken language technology Constantin Orăsan and Hadeel Saadany {C.Orasan,Hadeel.Saadany}@surrey.ac.uk Objective 1: Improve Automatic Transcription of Courtroom Hearings Amazon Web Service Transcription gives errors with legal terminology/ jargon, named entities, numbers We minimize the transcription errors by building a Custom Language Model (CLM) trained on legal text and edited transcriptions. Our CLM results correct most errors of AWS generic model and reduce Word Error Rate (WER) 1. Employ NLP tools to extract a vocabulary list with most common bigrams in the edited transcripts. 2. Employ Blackstone library to create a list of legal terms 3. Scraped a large dataset of judgements (approx. 25k documents) from the UK Tribunal Decisions (https://www.judiciary.uk) to use for training the CLM. 4. Use the extracted list of legal terms and most common entities to build CLM using different training datasets (supreme court judgements and edited transcripts). Our pipeline for Improving Transcription Quality Objective 2: Linking Judgement to Transcripts The objective and design expectations • Transcripts (very long 10+ hrs.) can be used as a tool for students/academics/lawyers to learn about a particular law. • We semantically link time spans of transcription to sections/paragraphs in the judgement. • This can help understand how the judgment is reached. Progress so far • GPT 3 semantic search spots the gold-standard timestamp in a list of top 20 most relevant. • Manual analysis of results shows that the 20 extracted timestamps are relevant to the judgement and demonstrates good linking. Our Method We treat the judgement sections as a Query and the Transcriptions as potential answers. We rank spans using the cosine similarity between the GPT3 sentence embeddings of the judgement and transcription spans Next steps • Create a large gold standard for training and testing • Experiment with more NLP methods to improve linking accuracy • Produce a smaller list of timestamps linked to judgement • Gain a better understanding of how the users would use such a system • Create a pipeline for cleaning, segmenting and linking the Courtroom hearings and the Transcriptions produced by our CLM model Publications Saadany, H., Breslin, C., Orăsan, C. and Walker, S. (2022) Better Transcription of UK Supreme Court Hearings. arXiv:2211.17094