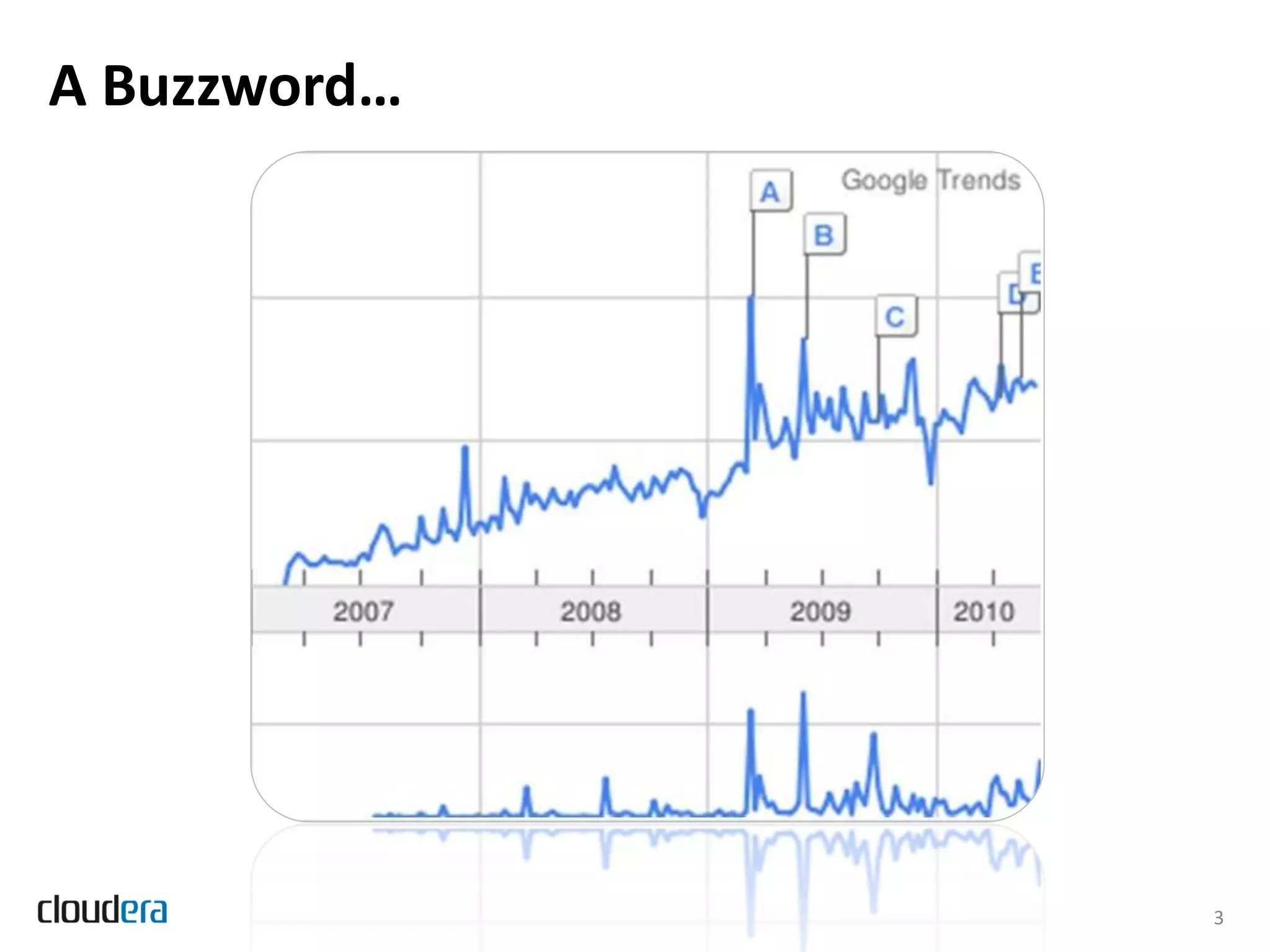

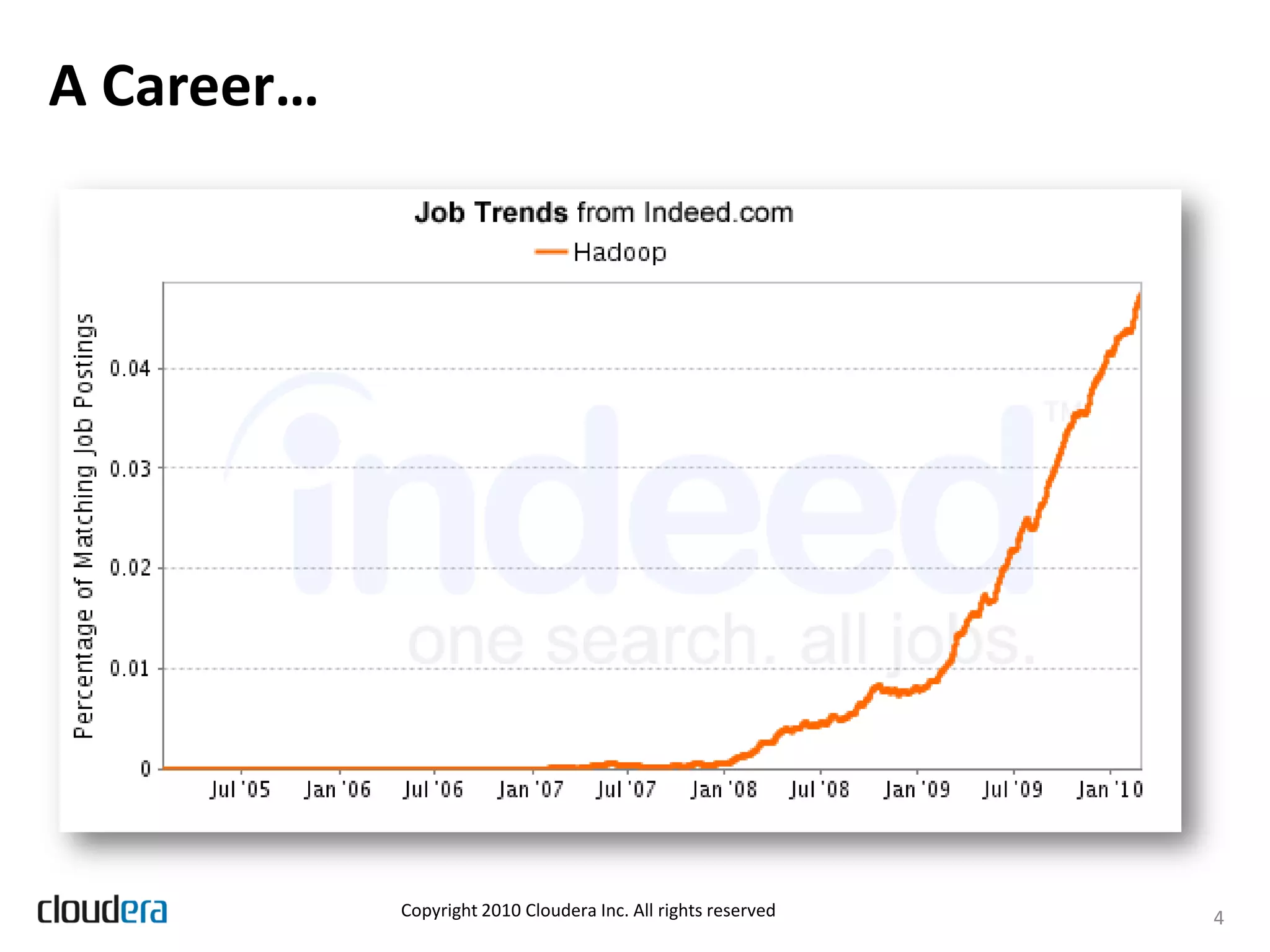

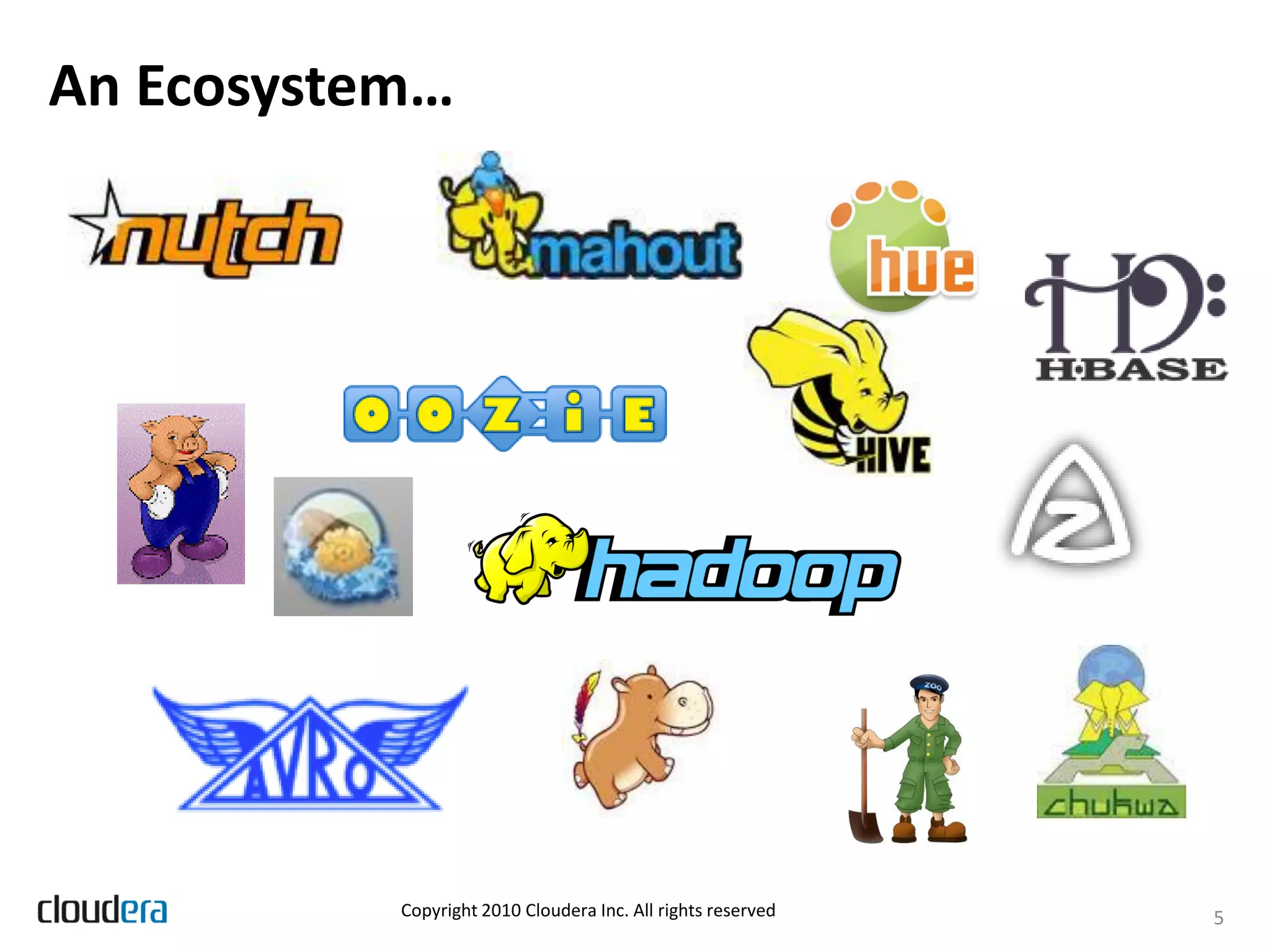

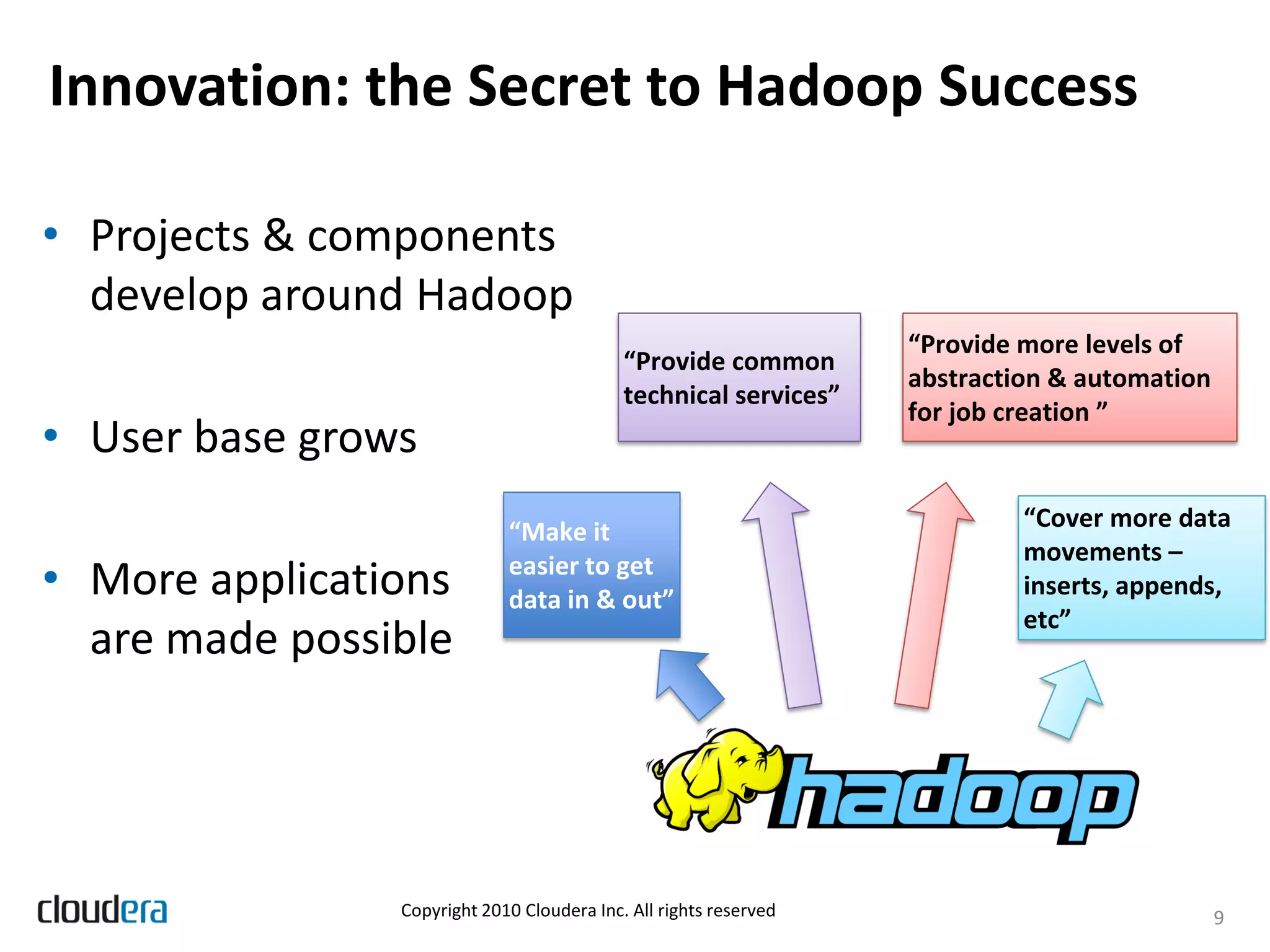

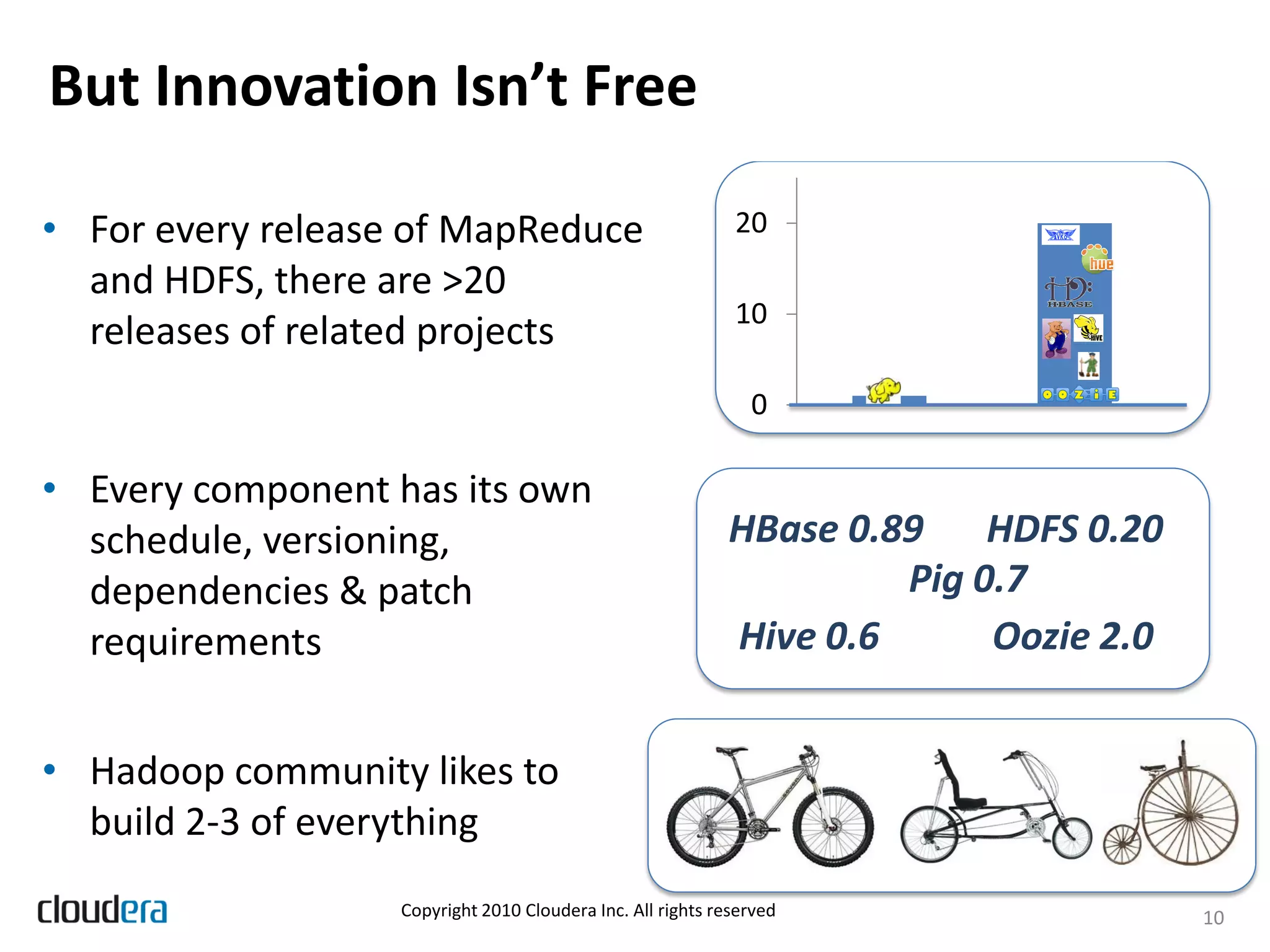

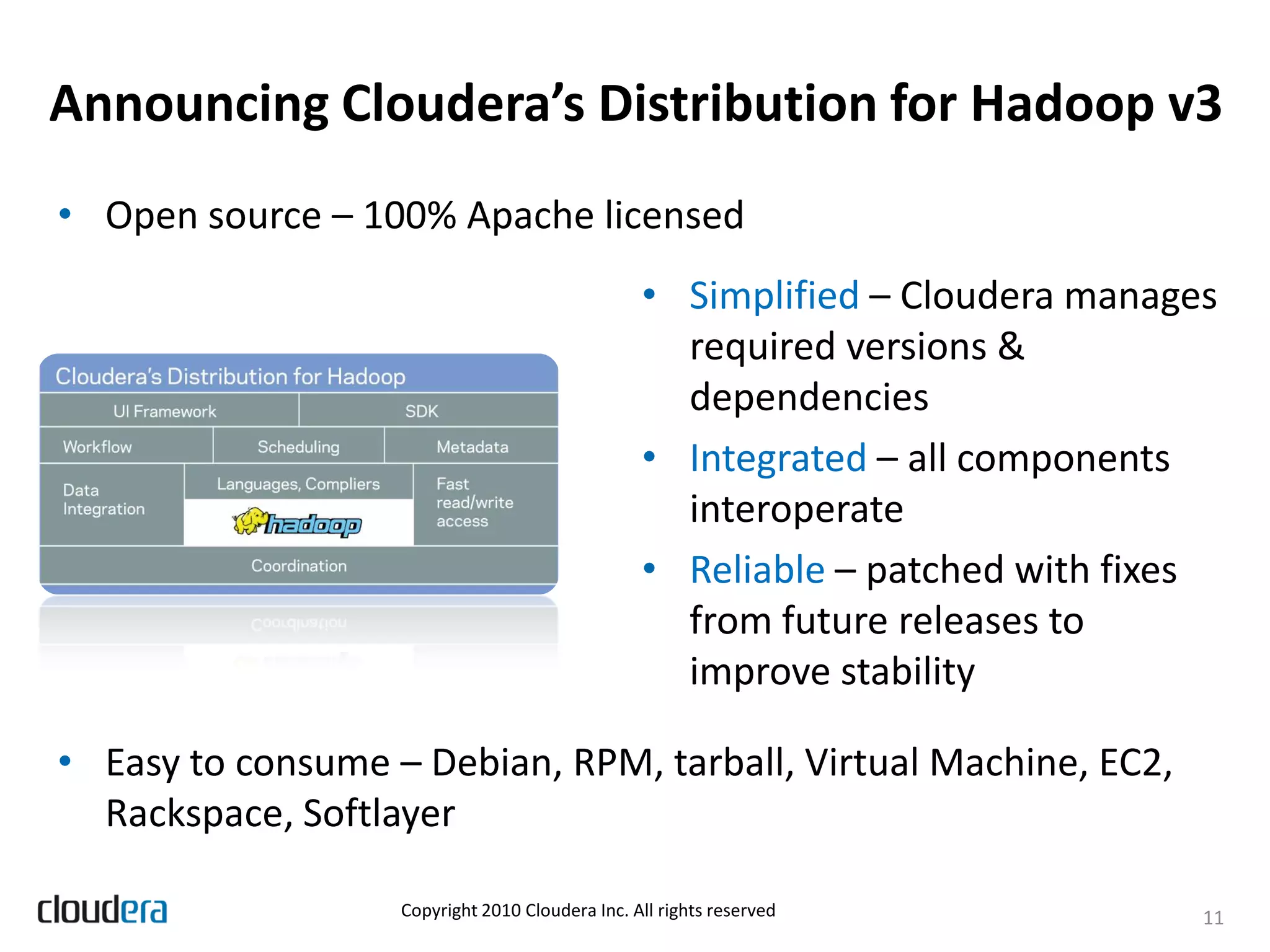

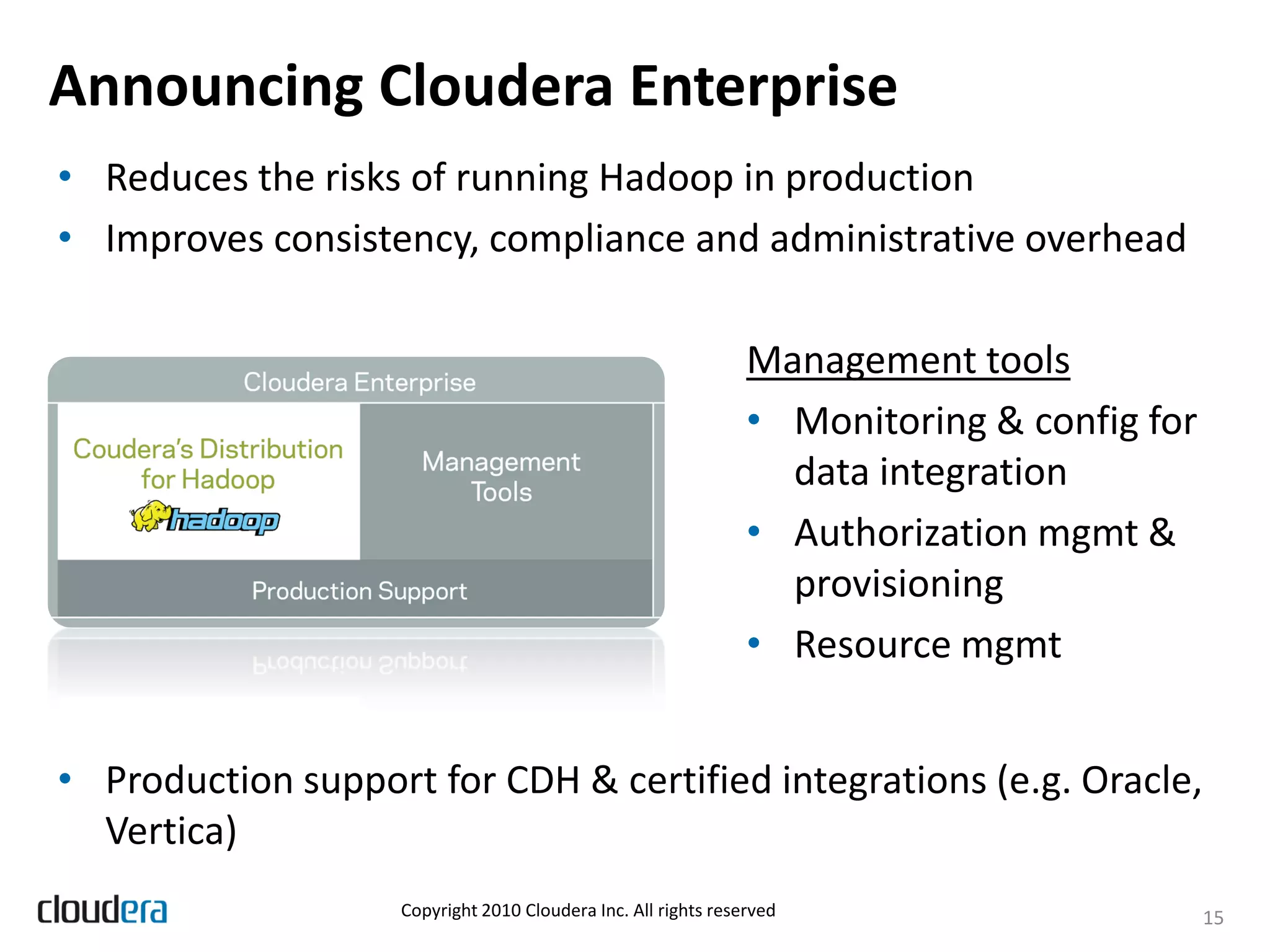

The document traces Hadoop's growth from a basic framework built around MapReduce and HDFS into a broad ecosystem of components and applications. It announces version 3 of Cloudera's Distribution for Hadoop (CDH), which integrates multiple components, simplifies dependency management, and introduces new features such as Flume and Hue. It also addresses the challenges of running Hadoop in production environments and outlines the Cloudera Enterprise tools intended to improve manageability and compliance.