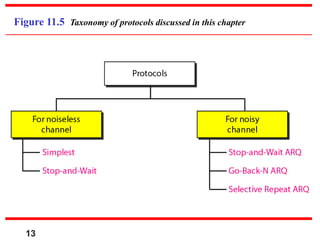

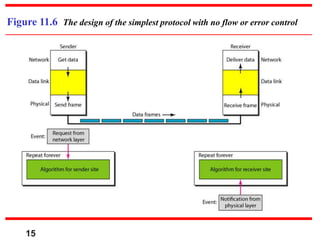

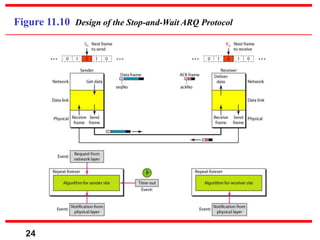

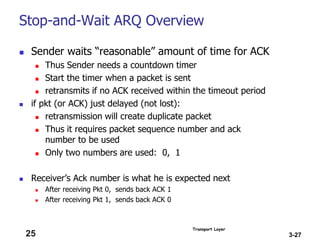

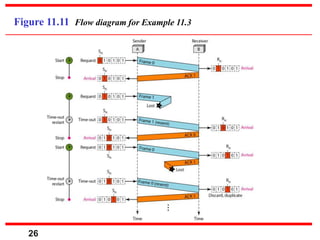

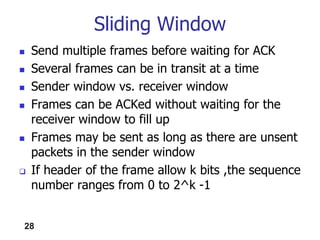

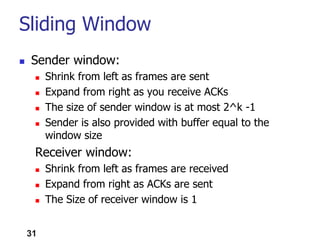

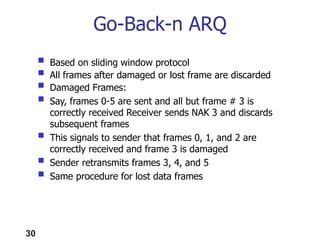

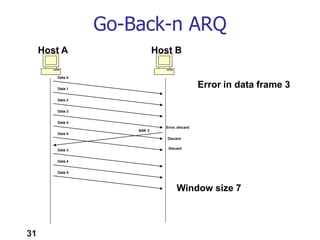

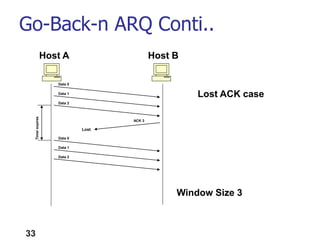

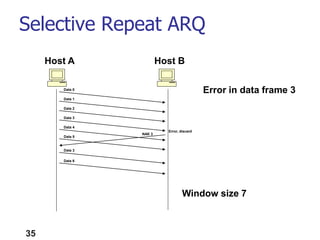

This document discusses data link layer protocols and the functions they provide: framing, flow control, and error control. For noiseless channels it covers the Simplest Protocol and the Stop-and-Wait Protocol. For noisy channels it covers three automatic repeat request (ARQ) protocols: Stop-and-Wait ARQ, Go-Back-N ARQ, and Selective Repeat ARQ. These protocols combine framing, sequence numbers, acknowledgments, timers, and sliding windows to provide reliable data transmission.
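As a rough illustration of how sequence numbers, acknowledgments, and timers interact, here is a minimal sketch of Stop-and-Wait ARQ in Python. It is a toy simulation, not taken from the document: the function name `stop_and_wait_arq`, the `frame_lost` and `ack_lost` callbacks, and the `max_attempts` limit are all hypothetical, and a timeout is modeled simply as "no ACK came back, so send again". Sequence numbers alternate between 0 and 1, which is enough because only one frame is outstanding at a time.

```python
from typing import Callable, List, Tuple


def stop_and_wait_arq(
    payloads: List[str],
    frame_lost: Callable[[int], bool],
    ack_lost: Callable[[int], bool],
    max_attempts: int = 10,
) -> Tuple[List[str], int]:
    """Simulate Stop-and-Wait ARQ over a lossy channel (illustrative only).

    frame_lost(n) / ack_lost(n) say whether the n-th transmission (0-based)
    loses its data frame or its acknowledgment. Returns the payloads the
    receiver delivered and the total number of frame transmissions.
    """
    delivered: List[str] = []
    send_seq = 0       # sender: sequence number of the outstanding frame
    expected_seq = 0   # receiver: sequence number it will accept next
    transmissions = 0  # every send, including retransmissions

    for payload in payloads:
        for _ in range(max_attempts):
            attempt = transmissions
            transmissions += 1

            if frame_lost(attempt):
                # Frame never arrives, so no ACK: the timer expires and
                # the sender retransmits the same frame.
                continue

            if send_seq == expected_seq:
                # New frame: deliver it and advance the receiver window.
                delivered.append(payload)
                expected_seq ^= 1
            # Otherwise it is a duplicate: discard it, but still send an ACK.

            if ack_lost(attempt):
                # ACK never arrives: the sender times out and retransmits,
                # and the receiver will see that copy as a duplicate.
                continue

            # ACK received: move on to the next sequence number.
            send_seq ^= 1
            break
        else:
            raise RuntimeError("gave up after max_attempts retransmissions")

    return delivered, transmissions


if __name__ == "__main__":
    # Lose the data frame on transmission 1 and the ACK on transmission 3.
    data, sent = stop_and_wait_arq(
        ["a", "b", "c"],
        frame_lost=lambda n: n == 1,
        ack_lost=lambda n: n == 3,
    )
    print(data, sent)
```

In this run the receiver still delivers each payload exactly once, even though one frame and one acknowledgment are lost. The lost ACK is the case that makes sequence numbers necessary: without them the receiver could not tell the retransmitted frame from a new one. Go-Back-N and Selective Repeat extend the same idea by allowing more than one outstanding frame through a sliding window.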