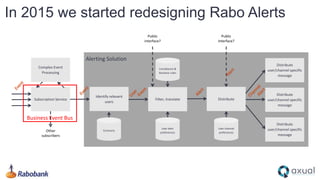

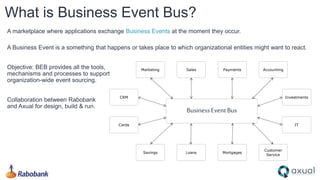

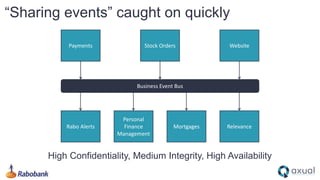

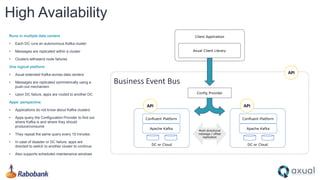

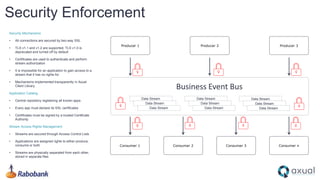

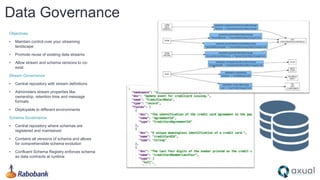

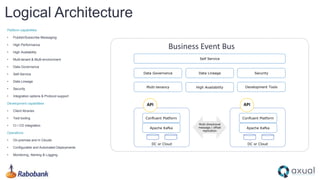

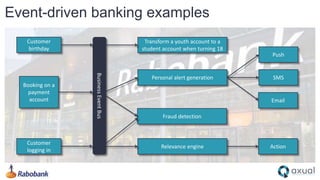

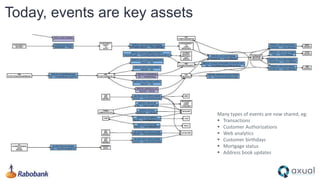

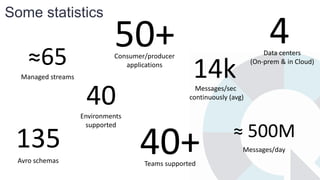

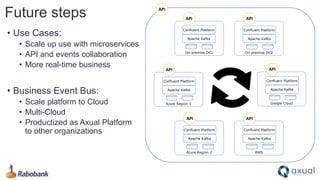

Rabobank is becoming an event-driven bank, using a Business Event Bus for the real-time exchange of business events. The platform is built in collaboration with Axual and uses Apache Kafka for messaging across multiple data centers. Key objectives are high availability, security, data governance, and self-service capabilities that empower teams to manage data streams.