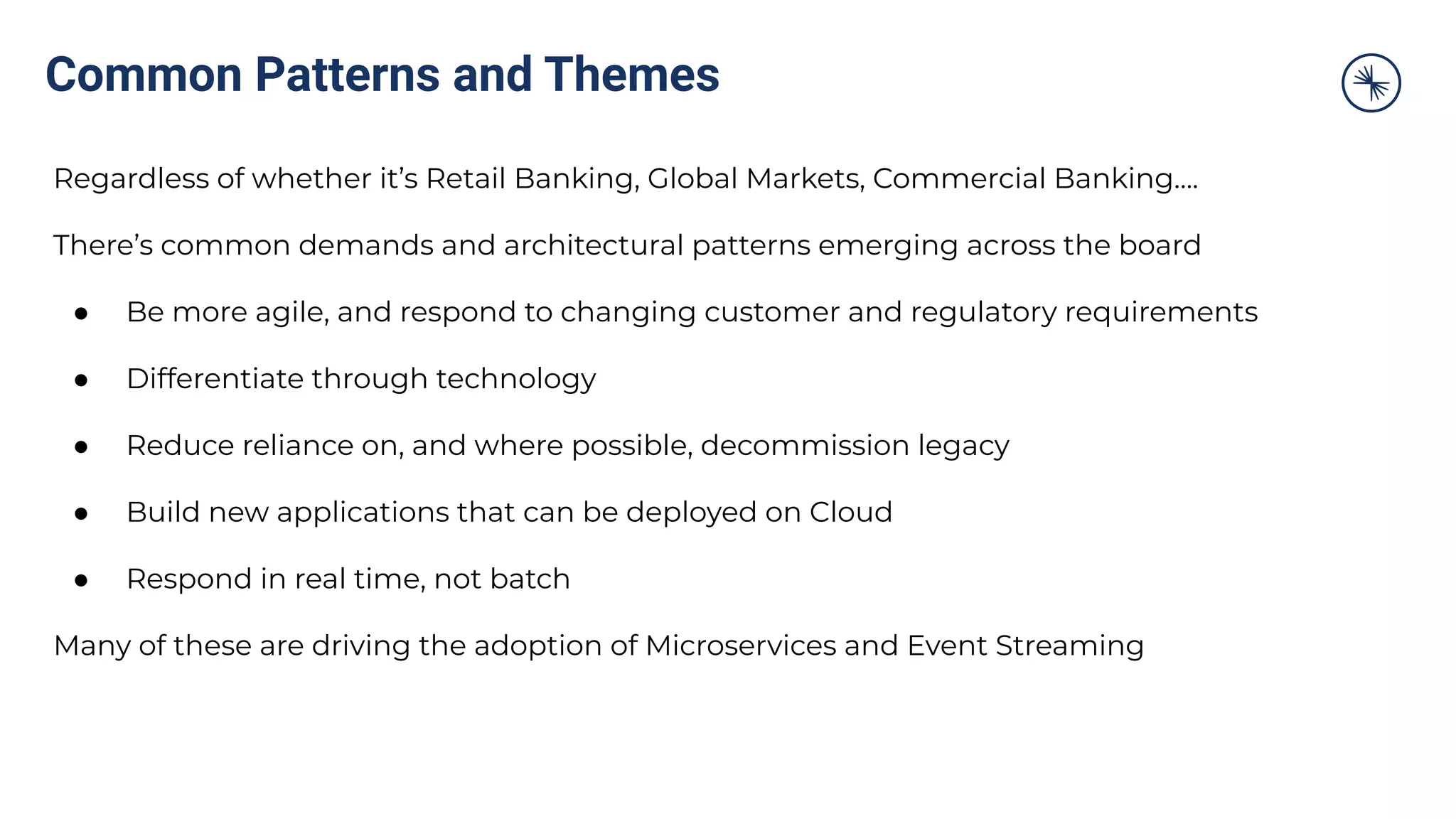

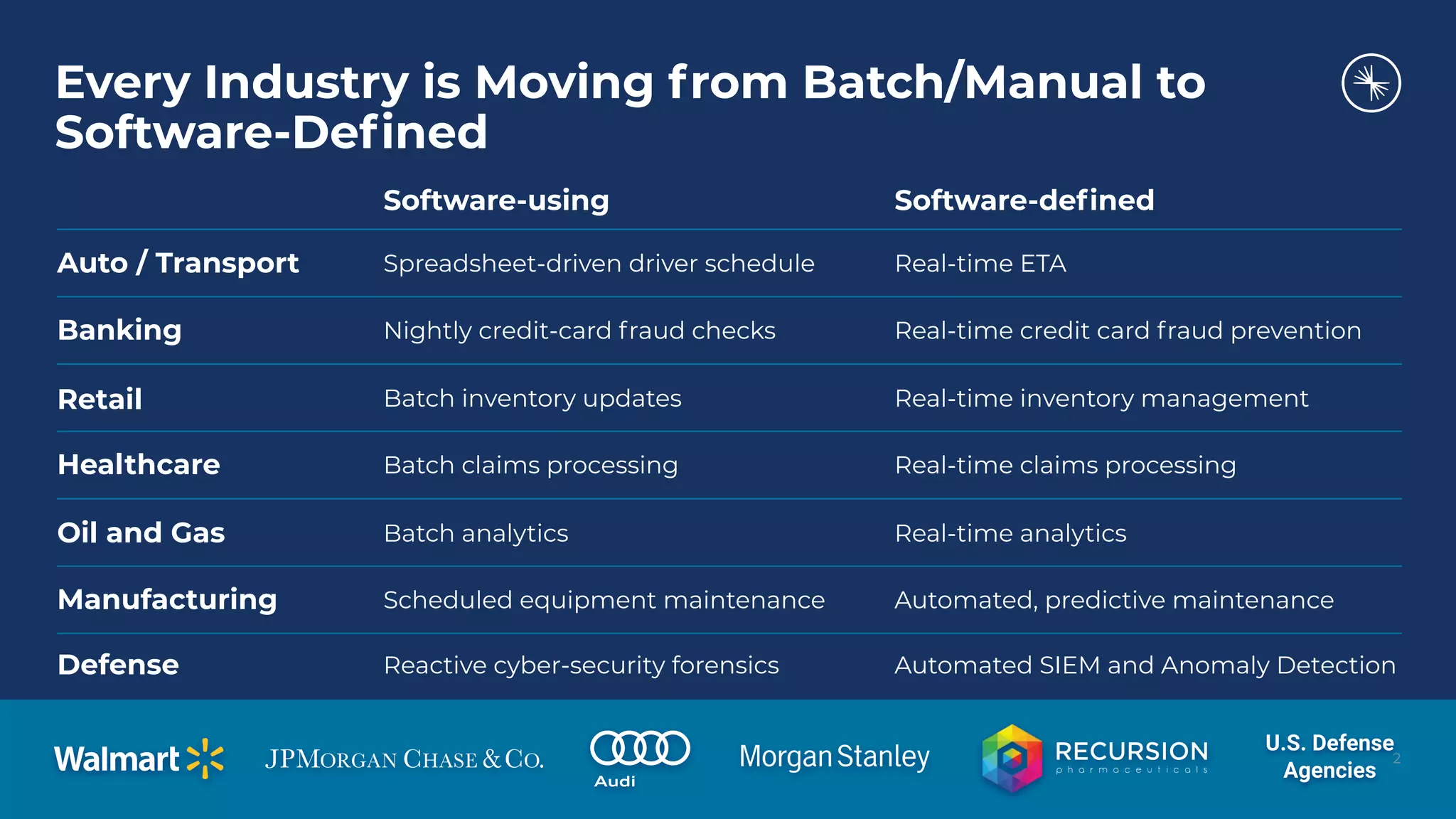

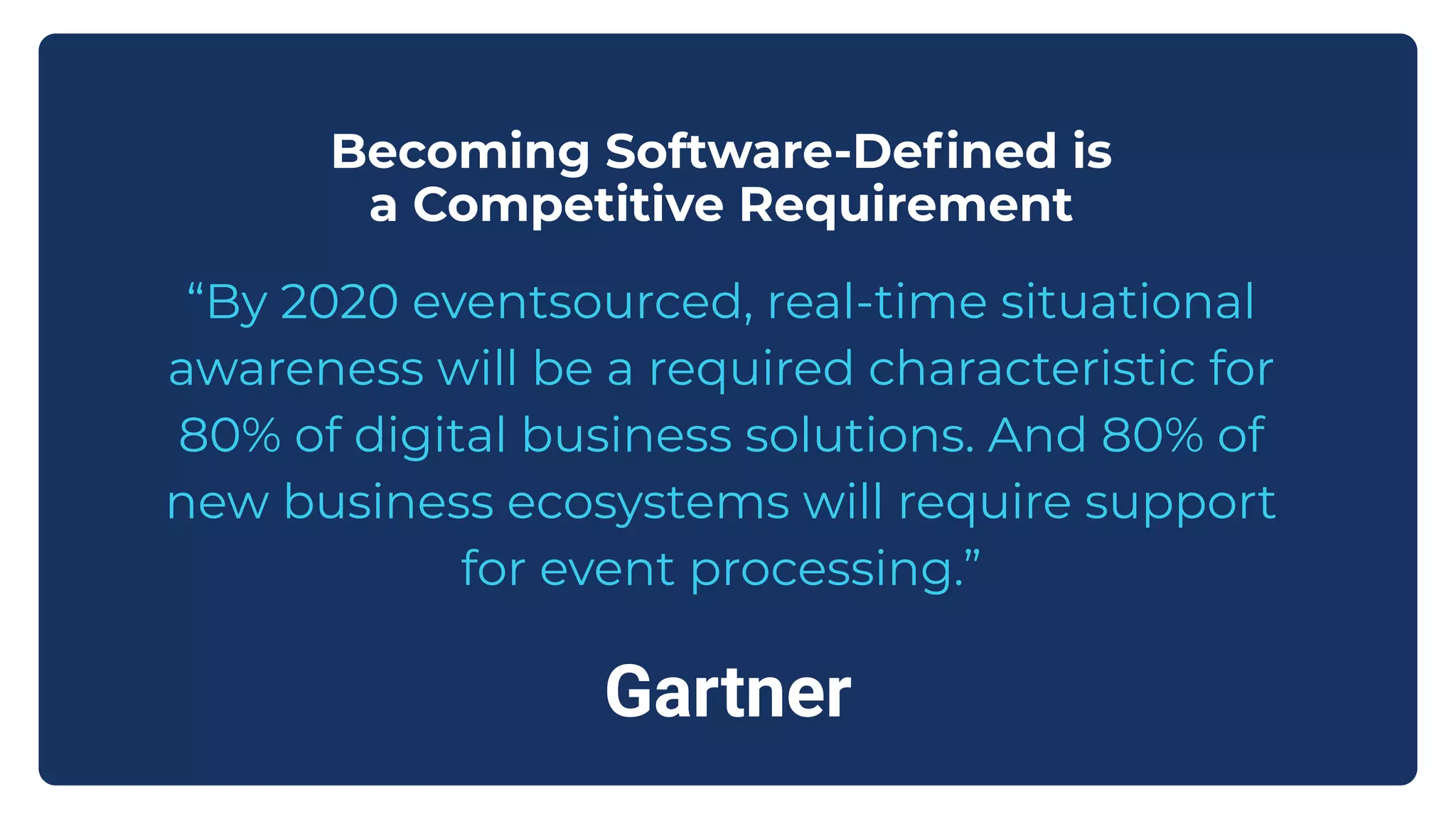

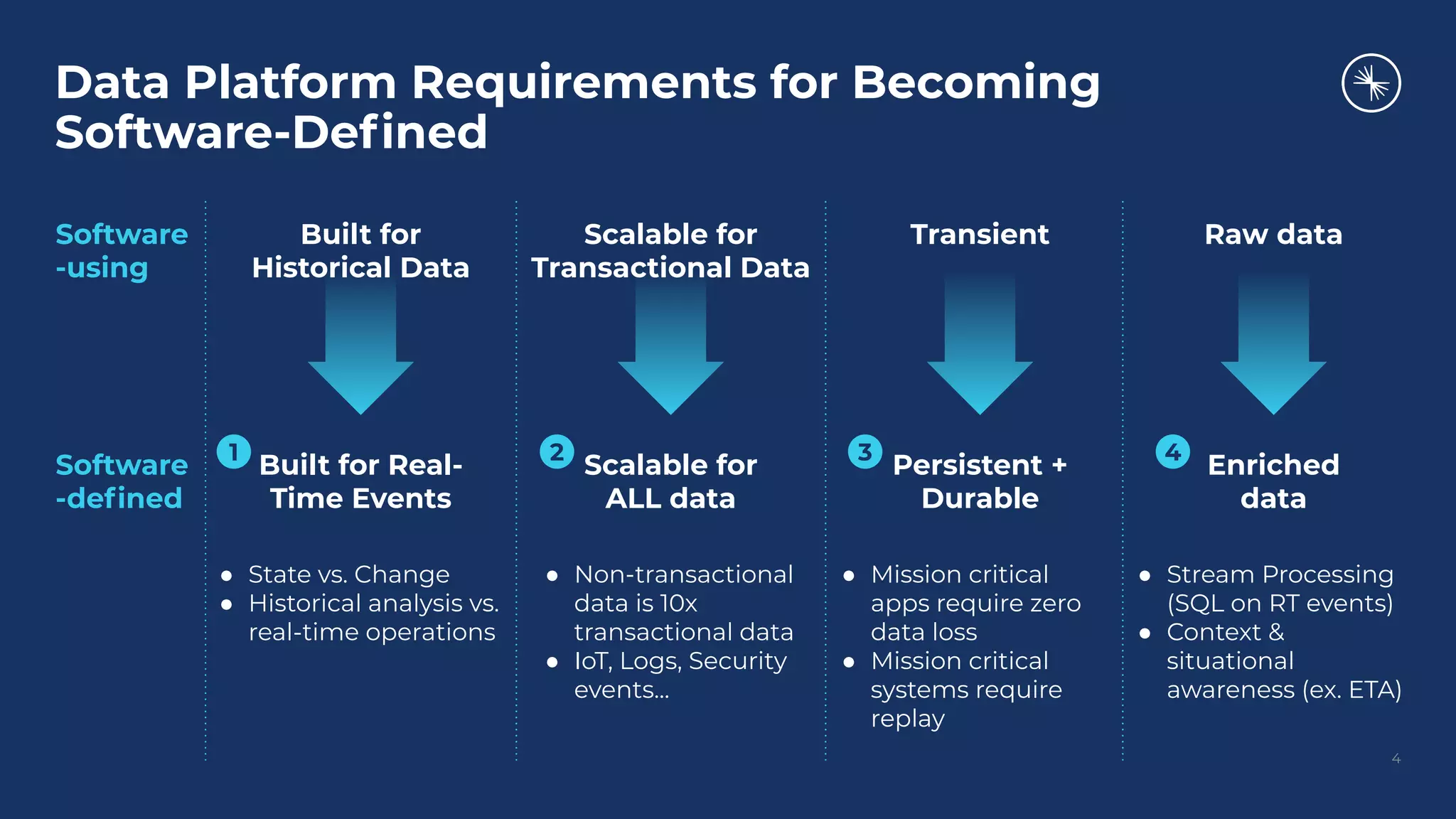

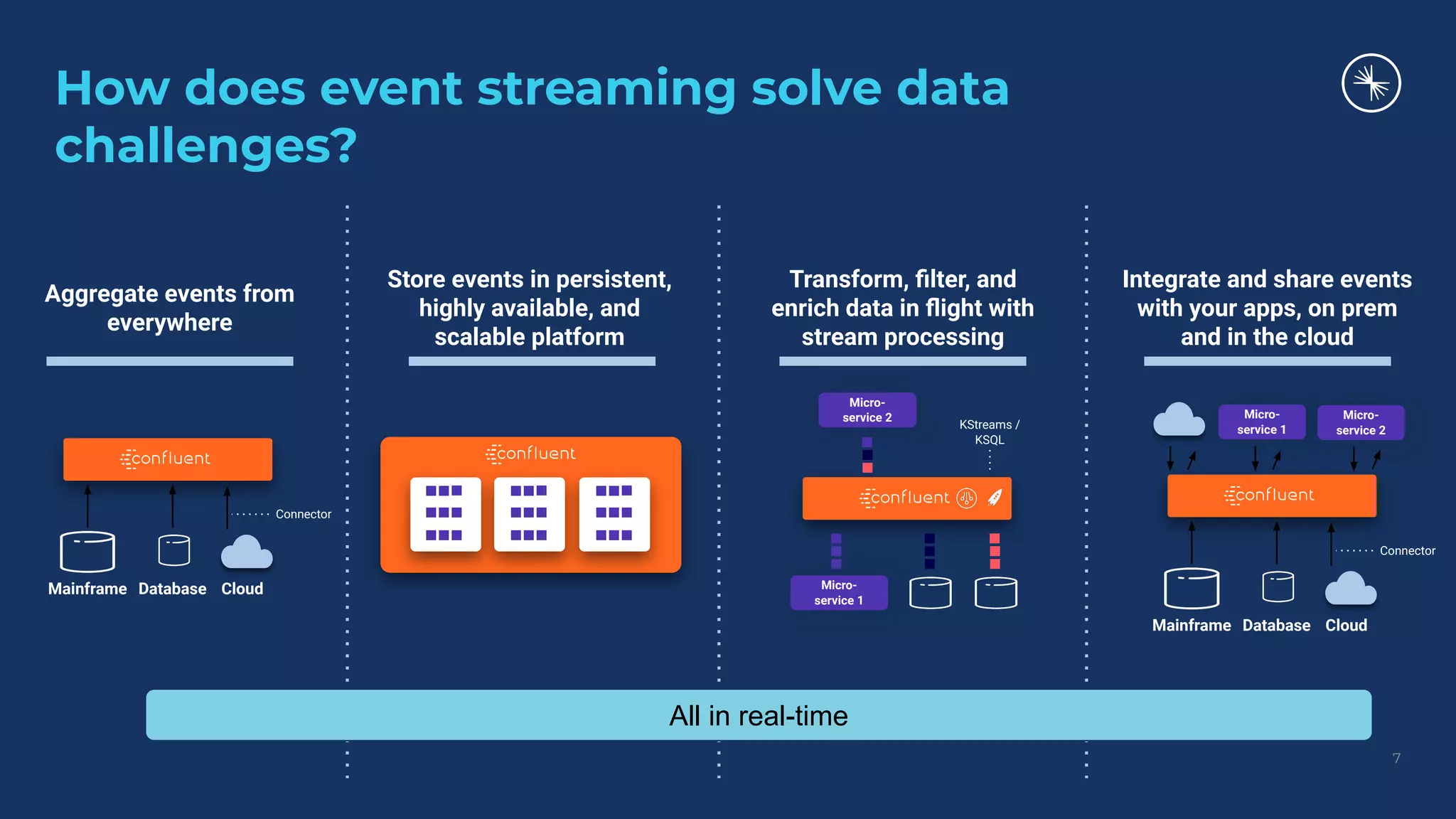

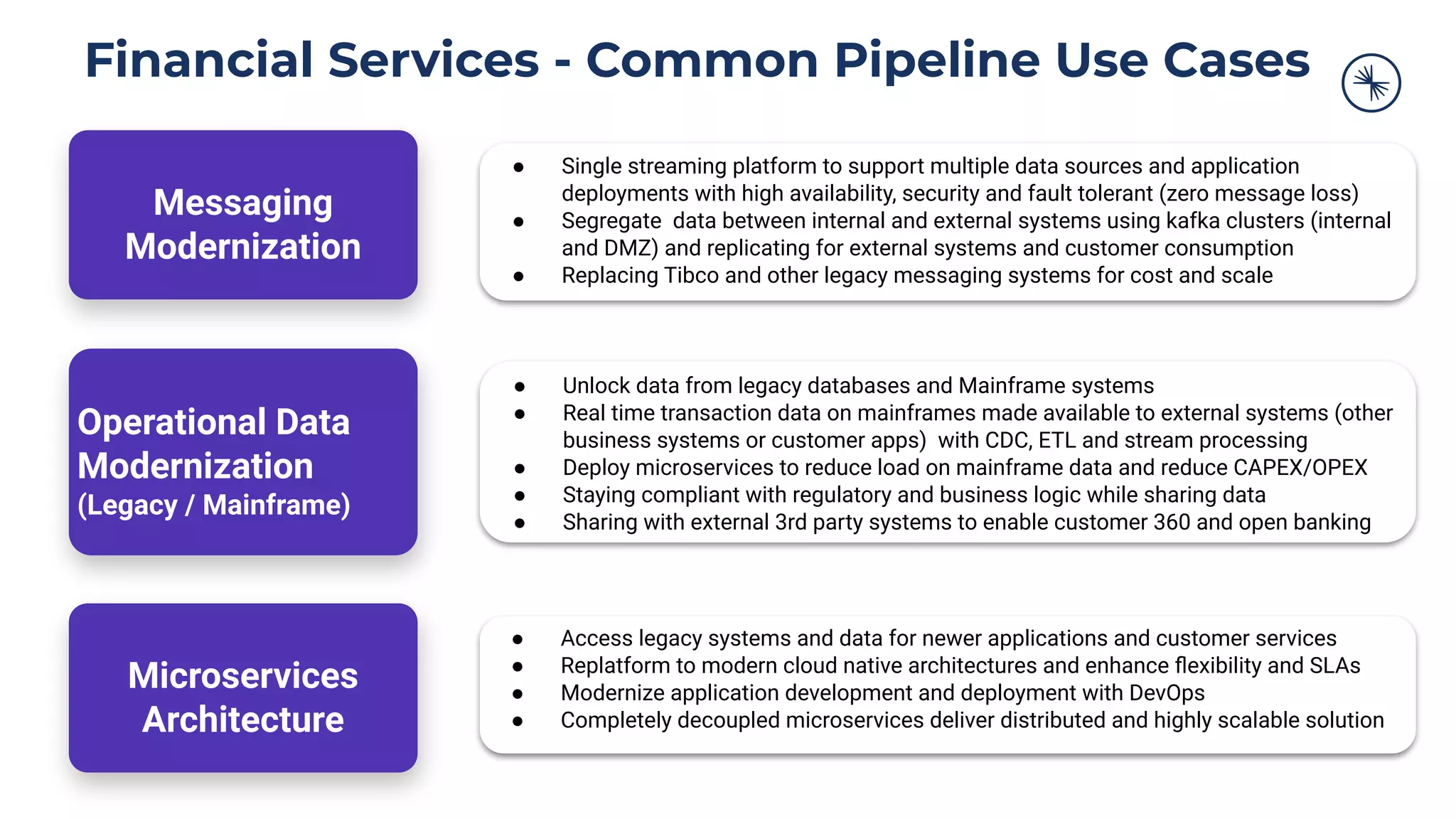

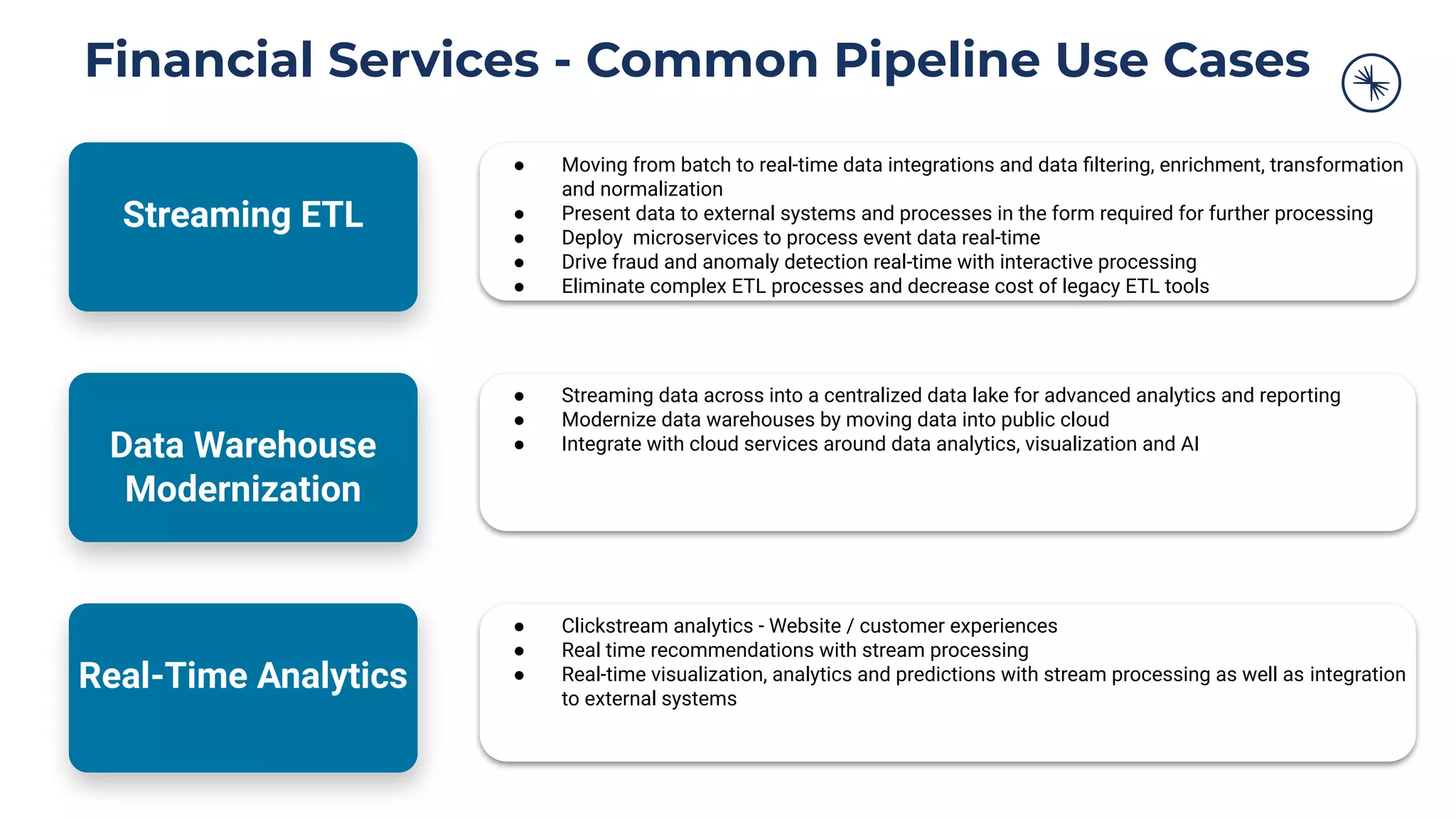

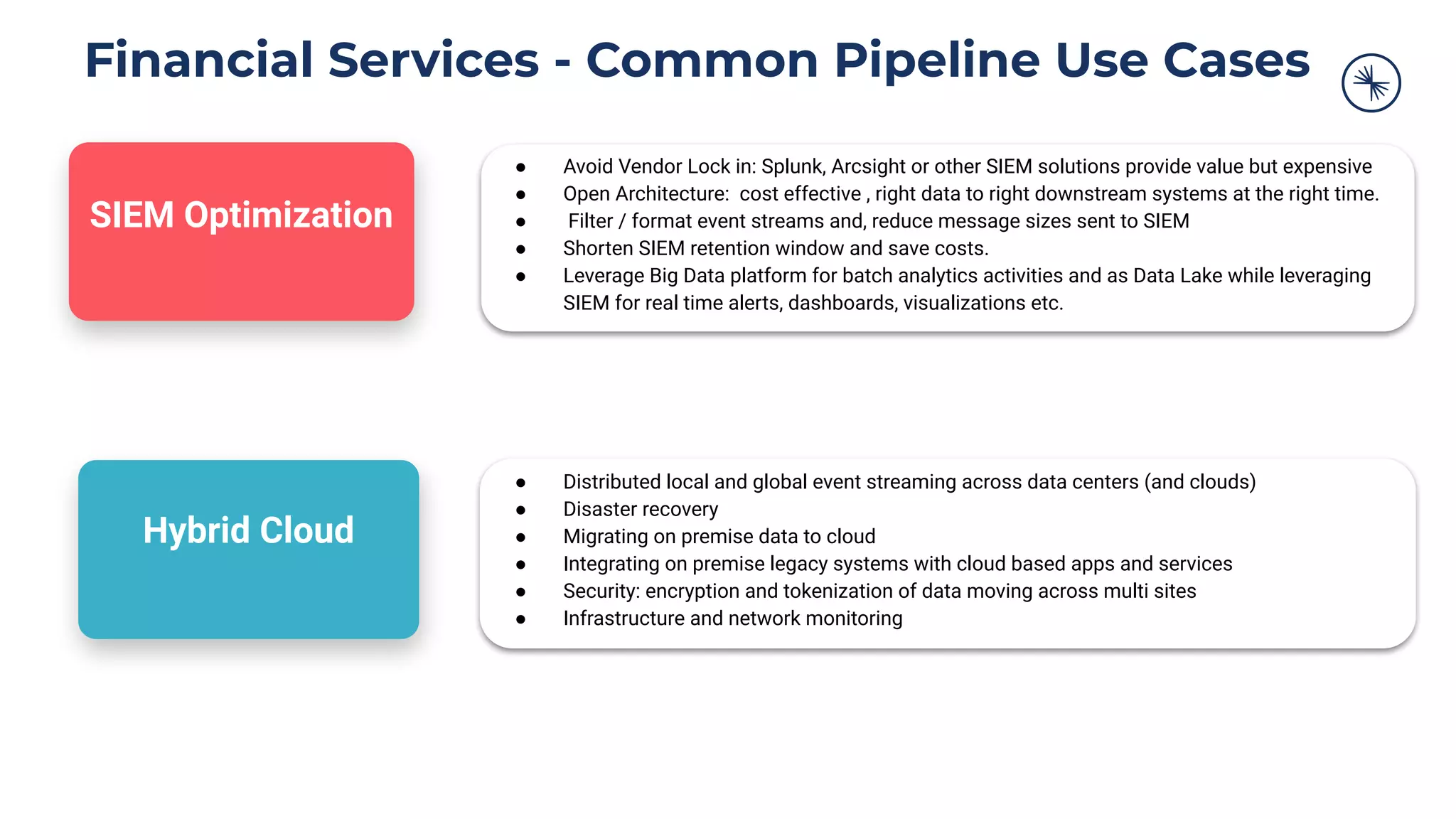

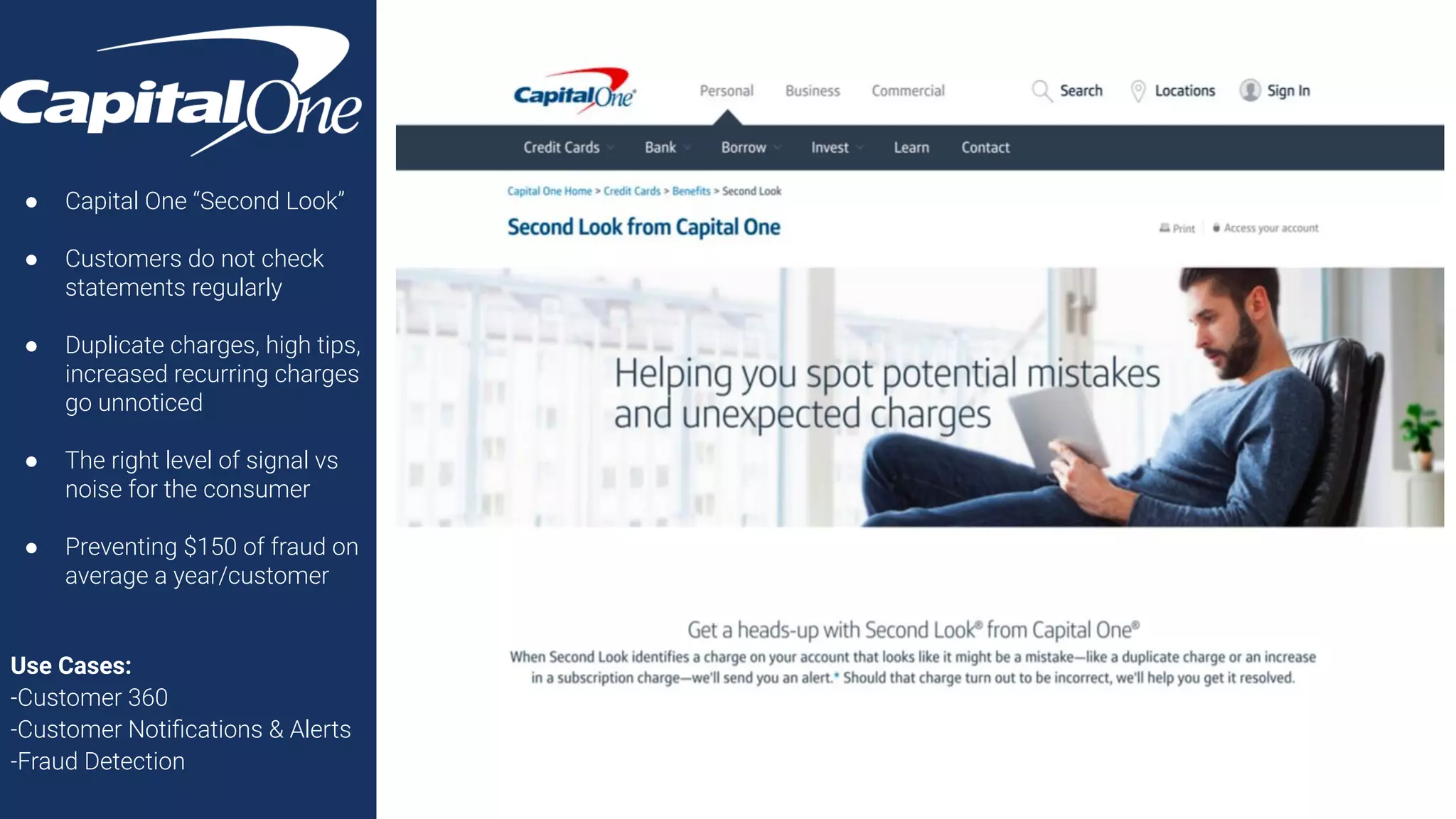

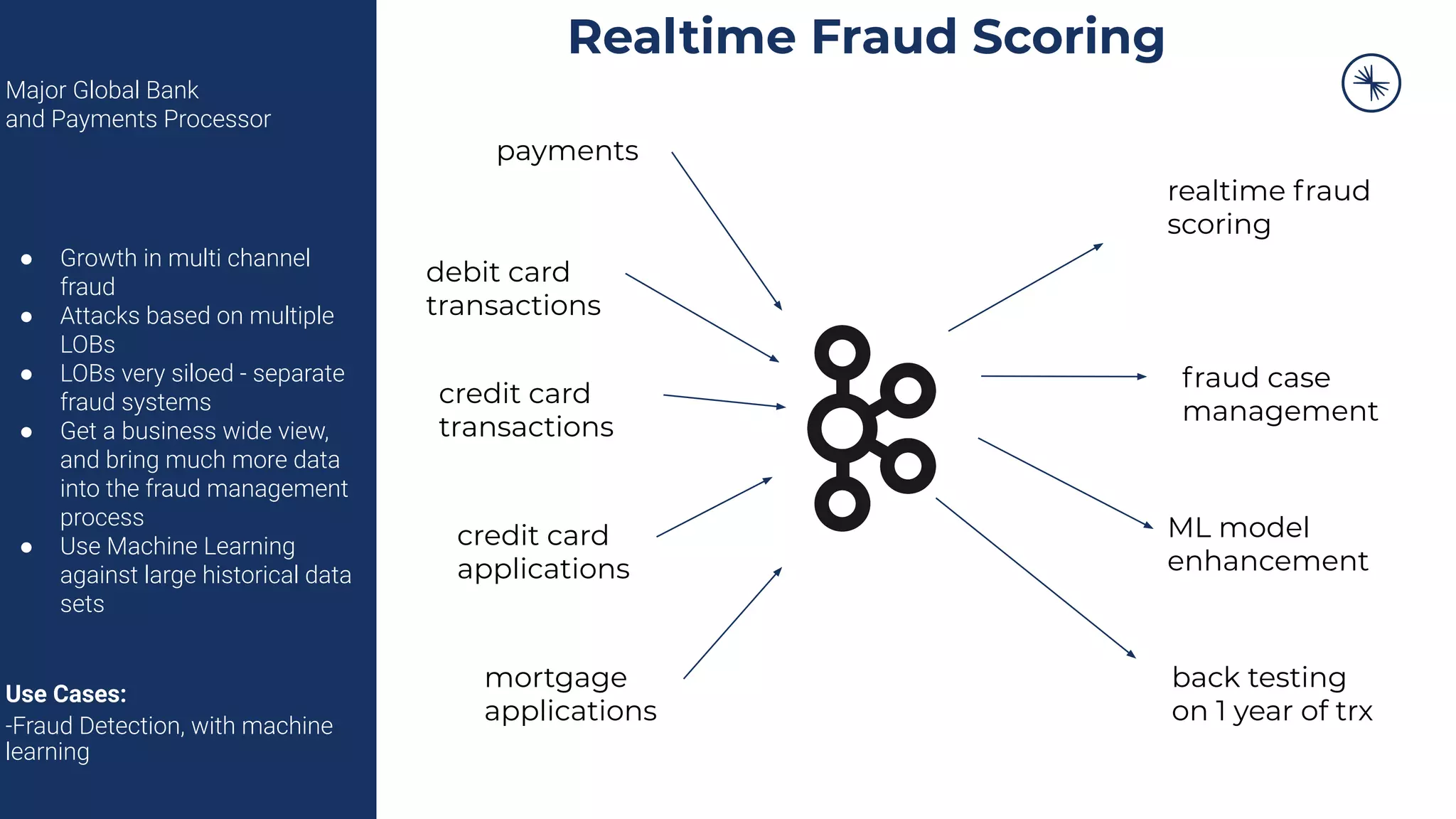

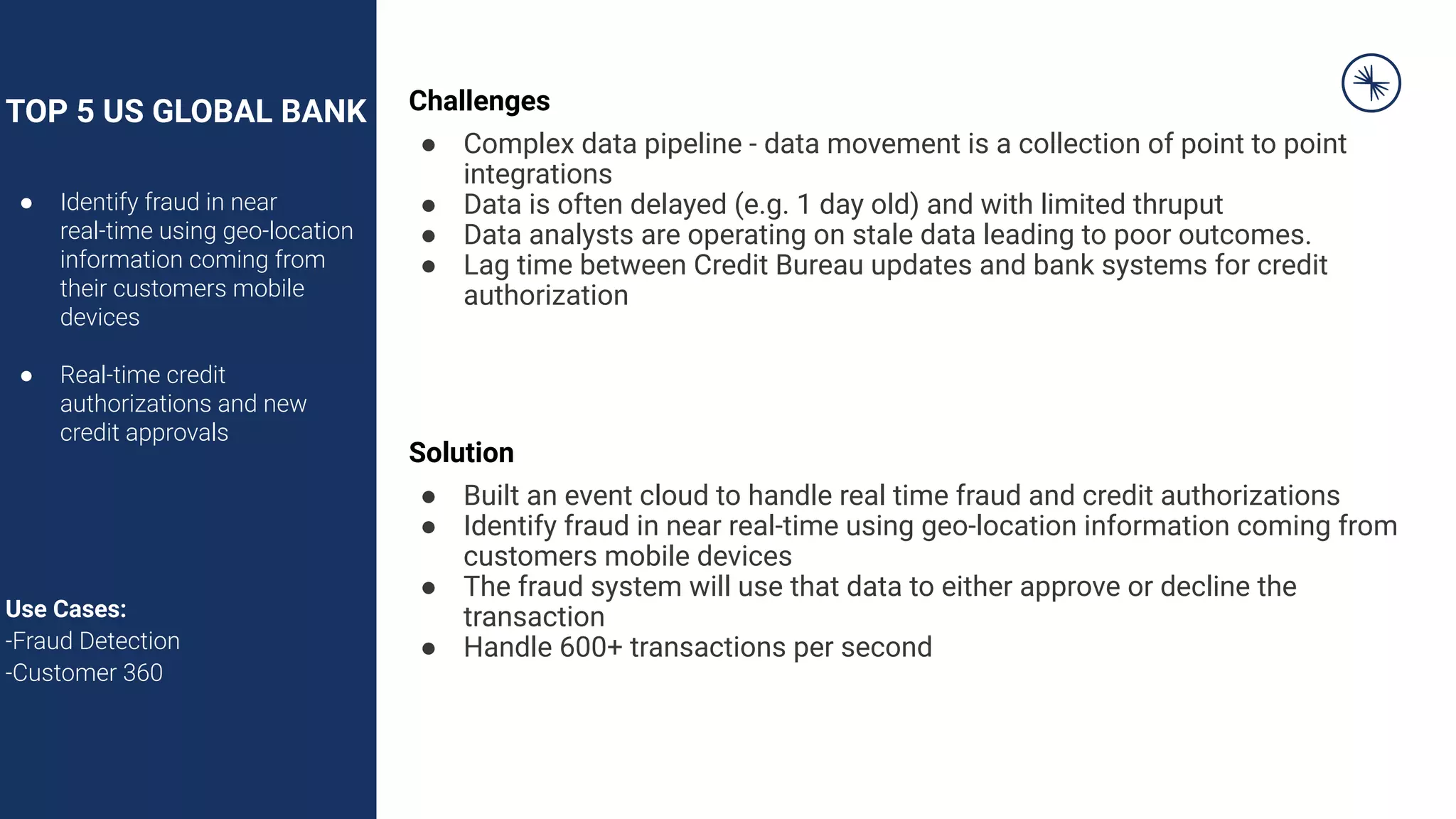

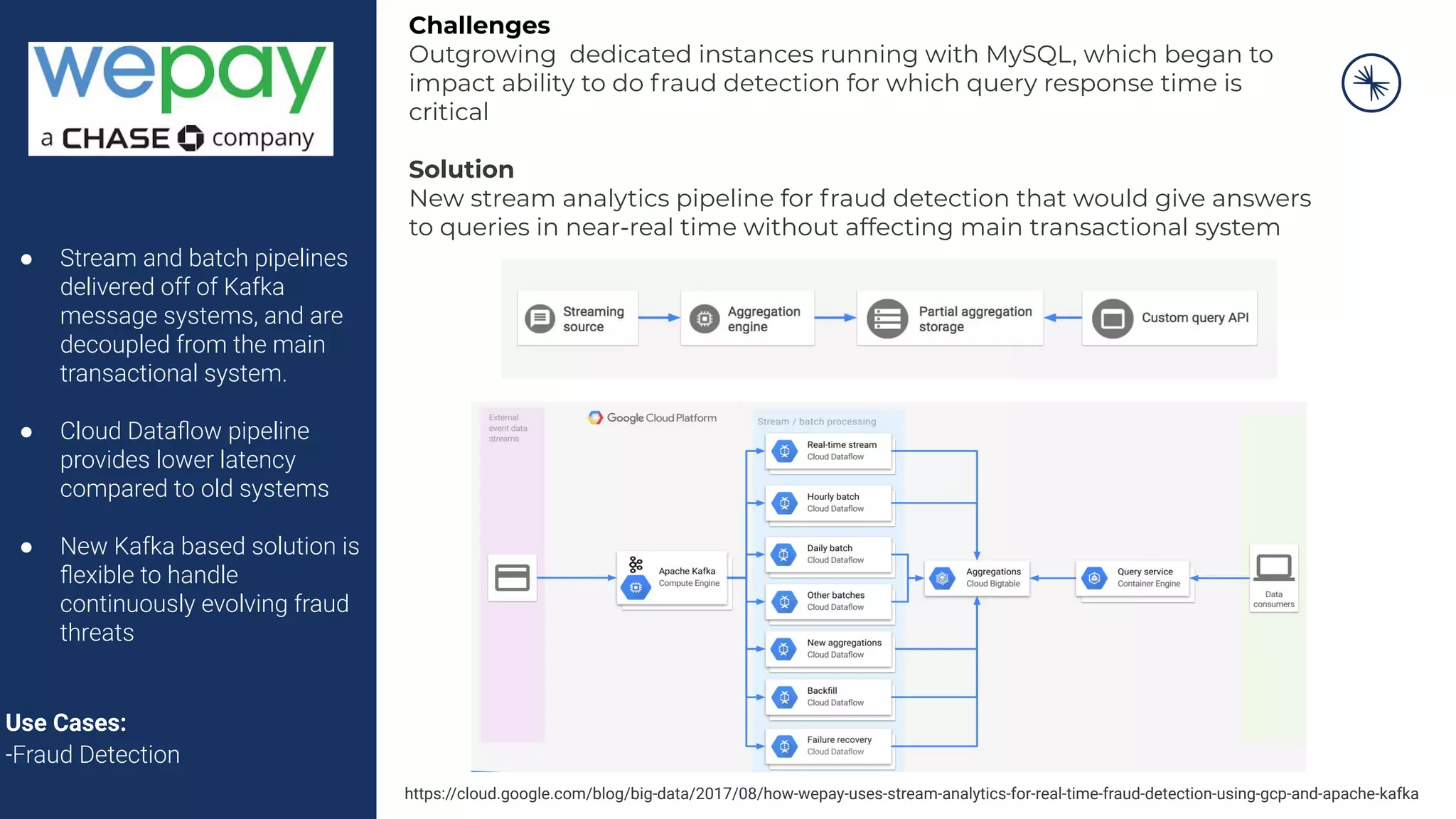

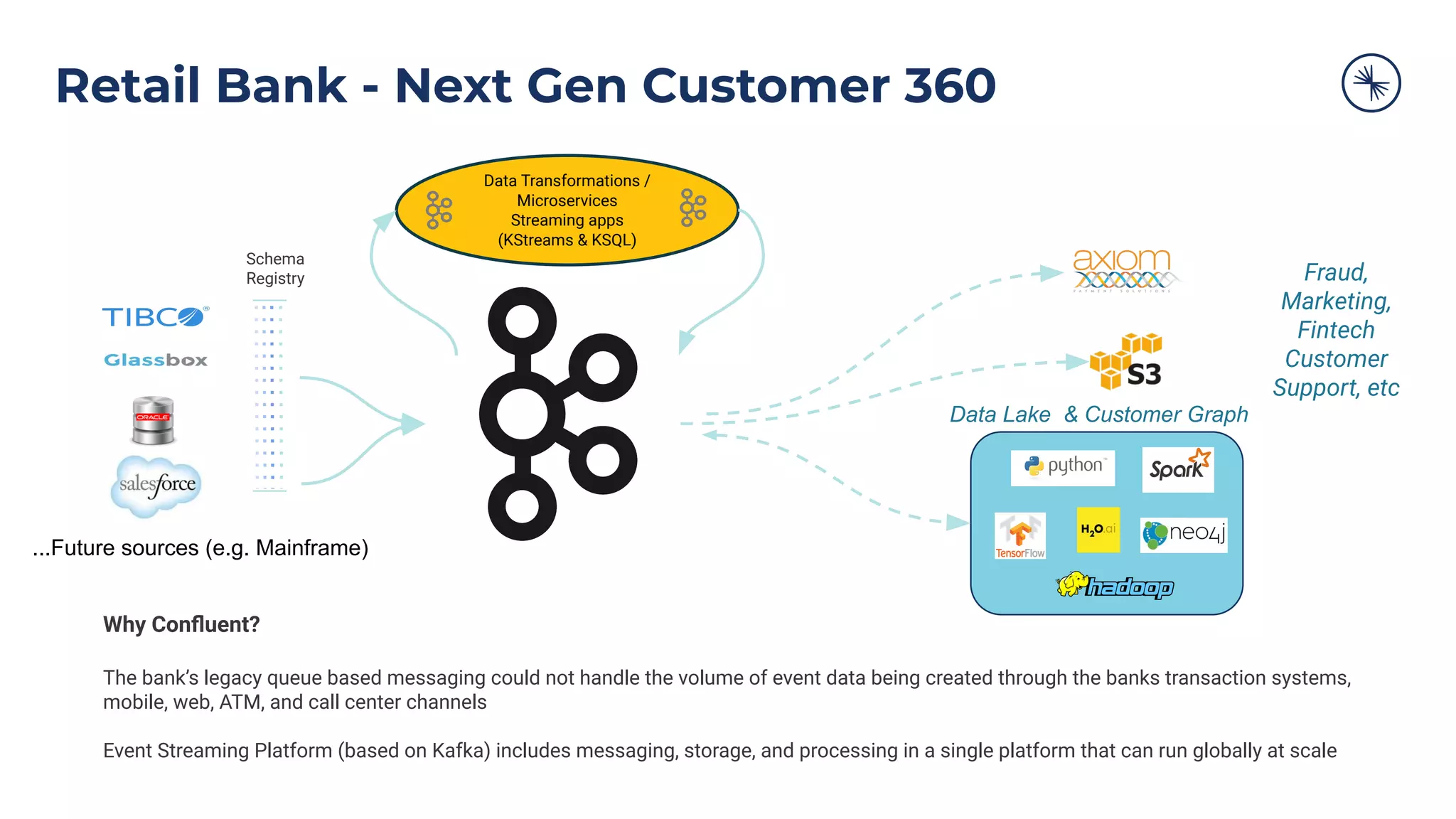

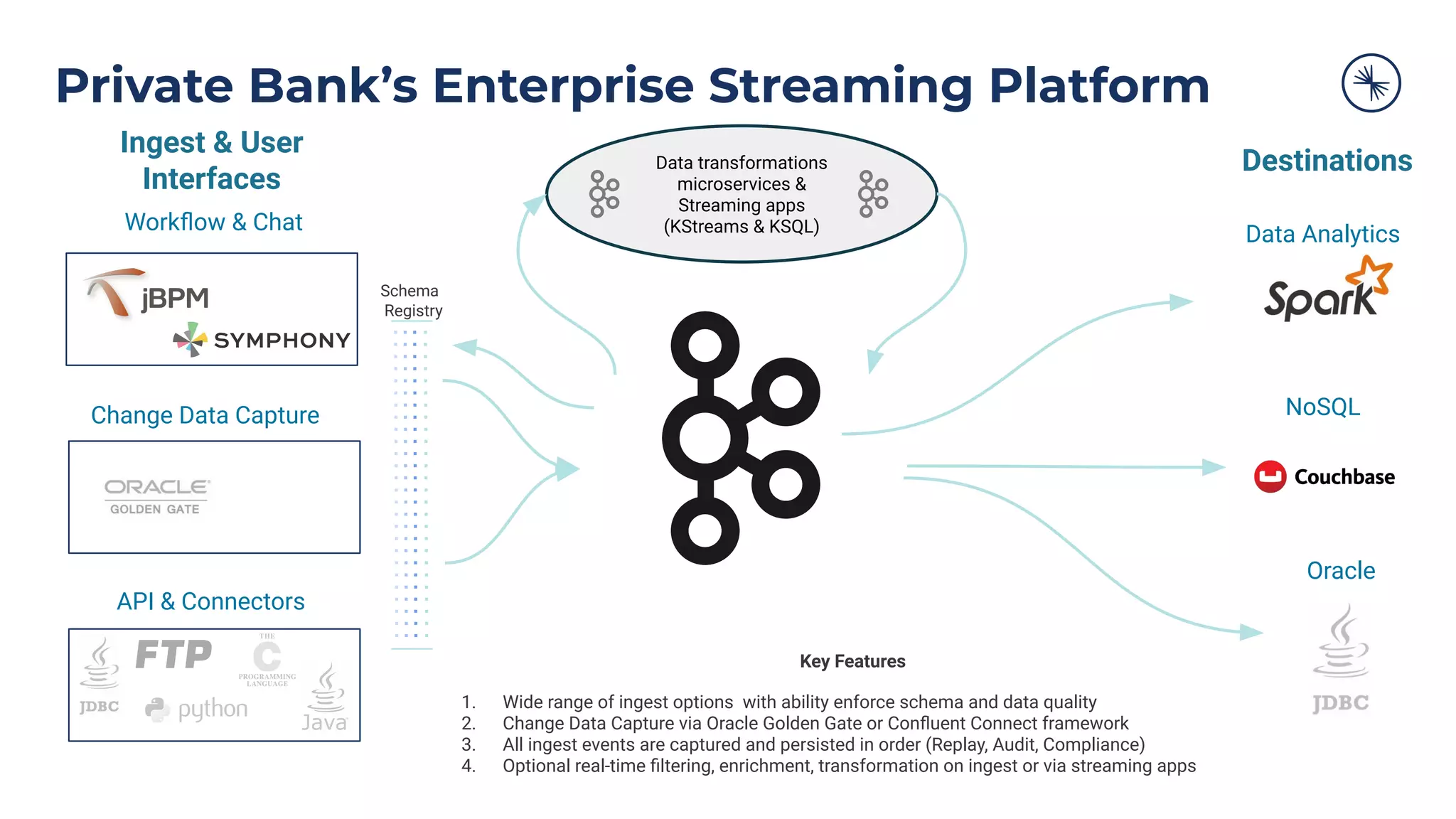

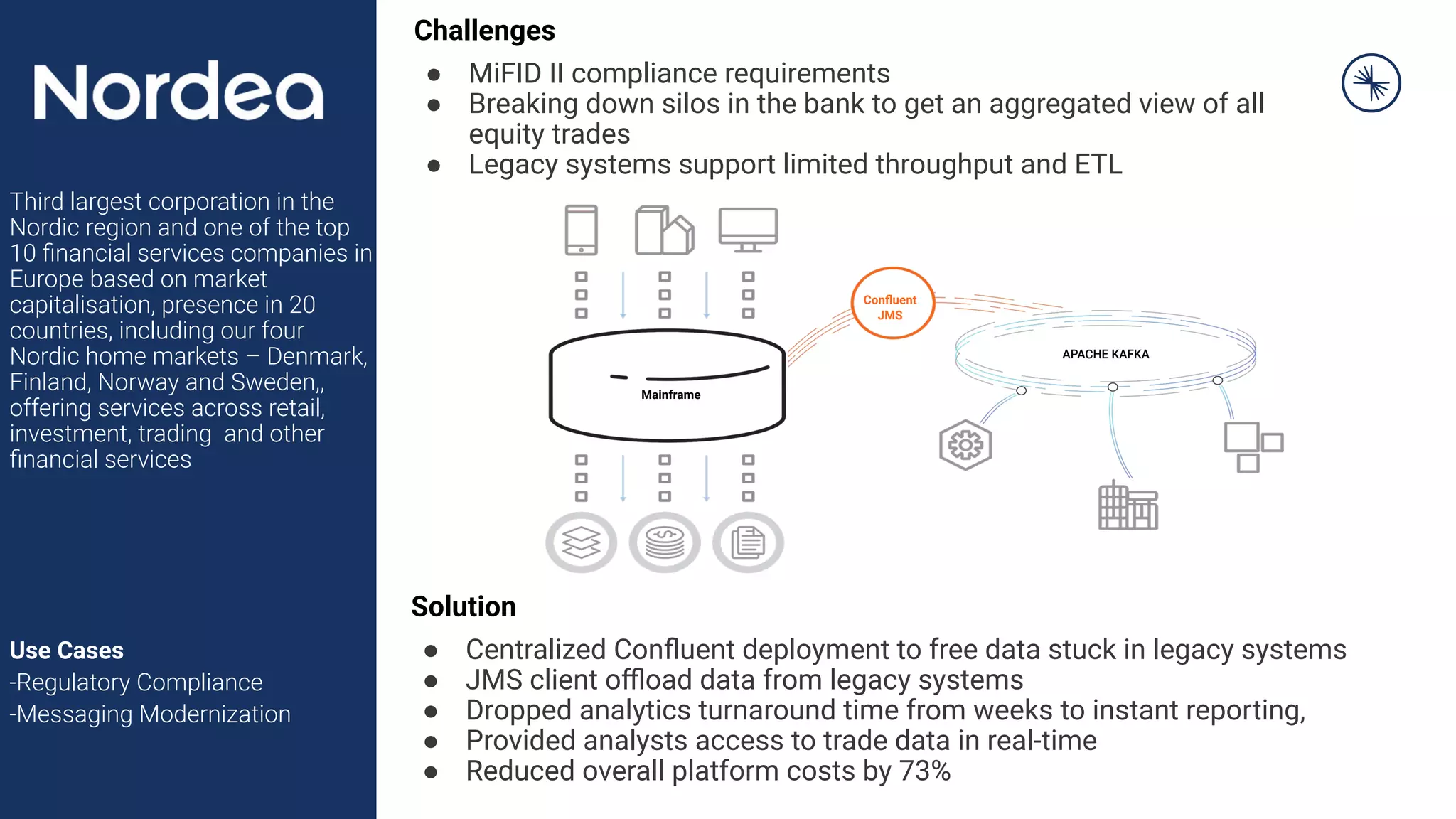

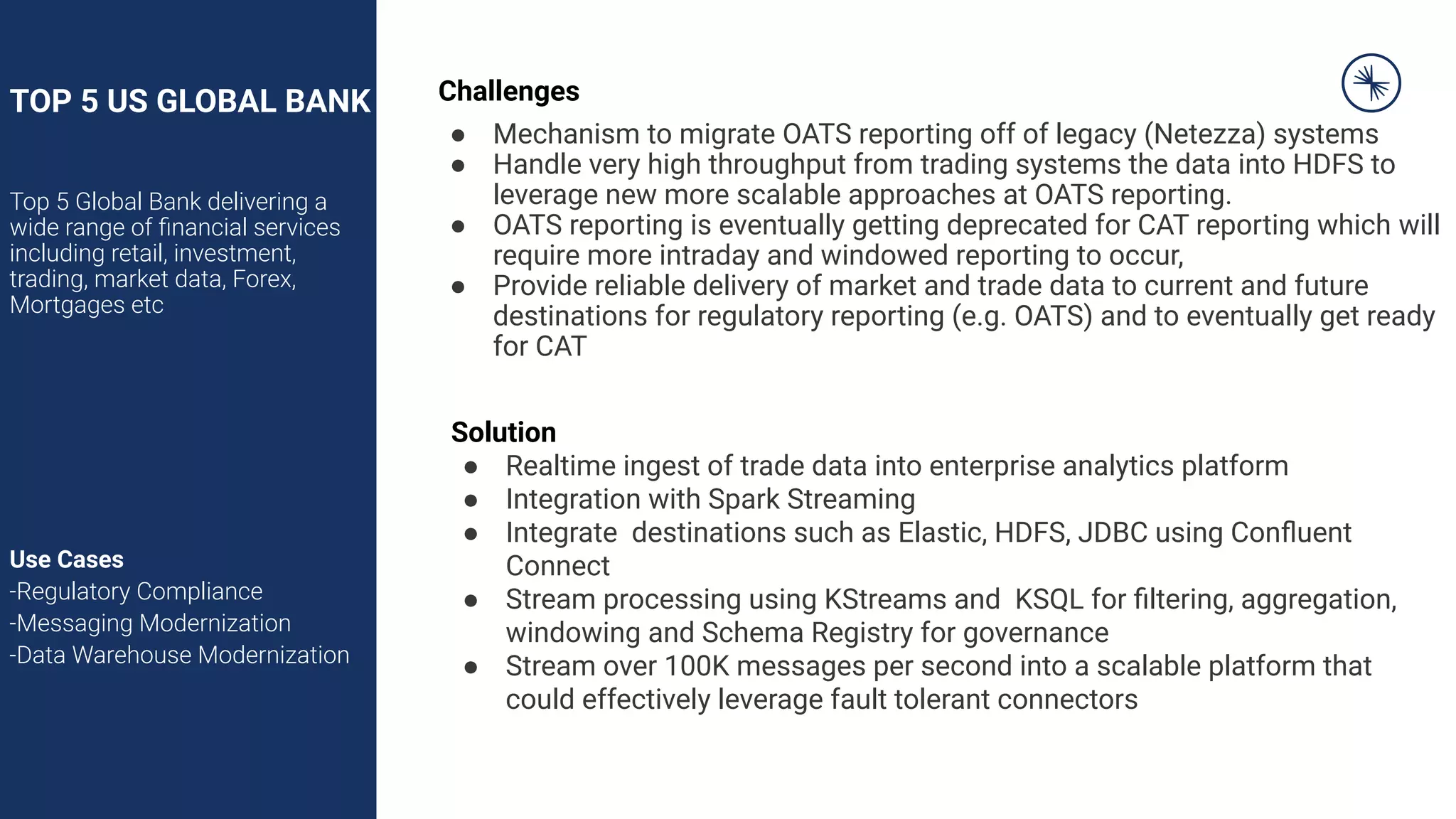

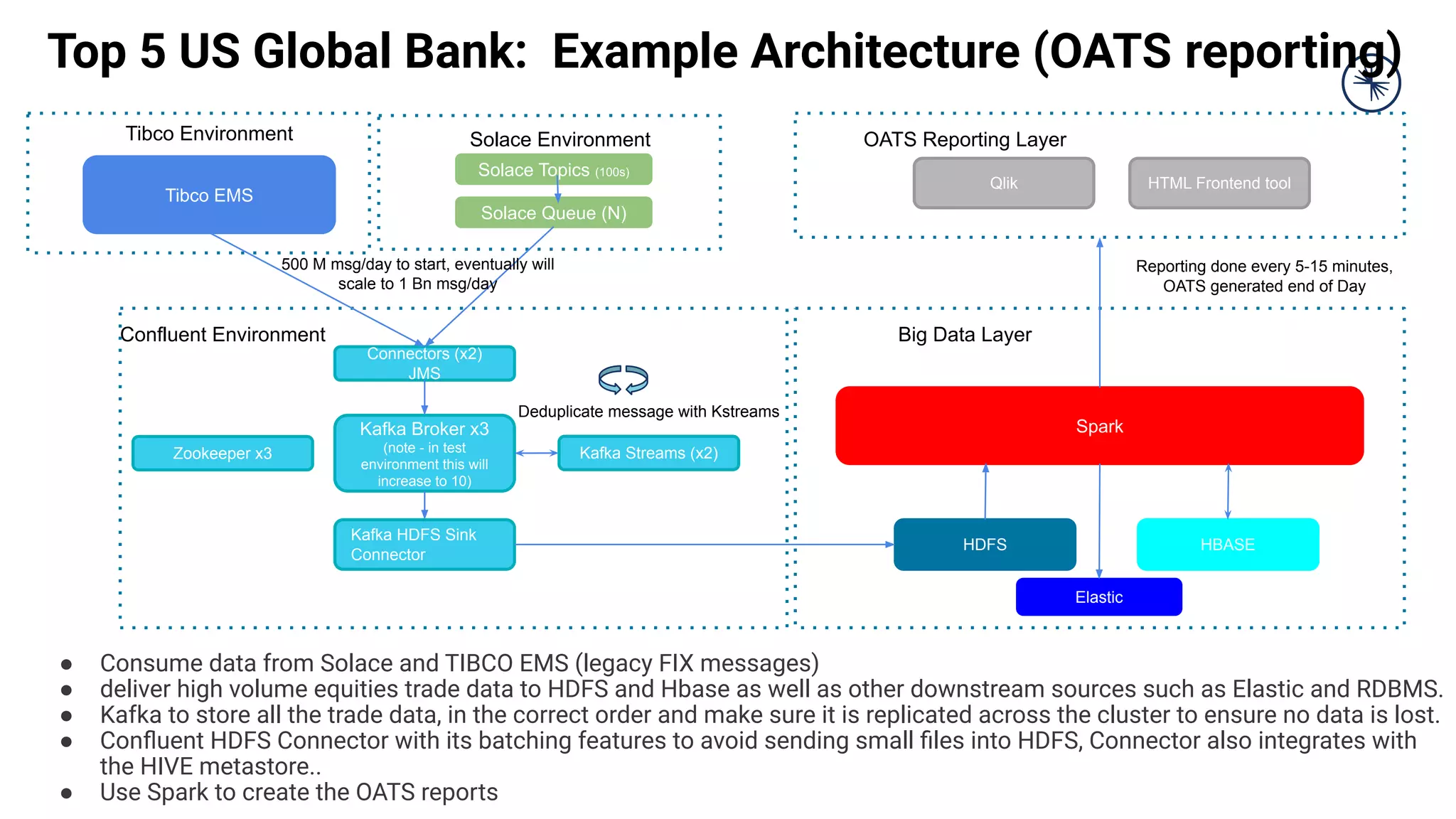

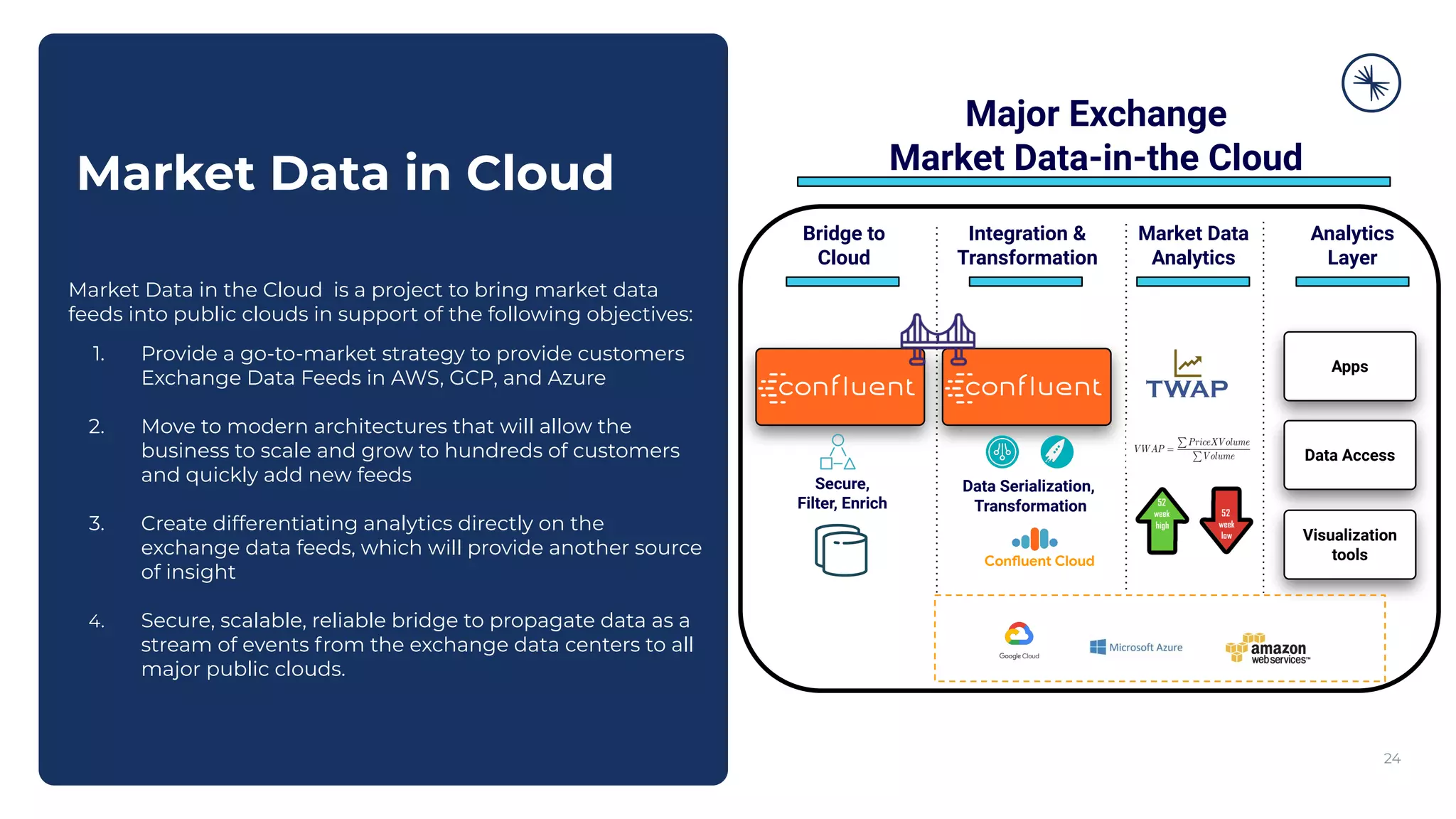

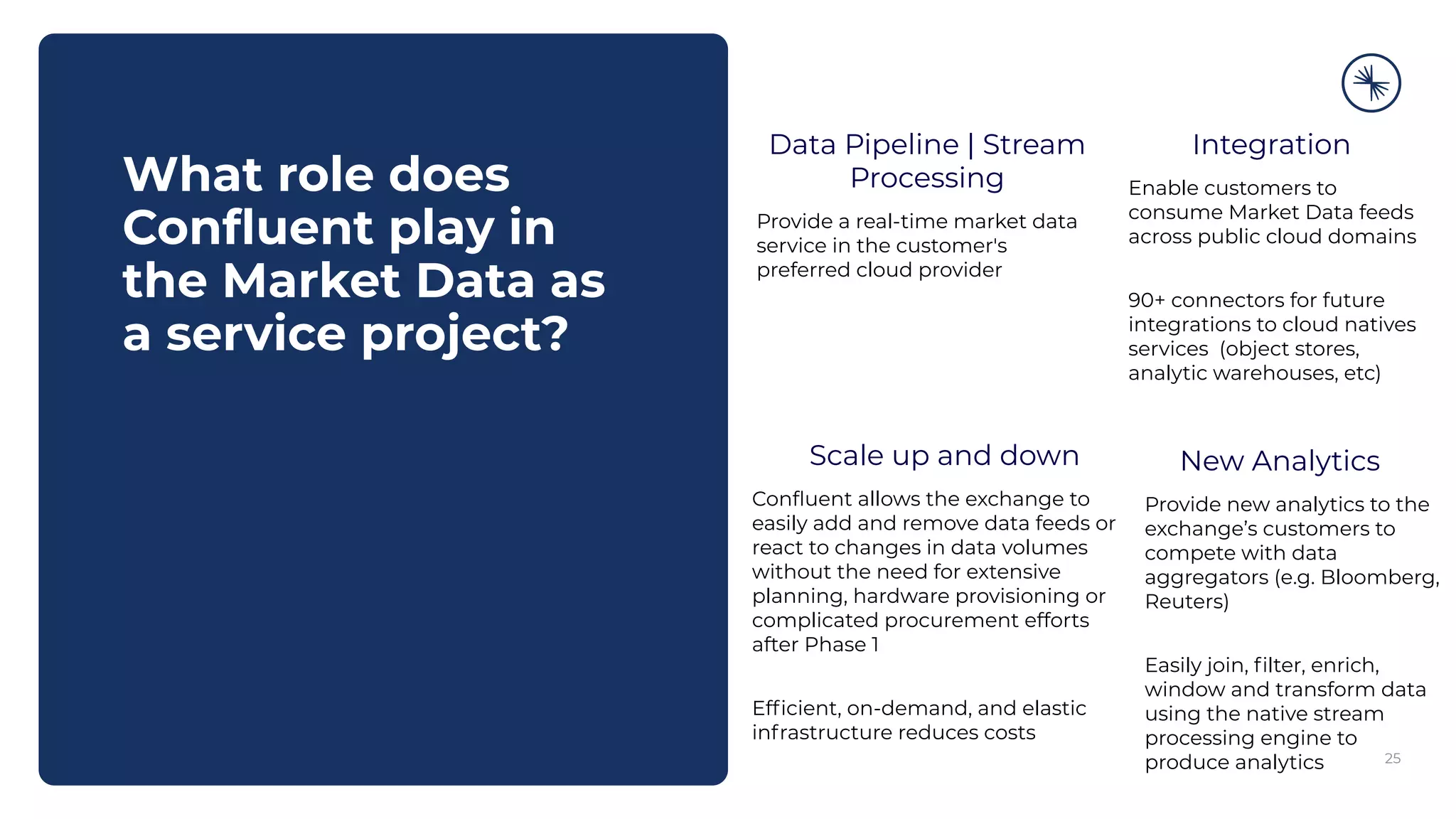

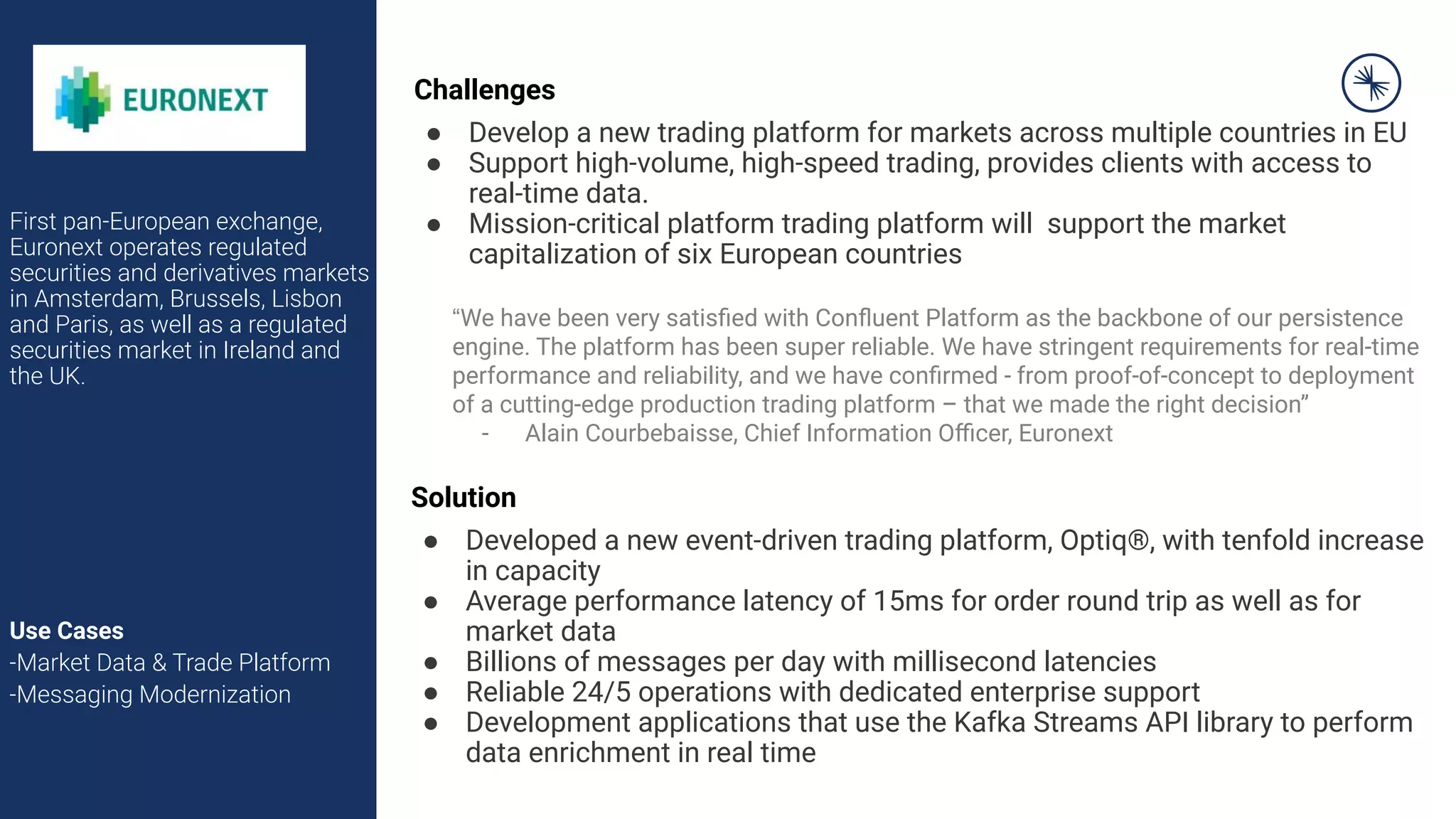

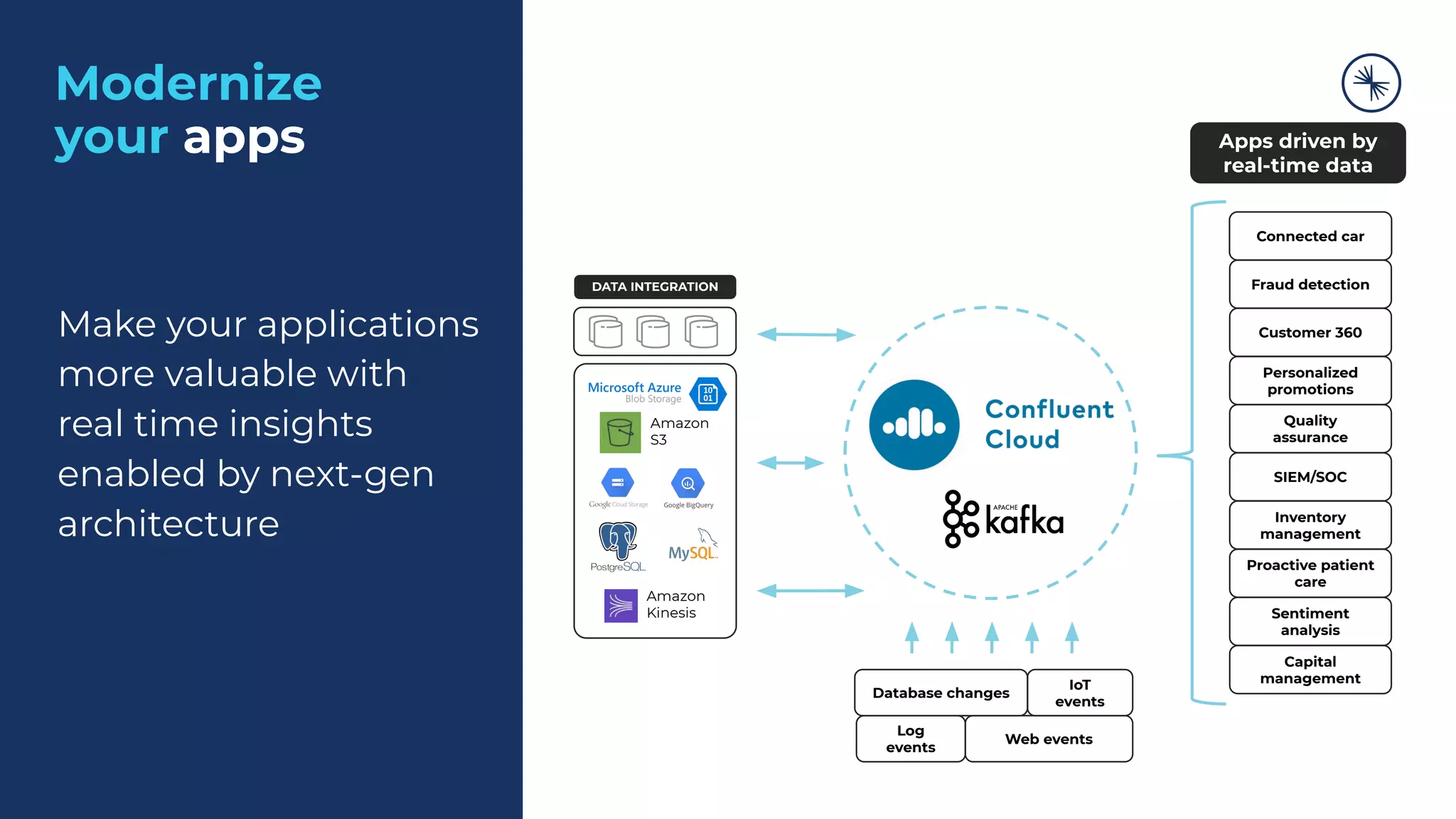

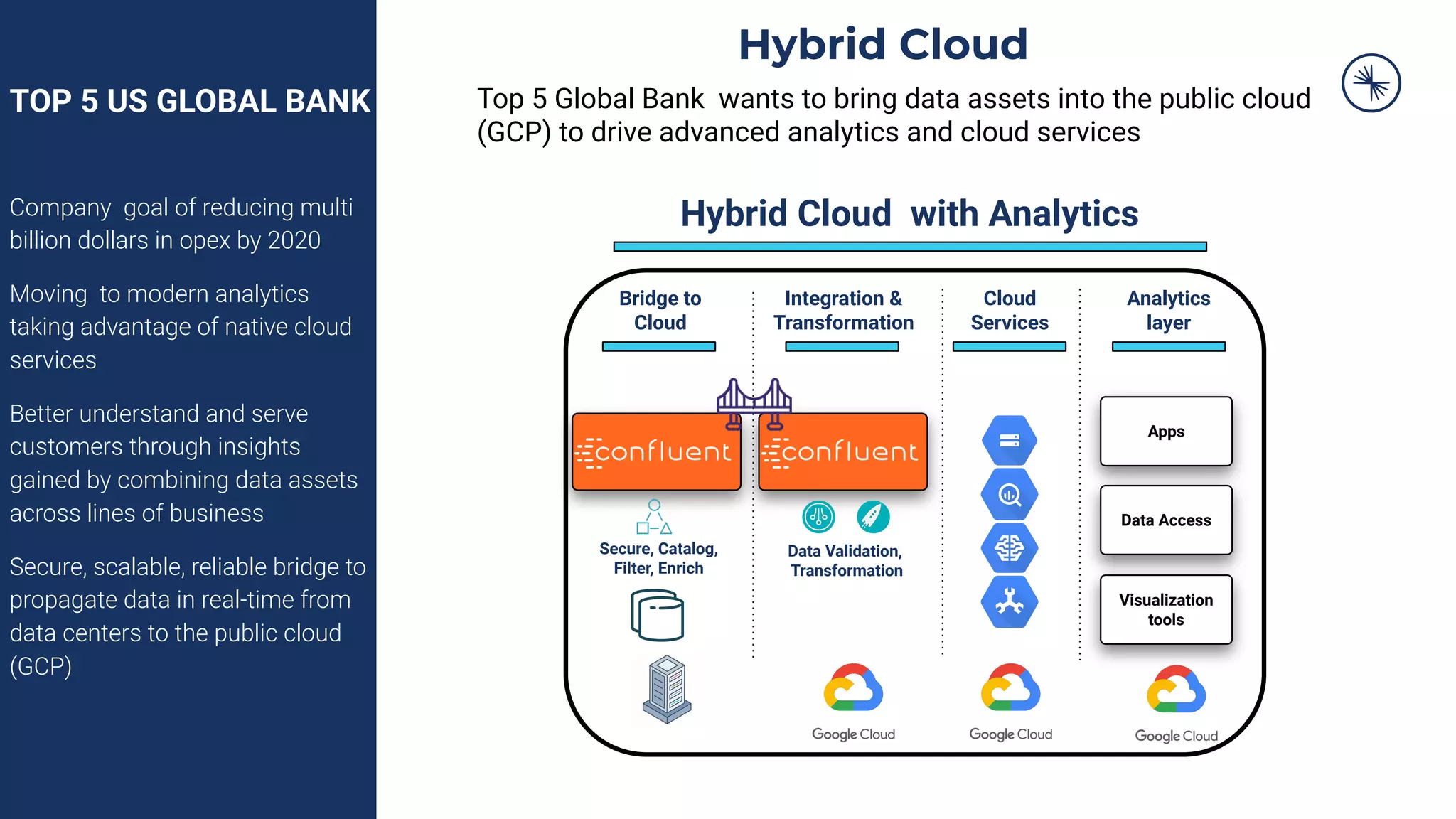

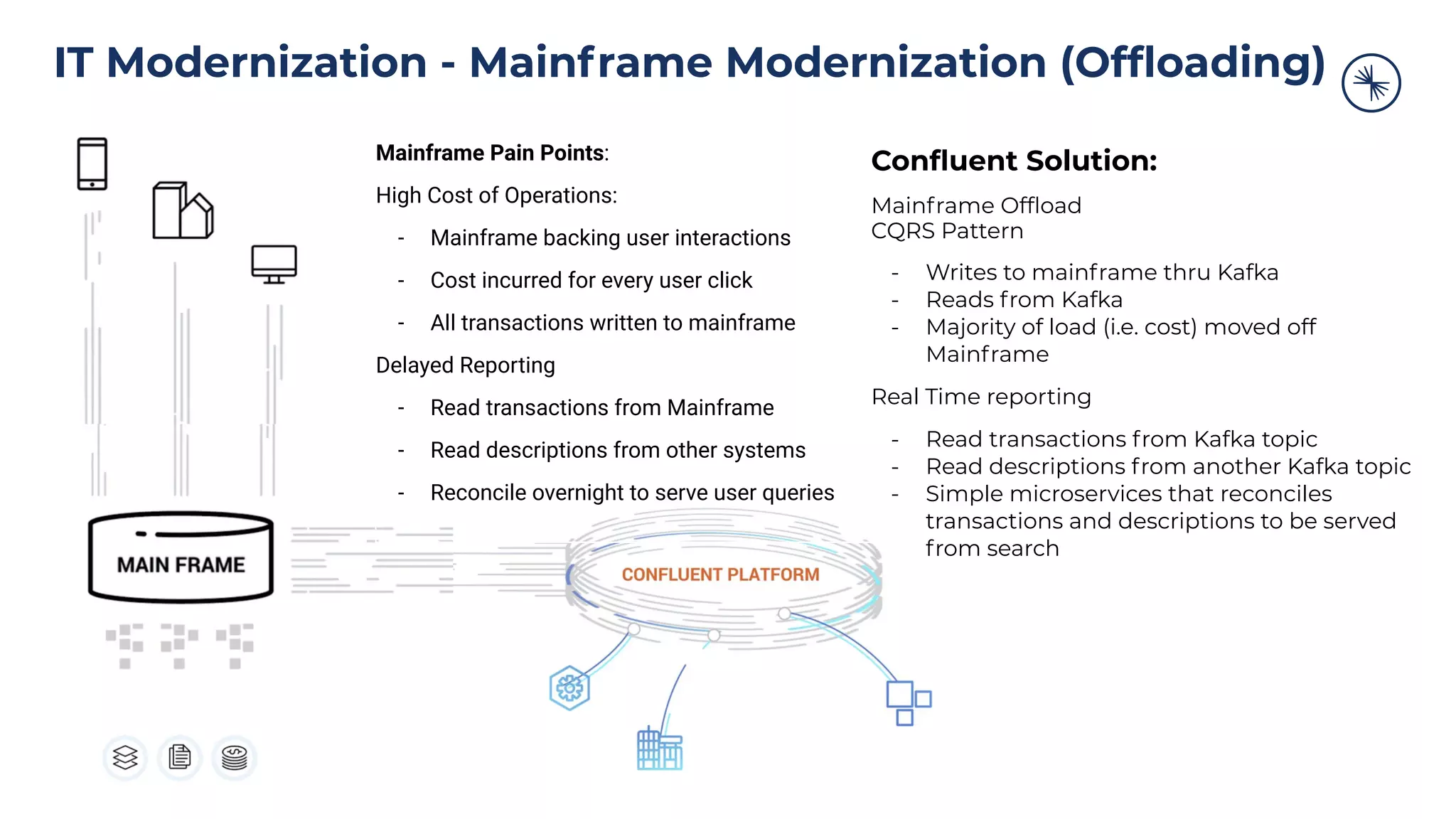

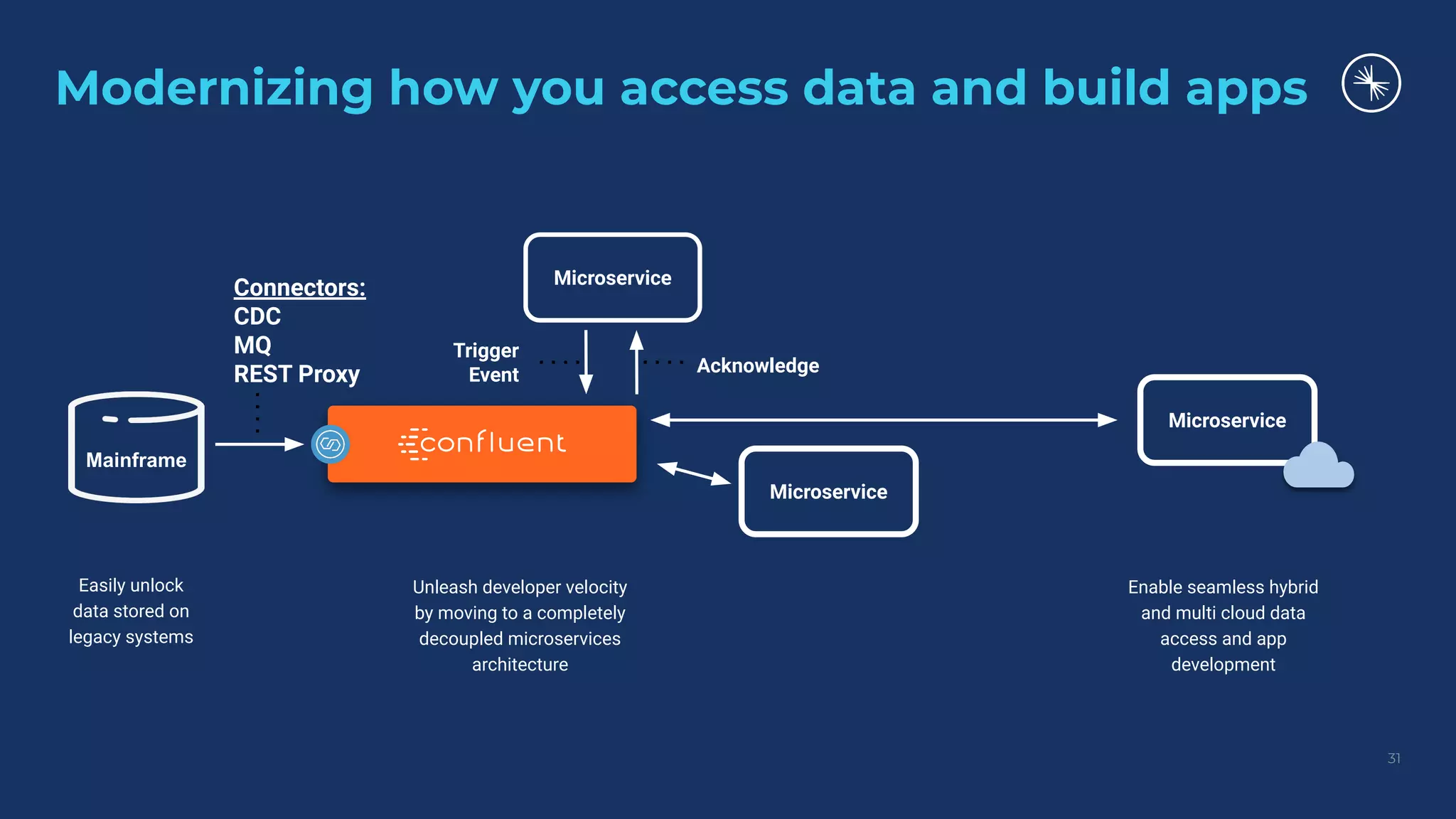

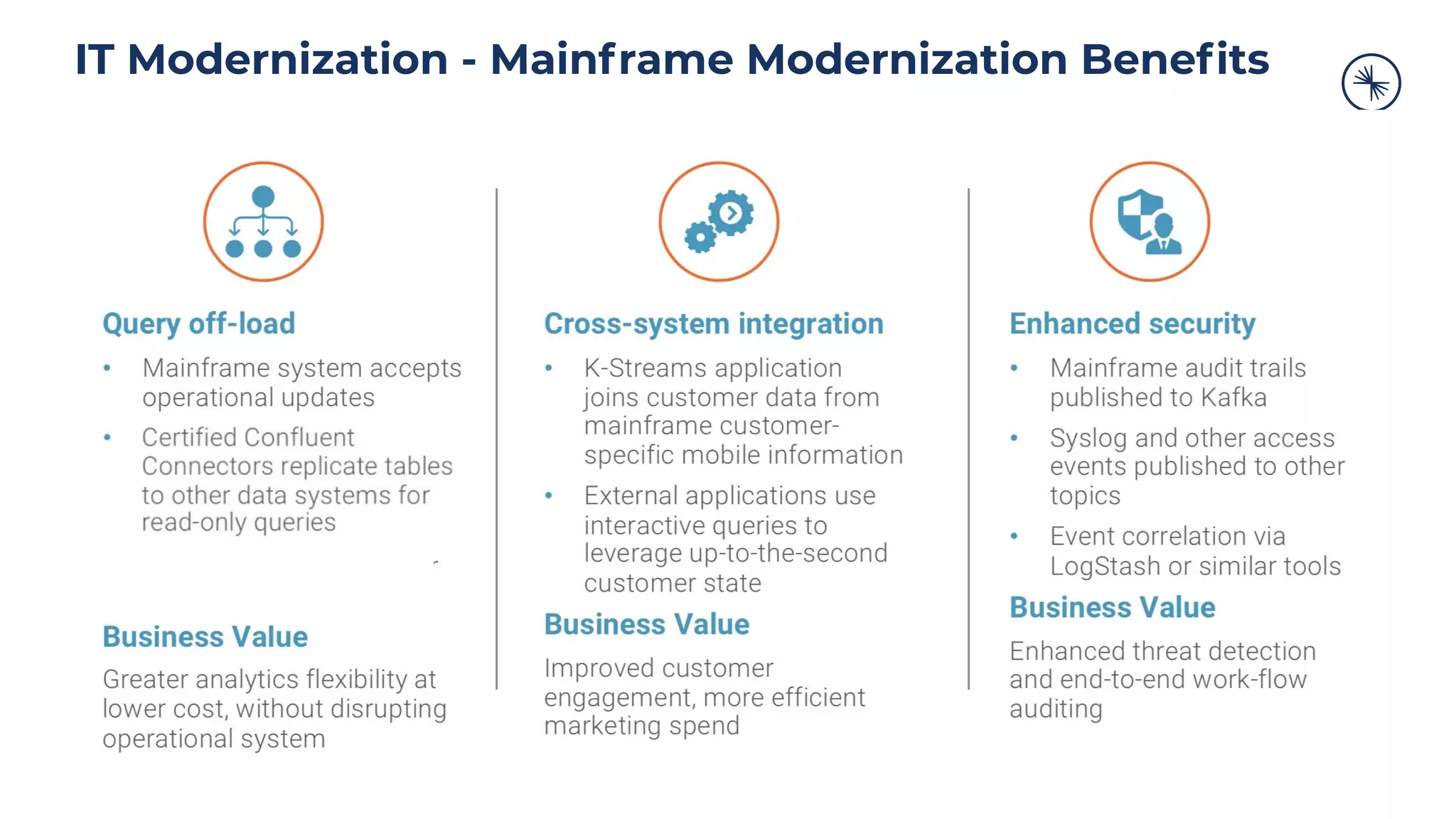

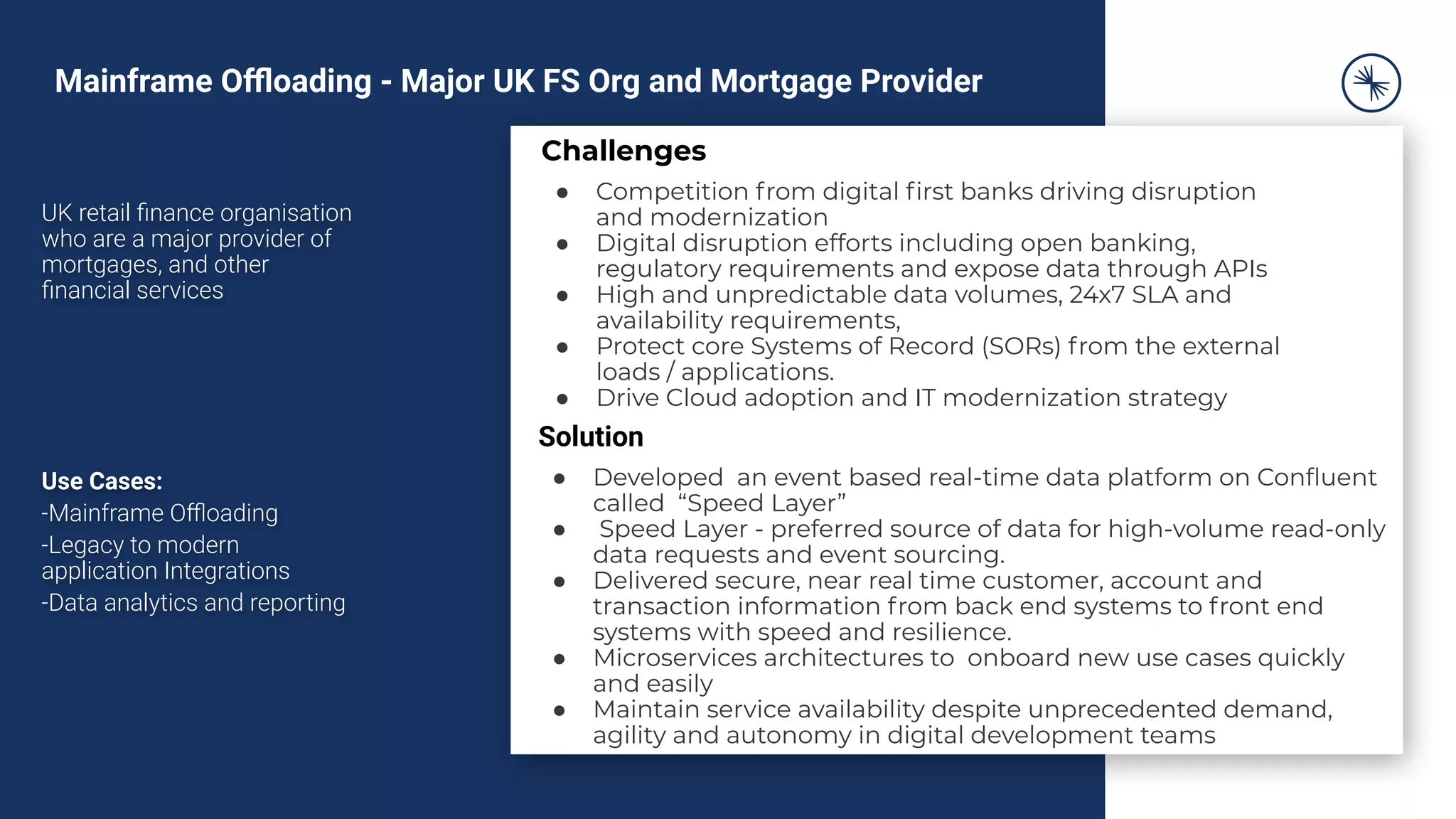

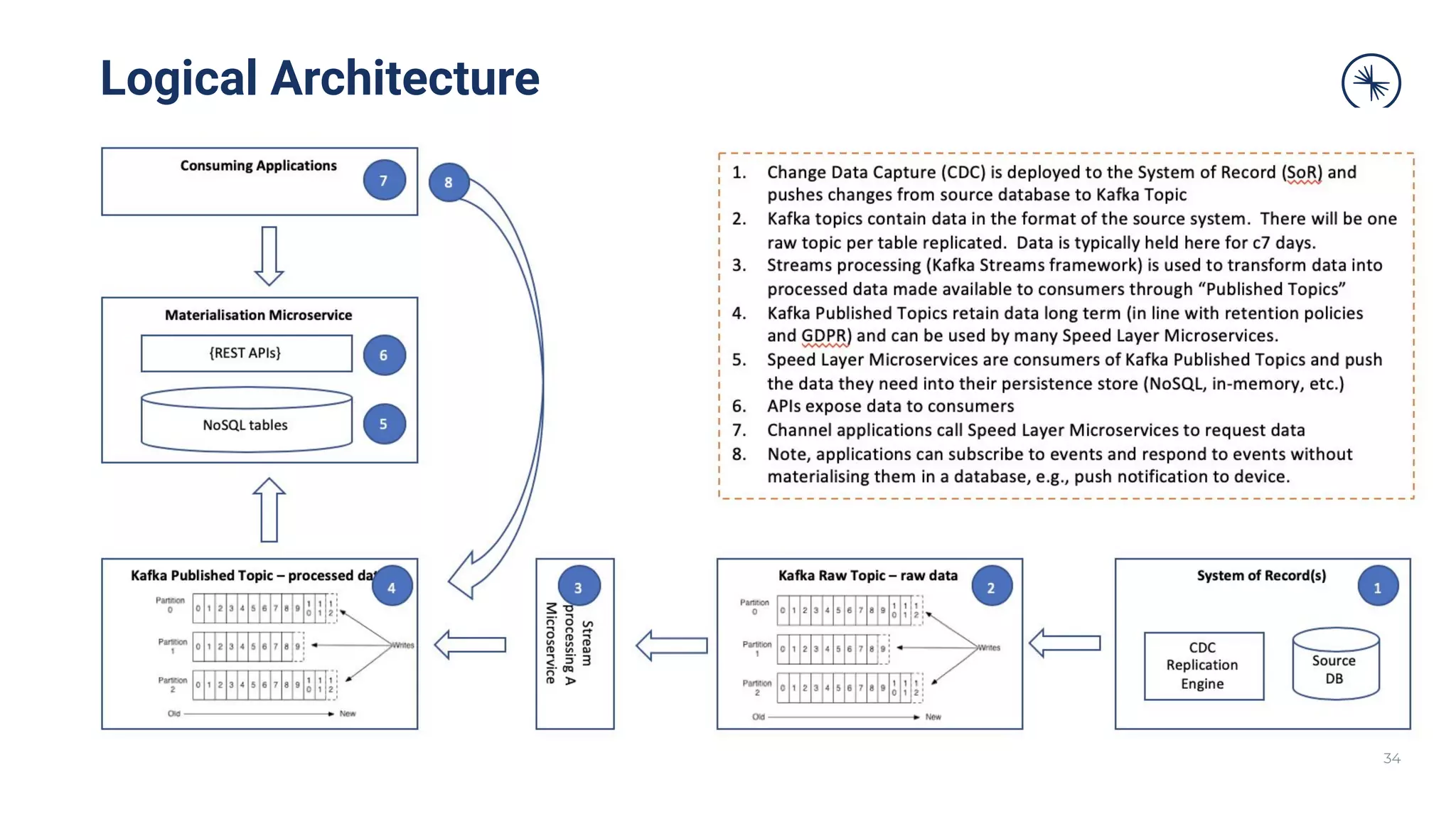

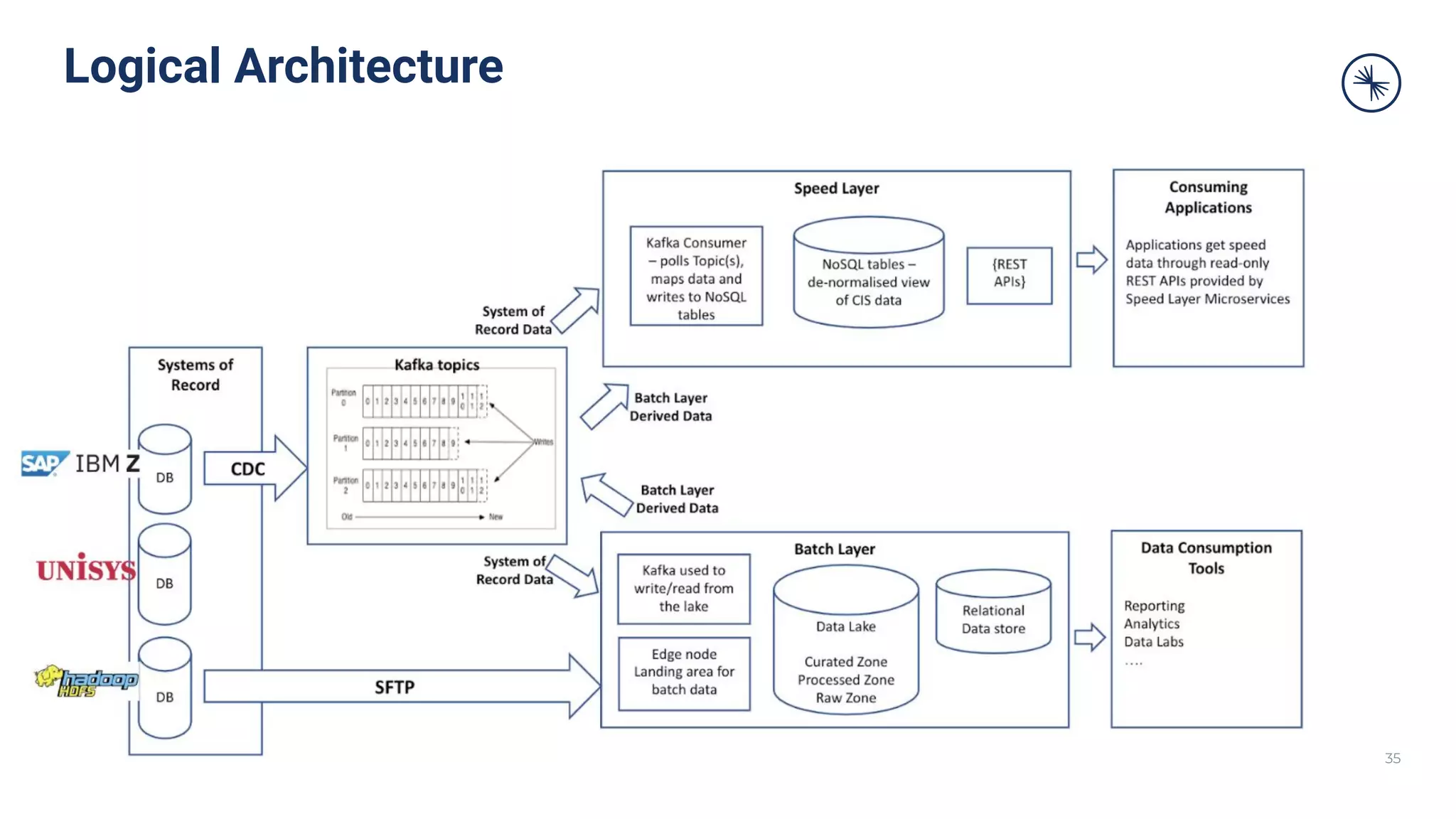

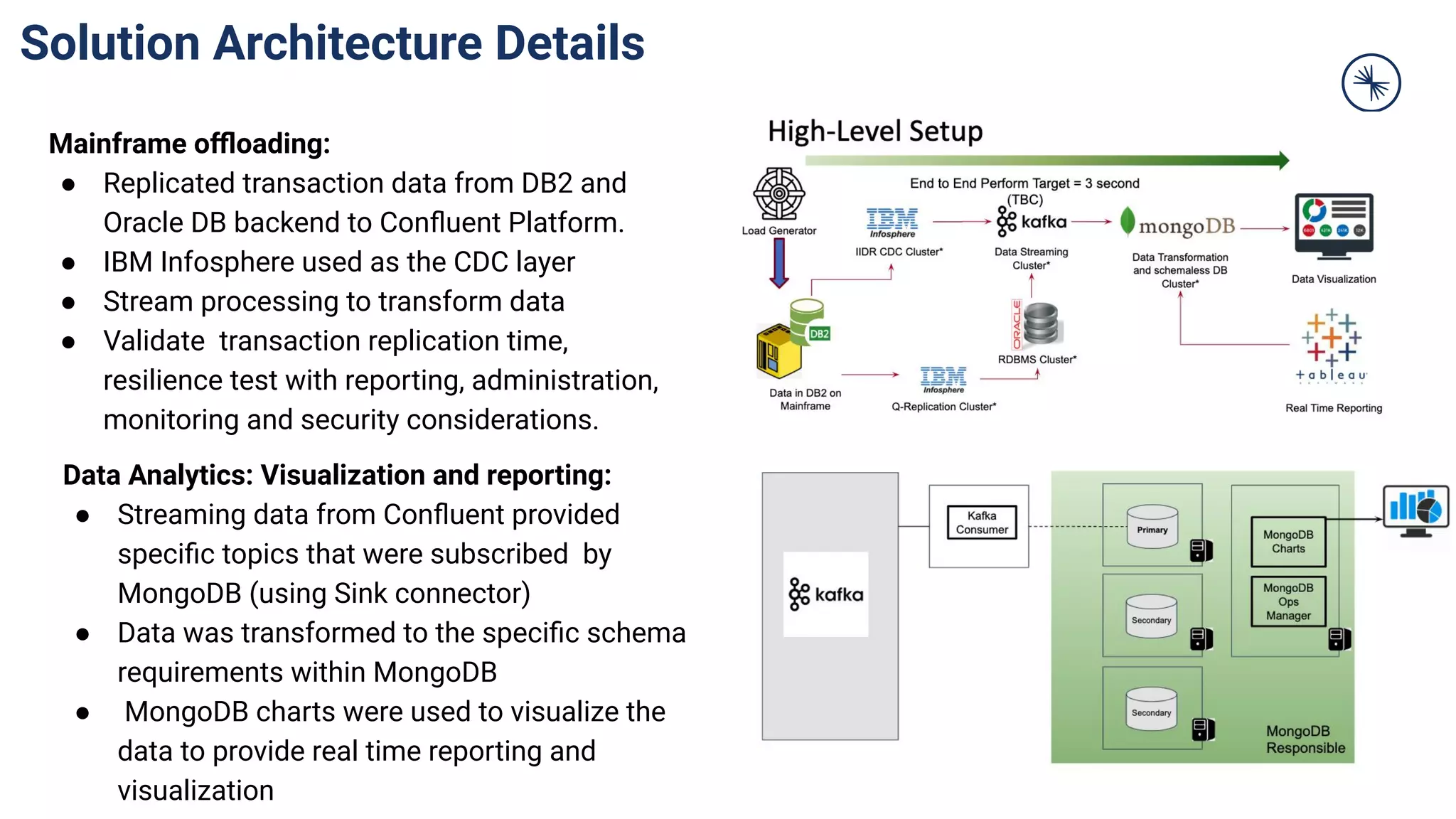

The document discusses use cases for Confluent Kafka in the financial services sector, highlighting a shift from traditional batch processing to real-time data streaming for fraud detection, credit analytics, and regulatory compliance. It emphasizes the importance of modern data platforms that support event processing in order to enhance customer experiences, optimize operational data, and meet regulatory requirements. It also covers the challenges posed by legacy systems and the modernization of data architecture through microservices and cloud integration.

![Deployment Reference Architecture: IIDR CDC Cluster, RDBMS Cluster, Kafka Connect JDBC Connector (Source), Kafka Connect MongoDB Connector (Sink)](https://image.slidesharecdn.com/fsusecasedeck-201014101922/75/Apache-Kafka-Use-Cases-for-Financial-Services-38-2048.jpg)
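
The reference architecture above pairs a Kafka Connect JDBC source connector with a Kafka Connect MongoDB sink connector. As a rough illustration of how that pairing might be configured, the sketch below builds the two connector definitions as JSON payloads. The connector class names and property keys are those of the Confluent JDBC source connector and the MongoDB Kafka sink connector; everything else (hostnames, database, table, collection, and column names, the `rdbms-` topic prefix, and the helper function names) is a hypothetical placeholder and does not come from the deck. The IIDR CDC cluster shown in the diagram is not modeled here.

```python
import json


def jdbc_source_config(name, connection_url, table, topic_prefix):
    """Build a Kafka Connect definition for a JDBC source connector.

    The connector polls `table` and writes rows to the topic
    `topic_prefix + table`. All values passed in are placeholders.
    """
    return {
        "name": name,
        "config": {
            "connector.class": "io.confluent.connect.jdbc.JdbcSourceConnector",
            "connection.url": connection_url,
            "table.whitelist": table,
            # Incrementing mode picks up new rows via a strictly increasing column.
            "mode": "incrementing",
            "incrementing.column.name": "id",  # assumed column name
            "topic.prefix": topic_prefix,
            "tasks.max": "1",
        },
    }


def mongodb_sink_config(name, connection_uri, database, collection, topic):
    """Build a Kafka Connect definition for a MongoDB sink connector.

    The connector reads `topic` and writes each record to
    `database.collection`. All values passed in are placeholders.
    """
    return {
        "name": name,
        "config": {
            "connector.class": "com.mongodb.kafka.connect.MongoSinkConnector",
            "connection.uri": connection_uri,
            "database": database,
            "collection": collection,
            "topics": topic,
            "tasks.max": "1",
        },
    }


# Hypothetical values, chosen only to show how the two connectors line up.
TABLE = "transactions"
PREFIX = "rdbms-"

source = jdbc_source_config(
    name="rdbms-jdbc-source",
    connection_url="jdbc:postgresql://rdbms.example.internal:5432/core",
    table=TABLE,
    topic_prefix=PREFIX,
)
sink = mongodb_sink_config(
    name="mongodb-sink",
    connection_uri="mongodb://mongo.example.internal:27017",
    database="analytics",
    collection=TABLE,
    topic=PREFIX + TABLE,  # must match the topic the source writes to
)

print(json.dumps(source, indent=2))
print(json.dumps(sink, indent=2))
```

Each payload has the shape that the Kafka Connect REST API accepts on `POST /connectors`. The key design point is that the sink's `topics` value has to equal the source's `topic.prefix` plus the table name, since that is how the JDBC source connector names its output topics.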