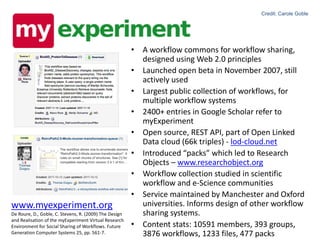

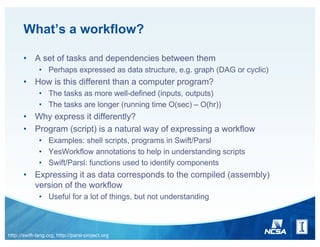

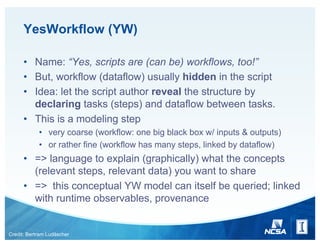

The document discusses workflows in computational contexts, defining them as task sets with dependencies, and differentiating them from standard computer programs. It highlights tools like YesWorkflow and Parsl for modeling and sharing workflows, along with platforms like GitHub and MyExperiment for collaboration and resource sharing. The paper emphasizes the importance of structured representation of workflows to facilitate understanding and reuse in scientific and computational environments.

![Parsl

• A python-based parallel scripting library (http://parsl-project.org),

based on ideas in Swift (http://swift-lang.org)

• Tasks exposed as functions (python or bash)

@App('bash', data_flow_kernel)

def echo(message, outputs=[]):

return 'echo {0} &> {outputs[0]}’

@App('python', data_flow_kernel)

def cat(inputs=[]):

with open(inputs[0]) as f:

return f.readlines()

• Return values are futures

• Other tasks can be called that depend on these futures

• Will not run until futures are satisfied/filled

• Main code used to glue functions together

hello = echo("Hello World!", outputs=['hello1.txt'])

message = cat(inputs=[hello.outputs[0]])

• Fairly easy to understand](https://image.slidesharecdn.com/2017-171114002753/85/Expressing-and-sharing-workflows-4-320.jpg)