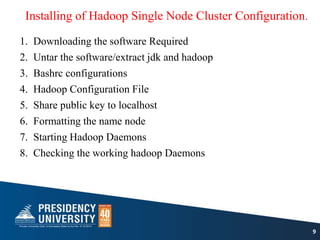

1) The document describes the steps to install a single node Hadoop cluster on a laptop or desktop.

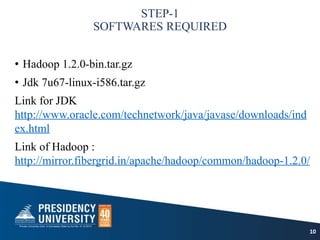

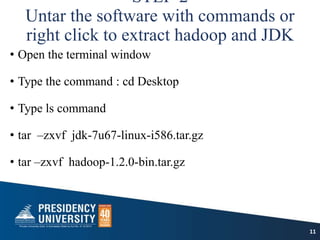

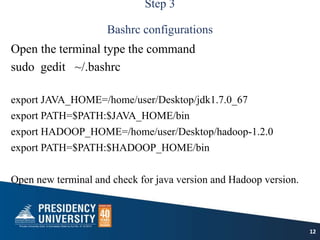

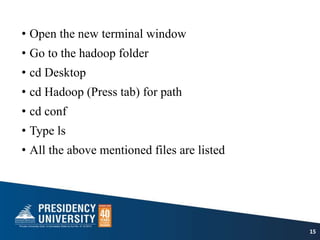

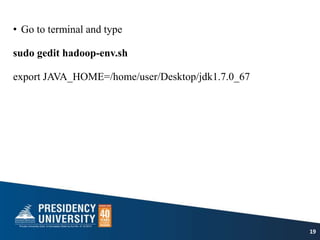

2) It involves downloading and extracting required software like Hadoop, JDK, and configuring environment variables.

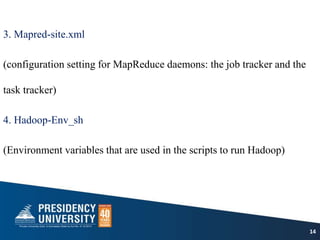

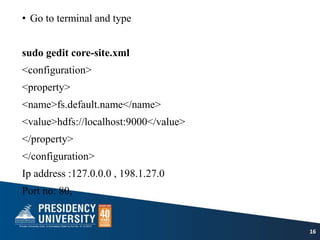

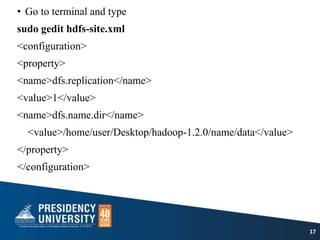

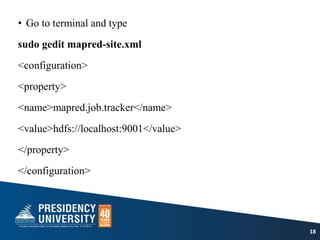

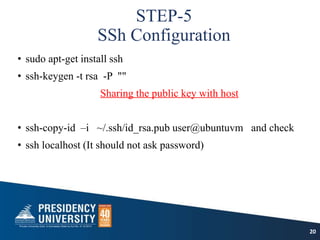

3) Key configuration files like core-site.xml, hdfs-site.xml and mapred-site.xml are edited to configure the HDFS, namenode and jobtracker.

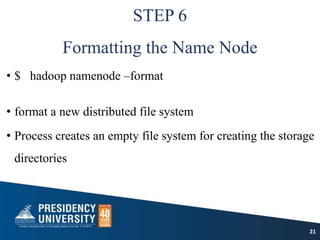

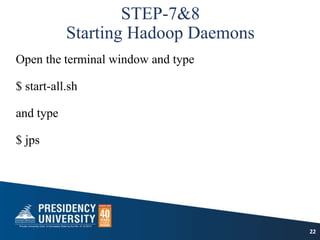

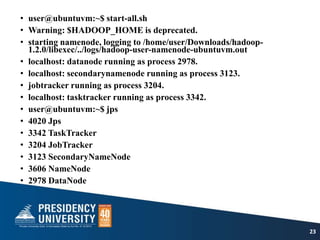

4) The namenode is formatted and Hadoop daemons like datanode, secondary namenode and jobtracker are started.