This document provides instructions for configuring Hadoop, HBase, and an HBase client on a single-node system. It covers installing Java, adding a dedicated Hadoop user, configuring SSH, disabling IPv6, installing and configuring Hadoop, formatting HDFS, starting the Hadoop processes, running example MapReduce jobs to test the installation, and configuring HBase.

    SecondaryNameNode
    Jps
    NameNode

then you can be sure that Hadoop is configured correctly and running.

Making sure Hadoop is working

You can see the Hadoop logs in ~/work/hadoop/logs

You should be able to see the Hadoop NameNode web interface at http://shashwat:50070/ and the JobTracker web interface at http://shashwat:50030/. If not, check that you have 5 Java processes running, where each of those processes has one of the following as the last item on its command line (as seen from a ps ax | grep hadoop command):

    org.apache.hadoop.mapred.JobTracker
    org.apache.hadoop.hdfs.server.namenode.NameNode
    org.apache.hadoop.mapred.TaskTracker
    org.apache.hadoop.hdfs.server.namenode.SecondaryNameNode
    org.apache.hadoop.hdfs.server.datanode.DataNode

If you do not see these 5 processes, check the logs in ~/work/hadoop/logs/*.{out,log} for messages that might give you a hint as to what went wrong.

Run some example map/reduce jobs

The Hadoop distribution comes with some example/test MapReduce jobs. Here we'll run them and make sure things are working end to end.

    cd ~/work/hadoop

    # Copy the input files into the distributed filesystem
    # (there will be no output visible from the command):
    bin/hadoop fs -put conf input

    # Run some of the examples provided
    # (there will be a large number of INFO statements as output):
    bin/hadoop jar hadoop-*-examples.jar grep input output 'dfs[a-z.]+'

    # Examine the output files:
    bin/hadoop fs -cat output/part-00000

The resulting output should be something like:

    3 dfs.class
    2 dfs.period
    1 dfs.file
    1 dfs.replication

![Slide 8](https://image.slidesharecdn.com/configurehbasehadoopandhbaseclient-120528162130-phpapp01/75/Configure-h-base-hadoop-and-hbase-client-8-2048.jpg)
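To make the example output easier to read: the grep job counts every match of the regular expression dfs[a-z.]+ in the input files and lists the matches by descending count. The following is a minimal local sketch of that counting logic in plain Java, not the Hadoop example's actual implementation. The class name LocalGrepSketch, the sample lines, and the alphabetical tie-break are assumptions made for illustration.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper class: a local stand-in for what the grep example computes.
class LocalGrepSketch {

    // Count every regex match across the lines, then sort by descending count.
    // Ties are broken alphabetically (an assumption; only here to keep output stable).
    static List<Map.Entry<String, Integer>> countMatches(List<String> lines, String regex) {
        Pattern pattern = Pattern.compile(regex);
        Map<String, Integer> counts = new HashMap<>();
        for (String line : lines) {
            Matcher matcher = pattern.matcher(line);
            while (matcher.find()) {
                counts.merge(matcher.group(), 1, Integer::sum);
            }
        }
        List<Map.Entry<String, Integer>> sorted = new ArrayList<>(counts.entrySet());
        sorted.sort((a, b) -> {
            int byCount = Integer.compare(b.getValue(), a.getValue());
            return byCount != 0 ? byCount : a.getKey().compareTo(b.getKey());
        });
        return sorted;
    }

    public static void main(String[] args) {
        // Made-up sample lines standing in for the files copied from conf/.
        List<String> lines = Arrays.asList(
            "<name>dfs.replication</name>",
            "dfs.class=org.example.Sink",
            "# dfs.class and dfs.period control metrics");
        for (Map.Entry<String, Integer> entry : countMatches(lines, "dfs[a-z.]+")) {
            System.out.println(entry.getValue() + "\t" + entry.getKey());
        }
    }
}
```

Each output line pairs a count with the matched string, which is the same shape as the part-00000 listing above. The real job reads its input from HDFS and runs as MapReduce tasks, so the exact counts depend on the contents of your conf directory.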

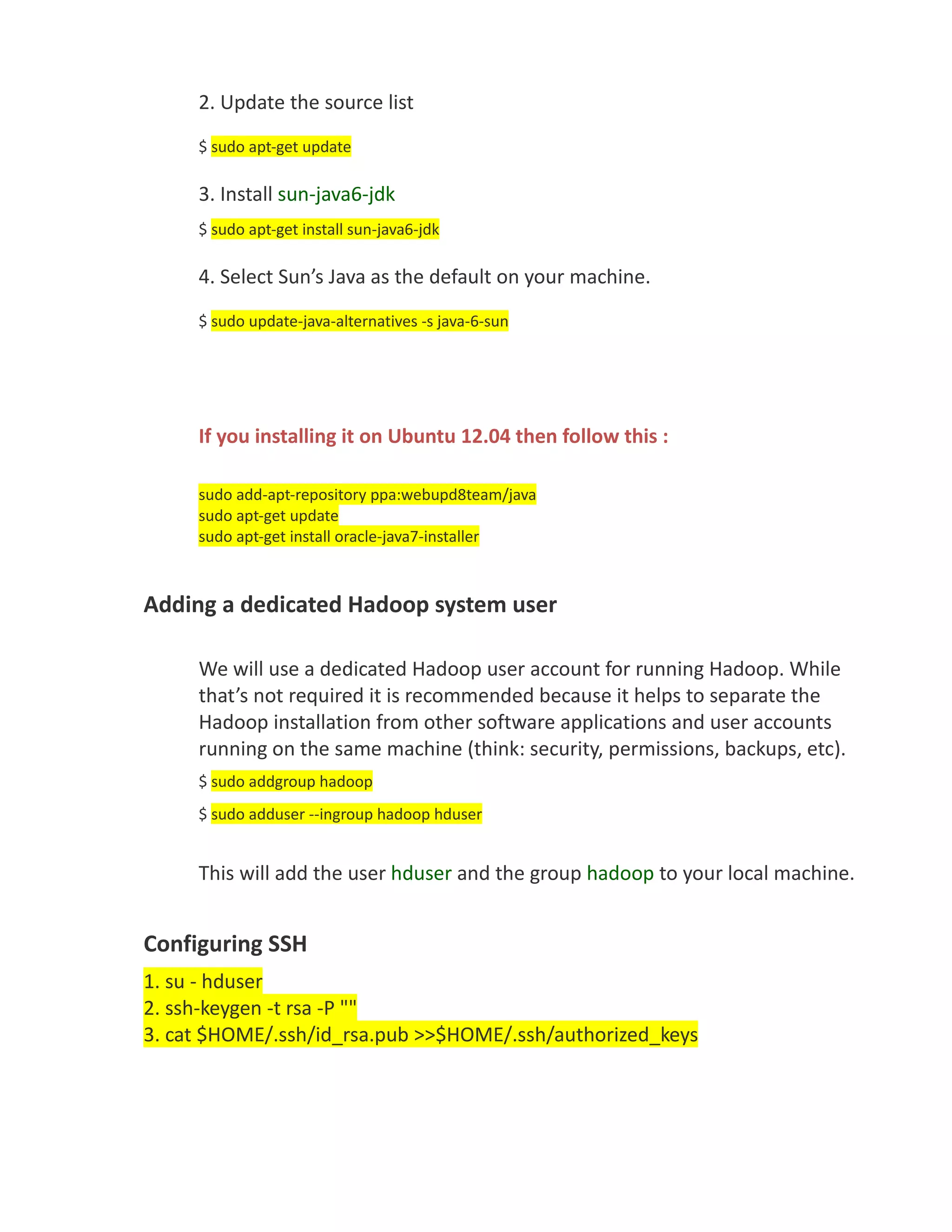

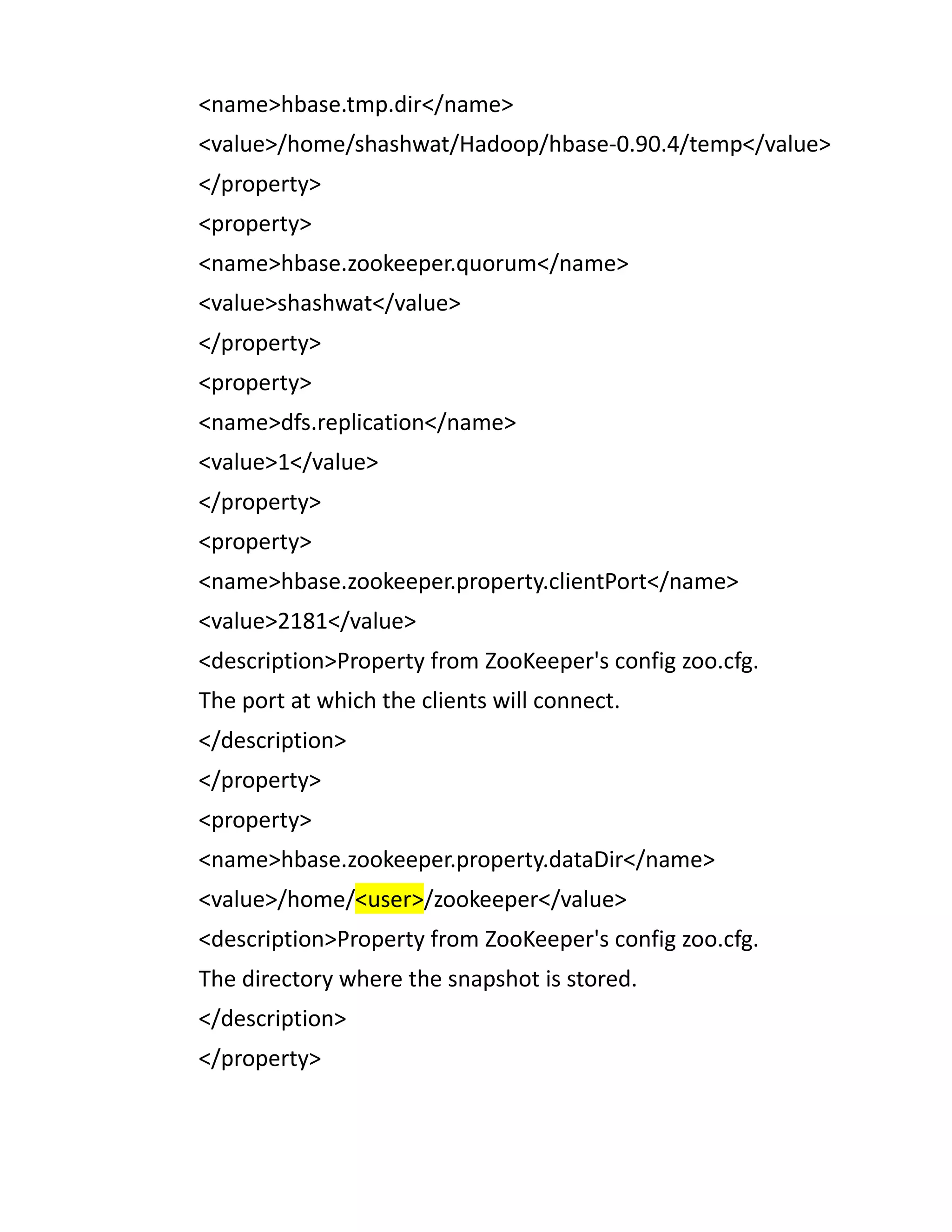

    import java.io.IOException;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.hbase.HBaseConfiguration;
    import org.apache.hadoop.hbase.MasterNotRunningException;
    import org.apache.hadoop.hbase.ZooKeeperConnectionException;
    import org.apache.hadoop.hbase.client.Get;
    import org.apache.hadoop.hbase.client.HBaseAdmin;
    import org.apache.hadoop.hbase.client.HTable;
    import org.apache.hadoop.hbase.client.Result;
    import org.apache.hadoop.hbase.util.Bytes;

    public class HbaseClient {

        /**
         * @param args the command line arguments
         */
        public static void main(String[] args) throws MasterNotRunningException,
                ZooKeeperConnectionException, IOException {
            System.out.println("Hbase Demo Application ");

            // CONFIGURATION
            Configuration conf = HBaseConfiguration.create();
            // Drop every property loaded so far, so that only the settings below apply.
            conf.clear();
            conf.set("hbase.zookeeper.quorum", "shashwat");
            conf.set("hbase.zookeeper.property.clientPort", "2181");
            conf.set("hbase.master", "shashwat:60000");

            // ENSURE RUNNING: throws an exception if HBase cannot be reached.
            HBaseAdmin.checkHBaseAvailable(conf);
            System.out.println("HBase is running!");

            HTable table = new HTable(conf, "date");
            System.out.println("Table obtained");

            // Fetch row "-101" and read column family "cf", qualifier "cf1".
            System.out.println("Fetching data now.....");
            Get g = new Get(Bytes.toBytes("-101"));
            Result r = table.get(g);
            byte[] value = r.getValue(Bytes.toBytes("cf"), Bytes.toBytes("cf1"));

            // If we convert the value bytes, we should get back 'Some Value', the
            // value we inserted at this location.

![Slide 14](https://image.slidesharecdn.com/configurehbasehadoopandhbaseclient-120528162130-phpapp01/75/Configure-h-base-hadoop-and-hbase-client-14-2048.jpg)
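One detail worth keeping in mind when converting the fetched bytes: Result.getValue returns null when the row or column does not exist, and HBase's Bytes.toBytes(String) and Bytes.toString(byte[]) encode and decode text as UTF-8. The following is a minimal sketch of a null-safe conversion. It uses only the Java standard library as a stand-in for the Bytes helpers so that it runs without HBase on the classpath; the class name ValueDecodingSketch, the method decodeOrDefault, and the fallback text are hypothetical.

```java
import java.nio.charset.StandardCharsets;

// Hypothetical helper class, not part of the HBase API.
class ValueDecodingSketch {

    // Decode a cell value as UTF-8 text, or return the fallback when the cell is absent.
    static String decodeOrDefault(byte[] value, String fallback) {
        if (value == null) {
            return fallback;
        }
        return new String(value, StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        // Stand-in for the bytes a Get would return for an existing cell.
        byte[] stored = "Some Value".getBytes(StandardCharsets.UTF_8);
        System.out.println(decodeOrDefault(stored, "<no value>"));

        // Stand-in for a missing row or column.
        System.out.println(decodeOrDefault(null, "<no value>"));
    }
}
```

In the client itself, the same null check would be applied to the value array before it is converted to a string and printed.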