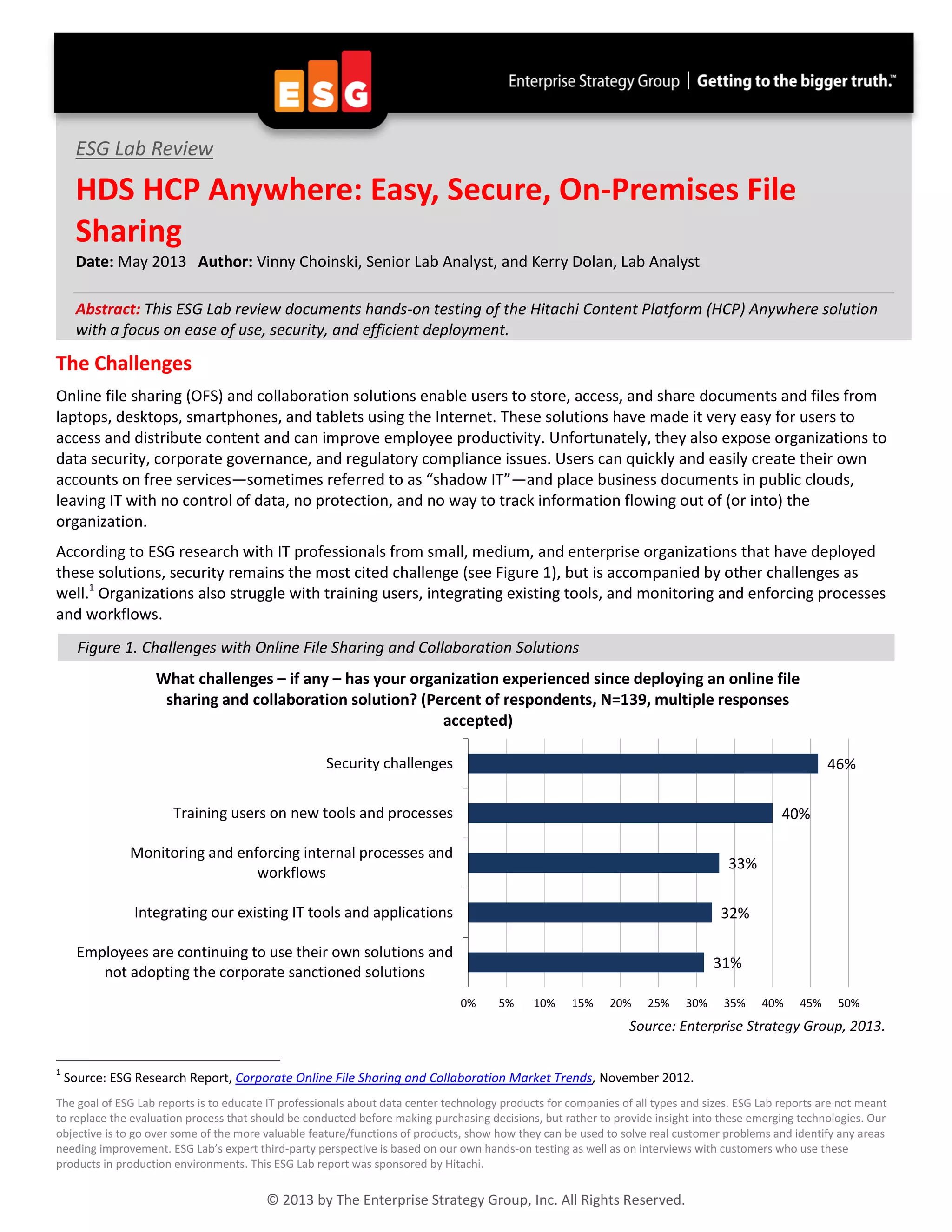

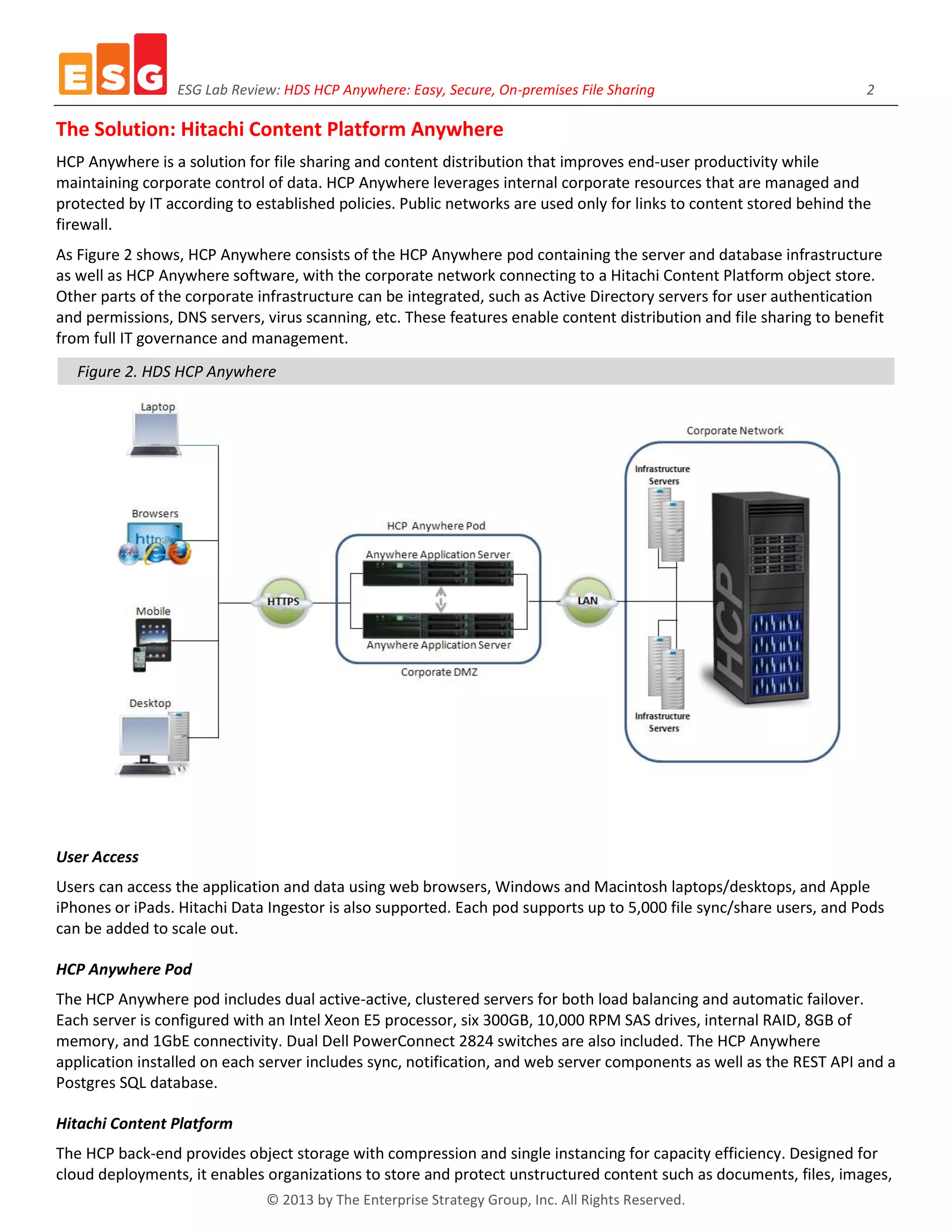

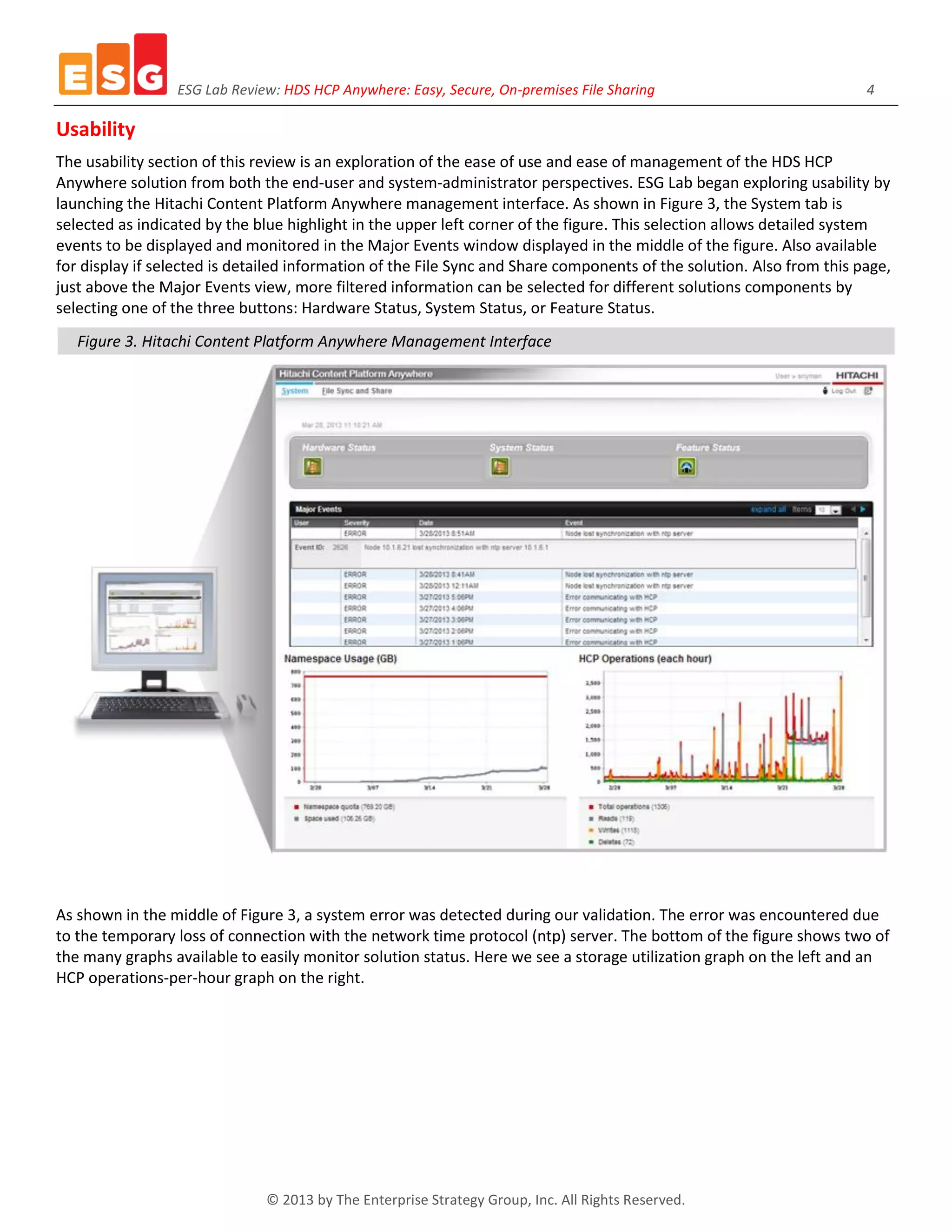

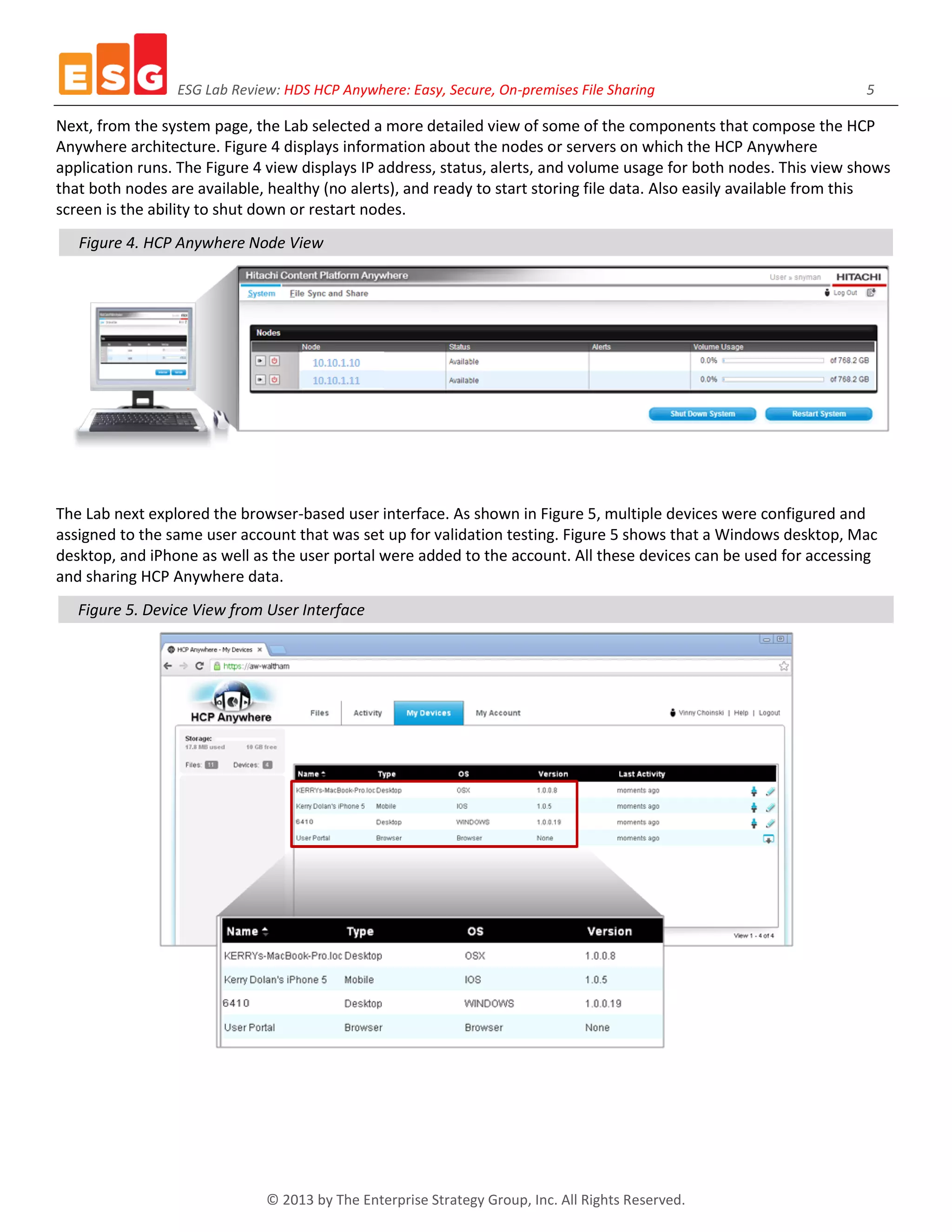

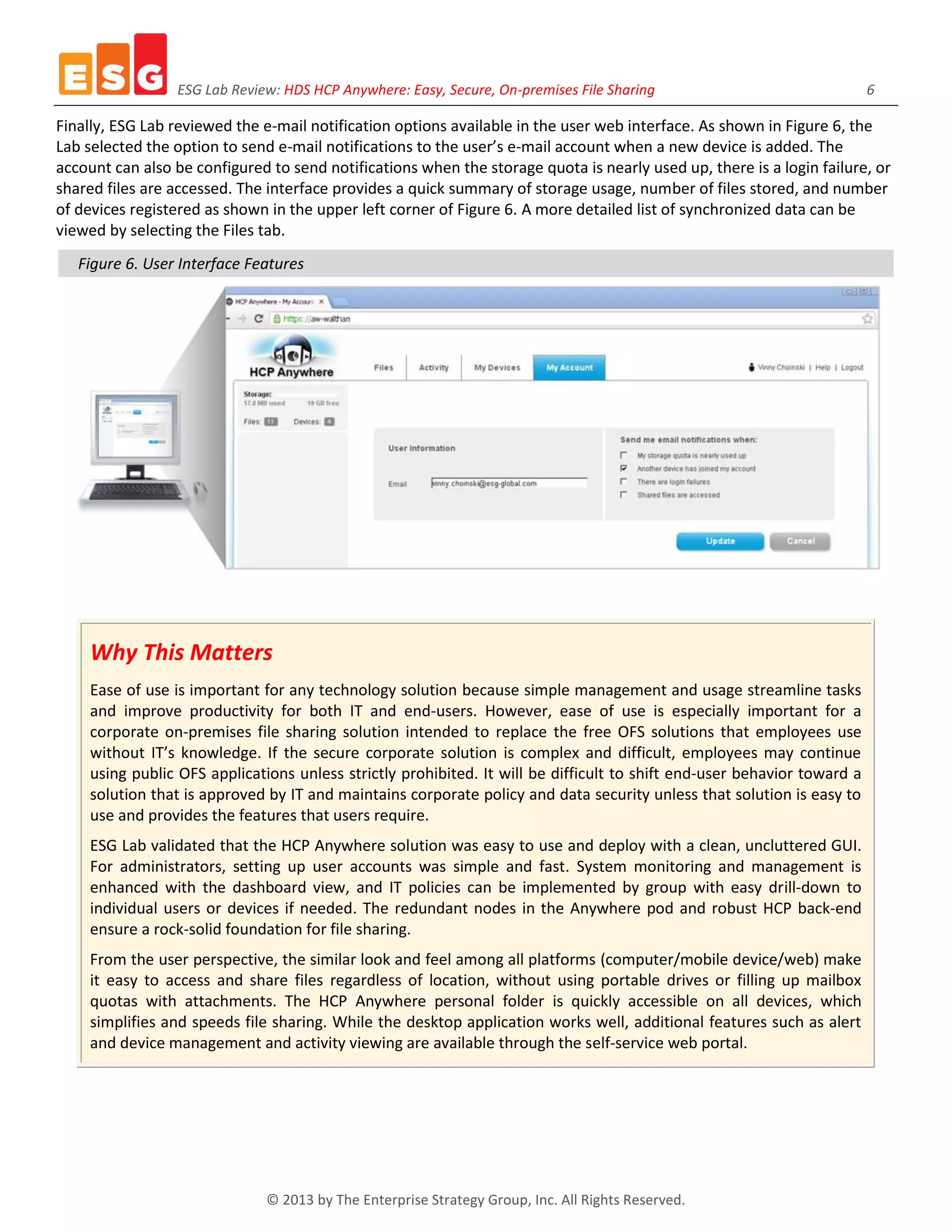

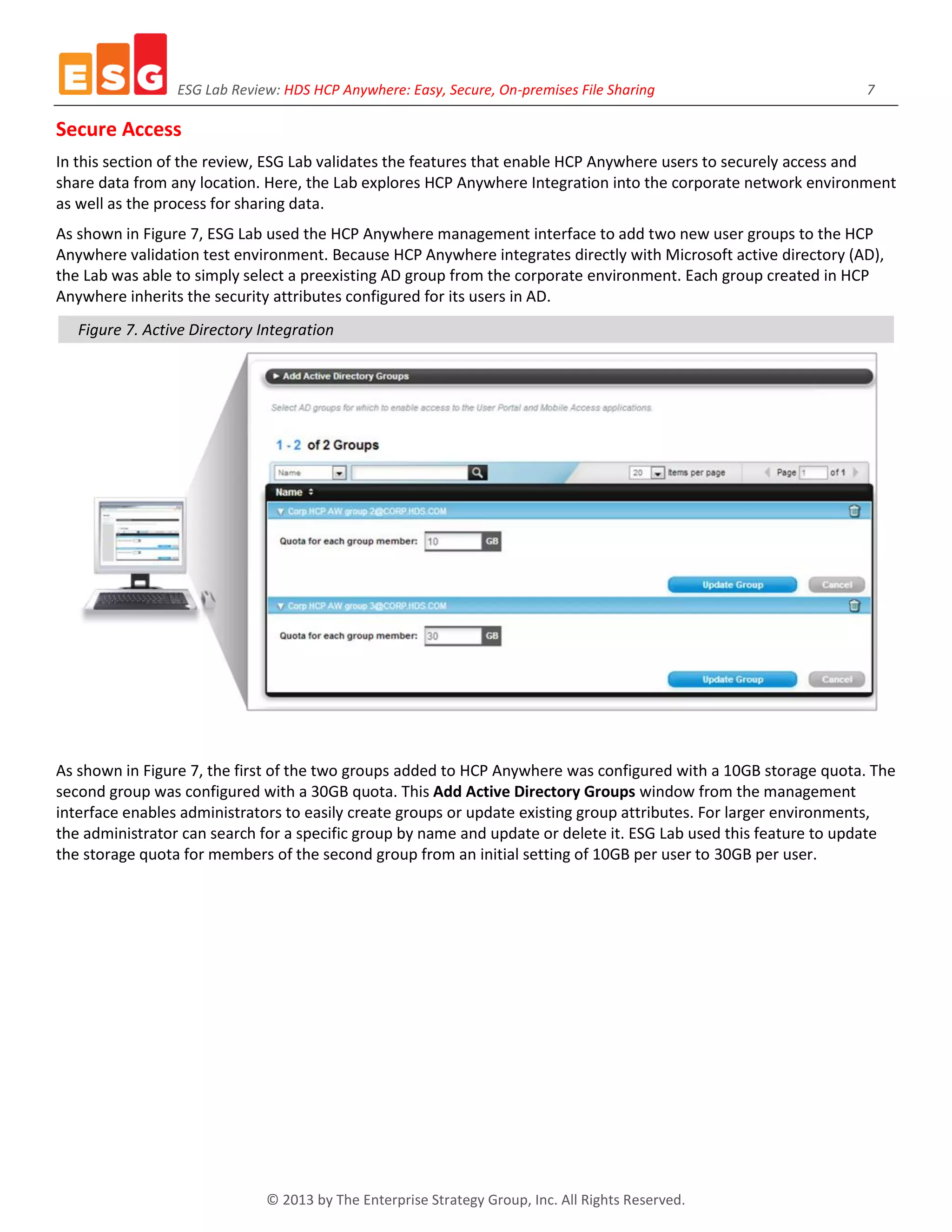

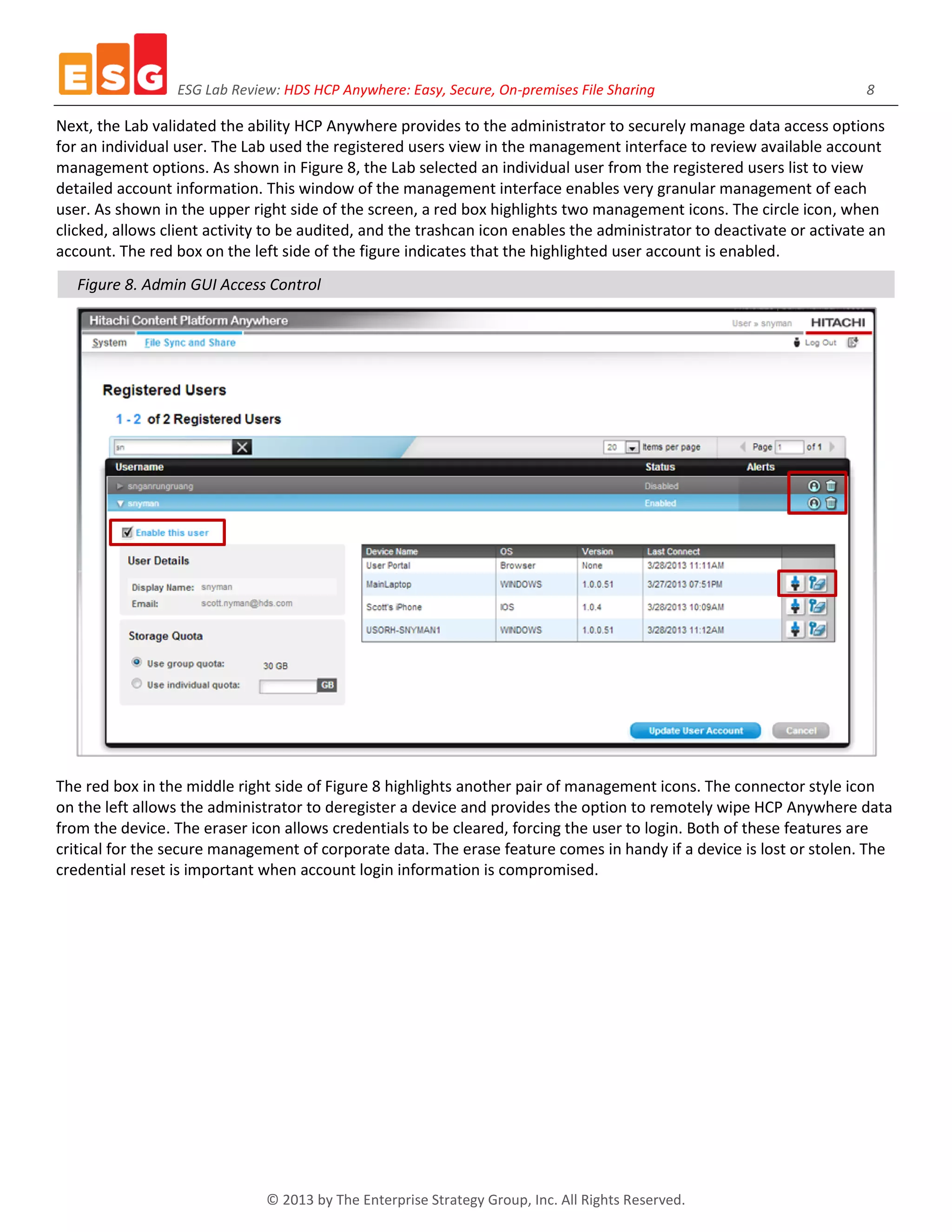

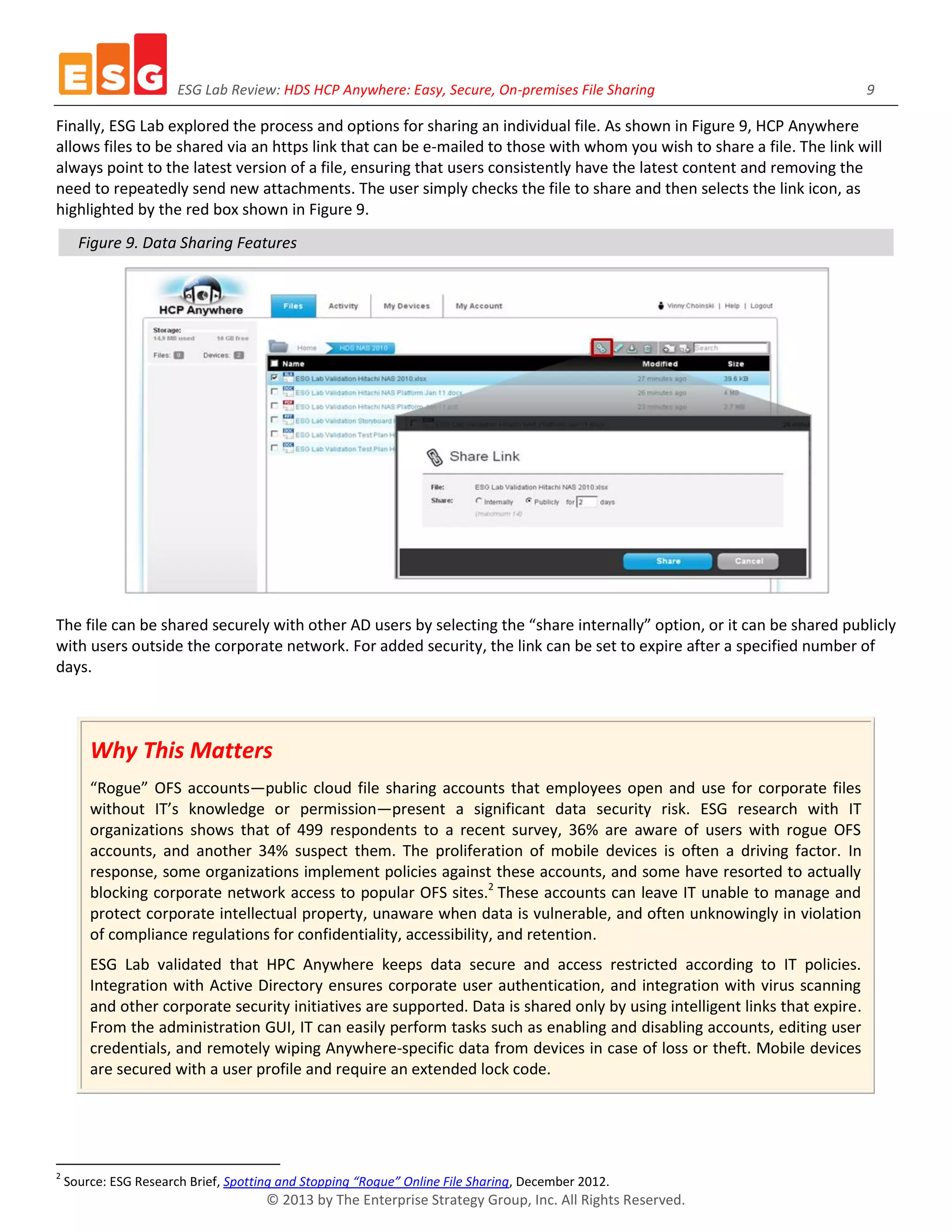

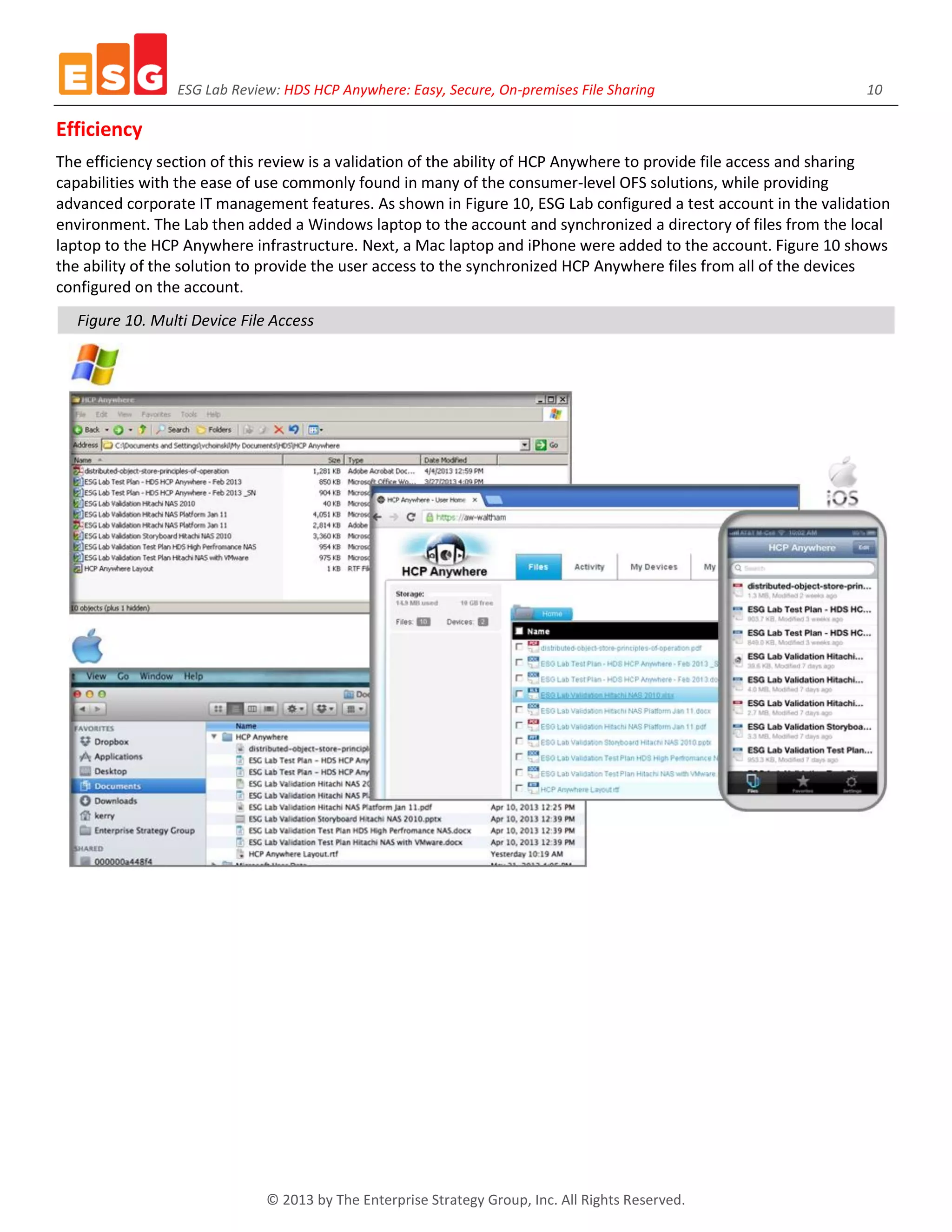

ESG Lab reports aim to inform IT professionals about emerging data center technologies. They offer insights but are not meant to replace the evaluation process that should precede a purchasing decision. This report focuses on Hitachi's Content Platform Anywhere, highlighting its ease of use, security features, and corporate control, and showing how it addresses common challenges associated with online file sharing and collaboration solutions. The report emphasizes that secure data management, combined with user-friendly interfaces, is important for maintaining productivity and compliance within organizations.