EnviroMap

EnviroMap is a cloud-based software solution for environmental monitoring programs, covering everything from sample coding and submission to record keeping and data management.

EnviroMap

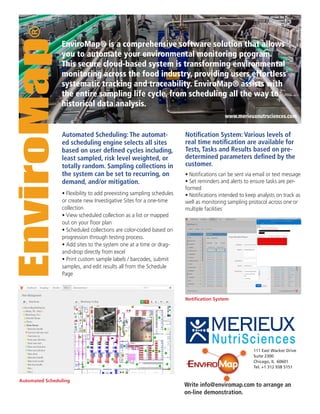

- 1. EnviroMap® is a comprehensive software solution that allows you to automate your environmental monitoring program. This secure cloud-based system is transforming environmental monitoring across the food industry, providing users effortless systematic tracking and traceability. EnviroMap® assists with the entire sampling life cycle, from scheduling all the way to historical data analysis.

  Automated Scheduling: The automated scheduling engine selects all sites based on user-defined cycles, including least sampled, risk-level weighted, or totally random. Sampling collections in the system can be set to recurring, on demand, and/or mitigation.
  • Flexibility to add preexisting sampling schedules or create new Investigative Sites for a one-time collection
  • View scheduled collections as a list or mapped out on your floor plan
  • Scheduled collections are color-coded based on progression through the testing process
  • Add sites to the system one at a time or drag-and-drop directly from Excel
  • Print custom sample labels / barcodes, submit samples, and edit results all from the Schedule Page

  Notification System: Various levels of real-time notification are available for Tests, Tasks and Results based on predetermined parameters defined by the customer.
  • Notifications can be sent via email or text message
  • Set reminders and alerts to ensure tasks are performed
  • Notifications are intended to keep analysts on track as well as to monitor sampling protocol across one or multiple facilities

  111 East Wacker Drive, Suite 2300, Chicago, IL 60601. Tel. +1 312 938 5151. www.merieuxnutrsciences.com. Write to info@enviromap.com to arrange an online demonstration.

- 2. EnviroMap®: Environmental Monitoring Management Software. Powered by Mérieux NutriSciences.

  Non-conformance: Once a positive or out-of-limit result has been confirmed, mitigation collections will automatically appear on the calendar. Analysts can begin the re-sampling process based on parameters outlined by your organization.
  • Ability to pre-schedule corrective actions that will activate as soon as a sample is submitted as out-of-limit or positive
  • A parent-child relationship will exist between the original out-of-spec site and subsequent mitigations, illustrating track and traceability
  • Manage investigative sites, workflow stoppage and perimeter reswabbing in response to a corrective action

  MXNS Integration: EnviroMap allows for seamless integration with Mérieux NutriSciences LIMS and myMXNS.
  • Sample submission propagates the required Mérieux NutriSciences SARF fields with the added flexibility of setting up multiple template options
  • Results from Mérieux NutriSciences LIMS are exported back to EnviroMap, providing complete traceability and historical analysis
  • In-house results can also be integrated into the system using a spreadsheet

  Historical Analysis: Flexible and easy-to-use reporting tools to present, analyze, and share results. Numerous reporting options including Grids, Maps, Bar Charts, Pie Charts, Line Graphs, and Summary Reports.
  • Analyze data and chart results directly in EnviroMap using the charting feature, which provides a visual summary of your environmental program
  • Result map includes options for displaying as Sample Count, Result Count, or Percent Out-of-Limit for a specified time period
  • Reports can be exported to Excel for additional analysis, emailed as an attachment, or saved as an image for presentations
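
The slides name three site-selection cycles (least sampled, risk-level weighted, totally random) and a parent-child link between an out-of-limit collection and its mitigation. The Python sketch below shows one way such logic could look. It is not EnviroMap's implementation: the `Site` and `Collection` classes, the `select_sites` and `schedule_mitigation` functions, the strategy names, and the numeric risk scale are all assumptions made for illustration.

```python
import random
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Site:
    name: str
    risk_level: int      # hypothetical scale: a higher number means higher risk (must be >= 1)
    times_sampled: int   # how many times this site has been sampled so far


@dataclass
class Collection:
    site: Site
    kind: str                              # "recurring", "on_demand", or "mitigation"
    parent: Optional["Collection"] = None  # set only for mitigation follow-ups


def select_sites(sites: List[Site], count: int, strategy: str,
                 rng: Optional[random.Random] = None) -> List[Site]:
    """Pick `count` distinct sites using one of three illustrative strategies."""
    rng = rng or random.Random()
    count = min(count, len(sites))

    if strategy == "least_sampled":
        # Sites with the fewest past samples come first.
        return sorted(sites, key=lambda s: s.times_sampled)[:count]

    if strategy == "random":
        # Uniform sampling without replacement.
        return rng.sample(sites, count)

    if strategy == "risk_weighted":
        # Weighted sampling without replacement: riskier sites are more likely
        # to be drawn, but every site keeps a nonzero chance.
        pool = list(sites)
        chosen = []
        for _ in range(count):
            pick = rng.choices(pool, weights=[s.risk_level for s in pool], k=1)[0]
            pool.remove(pick)
            chosen.append(pick)
        return chosen

    raise ValueError(f"unknown strategy: {strategy}")


def schedule_mitigation(original: Collection, out_of_limit: bool) -> Optional[Collection]:
    """Create a follow-up collection linked to the original when a result is out of limit."""
    if not out_of_limit:
        return None
    return Collection(site=original.site, kind="mitigation", parent=original)


sites = [Site("Drain 1", 1, 5), Site("Conveyor A", 3, 0), Site("Floor 2", 2, 2)]
print([s.name for s in select_sites(sites, 2, "least_sampled")])
```

Keeping a reference to the parent collection on each mitigation is what lets a later report trace a re-sample back to the original out-of-limit result.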