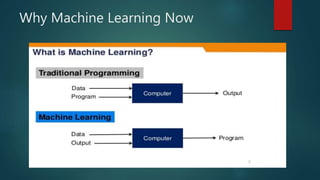

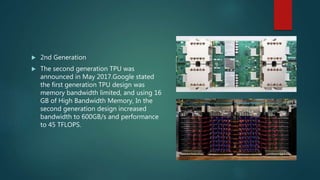

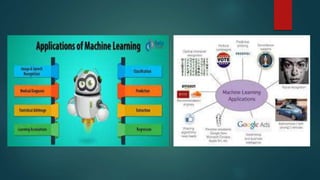

The document discusses several aspects of machine learning, including communication channels and the types of technology involved. It highlights the importance of big data and advanced computing power, particularly hardware innovations such as Google’s Tensor Processing Units (TPUs). It also notes potential pitfalls in hardware design and references a research presentation by several authors.