Real-time Emotion Recognition

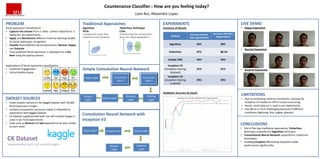

Machine learning project: real-time emotion recognition using three models: Eigenface (Principal Component Analysis), Fisherface (Linear Discriminant Analysis), and a Convolutional Neural Network. Technologies: Python, OpenCV, Keras, Amazon AWS.

Recommended

fpres

The document discusses applying the sum of absolute differences (SAD) algorithm to perform stereo matching on images using disparity maps. Key points include:

- The author proposes using a neighbor-based ranking approach to simplify pixel comparisons for SAD, addressing issues with intensity variations.

- An initial test of the approach yielded promising naive results, though the training data set was very small.

- Further experiments increasing the size and quality of the training data set showed accuracy rates from 66-91% for k-nearest neighbor classification, providing evidence the method is viable.

- However, more work is needed to improve feature selection and evaluation to fully validate the approach.
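
The summary above names the sum of absolute differences (SAD) as the matching cost for stereo disparity. A minimal sketch of plain SAD block matching follows, assuming a rectified image pair stored as nested lists; the helper names, window size, and toy images are hypothetical, and the neighbor-based ranking variant mentioned in the summary is not reproduced here.

```python
def sad(left, right, row, col_left, col_right, half):
    """Sum of absolute differences between two square windows."""
    total = 0
    for dr in range(-half, half + 1):
        for dc in range(-half, half + 1):
            total += abs(left[row + dr][col_left + dc]
                         - right[row + dr][col_right + dc])
    return total


def disparity_at(left, right, row, col, half=1, max_disp=4):
    """Return the horizontal shift (disparity) with the lowest SAD cost."""
    best_d, best_cost = 0, None
    for d in range(max_disp + 1):
        if col - d - half < 0:  # window would leave the right image
            break
        cost = sad(left, right, row, col, col - d, half)
        if best_cost is None or cost < best_cost:
            best_d, best_cost = d, cost
    return best_d


# Toy rectified pair (assumption): the left image is the right image
# shifted 2 pixels to the right, so the true disparity is 2.
right_img = [[(3 * r + 7 * c * c) % 50 for c in range(10)] for r in range(5)]
left_img = [[row[max(c - 2, 0)] for c in range(10)] for row in right_img]

print(disparity_at(left_img, right_img, row=2, col=6))
```

Running `disparity_at` for every pixel would give a dense disparity map; real images usually need larger windows and some handling of intensity differences between the two cameras.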

HueDecide: A lecture voting system augmented by IoT

This project proposes a system called Hue Decide that allows students in a lecture to provide real-time feedback to lecturers through a mobile app and website. Students can vote if they understand or don't understand topics by selecting positive or negative options on their devices, and these aggregate votes will be visualized by changing the color of a Philips Hue lightbulb in the lecture hall from red to green. The system is designed to be simple to use with separate workflows for students and lecturers, and includes requirements, designs, and implementation details. It aims to give lecturers a quick understanding of student comprehension without interrupting the flow of the lecture.

Deep learning - Chatbot

The document discusses sequence to sequence learning for chatbots. It provides an overview of the network architecture, including encoding the input sequence and decoding the output sequence. LSTM is used to model language. The loss function is cross entropy loss. Improvement techniques discussed include attention mechanism to focus on relevant parts of the input, and beam search to find higher probability full sequences during decoding.
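
Beam search, mentioned above as a decoding improvement, can be illustrated without a trained network. The sketch below assumes a hypothetical toy "decoder" given as a table of next-token probabilities conditioned only on the previous token; a real sequence-to-sequence model would condition on the encoder state and the whole prefix.

```python
import math

# Hypothetical toy decoder: next-token probabilities given the previous token.
NEXT = {
    "<s>": {"a": 0.6, "b": 0.4},
    "a": {"x": 0.5, "y": 0.5},
    "b": {"x": 0.9, "y": 0.1},
    "x": {"</s>": 1.0},
    "y": {"</s>": 1.0},
}


def beam_search(next_probs, beam_width=2, max_len=5):
    """Keep the beam_width best partial sequences at every decoding step."""
    beams = [(0.0, ["<s>"])]  # (log probability, tokens so far)
    finished = []
    for _ in range(max_len):
        candidates = []
        for score, tokens in beams:
            for tok, p in next_probs[tokens[-1]].items():
                candidates.append((score + math.log(p), tokens + [tok]))
        candidates.sort(key=lambda c: c[0], reverse=True)
        beams = []
        for cand in candidates[:beam_width]:
            # Sequences that emitted the end token leave the beam.
            (finished if cand[1][-1] == "</s>" else beams).append(cand)
        if not beams:
            break
    return max(finished, key=lambda c: c[0])[1] if finished else None


print(beam_search(NEXT, beam_width=1))  # greedy decoding
print(beam_search(NEXT, beam_width=2))
```

With a beam width of 1 the search commits to the locally best first token `a`, while a width of 2 keeps `b` alive long enough to find the sequence with the higher overall probability (0.36 versus 0.30 in this toy table).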

Final year ppt

This document summarizes a student project on face detection and recognition. The project used OpenCV with Python to detect faces in images and video in real-time. It extracts Haar features and compares them to a training database to recognize faces. The system was able to identify multiple faces with reasonable accuracy, though performance decreased with head tilts or low image quality. Future work could improve robustness to disguises and add emotion or gender analysis.

Sample PPT_TNSDC about the face emotion.pptx

This document outlines a project to develop a real-time system for detecting facial emotions and estimating age using deep learning algorithms. The system aims to (1) recognize emotions like happiness, sadness, anger, and surprise using a CNN model trained on a diverse dataset, (2) estimate age ranges utilizing facial features and wrinkles through another CNN model, and (3) optimize the solution for real-time processing on various hardware with high accuracy despite variability in inputs. The document discusses the problem statement, objectives, proposed solution, model development approach including preprocessing, and potential applications in security, healthcare and customer service.

Python Project.pptx

Sahil Verma submitted an internship training report on Python facial emotion detection. The report introduced Freecodecamp, the internship platform, and discussed using convolutional neural networks and libraries like OpenCV, DeepFace and HaarCascade to detect faces in video streams and identify emotions by comparing faces to a training dataset. However, when testing the live emotion detection, the program crashed and was unable to handle changes in facial expressions in real-time as required for the intended demonstration. While the concepts and integration of libraries worked as expected on stored images, live video processing posed challenges that prevented a successful demonstration.

presentation

The document proposes a dense optical flow-based approach to emotion recognition from videos. It extracts dense optical flow features from labeled training videos to train an SVM classifier. During testing, it determines facial movements from unlabeled videos using optical flow and classifies the emotion. It achieved 82-90% accuracy on a dataset of 372 videos across 6 people expressing 4 emotions. Future work includes combining classifiers focusing on different facial regions and testing robustness to distance and camera angle.

Robust face name graph matching for movie character identification - Final PPT

Priyadarshini Dasarathan

The document proposes a robust face name graph matching system for movie character identification. It involves detecting faces accurately using clustering, representing them in a graph, and matching faces using an edit-operation based graph matching algorithm. The system aims to identify characters in low resolution or complex backgrounds. It analyzes existing limitations and proposes a two-scheme approach using external script resources and original graphs. The system architecture involves modules for login/authentication, detection, training, and recognition. It concludes the proposed schemes improve clustering and identification of faces from movies and discusses future work on optimal functions for genres and exploiting more character relationships.

Deep Representation: Building a Semantic Image Search Engine

This document discusses building a semantic image search engine through deep representation learning. It describes using a pre-trained convolutional neural network to extract image embeddings, which are then used to build an index for fast proximity search of similar images. The model is further trained to predict word embeddings for images, allowing image search to be guided by text queries beyond the initial training classes. This provides a way to build a joint image-text embedding space and enable generalized image search using only a small dataset for training.

Face recognition technology

This document discusses face recognition technology. It defines biometrics as measurable human characteristics used for identification. Face recognition is a biometric that analyzes facial features from images. It has advantages over other biometrics like fingerprints in not requiring physical contact. The document outlines the process of face recognition including image capture, feature extraction, comparison, and matching. It also discusses factors like accuracy rates and response time.

ppt.pdf

This document describes a study that used convolutional neural networks (CNNs) for animal classification from images. The study proposed a novel method for animal face classification using CNN features. The CNN model was trained on images to classify animals into different classes. The model achieved over 90% accuracy on the test data. The authors concluded that CNNs are well-suited for image classification tasks like animal classification due to their ability to automatically extract relevant features from images. Future work could involve classifying other objects using this deep learning approach.

Emotion recognition using image processing in deep learning

The user's emotion is detected from facial expressions, which can be captured from a live camera feed or taken from a pre-existing image in memory. Recognizing human emotions has broad scope in computer vision, and considerable research has already been done on it.

We propose a compact CNN model for facial expression recognition.

The work is implemented in Python using the Open Source Computer Vision Library (OpenCV) together with the NumPy, pandas, and Keras packages. The scanned image (testing dataset) is compared against the training dataset to predict the emotion.

Big Data Spain 2018: How to build Weighted XGBoost ML model for Imbalance dat...

Alok Singh is a Principal Engineer at IBM CODAIT who has built multiple analytical frameworks and machine learning algorithms. The presentation provides an overview of building predictive models for imbalanced datasets using scikit-learn and XGBoost. It discusses challenges with imbalanced data, evaluation metrics like confusion matrix and ROC curves, and techniques for imbalanced learning including weighted classes, oversampling minorities and undersampling majorities, and SMOTE. The presentation concludes with a hands-on tutorial demonstrating these techniques on an imbalanced bank marketing dataset.
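
The class-weighting idea listed above can be sketched in a few lines. This is an illustration under stated assumptions rather than the presentation's own code: the first helper follows the heuristic behind scikit-learn's `class_weight="balanced"` option, the second computes the negative-to-positive ratio commonly passed to XGBoost's `scale_pos_weight` parameter, and the 90/10 label split is made up.

```python
from collections import Counter


def balanced_class_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {cls: n_samples / (n_classes * n) for cls, n in counts.items()}


def scale_pos_weight(labels, positive=1):
    """Ratio of negative to positive examples for a binary problem."""
    pos = sum(1 for y in labels if y == positive)
    return (len(labels) - pos) / pos


# Hypothetical imbalanced labels: 90 negatives, 10 positives.
labels = [0] * 90 + [1] * 10
weights = balanced_class_weights(labels)

print(weights)
print(scale_pos_weight(labels))
```

The rare class receives the larger weight, so each of its errors costs more during training; oversampling, undersampling, and SMOTE attack the same imbalance by changing the data instead of the loss.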

ppt 20BET1024.pptx

This document describes a project to implement real-time facial recognition using OpenCV and Python. The project uses a laptop's webcam to capture video frames and detect and recognize faces in each frame. It trains an image dataset with face images and IDs then detects faces in each new video frame. It predicts faces by comparing features to the training data and labels matches based on a confidence level threshold. The document outlines the use of Haar cascade classifiers, LBPH algorithms, and OpenCV functions to complete the facial recognition process in real-time on new video frames from the webcam.

cvpresentation-190812154654 (1).pptx

This document describes a project to implement real-time facial recognition using OpenCV and Python. The project uses a laptop's webcam to capture video frames and detect and recognize faces in each frame. It trains an image dataset with face images and IDs then detects faces in each new video frame. It predicts faces by comparing features to the training data and labels matches based on a confidence level threshold. The document outlines the use of Haar cascade classifiers, LBPH algorithms, and OpenCV functions to complete the facial recognition process in real-time on new video frames from the webcam.

An Enhanced Independent Component-Based Human Facial Expression Recognition ...

This document presents a facial expression recognition system that uses enhanced independent component analysis and fisher linear discriminant analysis (EICA-FLDA) for feature extraction from video frames, and hidden Markov models (HMM) for expression recognition. The system is tested on the Cohn-Kanade facial expression database and achieves a mean recognition rate of 93.23% for six universal expressions (anger, joy, sad, disgust, fear, surprise). Facial expression recognition has applications in human-computer interaction domains like online gaming.

Machine Learning without the Math: An overview of Machine Learning

A brief, high-level overview of Machine Learning and its associated tasks. This presentation discusses key concepts without the maths. The more mathematically inclined are referred to Bishop's book, Pattern Recognition and Machine Learning.

FACE RECOGNITION ACROSS NON-UNIFORM MOTION BLUR

The original image is obtained with the read command in the MATLAB code. The remaining images are the illuminated image, the blurred image, the de-blurred image, the illuminated blurred image processed with the LBP technique, the original image processed with the LBP technique, and the closest-match gallery image. The closest-match gallery image is obtained by comparing against all the images in the database.

A study on face recognition technique based on eigenface

This document summarizes a study on face recognition techniques based on eigenfaces. It discusses the eigenface algorithm which represents faces as weighted combinations of eigenvectors derived from face images. The document outlines the eigenface initialization process and recognition steps. It also summarizes experimental results testing recognition accuracy on several databases using different numbers of training images per person. The conclusion discusses improving single-sample-per-person recognition for real-time applications like identifying individuals from CCTV footage using their Aadhaar card face image as the training sample.
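
The eigenface idea described above, faces represented as weighted combinations of eigenvectors, can be sketched with NumPy. This is a minimal illustration, not the study's implementation: the function names are hypothetical, random vectors stand in for real face images, and nearest-neighbour matching on the projection weights is an assumed recognition rule.

```python
import numpy as np


def fit_eigenfaces(faces, n_components):
    """faces: (n_samples, n_pixels) array of flattened grayscale images."""
    mean_face = faces.mean(axis=0)
    centered = faces - mean_face
    # Rows of vt are the principal directions of the data ("eigenfaces").
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return mean_face, vt[:n_components]


def project(face, mean_face, eigenfaces):
    """Weights of one face in the eigenface basis."""
    return eigenfaces @ (face - mean_face)


def recognize(face, mean_face, eigenfaces, train_weights, train_labels):
    """Label of the training face whose weights are closest."""
    w = project(face, mean_face, eigenfaces)
    dists = np.linalg.norm(train_weights - w, axis=1)
    return train_labels[int(np.argmin(dists))]


rng = np.random.default_rng(0)
# Synthetic stand-in for face images: two "people", five noisy 8x8 images each.
prototypes = rng.random((2, 64))
faces = np.vstack([p + 0.05 * rng.standard_normal((5, 64)) for p in prototypes])
labels = [0] * 5 + [1] * 5

mean_face, eigenfaces = fit_eigenfaces(faces, n_components=3)
train_weights = (faces - mean_face) @ eigenfaces.T

probe = prototypes[1] + 0.05 * rng.standard_normal(64)
print(recognize(probe, mean_face, eigenfaces, train_weights, labels))
```

Using the SVD of the centered data avoids forming the full pixel-by-pixel covariance matrix, which matters once images are larger than this toy size.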

Computer Vision - Real Time Face Recognition using Open CV and Python

This project covers the creation, training, and detection/recognition of face datasets using a machine learning algorithm on a laptop's webcam.

Face Recognition

Face Recognition Across Non-Uniform Motion Blur, Illumination & Pose IEEE (Only an overview, for seminars and presentations)

Facial recognition locker for android

This document describes a facial recognition locker app for Android called FaceRecog. It allows users to unlock their phone, block calls, and replace the lock screen using facial recognition. The app requires one administrator who can unlock with a password in addition to trained faces. It gives users freedom to train their face in different conditions and set a confidence threshold. The app uses face detection and recognition approaches like LBP (Local Binary Patterns) and detects facial features to recognize faces. Future work includes improving performance and extending it to handle illumination and poses.

A DEEP LEARNING APPROACH FOR SEMANTIC SEGMENTATION IN BRAIN TUMOR IMAGES

Digital image processing is a vast field with many applications, including criminal face detection, fingerprint authentication, medical imaging, and object recognition. Brain tumor detection plays an important role in the medical field: it identifies the tumor-affected part of the brain along with its shape, size, and boundary.

Segmentation and the subsequent quantitative assessment of lesions in medical images provide valuable information for the analysis of neuropathologies and are important for planning treatment strategies, monitoring disease progression, and predicting patient outcome. For a better understanding of the pathophysiology of diseases, quantitative imaging can reveal clues about the disease characteristics and effects on particular anatomical structures.

Online exam software

The document describes an online exam software called Skill Evaluation Lab that can be used to conduct online exams as an alternative to paper-based exams. It lists several features of the software including the ability to create questions in various formats, include voice questions and handwritten responses, communicate with exam takers, and generate performance reports. It then provides examples of how the software could be used by colleges, corporations, schools, governments, training institutions, and recruitment agencies to conduct exams, evaluate skills, and improve training and recruitment processes.

ppt 20BET1024.pptx

This document describes a project to implement real-time facial recognition using OpenCV and Python. The goals are to train an image dataset with face IDs, detect faces in webcam video frames, predict faces based on a confidence level, and improve the model with more training data. The process involves creating training and test datasets, training the data using LBPH to extract features, and predicting faces in new frames in real-time by comparing histograms. The project uses Anaconda, OpenCV libraries, and common functions for detection, drawing, labeling, and training. Further enhancements could include more training data and implementing CNNs with TensorFlow.
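
The LBPH step described above, extracting features and comparing histograms, rests on the Local Binary Pattern code of each pixel. The sketch below is a simplified pure-Python illustration with hypothetical helper names: it builds one histogram for the whole image, whereas LBPH recognizers typically split the face into a grid of cells and concatenate the per-cell histograms, and chi-square is used as one common histogram distance.

```python
def lbp_code(img, r, c):
    """8-bit Local Binary Pattern code for the pixel at (r, c)."""
    center = img[r][c]
    # Neighbours visited clockwise, starting at the top-left corner.
    offsets = [(-1, -1), (-1, 0), (-1, 1), (0, 1),
               (1, 1), (1, 0), (1, -1), (0, -1)]
    code = 0
    for dr, dc in offsets:
        code = (code << 1) | (1 if img[r + dr][c + dc] >= center else 0)
    return code


def lbp_histogram(img):
    """256-bin histogram of LBP codes over all interior pixels."""
    hist = [0] * 256
    for r in range(1, len(img) - 1):
        for c in range(1, len(img[0]) - 1):
            hist[lbp_code(img, r, c)] += 1
    return hist


def chi_square(h1, h2):
    """Chi-square distance between two histograms (0 means identical)."""
    return sum((a - b) ** 2 / (a + b) for a, b in zip(h1, h2) if a + b > 0)


# Tiny made-up "images": a flat patch and a striped patch.
flat = [[5] * 4 for _ in range(4)]
stripes = [[0, 9, 0, 9] for _ in range(4)]

print(chi_square(lbp_histogram(flat), lbp_histogram(flat)))
print(chi_square(lbp_histogram(flat), lbp_histogram(stripes)))
```

A recognizer built on this would store one histogram per training face and label a new face with the identity of the closest stored histogram, rejecting the match when the distance exceeds a confidence threshold.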

neuralAC

This document discusses using a cascade correlation neural network (CCNN) to capture the drawing style of a caricaturist in order to automatically generate caricatures. It proposes extracting facial components from original images, mean faces, and caricatures to create training data. The CCNN is trained using this data to learn the exaggerations made by the caricaturist. Experiments show the CCNN can accurately predict nonlinear exaggerations to components. The approach aims to address limitations of existing caricature generation systems by learning an individual artist's unique style through training on their deformations of facial objects.

KorraAI - a probabilistic virtual agent framework

This presentation describes KorraAI, a new framework for conceiving and building embodied conversational agents (ECAs). KorraAI can model the overall behavior of an ECA. It allows an ECA to be proactive and to encode changes in human behavior over time as well as changes based on interactions with users. The framework avoids fixed interaction workflows and is designed not to be predictable on different levels of interaction with human users. This is achieved by using statistical distributions, probabilistic programming, probabilistic inference, and Bayesian networks. Management of uncertain data is also integrated. A demo can be downloaded. KorraAI is plugin-based, and ECA models can be easily shared between ECA designers.

Face recognition: A Comparison of Appearance Based Approaches

Face recognition approaches can be divided into three main categories: direct correlation, eigenfaces, and fisherfaces. Direct correlation directly compares pixel intensity values between images. Eigenfaces uses principal component analysis to project faces into a face space defined by eigenvectors. Fisherfaces aims to maximize between-class variations while minimizing within-class variations to better account for differences in lighting and expressions. Pre-processing techniques like color normalization, histogram equalization, and edge detection can improve the accuracy of face recognition systems by reducing the effects of lighting variations. Testing various pre-processing techniques on different approaches found that the fisherfaces method combined with SLBC preprocessing achieved the lowest error rate of 17.8%, followed closely by direct correlation with intensity normalization at 18.

GraphRAG for Life Science to increase LLM accuracy

GraphRAG for the life science domain, where information is retrieved from biomedical knowledge graphs using LLMs to increase the accuracy and performance of generated answers.

What do a Lego brick and the XZ backdoor have in common?

ABSTRACT: At first glance, a Lego brick and the XZ backdoor might have in common the fact that both are building blocks, or dependencies of creative and software projects. In reality, a Lego brick and the XZ backdoor case have much more than that in common.

Join the presentation to dive into a story of interoperability, standards, and open formats, and then discuss the important role that contributors play in a sustainable open source community.

BIO: An advocate of free software and of standard, open formats. She has been an active member of the Fedora and openSUSE projects and co-founded the LibreItalia Association, where she was involved in several events, migrations, and training activities related to LibreOffice. She previously worked on LibreOffice migrations and training courses for several public administrations and private organizations. Since January 2020 she has worked at SUSE as a Software Release Engineer for Uyuni and SUSE Manager, and when she is not following her passion for computers and Geeko she cultivates her curiosity for astronomy (hence her nickname, deneb_alpha).

More Related Content

Similar to Real-time Emotion Recognition

Deep Representation: Building a Semantic Image Search Engine

This document discusses building a semantic image search engine through deep representation learning. It describes using a pre-trained convolutional neural network to extract image embeddings, which are then used to build an index for fast proximity search of similar images. The model is further trained to predict word embeddings for images, allowing image search to be guided by text queries beyond the initial training classes. This provides a way to build a joint image-text embedding space and enable generalized image search using only a small dataset for training.

Face recognition technology

This document discusses face recognition technology. It defines biometrics as measurable human characteristics used for identification. Face recognition is a biometric that analyzes facial features from images. It has advantages over other biometrics like fingerprints in not requiring physical contact. The document outlines the process of face recognition including image capture, feature extraction, comparison, and matching. It also discusses factors like accuracy rates and response time.

ppt.pdf

This document describes a study that used convolutional neural networks (CNNs) for animal classification from images. The study proposed a novel method for animal face classification using CNN features. The CNN model was trained on images to classify animals into different classes. The model achieved over 90% accuracy on the test data. The authors concluded that CNNs are well-suited for image classification tasks like animal classification due to their ability to automatically extract relevant features from images. Future work could involve classifying other objects using this deep learning approach.

Emotion recognition using image processing in deep learning

User’s emotion using its facial expressions will be detected. These expressions can be derived from the live feed via system's camera or any pre-existing image available in the memory. Emotions possessed by humans can be recognized and has a vast scope of study in the computer vision industry upon which several researches have already been done.

We propose a compact CNN model for facial expression recognition.

The work has been implemented using Python Open Source Computer Vision Library (OpenCV) and NumPy,pandas,keras packages. The scanned image (testing dataset) is being compared to training dataset and thus emotion is predicted.

Big Data Spain 2018: How to build Weighted XGBoost ML model for Imbalance dat...

Alok Singh is a Principal Engineer at IBM CODAIT who has built multiple analytical frameworks and machine learning algorithms. The presentation provides an overview of building predictive models for imbalanced datasets using scikit-learn and XGBoost. It discusses challenges with imbalanced data, evaluation metrics like confusion matrix and ROC curves, and techniques for imbalanced learning including weighted classes, oversampling minorities and undersampling majorities, and SMOTE. The presentation concludes with a hands-on tutorial demonstrating these techniques on an imbalanced bank marketing dataset.

ppt 20BET1024.pptx

This document describes a project to implement real-time facial recognition using OpenCV and Python. The project uses a laptop's webcam to capture video frames and detect and recognize faces in each frame. It trains an image dataset with face images and IDs then detects faces in each new video frame. It predicts faces by comparing features to the training data and labels matches based on a confidence level threshold. The document outlines the use of Haar cascade classifiers, LBPH algorithms, and OpenCV functions to complete the facial recognition process in real-time on new video frames from the webcam.

cvpresentation-190812154654 (1).pptx

This document describes a project to implement real-time facial recognition using OpenCV and Python. The project uses a laptop's webcam to capture video frames and detect and recognize faces in each frame. It trains an image dataset with face images and IDs then detects faces in each new video frame. It predicts faces by comparing features to the training data and labels matches based on a confidence level threshold. The document outlines the use of Haar cascade classifiers, LBPH algorithms, and OpenCV functions to complete the facial recognition process in real-time on new video frames from the webcam.

An Enhanced Independent Component-Based Human Facial Expression Recognition ...

This document presents a facial expression recognition system that uses enhanced independent component analysis and fisher linear discriminant analysis (EICA-FLDA) for feature extraction from video frames, and hidden Markov models (HMM) for expression recognition. The system is tested on the Cohn-Kanade facial expression database and achieves a mean recognition rate of 93.23% for six universal expressions (anger, joy, sad, disgust, fear, surprise). Facial expression recognition has applications in human-computer interaction domains like online gaming.

Machine Learning without the Math: An overview of Machine Learning

A brief overview of Machine Learning and its associated tasks from a high level. This presentation discusses key concepts without the maths.The more mathematically inclined are referred to Bishops book on Pattern Recognition and Machine Learning.

FACE RECOGNITION ACROSS NON-UNIFORM MOTION BLUR

we will get the original image by giving the read command in the MAT LAB code. The remaining images are the illuminated image, blurred image, de-blurred image, illuminated blurred image which is modulated with the LBP technique, original image which is modulated with the LBP technique and the closest match gallery image. The closest match gallery image is obtained by comparing with all the images present in the database.

A study on face recognition technique based on eigenface

This document summarizes a study on face recognition techniques based on eigenfaces. It discusses the eigenface algorithm which represents faces as weighted combinations of eigenvectors derived from face images. The document outlines the eigenface initialization process and recognition steps. It also summarizes experimental results testing recognition accuracy on several databases using different numbers of training images per person. The conclusion discusses improving single-sample-per-person recognition for real-time applications like identifying individuals from CCTV footage using their Aadhaar card face image as the training sample.

Computer Vision - Real Time Face Recognition using Open CV and Python

This project is used for creation,training and detection/recognition of Face Datasets using Machine learning algorithm on Laptop’s Webcam.

Face Recognition

Face Recognition Across Non-Uniform Motion Blur, Illumination & Pose IEEE (Only an overview, for seminars and presentations)

Facial recognition locker for android

This document describes a facial recognition locker app for Android called FaceRecog. It allows users to unlock their phone, block calls, and replace the lock screen using facial recognition. The app requires one administrator who can unlock with a password in addition to trained faces. It gives users freedom to train their face in different conditions and set a confidence threshold. The app uses face detection and recognition approaches like LBP (Local Binary Patterns) and detects facial features to recognize faces. Future work includes improving performance and extending it to handle illumination and poses.

A DEEP LEARNING APPROACH FOR SEMANTIC SEGMENTATION IN BRAIN TUMOR IMAGES

Digital image processing is a vast field with many applications, including criminal face detection, fingerprint authentication, medical imaging, and object recognition. Brain tumor detection plays an important role in the medical field: it identifies the tumor-affected part of the brain along with its shape, size, and boundary.

Segmentation and the subsequent quantitative assessment of lesions in medical images provide valuable information for the analysis of neuropathologies and are important for planning treatment strategies, monitoring disease progression, and predicting patient outcome. For a better understanding of the pathophysiology of diseases, quantitative imaging can reveal clues about the disease characteristics and effects on particular anatomical structures.

Online exam software

The document describes an online exam software called Skill Evaluation Lab that can be used to conduct online exams as an alternative to paper-based exams. It lists several features of the software including the ability to create questions in various formats, include voice questions and handwritten responses, communicate with exam takers, and generate performance reports. It then provides examples of how the software could be used by colleges, corporations, schools, governments, training institutions, and recruitment agencies to conduct exams, evaluate skills, and improve training and recruitment processes.

ppt 20BET1024.pptx

This document describes a project to implement real-time facial recognition using OpenCV and Python. The goals are to train an image dataset with face IDs, detect faces in webcam video frames, predict faces based on a confidence level, and improve the model with more training data. The process involves creating training and test datasets, training the data using LBPH to extract features, and predicting faces in new frames in real-time by comparing histograms. The project uses Anaconda, OpenCV libraries, and common functions for detection, drawing, labeling, and training. Further enhancements could include more training data and implementing CNNs with TensorFlow.
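The histogram comparison behind LBPH can be sketched in a few lines of Python. This is a simplified illustration, not the project's code or OpenCV's implementation: it builds one histogram over the whole image instead of one per grid cell, and the helper names `lbp_code`, `lbp_histogram`, and `chi_square`, as well as the choice of a chi-square distance, are assumptions made for the example.

```python
# Offsets of the 8 neighbours, clockwise from the top-left pixel.
NEIGHBOURS = [(-1, -1), (-1, 0), (-1, 1), (0, 1),
              (1, 1), (1, 0), (1, -1), (0, -1)]


def lbp_code(img, r, c):
    """Return the 8-bit local binary pattern of the pixel at (r, c).

    A bit is set when the corresponding neighbour is at least as bright
    as the centre pixel. `img` is a list of rows of grayscale values.
    """
    center = img[r][c]
    code = 0
    for bit, (dr, dc) in enumerate(NEIGHBOURS):
        if img[r + dr][c + dc] >= center:
            code |= 1 << bit
    return code


def lbp_histogram(img):
    """Normalised 256-bin histogram of LBP codes over interior pixels."""
    hist = [0] * 256
    for r in range(1, len(img) - 1):
        for c in range(1, len(img[0]) - 1):
            hist[lbp_code(img, r, c)] += 1
    total = sum(hist) or 1  # avoid dividing by zero on tiny images
    return [h / total for h in hist]


def chi_square(h1, h2):
    """Chi-square distance between two histograms (0 means identical)."""
    return sum((a - b) ** 2 / (a + b) for a, b in zip(h1, h2) if a + b > 0)
```

In a recognizer along these lines, the histogram of a new face would be compared with each stored histogram and the label with the smallest distance returned. Because each code depends only on comparisons with the centre pixel, adding a constant brightness offset to the whole image leaves the histogram unchanged.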

neuralAC

This document discusses using a cascade correlation neural network (CCNN) to capture the drawing style of a caricaturist in order to automatically generate caricatures. It proposes extracting facial components from original images, mean faces, and caricatures to create training data. The CCNN is trained using this data to learn the exaggerations made by the caricaturist. Experiments show the CCNN can accurately predict nonlinear exaggerations to components. The approach aims to address limitations of existing caricature generation systems by learning an individual artist's unique style through training on their deformations of facial objects.

KorraAI - a probabilistic virtual agent framework

In this presentation a new framework for conceiving and building embodied conversational agents (ECAs) called KorraAI is described. KorraAI can model the ECA overall behavior. It allows an ECA to be proactive and to encode changes in human behavior over time as well as based over interactions with users. It is a framework that avoids fixed interaction workflows and is designed not to be predictable on different levels of interaction with the human users. This is achieved by using statistical distributions, probabilistic programming, probabilistic inference and Bayesian networks. Management of uncertain data is also integrated. A demo can be downloaded. KorraAI is plugin-based and ECA models can be easily shared between ECA designers.

Face recognition: A Comparison of Appearance Based Approaches

Face recognition approaches can be divided into three main categories: direct correlation, eigenfaces, and fisherfaces. Direct correlation directly compares pixel intensity values between images. Eigenfaces uses principal component analysis to project faces into a face space defined by eigenvectors. Fisherfaces aims to maximize between-class variations while minimizing within-class variations to better account for differences in lighting and expressions. Pre-processing techniques like color normalization, histogram equalization, and edge detection can improve the accuracy of face recognition systems by reducing the effects of lighting variations. Testing various pre-processing techniques on different approaches found that the fisherfaces method combined with SLBC preprocessing achieved the lowest error rate of 17.8%, followed closely by direct correlation with intensity normalization at 18.

Recently uploaded

GraphRAG for Life Science to increase LLM accuracy

GraphRAG for the life science domain, where you retrieve information from biomedical knowledge graphs using LLMs to increase the accuracy and performance of generated answers.

What do a Lego brick and the XZ backdoor have in common?

ABSTRACT: At first glance, a Lego brick and the XZ backdoor might have in common the fact that both are building blocks, or dependencies of creative and software projects. In reality, a Lego brick and the XZ backdoor case have much more than that in common.

Join the presentation to immerse yourself in a story of interoperability, standards, and open formats, and then discuss the important role contributors play in a sustainable open source community.

BIO: An advocate of free software and of standard, open formats. She has been an active member of the Fedora and openSUSE projects and co-founded the LibreItalia Association, where she was involved in several LibreOffice-related events, migrations, and training. She previously worked on LibreOffice migrations and training courses for several public administrations and private organizations. Since January 2020 she has worked at SUSE as a Software Release Engineer for Uyuni and SUSE Manager, and when she is not following her passion for computers and Geeko she cultivates her curiosity about astronomy (from which her nickname deneb_alpha derives).

Uni Systems Copilot event_05062024_C.Vlachos.pdf

Unlocking Productivity: Leveraging the Potential of Copilot in Microsoft 365, a presentation by Christoforos Vlachos, Senior Solutions Manager – Modern Workplace, Uni Systems

GraphSummit Singapore | The Future of Agility: Supercharging Digital Transfor...

Leonard Jayamohan, Partner & Generative AI Lead, Deloitte

This keynote will reveal how Deloitte leverages Neo4j’s graph power for groundbreaking digital twin solutions, achieving a staggering 100x performance boost. Discover the essential role knowledge graphs play in successful generative AI implementations. Plus, get an exclusive look at an innovative Neo4j + Generative AI solution Deloitte is developing in-house.

Goodbye Windows 11: Make Way for Nitrux Linux 3.5.0!

As the digital landscape continually evolves, operating systems play a critical role in shaping user experiences and productivity. The launch of Nitrux Linux 3.5.0 marks a significant milestone, offering a robust alternative to traditional systems such as Windows 11. This article delves into the essence of Nitrux Linux 3.5.0, exploring its unique features, advantages, and how it stands as a compelling choice for both casual users and tech enthusiasts.

Microsoft - Power Platform_G.Aspiotis.pdf

Revolutionizing Application Development with AI-powered low-code, a presentation by George Aspiotis, Sr. Partner Development Manager, Microsoft

Building Production Ready Search Pipelines with Spark and Milvus

Spark is the widely used ETL tool for processing, indexing and ingesting data to serving stack for search. Milvus is the production-ready open-source vector database. In this talk we will show how to use Spark to process unstructured data to extract vector representations, and push the vectors to Milvus vector database for search serving.

UiPath Test Automation using UiPath Test Suite series, part 5

Welcome to UiPath Test Automation using UiPath Test Suite series part 5. In this session, we will cover CI/CD with DevOps.

Topics covered:

CI/CD within UiPath

End-to-end overview of CI/CD pipeline with Azure DevOps

Speaker:

Lyndsey Byblow, Test Suite Sales Engineer @ UiPath, Inc.

Artificial Intelligence for XML Development

In the rapidly evolving landscape of technologies, XML continues to play a vital role in structuring, storing, and transporting data across diverse systems. The recent advancements in artificial intelligence (AI) present new methodologies for enhancing XML development workflows, introducing efficiency, automation, and intelligent capabilities. This presentation will outline the scope and perspective of utilizing AI in XML development. The potential benefits and the possible pitfalls will be highlighted, providing a balanced view of the subject.

We will explore the capabilities of AI in understanding XML markup languages and autonomously creating structured XML content. Additionally, we will examine the capacity of AI to enrich plain text with appropriate XML markup. Practical examples and methodological guidelines will be provided to elucidate how AI can be effectively prompted to interpret and generate accurate XML markup.

Further emphasis will be placed on the role of AI in developing XSLT, or schemas such as XSD and Schematron. We will address the techniques and strategies adopted to create prompts for generating code, explaining code, or refactoring the code, and the results achieved.

The discussion will extend to how AI can be used to transform XML content. In particular, the focus will be on the use of AI XPath extension functions in XSLT, Schematron, Schematron Quick Fixes, or for XML content refactoring.

The presentation aims to deliver a comprehensive overview of AI usage in XML development, providing attendees with the necessary knowledge to make informed decisions. Whether you’re at the early stages of adopting AI or considering integrating it in advanced XML development, this presentation will cover all levels of expertise.

By highlighting the potential advantages and challenges of integrating AI with XML development tools and languages, the presentation seeks to inspire thoughtful conversation around the future of XML development. We’ll not only delve into the technical aspects of AI-powered XML development but also discuss practical implications and possible future directions.

GraphSummit Singapore | Neo4j Product Vision & Roadmap - Q2 2024

Maruthi Prithivirajan, Head of ASEAN & IN Solution Architecture, Neo4j

Get an inside look at the latest Neo4j innovations that enable relationship-driven intelligence at scale. Learn more about the newest cloud integrations and product enhancements that make Neo4j an essential choice for developers building apps with interconnected data and generative AI.

Removing Uninteresting Bytes in Software Fuzzing

Imagine a world where software fuzzing, the process of mutating bytes in test seeds to uncover hidden and erroneous program behaviors, becomes faster and more effective. A lot depends on the initial seeds, which can significantly dictate the trajectory of a fuzzing campaign, particularly in terms of how long it takes to uncover interesting behaviour in your code. We introduce DIAR, a technique designed to speed up fuzzing campaigns by pinpointing and eliminating the uninteresting bytes in the seeds. Picture this: instead of wasting valuable resources on meaningless mutations in large, bloated seeds, DIAR removes the unnecessary bytes, streamlining the entire process.

In this work, we equipped AFL, a popular fuzzer, with DIAR and examined two critical Linux libraries -- Libxml's xmllint, a tool for parsing XML documents, and Binutils' readelf, an essential debugging and security analysis command-line tool used to display detailed information about ELF (Executable and Linkable Format) files. Our preliminary results show that AFL+DIAR not only discovers new paths more quickly but also achieves higher coverage overall. This work thus showcases how starting with lean and optimized seeds can lead to faster, more comprehensive fuzzing campaigns -- and DIAR helps you find such seeds.

- These are slides of the talk given at IEEE International Conference on Software Testing Verification and Validation Workshop, ICSTW 2022.

Communications Mining Series - Zero to Hero - Session 1

This session provides an introduction to UiPath Communication Mining, its importance, and a platform overview. You will acquire a good understanding of the phases in Communication Mining as we go over the platform with you. Topics covered:

• Communication Mining Overview

• Why is it important?

• How can it help today’s business and the benefits

• Phases in Communication Mining

• Demo on Platform overview

• Q/A

Mind map of terminologies used in context of Generative AI

Mind map of common terms used in context of Generative AI.

How to Get CNIC Information System with Paksim Ga.pptx

Pakdata Cf is a groundbreaking system designed to streamline and facilitate access to CNIC information. This innovative platform leverages advanced technology to provide users with efficient and secure access to their CNIC details.

20240607 QFM018 Elixir Reading List May 2024

Everything I found interesting about the Elixir programming ecosystem in May 2024

Climate Impact of Software Testing at Nordic Testing Days

My slides at Nordic Testing Days 6.6.2024

The talk discusses the climate impact and sustainability of software testing. ICT and testing must carry their part of the global responsibility to help with climate warming. We can minimize the carbon footprint, but we can also have a carbon handprint, a positive impact on the climate. Sustainability can be added to the quality characteristics and then measured continuously. Test environments can be used less, at a smaller scale, and on demand. Test techniques can be used to optimize or minimize the number of tests. Test automation can be used to speed up testing.

Driving Business Innovation: Latest Generative AI Advancements & Success Story

Are you ready to revolutionize how you handle data? Join us for a webinar where we’ll bring you up to speed with the latest advancements in Generative AI technology and discover how leveraging FME with tools from giants like Google Gemini, Amazon, and Microsoft OpenAI can supercharge your workflow efficiency.

During the hour, we’ll take you through:

Guest Speaker Segment with Hannah Barrington: Dive into the world of dynamic real estate marketing with Hannah, the Marketing Manager at Workspace Group. Hear firsthand how their team generates engaging descriptions for thousands of office units by integrating diverse data sources—from PDF floorplans to web pages—using FME transformers, like OpenAIVisionConnector and AnthropicVisionConnector. This use case will show you how GenAI can streamline content creation for marketing across the board.

Ollama Use Case: Learn how Scenario Specialist Dmitri Bagh has utilized Ollama within FME to input data, create custom models, and enhance security protocols. This segment will include demos to illustrate the full capabilities of FME in AI-driven processes.

Custom AI Models: Discover how to leverage FME to build personalized AI models using your data. Whether it’s populating a model with local data for added security or integrating public AI tools, find out how FME facilitates a versatile and secure approach to AI.

We’ll wrap up with a live Q&A session where you can engage with our experts on your specific use cases, and learn more about optimizing your data workflows with AI.

This webinar is ideal for professionals seeking to harness the power of AI within their data management systems while ensuring high levels of customization and security. Whether you're a novice or an expert, gain actionable insights and strategies to elevate your data processes. Join us to see how FME and AI can revolutionize how you work with data!

UiPath Test Automation using UiPath Test Suite series, part 6

Welcome to UiPath Test Automation using UiPath Test Suite series part 6. In this session, we will cover Test Automation with generative AI and Open AI.

UiPath Test Automation with generative AI and Open AI webinar offers an in-depth exploration of leveraging cutting-edge technologies for test automation within the UiPath platform. Attendees will delve into the integration of generative AI, a test automation solution, with Open AI advanced natural language processing capabilities.

Throughout the session, participants will discover how this synergy empowers testers to automate repetitive tasks, enhance testing accuracy, and expedite the software testing life cycle. Topics covered include the seamless integration process, practical use cases, and the benefits of harnessing AI-driven automation for UiPath testing initiatives. By attending this webinar, testers, and automation professionals can gain valuable insights into harnessing the power of AI to optimize their test automation workflows within the UiPath ecosystem, ultimately driving efficiency and quality in software development processes.

What will you get from this session?

1. Insights into integrating generative AI.

2. Understanding how this integration enhances test automation within the UiPath platform

3. Practical demonstrations

4. Exploration of real-world use cases illustrating the benefits of AI-driven test automation for UiPath

Topics covered:

What is generative AI

Test Automation with generative AI and Open AI.

UiPath integration with generative AI

Speaker:

Deepak Rai, Automation Practice Lead, Boundaryless Group and UiPath MVP

GraphSummit Singapore | Graphing Success: Revolutionising Organisational Stru...

Sudheer Mechineni, Head of Application Frameworks, Standard Chartered Bank

Discover how Standard Chartered Bank harnessed the power of Neo4j to transform complex data access challenges into a dynamic, scalable graph database solution. This keynote will cover their journey from initial adoption to deploying a fully automated, enterprise-grade causal cluster, highlighting key strategies for modelling organisational changes and ensuring robust disaster recovery. Learn how these innovations have not only enhanced Standard Chartered Bank’s data infrastructure but also positioned them as pioneers in the banking sector’s adoption of graph technology.

Real-time Emotion Recognition

- 1. Countenance Classifier: How are you feeling today?

Liam Bui, Alexandre Lopes
Simon Fraser University

PROBLEM

Facial expression classification:

• Capture a live stream from a video camera attached to a laptop for our experiments.
• Apply and benchmark different machine learning models for facial expression recognition.
• Classify three different facial expressions: Neutral, Happy, and Surprise.
• Display the final predicted facial expression via a live feed using the laptop camera.

Applications of facial expression classification:

• Customer Engagement
• Virtual Reality Avatar

DATASET SOURCES

• Initial models were trained on the Kaggle Dataset with 35,000 facial expression images.
• Limited computation resources make it infeasible to experiment with the Kaggle Dataset.
• The CK Dataset supplemented with our self-created images is used in our final experiments.
• Classes such as Neutral and Sad appeared to be very similar to each other.

Traditional Approaches

• EigenFace
• FisherFace Technique

Simple Convolution Neural Network

[Architecture diagram; labels: Input Layer, Convolution Layer 1, Convolution Layer 2, Pooling Layer, Dense Layer, Output Layer, Dropout Layer]

Convolution Neural Network with Inception V3

[Architecture diagram; labels: Input Layer, Inception V3, Dense Layer, Output Layer, Dropout Layer]

EXPERIMENTS

Summary of Results

| Method | Accuracy without data augmentation | Accuracy with data augmentation |
| --- | --- | --- |
| EigenFace | 66% | 68% |
| FisherFace | 87% | 88.2% |
| Simple CNN | 94% | 94% |
| Inception V3 (Inception training disabled) | 91% | 91% |
| Inception V3 (Inception training enabled) | 99% | 99% |

Validation Accuracy by Epoch

[Figure: Inception V3 vs Simple CNN (with Data Augmentation); vertical axis: Validation %, 0 to 100; horizontal axis: 1 to 15; series: Validation Accuracy - CNN, Validation Accuracy - Inception V3]

LIVE DEMO

• Happy Expression
• Neutral Expression
• Surprise Expression

CONCLUSIONS

• Of the two traditional approaches, the FisherFace technique outperforms the EigenFace technique.
• The Convolutional Neural Network outperforms the traditional techniques.
• Enabling Inception V3 training improves model performance significantly.

LIMITATIONS

• Due to computing resource constraints, training the Inception V3 model on CPUs is time consuming.
• Hence, a small data set is used in our experiments.
• The live demo is more challenging because of varying conditions (lighting, blur, angles, glasses).
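As a rough illustration of the EigenFace approach named on the poster, the sketch below projects flattened face images onto their leading principal components and labels a new image by its nearest training neighbour in that space. It is not the authors' code: it assumes NumPy, the function names `fit_eigenfaces`, `project`, and `predict` are hypothetical, and the nearest-neighbour classifier is an assumption, since the poster does not say how the projections were classified.

```python
import numpy as np


def fit_eigenfaces(faces, num_components):
    """Compute the mean face and the leading eigenfaces.

    faces: array of shape (n_samples, n_pixels), one flattened
    grayscale image per row.
    """
    mean_face = faces.mean(axis=0)
    centered = faces - mean_face
    # The rows of vt are the principal axes of the centered data,
    # ordered by decreasing variance; these are the eigenfaces.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return mean_face, vt[:num_components]


def project(faces, mean_face, eigenfaces):
    """Return the eigenface weights of each image (one row per image)."""
    return (faces - mean_face) @ eigenfaces.T


def predict(train_weights, train_labels, query_weights):
    """Label each query with the label of its nearest training sample."""
    diffs = query_weights[:, None, :] - train_weights[None, :, :]
    dists = np.linalg.norm(diffs, axis=2)  # shape (n_queries, n_train)
    return [train_labels[i] for i in dists.argmin(axis=1)]
```

A typical use would fit the eigenfaces on the training images, project both the training images and each cropped face from a camera frame, and pass the resulting weights to `predict`. The number of components is a tuning choice that trades accuracy against speed.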