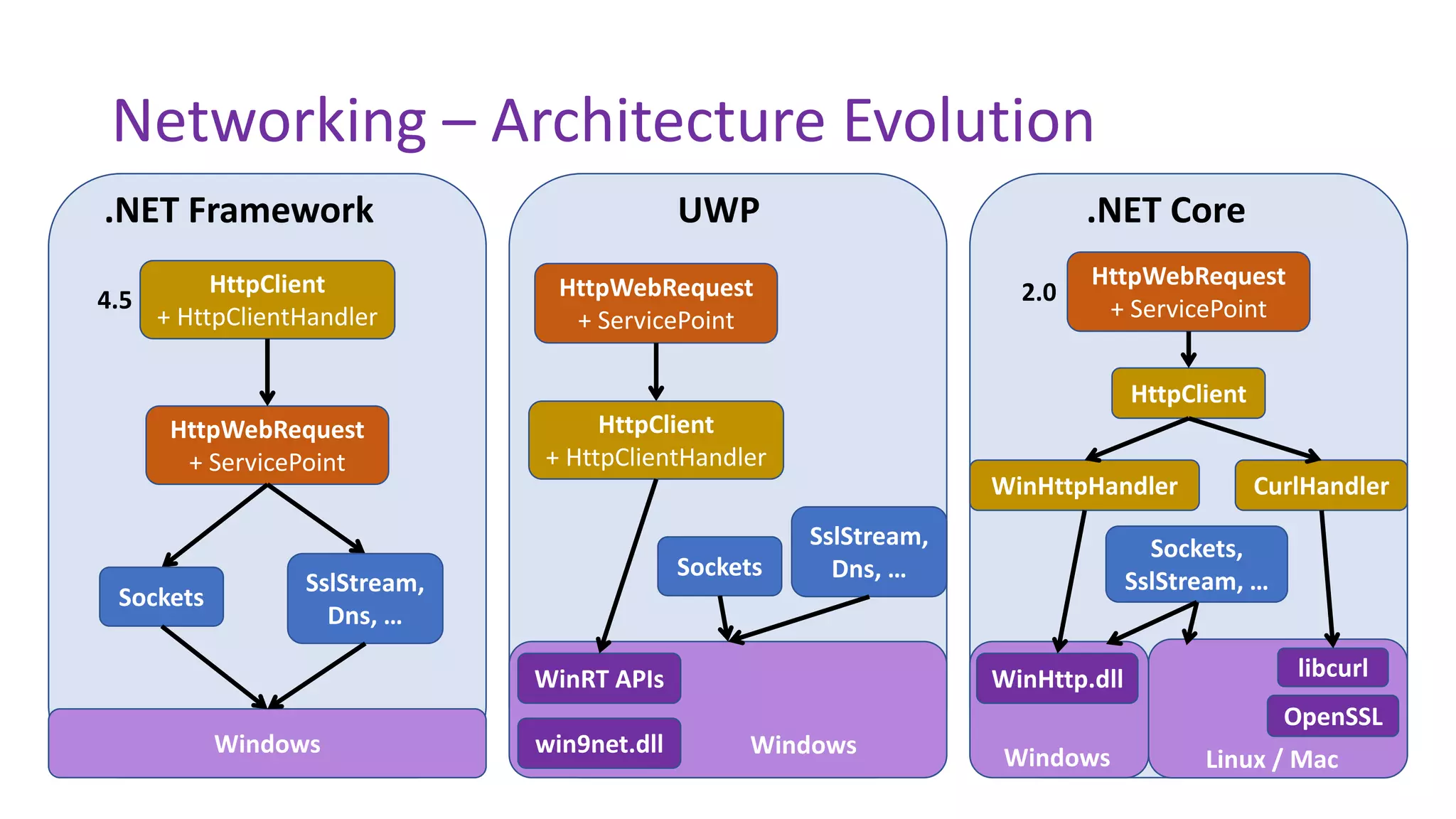

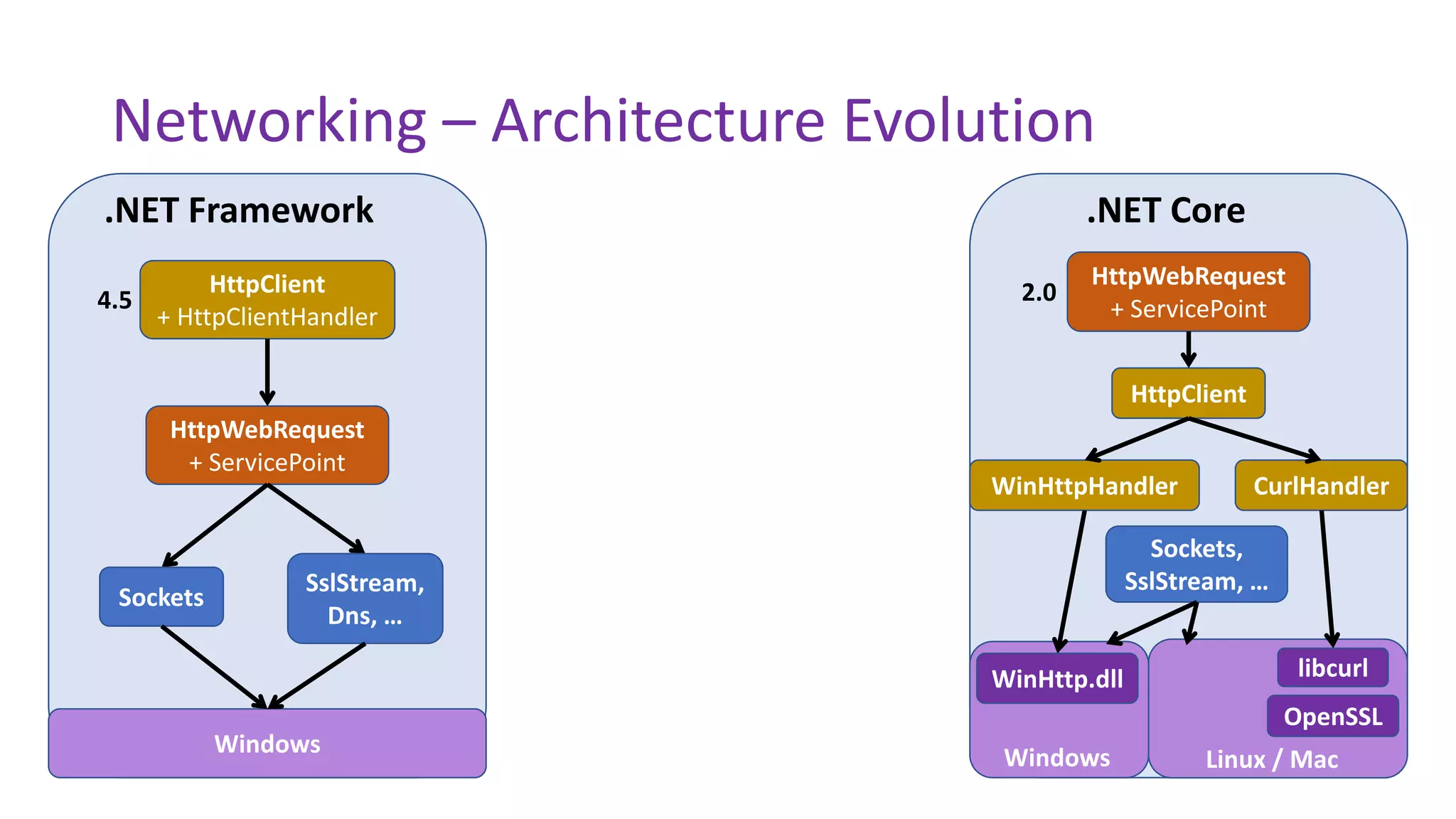

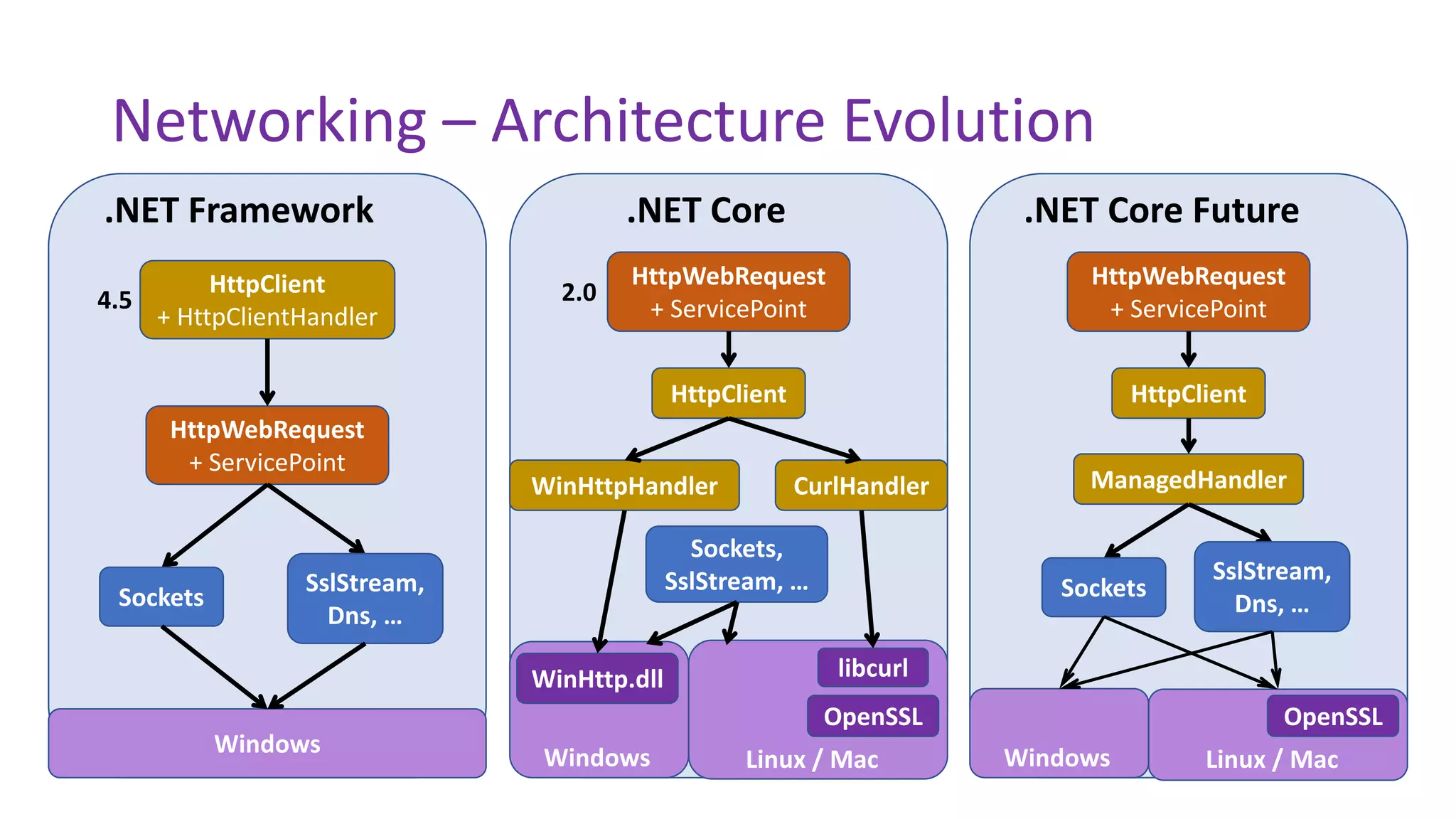

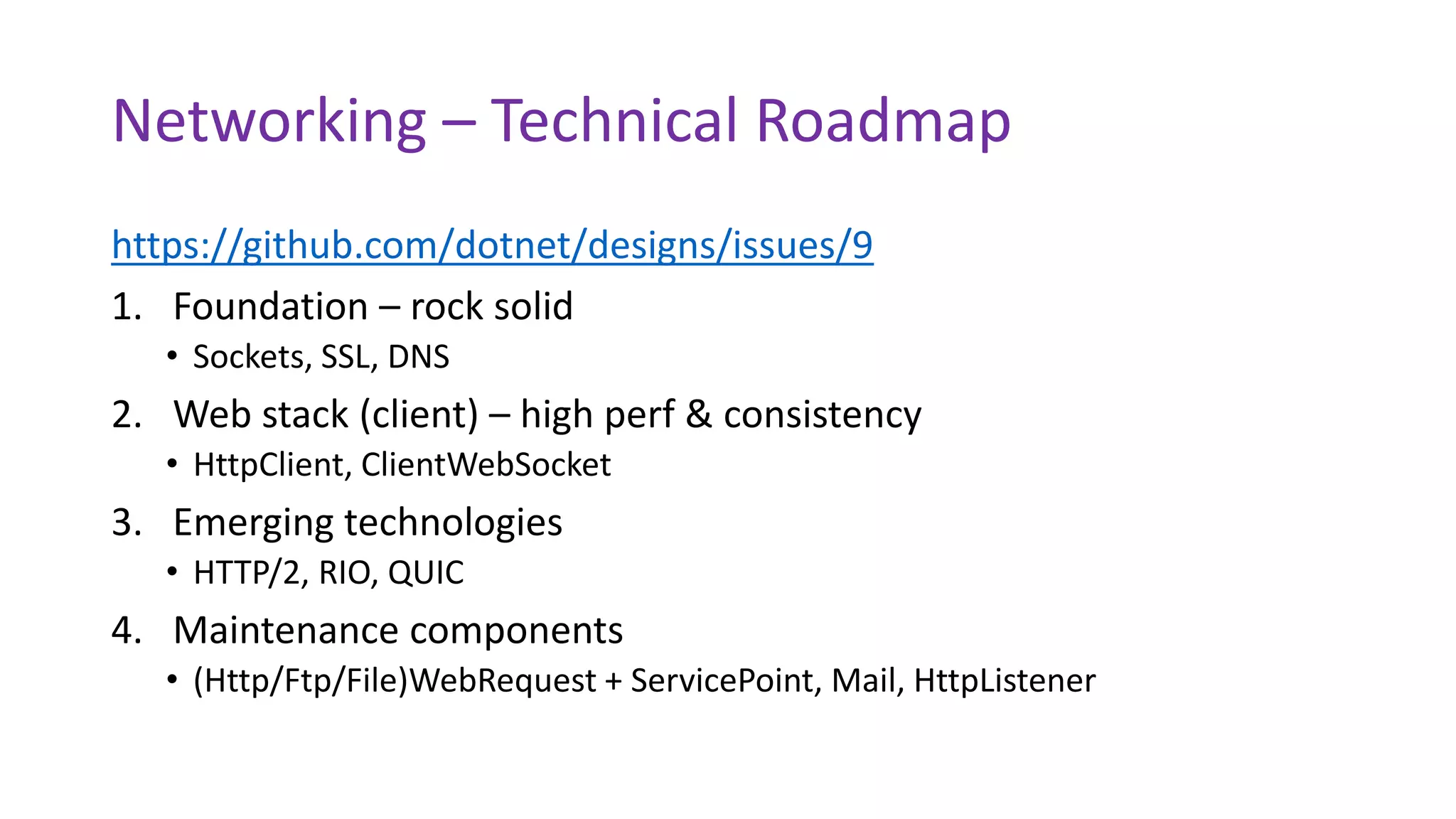

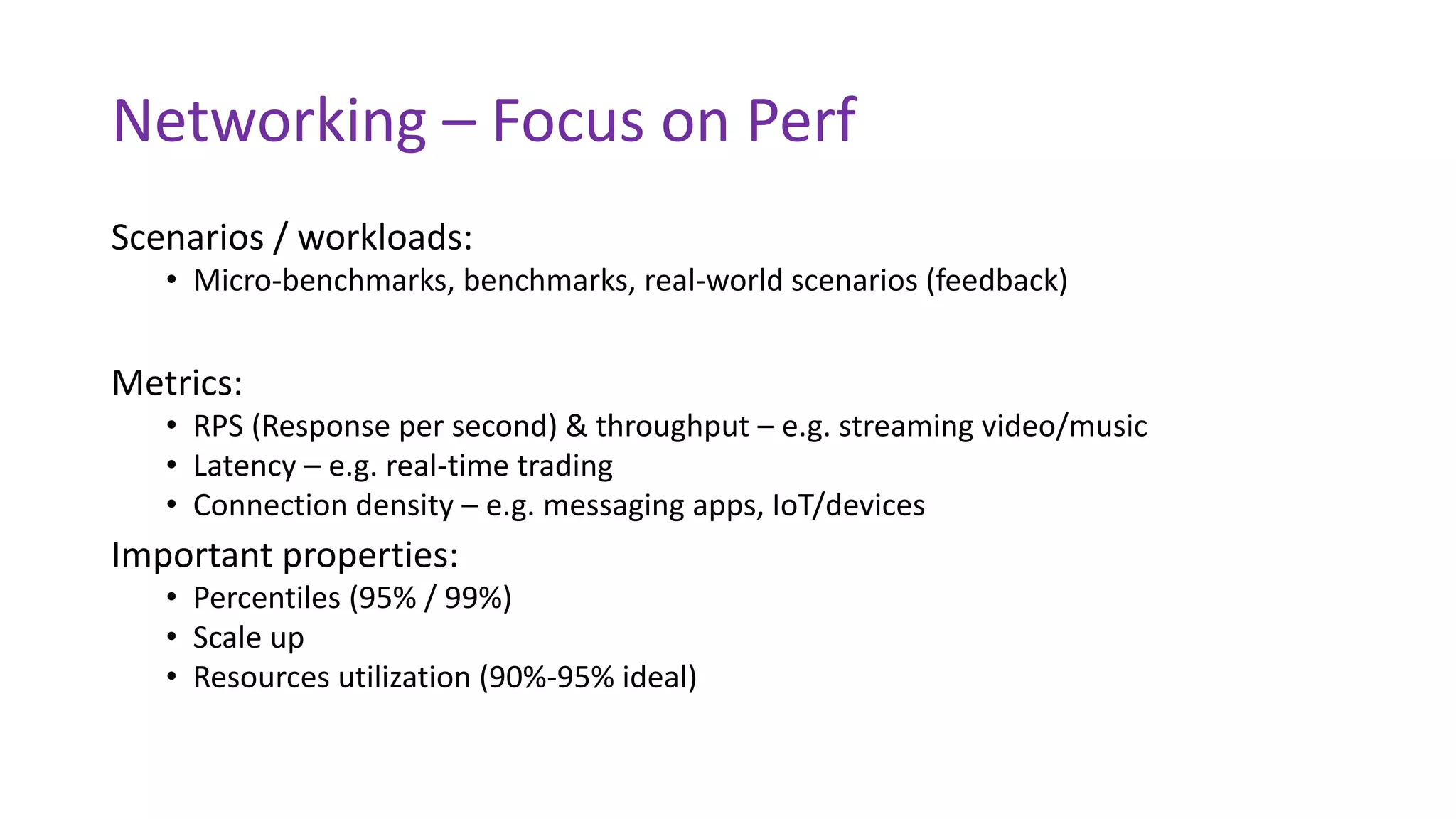

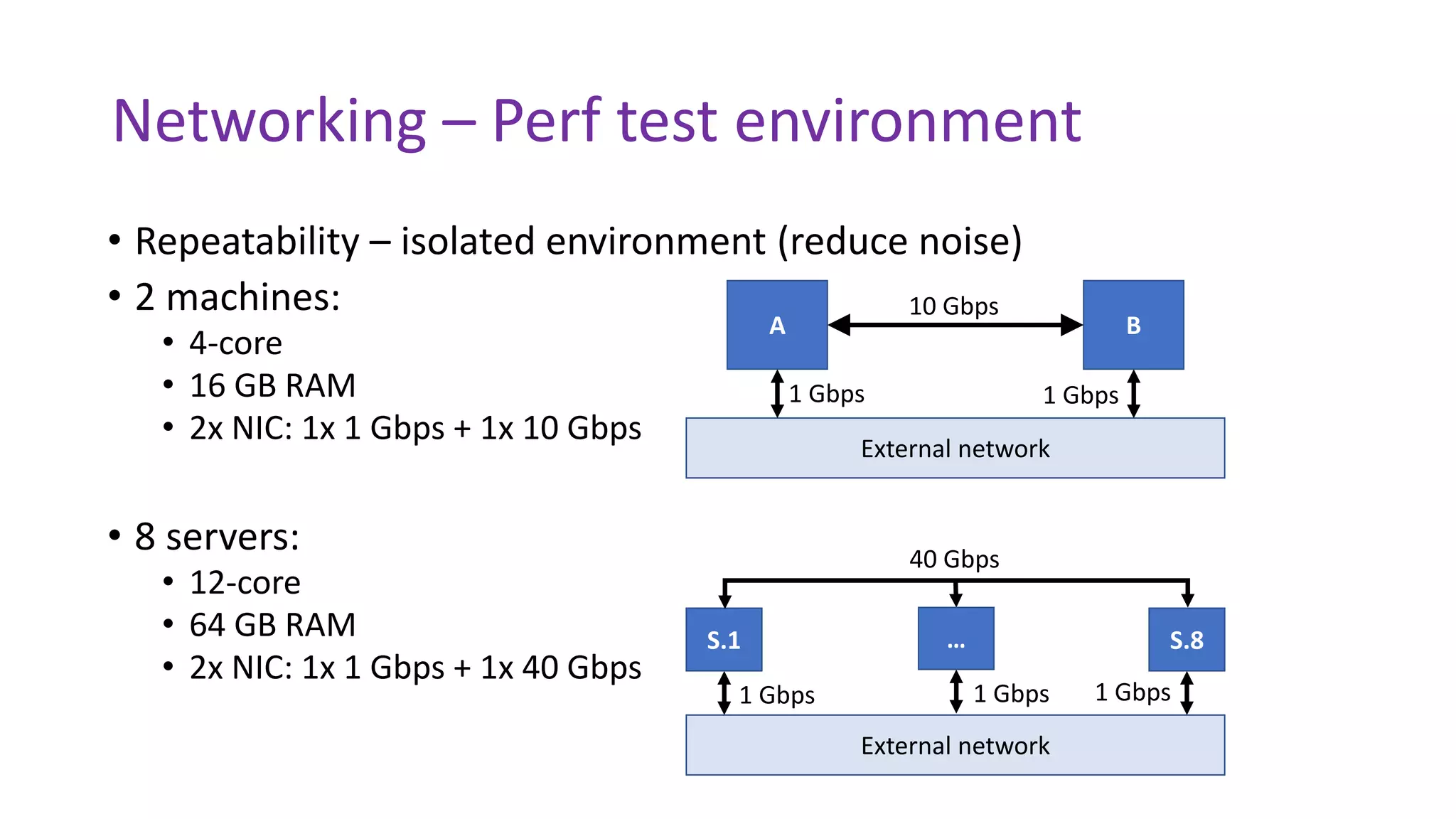

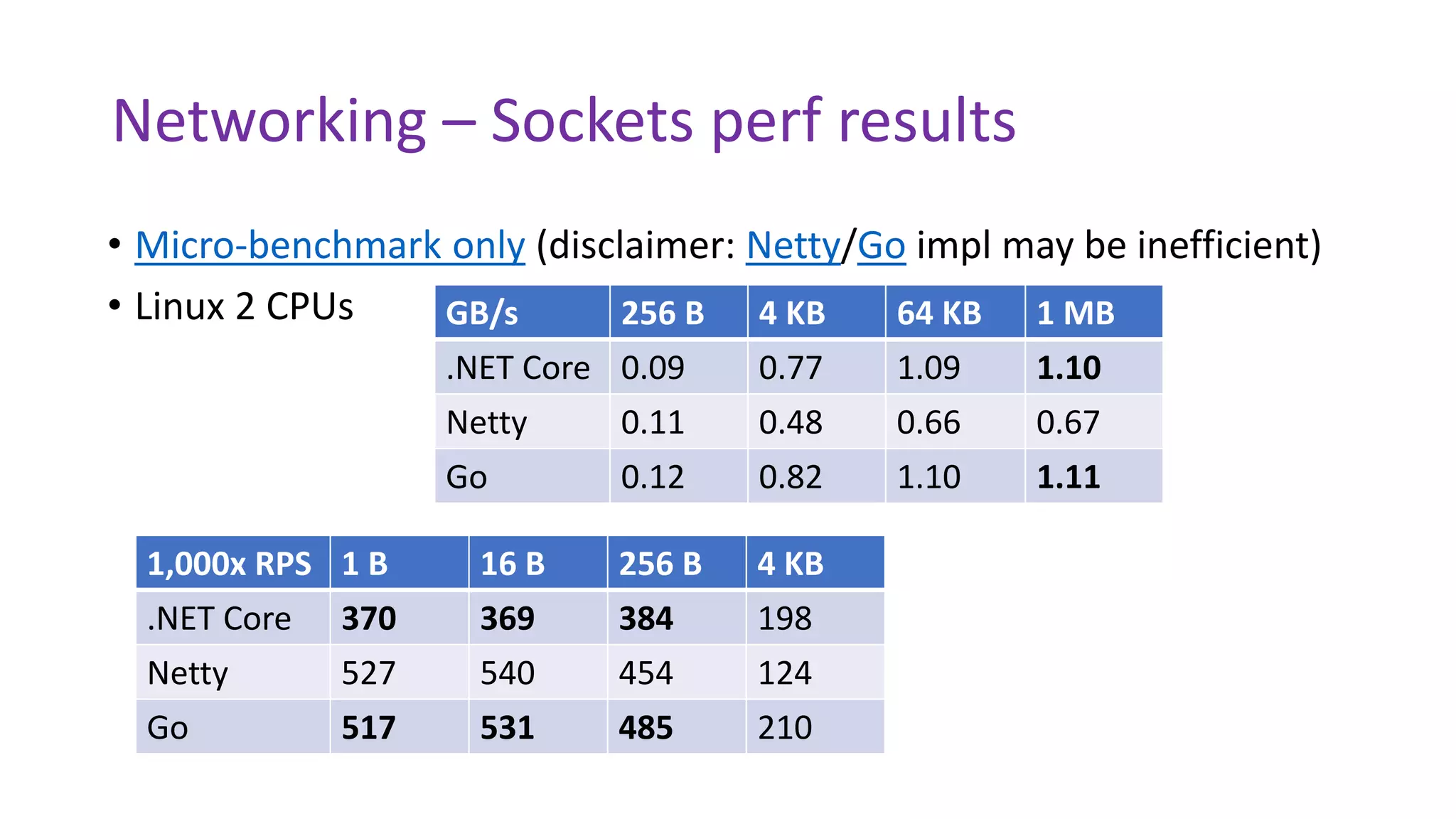

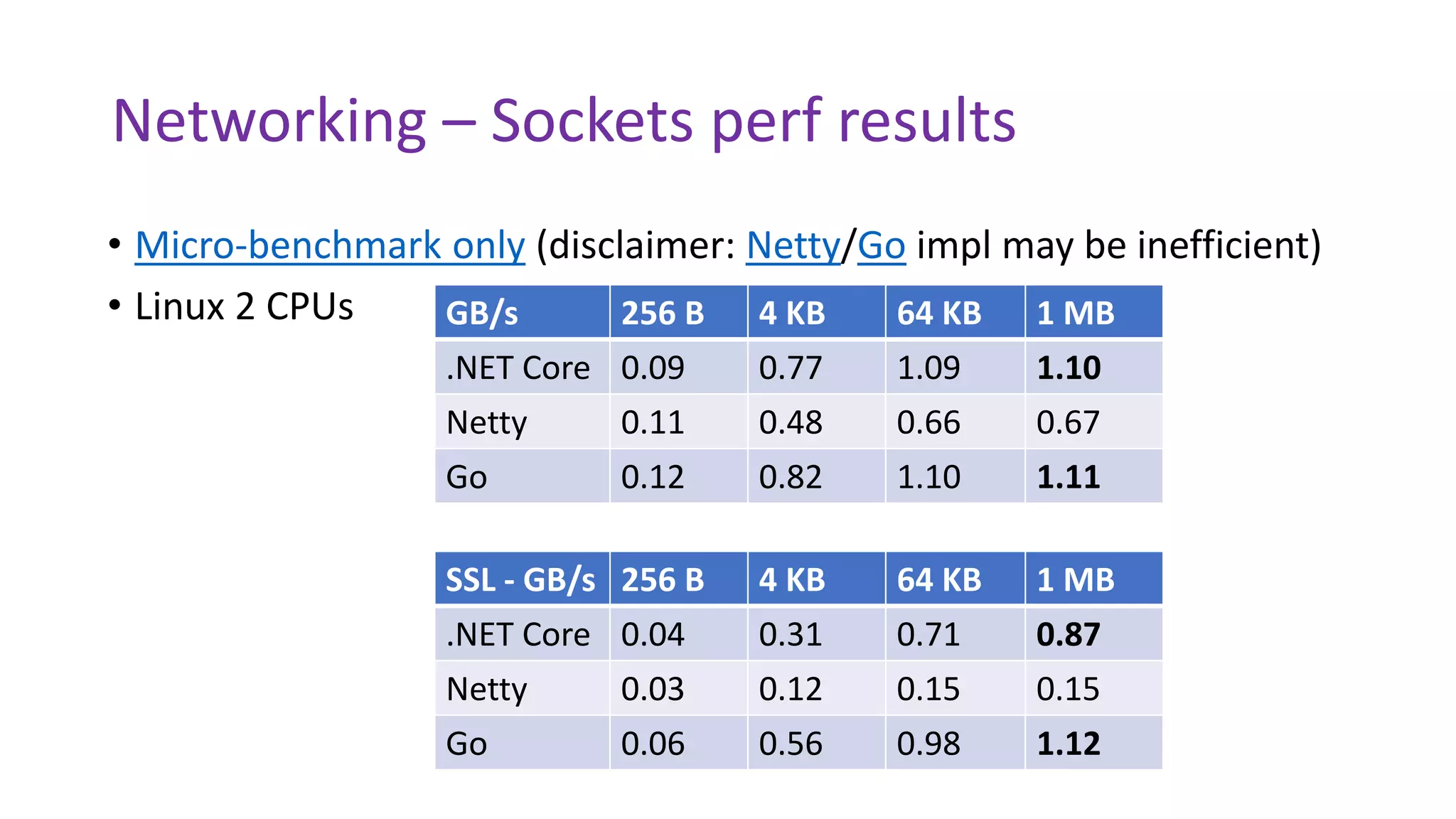

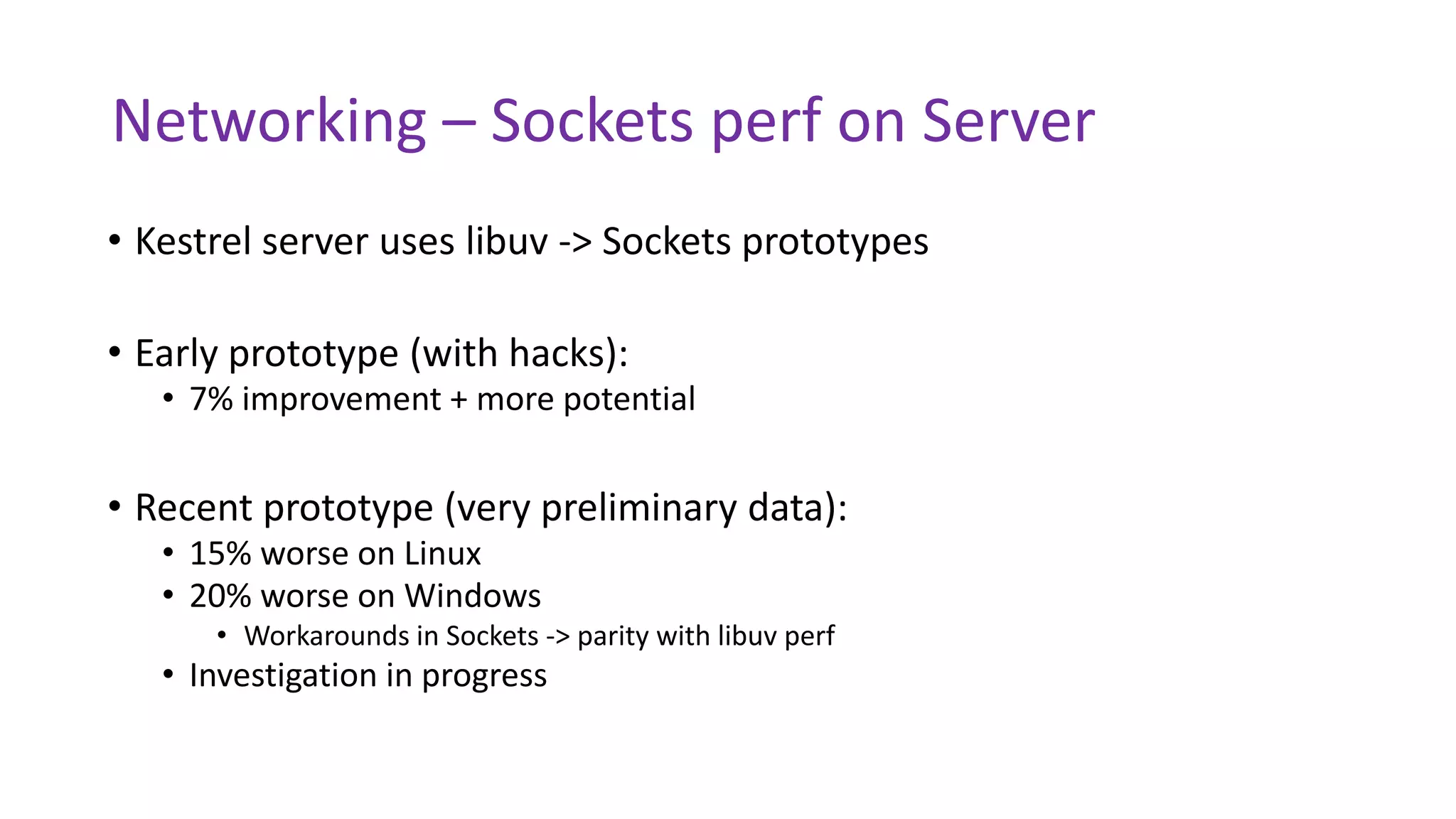

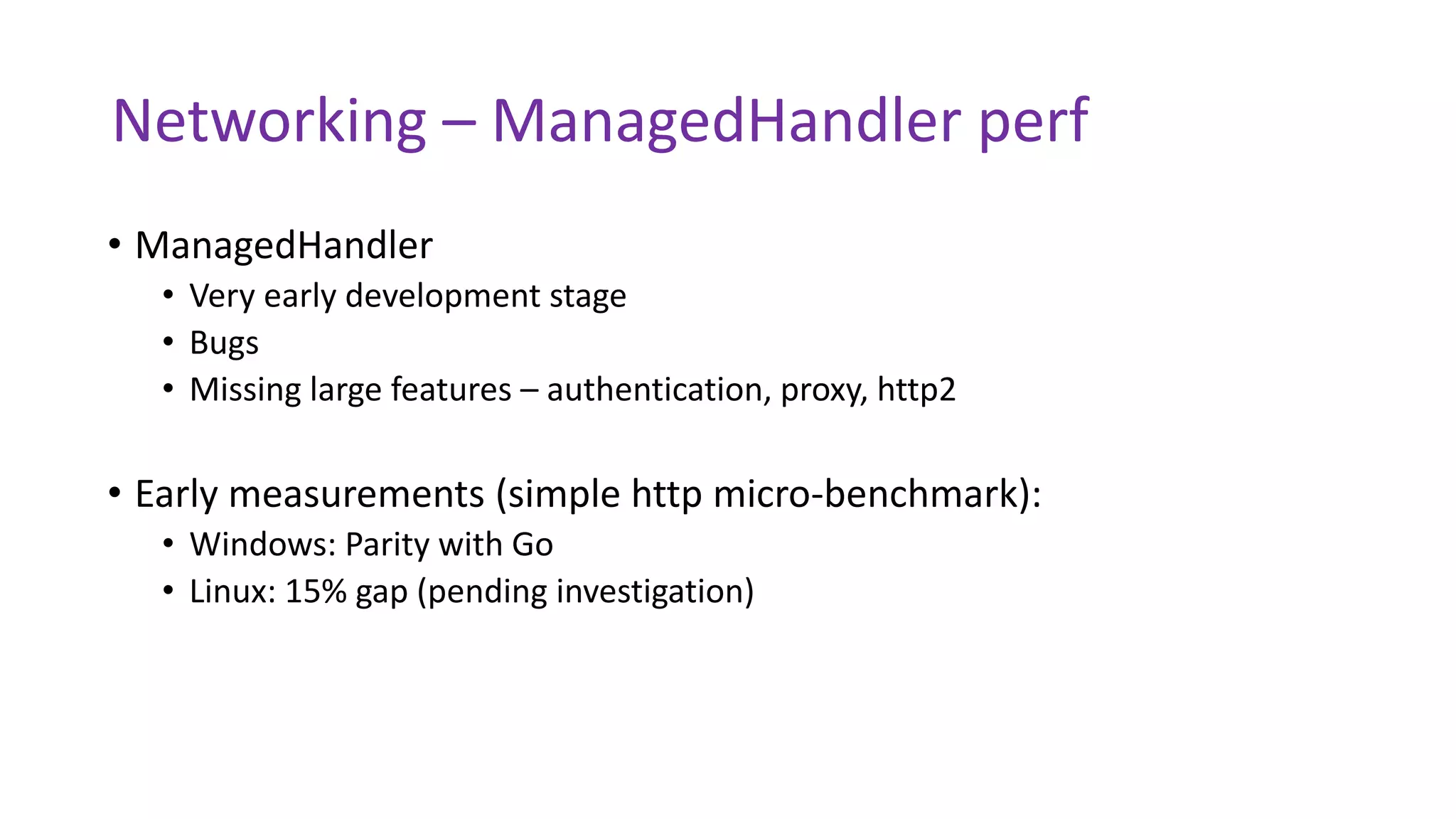

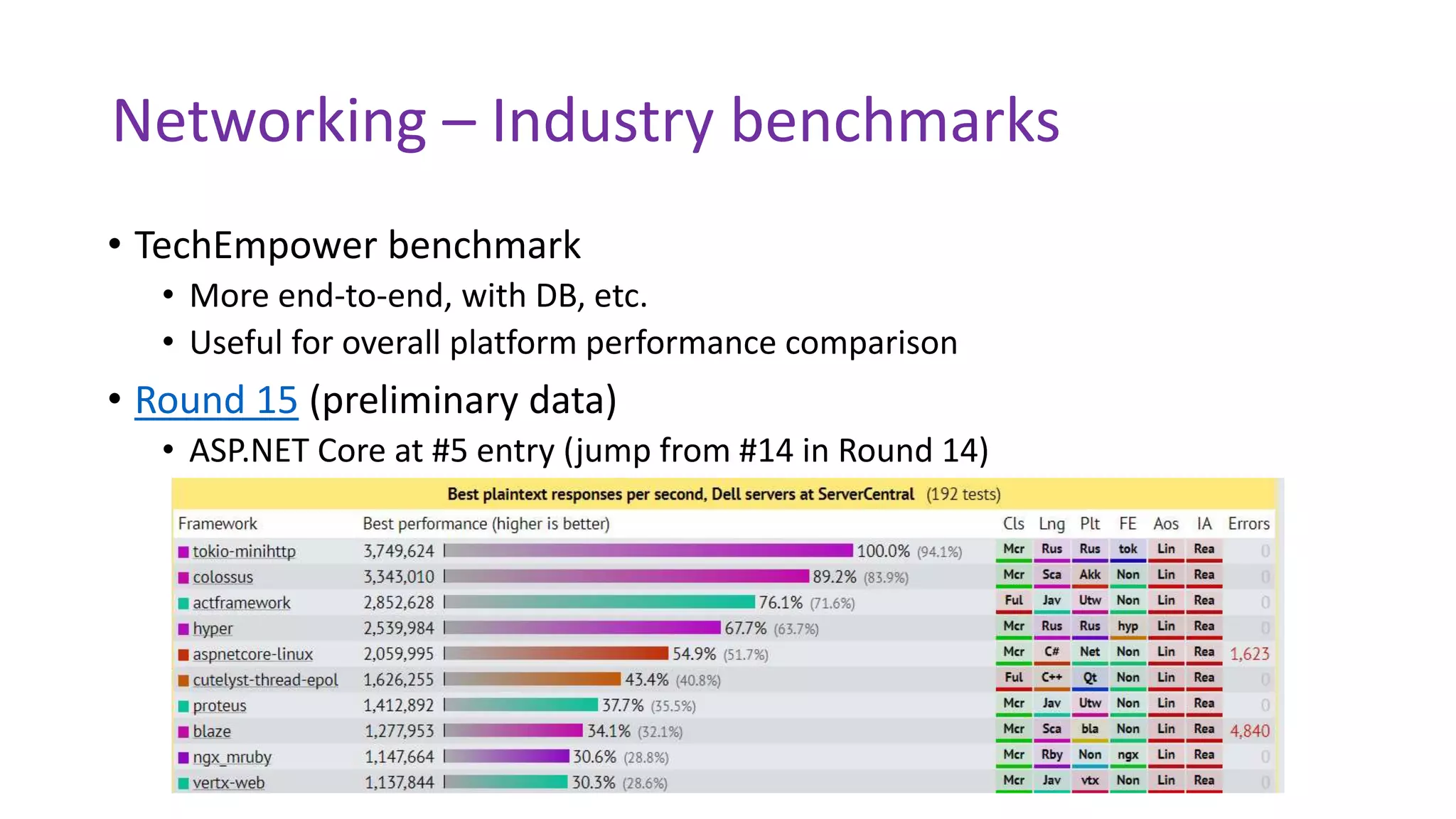

This presentation covers the evolution and performance of the .NET Core networking stack: its architecture, ongoing improvements, and performance testing results. Key focus areas are high performance across a range of workloads, the technical roadmap, and benchmarking against other technologies. It emphasizes proactive investment in performance and consistent networking solutions across platforms.