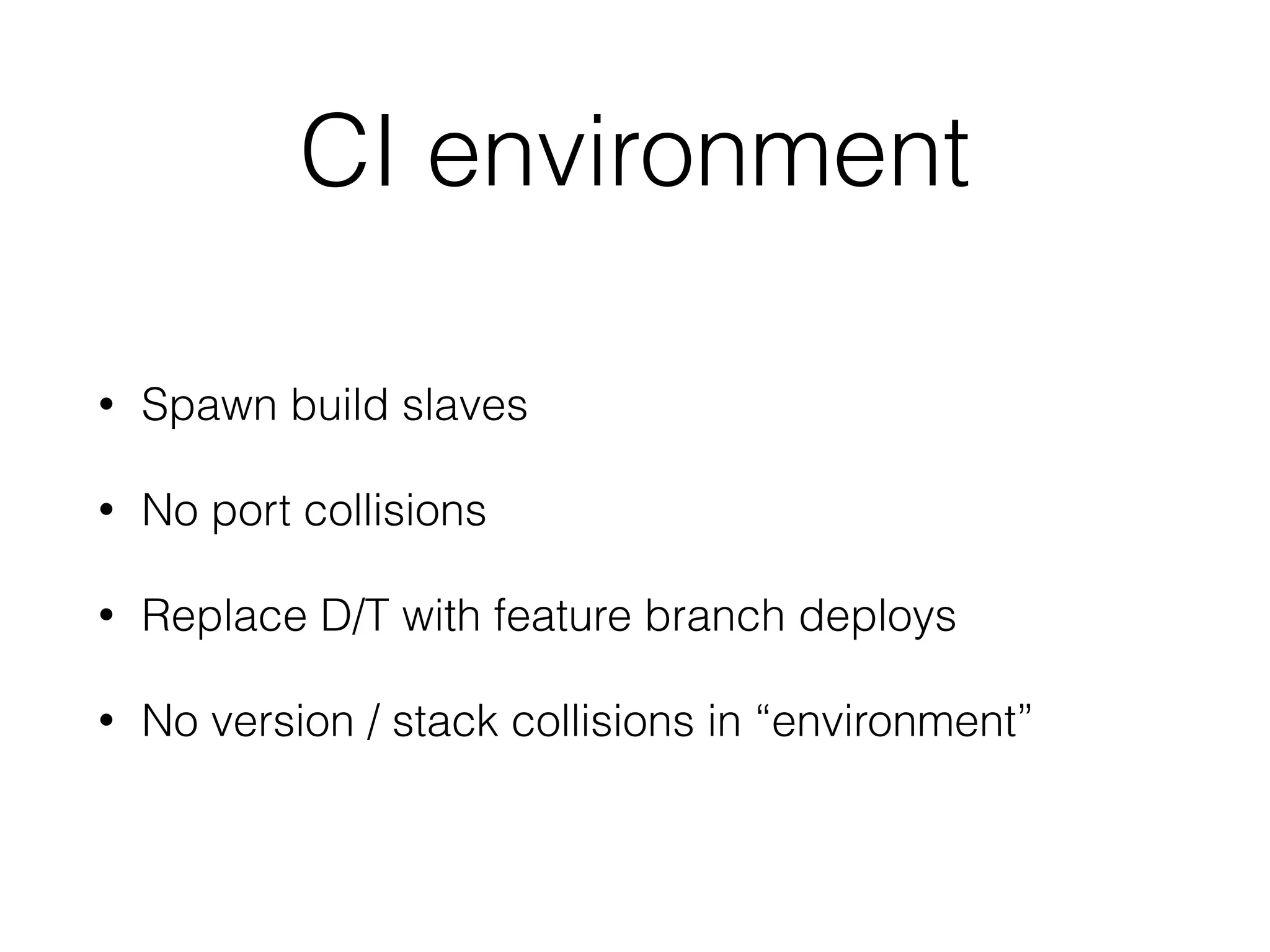

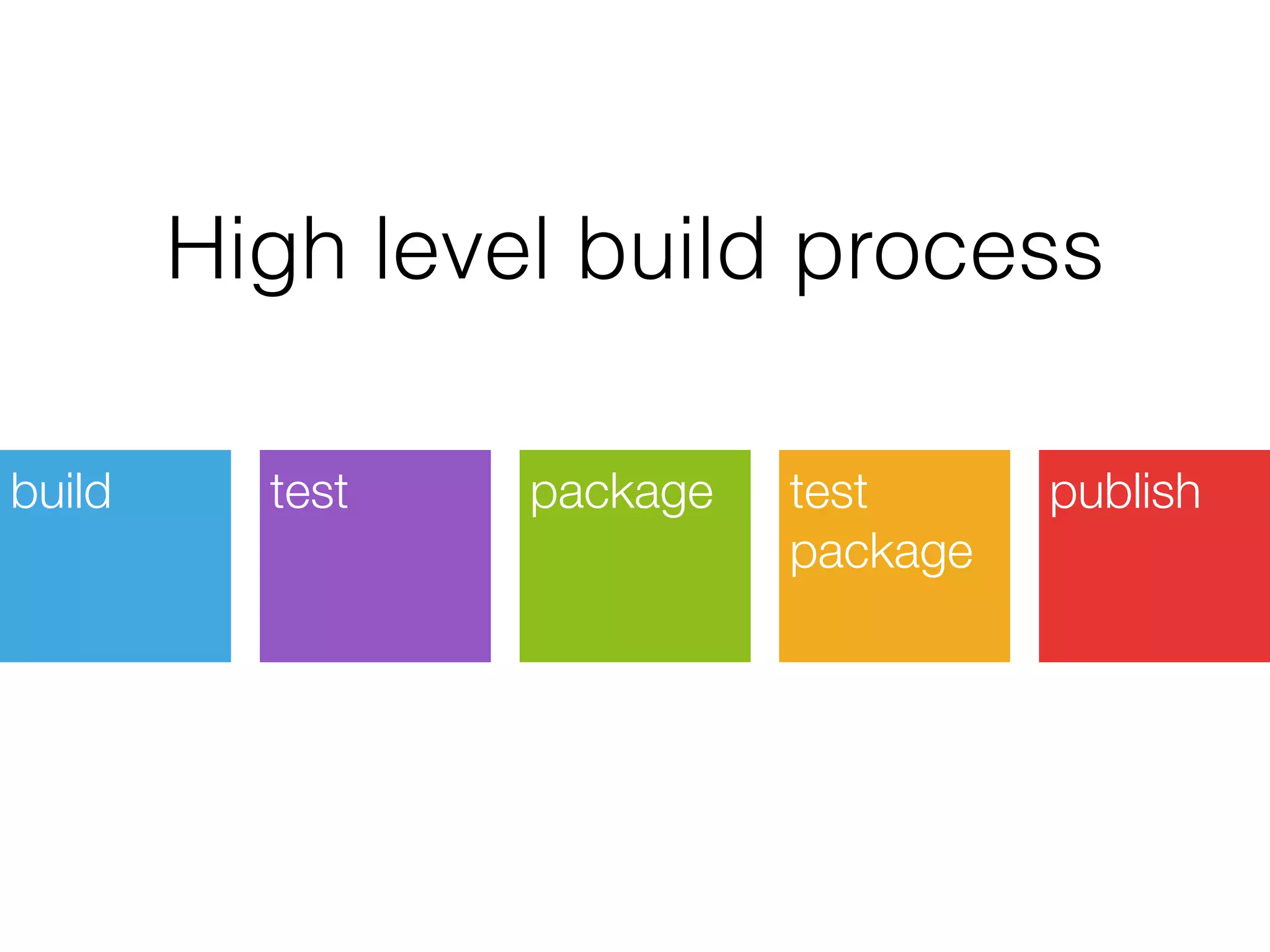

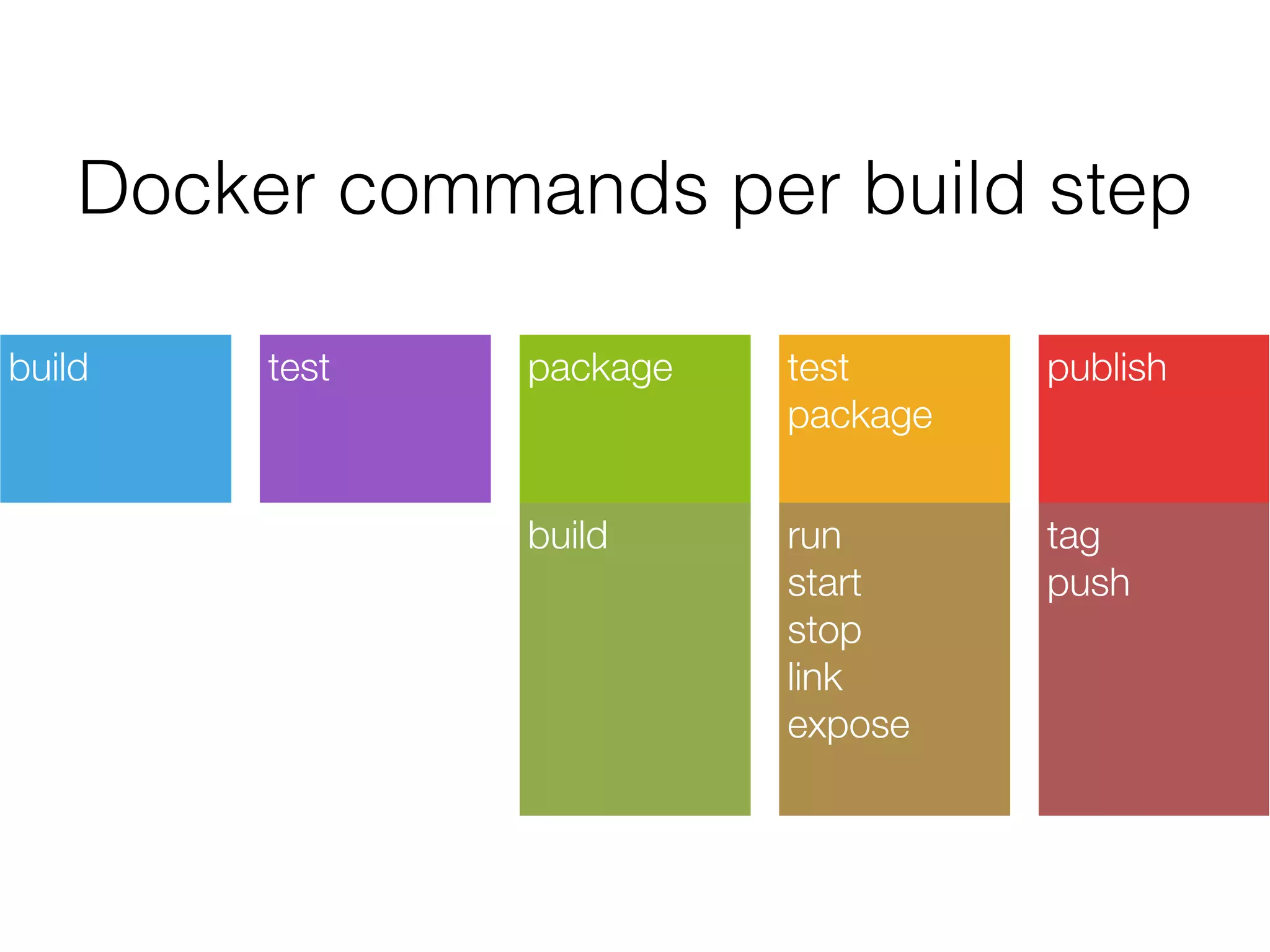

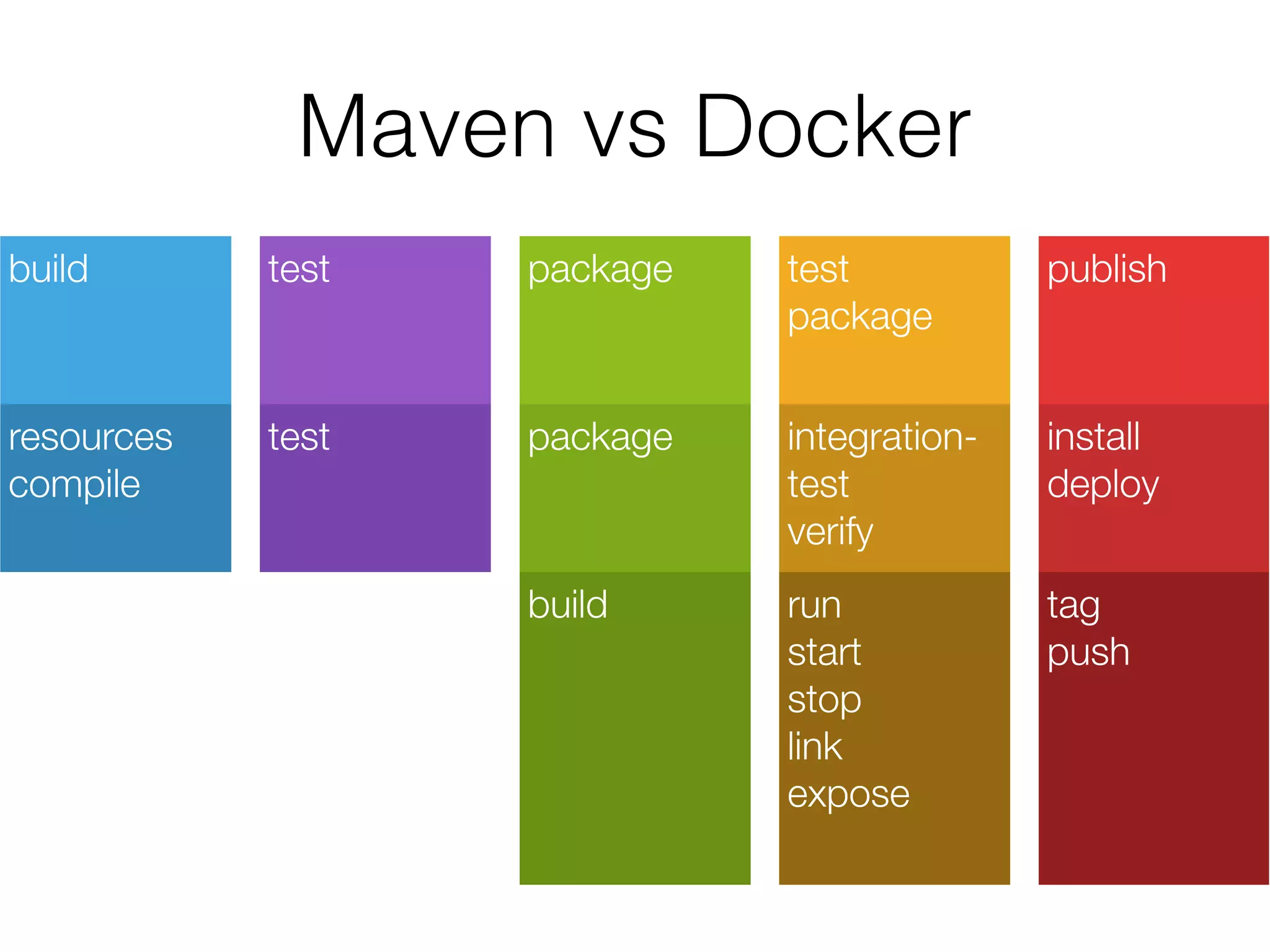

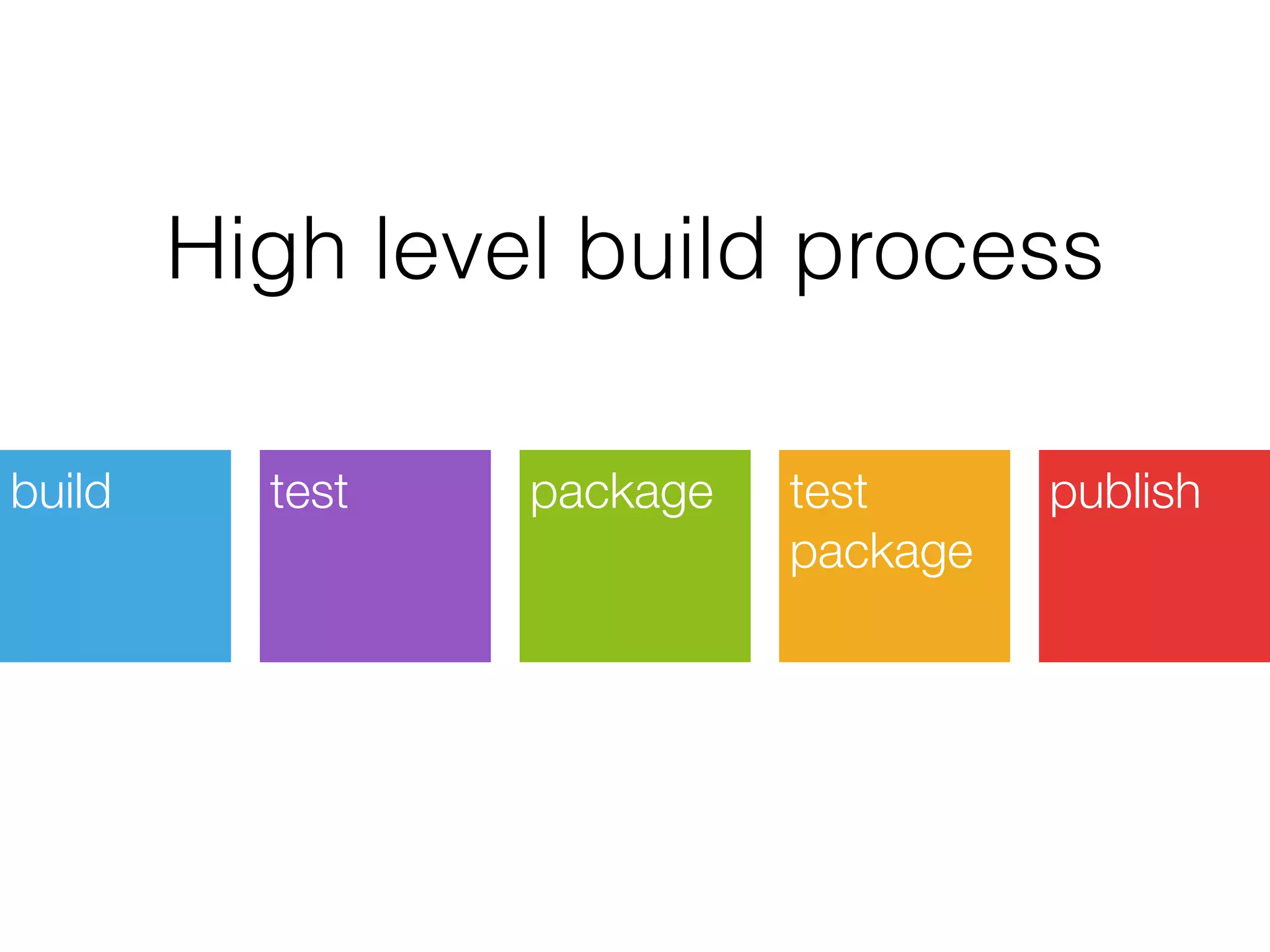

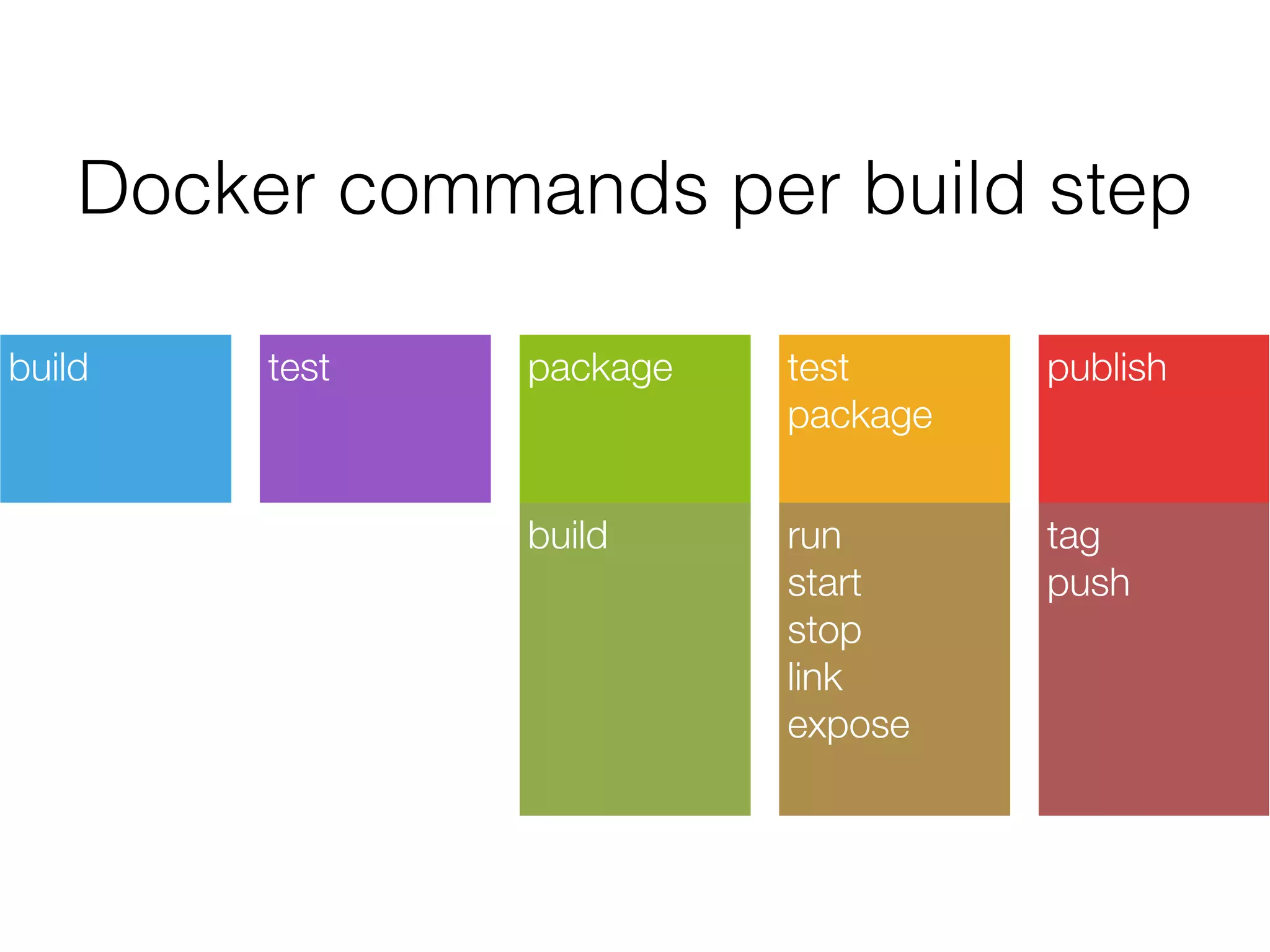

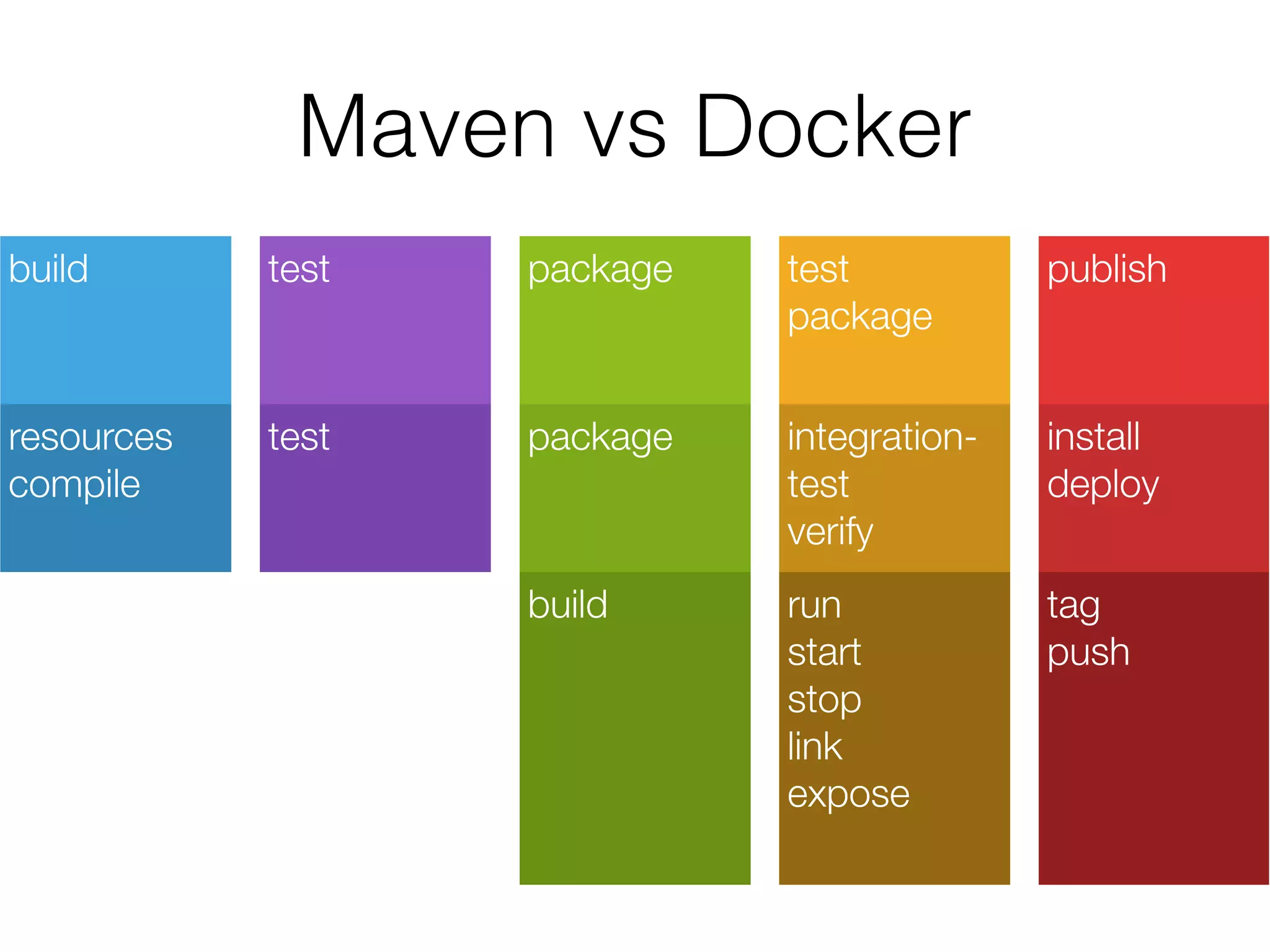

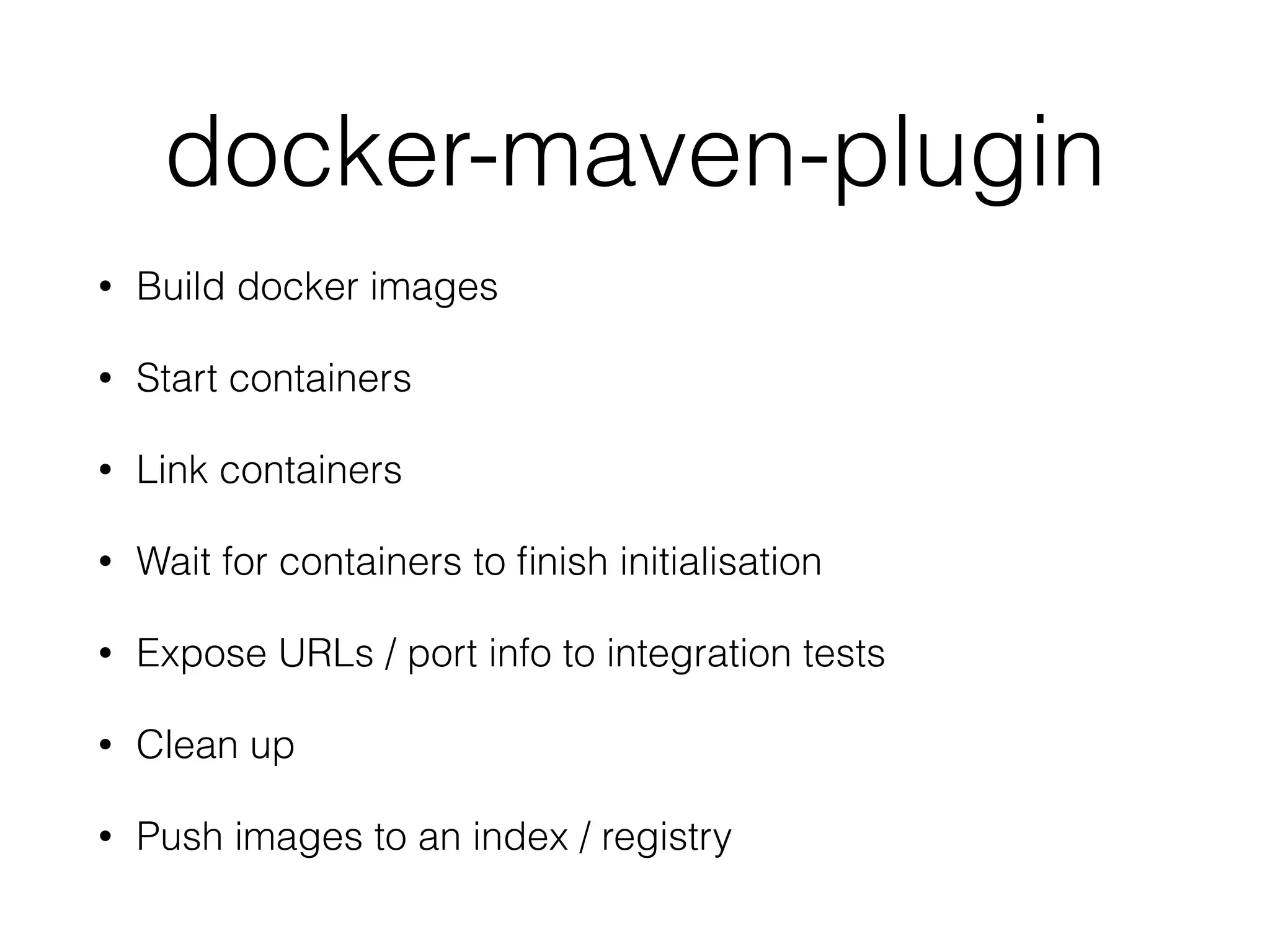

The document discusses how Docker can be used for integration testing. It describes how Docker makes it possible to run production environments locally, build proofs of concept, and avoid port collisions in continuous integration environments. It also outlines how Docker commands and options such as build, run, start, stop, link, expose, and tag fit into different steps of a build process. Finally, it introduces the docker-maven-plugin, which builds Docker images and integrates Docker into the Maven build lifecycle for testing.
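As a rough illustration of how Docker commands might map onto build steps, the following Python sketch assembles the command lines for a typical integration-test cycle. It is not taken from the presentation: the helper names `find_free_port` and `docker_steps`, the image and container names, and the assignment of commands to the Maven phases `package`, `pre-integration-test`, and `post-integration-test` are all assumptions made for the example. The sketch only builds argument lists; it does not call Docker itself.

```python
import socket
from typing import Dict, List


def find_free_port() -> int:
    """Ask the OS for a currently unused TCP port by binding to port 0.

    Note: the port is released before the caller uses it, so another
    process could still claim it in between. This only reduces the
    chance of a collision on a shared CI host.
    """
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as s:
        s.bind(("127.0.0.1", 0))
        return s.getsockname()[1]


def docker_steps(image: str, container: str,
                 container_port: int, host_port: int) -> Dict[str, List[str]]:
    """Return docker CLI argument lists keyed by a (hypothetical) build phase."""
    return {
        # Build the image from the Dockerfile in the current directory.
        "package": ["docker", "build", "-t", image, "."],
        # Start the container in the background, publishing the service
        # port on a host port chosen to avoid collisions.
        "pre-integration-test": [
            "docker", "run", "-d", "--name", container,
            "-p", f"{host_port}:{container_port}", image,
        ],
        # Stop the container once the tests have finished.
        "post-integration-test": ["docker", "stop", container],
        # Remove the stopped container so the name can be reused.
        "cleanup": ["docker", "rm", container],
    }


if __name__ == "__main__":
    port = find_free_port()
    for phase, cmd in docker_steps("myapp:it", "myapp-it", 8080, port).items():
        print(f"{phase}: {' '.join(cmd)}")
```

Each argument list could be passed to `subprocess.run(cmd, check=True)` on a machine where Docker is installed. Choosing the host port at run time, rather than hard-coding it, is one way to keep parallel CI jobs from competing for the same port.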