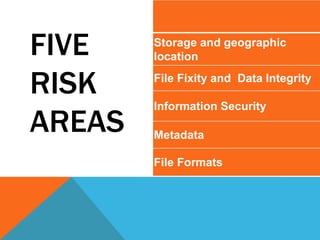

The July 11, 2019 workshop led by Trevor Owens focused on forecasting the costs of preserving and providing access to biomedical data. It highlighted risks such as damage to storage media and loss of data integrity. Mitigation strategies include managing multiple copies, maintaining security protocols, and producing comprehensive metadata. Key recommendations stress that effective digital preservation depends on trained staff, adequate funding, and ongoing software and hardware refresh cycles.