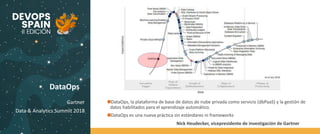

This document discusses DataOps, an agile methodology for developing and deploying data-intensive applications. DataOps supports cross-functional collaboration and fast time to value, and it extends DevOps practices to include data-related roles such as data engineers and data scientists. Its key goals are continuous model deployment, repeatability, productivity, agility, and self-service, with data made central to applications. The document also describes how these principles bring flexibility and focus to data-driven organizations, chiefly through improved efficiency and faster time to value.