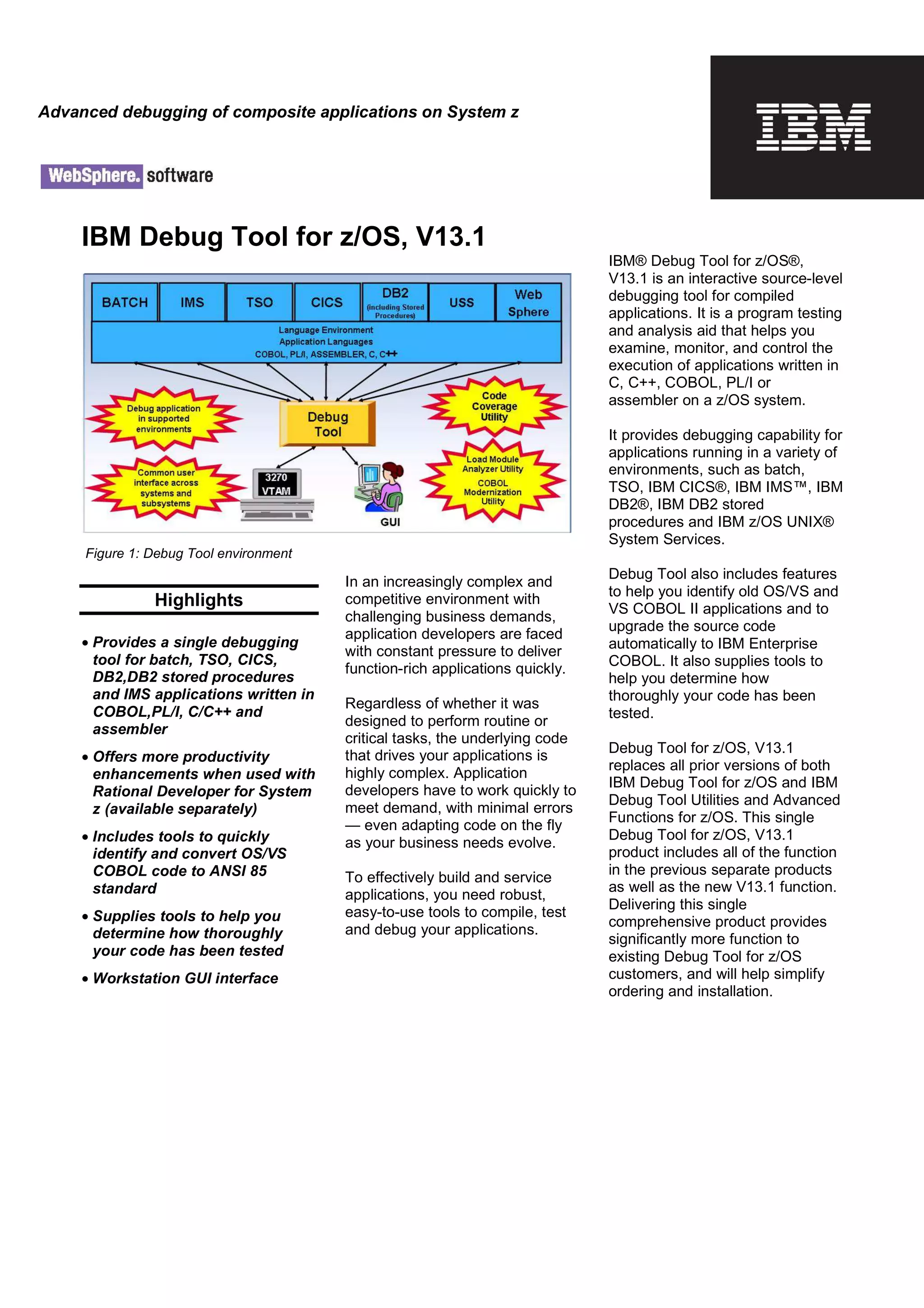

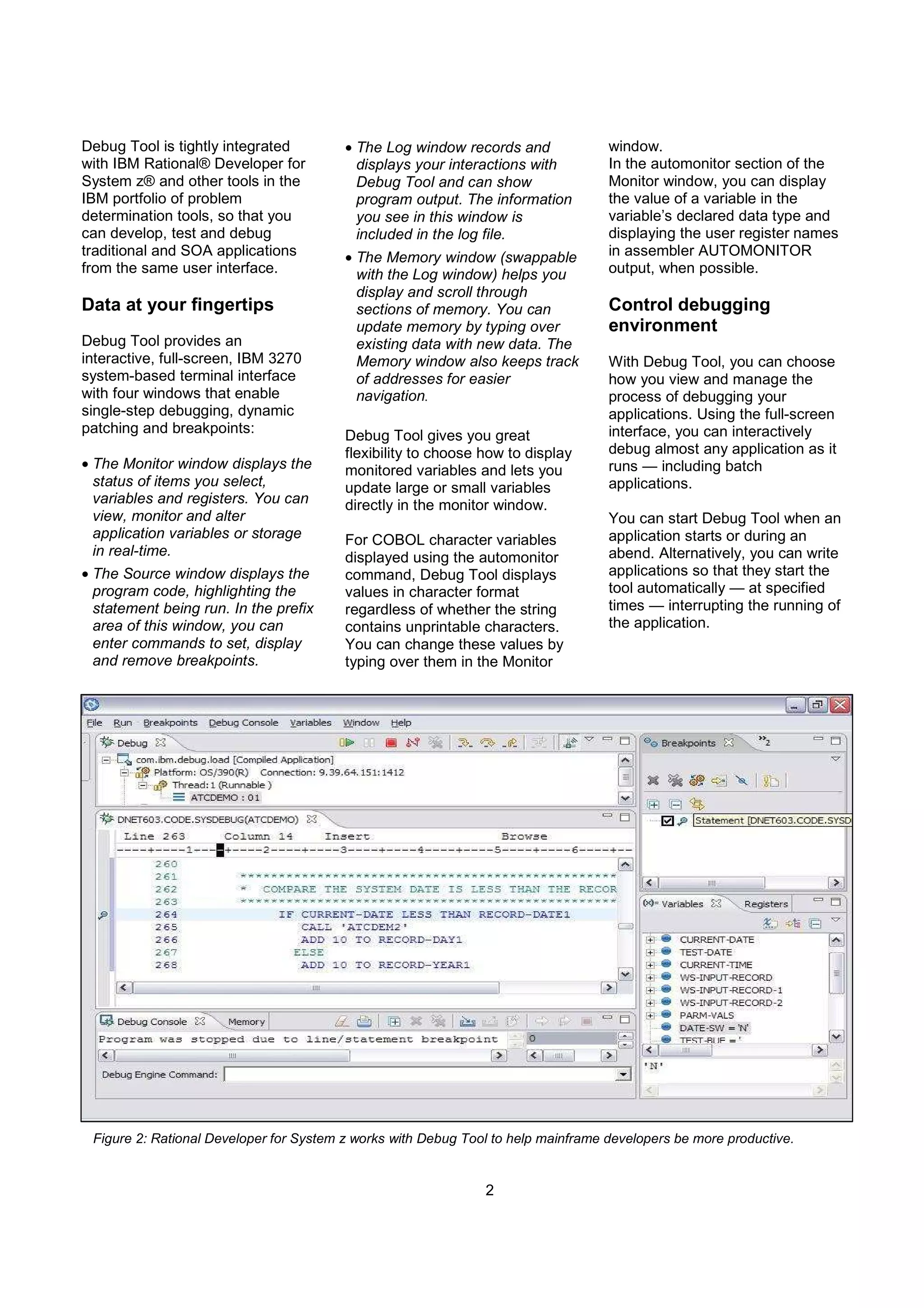

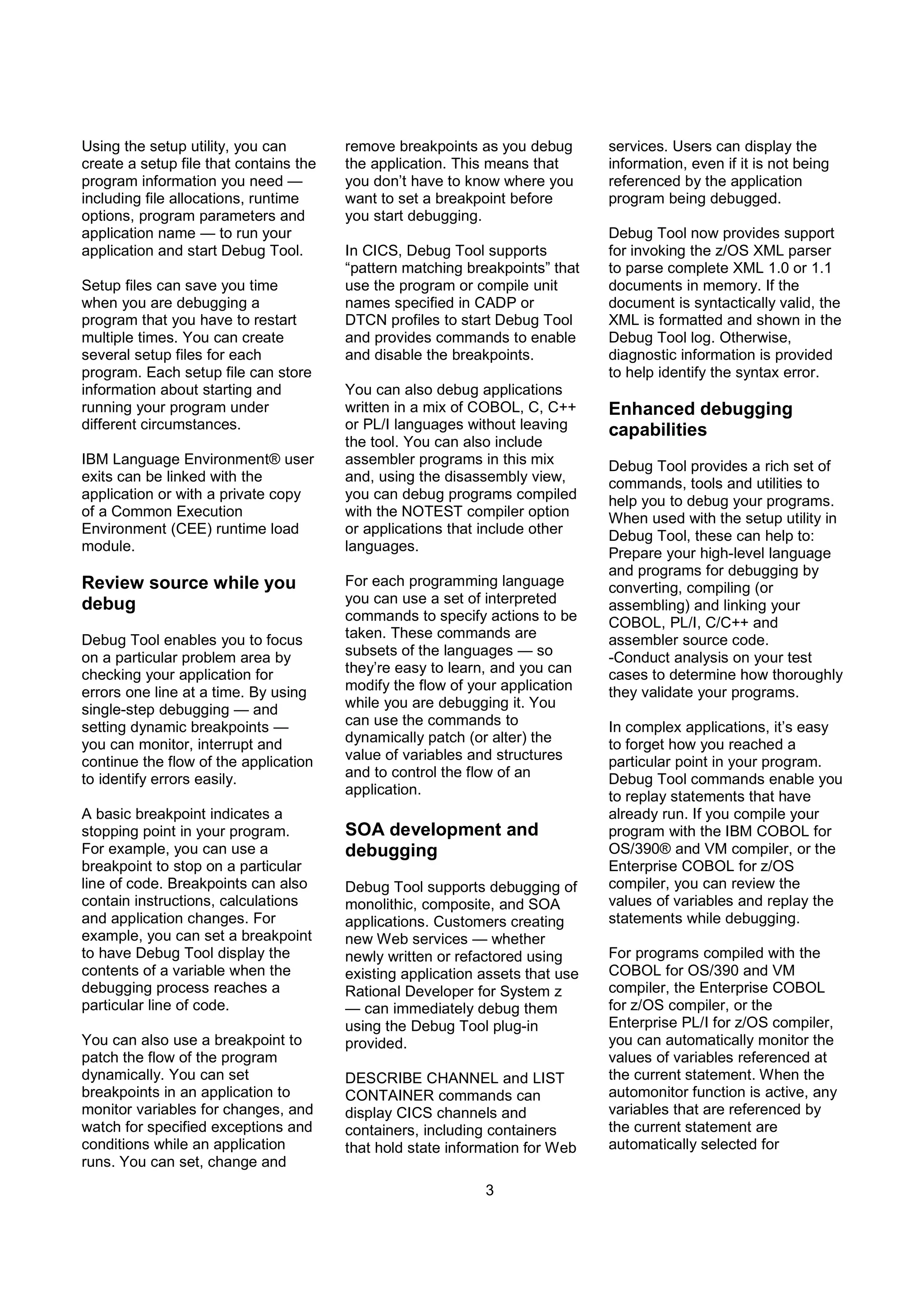

IBM Debug Tool for z/OS, v13.1 is a source-level debugging tool that supports multiple programming languages and environments and is intended to improve the productivity of application developers. It integrates with IBM Rational Developer for System z and offers debugging features such as single-step execution, real-time variable monitoring, and extensive reporting. The new version adds support for handling web services, improved code coverage analysis, and more efficient debugging processes, all aimed at streamlining application development and troubleshooting.