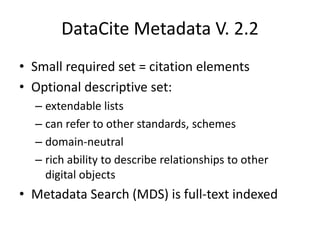

The document discusses dataset identification and citation using DataCite and EZID. It provides an introduction to DataCite, which helps researchers find, access, and reuse data, and EZID, which allows for easy creation and management of DataCite DOIs and other identifiers. The document outlines the DataCite metadata schema, describes functionality available through EZID, and recommends next steps for using these services.