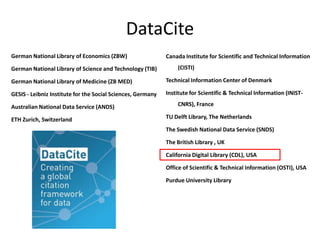

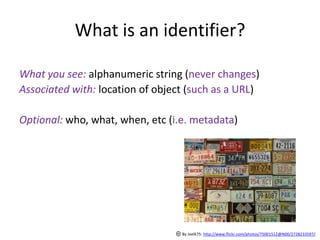

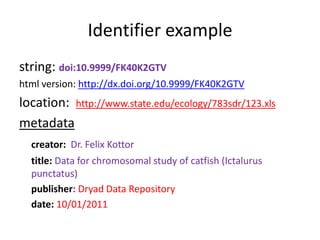

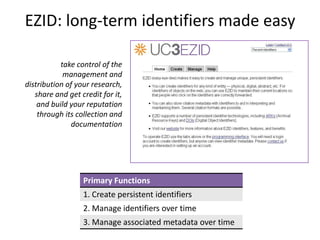

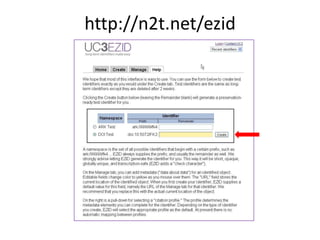

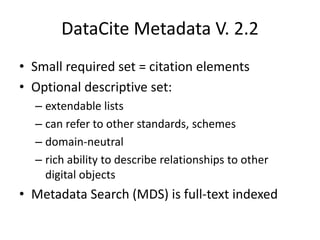

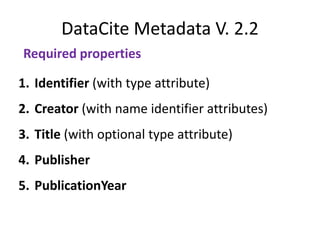

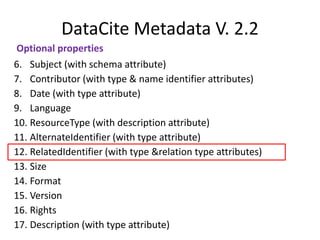

The document discusses dataset metadata tools and approaches for access and preservation, highlighting requirements for dataset descriptions and key identifying elements. It details Datacite metadata standards, the role of persistent identifiers, and the services provided by platforms like EZID and the California Digital Library. Additionally, the document outlines next steps for improving metadata services and enhancing data management planning.