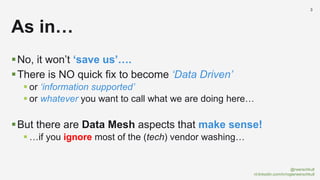

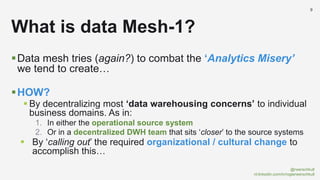

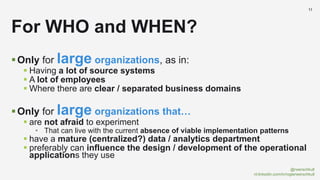

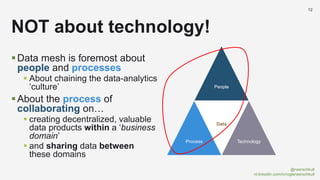

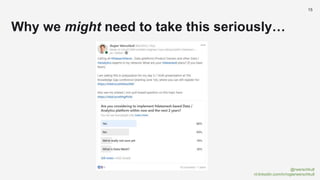

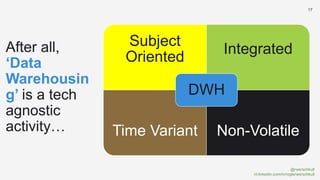

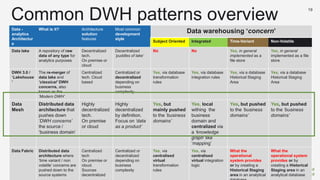

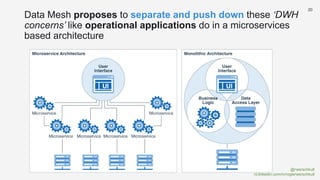

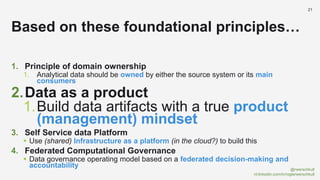

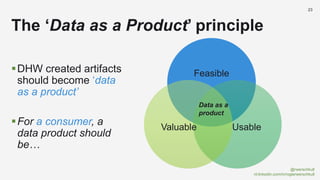

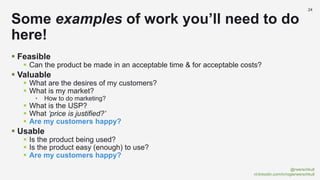

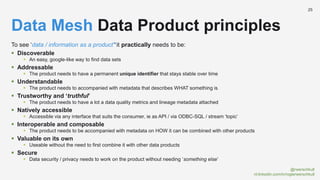

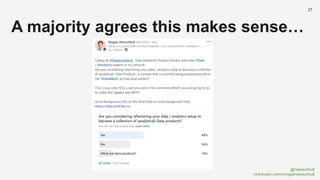

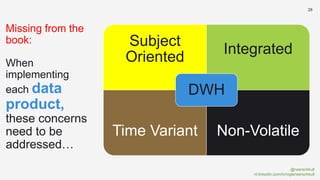

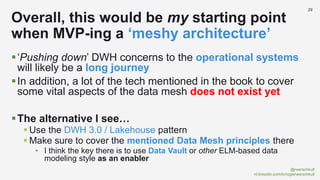

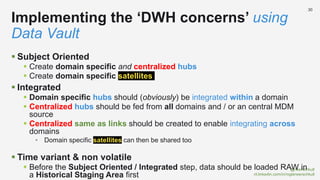

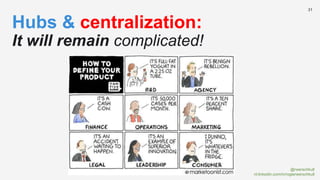

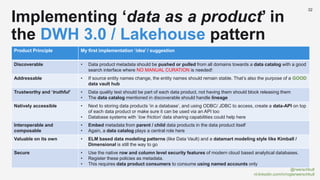

The document discusses the concept of data mesh. It argues that data mesh will not solve the analytics challenges organizations face, but it highlights the potential benefits of a correct implementation. Successful implementation, it emphasizes, relies on decentralization, cultural change, and treating data as a product. It also critiques the existing data mesh literature for lacking practical implementation guidance and suggests an alternative approach based on data warehousing principles.