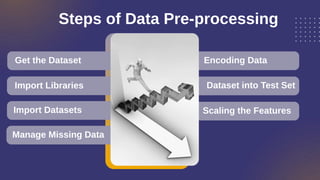

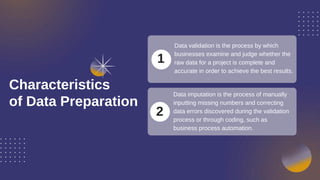

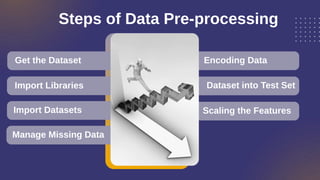

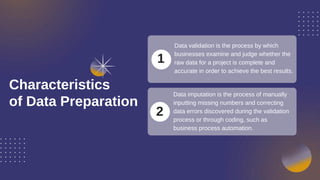

Data preprocessing transforms raw data into a standardized format for analysis, which improves the accuracy and efficiency of machine learning projects. Key components include handling missing data, encoding categorical variables, and scaling features, along with validation checks that confirm the data is complete and accurate. Companies typically employ data analysts to handle preprocessing before engaging machine learning development services.
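The components named above (missing data, encoding, scaling, and a completeness check) can be illustrated with a minimal sketch in plain Python. Everything in it is an assumption made for illustration, not part of the presentation: the sample records, the column names `age` and `city`, the helper function names, and the choice of mean imputation, one-hot encoding, and min-max scaling as the specific techniques.

```python
from statistics import mean

# Hypothetical raw records (illustrative only); None marks a missing value.
raw_rows = [
    {"age": 25, "city": "Paris"},
    {"age": None, "city": "Lima"},
    {"age": 35, "city": "Paris"},
]


def impute_mean(rows, column):
    """Replace missing (None) values in a numeric column with the column mean."""
    observed = [r[column] for r in rows if r[column] is not None]
    fill = mean(observed)
    return [{**r, column: fill if r[column] is None else r[column]} for r in rows]


def one_hot(rows, column):
    """Replace a categorical column with one 0/1 indicator column per category."""
    categories = sorted({r[column] for r in rows})
    encoded = []
    for r in rows:
        new_row = {k: v for k, v in r.items() if k != column}
        for c in categories:
            new_row[f"{column}_{c}"] = 1 if r[column] == c else 0
        encoded.append(new_row)
    return encoded


def min_max_scale(rows, column):
    """Rescale a numeric column to the range [0, 1]."""
    values = [r[column] for r in rows]
    lo, hi = min(values), max(values)
    span = (hi - lo) or 1  # avoid division by zero when the column is constant
    return [{**r, column: (r[column] - lo) / span} for r in rows]


def is_complete(rows):
    """Validation check: True when no field in any row is missing."""
    return all(v is not None for r in rows for v in r.values())


def preprocess(rows):
    """Run the steps in order: impute, encode, scale, then validate."""
    rows = impute_mean(rows, "age")
    rows = one_hot(rows, "city")
    rows = min_max_scale(rows, "age")
    if not is_complete(rows):
        raise ValueError("preprocessed data still contains missing values")
    return rows


clean_rows = preprocess(raw_rows)
```

In this sketch the missing age is filled with the mean of the observed ages before scaling, so the imputed row lands in the middle of the scaled range. In a real project the fill value and scaling bounds would normally be computed on training data only and then reused on new data, and a library such as pandas or scikit-learn would likely replace these hand-written helpers.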