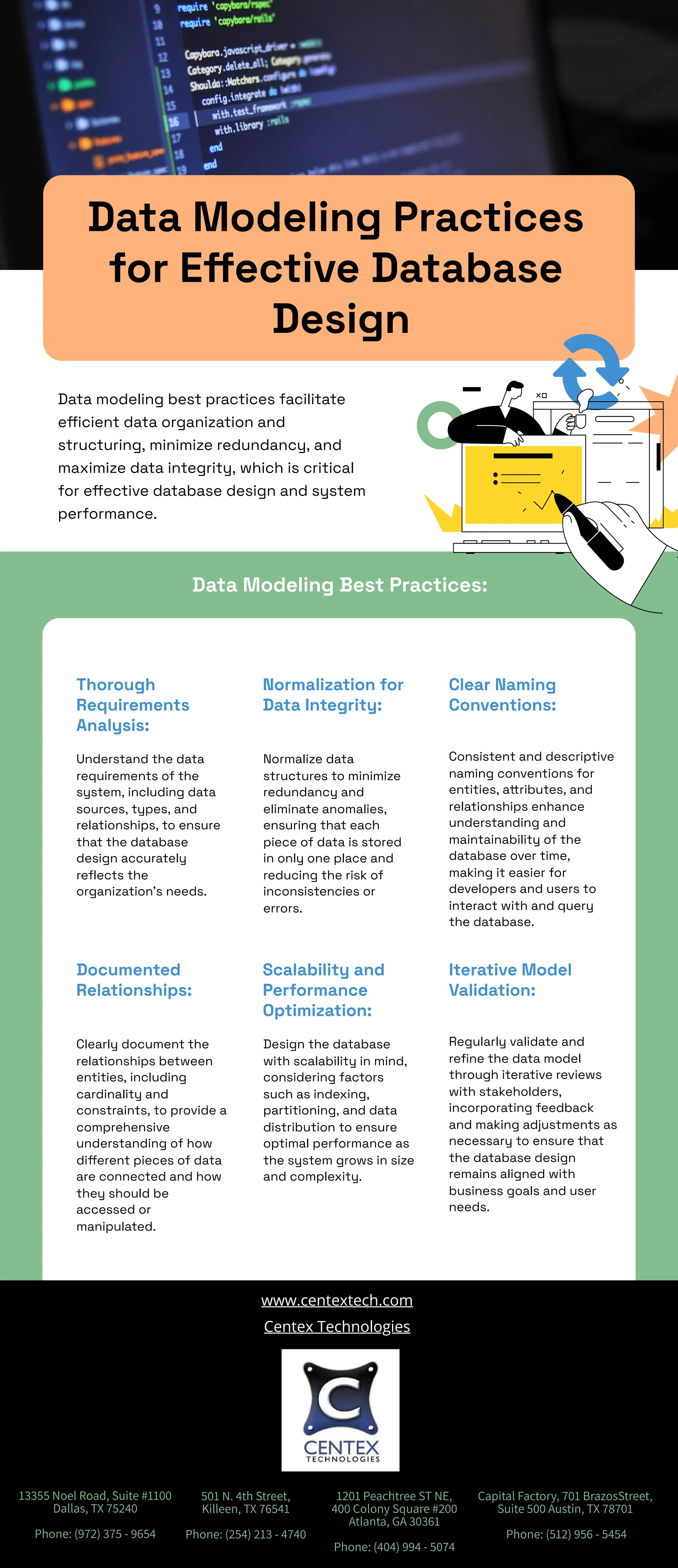

Data modeling best practices aim to organize data efficiently, minimize redundancy, and protect data integrity, which together form the basis of sound database design. Key practices include thorough requirements analysis, normalization, and clear, consistent naming conventions, which make the model easier to understand and maintain. Documenting relationships between entities, designing for scalability, and validating the model iteratively with stakeholders help keep the database aligned with business goals.
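To make normalization, naming conventions, and integrity constraints concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The `customer` and `customer_order` tables, their columns, and the sample rows are hypothetical and chosen only for illustration. Customer details are stored once and referenced by key rather than repeated on every order, and a foreign key documents and enforces the relationship.

```python
import sqlite3


def build_schema(conn: sqlite3.Connection) -> None:
    """Create a small normalized schema (hypothetical tables for illustration)."""
    # SQLite only enforces foreign keys when this pragma is enabled per connection.
    conn.execute("PRAGMA foreign_keys = ON")
    conn.executescript(
        """
        -- Customer attributes live in exactly one place (no redundancy).
        CREATE TABLE customer (
            customer_id INTEGER PRIMARY KEY,
            email       TEXT NOT NULL UNIQUE,
            full_name   TEXT NOT NULL
        );

        -- Orders reference the customer by key instead of copying name/email.
        CREATE TABLE customer_order (
            order_id    INTEGER PRIMARY KEY,
            customer_id INTEGER NOT NULL REFERENCES customer(customer_id),
            ordered_on  TEXT NOT NULL,
            total_cents INTEGER NOT NULL CHECK (total_cents >= 0)
        );
        """
    )


def demo():
    """Insert sample rows, attempt an orphan order, and summarize per customer."""
    conn = sqlite3.connect(":memory:")
    build_schema(conn)

    conn.execute(
        "INSERT INTO customer (customer_id, email, full_name) VALUES (?, ?, ?)",
        (1, "ada@example.com", "Ada Lovelace"),
    )
    conn.executemany(
        "INSERT INTO customer_order (customer_id, ordered_on, total_cents) "
        "VALUES (?, ?, ?)",
        [(1, "2024-01-15", 2500), (1, "2024-02-01", 1200)],
    )

    # An order pointing at a non-existent customer should be rejected.
    try:
        conn.execute(
            "INSERT INTO customer_order (customer_id, ordered_on, total_cents) "
            "VALUES (?, ?, ?)",
            (99, "2024-03-01", 500),
        )
        orphan_rejected = False
    except sqlite3.IntegrityError:
        orphan_rejected = True

    # Customer details are joined in at query time rather than duplicated.
    rows = conn.execute(
        """
        SELECT c.full_name, COUNT(*), SUM(o.total_cents)
        FROM customer AS c
        JOIN customer_order AS o ON o.customer_id = c.customer_id
        GROUP BY c.customer_id
        """
    ).fetchall()
    conn.close()
    return orphan_rejected, rows


if __name__ == "__main__":
    print(demo())
```

The singular, descriptive table names and the `_id` suffix on keys are one possible convention, not a required one; what matters is applying a single convention consistently. Storing money as integer cents is likewise an assumption made here to avoid floating-point rounding.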