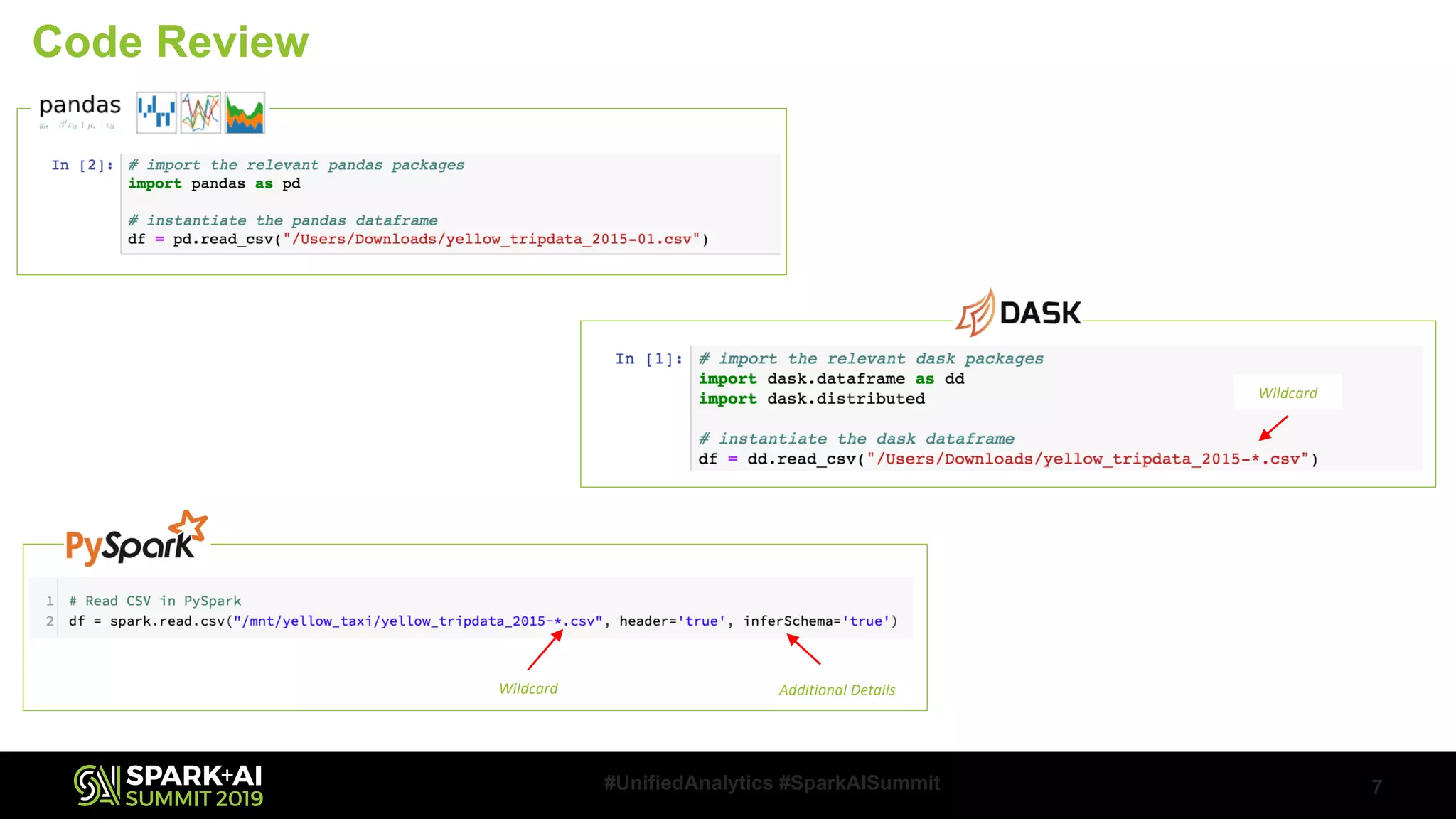

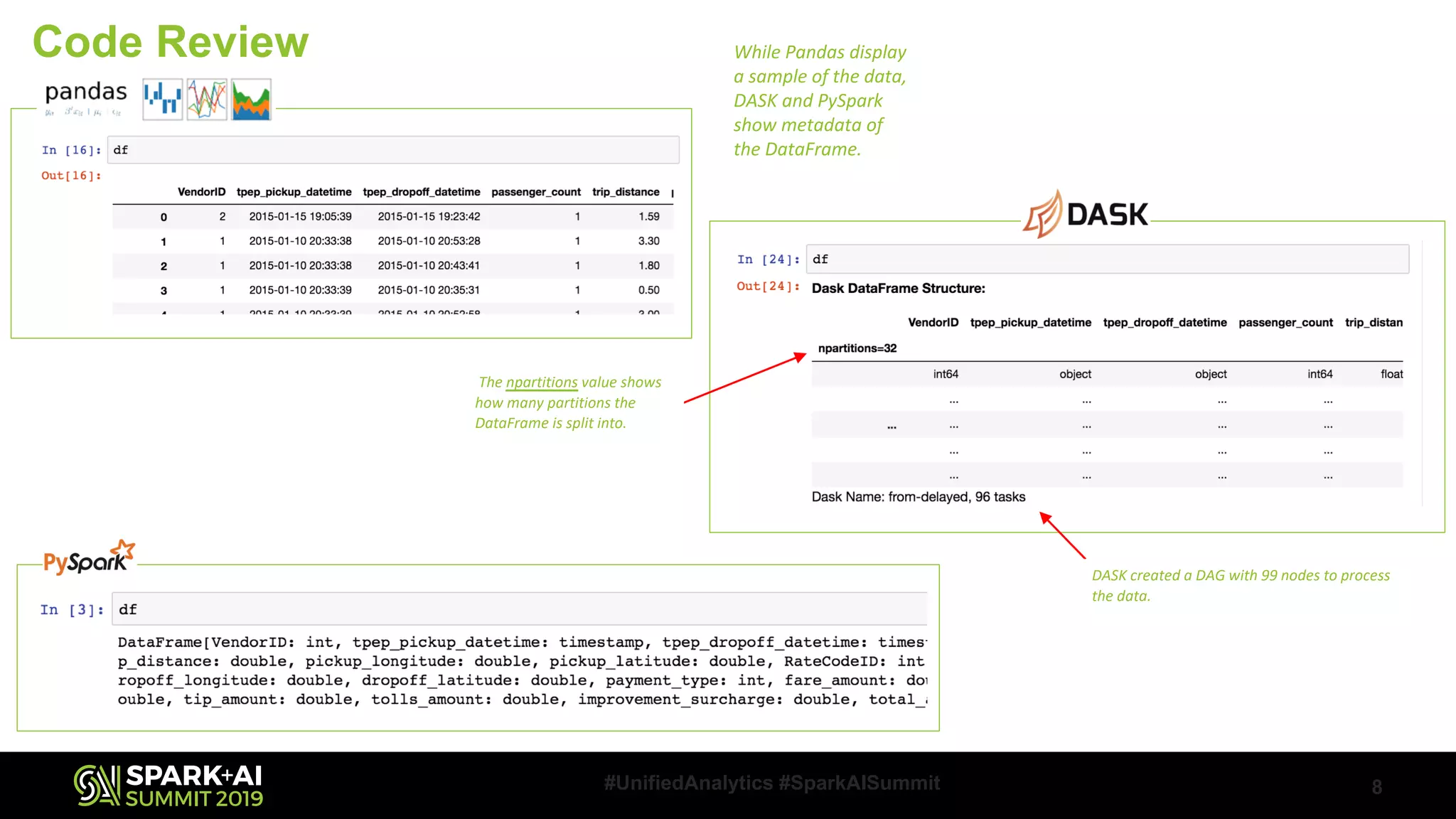

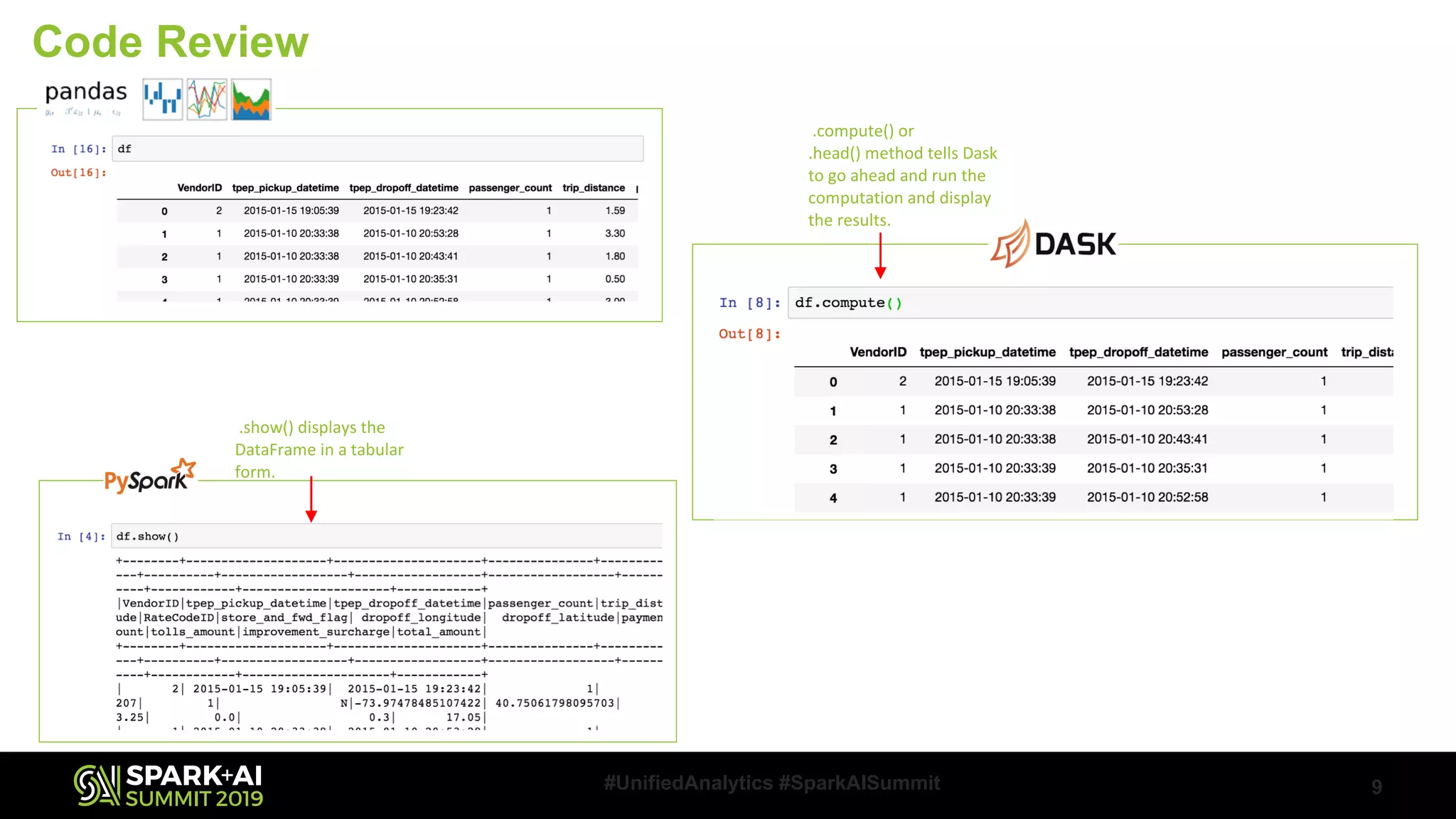

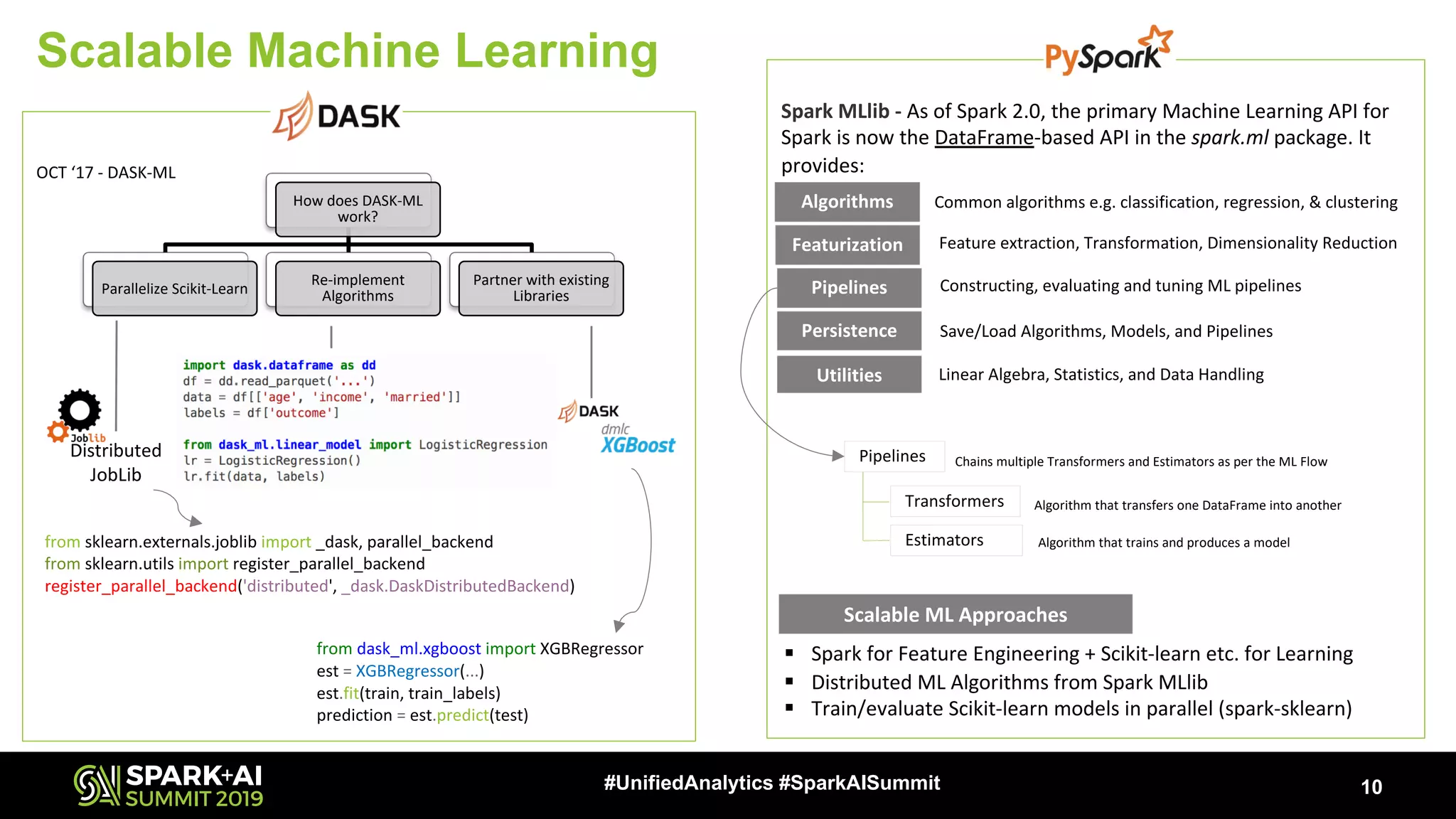

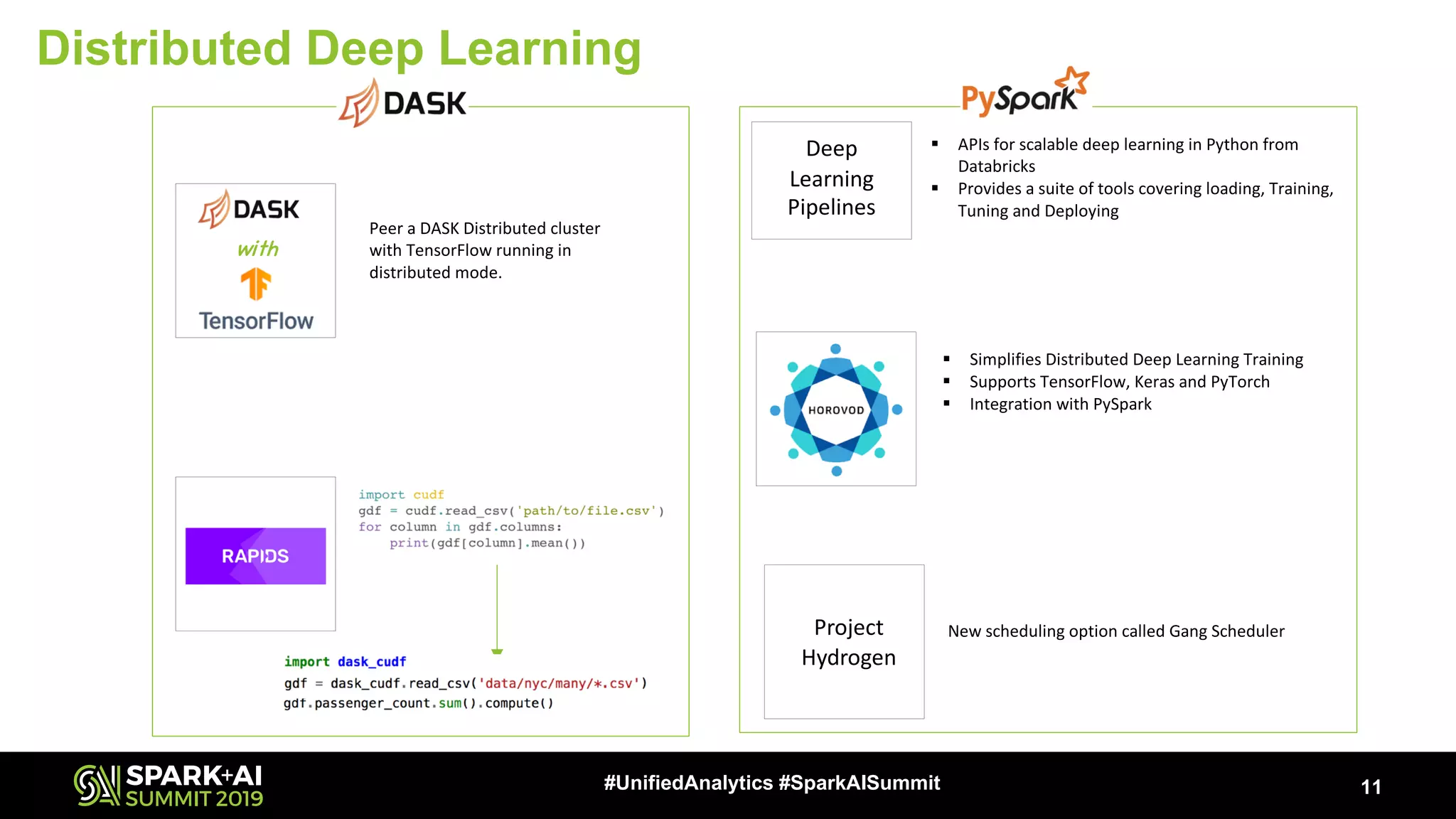

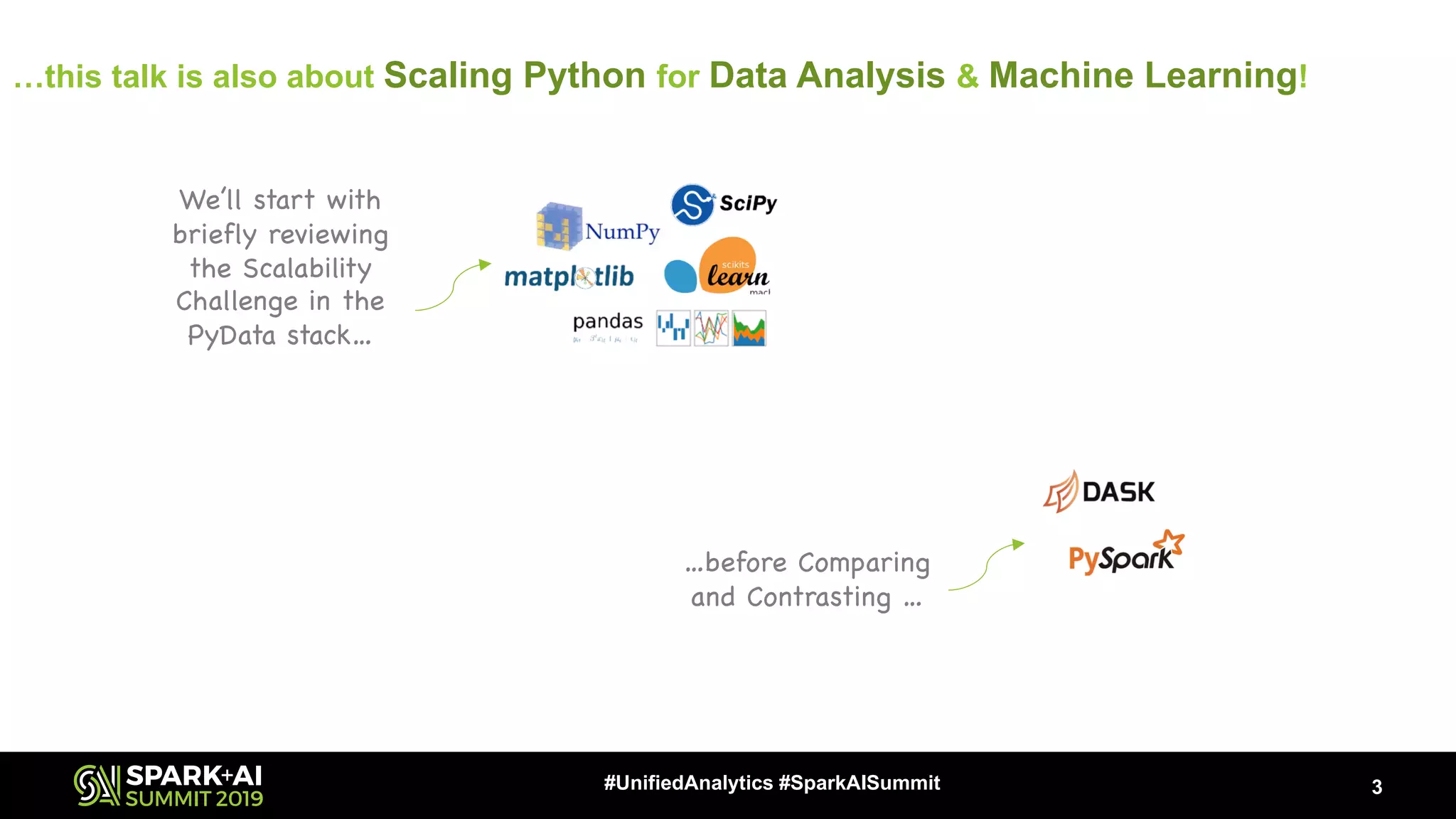

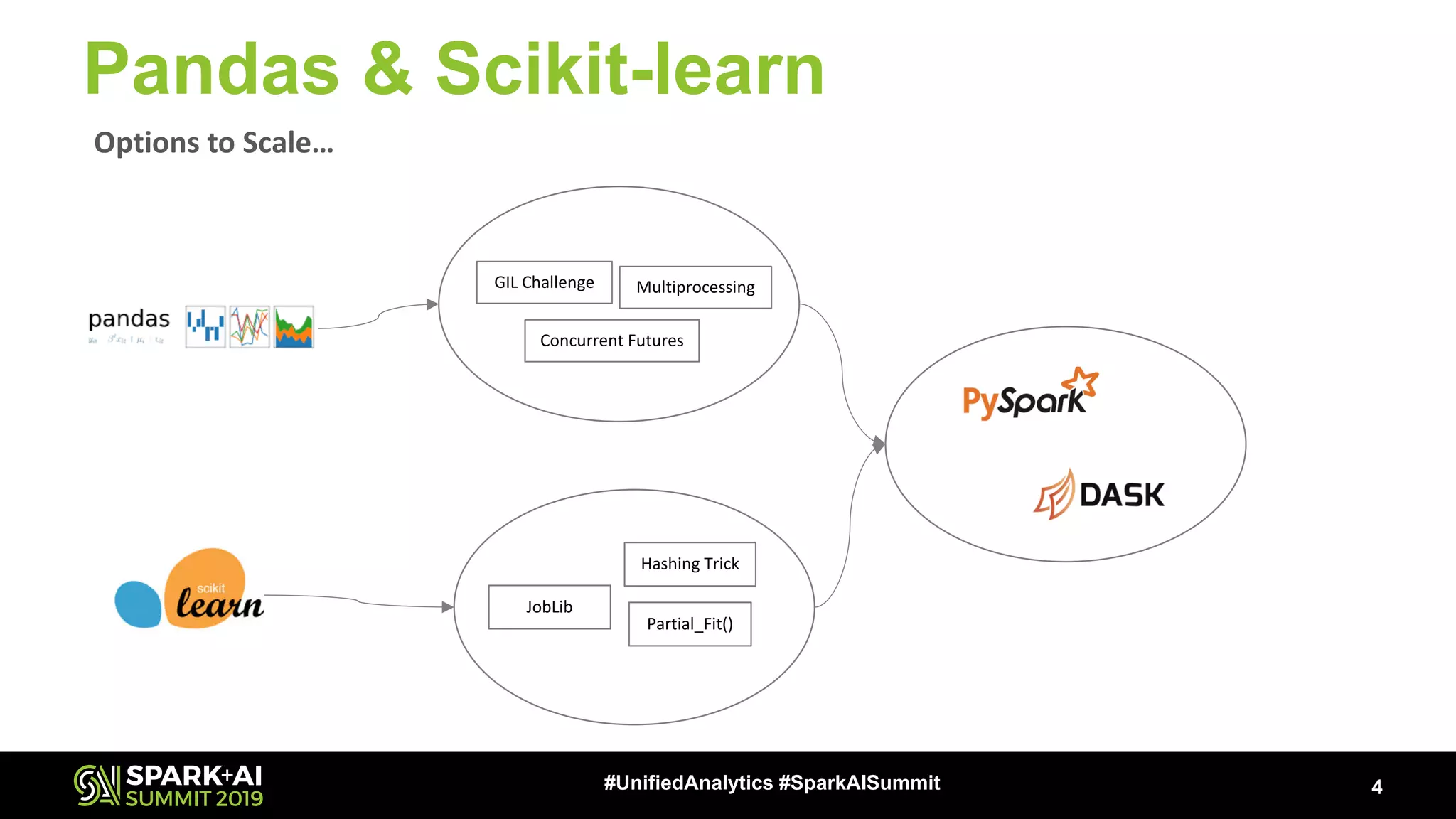

Gurpreet Singh from Microsoft gave a talk on scaling Python for data analysis and machine learning with DASK and Apache Spark. He discussed the challenges of scaling the Python data stack and compared DASK, Spark, and Spark MLlib as options. He gave examples of parallel processing with DASK and PySpark DataFrames and showed how DASK-ML can parallelize scikit-learn models. He also covered distributed deep learning with tools such as Project Hydrogen.

![DASK and Apache Spark, slide 5: Design Approach](https://image.slidesharecdn.com/052020gurpreetsingh-190509225732/75/DASK-and-Apache-Spark-5-2048.jpg)

Slide 5 (#UnifiedAnalytics #SparkAISummit): Design Approach

- High Level APIs
  - Spark: Spark Streaming, Spark MLlib, GraphFrames, Spark SQL / DataFrames (GraphX doesn't support Python)
  - DASK: DASK Arrays [Parallel NumPy], DASK DataFrames [Parallel Pandas], DASK-ML [Parallel Scikit-learn], DASK Bag [Parallel Lists]
- Low Level APIs
  - Spark: RDD
  - DASK: DASK Delayed, DASK Futures (Custom Algorithms)
- Directed Acyclic Graph (DAG)
  - Lazy Execution
  - DASK: Custom Graphs (submit graph as Python Dictionary object)
- Task Scheduler
  - Spark: Local, Spark Standalone, Mesos, YARN
  - DASK: Pluggable Task Scheduling System with a Local Scheduler (Synchronous, Multiprocessing, Threaded) and Distributed
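The slide's "submit graph as Python Dictionary object" point can be illustrated with a small sketch. It assumes Dask is installed and uses the threaded scheduler's `get` function; the key names (`"x"`, `"y"`, `"sum"`, `"result"`) and the arithmetic are made up for illustration and do not come from the talk.

```python
from operator import add, mul

from dask.threaded import get  # threaded local scheduler

# A custom task graph is a plain dict: each key maps either to a literal
# value or to a task tuple of the form (callable, arg1, arg2, ...).
# Arguments that match other keys are treated as dependencies.
dsk = {
    "x": 1,
    "y": 2,
    "sum": (add, "x", "y"),        # depends on "x" and "y"
    "result": (mul, "sum", 10),    # depends on "sum"
}

# Nothing runs until a scheduler is asked for a key; the scheduler then
# walks the graph and executes only the tasks needed for that key.
result = get(dsk, "result")
print(result)  # 30
```

In everyday use, DASK Delayed and the high-level collections build graphs like this one automatically, so writing the dictionary by hand is mostly useful for custom algorithms.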

![DASK DataFrame & PySpark, slide 6](https://image.slidesharecdn.com/052020gurpreetsingh-190509225732/75/DASK-and-Apache-Spark-6-2048.jpg)

Slide 6: DASK DataFrame & PySpark

- DASK DataFrames [Parallel Pandas]
  - Challenges
    - DASK DataFrames API is not identical with Pandas API
    - Performance concerns with operations involving shuffling
    - Inefficiencies of Pandas are carried over
  - Recommendations
    - Follow the Pandas performance tips
    - Avoid shuffle, use pre-sorting, persist the results
- PySpark
  - Challenges
    - Performance concerns due to the PySpark design
  - Recommendations
    - Use DataFrames API
    - Use Vectorized/Pandas UDF (Spark v2.3 onwards)