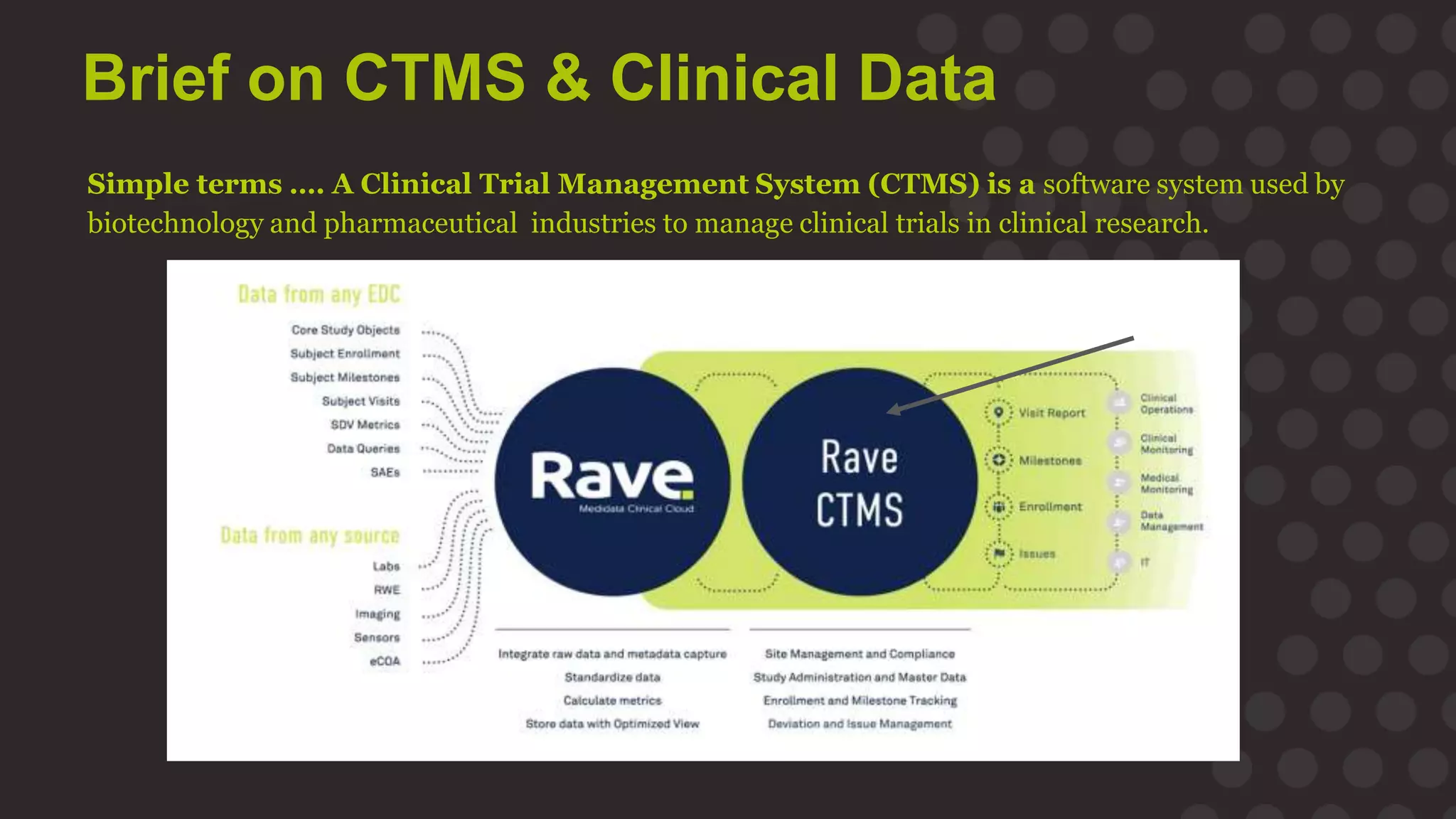

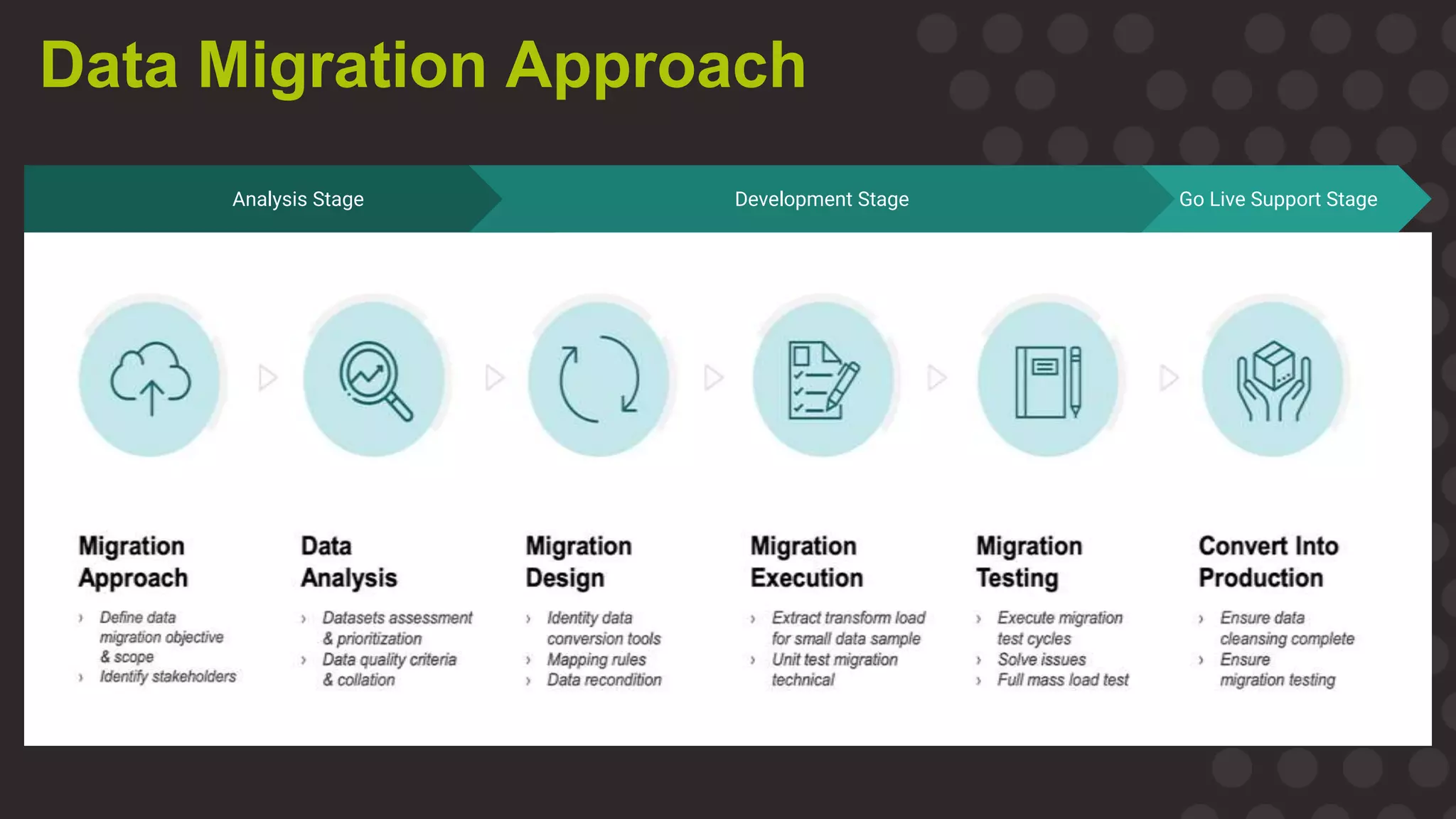

This document discusses the importance of data migration in life sciences and Medidata's role in facilitating it through its clinical trial management system (CTMS). It outlines the steps and best practices for a successful migration, and it addresses the challenges, the risks, and the vision for a streamlined, efficient data management solution. Key points include the risks of poor data migration, the need for thorough planning, and the value of clear governance and validation strategies.