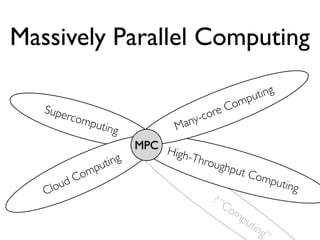

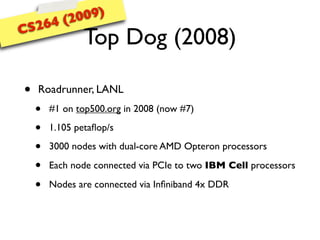

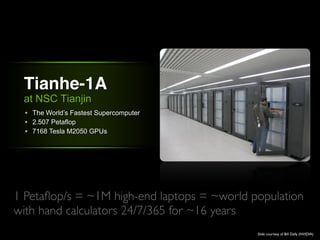

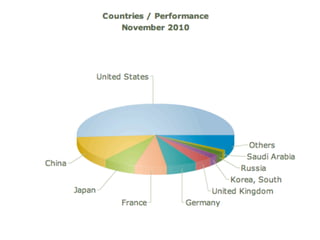

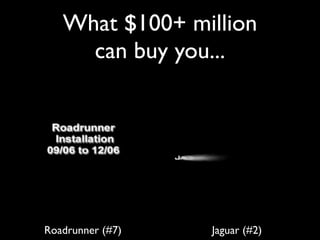

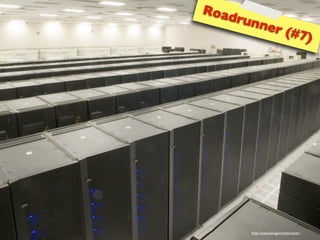

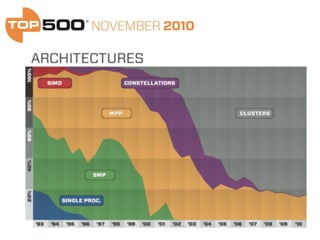

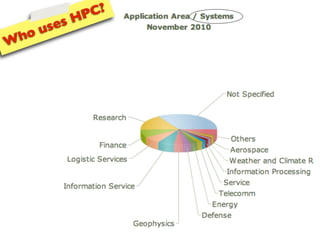

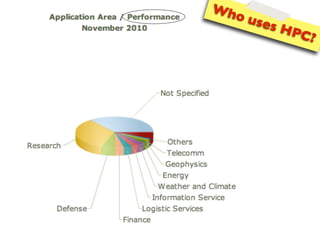

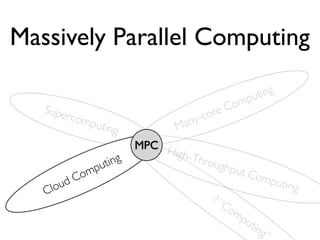

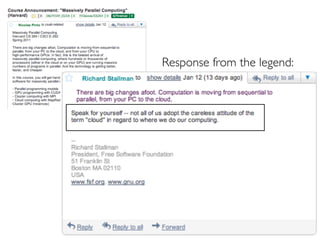

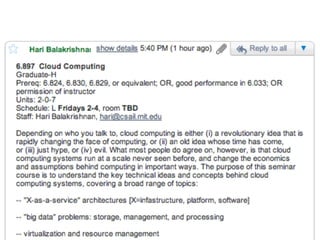

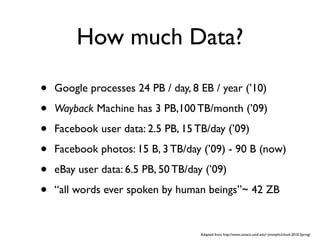

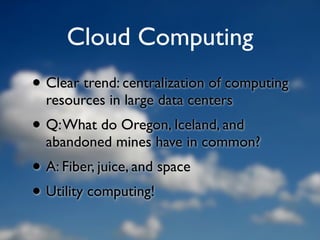

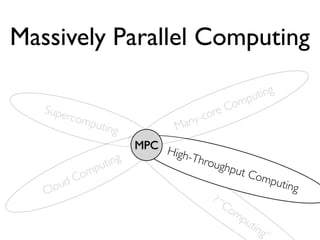

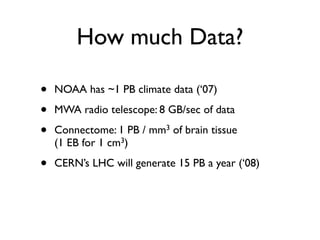

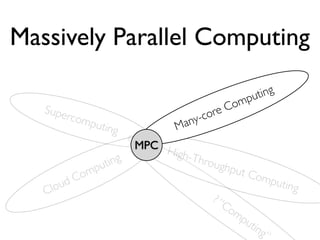

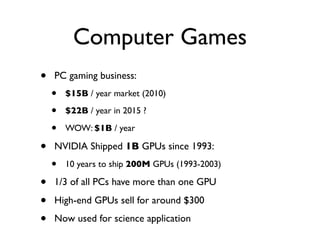

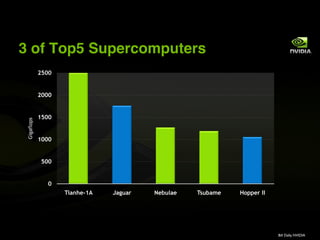

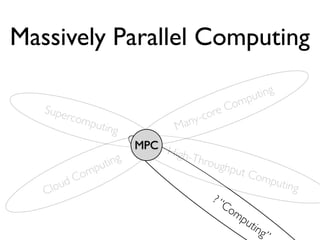

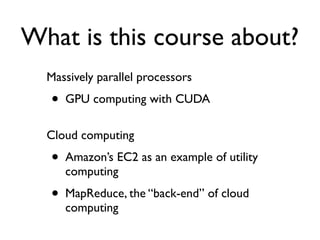

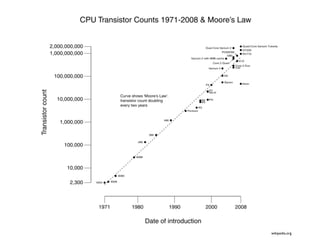

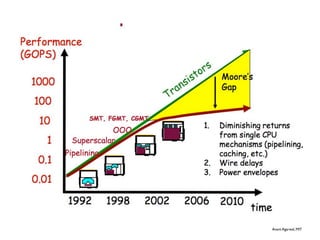

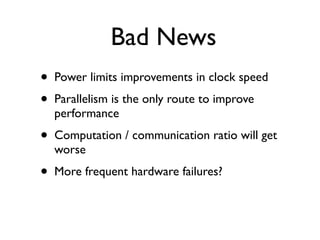

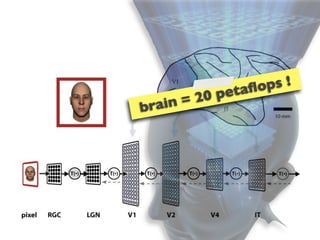

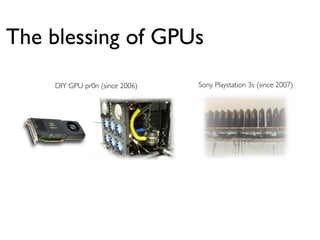

This document outlines a lecture on massively parallel computing. It explains that modeling and simulation problems demand high-performance computing and are driving the development of new computing architectures, and it cites some of the world's most powerful supercomputers, such as Roadrunner and Tianhe-1A. It also describes how cloud computing, data processing needs, and gaming contribute to the growth of parallel computing, and how rapidly growing data from science experiments and the web create a need for new parallel and distributed solutions.

![speed

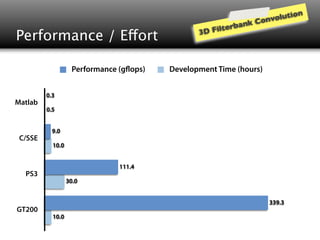

(in billion floating point operations per second)

Q9450 (Matlab/C) [2008] 0.3

Q9450 (C/SSE) [2008] 9.0

7900GTX (OpenGL/Cg) [2006] 68.2

PS3/Cell (C/ASM) [2007] 111.4

8800GTX (CUDA1.x) [2007] 192.7

GTX280 (CUDA2.x) [2008] 339.3

cha n ging...

e

GTX480 (CUDA3.x) [2010]

pe edu p is g a m 974.3

(Fermi)

>1 000X s

Pinto, Doukhan, DiCarlo, Cox PLoS 2009

Pinto, Cox GPU Comp. Gems 2011](https://image.slidesharecdn.com/cs264201101-introductionshare-110206153841-phpapp01/85/Harvard-CS264-01-Introduction-115-320.jpg)

![Empirical results...](https://image.slidesharecdn.com/cs264201101-introductionshare-110206153841-phpapp01/85/Harvard-CS264-01-Introduction-142-320.jpg)

Empirical results...

| Platform (implementation) | Year | Performance (gflops) |
|---|---|---|
| Q9450 (Matlab/C) | 2008 | 0.3 |
| Q9450 (C/SSE) | 2008 | 9.0 |
| 7900GTX (Cg) | 2006 | 68.2 |
| PS3/Cell (C/ASM) | 2007 | 111.4 |
| 8800GTX (CUDA1.x) | 2007 | 192.7 |
| GTX280 (CUDA2.x) | 2008 | 339.3 |
| GTX480 (CUDA3.x) | 2010 | 974.3 |

\>1000X speedup is game changing...
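The slides do not say which baseline the ">1000X" figure is measured against. As a rough check, the sketch below assumes it refers to the Q9450 (Matlab/C) result and computes each platform's speedup from the listed numbers. The function name `speedups` and the dictionary layout are illustrative choices, not something taken from the lecture.

```python
# GFLOP/s figures copied from the benchmark slide.
gflops = {
    "Q9450 (Matlab/C) [2008]": 0.3,
    "Q9450 (C/SSE) [2008]": 9.0,
    "7900GTX (OpenGL/Cg) [2006]": 68.2,
    "PS3/Cell (C/ASM) [2007]": 111.4,
    "8800GTX (CUDA1.x) [2007]": 192.7,
    "GTX280 (CUDA2.x) [2008]": 339.3,
    "GTX480 (CUDA3.x) [2010]": 974.3,
}


def speedups(results, baseline):
    """Return each entry's throughput divided by the baseline entry's throughput."""
    base = results[baseline]
    return {name: value / base for name, value in results.items()}


# Assumption: the slide's ">1000X" compares against the Matlab/C baseline.
vs_matlab = speedups(gflops, "Q9450 (Matlab/C) [2008]")
# Alternative baseline: hand-tuned C/SSE on the same CPU.
vs_sse = speedups(gflops, "Q9450 (C/SSE) [2008]")

for name in gflops:
    print(f"{name:28s} {gflops[name]:7.1f} GFLOP/s "
          f"{vs_matlab[name]:8.1f}x vs Matlab/C {vs_sse[name]:7.1f}x vs C/SSE")
```

Under that assumption, the GTX280 and GTX480 rows both exceed 1000X over the Matlab/C figure, while against the C/SSE figure the GTX480 comes out at roughly 108X. The choice of baseline therefore changes the headline number by more than an order of magnitude.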