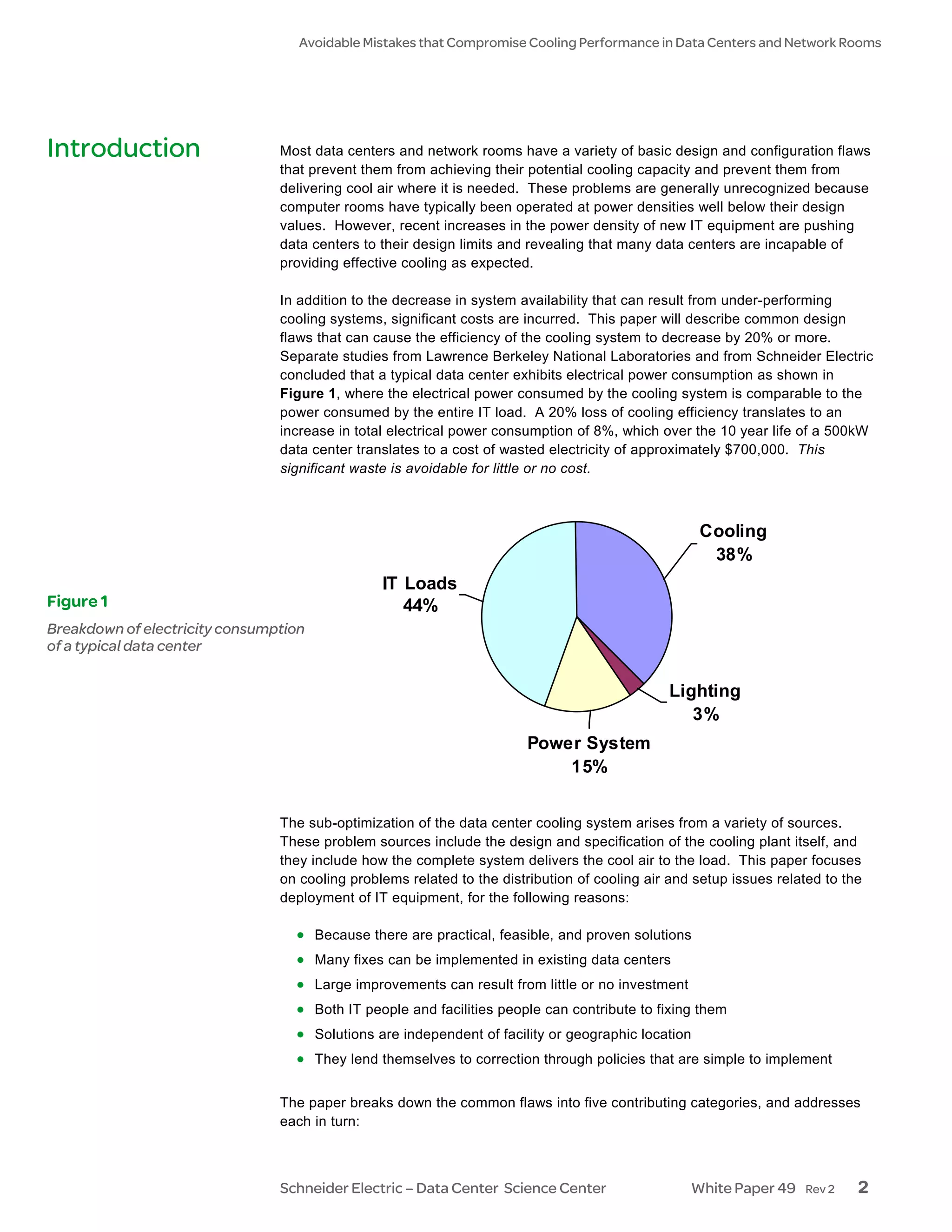

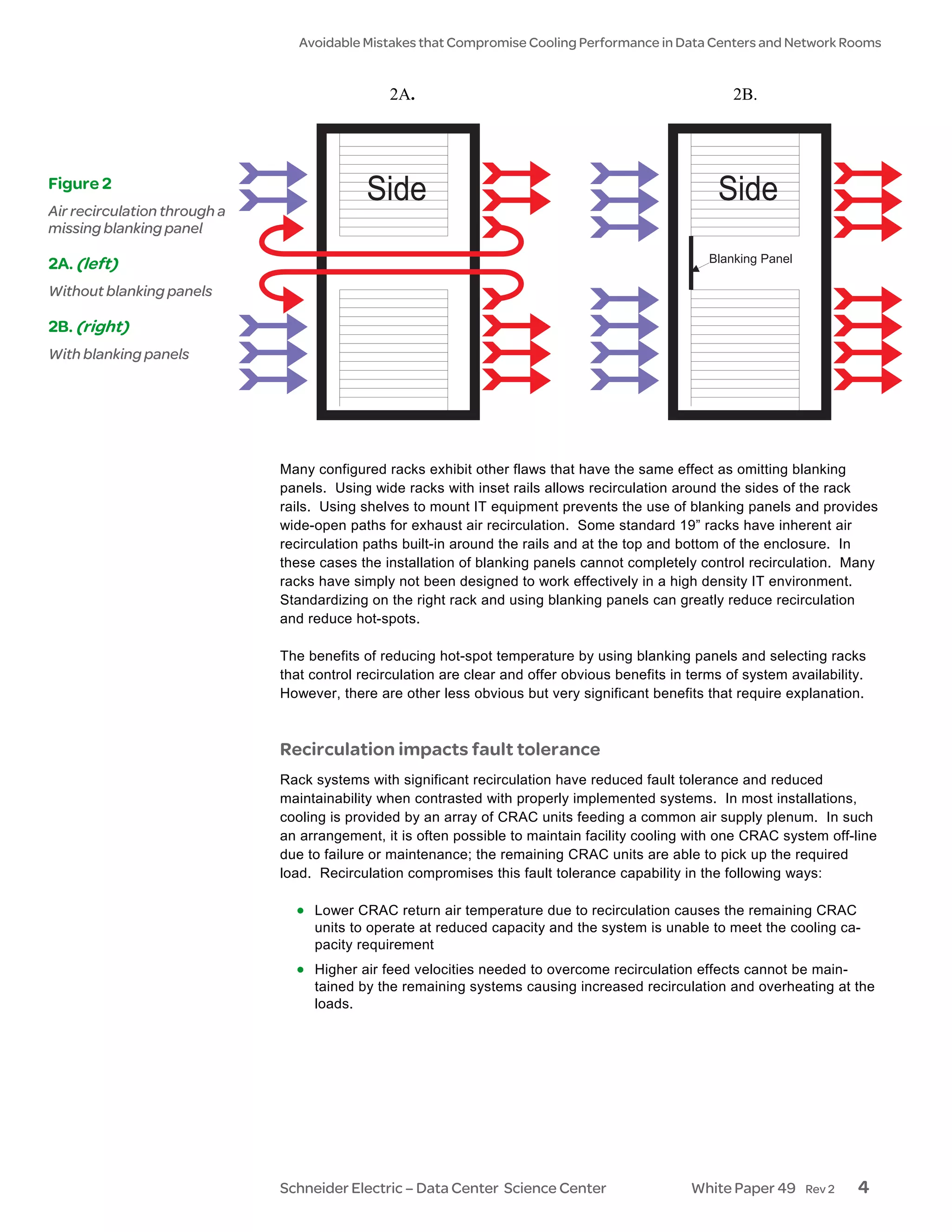

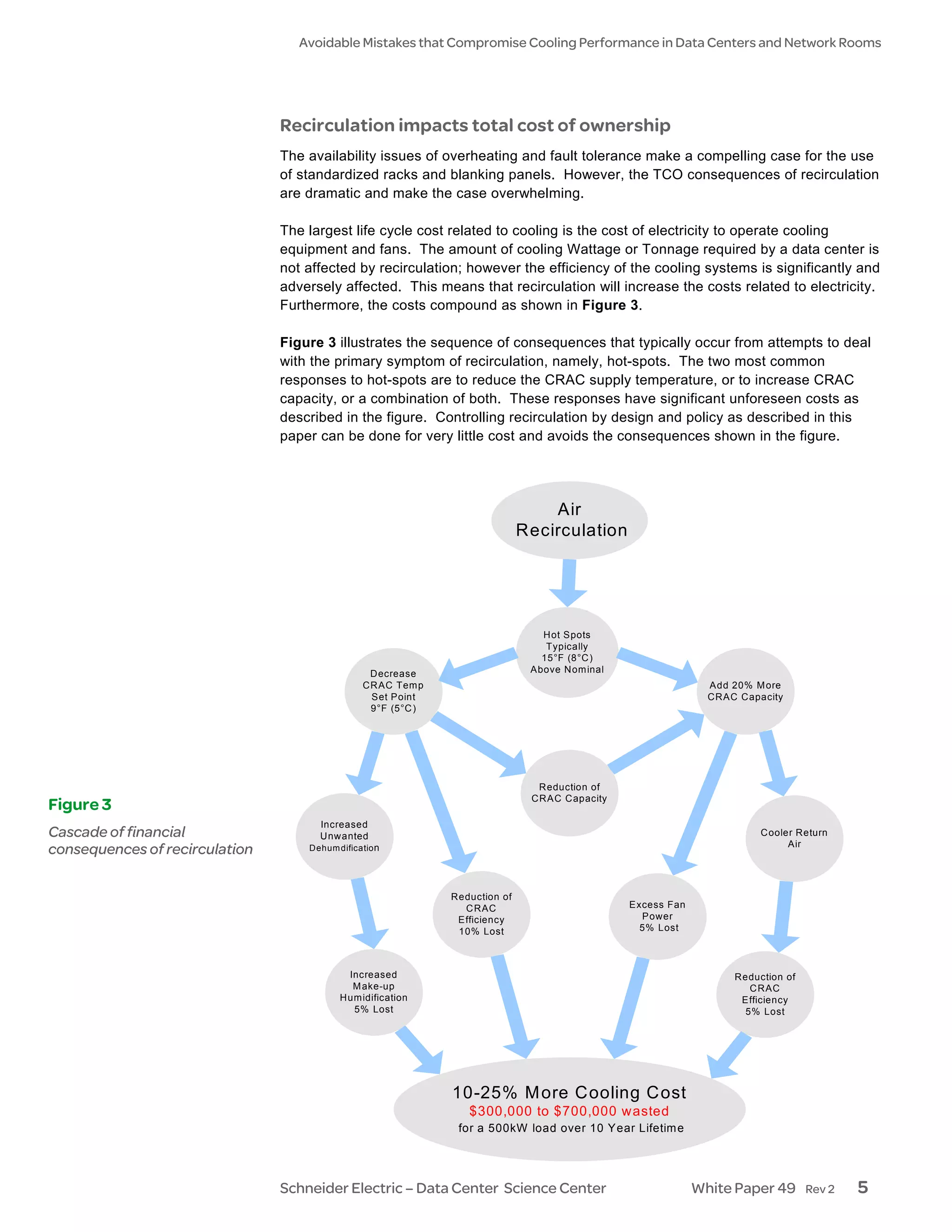

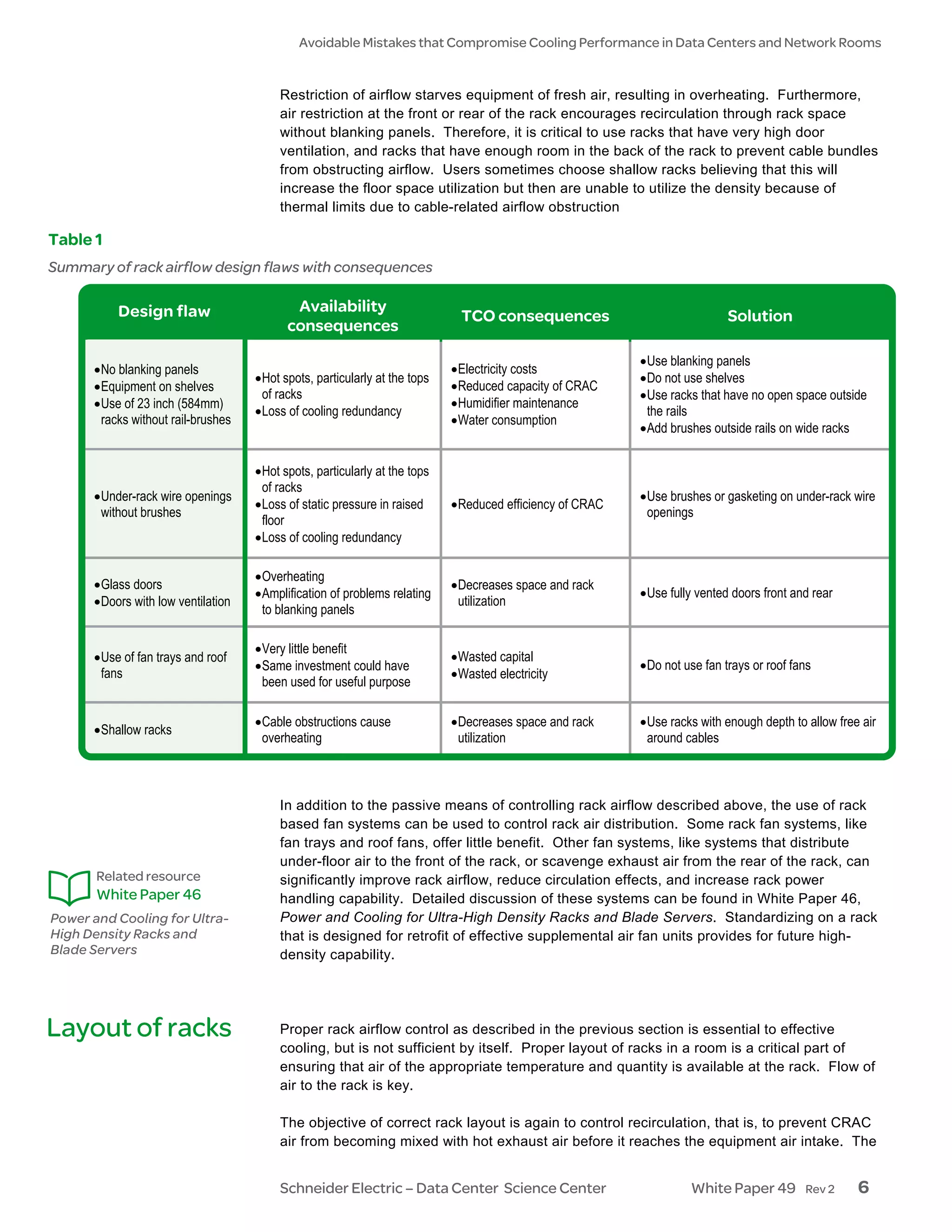

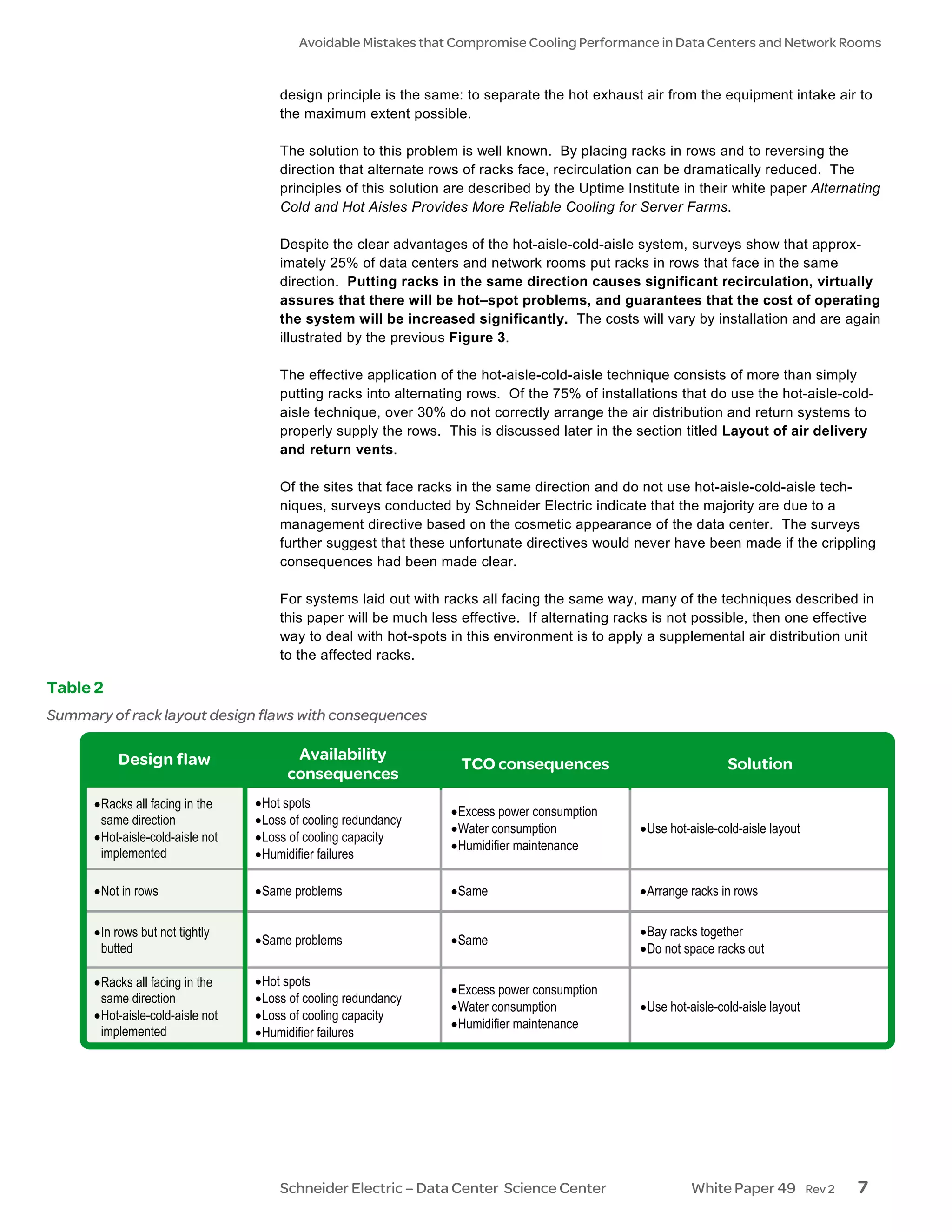

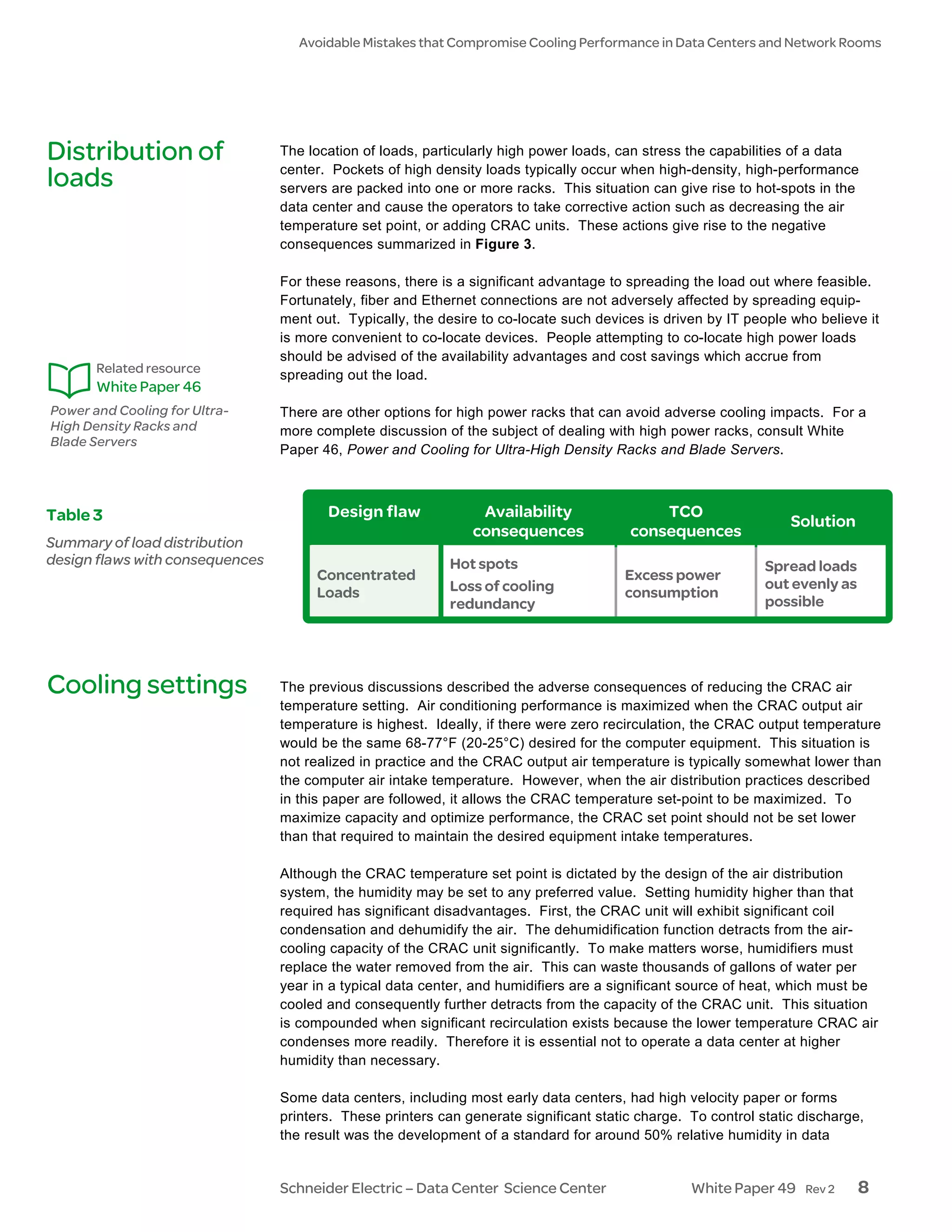

This document discusses common mistakes that compromise cooling performance in data centers and network rooms. It examines five categories of typical mistakes: airflow in the rack, rack layout, load distribution, cooling settings, and the layout of air delivery and return vents. Mistakes such as omitting blanking panels, laying out racks improperly, and obstructing airflow can cause hot spots, decrease fault tolerance, and reduce efficiency and capacity, increasing costs by up to 25% over the lifetime of the data center. The document provides a solution for each problem: standardized racks, blanking panels, a proper hot-aisle/cold-aisle layout, unobstructed airflow, and policies to prevent these issues.