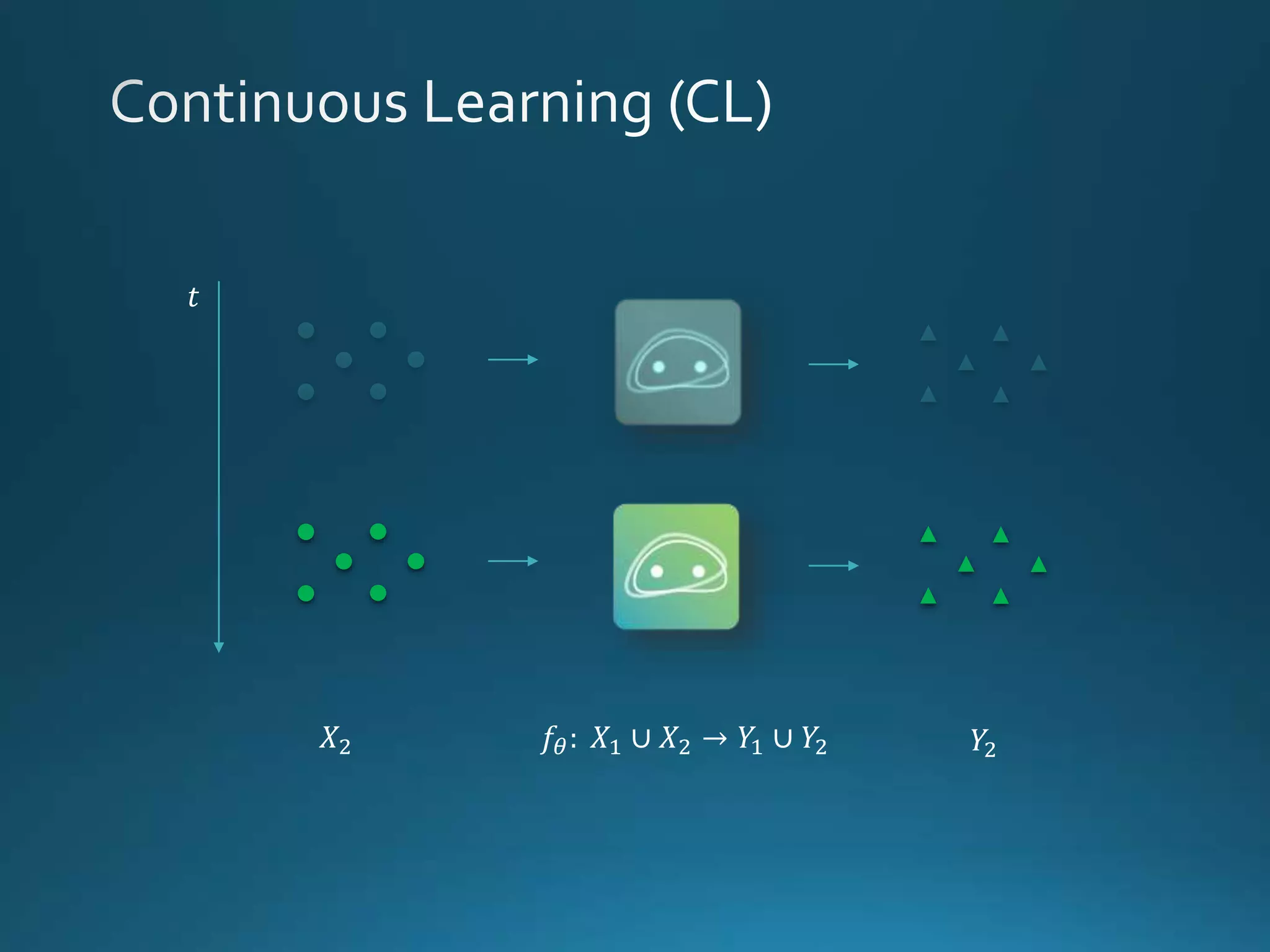

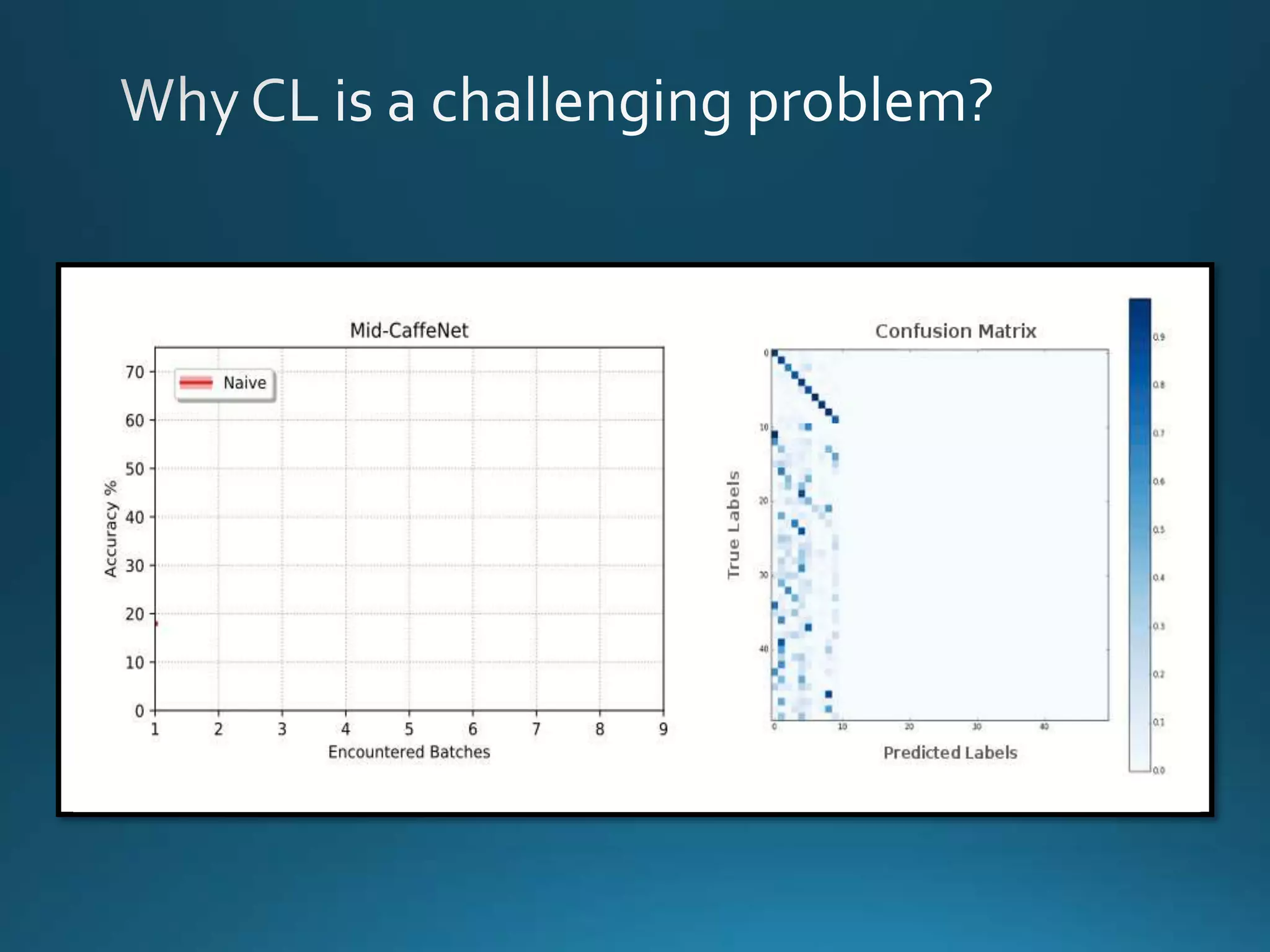

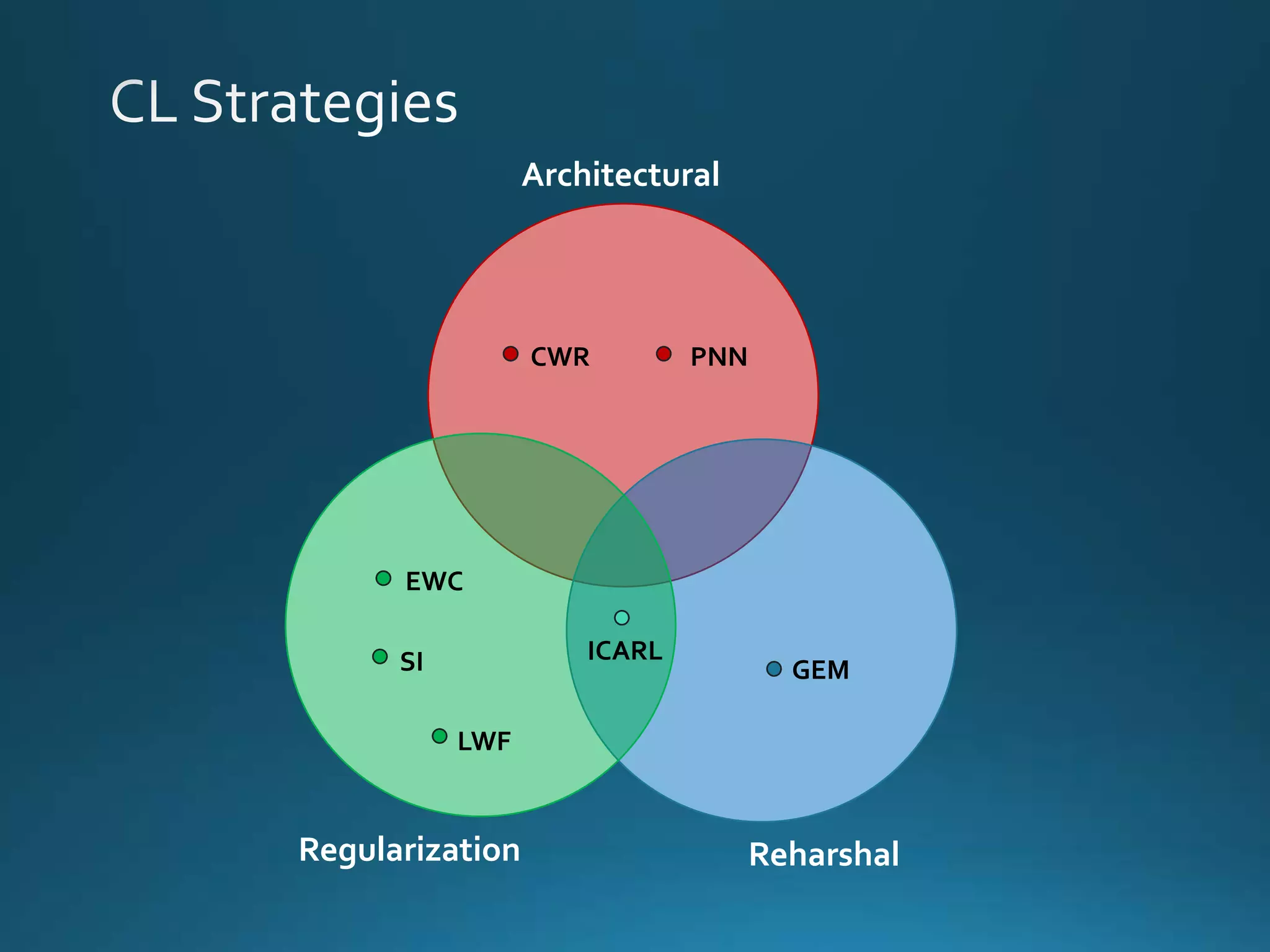

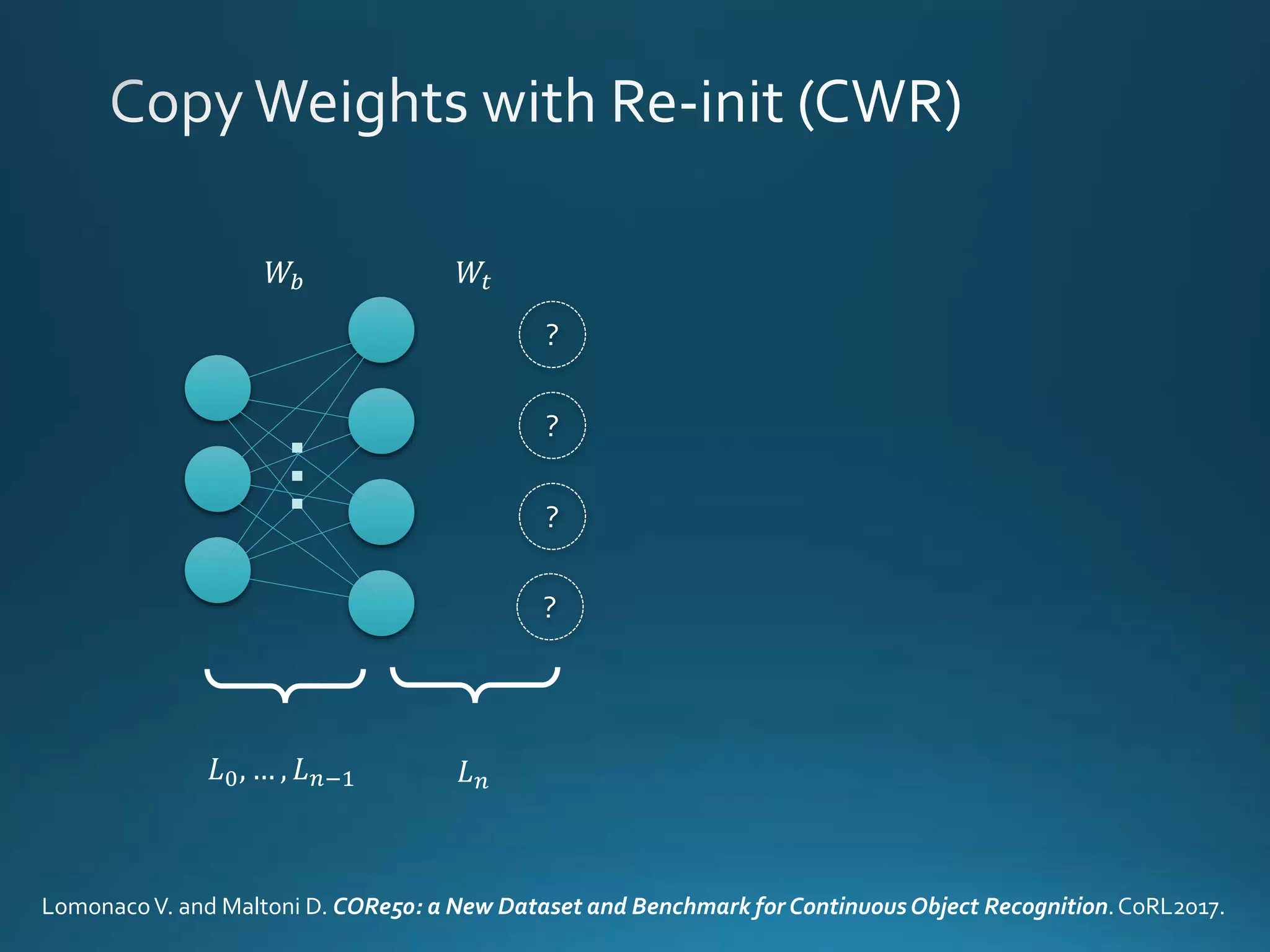

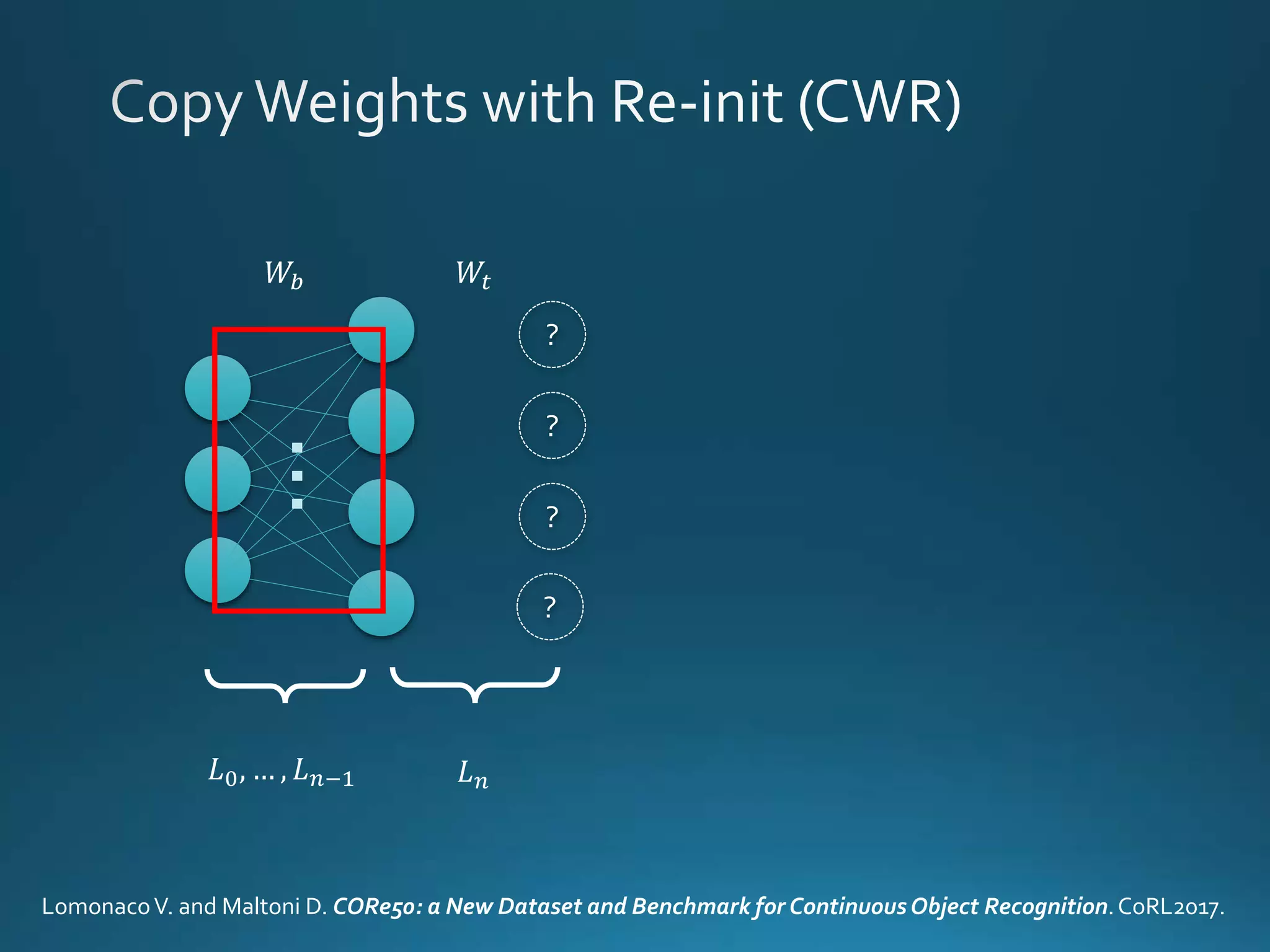

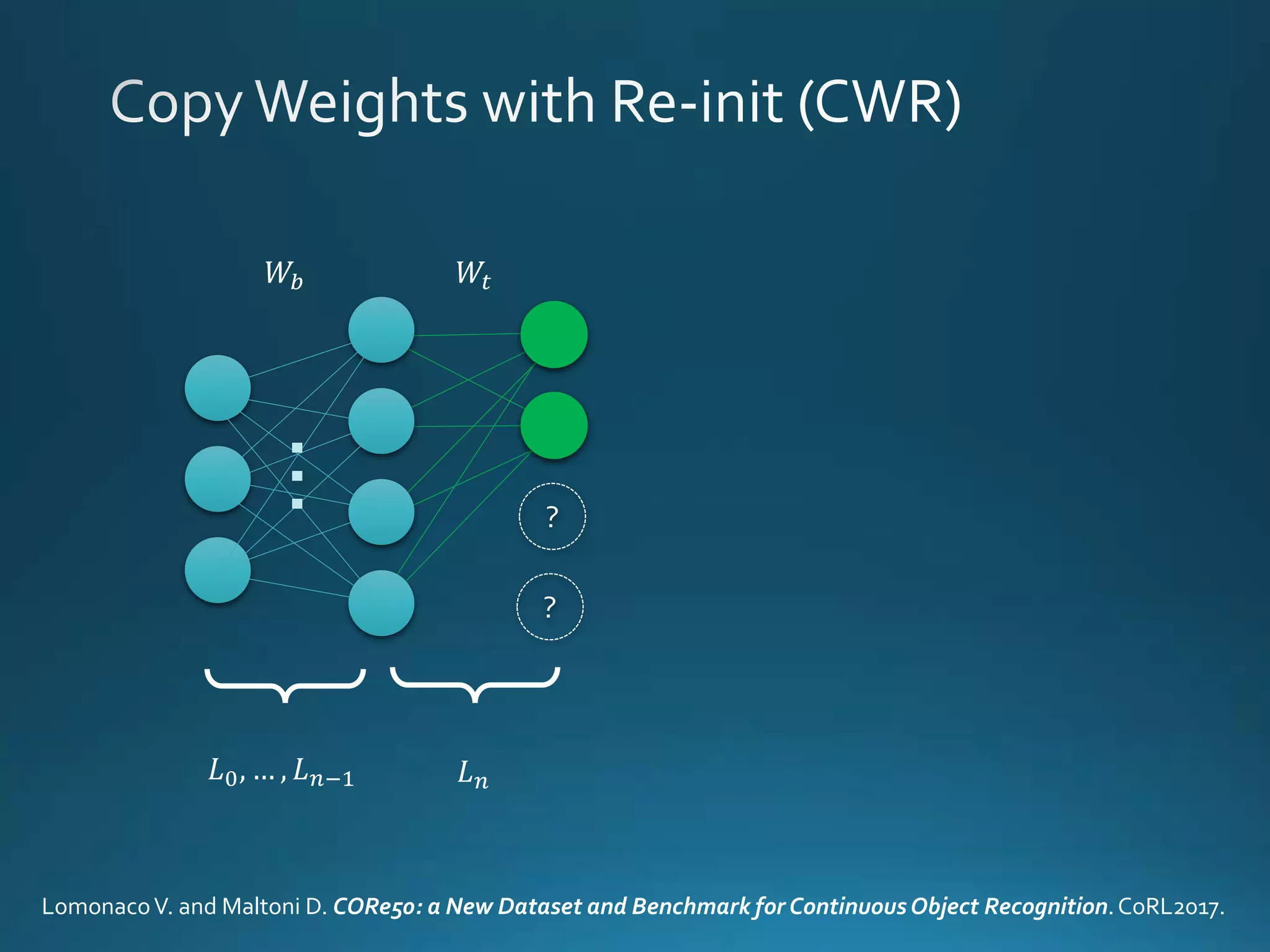

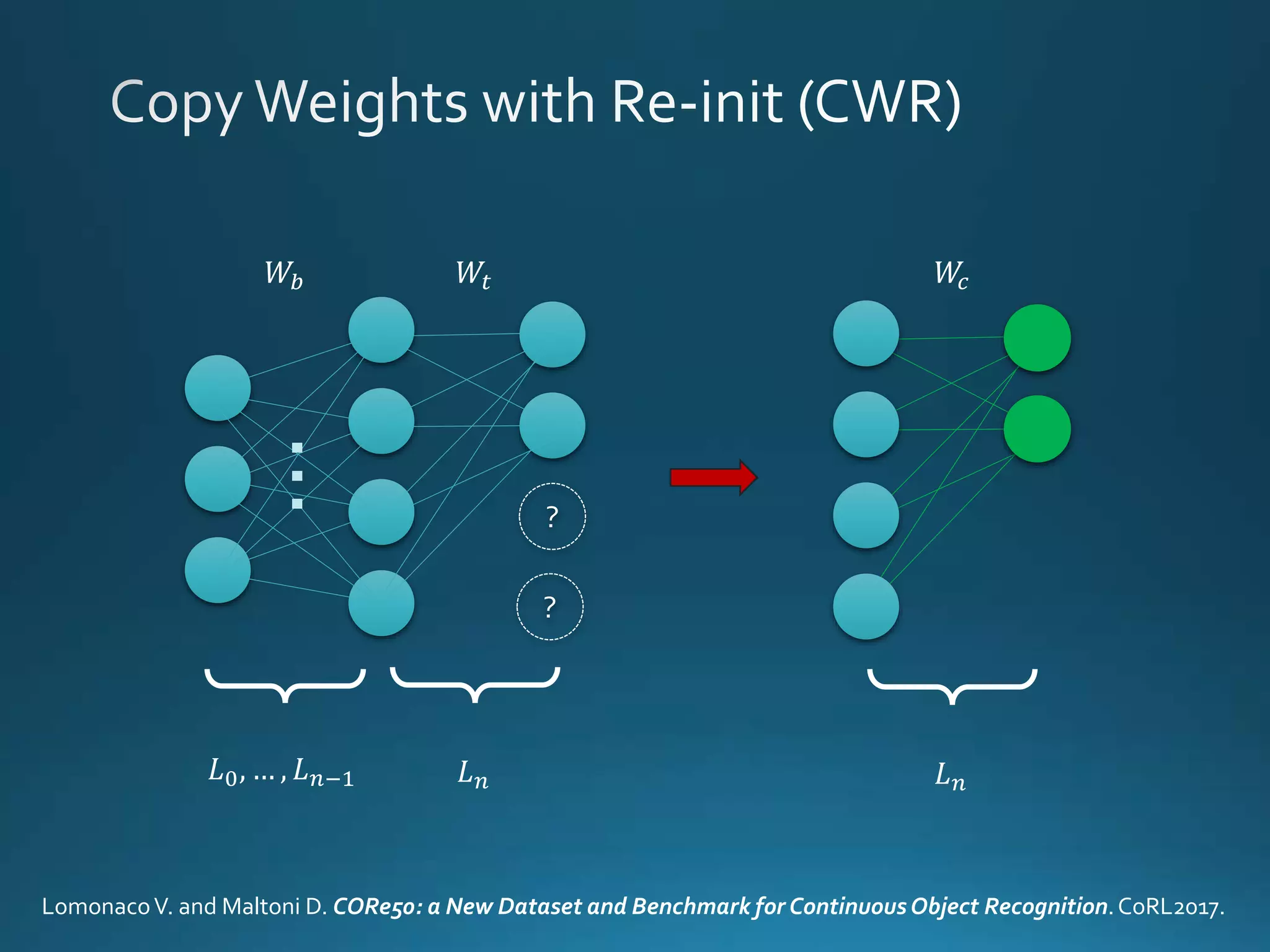

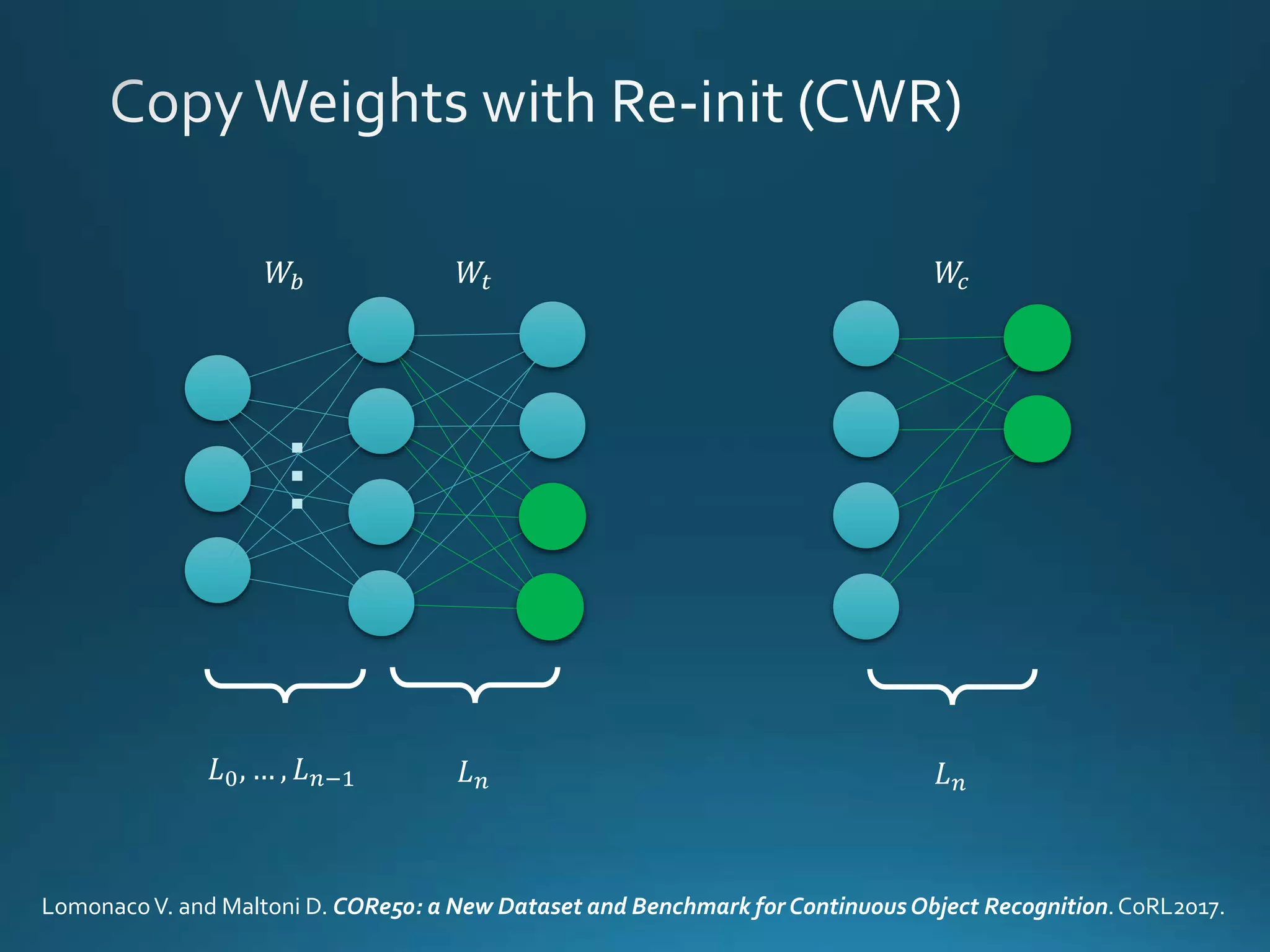

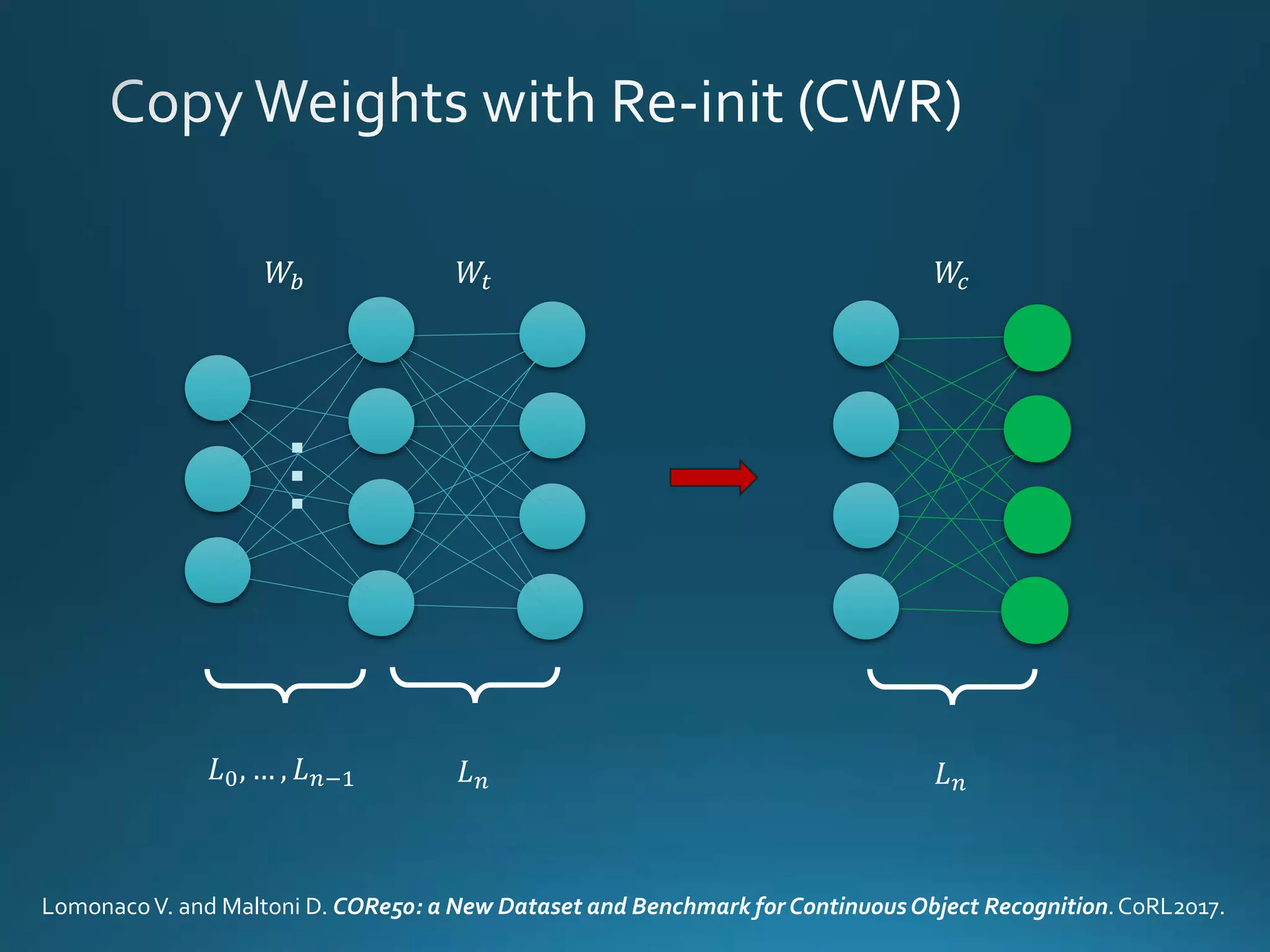

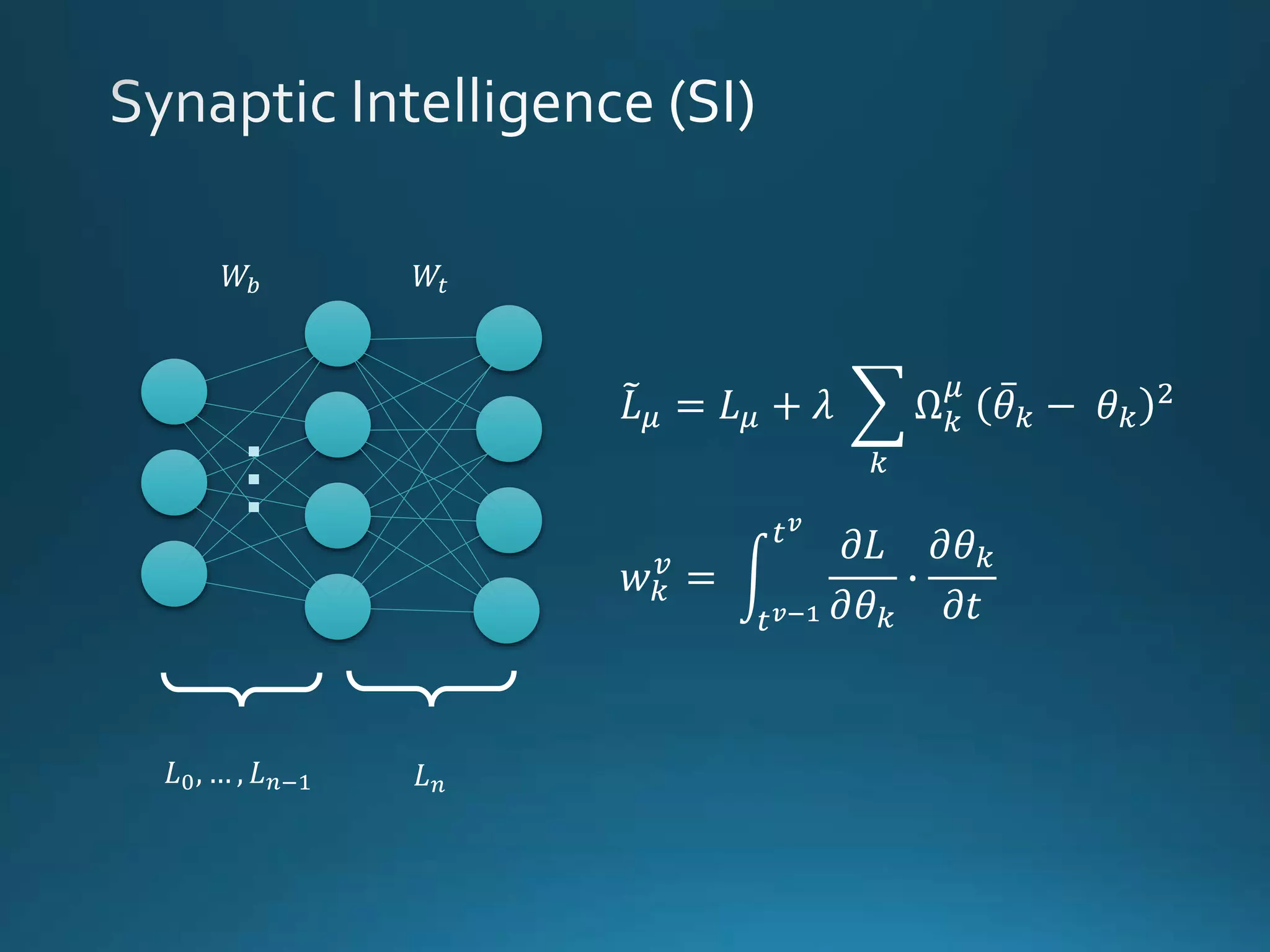

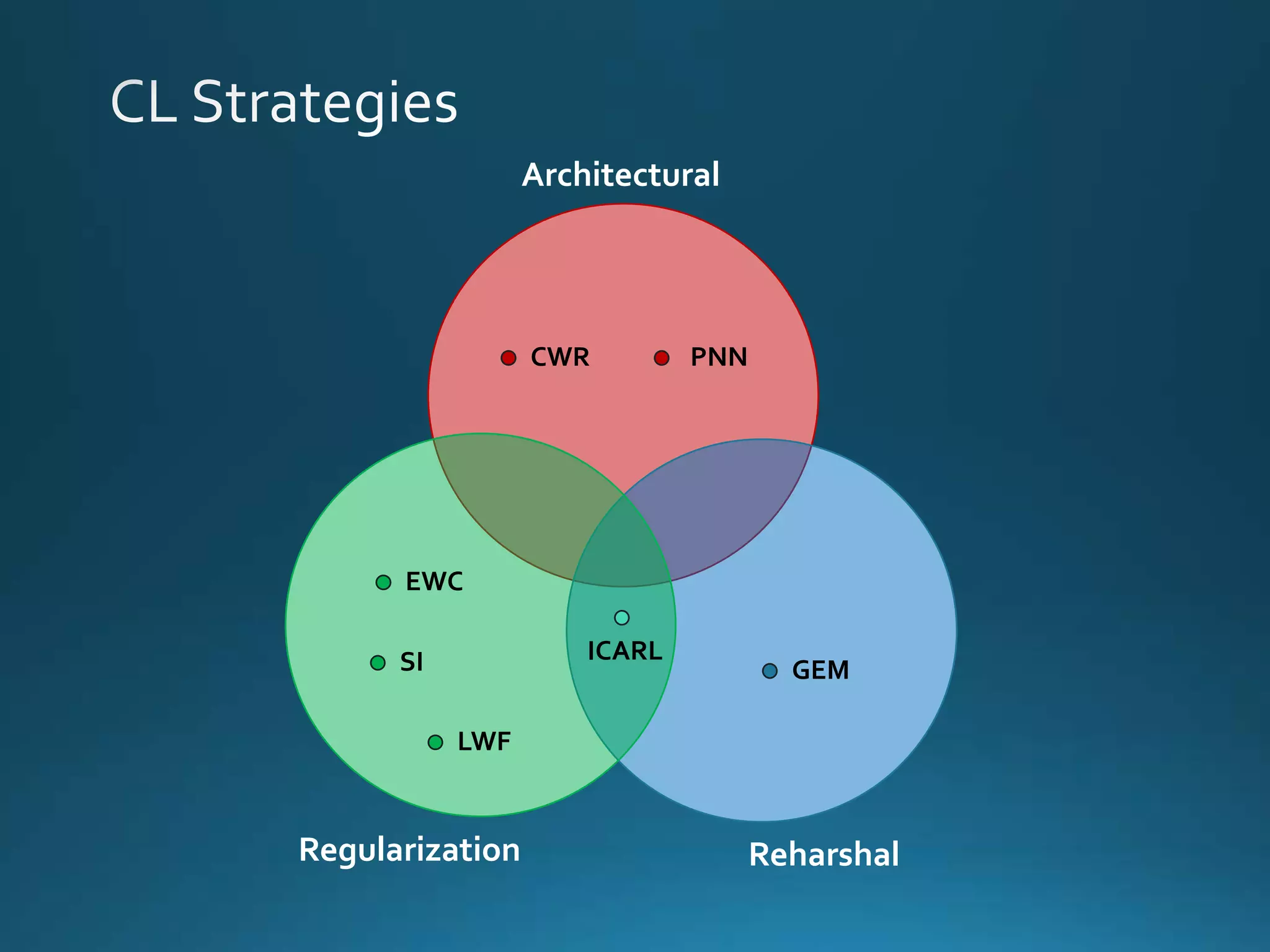

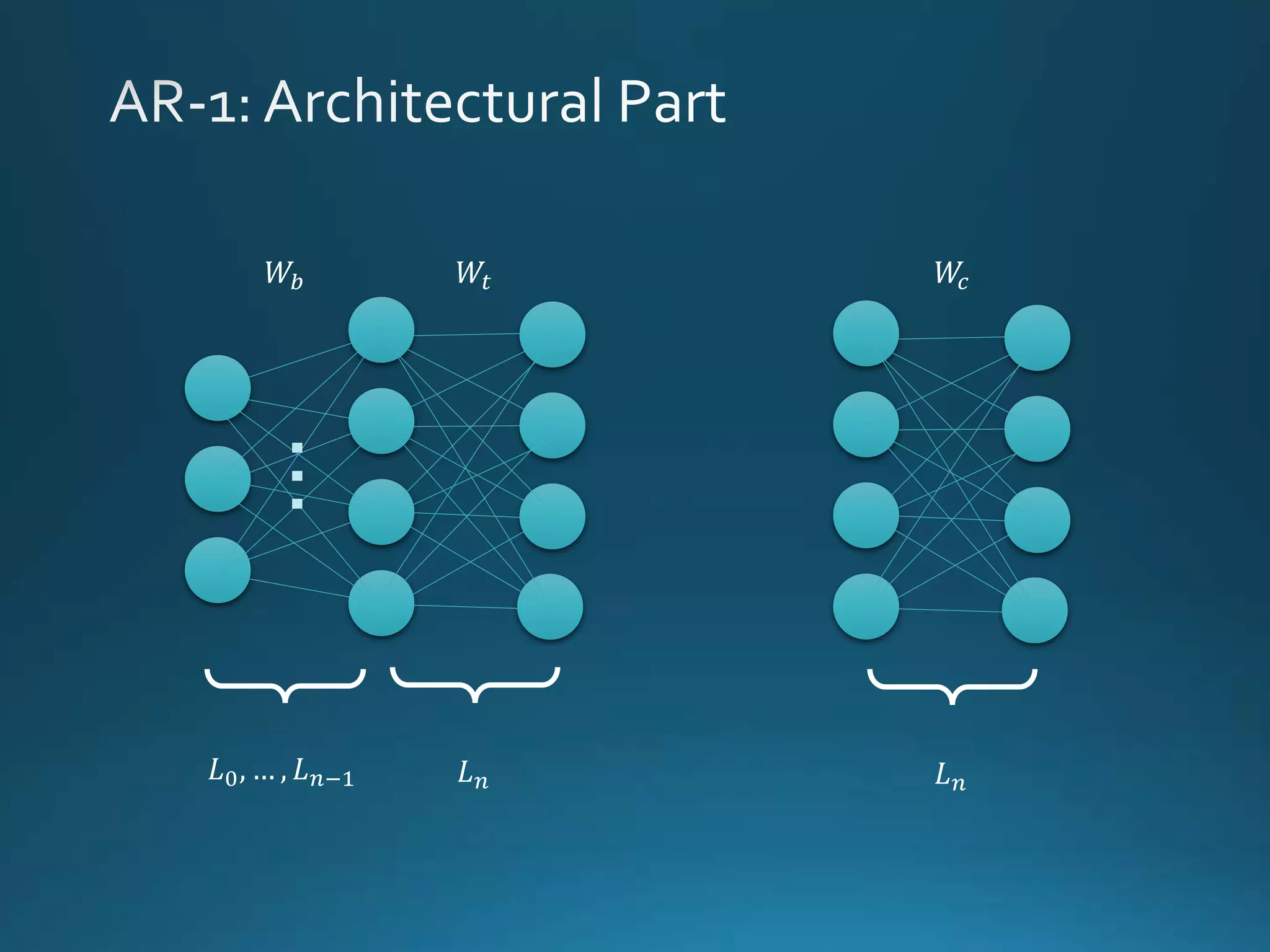

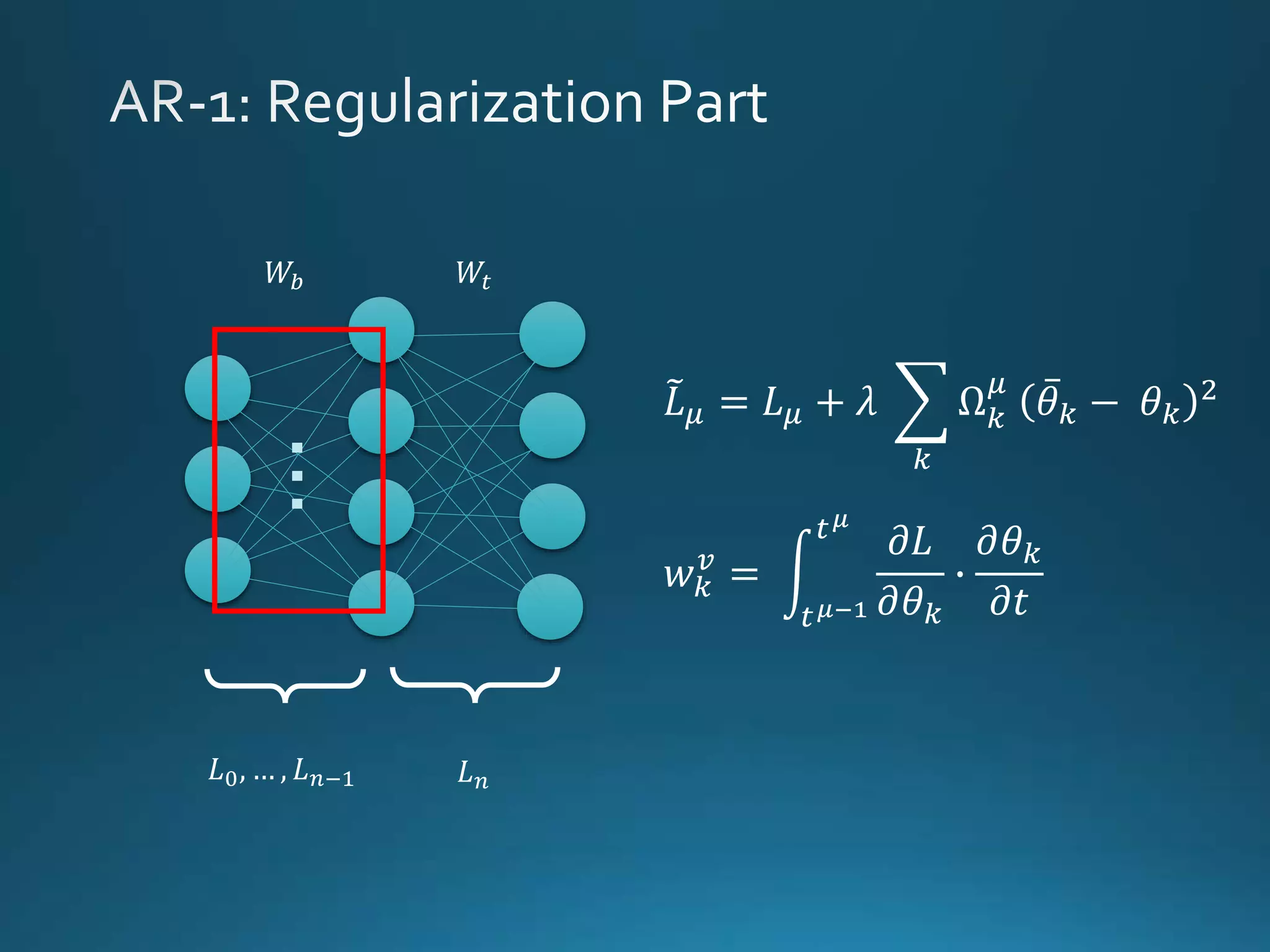

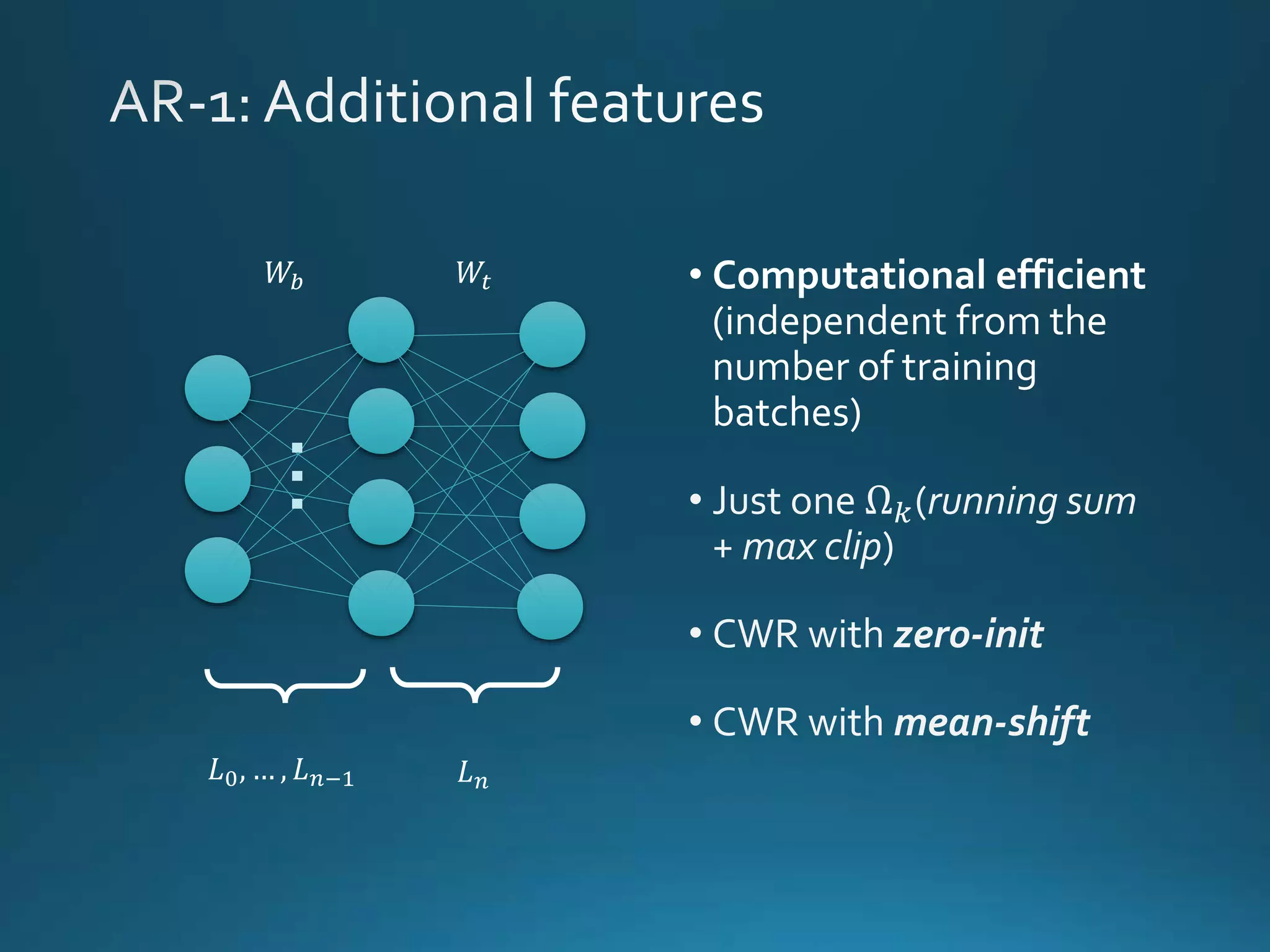

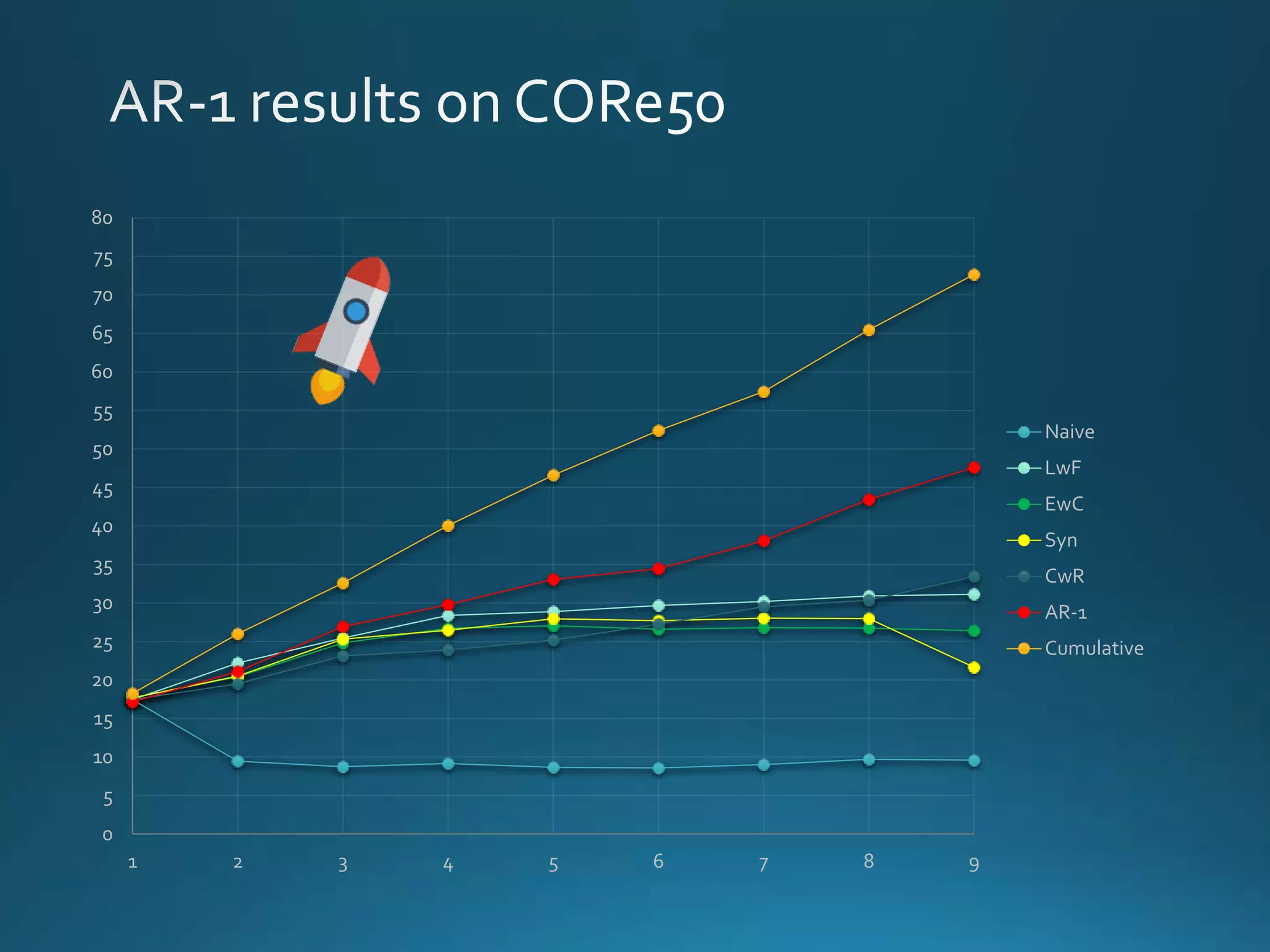

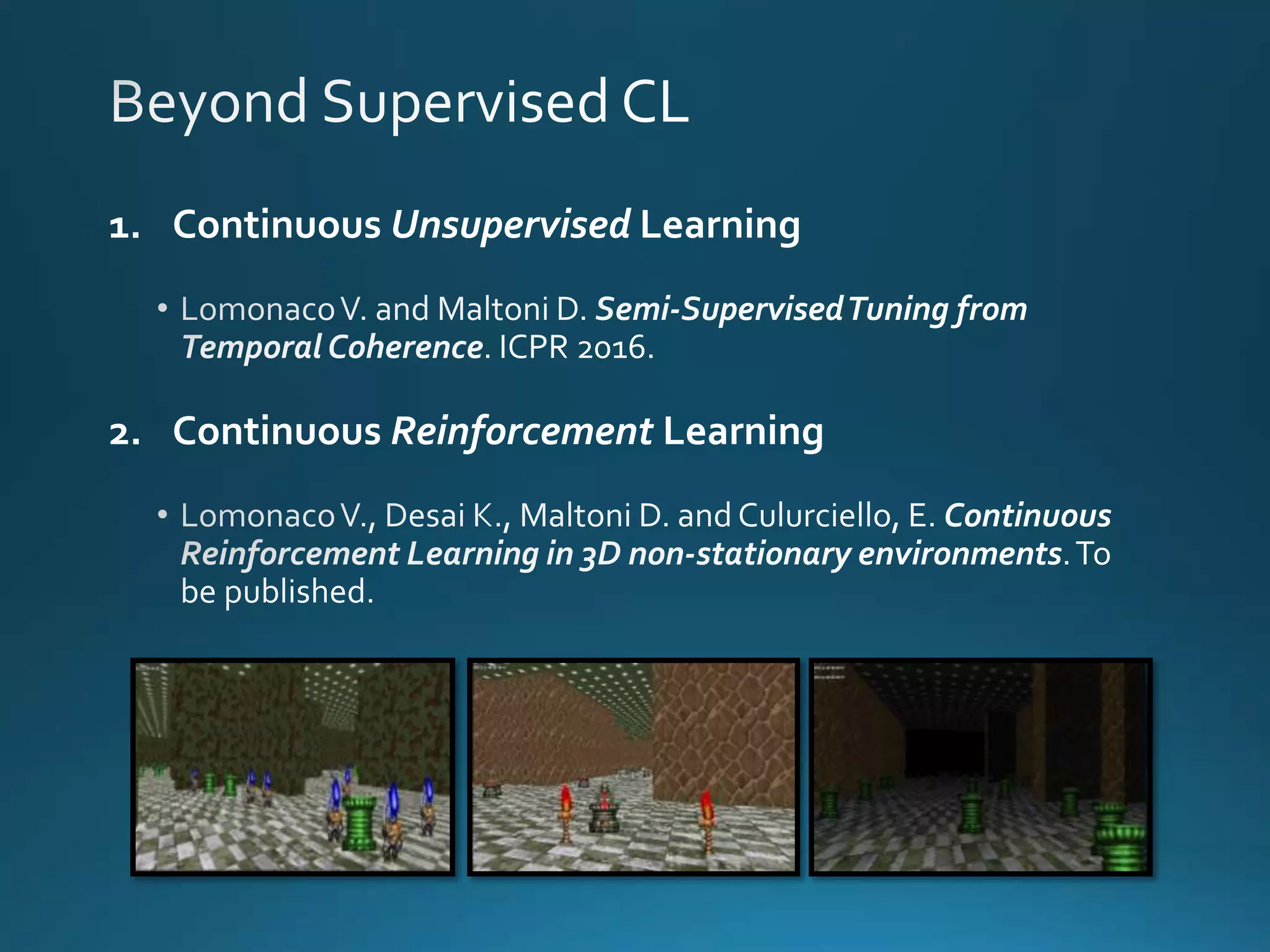

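Vincenzo Lomonaco of the University of Bologna discusses recent progress in deep learning and presents CORe50, a new dataset and benchmark for continuous object recognition. The talk focuses on incremental learning, where a model must keep learning from new data while having only limited access to the data it saw earlier, and must do so at a bounded computational cost. The strategies mentioned for training models that stay effective over time include architectural approaches, which adapt the structure of the network as new data arrives, and regularization approaches, which constrain weight updates to protect previously acquired knowledge.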
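To make the regularization idea concrete, here is a minimal sketch of one well-known regularization approach, an Elastic Weight Consolidation (EWC) style quadratic penalty. It is an illustrative assumption, not necessarily the method presented in the talk. The penalty keeps each parameter close to the value it had after the previous training stage, weighted by a per-parameter importance score. The function names `ewc_penalty` and `regularized_loss`, the plain-list parameter representation, and the strength `lam` are all hypothetical choices made for this example.

```python
from typing import Sequence


def ewc_penalty(
    params: Sequence[float],
    anchor_params: Sequence[float],
    importance: Sequence[float],
    lam: float,
) -> float:
    """EWC-style penalty: (lam / 2) * sum_i F_i * (theta_i - theta*_i)^2.

    params:        current parameter values (theta)
    anchor_params: parameter values saved after the previous stage (theta*)
    importance:    per-parameter importance weights (F), e.g. a diagonal
                   Fisher information estimate (assumed precomputed here)
    lam:           regularization strength (hypothetical hyperparameter)
    """
    if not (len(params) == len(anchor_params) == len(importance)):
        raise ValueError("params, anchor_params and importance must have equal length")
    # Important parameters (large F_i) are pulled strongly toward their old values;
    # unimportant ones (F_i near 0) remain free to fit the new data.
    weighted = sum(f * (p - a) ** 2 for p, a, f in zip(params, anchor_params, importance))
    return 0.5 * lam * weighted


def regularized_loss(
    new_data_loss: float,
    params: Sequence[float],
    anchor_params: Sequence[float],
    importance: Sequence[float],
    lam: float,
) -> float:
    """Loss on the new data plus the penalty that protects earlier knowledge."""
    return new_data_loss + ewc_penalty(params, anchor_params, importance, lam)
```

For example, with `params=[1.0, 2.0]`, `anchor_params=[1.0, 1.0]`, `importance=[0.5, 2.0]` and `lam=1.0`, only the second parameter has moved, so the penalty is 0.5 * 2.0 * 1.0 = 1.0. This is why such a penalty needs no access to earlier data: it only stores the old parameters and their importance weights, which fits the limited-access, bounded-cost setting described above.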