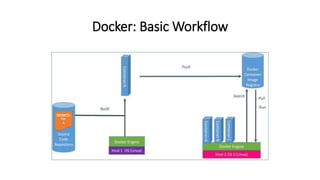

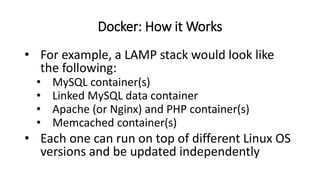

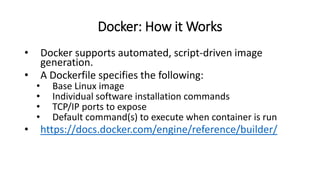

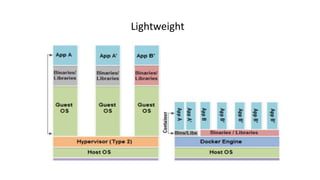

This document discusses containers and Docker. It begins by explaining that cloud infrastructure consists of virtual resources, such as compute and storage nodes, that are administered through software. Docker is introduced as a standard way to package code and its dependencies into portable containers that can run on any host with Docker installed. The key benefits it cites are greater efficiency, consistency, and security compared with traditional virtual machines. The weaknesses it notes are that Docker is not suitable for every application and that managing large numbers of containers can be difficult. Interesting uses of Docker include malware analysis sandboxes, isolating Skype sessions, and managing Raspberry Pi clusters with Docker Swarm.
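To make the idea of packaging code together with its dependencies concrete, here is a minimal sketch that generates the text of a Dockerfile for a small Python application. The helper name `render_dockerfile`, the file names `requirements.txt` and `app.py`, and the choice of base image are assumptions made for illustration; none of them come from the document itself.

```python
def render_dockerfile(base_image: str, requirements: str, entrypoint: str) -> str:
    """Return Dockerfile text that packages a Python script with its dependencies.

    All arguments are illustrative placeholders, not values from the document.
    """
    lines = [
        f"FROM {base_image}",                                 # base image the container starts from
        "WORKDIR /app",                                       # working directory inside the image
        f"COPY {requirements} .",                             # copy the dependency list first
        f"RUN pip install --no-cache-dir -r {requirements}",  # install dependencies into the image
        "COPY . .",                                           # copy the application code
        f'CMD ["python", "{entrypoint}"]',                    # command run when the container starts
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Print the generated Dockerfile; redirect it to a file named "Dockerfile" to use it.
    print(render_dockerfile("python:3.12-slim", "requirements.txt", "app.py"))
```

Saved as `Dockerfile` next to the application, the output could then be built and run with `docker build -t myapp .` followed by `docker run --rm myapp`, where `myapp` is a hypothetical image name. Because the dependencies are installed into the image at build time, the same image should behave the same way on any host that can run it.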